标签:

本文转自:http://www.analyticsvidhya.com/blog/2016/06/quick-guide-build-recommendation-engine-python/

This could help you in building your first project!

Be it a fresher or an experienced professional in data science, doing voluntary projects always adds to one’s candidature. My sole reason behind writing this article is to get your started with recommendation systems so that you can build one. If you struggle to get open data, write to me in comments.

Recommendation engines are nothing but an automated form of a “shop counter guy”. You ask him for a product. Not only he shows that product, but also the related ones which you could buy. They are well trained in cross selling and up selling. So, does our recommendation engines.

The ability of these engines to recommend personalized content, based on past behavior is incredible. It brings customer delight and gives them a reason to keep returning to the website.

In this post, I will cover the fundamentals of creating a recommendation system using GraphLab in Python. We will get some intuition into how recommendation work and create basic popularity model and a collaborative filtering model.

Before taking a look at the different types of recommendation engines, lets take a step back and see if we can make some intuitive recommendations. Consider the following cases:

A simple approach could be to recommend the items which are liked by most number of users. This is a blazing fast and dirty approach and thus has a major drawback. The things is, there is no personalization involved with this approach.

Basically the most popular items would be same for each user since popularity is defined on the entire user pool. So everybody will see the same results. It sounds like, ‘a website recommends you to buy microwave just because it’s been liked by other users and doesn’t care if you are even interested in buying or not’.

Surprisingly, such approach still works in places like news portals. Whenever you login to say bbcnews, you’ll see a column of “Popular News” which is subdivided into sections and the most read articles of each sections are displayed. This approach can work in this case because:

We already know lots of classification algorithms. Let’s see how we can use the same technique to make recommendations. Classifiers are parametric solutions so we just need to define some parameters (features) of the user and the item. The outcome can be 1 if the user likes it or 0 otherwise. This might work out in some cases because of following advantages:

But has some major drawbacks as well because of which it is not used much in practice:

Now lets come to the special class of algorithms which are tailor-made for solving the recommendation problem. There are typically two types of algorithms – Content Based and Collaborative Filtering. You should refer to our previous article to get a complete sense of how they work. I’ll give a short recap here.

We will be using the MovieLens dataset for this purpose. It has been collected by the GroupLens Research Project at the University of Minnesota. MovieLens 100K dataset can be downloaded from here. It consists of:

Lets load this data into Python. There are many files in the ml-100k.zip file which we can use. Lets load the three most importance files to get a sense of the data. I also recommend you to read thereadme document which gives a lot of information about the difference files.

import pandas as pd

# pass in column names for each CSV and read them using pandas.

# Column names available in the readme file

#Reading users file:

u_cols = [‘user_id‘, ‘age‘, ‘sex‘, ‘occupation‘, ‘zip_code‘]

users = pd.read_csv(‘ml-100k/u.user‘, sep=‘|‘, names=u_cols,

encoding=‘latin-1‘)

#Reading ratings file:

r_cols = [‘user_id‘, ‘movie_id‘, ‘rating‘, ‘unix_timestamp‘]

ratings = pd.read_csv(‘ml-100k/u.data‘, sep=‘\t‘, names=r_cols,

encoding=‘latin-1‘)

#Reading items file:

i_cols = [‘movie id‘, ‘movie title‘ ,‘release date‘,‘video release date‘, ‘IMDb URL‘, ‘unknown‘, ‘Action‘, ‘Adventure‘,

‘Animation‘, ‘Children\‘s‘, ‘Comedy‘, ‘Crime‘, ‘Documentary‘, ‘Drama‘, ‘Fantasy‘,

‘Film-Noir‘, ‘Horror‘, ‘Musical‘, ‘Mystery‘, ‘Romance‘, ‘Sci-Fi‘, ‘Thriller‘, ‘War‘, ‘Western‘]

items = pd.read_csv(‘ml-100k/u.item‘, sep=‘|‘, names=i_cols,

encoding=‘latin-1‘)

Now lets take a peak into the content of each file to understand them better.

print users.shape

users.head()

This reconfirms that there are 943 users and we have 5 features for each namely their unique ID, age, gender, occupation and the zip code they are living in.

print ratings.shape

ratings.head()

This confirms that there are 100K ratings for different user and movie combinations. Also notice that each rating has a timestamp associated with it.

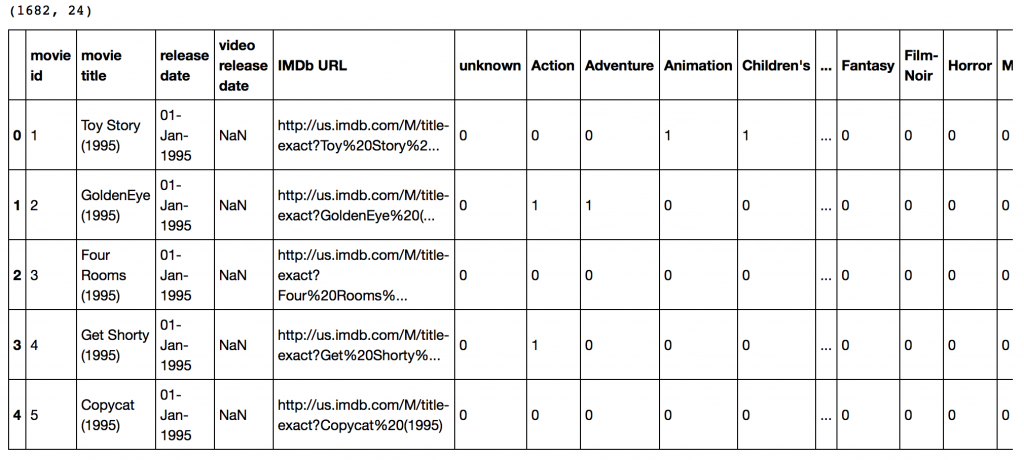

print items.shape

items.head()

This dataset contains attributes of the 1682 movies. There are 24 columns out of which 19 specify the genre of a particular movie. The last 19 columns are for each genre and a value of 1 denotes movie belongs to that genre and 0 otherwise.

This dataset contains attributes of the 1682 movies. There are 24 columns out of which 19 specify the genre of a particular movie. The last 19 columns are for each genre and a value of 1 denotes movie belongs to that genre and 0 otherwise.

Now we have to divide the ratings data set into test and train data for making models. Luckily GroupLens provides pre-divided data wherein the test data has 10 ratings for each user, i.e. 9430 rows in total. Lets load that:

r_cols = [‘user_id‘, ‘movie_id‘, ‘rating‘, ‘unix_timestamp‘]

ratings_base = pd.read_csv(‘ml-100k/ua.base‘, sep=‘\t‘, names=r_cols, encoding=‘latin-1‘)

ratings_test = pd.read_csv(‘ml-100k/ua.test‘, sep=‘\t‘, names=r_cols, encoding=‘latin-1‘)

ratings_base.shape, ratings_test.shape

Output: ((90570, 4), (9430, 4))

Since we’ll be using GraphLab, lets convert these in SFrames.

import graphlab

train_data = graphlab.SFrame(ratings_base)

test_data = graphlab.SFrame(ratings_test)

We can use this data for training and testing. Now that we have gathered all the data available. Note that here we have user behaviour as well as attributes of the users and movies. So we can make content based as well as collaborative filtering algorithms.

Lets start with making a popularity based model, i.e. the one where all the users have same recommendation based on the most popular choices. We’ll use the graphlab recommender functions popularity_recommender for this.

We can train a recommendation as:

popularity_model = graphlab.popularity_recommender.create(train_data, user_id=‘user_id‘, item_id=‘movie_id‘, target=‘rating‘)

Arguments:

Lets use this model to make top 5 recommendations for first 5 users and see what comes out:

#Get recommendations for first 5 users and print them

#users = range(1,6) specifies user ID of first 5 users

#k=5 specifies top 5 recommendations to be given

popularity_recomm = popularity_model.recommend(users=range(1,6),k=5)

popularity_recomm.print_rows(num_rows=25)

Did you notice something? The recommendations for all users are same – 1500,1201,1189,1122,814 in the same order. This can be verified by checking the movies with highest mean recommendations in our ratings_base data set:

ratings_base.groupby(by=‘movie_id‘)[‘rating‘].mean().sort_values(ascending=False).head(20)

This confirms that all the recommended movies have an average rating of 5, i.e. all the users who watched the movie gave a top rating. Thus we can see that our popularity system works as expected. But it is good enough? We’ll analyze it in detail later.

Lets start by understanding the basics of a collaborative filtering algorithm. The core idea works in 2 steps:

To give you a high level overview, this is done by making an item-item matrix in which we keep a record of the pair of items which were rated together.

In this case, an item is a movie. Once we have the matrix, we use it to determine the best recommendations for a user based on the movies he has already rated. Note that there a few more things to take care in actual implementation which would require deeper mathematical introspection, which I’ll skip for now.

I would just like to mention that there are 3 types of item similarity metrics supported by graphlab. These are:

Lets create a model based on item similarity as follow:

#Train Model

item_sim_model = graphlab.item_similarity_recommender.create(train_data, user_id=‘user_id‘, item_id=‘movie_id‘, target=‘rating‘, similarity_type=‘pearson‘)

#Make Recommendations:

item_sim_recomm = item_sim_model.recommend(users=range(1,6),k=5)

item_sim_recomm.print_rows(num_rows=25)

Here we can see that the recommendations are different for each user. So, personalization exists. But how good is this model? We need some means of evaluating a recommendation engine. Lets focus on that in the next section.

For evaluating recommendation engines, we can use the concept of precision-recall. You must be familiar with this in terms of classification and the idea is very similar. Let me define them in terms of recommendations.

Now if we think about recall, how can we maximize it? If we simply recommend all the items, they will definitely cover the items which the user likes. So we have 100% recall! But think about precision for a second. If we recommend say 1000 items and user like only say 10 of them then precision is 0.1%. This is really low. Our aim is to maximize both precision and recall.

An idea recommender system is the one which only recommends the items which user likes. So in this case precision=recall=1. This is an optimal recommender and we should try and get as close as possible.

Lets compare both the models we have built till now based on precision-recall characteristics:

model_performance = graphlab.compare(test_data, [popularity_model, item_sim_model])

graphlab.show_comparison(model_performance,[popularity_model, item_sim_model])

Here we can make 2 very quick observations:

There is a big scope of improvement here. But I leave it up to you to figure out how to improve this further. I would like to give a couple of tips:

In the end, I would like to mention that along with GraphLab, you can also use some other open source python packages like Crab. Crab is till under development and supports only basic collaborative filtering techniques for now. But this is something to watch out for in future for sure!

In this article, we traversed through the process of making a basic recommendation engine in Python using GrpahLab. We started by understanding the fundamentals of recommendations. Then we went on to load the MovieLens 100K data set for the purpose of experimentation.

Subsequently we made a first model as a simple popularity model in which the most popular movies were recommended for each user. Since this lacked personalization, we made another model based on collaborative filtering and observed the impact of personalization.

Finally, we discussed precision-recall as evaluation metrics for recommendation systems and on comparison found the collaborative filtering model to be more than 10x better than the popularity model.

Did you like reading this article ? Do share your experience / suggestions in the comments section below.

(转) Quick Guide to Build a Recommendation Engine in Python

标签:

原文地址:http://www.cnblogs.com/wangxiaocvpr/p/5554830.html