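I have recently been building a system at work that needs to fetch web pages and pull specific data out of them, so I wrote a shared HttpUtils class. Below is an example I wrote against the WooYun site.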

I. First, fetch the content of the page at a given URL.

    public static String httpGet(String urlStr, Map<String, String> params) throws Exception {
        // Build the query string, URL-encoding both keys and values.
        StringBuilder sb = new StringBuilder();
        if (null != params && params.size() > 0) {
            sb.append("?");
            Entry<String, String> en;
            for (Iterator<Entry<String, String>> ir = params.entrySet().iterator(); ir.hasNext();) {
                en = ir.next();
                sb.append(URLEncoder.encode(en.getKey(), "utf-8"))
                  .append("=")
                  .append(URLEncoder.encode(en.getValue(), "utf-8"))
                  .append(ir.hasNext() ? "&" : "");
            }
        }
        URL url = new URL(urlStr + sb);
        HttpURLConnection conn = (HttpURLConnection) url.openConnection();
        conn.setConnectTimeout(5000);
        conn.setReadTimeout(5000);
        conn.setRequestMethod("GET");
        try {
            int status = conn.getResponseCode();
            if (status != 200) {
                throw new Exception("Unexpected HTTP status: " + status);
            }
            // The target page is GBK-encoded. try-with-resources closes the
            // stream and reader even if reading fails part-way.
            try (BufferedInputStream bis = new BufferedInputStream(conn.getInputStream());
                 Reader reader = new InputStreamReader(bis, "gbk")) {
                char[] buffer = new char[2048];
                int len;
                CharArrayWriter caw = new CharArrayWriter();
                while ((len = reader.read(buffer)) > -1) {
                    caw.write(buffer, 0, len);
                }
                return caw.toString();
            }
        } finally {
            // Always release the connection, including on error paths.
            conn.disconnect();
        }
    }
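The query-string step of httpGet can be tried on its own, without any network access. The sketch below is only an illustration of that step, not the code from this article: the class name QueryStringDemo and the method buildQuery are hypothetical, and it assumes a LinkedHashMap so that the parameter order is predictable.

```java
import java.io.UnsupportedEncodingException;
import java.net.URLEncoder;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper class, used only to illustrate the query-string step.
public class QueryStringDemo {

    // Builds "?k1=v1&k2=v2" from the map, URL-encoding keys and values as UTF-8.
    // Returns an empty string when there are no parameters.
    public static String buildQuery(Map<String, String> params) throws UnsupportedEncodingException {
        if (params == null || params.isEmpty()) {
            return "";
        }
        StringBuilder sb = new StringBuilder("?");
        for (Map.Entry<String, String> en : params.entrySet()) {
            if (sb.length() > 1) {
                sb.append('&'); // separator between pairs, never after the last one
            }
            sb.append(URLEncoder.encode(en.getKey(), "utf-8"))
              .append('=')
              .append(URLEncoder.encode(en.getValue(), "utf-8"));
        }
        return sb.toString();
    }

    public static void main(String[] args) throws Exception {
        Map<String, String> params = new LinkedHashMap<>();
        params.put("q", "sql injection");
        params.put("page", "2");
        System.out.println(buildQuery(params)); // prints ?q=sql+injection&page=2
    }
}
```

URLEncoder produces form encoding, so a space becomes "+", which is normally what a query string needs.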

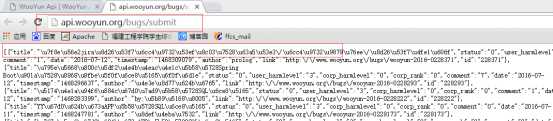

Result when the page is opened in a browser:

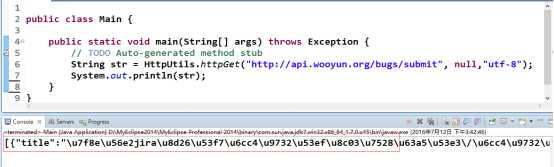

The code returns the same result as above.
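The two results only match if the bytes are decoded with the charset the server actually used. The sketch below shows the read loop in isolation, with a ByteArrayInputStream standing in for conn.getInputStream(). The class name CharsetReadDemo and the method readAll are hypothetical, and the example assumes the JDK in use ships the GBK charset.

```java
import java.io.ByteArrayInputStream;
import java.io.CharArrayWriter;
import java.io.IOException;
import java.io.InputStream;
import java.io.InputStreamReader;
import java.io.Reader;

// Hypothetical demo class: reads a stream fully, decoding it with a given charset.
public class CharsetReadDemo {

    public static String readAll(InputStream in, String charset) throws IOException {
        // Closing the reader also closes the wrapped stream.
        try (Reader reader = new InputStreamReader(in, charset)) {
            char[] buffer = new char[2048];
            CharArrayWriter caw = new CharArrayWriter();
            int len;
            while ((len = reader.read(buffer)) > -1) {
                caw.write(buffer, 0, len);
            }
            return caw.toString();
        }
    }

    public static void main(String[] args) throws IOException {
        String original = "\u4e2d\u6587"; // two Chinese characters, written as escapes
        byte[] gbkBytes = original.getBytes("gbk");

        // Decoding with the matching charset restores the text.
        System.out.println(original.equals(readAll(new ByteArrayInputStream(gbkBytes), "gbk")));   // prints true
        // Decoding the same bytes as UTF-8 yields replacement characters instead.
        System.out.println(original.equals(readAll(new ByteArrayInputStream(gbkBytes), "utf-8"))); // prints false
    }
}
```

This is why a version of httpGet that takes the charset as a parameter is more reusable than one with "gbk" hard-coded.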

II. Extract only the data you want from the page at a given URL.

This approach requires the jsoup library, which you can download online.

    Document doc = null;
    try {
        doc = Jsoup.connect("http://www.wooyun.org//bugs//wooyun-2016-0225856")
                .userAgent("Mozilla/5.0 (Windows NT 10.0; Trident/7.0; rv:11.0) like Gecko")
                .timeout(30000)
                .get();
    } catch (IOException e) {
        e.printStackTrace();
    }
    // doc is still null if the request failed, so guard before selecting.
    if (doc != null) {
        // Print the text of every <h3> element on the page.
        for (Iterator<Element> ir = doc.select("h3").iterator(); ir.hasNext();) {
            System.out.println(ir.next().text());
        }
    }

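The argument to select is a selector that picks out the elements matching a condition, as in doc.select("h3").iterator(). jsoup selectors follow the rules below.

jsoup is a Java-based HTML parser that can parse a URL or a string of HTML directly. It provides a very convenient API for extracting and manipulating data through DOM traversal, CSS selectors, and jQuery-like methods.

jsoup's strength lies in how it searches document elements. The select method returns an Elements collection, which offers a set of methods for extracting and processing the results. To get comfortable with jsoup, start with its selector syntax.

1. Basic selector syntax

2. Combining selectors

3. Pseudo-selector syntax

Note: the indexes used by these pseudo-selectors start at 0, so the first element has index 0, the second has index 1, and so on.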
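The three groups of selector syntax can be tried against a small in-memory document instead of a live page. The sketch below is illustrative only: the HTML fragment, the class name SelectorDemo, the helper textOf and the particular selectors are made up for this example, and it assumes the jsoup jar is on the classpath.

```java
import org.jsoup.Jsoup;
import org.jsoup.nodes.Document;

// Hypothetical demo class: runs a few selectors against a fixed HTML fragment.
public class SelectorDemo {

    // Made-up fragment, not taken from any real page.
    static final String HTML =
          "<div id='content'>"
        + "<h3 class='title'>First</h3>"
        + "<h3>Second</h3>"
        + "<ul><li>a</li><li>b</li><li>c</li></ul>"
        + "<a href='/bugs/1'>detail</a>"
        + "</div>";

    // Returns the combined text of every element matching the selector.
    public static String textOf(String selector) {
        Document doc = Jsoup.parse(HTML);
        return doc.select(selector).text();
    }

    public static void main(String[] args) {
        // 1. Basic selectors: tag name, .class, [attribute]
        System.out.println(textOf("h3"));      // prints First Second
        System.out.println(textOf(".title"));  // prints First
        System.out.println(textOf("[href]"));  // prints detail

        // 2. Combined selectors: an id, a descendant step, and tag.class together
        System.out.println(textOf("div#content h3.title")); // prints First

        // 3. Pseudo-selectors: sibling indexes start at 0
        System.out.println(textOf("li:eq(0)")); // prints a
        System.out.println(textOf("li:gt(0)")); // prints b c
    }
}
```

Testing selectors against a fixed string like this makes it easier to get them right before pointing the code at a real URL.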

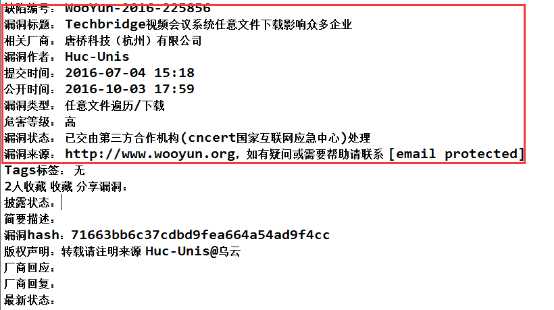

Accessed from the browser:

Accessed from code:

Full source of HttpUtils.java:

    package com.ffcs.lsoc.bug.utils;

    import java.io.BufferedInputStream;
    import java.io.CharArrayWriter;
    import java.io.InputStreamReader;
    import java.io.Reader;
    import java.net.HttpURLConnection;
    import java.net.URL;
    import java.net.URLEncoder;
    import java.util.Iterator;
    import java.util.Map;
    import java.util.Map.Entry;
    import org.jsoup.nodes.Document;
    import org.jsoup.nodes.Element;

    /**
     * Utility class for fetching web pages.
     *
     * @author g-gaojp
     * @date 2016-7-10
     */
    public class HttpUtils {

        /**
         * Fetches a page and decodes the response body with the given charset.
         *
         * @param urlStr  the address to request
         * @param params  query parameters, may be null
         * @param charset the character encoding of the response body
         * @return the response body as text
         * @throws Exception if the request fails or the status is not 200
         */
        public static String httpGet(String urlStr, Map<String, String> params, String charset) throws Exception {
            // Build the query string, URL-encoding both keys and values.
            StringBuilder sb = new StringBuilder();
            if (null != params && params.size() > 0) {
                sb.append("?");
                Entry<String, String> en;
                for (Iterator<Entry<String, String>> ir = params.entrySet().iterator(); ir.hasNext();) {
                    en = ir.next();
                    sb.append(URLEncoder.encode(en.getKey(), "utf-8"))
                      .append("=")
                      .append(URLEncoder.encode(en.getValue(), "utf-8"))
                      .append(ir.hasNext() ? "&" : "");
                }
            }
            URL url = new URL(urlStr + sb);
            HttpURLConnection conn = (HttpURLConnection) url.openConnection();
            conn.setConnectTimeout(5000);
            conn.setReadTimeout(5000);
            conn.setRequestMethod("GET");
            try {
                int status = conn.getResponseCode();
                if (status != 200) {
                    throw new Exception("Unexpected HTTP status: " + status);
                }
                // try-with-resources closes the stream and reader even if reading fails.
                try (BufferedInputStream bis = new BufferedInputStream(conn.getInputStream());
                     Reader reader = new InputStreamReader(bis, charset)) {
                    char[] buffer = new char[2048];
                    int len;
                    CharArrayWriter caw = new CharArrayWriter();
                    while ((len = reader.read(buffer)) > -1) {
                        caw.write(buffer, 0, len);
                    }
                    return caw.toString();
                }
            } finally {
                // Always release the connection, including on error paths.
                conn.disconnect();
            }
        }

        /**
         * Fetches a page and decodes the response body as GBK.
         *
         * @param urlStr the address to request
         * @param params query parameters, may be null
         * @return the response body as text
         * @throws Exception if the request fails or the status is not 200
         */
        public static String httpGet(String urlStr, Map<String, String> params) throws Exception {
            // Delegate to the three-argument overload instead of duplicating its body.
            return httpGet(urlStr, params, "gbk");
        }

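        /**
         * Extracts text from the first element of the document that matches a selector.
         *
         * @param document  the parsed page
         * @param condition the selector to match
         * @param position  the number of leading characters to skip, for example a label prefix
         * @return the trimmed text after position, or null if nothing matches
         *         or position is out of range
         */
        public static String catchInfomationFromDocument(Document document, String condition, int position) {
            if (document != null) {
                Iterator<Element> iterator = document.select(condition).iterator();
                if (iterator.hasNext()) {
                    String str = iterator.next().text();
                    // Guard against a position outside the text, which would make substring throw.
                    if (position >= 0 && position <= str.length()) {
                        return str.substring(position).trim();
                    }
                }
            }
            return null;
        }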

        /**
         * Checks whether the document contains<br/>
         * any element matching a selector.
         *
         * @param document  the parsed page
         * @param condition the selector to match
         * @return true if at least one element matches, false otherwise
         */
        public static boolean isExistInfomation(Document document, String condition) {
            // select returns an Elements collection, so an empty result means no match.
            return document != null && !document.select(condition).isEmpty();
        }
    }

Original article: http://www.cnblogs.com/gaojupeng/p/5728094.html