
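Page tables establish the association between the virtual address space of a user process and the system's physical memory (RAM, page frames).

They give every process a uniform virtual address space.

They map virtual memory pages onto physical memory, which is what makes shared memory possible.

Pages can be swapped out to a block device, which increases the effective amount of usable memory without adding physical RAM.

The kernel's memory management code always assumes four-level page tables.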

3.3.1 Data structures

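The kernel source assumes that void * and unsigned long need the same number of bits, so the two can be cast to each other without losing information. In other words, it assumes sizeof(void *) == sizeof(unsigned long), which holds on every architecture Linux supports. (This does not hold for unsigned long long, which is 64 bits wide even on 32-bit targets where pointers are 32 bits.)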
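The assumption can be checked at compile time. The following is a minimal user-space sketch, not kernel code; `roundtrip_ok` is a hypothetical helper introduced only for illustration. It should compile on ILP32 and LP64 targets such as Linux, and would deliberately fail to compile on an LLP64 target, where unsigned long is narrower than a pointer.

```c
#include <assert.h>   /* static_assert (C11) */

/* Refuse to build if the kernel's assumption does not hold here. */
static_assert(sizeof(void *) == sizeof(unsigned long),
              "void * and unsigned long differ in size on this target");

/* Hypothetical helper: cast a pointer to unsigned long and back,
 * then report whether the original pointer value survived. */
static inline int roundtrip_ok(void *p)
{
    unsigned long raw = (unsigned long)p;   /* pointer -> integer */
    return (void *)raw == p;                /* integer -> pointer */
}
```

Because the sizes match, the round trip loses no bits, which is why kernel interfaces can pass addresses around as plain unsigned long values.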

1. Breakdown of a memory address

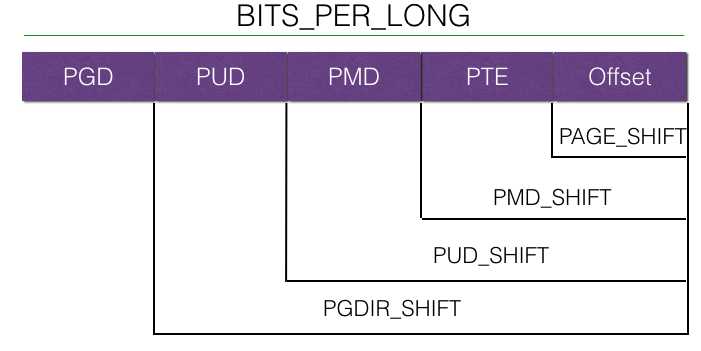

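Following the structure of the four-level page table, a virtual address is divided into five parts: one index for each of the four table levels, plus the offset within the page.

Architectures differ not only in address length but also in how the address word is split up. The kernel therefore defines macros that break an address down into its components.

BITS_PER_LONG specifies the number of bits in an unsigned long variable, and therefore also in a generic pointer into the virtual address space.

The macros involved in this design, such as PAGE_SHIFT, are defined in page.h. The Linux tree has two such files: the generic one, linux-3.08/include/asm-generic/page.h, and one in the architecture-specific directory, which for MIPS is linux-3.08/arch/mips/include/asm/page.h. As a rule, when the architecture directory provides a definition, that definition is the one used.

So let us look at linux-3.08/arch/mips/include/asm/page.h: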

/* * This file is subject to the terms and conditions of the GNU General Public * License. See the file "COPYING" in the main directory of this archive * for more details. * * Copyright (C) 1994 - 1999, 2000, 03 Ralf Baechle * Copyright (C) 1999, 2000 Silicon Graphics, Inc. */ #ifndef _ASM_PAGE_H #define _ASM_PAGE_H #include <spaces.h> #include <linux/const.h> /* * PAGE_SHIFT determines the page size */ #ifdef CONFIG_PAGE_SIZE_4KB #define PAGE_SHIFT 12 #endif #ifdef CONFIG_PAGE_SIZE_8KB #define PAGE_SHIFT 13 #endif #ifdef CONFIG_PAGE_SIZE_16KB #define PAGE_SHIFT 14 #endif #ifdef CONFIG_PAGE_SIZE_32KB #define PAGE_SHIFT 15 #endif #ifdef CONFIG_PAGE_SIZE_64KB #define PAGE_SHIFT 16 #endif #define PAGE_SIZE (_AC(1,UL) << PAGE_SHIFT) #define PAGE_MASK (~((1 << PAGE_SHIFT) - 1)) #ifdef CONFIG_HUGETLB_PAGE #define HPAGE_SHIFT (PAGE_SHIFT + PAGE_SHIFT - 3) #define HPAGE_SIZE (_AC(1,UL) << HPAGE_SHIFT) #define HPAGE_MASK (~(HPAGE_SIZE - 1)) #define HUGETLB_PAGE_ORDER (HPAGE_SHIFT - PAGE_SHIFT) #endif /* CONFIG_HUGETLB_PAGE */ #ifndef __ASSEMBLY__ #include <linux/pfn.h> #include <asm/io.h> extern void build_clear_page(void); extern void build_copy_page(void); /* * It‘s normally defined only for FLATMEM config but it‘s * used in our early mem init code for all memory models. * So always define it. 
*/ #define ARCH_PFN_OFFSET PFN_UP(PHYS_OFFSET) extern void clear_page(void * page); extern void copy_page(void * to, void * from); extern unsigned long shm_align_mask; static inline unsigned long pages_do_alias(unsigned long addr1, unsigned long addr2) { return (addr1 ^ addr2) & shm_align_mask; } struct page; static inline void clear_user_page(void *addr, unsigned long vaddr, struct page *page) { extern void (*flush_data_cache_page)(unsigned long addr); clear_page(addr); if (cpu_has_vtag_dcache || (cpu_has_dc_aliases && pages_do_alias((unsigned long) addr, vaddr & PAGE_MASK))) flush_data_cache_page((unsigned long)addr); } extern void copy_user_page(void *vto, void *vfrom, unsigned long vaddr, struct page *to); struct vm_area_struct; extern void copy_user_highpage(struct page *to, struct page *from, unsigned long vaddr, struct vm_area_struct *vma); #define __HAVE_ARCH_COPY_USER_HIGHPAGE /* * These are used to make use of C type-checking.. */ #ifdef CONFIG_64BIT_PHYS_ADDR #ifdef CONFIG_CPU_MIPS32 typedef struct { unsigned long pte_low, pte_high; } pte_t; #define pte_val(x) ((x).pte_low | ((unsigned long long)(x).pte_high << 32)) #define __pte(x) ({ pte_t __pte = {(x), ((unsigned long long)(x)) >> 32}; __pte; }) #else typedef struct { unsigned long long pte; } pte_t; #define pte_val(x) ((x).pte) #define __pte(x) ((pte_t) { (x) } ) #endif #else typedef struct { unsigned long pte; } pte_t; #define pte_val(x) ((x).pte) #define __pte(x) ((pte_t) { (x) } ) #endif typedef struct page *pgtable_t; /* * Right now we don‘t support 4-level pagetables, so all pud-related * definitions come from <asm-generic/pgtable-nopud.h>. 
*/ /* * Finall the top of the hierarchy, the pgd */ typedef struct { unsigned long pgd; } pgd_t; #define pgd_val(x) ((x).pgd) #define __pgd(x) ((pgd_t) { (x) } ) /* * Manipulate page protection bits */ typedef struct { unsigned long pgprot; } pgprot_t; #define pgprot_val(x) ((x).pgprot) #define __pgprot(x) ((pgprot_t) { (x) } ) /* * On R4000-style MMUs where a TLB entry is mapping a adjacent even / odd * pair of pages we only have a single global bit per pair of pages. When * writing to the TLB make sure we always have the bit set for both pages * or none. This macro is used to access the `buddy‘ of the pte we‘re just * working on. */ #define ptep_buddy(x) ((pte_t *)((unsigned long)(x) ^ sizeof(pte_t))) #endif /* !__ASSEMBLY__ */ /* * __pa()/__va() should be used only during mem init. */ #ifdef CONFIG_64BIT #define __pa(x) \ ({ unsigned long __x = (unsigned long)(x); __x < CKSEG0 ? XPHYSADDR(__x) : CPHYSADDR(__x); }) #else #define __pa(x) \ ((unsigned long)(x) - PAGE_OFFSET + PHYS_OFFSET) #endif #define __va(x) ((void *)((unsigned long)(x) + PAGE_OFFSET - PHYS_OFFSET)) /* * RELOC_HIDE was originally added by 6007b903dfe5f1d13e0c711ac2894bdd4a61b1ad * (lmo) rsp. 8431fd094d625b94d364fe393076ccef88e6ce18 (kernel.org). 
The * discussion can be found in lkml posting * <a2ebde260608230500o3407b108hc03debb9da6e62c@mail.gmail.com> which is * archived at http://lists.linuxcoding.com/kernel/2006-q3/msg17360.html * * It is unclear if the misscompilations mentioned in * http://lkml.org/lkml/2010/8/8/138 also affect MIPS so we keep this one * until GCC 3.x has been retired before we can apply * https://patchwork.linux-mips.org/patch/1541/ */ #define __pa_symbol(x) __pa(RELOC_HIDE((unsigned long)(x), 0)) #define pfn_to_kaddr(pfn) __va((pfn) << PAGE_SHIFT) #ifdef CONFIG_FLATMEM #define pfn_valid(pfn) \ ({ unsigned long __pfn = (pfn); /* avoid <linux/bootmem.h> include hell */ extern unsigned long min_low_pfn; __pfn >= min_low_pfn && __pfn < max_mapnr; }) #elif defined(CONFIG_SPARSEMEM) /* pfn_valid is defined in linux/mmzone.h */ #elif defined(CONFIG_NEED_MULTIPLE_NODES) #define pfn_valid(pfn) \ ({ unsigned long __pfn = (pfn); int __n = pfn_to_nid(__pfn); ((__n >= 0) ? (__pfn < NODE_DATA(__n)->node_start_pfn + NODE_DATA(__n)->node_spanned_pages) : 0); }) #endif #define virt_to_page(kaddr) pfn_to_page(PFN_DOWN(virt_to_phys(kaddr))) #define virt_addr_valid(kaddr) pfn_valid(PFN_DOWN(virt_to_phys(kaddr))) /* #define VM_DATA_DEFAULT_FLAGS (VM_READ | VM_WRITE | VM_EXEC | VM_MAYREAD | VM_MAYWRITE | VM_MAYEXEC) */ #define VM_DATA_DEFAULT_FLAGS (VM_READ | VM_WRITE | \ VM_MAYREAD | VM_MAYWRITE) #define UNCAC_ADDR(addr) ((addr) - PAGE_OFFSET + UNCAC_BASE + \ PHYS_OFFSET) #define CAC_ADDR(addr) ((addr) - UNCAC_BASE + PAGE_OFFSET - \ PHYS_OFFSET) #include <asm-generic/memory_model.h> #include <asm-generic/getorder.h> #endif /* _ASM_PAGE_H */

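Reading the code above yields the following facts:

PAGE_SHIFT: the number of low-order address bits that give the offset within a page, that is, the bits left over below the last-level page table index. With 4 KiB pages (CONFIG_PAGE_SIZE_4KB), PAGE_SHIFT == 12.

PAGE_SIZE: the size of one page. With 4 KiB pages, PAGE_SIZE == 4096.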

xxxx xxxx xxxx xxxx xxxx xxxx xxxx xxxx
0000 0000 0000 0000 0001 0000 0000 0000 (1 << 12) [PAGE_SIZE]
0000 0000 0000 0000 0000 1111 1111 1111 ((1 << 12) - 1)
1111 1111 1111 1111 1111 0000 0000 0000 (~((1 << 12) - 1)) [PAGE_MASK]
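The bit patterns can be reproduced in a few lines of user-space C. This is a sketch that assumes the 4 KiB page configuration; `page_base` and `page_offset` are hypothetical helper names, and PAGE_MASK is derived from the unsigned long PAGE_SIZE here rather than from the int expression used in the MIPS header.

```c
#include <assert.h>   /* static_assert (C11) */

#define PAGE_SHIFT 12                      /* assumes CONFIG_PAGE_SIZE_4KB */
#define PAGE_SIZE  (1UL << PAGE_SHIFT)     /* 0x1000 = 4096 */
#define PAGE_MASK  (~(PAGE_SIZE - 1))      /* low 12 bits cleared */

static_assert(PAGE_SIZE == 4096, "sketch assumes 4 KiB pages");

/* Start address of the page that contains addr. */
static inline unsigned long page_base(unsigned long addr)
{
    return addr & PAGE_MASK;
}

/* Byte offset of addr inside its page. */
static inline unsigned long page_offset(unsigned long addr)
{
    return addr & ~PAGE_MASK;
}
```

For the address 0x12345678, `page_base` yields 0x12345000 and `page_offset` yields 0x678.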

For the mips32 architecture, PGDIR_SHIFT is defined in linux-3.08/arch/mips/include/asm/pgtable-32.h:

/* * This file is subject to the terms and conditions of the GNU General Public * License. See the file "COPYING" in the main directory of this archive * for more details. * * Copyright (C) 1994, 95, 96, 97, 98, 99, 2000, 2003 Ralf Baechle * Copyright (C) 1999, 2000, 2001 Silicon Graphics, Inc. */ #ifndef _ASM_PGTABLE_32_H #define _ASM_PGTABLE_32_H #include <asm/addrspace.h> #include <asm/page.h> #include <linux/linkage.h> #include <asm/cachectl.h> #include <asm/fixmap.h> #include <asm-generic/pgtable-nopmd.h> /* * - add_wired_entry() add a fixed TLB entry, and move wired register */ extern void add_wired_entry(unsigned long entrylo0, unsigned long entrylo1, unsigned long entryhi, unsigned long pagemask); /* * - add_temporary_entry() add a temporary TLB entry. We use TLB entries * starting at the top and working down. This is for populating the * TLB before trap_init() puts the TLB miss handler in place. It * should be used only for entries matching the actual page tables, * to prevent inconsistencies. */ extern int add_temporary_entry(unsigned long entrylo0, unsigned long entrylo1, unsigned long entryhi, unsigned long pagemask); /* Basically we have the same two-level (which is the logical three level * Linux page table layout folded) page tables as the i386. Some day * when we have proper page coloring support we can have a 1% quicker * tlb refill handling mechanism, but for now it is a bit slower but * works even with the cache aliasing problem the R4k and above have. */ /* PGDIR_SHIFT determines what a third-level page table entry can map */ #define PGDIR_SHIFT (2 * PAGE_SHIFT + PTE_ORDER - PTE_T_LOG2) #define PGDIR_SIZE (1UL << PGDIR_SHIFT) #define PGDIR_MASK (~(PGDIR_SIZE-1)) /* * Entries per page directory level: we use two-level, so * we don‘t really have any PUD/PMD directory physically. */ #define __PGD_ORDER (32 - 3 * PAGE_SHIFT + PGD_T_LOG2 + PTE_T_LOG2) #define PGD_ORDER (__PGD_ORDER >= 0 ? 
__PGD_ORDER : 0) #define PUD_ORDER aieeee_attempt_to_allocate_pud #define PMD_ORDER 1 #define PTE_ORDER 0 #define PTRS_PER_PGD (USER_PTRS_PER_PGD * 2) #define PTRS_PER_PTE ((PAGE_SIZE << PTE_ORDER) / sizeof(pte_t)) #define USER_PTRS_PER_PGD (0x80000000UL/PGDIR_SIZE) #define FIRST_USER_ADDRESS 0 #define VMALLOC_START MAP_BASE #define PKMAP_BASE (0xfe000000UL) #ifdef CONFIG_HIGHMEM # define VMALLOC_END (PKMAP_BASE-2*PAGE_SIZE) #else # define VMALLOC_END (FIXADDR_START-2*PAGE_SIZE) #endif #ifdef CONFIG_64BIT_PHYS_ADDR #define pte_ERROR(e) \ printk("%s:%d: bad pte %016Lx.\n", __FILE__, __LINE__, pte_val(e)) #else #define pte_ERROR(e) \ printk("%s:%d: bad pte %08lx.\n", __FILE__, __LINE__, pte_val(e)) #endif #define pgd_ERROR(e) \ printk("%s:%d: bad pgd %08lx.\n", __FILE__, __LINE__, pgd_val(e)) extern void load_pgd(unsigned long pg_dir); extern pte_t invalid_pte_table[PAGE_SIZE/sizeof(pte_t)]; /* * Empty pgd/pmd entries point to the invalid_pte_table. */ static inline int pmd_none(pmd_t pmd) { return pmd_val(pmd) == (unsigned long) invalid_pte_table; } #define pmd_bad(pmd) (pmd_val(pmd) & ~PAGE_MASK) static inline int pmd_present(pmd_t pmd) { return pmd_val(pmd) != (unsigned long) invalid_pte_table; } static inline void pmd_clear(pmd_t *pmdp) { pmd_val(*pmdp) = ((unsigned long) invalid_pte_table); } #if defined(CONFIG_64BIT_PHYS_ADDR) && defined(CONFIG_CPU_MIPS32) #define pte_page(x) pfn_to_page(pte_pfn(x)) #define pte_pfn(x) ((unsigned long)((x).pte_high >> 6)) static inline pte_t pfn_pte(unsigned long pfn, pgprot_t prot) { pte_t pte; pte.pte_high = (pfn << 6) | (pgprot_val(prot) & 0x3f); pte.pte_low = pgprot_val(prot); return pte; } #else #define pte_page(x) pfn_to_page(pte_pfn(x)) #ifdef CONFIG_CPU_VR41XX #define pte_pfn(x) ((unsigned long)((x).pte >> (PAGE_SHIFT + 2))) #define pfn_pte(pfn, prot) __pte(((pfn) << (PAGE_SHIFT + 2)) | pgprot_val(prot)) #else #define pte_pfn(x) ((unsigned long)((x).pte >> _PFN_SHIFT)) #define pfn_pte(pfn, prot) __pte(((unsigned long 
long)(pfn) << _PFN_SHIFT) | pgprot_val(prot)) #endif #endif /* defined(CONFIG_64BIT_PHYS_ADDR) && defined(CONFIG_CPU_MIPS32) */ #define __pgd_offset(address) pgd_index(address) #define __pud_offset(address) (((address) >> PUD_SHIFT) & (PTRS_PER_PUD-1)) #define __pmd_offset(address) (((address) >> PMD_SHIFT) & (PTRS_PER_PMD-1)) /* to find an entry in a kernel page-table-directory */ #define pgd_offset_k(address) pgd_offset(&init_mm, address) #define pgd_index(address) (((address) >> PGDIR_SHIFT) & (PTRS_PER_PGD-1)) /* to find an entry in a page-table-directory */ #define pgd_offset(mm, addr) ((mm)->pgd + pgd_index(addr)) /* Find an entry in the third-level page table.. */ #define __pte_offset(address) \ (((address) >> PAGE_SHIFT) & (PTRS_PER_PTE - 1)) #define pte_offset(dir, address) \ ((pte_t *) pmd_page_vaddr(*(dir)) + __pte_offset(address)) #define pte_offset_kernel(dir, address) \ ((pte_t *) pmd_page_vaddr(*(dir)) + __pte_offset(address)) #define pte_offset_map(dir, address) \ ((pte_t *)page_address(pmd_page(*(dir))) + __pte_offset(address)) #define pte_unmap(pte) ((void)(pte)) #if defined(CONFIG_CPU_R3000) || defined(CONFIG_CPU_TX39XX) /* Swap entries must have VALID bit cleared. */ #define __swp_type(x) (((x).val >> 10) & 0x1f) #define __swp_offset(x) ((x).val >> 15) #define __swp_entry(type,offset) \ ((swp_entry_t) { ((type) << 10) | ((offset) << 15) }) /* * Bits 0, 4, 8, and 9 are taken, split up 28 bits of offset into this range: */ #define PTE_FILE_MAX_BITS 28 #define pte_to_pgoff(_pte) ((((_pte).pte >> 1 ) & 0x07) | \ (((_pte).pte >> 2 ) & 0x38) | (((_pte).pte >> 10) << 6 )) #define pgoff_to_pte(off) ((pte_t) { (((off) & 0x07) << 1 ) | \ (((off) & 0x38) << 2 ) | (((off) >> 6 ) << 10) | _PAGE_FILE }) #else /* Swap entries must have VALID and GLOBAL bits cleared. 
*/ #if defined(CONFIG_64BIT_PHYS_ADDR) && defined(CONFIG_CPU_MIPS32) #define __swp_type(x) (((x).val >> 2) & 0x1f) #define __swp_offset(x) ((x).val >> 7) #define __swp_entry(type,offset) \ ((swp_entry_t) { ((type) << 2) | ((offset) << 7) }) #else #define __swp_type(x) (((x).val >> 8) & 0x1f) #define __swp_offset(x) ((x).val >> 13) #define __swp_entry(type,offset) \ ((swp_entry_t) { ((type) << 8) | ((offset) << 13) }) #endif /* defined(CONFIG_64BIT_PHYS_ADDR) && defined(CONFIG_CPU_MIPS32) */ #if defined(CONFIG_64BIT_PHYS_ADDR) && defined(CONFIG_CPU_MIPS32) /* * Bits 0 and 1 of pte_high are taken, use the rest for the page offset... */ #define PTE_FILE_MAX_BITS 30 #define pte_to_pgoff(_pte) ((_pte).pte_high >> 2) #define pgoff_to_pte(off) ((pte_t) { _PAGE_FILE, (off) << 2 }) #else /* * Bits 0, 4, 6, and 7 are taken, split up 28 bits of offset into this range: */ #define PTE_FILE_MAX_BITS 28 #define pte_to_pgoff(_pte) ((((_pte).pte >> 1) & 0x7) | \ (((_pte).pte >> 2) & 0x8) | (((_pte).pte >> 8) << 4)) #define pgoff_to_pte(off) ((pte_t) { (((off) & 0x7) << 1) | \ (((off) & 0x8) << 2) | (((off) >> 4) << 8) | _PAGE_FILE }) #endif #endif #if defined(CONFIG_64BIT_PHYS_ADDR) && defined(CONFIG_CPU_MIPS32) #define __pte_to_swp_entry(pte) ((swp_entry_t) { (pte).pte_high }) #define __swp_entry_to_pte(x) ((pte_t) { 0, (x).val }) #else #define __pte_to_swp_entry(pte) ((swp_entry_t) { pte_val(pte) }) #define __swp_entry_to_pte(x) ((pte_t) { (x).val }) #endif #endif /* _ASM_PGTABLE_32_H */

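I only care about the mips32 configuration here. So the question is: given that Linux assumes four-level page tables, how does mips32 end up using only two levels?

Linux ships generic headers for configurations that have no PUD or PMD: linux-3.08/include/asm-generic/pgtable-nopmd.h and pgtable-nopud.h: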

/* linux-3.08/include/asm-generic/pgtable-nopmd.h */ #ifndef _PGTABLE_NOPMD_H #define _PGTABLE_NOPMD_H #ifndef __ASSEMBLY__ #include <asm-generic/pgtable-nopud.h> struct mm_struct; #define __PAGETABLE_PMD_FOLDED /* * Having the pmd type consist of a pud gets the size right, and allows * us to conceptually access the pud entry that this pmd is folded into * without casting. */ typedef struct { pud_t pud; } pmd_t; #define PMD_SHIFT PUD_SHIFT #define PTRS_PER_PMD 1 #define PMD_SIZE (1UL << PMD_SHIFT) #define PMD_MASK (~(PMD_SIZE-1)) /* * The "pud_xxx()" functions here are trivial for a folded two-level * setup: the pmd is never bad, and a pmd always exists (as it‘s folded * into the pud entry) */ static inline int pud_none(pud_t pud) { return 0; } static inline int pud_bad(pud_t pud) { return 0; } static inline int pud_present(pud_t pud) { return 1; } static inline void pud_clear(pud_t *pud) { } #define pmd_ERROR(pmd) (pud_ERROR((pmd).pud)) #define pud_populate(mm, pmd, pte) do { } while (0) /* * (pmds are folded into puds so this doesn‘t get actually called, * but the define is needed for a generic inline function.) */ #define set_pud(pudptr, pudval) set_pmd((pmd_t *)(pudptr), (pmd_t) { pudval }) static inline pmd_t * pmd_offset(pud_t * pud, unsigned long address) { return (pmd_t *)pud; } #define pmd_val(x) (pud_val((x).pud)) #define __pmd(x) ((pmd_t) { __pud(x) } ) #define pud_page(pud) (pmd_page((pmd_t){ pud })) #define pud_page_vaddr(pud) (pmd_page_vaddr((pmd_t){ pud })) /* * allocating and freeing a pmd is trivial: the 1-entry pmd is * inside the pud, so has no extra memory associated with it. */ #define pmd_alloc_one(mm, address) NULL static inline void pmd_free(struct mm_struct *mm, pmd_t *pmd) { } #define __pmd_free_tlb(tlb, x, a) do { } while (0) #undef pmd_addr_end #define pmd_addr_end(addr, end) (end) #endif /* __ASSEMBLY__ */ #endif /* _PGTABLE_NOPMD_H */

/* linux-3.08/include/asm-generic/pgtable-nopud.h */ #ifndef _PGTABLE_NOPUD_H #define _PGTABLE_NOPUD_H #ifndef __ASSEMBLY__ #define __PAGETABLE_PUD_FOLDED /* * Having the pud type consist of a pgd gets the size right, and allows * us to conceptually access the pgd entry that this pud is folded into * without casting. */ typedef struct { pgd_t pgd; } pud_t; #define PUD_SHIFT PGDIR_SHIFT #define PTRS_PER_PUD 1 #define PUD_SIZE (1UL << PUD_SHIFT) #define PUD_MASK (~(PUD_SIZE-1)) /* * The "pgd_xxx()" functions here are trivial for a folded two-level * setup: the pud is never bad, and a pud always exists (as it‘s folded * into the pgd entry) */ static inline int pgd_none(pgd_t pgd) { return 0; } static inline int pgd_bad(pgd_t pgd) { return 0; } static inline int pgd_present(pgd_t pgd) { return 1; } static inline void pgd_clear(pgd_t *pgd) { } #define pud_ERROR(pud) (pgd_ERROR((pud).pgd)) #define pgd_populate(mm, pgd, pud) do { } while (0) /* * (puds are folded into pgds so this doesn‘t get actually called, * but the define is needed for a generic inline function.) */ #define set_pgd(pgdptr, pgdval) set_pud((pud_t *)(pgdptr), (pud_t) { pgdval }) static inline pud_t * pud_offset(pgd_t * pgd, unsigned long address) { return (pud_t *)pgd; } #define pud_val(x) (pgd_val((x).pgd)) #define __pud(x) ((pud_t) { __pgd(x) } ) #define pgd_page(pgd) (pud_page((pud_t){ pgd })) #define pgd_page_vaddr(pgd) (pud_page_vaddr((pud_t){ pgd })) /* * allocating and freeing a pud is trivial: the 1-entry pud is * inside the pgd, so has no extra memory associated with it. */ #define pud_alloc_one(mm, address) NULL #define pud_free(mm, x) do { } while (0) #define __pud_free_tlb(tlb, x, a) do { } while (0) #undef pud_addr_end #define pud_addr_end(addr, end) (end) #endif /* __ASSEMBLY__ */ #endif /* _PGTABLE_NOPUD_H */

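PMD_SHIFT and PUD_SHIFT both resolve to PGDIR_SHIFT. The key point, however, is that PTRS_PER_PUD and PTRS_PER_PMD are both defined as 1. What does that mean?

PTRS_PER_PUD: the number of pointers a second-level table (PUD) can hold.

PTRS_PER_PMD: the number of pointers a third-level table (PMD) can hold.

With both set to 1, the Linux kernel still believes it is working with four-level page tables, while in reality there are only two levels.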
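The folding can be modelled in user space. The sketch below copies the type nesting and the pud_offset/pmd_offset bodies from the two generic headers; `walk_to_pmd_value` is a hypothetical helper added only to show that the "walk" through the folded levels never leaves the PGD entry.

```c
#include <assert.h>   /* static_assert (C11) */

typedef struct { unsigned long pgd; } pgd_t;
typedef struct { pgd_t pgd; } pud_t;     /* pud folded into the pgd entry */
typedef struct { pud_t pud; } pmd_t;     /* pmd folded into the pud entry */

#define PTRS_PER_PUD 1
#define PTRS_PER_PMD 1

/* A folded entry costs no extra memory: all three types have one size. */
static_assert(sizeof(pmd_t) == sizeof(pgd_t), "folded levels must not grow");

/* With one entry per table there is nothing to index: reuse the pointer. */
static inline pud_t *pud_offset(pgd_t *pgd, unsigned long address)
{
    (void)address;
    return (pud_t *)pgd;
}

static inline pmd_t *pmd_offset(pud_t *pud, unsigned long address)
{
    (void)address;
    return (pmd_t *)pud;
}

/* Hypothetical helper: perform the generic pgd -> pud -> pmd steps
 * and return the raw value found at the end. */
static inline unsigned long walk_to_pmd_value(pgd_t *pgd_entry,
                                              unsigned long address)
{
    pud_t *pud = pud_offset(pgd_entry, address);
    pmd_t *pmd = pmd_offset(pud, address);
    return pmd->pud.pgd.pgd;
}
```

Generic code can keep calling all four lookup steps; on a folded configuration two of them compile down to a pointer cast.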

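The PAGE_SHIFT code above also defines PAGE_MASK. PUD_MASK, PMD_MASK and PGDIR_MASK exist in the same way.

These masks are used to pick components out of a given address: a bitwise AND of the address with a mask clears the low-order bits and leaves the part of the address at that level's boundary.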
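As a worked example, the shift and PTRS_PER_* macros from pgtable-32.h can be used to split a 32-bit address into its two-level components. This is a user-space sketch under stated assumptions: 4 KiB pages, and PTE_T_LOG2 == 2 (a 4-byte pte_t), a value that does not appear in the listings above. `struct vaddr_parts` and `split_vaddr` are hypothetical names.

```c
#include <assert.h>   /* static_assert (C11) */
#include <stdint.h>

#define PAGE_SHIFT        12   /* assumes CONFIG_PAGE_SIZE_4KB */
#define PTE_ORDER         0
#define PTE_T_LOG2        2    /* assumption: log2 of a 4-byte pte_t */
#define PGDIR_SHIFT       (2 * PAGE_SHIFT + PTE_ORDER - PTE_T_LOG2)
#define PGDIR_SIZE        (UINT32_C(1) << PGDIR_SHIFT)
#define PTRS_PER_PTE      ((UINT32_C(1) << (PAGE_SHIFT + PTE_ORDER)) / 4)
#define USER_PTRS_PER_PGD (UINT32_C(0x80000000) / PGDIR_SIZE)
#define PTRS_PER_PGD      (USER_PTRS_PER_PGD * 2)

static_assert(PGDIR_SHIFT == 22, "10 PGD bits + 10 PTE bits + 12 offset bits");
static_assert(PTRS_PER_PGD == 1024 && PTRS_PER_PTE == 1024, "1024 entries each");

struct vaddr_parts {
    uint32_t pgd_index;   /* index into the page global directory */
    uint32_t pte_index;   /* index into the page table */
    uint32_t offset;      /* byte offset inside the page */
};

static inline struct vaddr_parts split_vaddr(uint32_t addr)
{
    struct vaddr_parts p;
    p.pgd_index = (addr >> PGDIR_SHIFT) & (PTRS_PER_PGD - 1);
    p.pte_index = (addr >> PAGE_SHIFT) & (PTRS_PER_PTE - 1);
    p.offset    = addr & ((UINT32_C(1) << PAGE_SHIFT) - 1);
    return p;
}
```

For 0x12345678 this gives PGD index 0x48, PTE index 0x345 and offset 0x678. The PUD and PMD contribute no bits because each holds a single entry.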

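2. Format of the page tables

pgd_t: an entry in the page global directory.

pud_t: an entry in the page upper directory.

pmd_t: an entry in the page middle directory.

pte_t: a direct page table entry.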

typedef struct { unsigned long pgd; } pgd_t;
typedef struct { pgd_t pgd; } pud_t;
typedef struct { pud_t pud; } pmd_t;
typedef struct { unsigned long pte; } pte_t;

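PAGE_ALIGN: aligns the given address to the start of the next page; an address that is already page-aligned is returned unchanged. With a page size of 4096 the macro always returns a multiple of 4096, for example PAGE_ALIGN(6000) == 8192.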
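The usual way to express this rounding is to add PAGE_SIZE - 1 and then mask off the low bits. A minimal user-space sketch, assuming 4 KiB pages (the kernel's own definition is not shown in the listings above, so treat this form as an illustration):

```c
#include <assert.h>   /* static_assert (C11) */

#define PAGE_SHIFT 12                      /* assumes 4 KiB pages */
#define PAGE_SIZE  (1UL << PAGE_SHIFT)
#define PAGE_MASK  (~(PAGE_SIZE - 1))

/* The add-then-mask trick only works for power-of-two sizes. */
static_assert((PAGE_SIZE & (PAGE_SIZE - 1)) == 0,
              "page size must be a power of two");

/* Round addr up to the next page boundary (aligned input is unchanged). */
#define PAGE_ALIGN(addr) (((addr) + PAGE_SIZE - 1) & PAGE_MASK)
```

Here PAGE_ALIGN(6000UL) evaluates to 8192, while PAGE_ALIGN(4096UL) stays at 4096.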

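3. PTE-specific information

An entry in the last-level page table holds not only a pointer to the memory location of the page but also, in the spare bits mentioned above, additional information about the page. This information is CPU-specific and provides access control for the page.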
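The idea of packing a frame number and flag bits into one word can be sketched as follows. The shape of pfn_pte and pte_pfn follows the macros in pgtable-32.h, but everything prefixed DEMO_ is hypothetical: the real _PFN_SHIFT value and the real flag bits are CPU-specific and are not given in the listings above. `pte_has` is likewise a hypothetical helper.

```c
#include <assert.h>   /* static_assert (C11) */

#define DEMO_PFN_SHIFT    12            /* hypothetical stand-in for _PFN_SHIFT */
#define DEMO_PAGE_PRESENT (1UL << 0)    /* hypothetical flag bits */
#define DEMO_PAGE_WRITE   (1UL << 1)
#define DEMO_PAGE_DIRTY   (1UL << 2)

/* Flags must live below the frame number so the two never collide. */
static_assert(DEMO_PAGE_DIRTY < (1UL << DEMO_PFN_SHIFT),
              "flag bits must fit under the PFN");

typedef struct { unsigned long pte; } pte_t;
typedef struct { unsigned long pgprot; } pgprot_t;

/* Build an entry: frame number in the high bits, flags in the low bits. */
static inline pte_t pfn_pte(unsigned long pfn, pgprot_t prot)
{
    pte_t pte = { (pfn << DEMO_PFN_SHIFT) | prot.pgprot };
    return pte;
}

/* Recover the page frame number from an entry. */
static inline unsigned long pte_pfn(pte_t pte)
{
    return pte.pte >> DEMO_PFN_SHIFT;
}

/* Hypothetical helper: test whether a flag bit is set. */
static inline int pte_has(pte_t pte, unsigned long flag)
{
    return (pte.pte & flag) != 0;
}
```

Because pages are aligned, the low bits of a frame address are always zero, which is what leaves room for the flags.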

I will not go into the details here; we will come back to them when they are needed.


Original article: http://www.cnblogs.com/ronnydm/p/5756158.html