
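Background: I recently wrote a simple netdisk search program in PHP and connected it to the WeChat Official Accounts Platform under the name 网盘小说. Users send a keyword to the Official Account, and it replies with matching netdisk download links. The feature is simple and similar to the many netdisk search sites out there. Both the crawler and the search program are written in PHP. Full-text search and word segmentation use the open-source xunsearch.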

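In the previous post ([PHP] Netdisk Search Engine - Crawling Baidu Netdisk Shared Files for Netdisk Search), I focused on how to collect a large number of Baidu Netdisk users. This post covers how to get the share list of a given user. The principle is the same: find the API Baidu uses to return share lists, then call it in a loop.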

Finding the share-list API

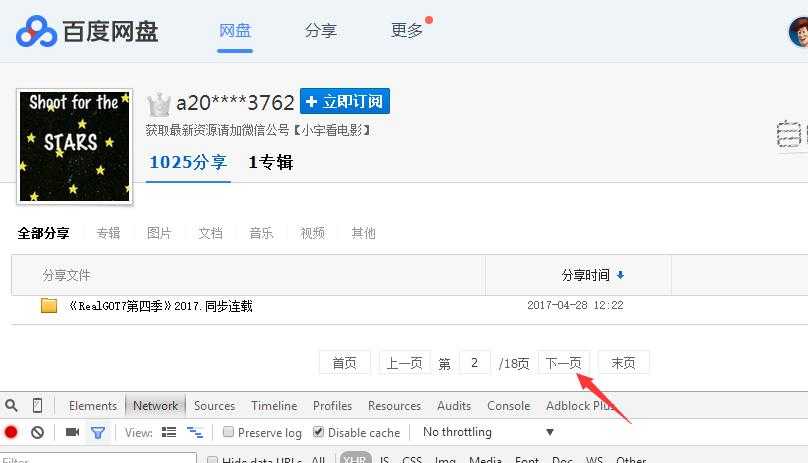

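Open any netdisk user's share page and click a pagination link at the bottom. The request this fires, visible in the browser's developer tools, is the API that returns the share list.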

The full request URL is:

https://pan.baidu.com/pcloud/feed/getsharelist?t=1493892795526&category=0&auth_type=1&request_location=share_home&start=60&limit=60&query_uk=4162539356&channel=chunlei&clienttype=0&web=1&logid=MTQ5Mzg5Mjc5NTUyNzAuOTEwNDc2NTU1NTgyMTM1OQ==&bdstoken=bc329b0677cad94231e973953a09b46f

Calling the API

Request this URL with PHP's cURL to see whether data comes back. In my test it returned `{"errno":2,"request_id":1775381927}` and no data. The reason is that Baidu checks the Referer request header. After I set Referer to http://www.baidu.com, the data came back. The parameters can also be trimmed down to:

https://pan.baidu.com/pcloud/feed/getsharelist?&auth_type=1&request_location=share_home&start=60&limit=60&query_uk=4162539356
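The simplification above amounts to keeping five query parameters and dropping the rest. A small sketch of doing that programmatically with `parse_url`, `parse_str` and `http_build_query`. The helper name `simplifyShareListUrl` is hypothetical, and the parameter whitelist only reflects what worked in the test above, not documented API behaviour.

```php
<?php
// Hypothetical helper: reduce the browser's full request URL to the
// parameters that were found to be sufficient. The whitelist is an
// observation from testing, not a documented contract.
function simplifyShareListUrl(string $fullUrl): string
{
    $parts = parse_url($fullUrl);
    parse_str($parts['query'] ?? '', $params);

    // Keep only these keys, in this order
    $keep = ['auth_type', 'request_location', 'start', 'limit', 'query_uk'];
    $kept = [];
    foreach ($keep as $key) {
        if (isset($params[$key])) {
            $kept[$key] = $params[$key];
        }
    }

    return $parts['scheme'] . '://' . $parts['host'] . $parts['path']
        . '?' . http_build_query($kept);
}

$full = 'https://pan.baidu.com/pcloud/feed/getsharelist?t=1493892795526&category=0&auth_type=1&request_location=share_home&start=60&limit=60&query_uk=4162539356&channel=chunlei&clienttype=0&web=1&logid=MTQ5Mzg5Mjc5NTUyNzAuOTEwNDc2NTU1NTgyMTM1OQ==&bdstoken=bc329b0677cad94231e973953a09b46f';
echo simplifyShareListUrl($full), "\n";
// https://pan.baidu.com/pcloud/feed/getsharelist?auth_type=1&request_location=share_home&start=60&limit=60&query_uk=4162539356
```

The stray `&` right after `?` in the hand-written URL disappears here because `http_build_query` joins only the kept pairs.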

Test code:

```php
<?php
/*
 * Fetch a user's share list
 */
class TextsSpider
{
    /**
     * Send an HTTP request (POST when $data is given, otherwise GET)
     */
    public function sendRequest($url, $data = null, $header = null)
    {
        $curl = curl_init();
        curl_setopt($curl, CURLOPT_URL, $url);
        // Certificate verification is disabled, as in the original test code
        curl_setopt($curl, CURLOPT_SSL_VERIFYPEER, false);
        curl_setopt($curl, CURLOPT_SSL_VERIFYHOST, false);
        if (!empty($data)) {
            curl_setopt($curl, CURLOPT_POST, 1);
            curl_setopt($curl, CURLOPT_POSTFIELDS, $data);
        }
        if (!empty($header)) {
            curl_setopt($curl, CURLOPT_HTTPHEADER, $header);
        }
        curl_setopt($curl, CURLOPT_RETURNTRANSFER, 1);
        $output = curl_exec($curl);
        curl_close($curl);
        return $output;
    }
}

$textsSpider = new TextsSpider();
// Without this Referer the API answers {"errno":2,...}
$header = array(
    'Referer: http://www.baidu.com'
);
$str = $textsSpider->sendRequest("https://pan.baidu.com/pcloud/feed/getsharelist?&auth_type=1&request_location=share_home&start=60&limit=60&query_uk=4162539356", null, $header);
echo $str;
```
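Because a blocked request still returns valid JSON, it is worth checking `errno` before using the data. A minimal sketch, assuming any non-zero `errno` means failure (only `errno` 2, for a missing Referer, was actually observed above). `decodeShareList` is a hypothetical helper.

```php
<?php
// Hypothetical helper: return the records of a getsharelist response,
// or null when the body is not JSON or the API reports an error.
// Assumption: every non-zero errno is treated as a failure.
function decodeShareList(string $json): ?array
{
    $data = json_decode($json, true);
    if (!is_array($data) || !isset($data['errno'])) {
        return null; // not JSON, or not the expected shape
    }
    if ((int) $data['errno'] !== 0) {
        // errno 2 was seen when the Referer header was missing
        error_log("getsharelist error, errno={$data['errno']}");
        return null;
    }
    return $data['records'] ?? [];
}

var_dump(decodeShareList('{"errno":2,"request_id":1775381927}')); // NULL
```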

The share-list JSON looks like this:

```json
{
  "errno": 0,
  "request_id": 1985680203,
  "total_count": 1025,
  "records": [
    {
      "feed_type": "share",
      "category": 6,
      "public": "1",
      "shareid": "98963537",
      "data_id": "1799945104803474515",
      "title": "《通灵少女》2017.同步台视(完结待删)",
      "third": 0,
      "clienttype": 0,
      "filecount": 1,
      "uk": 4162539356,
      "username": "a20****3762",
      "feed_time": 1493626027308,
      "desc": "",
      "avatar_url": "https://ss0.bdstatic.com/7Ls0a8Sm1A5BphGlnYG/sys/portrait/item/01f8831f.jpg",
      "dir_cnt": 1,
      "filelist": [
        {
          "server_filename": "《通灵少女》2017.同步台视(完结待删)",
          "category": 6,
          "isdir": 1,
          "size": 1024,
          "fs_id": 98994643773159,
          "path": "%2F%E3%80%8A%E9%80%9A%E7%81%B5%E5%B0%91%E5%A5%B3%E3%80%8B2017.%E5%90%8C%E6%AD%A5%E5%8F%B0%E8%A7%86%EF%BC%88%E5%AE%8C%E7%BB%93%E5%BE%85%E5%88%A0%EF%BC%89",
          "md5": "0",
          "sign": "86de8a14f72e6e3798d525c689c0e4575b1a7728",
          "time_stamp": 1493895381
        }
      ],
      "source_uid": "528742401",
      "source_id": "98963537",
      "shorturl": "1pKPCF0J",
      "vCnt": 356,
      "dCnt": 29,
      "tCnt": 184
    },
    {
      "source_uid": "528742401",
      "source_id": "152434783",
      "shorturl": "1qYdhFkC",
      "vCnt": 1022,
      "dCnt": 29,
      "tCnt": 345
    }
  ]
}
```

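As in the previous post: a full-featured search site would keep all the useful fields, but I am building the simplest possible version, so I only keep the `title` and the `shareid`.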
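Keeping only those two fields is a simple filter over `records`. A sketch with a hypothetical `extractShares` helper; skipping records that lack either field is an assumption, made because the sample response above contains a second record without a `title`.

```php
<?php
// Hypothetical helper: keep only title and shareid from each record.
// Records missing either field are skipped.
function extractShares(array $records): array
{
    $shares = [];
    foreach ($records as $record) {
        if (!isset($record['title'], $record['shareid'])) {
            continue;
        }
        $shares[] = [
            'title'   => $record['title'],
            'shareid' => (string) $record['shareid'],
        ];
    }
    return $shares;
}
```

The result can be combined with the queried `uk` to build each download URL.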

Each shared file's download page URL has the form http://pan.baidu.com/share/link?shareid={$shareId}&uk={$uk}, so the user's uk and the share ID are all you need to build it.
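The URL pattern above translates directly into code. A sketch with a hypothetical `makeShareUrl` helper. Both IDs are passed as strings because a uk such as 4162539356 does not fit in a 32-bit integer.

```php
<?php
// Hypothetical helper: build the share page URL from the pattern above.
function makeShareUrl(string $shareId, string $uk): string
{
    return 'http://pan.baidu.com/share/link?' . http_build_query([
        'shareid' => $shareId,
        'uk'      => $uk,
    ]);
}

echo makeShareUrl('98963537', '4162539356'), "\n";
// http://pan.baidu.com/share/link?shareid=98963537&uk=4162539356
```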

Generating the paginated API URLs

Assume a user has shared at most 30,000 files. At 60 per page that is 500 pages, so the URLs can be generated like this:

```php
<?php
/*
 * Fetch a user's share list
 */
class TextsSpider
{
    private $pages = 500; // number of pages
    private $limit = 60;  // shares per page

    /**
     * Build the URL of every page of the share-list API
     */
    public function makeUrl($rootUk)
    {
        $urls = array();
        // $i < $pages yields exactly 500 pages (start = 0 ... 29940)
        for ($i = 0; $i < $this->pages; $i++) {
            $start = $this->limit * $i;
            $url = "https://pan.baidu.com/pcloud/feed/getsharelist?&auth_type=1&request_location=share_home&start={$start}&limit={$this->limit}&query_uk={$rootUk}";
            $urls[] = $url;
        }
        return $urls;
    }
}

$textsSpider = new TextsSpider();
$urls = $textsSpider->makeUrl(4162539356);
print_r($urls);
```

The generated page URLs look like this:

```text
Array
(
    [0] => https://pan.baidu.com/pcloud/feed/getsharelist?&auth_type=1&request_location=share_home&start=0&limit=60&query_uk=4162539356
    [1] => https://pan.baidu.com/pcloud/feed/getsharelist?&auth_type=1&request_location=share_home&start=60&limit=60&query_uk=4162539356
    [2] => https://pan.baidu.com/pcloud/feed/getsharelist?&auth_type=1&request_location=share_home&start=120&limit=60&query_uk=4162539356
    [3] => https://pan.baidu.com/pcloud/feed/getsharelist?&auth_type=1&request_location=share_home&start=180&limit=60&query_uk=4162539356
    [4] => https://pan.baidu.com/pcloud/feed/getsharelist?&auth_type=1&request_location=share_home&start=240&limit=60&query_uk=4162539356
    [5] => https://pan.baidu.com/pcloud/feed/getsharelist?&auth_type=1&request_location=share_home&start=300&limit=60&query_uk=4162539356
    [6] => https://pan.baidu.com/pcloud/feed/getsharelist?&auth_type=1&request_location=share_home&start=360&limit=60&query_uk=4162539356
    [7] => https://pan.baidu.com/pcloud/feed/getsharelist?&auth_type=1&request_location=share_home&start=420&limit=60&query_uk=4162539356
    [8] => https://pan.baidu.com/pcloud/feed/getsharelist?&auth_type=1&request_location=share_home&start=480&limit=60&query_uk=4162539356
    [9] => https://pan.baidu.com/pcloud/feed/getsharelist?&auth_type=1&request_location=share_home&start=540&limit=60&query_uk=4162539356
```
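The fixed 500 pages is only an upper bound. The sample response above includes `total_count` (1025 for this user), so the exact start offsets can be derived from the first response instead. A sketch, assuming `total_count` is the user's total number of shares as the sample suggests; `pageOffsets` is a hypothetical helper.

```php
<?php
// Hypothetical helper: start offsets needed to cover $totalCount items
// at $limit items per page. Assumes total_count counts the user's shares.
function pageOffsets(int $totalCount, int $limit): array
{
    if ($limit <= 0) {
        throw new InvalidArgumentException('limit must be positive');
    }
    $pages = (int) ceil($totalCount / $limit);
    $offsets = [];
    for ($i = 0; $i < $pages; $i++) {
        $offsets[] = $i * $limit;
    }
    return $offsets;
}

$offsets = pageOffsets(1025, 60);
echo count($offsets), ' pages, last start=', end($offsets), "\n";
// 18 pages, last start=1020
```

For this user that means 18 requests instead of 500, which also shortens the total sleep time between requests.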

Database table structure

```sql
CREATE TABLE `texts` (
  `id` int(10) unsigned NOT NULL AUTO_INCREMENT,
  `title` varchar(255) NOT NULL DEFAULT '',
  `url` varchar(255) NOT NULL DEFAULT '',
  `time` int(10) unsigned NOT NULL DEFAULT '0',
  PRIMARY KEY (`id`),
  KEY `title` (`title`(250))
) ENGINE=MyISAM;
```
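To fill this table, each share becomes one row. A sketch under two assumptions not stated in the article: `url` holds the share page URL built from shareid and uk, and `time` is the Unix timestamp at which the row was collected. `TEXTS_INSERT_SQL` and `makeTextRow` are hypothetical names. The named placeholders let PDO handle escaping instead of interpolating values into the SQL string.

```php
<?php
// Hypothetical parameterised INSERT for the `texts` table.
// Usage with PDO: $db->prepare(TEXTS_INSERT_SQL)->execute(makeTextRow(...));
const TEXTS_INSERT_SQL = 'INSERT INTO texts (title, url, time) VALUES (:title, :url, :time)';

// Assumptions: url = share page URL, time = collection timestamp.
function makeTextRow(string $title, string $shareId, string $uk, int $now): array
{
    return [
        ':title' => $title,
        ':url'   => 'http://pan.baidu.com/share/link?shareid=' . rawurlencode($shareId)
                    . '&uk=' . rawurlencode($uk),
        ':time'  => $now,
    ];
}
```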

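When looping through the pages, wait between requests to avoid getting blocked. The uk crawler below sleeps a random 7 to 11 seconds before each request.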
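The same pacing can wrap the share-list pages. A sketch of one user's crawl loop that pauses a random 7 to 11 seconds (the range the uk crawler below uses) between requests and stops at the first empty page. `crawlShares` is hypothetical, and the page fetcher and sleep function are injected as callables so the loop can be exercised without network access.

```php
<?php
// Hypothetical crawl loop. $fetchPage($start, $limit) returns an array of
// records (empty when there is nothing more); $sleep($seconds) pauses.
function crawlShares(callable $fetchPage, callable $sleep, int $limit, int $maxPages): array
{
    $all = [];
    for ($page = 0; $page < $maxPages; $page++) {
        if ($page > 0) {
            // Pause before every request after the first
            $sleep(random_int(7, 11));
        }
        $records = $fetchPage($page * $limit, $limit);
        if (empty($records)) {
            break; // no more shares, or the request failed
        }
        $all = array_merge($all, $records);
    }
    return $all;
}
```

In real use, `$sleep` would simply be `'sleep'`, and `$fetchPage` a closure that calls `sendRequest()` with the Referer header and decodes the JSON.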

The next post covers xunsearch word segmentation and full-text search, along with the complete code for this post.

Demo: follow the WeChat Official Account 网盘小说.

The complete code from the previous post, which loops over uks and stores them in the database:

```php
<?php
/*
 * Collect the users (uk) that a given user follows
 */
class UkSpider
{
    private $pages;      // number of pages
    private $limit = 24; // users per page
    private $db = null;  // database connection

    public function __construct($pages = 100)
    {
        $this->pages = $pages;
        $this->db = new PDO("mysql:host=localhost;dbname=pan", "root", "root");
        $this->db->query('set names utf8');
    }

    /**
     * Build the URL of every page of the follow-list API
     */
    public function makeUrl($rootUk)
    {
        $urls = array();
        for ($i = 0; $i < $this->pages; $i++) {
            $start = $this->limit * $i;
            $urls[] = "https://pan.baidu.com/pcloud/friend/getfollowlist?query_uk={$rootUk}&limit={$this->limit}&start={$start}";
        }
        return $urls;
    }

    /**
     * Return the uks of the followed users listed at one URL
     */
    public function getFollowsByUrl($url)
    {
        $result = $this->sendRequest($url);
        $arr = json_decode($result, true);
        if (empty($arr) || !isset($arr['follow_list'])) {
            return array();
        }
        $ret = array();
        foreach ($arr['follow_list'] as $fan) {
            $ret[] = $fan['follow_uk'];
        }
        return $ret;
    }

    /**
     * Send an HTTP request (POST when $data is given, otherwise GET)
     */
    public function sendRequest($url, $data = null, $header = null)
    {
        $curl = curl_init();
        curl_setopt($curl, CURLOPT_URL, $url);
        curl_setopt($curl, CURLOPT_SSL_VERIFYPEER, false);
        curl_setopt($curl, CURLOPT_SSL_VERIFYHOST, false);
        if (!empty($data)) {
            curl_setopt($curl, CURLOPT_POST, 1);
            curl_setopt($curl, CURLOPT_POSTFIELDS, $data);
        }
        if (!empty($header)) {
            curl_setopt($curl, CURLOPT_HTTPHEADER, $header);
        }
        curl_setopt($curl, CURLOPT_RETURNTRANSFER, 1);
        $output = curl_exec($curl);
        curl_close($curl);
        return $output;
    }

    /* Store the collected uks */
    public function addUks($uks)
    {
        $sth = $this->db->prepare("insert into uks (uk) values (?)");
        foreach ($uks as $uk) {
            $sth->execute(array($uk));
        }
    }

    /* Collect every user followed by one uk and store them */
    public function sleepGetByUk($uk)
    {
        $urls = $this->makeUrl($uk);
        // Loop over the page URLs
        foreach ($urls as $url) {
            echo "loading:" . $url . "\r\n";
            // Sleep a random 7 to 11 seconds
            $second = rand(7, 11);
            echo "sleep...{$second}s\r\n";
            sleep($second);
            // Send the request
            $followList = $this->getFollowsByUrl($url);
            // Stop once a page comes back empty
            if (empty($followList)) {
                break;
            }
            $this->addUks($followList);
        }
        // Mark this uk as processed so getUksFromDb() skips it next time
        $this->updateUkFollow($uk);
    }

    /* Read the uks whose follow list has not been fetched yet (get_follow = 0) */
    public function getUksFromDb()
    {
        $sth = $this->db->prepare("select * from uks where get_follow=0");
        $sth->execute();
        $uks = $sth->fetchAll(PDO::FETCH_ASSOC);
        $result = array();
        foreach ($uks as $uk) {
            $result[] = $uk['uk'];
        }
        return $result;
    }

    /* Set get_follow = 1 for a uk whose follow list has been fetched */
    public function updateUkFollow($uk)
    {
        $sth = $this->db->prepare("UPDATE uks SET get_follow=1 where uk=?");
        $sth->execute(array($uk));
    }
}

$ukSpider = new UkSpider();
$uks = $ukSpider->getUksFromDb();
foreach ($uks as $uk) {
    $ukSpider->sleepGetByUk($uk);
}
```

[PHP] Netdisk Search Engine - Crawling Baidu Netdisk Shared Files for Netdisk Search (Part 2)


Original article: http://www.cnblogs.com/taoshihan/p/6808575.html