Tags: red inno and hdfs isolation resultset import hive --

Sqoop: importing MySQL data into Hive (import)

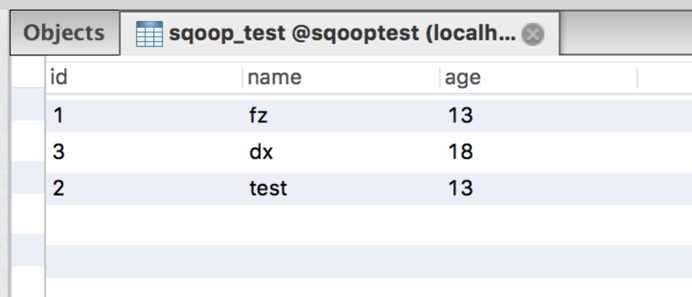

The source table in MySQL:

```sql
CREATE TABLE `sqoop_test` (
  `id` int(11) DEFAULT NULL,
  `name` varchar(255) DEFAULT NULL,
  `age` int(11) DEFAULT NULL
) ENGINE=InnoDB DEFAULT CHARSET=latin1;
```

Insert some data into the MySQL table.

Create an external table in Hive whose `location` is the directory Sqoop will write to:

```
hive> create external table sqoop_test(id int,name string,age int)
    > ROW FORMAT DELIMITED
    > FIELDS TERMINATED BY ','
    > STORED AS TEXTFILE
    > location '/user/hive/external/sqoop_test';
OK
Time taken: 0.145 seconds
```
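The `FIELDS TERMINATED BY ','` clause has to agree with the `--fields-terminated-by ,` option passed to Sqoop, because the external table simply reads whatever text files Sqoop leaves in `/user/hive/external/sqoop_test`. The sketch below uses plain `awk` (no Hadoop needed) to split the three rows that the Hive query in this post returns, the way a delimited text table would: with `,` each line yields three fields, while with Hive's default Ctrl-A (`\001`) delimiter the whole line lands in the first column. The helper name `show_split` is illustrative only; in Hive itself the mismatched case would typically surface as NULL columns, since `1,fz,13` is not a valid `int`.

```shell
#!/bin/sh
# Three rows in the comma-delimited text format that
# --fields-terminated-by , produces (values from the Hive query in this post).
rows='1,fz,13
3,dx,18
2,test,13'

# show_split SEP (hypothetical helper): split every row on SEP, as a delimited
# text table does, and print the field count followed by the three columns.
show_split() {
  printf '%s\n' "$rows" |
    awk -F "$1" '{ print NF ": id=" $1 " name=" $2 " age=" $3 }'
}

echo "Delimiter ',' (matches the Sqoop option):"
show_split ','

# Hive's default field delimiter is Ctrl-A (octal 001). No row contains it,
# so each whole line ends up in the first column and the other two are empty.
echo "Delimiter Ctrl-A (Hive default, mismatched):"
show_split "$(printf '\001')"
```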

Then run the Sqoop import into that directory:

```shell
sqoop import --connect jdbc:mysql://localhost:3306/sqooptest \
  --username root --password 123qwe \
  --table sqoop_test --columns id,name,age \
  --fields-terminated-by , \
  --delete-target-dir --target-dir /user/hive/external/sqoop_test/ \
  -m 1
```

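`--delete-target-dir`: delete the target directory first if it already exists. Without it, re-running the import fails because the output directory is already there.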
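This command passes `--password 123qwe` in plain text, which Sqoop itself warns is insecure (its warning suggests `-P`, which prompts for the password interactively). For scripted runs, Sqoop also accepts `--password-file`. The sketch below is one way to set that up, assuming a local file read through an explicit `file://` URI; the helper names `write_password_file` and `print_import_cmd` are hypothetical. Sqoop treats the entire file content as the password, so the file is written without a trailing newline and restricted to mode 400.

```shell
#!/bin/sh
# Sketch: run the same import with --password-file instead of --password.
# Helper names are hypothetical; the sqoop options come from the command above.

# write_password_file FILE PASSWORD
# Sqoop uses the whole file content as the password, so write no trailing
# newline (printf '%s') and let only the owner read it (mode 400).
write_password_file() {
  mkdir -p "$(dirname "$1")"
  rm -f "$1"                              # a previous mode-400 copy is not writable
  (umask 077 && printf '%s' "$2" > "$1")
  chmod 400 "$1"
}

# print_import_cmd FILE
# Print the import from this post, reading the password from the local file
# FILE (must be an absolute path) through an explicit file:// URI.
print_import_cmd() {
  echo sqoop import \
    --connect jdbc:mysql://localhost:3306/sqooptest \
    --username root \
    --password-file "file://$1" \
    --table sqoop_test \
    --columns id,name,age \
    --fields-terminated-by , \
    --delete-target-dir \
    --target-dir /user/hive/external/sqoop_test/ \
    -m 1
}
```

For example, `write_password_file "$HOME/.sqoop/sqooptest.pwd" '123qwe'` followed by `print_import_cmd "$HOME/.sqoop/sqooptest.pwd"` prints a command equivalent to the original one, minus the plain-text password. Whether a path without a scheme is read from HDFS or the local disk depends on the cluster's default filesystem, which is why the sketch spells out `file://`.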

```
EFdeMacBook-Pro:bin FengZhen$ sqoop import --connect jdbc:mysql://localhost:3306/sqooptest --username root --password 123qwe --table sqoop_test --columns id,name,age --fields-terminated-by , --delete-target-dir --target-dir /user/hive/external/sqoop_test/ -m 1
Warning: /Users/FengZhen/Desktop/Hadoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/../hcatalog does not exist! HCatalog jobs will fail.
Please set $HCAT_HOME to the root of your HCatalog installation.
Warning: /Users/FengZhen/Desktop/Hadoop/sqoop-1.4.6.bin__hadoop-2.0.4-alpha/../accumulo does not exist! Accumulo imports will fail.
Please set $ACCUMULO_HOME to the root of your Accumulo installation.
SLF4J: Class path contains multiple SLF4J bindings.
SLF4J: Found binding in [jar:file:/Users/FengZhen/Desktop/Hadoop/hadoop-2.8.0/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/Users/FengZhen/Desktop/Hadoop/hbase-1.3.0/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
17/09/13 11:12:19 INFO sqoop.Sqoop: Running Sqoop version: 1.4.6
17/09/13 11:12:19 WARN tool.BaseSqoopTool: Setting your password on the command-line is insecure. Consider using -P instead.
17/09/13 11:12:19 INFO manager.MySQLManager: Preparing to use a MySQL streaming resultset.
17/09/13 11:12:19 INFO tool.CodeGenTool: Beginning code generation
17/09/13 11:12:19 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM `sqoop_test` AS t LIMIT 1
17/09/13 11:12:19 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM `sqoop_test` AS t LIMIT 1
17/09/13 11:12:19 INFO orm.CompilationManager: HADOOP_MAPRED_HOME is /Users/FengZhen/Desktop/Hadoop/hadoop-2.8.0
17/09/13 11:12:21 INFO orm.CompilationManager: Writing jar file: /tmp/sqoop-FengZhen/compile/1a0c4154ffefb21d4af720813dd0b3fc/sqoop_test.jar
17/09/13 11:12:21 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
17/09/13 11:12:22 INFO tool.ImportTool: Destination directory /user/hive/external/sqoop_test deleted.
17/09/13 11:12:22 WARN manager.MySQLManager: It looks like you are importing from mysql.
17/09/13 11:12:22 WARN manager.MySQLManager: This transfer can be faster! Use the --direct
17/09/13 11:12:22 WARN manager.MySQLManager: option to exercise a MySQL-specific fast path.
17/09/13 11:12:22 INFO manager.MySQLManager: Setting zero DATETIME behavior to convertToNull (mysql)
17/09/13 11:12:22 INFO mapreduce.ImportJobBase: Beginning import of sqoop_test
17/09/13 11:12:22 INFO Configuration.deprecation: mapred.job.tracker is deprecated. Instead, use mapreduce.jobtracker.address
17/09/13 11:12:22 INFO Configuration.deprecation: mapred.jar is deprecated. Instead, use mapreduce.job.jar
17/09/13 11:12:22 INFO Configuration.deprecation: mapred.map.tasks is deprecated. Instead, use mapreduce.job.maps
17/09/13 11:12:22 INFO client.RMProxy: Connecting to ResourceManager at localhost/127.0.0.1:8032
17/09/13 11:12:24 INFO db.DBInputFormat: Using read commited transaction isolation
17/09/13 11:12:24 INFO mapreduce.JobSubmitter: number of splits:1
17/09/13 11:12:24 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1505268150495_0008
17/09/13 11:12:25 INFO impl.YarnClientImpl: Submitted application application_1505268150495_0008
17/09/13 11:12:25 INFO mapreduce.Job: The url to track the job: http://192.168.1.64:8088/proxy/application_1505268150495_0008/
17/09/13 11:12:25 INFO mapreduce.Job: Running job: job_1505268150495_0008
17/09/13 11:12:35 INFO mapreduce.Job: Job job_1505268150495_0008 running in uber mode : false
17/09/13 11:12:35 INFO mapreduce.Job:  map 0% reduce 0%
17/09/13 11:12:41 INFO mapreduce.Job:  map 100% reduce 0%
17/09/13 11:12:41 INFO mapreduce.Job: Job job_1505268150495_0008 completed successfully
17/09/13 11:12:41 INFO mapreduce.Job: Counters: 30
	File System Counters
		FILE: Number of bytes read=0
		FILE: Number of bytes written=156817
		FILE: Number of read operations=0
		FILE: Number of large read operations=0
		FILE: Number of write operations=0
		HDFS: Number of bytes read=87
		HDFS: Number of bytes written=26
		HDFS: Number of read operations=4
		HDFS: Number of large read operations=0
		HDFS: Number of write operations=2
	Job Counters
		Launched map tasks=1
		Other local map tasks=1
		Total time spent by all maps in occupied slots (ms)=3817
		Total time spent by all reduces in occupied slots (ms)=0
		Total time spent by all map tasks (ms)=3817
		Total vcore-milliseconds taken by all map tasks=3817
		Total megabyte-milliseconds taken by all map tasks=3908608
	Map-Reduce Framework
		Map input records=3
		Map output records=3
		Input split bytes=87
		Spilled Records=0
		Failed Shuffles=0
		Merged Map outputs=0
		GC time elapsed (ms)=33
		CPU time spent (ms)=0
		Physical memory (bytes) snapshot=0
		Virtual memory (bytes) snapshot=0
		Total committed heap usage (bytes)=154140672
	File Input Format Counters
		Bytes Read=0
	File Output Format Counters
		Bytes Written=26
17/09/13 11:12:41 INFO mapreduce.ImportJobBase: Transferred 26 bytes in 18.6372 seconds (1.3951 bytes/sec)
17/09/13 11:12:41 INFO mapreduce.ImportJobBase: Retrieved 3 records.
```
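The counters can be sanity-checked by hand. Sqoop's text output is one line per record, with fields joined by the `--fields-terminated-by` character and each line ending in a newline, so the three rows the Hive query returns (`1,fz,13`, `3,dx,18`, `2,test,13`) should add up to 8 + 8 + 10 = 26 bytes, matching `HDFS: Number of bytes written=26` and `Retrieved 3 records.`. A minimal shell sketch of that arithmetic (the function name `expected_part_file` is just for illustration, and row order does not affect the total):

```shell
#!/bin/sh
# Rebuild the text Sqoop should have written for the three records: one line
# per record, fields joined by ',' and each line ending in '\n'.
expected_part_file() {
  printf '%s\n' '1,fz,13' '3,dx,18' '2,test,13'
}

records=$(expected_part_file | wc -l | tr -d ' ')
bytes=$(expected_part_file | wc -c | tr -d ' ')

# Compare with "Retrieved 3 records." and "HDFS: Number of bytes written=26".
echo "records=$records bytes=$bytes"
```

On the cluster, the same comparison would be `hdfs dfs -cat /user/hive/external/sqoop_test/part-m-00000 | wc -c`, assuming the single map task (`-m 1`) wrote the usual `part-m-00000` file.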

The import succeeded. Query the data in Hive:

```
hive> select * from sqoop_test;
OK
1	fz	13
3	dx	18
2	test	13
Time taken: 1.756 seconds, Fetched: 3 row(s)
```



Original article: http://www.cnblogs.com/EnzoDin/p/7513995.html