Tags: sync tags max-connections ptime _id image execute operations planning

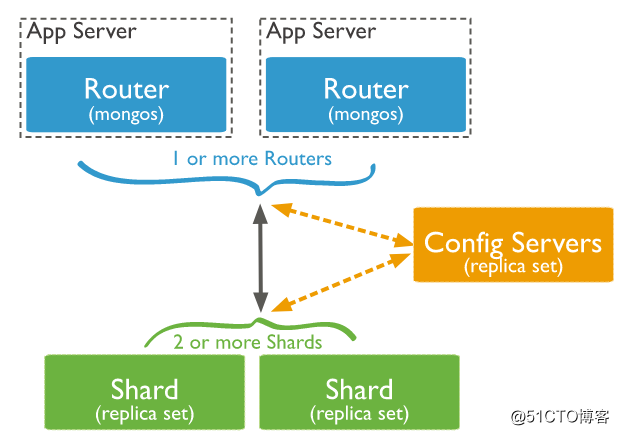

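Notes: Setting up MongoDB sharding

Sharding is the process of splitting a database and spreading it across different machines. Distributing the data this way lets you store more data and handle a larger load without needing powerful servers. The basic idea is to cut a collection into small chunks and distribute those chunks across several shards, so that each shard holds only part of the total data; a balancer then keeps the shards even by migrating data between them. All operations go through a routing process called mongos, which knows which data lives on which shard (it gets this mapping from the config servers). Most deployments shard to solve disk-space problems; writes may actually get slower, and queries should avoid spanning multiple shards whenever possible. When to use sharding: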

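1. The machine is running out of disk space: sharding solves the disk-space problem.

2. A single mongod can no longer keep up with the write load: sharding spreads the write pressure across the shards, each using its own server's resources.

3. You want to keep a large amount of data in memory for performance: as above, sharding lets you use the resources of every shard server.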

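In short, sharding splits a database by dividing large collections across different servers, and each shard is itself normally a replica set. For example, 100 GB of data can be split into 10 parts stored on 10 servers, so each machine holds only about 10 GB. Sharding is generally used only by large enterprises or companies with very large data volumes.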

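MongoDB stores and serves sharded data through a process called mongos (the router). In other words, mongos is the core of the sharded architecture and its single entry point: clients cannot tell whether the data is sharded and simply send their reads and writes to mongos.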

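Although sharding spreads the data over many servers, every node still needs a standby (which is why each shard is a replica set); that is what keeps the data highly available.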

When the system needs more space or resources, sharding lets us scale out horizontally on demand: we just add machines running MongoDB to the sharded cluster.
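To make the balancer idea concrete, here is a deliberately simplified JavaScript (Node.js) sketch, not MongoDB's actual balancer: when an empty shard joins, chunks are moved one at a time from the fullest shard to the emptiest until their chunk counts differ by no more than a threshold. The function name `balance` and the threshold value of 2 are assumptions chosen for illustration.

```javascript
// Toy balancer, NOT MongoDB's real algorithm: move one chunk at a time from
// the shard with the most chunks to the shard with the fewest, until the
// difference is at most `threshold` (a hypothetical parameter, must be >= 1).
function balance(chunkCounts, threshold) {
  if (threshold < 1) {
    throw new Error("threshold must be at least 1 or balancing may never stop");
  }
  var names = Object.keys(chunkCounts);
  var counts = {};
  names.forEach(function (n) { counts[n] = chunkCounts[n]; });
  var moves = [];
  if (names.length === 0) {
    return { counts: counts, moves: moves };
  }
  for (;;) {
    var most = names[0];
    var least = names[0];
    names.forEach(function (n) {
      if (counts[n] > counts[most]) most = n;
      if (counts[n] < counts[least]) least = n;
    });
    if (counts[most] - counts[least] <= threshold) break;
    counts[most] -= 1;  // one chunk leaves the fullest shard...
    counts[least] += 1; // ...and lands on the emptiest one
    moves.push({ from: most, to: least });
  }
  return { counts: counts, moves: moves };
}

// shard4 has just been added and holds no chunks yet.
var result = balance({ shard1: 10, shard2: 10, shard3: 10, shard4: 0 }, 2);
console.log(result.counts);       // { shard1: 8, shard2: 8, shard3: 8, shard4: 6 }
console.log(result.moves.length); // 6
```

The real balancer also has to copy the documents in each chunk and update the metadata on the config servers; the sketch only models the chunk counts.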

MongoDB sharding architecture diagram:

MongoDB sharding concepts:

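mongos: the entry point for all requests to the cluster. Every request is coordinated through mongos, so the application does not need its own routing layer: mongos is itself the request dispatcher and forwards each data request to the appropriate shard server. Production deployments usually run several mongos instances as entry points, so that requests can still be served if one of them goes down.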

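config server: stores the metadata (routing and sharding configuration) for all databases. mongos does not persist the shard and routing information itself; it only caches it in memory, while the config servers actually store it. When mongos starts for the first time, or is restarted, it loads this configuration from the config servers; when the metadata changes later, mongos refreshes its cached copy (for example, when a shard reports that the routing version mongos used is stale), so it keeps routing accurately. Production deployments usually run several config servers, because they hold the shard routing metadata and it must not be lost.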
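A minimal sketch of what mongos does with the cached metadata, assuming range-based sharding: each chunk covers a half-open range [min, max) of the shard key and belongs to one shard. The chunk boundaries below are made up, `routeToShard` is a hypothetical name, and -Infinity/Infinity stand in for MongoDB's $minKey/$maxKey.

```javascript
// Simplified model of range-based routing: find the chunk whose
// [min, max) range contains the shard-key value and return its shard.
function routeToShard(chunks, key) {
  for (var i = 0; i < chunks.length; i++) {
    var c = chunks[i];
    if (key >= c.min && key < c.max) {
      return c.shard;
    }
  }
  throw new Error("no chunk covers key " + key);
}

// Hypothetical metadata: three chunks spread over three shards.
var exampleChunks = [
  { min: -Infinity, max: 1000, shard: "shard1" },
  { min: 1000, max: 5000, shard: "shard2" },
  { min: 5000, max: Infinity, shard: "shard3" }
];

console.log(routeToShard(exampleChunks, 42));   // shard1
console.log(routeToShard(exampleChunks, 1000)); // shard2
console.log(routeToShard(exampleChunks, 9999)); // shard3
```

A query that includes the shard key can be sent to one shard this way; a query without it has to be broadcast to all shards, which is why cross-shard queries are best avoided.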

Resources are limited, so I am using three machines, A, B, and C, for this demo.

Their IP addresses are:

Machine A: 192.168.77.128

Machine B: 192.168.77.130

Machine C: 192.168.77.134

On each of the three machines, create the directories that each role needs:

mkdir -p /data/mongodb/mongos/log

mkdir -p /data/mongodb/config/{data,log}

mkdir -p /data/mongodb/shard1/{data,log}

mkdir -p /data/mongodb/shard2/{data,log}

mkdir -p /data/mongodb/shard3/{data,log}

Since MongoDB 3.4, the config servers must be deployed as a replica set.
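The rs.initiate() documents used in this walkthrough (configs, shard1, shard2, shard3) all follow one pattern, so they can be generated. The following Node.js sketch uses a hypothetical helper, `buildReplSetConfig`; the hosts, ports, and arbiter placement mirror the ones used later in this post, and the config server set gets no arbiter because config server replica sets do not support arbiters.

```javascript
// Hypothetical helper: builds the document passed to rs.initiate().
// Every member listens on the same port; the member whose host equals
// arbiterHost (if given) is marked arbiterOnly: true.
function buildReplSetConfig(setName, hosts, port, arbiterHost) {
  return {
    _id: setName,
    members: hosts.map(function (host, i) {
      var member = { _id: i, host: host + ":" + port };
      if (host === arbiterHost) {
        member.arbiterOnly = true;
      }
      return member;
    })
  };
}

// Machines A, B and C from this walkthrough.
var clusterHosts = ["192.168.77.128", "192.168.77.130", "192.168.77.134"];

// Config server replica set: no arbiter.
var configsConfig = buildReplSetConfig("configs", clusterHosts, 21000);
// The arbiter role rotates: 134 for shard1, 128 for shard2, 130 for shard3.
var shard1Config = buildReplSetConfig("shard1", clusterHosts, 27001, "192.168.77.134");
var shard2Config = buildReplSetConfig("shard2", clusterHosts, 27002, "192.168.77.128");
var shard3Config = buildReplSetConfig("shard3", clusterHosts, 27003, "192.168.77.130");

console.log(JSON.stringify(shard1Config, null, 2));
```

The function body uses nothing Node-specific, so after pasting it and `clusterHosts` into the mongo shell, `rs.initiate(buildReplSetConfig("shard1", clusterHosts, 27001, "192.168.77.134"))` should be equivalent to the `config = {...}` / `rs.initiate(config)` pair typed by hand below.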

Add the configuration file (do this on all three machines):

[root@localhost ~]# mkdir /etc/mongod/

[root@localhost ~]# vim /etc/mongod/config.conf # add the following content

pidfilepath = /var/run/mongodb/configsrv.pid

dbpath = /data/mongodb/config/data

logpath = /data/mongodb/config/log/configsrv.log

logappend = true

bind_ip = 0.0.0.0 # the IP to listen on

port = 21000

fork = true

configsvr = true #declare this is a config db of a cluster;

replSet=configs # replica set name

maxConns=20000 # maximum number of connections

Start the config server on all three machines:

[root@localhost ~]# mongod -f /etc/mongod/config.conf # run on all three machines

about to fork child process, waiting until server is ready for connections.

forked process: 4183

child process started successfully, parent exiting

[root@localhost ~]# ps aux |grep mongo

mongod 2518 1.1 2.3 1544488 89064 ? Sl 09:57 0:42 /usr/bin/mongod -f /etc/mongod.conf

root 4183 1.1 1.3 1072404 50992 ? Sl 10:56 0:00 mongod -f /etc/mongod/config.conf

root 4240 0.0 0.0 112660 964 pts/0 S+ 10:57 0:00 grep --color=auto mongo

[root@localhost ~]# netstat -lntp |grep mongod

tcp 0 0 192.168.77.128:21000 0.0.0.0:* LISTEN 4183/mongod

tcp 0 0 192.168.77.128:27017 0.0.0.0:* LISTEN 2518/mongod

tcp 0 0 127.0.0.1:27017 0.0.0.0:* LISTEN 2518/mongod

Log in to port 21000 on any one of the machines and initialize the replica set:

[root@localhost ~]# mongo --host 192.168.77.128 --port 21000

> config = { _id: "configs", members: [ {_id : 0, host : "192.168.77.128:21000"},{_id : 1, host : "192.168.77.130:21000"},{_id : 2, host : "192.168.77.134:21000"}] }

{

"_id" : "configs",

"members" : [

{

"_id" : 0,

"host" : "192.168.77.128:21000"

},

{

"_id" : 1,

"host" : "192.168.77.130:21000"

},

{

"_id" : 2,

"host" : "192.168.77.134:21000"

}

]

}

> rs.initiate(config) # initialize the replica set

{

"ok" : 1,

"operationTime" : Timestamp(1515553318, 1),

"$gleStats" : {

"lastOpTime" : Timestamp(1515553318, 1),

"electionId" : ObjectId("000000000000000000000000")

},

"$clusterTime" : {

"clusterTime" : Timestamp(1515553318, 1),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

}

}

configs:SECONDARY> rs.status() # make sure every member is healthy

{

"set" : "configs",

"date" : ISODate("2018-01-10T03:03:40.244Z"),

"myState" : 1,

"term" : NumberLong(1),

"configsvr" : true,

"heartbeatIntervalMillis" : NumberLong(2000),

"optimes" : {

"lastCommittedOpTime" : {

"ts" : Timestamp(1515553411, 1),

"t" : NumberLong(1)

},

"readConcernMajorityOpTime" : {

"ts" : Timestamp(1515553411, 1),

"t" : NumberLong(1)

},

"appliedOpTime" : {

"ts" : Timestamp(1515553411, 1),

"t" : NumberLong(1)

},

"durableOpTime" : {

"ts" : Timestamp(1515553411, 1),

"t" : NumberLong(1)

}

},

"members" : [

{

"_id" : 0,

"name" : "192.168.77.128:21000",

"health" : 1,

"state" : 1,

"stateStr" : "PRIMARY",

"uptime" : 415,

"optime" : {

"ts" : Timestamp(1515553411, 1),

"t" : NumberLong(1)

},

"optimeDate" : ISODate("2018-01-10T03:03:31Z"),

"infoMessage" : "could not find member to sync from",

"electionTime" : Timestamp(1515553329, 1),

"electionDate" : ISODate("2018-01-10T03:02:09Z"),

"configVersion" : 1,

"self" : true

},

{

"_id" : 1,

"name" : "192.168.77.130:21000",

"health" : 1,

"state" : 2,

"stateStr" : "SECONDARY",

"uptime" : 101,

"optime" : {

"ts" : Timestamp(1515553411, 1),

"t" : NumberLong(1)

},

"optimeDurable" : {

"ts" : Timestamp(1515553411, 1),

"t" : NumberLong(1)

},

"optimeDate" : ISODate("2018-01-10T03:03:31Z"),

"optimeDurableDate" : ISODate("2018-01-10T03:03:31Z"),

"lastHeartbeat" : ISODate("2018-01-10T03:03:39.973Z"),

"lastHeartbeatRecv" : ISODate("2018-01-10T03:03:38.804Z"),

"pingMs" : NumberLong(0),

"syncingTo" : "192.168.77.134:21000",

"configVersion" : 1

},

{

"_id" : 2,

"name" : "192.168.77.134:21000",

"health" : 1,

"state" : 2,

"stateStr" : "SECONDARY",

"uptime" : 101,

"optime" : {

"ts" : Timestamp(1515553411, 1),

"t" : NumberLong(1)

},

"optimeDurable" : {

"ts" : Timestamp(1515553411, 1),

"t" : NumberLong(1)

},

"optimeDate" : ISODate("2018-01-10T03:03:31Z"),

"optimeDurableDate" : ISODate("2018-01-10T03:03:31Z"),

"lastHeartbeat" : ISODate("2018-01-10T03:03:39.945Z"),

"lastHeartbeatRecv" : ISODate("2018-01-10T03:03:38.726Z"),

"pingMs" : NumberLong(0),

"syncingTo" : "192.168.77.128:21000",

"configVersion" : 1

}

],

"ok" : 1,

"operationTime" : Timestamp(1515553411, 1),

"$gleStats" : {

"lastOpTime" : Timestamp(1515553318, 1),

"electionId" : ObjectId("7fffffff0000000000000001")

},

"$clusterTime" : {

"clusterTime" : Timestamp(1515553411, 1),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

}

}
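Reading the full rs.status() output by eye gets tedious, so a small checker can help. The sketch below (hypothetical helper `checkReplSetStatus`) looks only at the `members[].health` and `members[].stateStr` fields shown above and flags anything other than exactly one PRIMARY with every member healthy. The sample is a trimmed copy of the output above.

```javascript
// Hypothetical helper: inspects an rs.status()-style document and lists problems.
function checkReplSetStatus(status) {
  var problems = [];
  var primaries = status.members.filter(function (m) {
    return m.stateStr === "PRIMARY";
  });
  if (primaries.length !== 1) {
    problems.push("expected exactly 1 PRIMARY, found " + primaries.length);
  }
  status.members.forEach(function (m) {
    if (m.health !== 1) {
      problems.push(m.name + " is unhealthy (health=" + m.health + ")");
    }
    if (["PRIMARY", "SECONDARY", "ARBITER"].indexOf(m.stateStr) === -1) {
      problems.push(m.name + " is in state " + m.stateStr);
    }
  });
  return { ok: problems.length === 0, problems: problems };
}

// Trimmed copy of the configs rs.status() output above.
var configsSample = {
  set: "configs",
  members: [
    { name: "192.168.77.128:21000", health: 1, stateStr: "PRIMARY" },
    { name: "192.168.77.130:21000", health: 1, stateStr: "SECONDARY" },
    { name: "192.168.77.134:21000", health: 1, stateStr: "SECONDARY" }
  ]
};
console.log(checkReplSetStatus(configsSample)); // { ok: true, problems: [] }
```

In the mongo shell, paste the function and call `checkReplSetStatus(rs.status())` on any of the replica sets in this post.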

Add the shard configuration files (do this on all three machines):

[root@localhost ~]# vim /etc/mongod/shard1.conf

pidfilepath = /var/run/mongodb/shard1.pid

dbpath = /data/mongodb/shard1/data

logpath = /data/mongodb/shard1/log/shard1.log

logappend = true

logRotate=rename

bind_ip = 0.0.0.0 # the IP to listen on

port = 27001

fork = true

replSet=shard1 # replica set name

shardsvr = true #declare this is a shard db of a cluster;

maxConns=20000 # maximum number of connections
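shard1.conf above and the shard2.conf and shard3.conf files that follow differ only in the shard name and port, so they can be generated from one template. A Node.js sketch, assuming the same paths and the port scheme 27000 + N used here; `renderShardConf` is a hypothetical name and the inline comments are left out.

```javascript
// Hypothetical helper: renders the legacy (INI-style) shardN.conf used in
// this walkthrough. Only the shard name and port change between files.
function renderShardConf(n) {
  var name = "shard" + n;
  return [
    "pidfilepath = /var/run/mongodb/" + name + ".pid",
    "dbpath = /data/mongodb/" + name + "/data",
    "logpath = /data/mongodb/" + name + "/log/" + name + ".log",
    "logappend = true",
    "logRotate=rename",
    "bind_ip = 0.0.0.0",
    "port = " + (27000 + n),
    "fork = true",
    "replSet=" + name,
    "shardsvr = true",
    "maxConns=20000"
  ].join("\n") + "\n";
}

// Print the file for the shard number given on the command line (default 1).
var shardNumber = Number(process.argv[2] || 1);
process.stdout.write(renderShardConf(shardNumber));
```

For example, saved under a hypothetical name such as `render-shard-conf.js`, running `node render-shard-conf.js 2 > /etc/mongod/shard2.conf` would write the shard2 file.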

[root@localhost ~]# vim /etc/mongod/shard2.conf # add the following content

pidfilepath = /var/run/mongodb/shard2.pid

dbpath = /data/mongodb/shard2/data

logpath = /data/mongodb/shard2/log/shard2.log

logappend = true

logRotate=rename

bind_ip = 0.0.0.0 # the IP to listen on

port = 27002

fork = true

replSet=shard2 # replica set name

shardsvr = true #declare this is a shard db of a cluster;

maxConns=20000 # maximum number of connections

[root@localhost ~]# vim /etc/mongod/shard3.conf # add the following content

pidfilepath = /var/run/mongodb/shard3.pid

dbpath = /data/mongodb/shard3/data

logpath = /data/mongodb/shard3/log/shard3.log

logappend = true

logRotate=rename

bind_ip = 0.0.0.0 # the IP to listen on

port = 27003

fork = true

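replSet=shard3 # replica set name

shardsvr = true #declare this is a shard db of a cluster;

maxConns=20000 # maximum number of connections

Once all the files are in place, start the instances one at a time; each one must be started on all three machines:

1. Start shard1 first: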

[root@localhost ~]# mongod -f /etc/mongod/shard1.conf # run on all three machines

about to fork child process, waiting until server is ready for connections.

forked process: 13615

child process started successfully, parent exiting

[root@localhost ~]# ps aux |grep shard1

root 13615 0.7 1.3 1023224 52660 ? Sl 17:16 0:00 mongod -f /etc/mongod/shard1.conf

root 13670 0.0 0.0 112660 964 pts/0 R+ 17:17 0:00 grep --color=auto shard1

Then log in to port 27001 on 128 or 130 to initialize the replica set. 134 cannot be used here because port 27001 on 134 is the arbiter for shard1:

[root@localhost ~]# mongo --host 192.168.77.128 --port 27001

> use admin

switched to db admin

> config = { _id: "shard1", members: [ {_id : 0, host : "192.168.77.128:27001"}, {_id: 1,host : "192.168.77.130:27001"},{_id : 2, host : "192.168.77.134:27001",arbiterOnly:true}] }

{

"_id" : "shard1",

"members" : [

{

"_id" : 0,

"host" : "192.168.77.128:27001"

},

{

"_id" : 1,

"host" : "192.168.77.130:27001"

},

{

"_id" : 2,

"host" : "192.168.77.134:27001",

"arbiterOnly" : true

}

]

}

> rs.initiate(config) # 初始化副本集

{ "ok" : 1 }

shard1:SECONDARY> rs.status() # check the status

{

"set" : "shard1",

"date" : ISODate("2018-01-10T09:21:37.682Z"),

"myState" : 1,

"term" : NumberLong(1),

"heartbeatIntervalMillis" : NumberLong(2000),

"optimes" : {

"lastCommittedOpTime" : {

"ts" : Timestamp(1515576097, 1),

"t" : NumberLong(1)

},

"readConcernMajorityOpTime" : {

"ts" : Timestamp(1515576097, 1),

"t" : NumberLong(1)

},

"appliedOpTime" : {

"ts" : Timestamp(1515576097, 1),

"t" : NumberLong(1)

},

"durableOpTime" : {

"ts" : Timestamp(1515576097, 1),

"t" : NumberLong(1)

}

},

"members" : [

{

"_id" : 0,

"name" : "192.168.77.128:27001",

"health" : 1,

"state" : 1,

"stateStr" : "PRIMARY",

"uptime" : 317,

"optime" : {

"ts" : Timestamp(1515576097, 1),

"t" : NumberLong(1)

},

"optimeDate" : ISODate("2018-01-10T09:21:37Z"),

"infoMessage" : "could not find member to sync from",

"electionTime" : Timestamp(1515576075, 1),

"electionDate" : ISODate("2018-01-10T09:21:15Z"),

"configVersion" : 1,

"self" : true

},

{

"_id" : 1,

"name" : "192.168.77.130:27001",

"health" : 1,

"state" : 2,

"stateStr" : "SECONDARY",

"uptime" : 33,

"optime" : {

"ts" : Timestamp(1515576097, 1),

"t" : NumberLong(1)

},

"optimeDurable" : {

"ts" : Timestamp(1515576097, 1),

"t" : NumberLong(1)

},

"optimeDate" : ISODate("2018-01-10T09:21:37Z"),

"optimeDurableDate" : ISODate("2018-01-10T09:21:37Z"),

"lastHeartbeat" : ISODate("2018-01-10T09:21:37.262Z"),

"lastHeartbeatRecv" : ISODate("2018-01-10T09:21:36.213Z"),

"pingMs" : NumberLong(0),

"syncingTo" : "192.168.77.128:27001",

"configVersion" : 1

},

{

"_id" : 2,

"name" : "192.168.77.134:27001",

"health" : 1,

"state" : 7,

"stateStr" : "ARBITER", # 134 is the arbiter

"uptime" : 33,

"lastHeartbeat" : ISODate("2018-01-10T09:21:37.256Z"),

"lastHeartbeatRecv" : ISODate("2018-01-10T09:21:36.024Z"),

"pingMs" : NumberLong(0),

"configVersion" : 1

}

],

"ok" : 1

}

2. Once shard1 is configured, start shard2:

[root@localhost ~]# mongod -f /etc/mongod/shard2.conf # start it on all three machines

about to fork child process, waiting until server is ready for connections.

forked process: 13910

child process started successfully, parent exiting

[root@localhost ~]# ps aux |grep shard2

root 13910 1.9 1.2 1023224 50096 ? Sl 17:25 0:00 mongod -f /etc/mongod/shard2.conf

root 13943 0.0 0.0 112660 964 pts/0 S+ 17:25 0:00 grep --color=auto shard2

Log in to port 27002 on either 130 or 134 to initialize the replica set. 128 cannot be used because port 27002 on 128 is the arbiter for shard2:

[root@localhost ~]# mongo --host 192.168.77.130 --port 27002

> use admin

switched to db admin

> config = { _id: "shard2", members: [ {_id : 0, host : "192.168.77.128:27002" ,arbiterOnly:true},{_id : 1, host : "192.168.77.130:27002"},{_id : 2, host : "192.168.77.134:27002"}] }

{

"_id" : "shard2",

"members" : [

{

"_id" : 0,

"host" : "192.168.77.128:27002",

"arbiterOnly" : true

},

{

"_id" : 1,

"host" : "192.168.77.130:27002"

},

{

"_id" : 2,

"host" : "192.168.77.134:27002"

}

]

}

> rs.initiate(config)

{ "ok" : 1 }

shard2:SECONDARY> rs.status()

{

"set" : "shard2",

"date" : ISODate("2018-01-10T17:26:12.250Z"),

"myState" : 1,

"term" : NumberLong(1),

"heartbeatIntervalMillis" : NumberLong(2000),

"optimes" : {

"lastCommittedOpTime" : {

"ts" : Timestamp(1515605171, 1),

"t" : NumberLong(1)

},

"readConcernMajorityOpTime" : {

"ts" : Timestamp(1515605171, 1),

"t" : NumberLong(1)

},

"appliedOpTime" : {

"ts" : Timestamp(1515605171, 1),

"t" : NumberLong(1)

},

"durableOpTime" : {

"ts" : Timestamp(1515605171, 1),

"t" : NumberLong(1)

}

},

"members" : [

{

"_id" : 0,

"name" : "192.168.77.128:27002",

"health" : 1,

"state" : 7,

"stateStr" : "ARBITER", # arbiter

"uptime" : 42,

"lastHeartbeat" : ISODate("2018-01-10T17:26:10.792Z"),

"lastHeartbeatRecv" : ISODate("2018-01-10T17:26:11.607Z"),

"pingMs" : NumberLong(0),

"configVersion" : 1

},

{

"_id" : 1,

"name" : "192.168.77.130:27002",

"health" : 1,

"state" : 1,

"stateStr" : "PRIMARY", # primary

"uptime" : 546,

"optime" : {

"ts" : Timestamp(1515605171, 1),

"t" : NumberLong(1)

},

"optimeDate" : ISODate("2018-01-10T17:26:11Z"),

"infoMessage" : "could not find member to sync from",

"electionTime" : Timestamp(1515605140, 1),

"electionDate" : ISODate("2018-01-10T17:25:40Z"),

"configVersion" : 1,

"self" : true

},

{

"_id" : 2,

"name" : "192.168.77.134:27002",

"health" : 1,

"state" : 2,

"stateStr" : "SECONDARY", # secondary

"uptime" : 42,

"optime" : {

"ts" : Timestamp(1515605161, 1),

"t" : NumberLong(1)

},

"optimeDurable" : {

"ts" : Timestamp(1515605161, 1),

"t" : NumberLong(1)

},

"optimeDate" : ISODate("2018-01-10T17:26:01Z"),

"optimeDurableDate" : ISODate("2018-01-10T17:26:01Z"),

"lastHeartbeat" : ISODate("2018-01-10T17:26:10.776Z"),

"lastHeartbeatRecv" : ISODate("2018-01-10T17:26:10.823Z"),

"pingMs" : NumberLong(0),

"syncingTo" : "192.168.77.130:27002",

"configVersion" : 1

}

],

"ok" : 1

}

3. Next, start shard3:

[root@localhost ~]# mongod -f /etc/mongod/shard3.conf # run on all three machines

about to fork child process, waiting until server is ready for connections.

forked process: 14204

child process started successfully, parent exiting

[root@localhost ~]# ps aux |grep shard3

root 14204 2.2 1.2 1023228 50096 ? Sl 17:36 0:00 mongod -f /etc/mongod/shard3.conf

root 14237 0.0 0.0 112660 960 pts/0 S+ 17:36 0:00 grep --color=auto shard3

Then log in to port 27003 on either 128 or 134 to initialize the replica set. 130 cannot be used because port 27003 on 130 is the arbiter for shard3:

[root@localhost ~]# mongo --host 192.168.77.128 --port 27003

> use admin

switched to db admin

> config = { _id: "shard3", members: [ {_id : 0, host : "192.168.77.128:27003"}, {_id : 1, host : "192.168.77.130:27003", arbiterOnly:true}, {_id : 2, host : "192.168.77.134:27003"}] }

{

"_id" : "shard3",

"members" : [

{

"_id" : 0,

"host" : "192.168.77.128:27003"

},

{

"_id" : 1,

"host" : "192.168.77.130:27003",

"arbiterOnly" : true

},

{

"_id" : 2,

"host" : "192.168.77.134:27003"

}

]

}

> rs.initiate(config)

{ "ok" : 1 }

shard3:SECONDARY> rs.status()

{

"set" : "shard3",

"date" : ISODate("2018-01-10T09:39:47.530Z"),

"myState" : 1,

"term" : NumberLong(1),

"heartbeatIntervalMillis" : NumberLong(2000),

"optimes" : {

"lastCommittedOpTime" : {

"ts" : Timestamp(1515577180, 2),

"t" : NumberLong(1)

},

"readConcernMajorityOpTime" : {

"ts" : Timestamp(1515577180, 2),

"t" : NumberLong(1)

},

"appliedOpTime" : {

"ts" : Timestamp(1515577180, 2),

"t" : NumberLong(1)

},

"durableOpTime" : {

"ts" : Timestamp(1515577180, 2),

"t" : NumberLong(1)

}

},

"members" : [

{

"_id" : 0,

"name" : "192.168.77.128:27003",

"health" : 1,

"state" : 1,

"stateStr" : "PRIMARY", # primary

"uptime" : 221,

"optime" : {

"ts" : Timestamp(1515577180, 2),

"t" : NumberLong(1)

},

"optimeDate" : ISODate("2018-01-10T09:39:40Z"),

"infoMessage" : "could not find member to sync from",

"electionTime" : Timestamp(1515577179, 1),

"electionDate" : ISODate("2018-01-10T09:39:39Z"),

"configVersion" : 1,

"self" : true

},

{

"_id" : 1,

"name" : "192.168.77.130:27003",

"health" : 1,

"state" : 7,

"stateStr" : "ARBITER", # arbiter

"uptime" : 18,

"lastHeartbeat" : ISODate("2018-01-10T09:39:47.477Z"),

"lastHeartbeatRecv" : ISODate("2018-01-10T09:39:45.715Z"),

"pingMs" : NumberLong(0),

"configVersion" : 1

},

{

"_id" : 2,

"name" : "192.168.77.134:27003",

"health" : 1,

"state" : 2,

"stateStr" : "SECONDARY", # secondary

"uptime" : 18,

"optime" : {

"ts" : Timestamp(1515577180, 2),

"t" : NumberLong(1)

},

"optimeDurable" : {

"ts" : Timestamp(1515577180, 2),

"t" : NumberLong(1)

},

"optimeDate" : ISODate("2018-01-10T09:39:40Z"),

"optimeDurableDate" : ISODate("2018-01-10T09:39:40Z"),

"lastHeartbeat" : ISODate("2018-01-10T09:39:47.477Z"),

"lastHeartbeatRecv" : ISODate("2018-01-10T09:39:45.779Z"),

"pingMs" : NumberLong(0),

"syncingTo" : "192.168.77.128:27003",

"configVersion" : 1

}

],

"ok" : 1

}

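mongos is configured last because it needs to know which machines are the config servers and which machines form the shard replica sets.

1. Add the configuration file (on all three machines):

[root@localhost ~]# vim /etc/mongod/mongos.conf # add the following content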

pidfilepath = /var/run/mongodb/mongos.pid

logpath = /data/mongodb/mongos/log/mongos.log

logappend = true

bind_ip = 0.0.0.0 # the IP to listen on

port = 20000

fork = true

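# config server replica set to connect to, as <replSetName>/<host:port>,... ("configs" is the replica set name)
# do not put spaces after the commas: a space becomes part of the next host name (that is what caused the getaddrinfo warnings in the startup output below)

configdb = configs/192.168.77.128:21000,192.168.77.130:21000,192.168.77.134:21000

maxConns=20000 # maximum number of connections

2. Then start the mongos service on all three machines. Note the command: the earlier steps used mongod, but this one is mongos:

[root@localhost ~]# mongos -f /etc/mongod/mongos.conf # run on all three machines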

2018-01-10T18:26:02.566+0800 I NETWORK [main] getaddrinfo(" 192.168.77.130") failed: Name or service not known

2018-01-10T18:26:22.583+0800 I NETWORK [main] getaddrinfo(" 192.168.77.134") failed: Name or service not known

about to fork child process, waiting until server is ready for connections.

forked process: 15552

child process started successfully, parent exiting

[root@localhost ~]# ps aux |grep mongos # on all three machines, check that the process is running

root 15552 0.2 0.3 279940 15380 ? Sl 18:26 0:00 mongos -f /etc/mongod/mongos.conf

root 15597 0.0 0.0 112660 964 pts/0 S+ 18:27 0:00 grep --color=auto mongos

[root@localhost ~]# netstat -lntp |grep mongos # on all three machines, check that the port is listening

tcp 0 0 0.0.0.0:20000 0.0.0.0:* LISTEN 15552/mongos
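Both the configdb setting and the sh.addShard() arguments in the next step are seed lists of the form `<replSetName>/<host:port>,<host:port>,...`. The getaddrinfo(" 192.168.77.130") warnings in the startup output above show what happens when a space follows a comma: it becomes part of the next host name. A small Node.js sketch with a hypothetical helper, `seedList`, that builds such strings without spaces:

```javascript
// Hypothetical helper: builds "<replSetName>/<host:port>,<host:port>,...".
// Host names are trimmed and joined with bare commas.
function seedList(setName, hosts, port) {
  return setName + "/" + hosts.map(function (h) {
    return h.trim() + ":" + port;
  }).join(",");
}

var machines = ["192.168.77.128", "192.168.77.130", "192.168.77.134"];

console.log(seedList("configs", machines, 21000));
// configs/192.168.77.128:21000,192.168.77.130:21000,192.168.77.134:21000
console.log(seedList("shard1", machines, 27001));
// shard1/192.168.77.128:27001,192.168.77.130:27001,192.168.77.134:27001
```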

1. Log in to port 20000 on any machine and register all the shards with the router (mongos):

[root@localhost ~]# mongo --host 192.168.77.128 --port 20000

# add shard1

mongos> sh.addShard("shard1/192.168.77.128:27001,192.168.77.130:27001,192.168.77.134:27001")

{

"shardAdded" : "shard1", # must be shard1

"ok" : 1, # note: 1 means success

"$clusterTime" : {

"clusterTime" : Timestamp(1515580345, 6),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1515580345, 6)

}

# add shard2

mongos> sh.addShard("shard2/192.168.77.128:27002,192.168.77.130:27002,192.168.77.134:27002")

{

"shardAdded" : "shard2", # must be shard2

"ok" : 1, # note: 1 means success

"$clusterTime" : {

"clusterTime" : Timestamp(1515608789, 6),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1515608789, 6)

}

# add shard3

mongos> sh.addShard("shard3/192.168.77.128:27003,192.168.77.130:27003,192.168.77.134:27003")

{

"shardAdded" : "shard3", # must be shard3

"ok" : 1, # note: 1 means success

"$clusterTime" : {

"clusterTime" : Timestamp(1515608789, 14),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1515608789, 14)

}

Use sh.status() to check the sharding status and confirm that everything is normal:

mongos> sh.status()

--- Sharding Status ---

sharding version: {

"_id" : 1,

"minCompatibleVersion" : 5,

"currentVersion" : 6,

"clusterId" : ObjectId("5a55823348aee75ba3928fea")

}

shards: # on success, each shard is listed here with "state" : 1 (only data-bearing members appear in "host"; arbiters are not listed)

{ "_id" : "shard1", "host" : "shard1/192.168.77.128:27001,192.168.77.130:27001", "state" : 1 }

{ "_id" : "shard2", "host" : "shard2/192.168.77.130:27002,192.168.77.134:27002", "state" : 1 }

{ "_id" : "shard3", "host" : "shard3/192.168.77.128:27003,192.168.77.134:27003", "state" : 1 }

active mongoses:

"3.6.1" : 1

autosplit:

Currently enabled: yes # should be yes on success

balancer:

Currently enabled: yes # should be yes on success

Currently running: no # whether a balancing round is in progress right now; no is normal when there is nothing to migrate

Failed balancer rounds in last 5 attempts: 0

Migration Results for the last 24 hours:

No recent migrations

databases:

{ "_id" : "config", "primary" : "config", "partitioned" : true }

config.system.sessions

shard key: { "_id" : 1 }

unique: false

balancing: true

chunks:

shard1 1

{ "_id" : { "$minKey" : 1 } } -->> { "_id" : { "$maxKey" : 1 } } on : shard1 Timestamp(1, 0)

1. Log in to port 20000 on any machine:

[root@localhost ~]# mongo --host 192.168.77.128 --port 20000

2. Switch to the admin database and enable sharding for a database with either of these commands:

db.runCommand({ enablesharding : "testdb"})

sh.enableSharding("testdb")

Example:

mongos> use admin

switched to db admin

mongos> sh.enableSharding("testdb")

{

"ok" : 1,

"$clusterTime" : {

"clusterTime" : Timestamp(1515609562, 6),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1515609562, 6)

}

3. Specify the collection to shard and its shard key with either of these commands:

db.runCommand( { shardcollection : "testdb.table1",key : {id: 1} } )

sh.shardCollection("testdb.table1",{"id":1} )

Example:

mongos> sh.shardCollection("testdb.table1",{"id":1} )

{

"collectionsharded" : "testdb.table1",

"collectionUUID" : UUID("f98762a6-8b2b-4ae5-9142-3d8acc589255"),

"ok" : 1,

"$clusterTime" : {

"clusterTime" : Timestamp(1515609671, 12),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1515609671, 12)

}

4. Switch to the newly created testdb database and insert some test data:

mongos> use testdb

switched to db testdb

mongos> for (var i = 1; i <= 10000; i++) db.table1.save({id:i,"test1":"testval1"})

WriteResult({ "nInserted" : 1 })
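The shell echoes only the result of the last statement the loop executed, which is why a single `WriteResult({ "nInserted" : 1 })` appears even though 10,000 documents were written. Below is a sketch of the same data built as an array with a hypothetical helper, `makeTestDocs`; in the mongo shell, `db.table1.insertMany(makeTestDocs(10000))` would insert the documents in bulk instead of one save() call per document. JavaScript numbers are 64-bit doubles, so `id` is stored as a double either way, which is why mongoexport later prints values such as `9996.0`.

```javascript
// Hypothetical helper: builds the same test documents as the loop above.
function makeTestDocs(n) {
  var docs = [];
  for (var i = 1; i <= n; i++) {
    docs.push({ id: i, test1: "testval1" });
  }
  return docs;
}

var testDocs = makeTestDocs(10000);
console.log(testDocs.length);                   // 10000
console.log(testDocs[testDocs.length - 1].id);  // 10000
```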

5. Then create a few more databases and collections:

mongos> sh.enableSharding("db1")

mongos> sh.shardCollection("db1.table1",{"id":1} )

mongos> sh.enableSharding("db2")

mongos> sh.shardCollection("db2.table1",{"id":1} )

mongos> sh.enableSharding("db3")

mongos> sh.shardCollection("db3.table1",{"id":1} )
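The three pairs of commands above repeat one pattern, so they can be wrapped in a loop. In this sketch, `shardDatabases` is a hypothetical helper that takes the shell's `sh` object as a parameter (in the mongo shell you would call `shardDatabases(sh, ["db1", "db2", "db3"], "table1", { id: 1 })`); to try the logic under Node.js, the stand-in `fakeSh` object below only records the calls.

```javascript
// Hypothetical helper: enables sharding for each database and shards one
// collection in it with the same shard key. shApi is the shell's `sh` object
// (or any object with the same two methods).
function shardDatabases(shApi, dbNames, collName, shardKey) {
  return dbNames.map(function (dbName) {
    shApi.enableSharding(dbName);
    return shApi.shardCollection(dbName + "." + collName, shardKey);
  });
}

// Stand-in for `sh` that records calls instead of talking to mongos.
var calls = [];
var fakeSh = {
  enableSharding: function (d) {
    calls.push("enableSharding " + d);
    return { ok: 1 };
  },
  shardCollection: function (ns, key) {
    calls.push("shardCollection " + ns + " " + JSON.stringify(key));
    return { ok: 1 };
  }
};

shardDatabases(fakeSh, ["db1", "db2", "db3"], "table1", { id: 1 });
console.log(calls.join("\n"));
```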

6. Check the status:

mongos> sh.status()

--- Sharding Status ---

sharding version: {

"_id" : 1,

"minCompatibleVersion" : 5,

"currentVersion" : 6,

"clusterId" : ObjectId("5a55823348aee75ba3928fea")

}

shards:

{ "_id" : "shard1", "host" : "shard1/192.168.77.128:27001,192.168.77.130:27001", "state" : 1 }

{ "_id" : "shard2", "host" : "shard2/192.168.77.130:27002,192.168.77.134:27002", "state" : 1 }

{ "_id" : "shard3", "host" : "shard3/192.168.77.128:27003,192.168.77.134:27003", "state" : 1 }

active mongoses:

"3.6.1" : 1

autosplit:

Currently enabled: yes

balancer:

Currently enabled: yes

Currently running: no

Failed balancer rounds in last 5 attempts: 0

Migration Results for the last 24 hours:

No recent migrations

databases:

{ "_id" : "config", "primary" : "config", "partitioned" : true }

config.system.sessions

shard key: { "_id" : 1 }

unique: false

balancing: true

chunks:

shard1 1

{ "_id" : { "$minKey" : 1 } } -->> { "_id" : { "$maxKey" : 1 } } on : shard1 Timestamp(1, 0)

{ "_id" : "db1", "primary" : "shard3", "partitioned" : true }

db1.table1

shard key: { "id" : 1 }

unique: false

balancing: true

chunks:

shard3 1 # db1 is stored on shard3

{ "id" : { "$minKey" : 1 } } -->> { "id" : { "$maxKey" : 1 } } on : shard3 Timestamp(1, 0)

{ "_id" : "db2", "primary" : "shard1", "partitioned" : true }

db2.table1

shard key: { "id" : 1 }

unique: false

balancing: true

chunks:

shard1 1 # db2 is stored on shard1

{ "id" : { "$minKey" : 1 } } -->> { "id" : { "$maxKey" : 1 } } on : shard1 Timestamp(1, 0)

{ "_id" : "db3", "primary" : "shard3", "partitioned" : true }

db3.table1

shard key: { "id" : 1 }

unique: false

balancing: true

chunks:

shard3 1 # db3 is stored on shard3

{ "id" : { "$minKey" : 1 } } -->> { "id" : { "$maxKey" : 1 } } on : shard3 Timestamp(1, 0)

{ "_id" : "testdb", "primary" : "shard2", "partitioned" : true }

testdb.table1

shard key: { "id" : 1 }

unique: false

balancing: true

chunks:

shard2 1 # testdb is stored on shard2

{ "id" : { "$minKey" : 1 } } -->> { "id" : { "$maxKey" : 1 } } on : shard2 Timestamp(1, 0)

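As shown above, each of the databases just created has been placed on one of the shards (its primary shard), which shows that the sharded cluster is working. For now each collection still sits in a single chunk; chunks are split and migrated to other shards only as the data grows past the chunk size (64 MB by default).

To check the status of a specific collection, use:

db.<collection>.stats()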

1. First, back up a single database:

[root@localhost ~]# mkdir /tmp/mongobak # create a directory for the backup files first

[root@localhost ~]# mongodump --host 192.168.77.128 --port 20000 -d testdb -o /tmp/mongobak

2018-01-10T20:47:51.893+0800 writing testdb.table1 to

2018-01-10T20:47:51.968+0800 done dumping testdb.table1 (10000 documents)

[root@localhost ~]# ls /tmp/mongobak/ # a successful backup creates a directory named after the database

testdb

[root@localhost ~]# ls /tmp/mongobak/testdb/ # which contains the corresponding data files

table1.bson table1.metadata.json

[root@localhost /tmp/mongobak/testdb]# du -sh * # the data itself is stored in the .bson file

528K table1.bson

4.0K table1.metadata.json

In the mongodump command, -d specifies the database to back up and -o specifies the output directory.

2. Back up all databases:

[root@localhost ~]# mongodump --host 192.168.77.128 --port 20000 -o /tmp/mongobak

2018-01-10T20:52:28.231+0800 writing admin.system.version to

2018-01-10T20:52:28.233+0800 done dumping admin.system.version (1 document)

2018-01-10T20:52:28.233+0800 writing testdb.table1 to

2018-01-10T20:52:28.234+0800 writing config.locks to

2018-01-10T20:52:28.234+0800 writing config.changelog to

2018-01-10T20:52:28.234+0800 writing config.lockpings to

2018-01-10T20:52:28.235+0800 done dumping config.locks (15 documents)

2018-01-10T20:52:28.236+0800 writing config.chunks to

2018-01-10T20:52:28.236+0800 done dumping config.lockpings (10 documents)

2018-01-10T20:52:28.236+0800 writing config.collections to

2018-01-10T20:52:28.236+0800 done dumping config.changelog (13 documents)

2018-01-10T20:52:28.236+0800 writing config.databases to

2018-01-10T20:52:28.237+0800 done dumping config.collections (5 documents)

2018-01-10T20:52:28.237+0800 writing config.shards to

2018-01-10T20:52:28.237+0800 done dumping config.chunks (5 documents)

2018-01-10T20:52:28.237+0800 writing config.version to

2018-01-10T20:52:28.238+0800 done dumping config.databases (4 documents)

2018-01-10T20:52:28.238+0800 writing config.mongos to

2018-01-10T20:52:28.238+0800 done dumping config.version (1 document)

2018-01-10T20:52:28.238+0800 writing config.migrations to

2018-01-10T20:52:28.239+0800 done dumping config.mongos (1 document)

2018-01-10T20:52:28.239+0800 writing db1.table1 to

2018-01-10T20:52:28.239+0800 done dumping config.shards (3 documents)

2018-01-10T20:52:28.239+0800 writing db2.table1 to

2018-01-10T20:52:28.239+0800 done dumping config.migrations (0 documents)

2018-01-10T20:52:28.239+0800 writing db3.table1 to

2018-01-10T20:52:28.241+0800 done dumping db2.table1 (0 documents)

2018-01-10T20:52:28.241+0800 writing config.tags to

2018-01-10T20:52:28.241+0800 done dumping db1.table1 (0 documents)

2018-01-10T20:52:28.242+0800 done dumping db3.table1 (0 documents)

2018-01-10T20:52:28.243+0800 done dumping config.tags (0 documents)

2018-01-10T20:52:28.272+0800 done dumping testdb.table1 (10000 documents)

[root@localhost ~]# ls /tmp/mongobak/

admin config db1 db2 db3 testdb

Without the -d option, all databases are backed up.

3. Besides whole databases, you can also back up a single collection:

[root@localhost ~]# mongodump --host 192.168.77.128 --port 20000 -d testdb -c table1 -o /tmp/collectionbak

2018-01-10T20:56:55.300+0800 writing testdb.table1 to

2018-01-10T20:56:55.335+0800 done dumping testdb.table1 (10000 documents)

[root@localhost ~]# ls !$

ls /tmp/collectionbak

testdb

[root@localhost ~]# ls /tmp/collectionbak/testdb/

table1.bson table1.metadata.json

The -c option specifies the collection to back up; without -c, every collection in the database is backed up.
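When these dumps are scripted, the options used above (--host, --port, -d, -c, -o) can be assembled programmatically. A Node.js sketch with a hypothetical helper, `mongodumpArgs`; it refuses -c without -d, since a collection dump needs its database, and the resulting array could be passed to `child_process.spawnSync("mongodump", args, { stdio: "inherit" })`.

```javascript
// Hypothetical helper: builds a mongodump argument list.
// Omitting db dumps every database; omitting collection dumps every
// collection in db.
function mongodumpArgs(opts) {
  var args = ["--host", opts.host, "--port", String(opts.port)];
  if (opts.collection && !opts.db) {
    throw new Error("-c requires -d");
  }
  if (opts.db) args.push("-d", opts.db);
  if (opts.collection) args.push("-c", opts.collection);
  args.push("-o", opts.out);
  return args;
}

var dumpArgs = mongodumpArgs({
  host: "192.168.77.128", port: 20000,
  db: "testdb", collection: "table1", out: "/tmp/collectionbak"
});
console.log("mongodump " + dumpArgs.join(" "));
// mongodump --host 192.168.77.128 --port 20000 -d testdb -c table1 -o /tmp/collectionbak
```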

4. The mongoexport command exports a collection to a JSON file:

[root@localhost ~]# mongoexport --host 192.168.77.128 --port 20000 -d testdb -c table1 -o /tmp/table1.json # the output is a JSON file

2018-01-10T21:00:48.098+0800 connected to: 192.168.77.128:20000

2018-01-10T21:00:48.236+0800 exported 10000 records

[root@localhost ~]# ls !$

ls /tmp/table1.json

/tmp/table1.json

[root@localhost ~]# tail -n5 !$ # the file contains JSON data, one document per line

tail -n5 /tmp/table1.json

{"_id":{"$oid":"5a55f036f6179723bfb03611"},"id":9996.0,"test1":"testval1"}

{"_id":{"$oid":"5a55f036f6179723bfb03612"},"id":9997.0,"test1":"testval1"}

{"_id":{"$oid":"5a55f036f6179723bfb03613"},"id":9998.0,"test1":"testval1"}

{"_id":{"$oid":"5a55f036f6179723bfb03614"},"id":9999.0,"test1":"testval1"}

{"_id":{"$oid":"5a55f036f6179723bfb03615"},"id":10000.0,"test1":"testval1"}
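Because mongoexport writes one JSON document per line (with ObjectIds in extended-JSON form, `{"$oid": ...}`), the file is easy to sanity-check before relying on it. A Node.js sketch using two lines copied from the output above as sample input; `parseExportLines` is a hypothetical name, and to check the real file you would pass it `fs.readFileSync("/tmp/table1.json", "utf8")`.

```javascript
// Sketch: parse mongoexport output (one JSON document per line).
// Plain JSON.parse keeps {"$oid": ...} as a nested object, which is enough
// for a quick check of counts and values.
function parseExportLines(text) {
  return text.split("\n")
    .filter(function (line) { return line.trim() !== ""; })
    .map(function (line) { return JSON.parse(line); });
}

var exportSample =
  '{"_id":{"$oid":"5a55f036f6179723bfb03614"},"id":9999.0,"test1":"testval1"}\n' +
  '{"_id":{"$oid":"5a55f036f6179723bfb03615"},"id":10000.0,"test1":"testval1"}\n';

var parsed = parseExportLines(exportSample);
console.log(parsed.length);      // 2
console.log(parsed[1]._id.$oid); // 5a55f036f6179723bfb03615
console.log(parsed[1].id);       // 10000
```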

1. Now that the data has been backed up, first delete the data from MongoDB:

[root@localhost ~]# mongo --host 192.168.77.128 --port 20000

mongos> use testdb

switched to db testdb

mongos> db.dropDatabase()

{

"dropped" : "testdb",

"ok" : 1,

"$clusterTime" : {

"clusterTime" : Timestamp(1515617938, 13),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1515617938, 13)

}

mongos> use db1

switched to db db1

mongos> db.dropDatabase()

{

"dropped" : "db1",

"ok" : 1,

"$clusterTime" : {

"clusterTime" : Timestamp(1515617993, 19),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1515617993, 19)

}

mongos> use db2

switched to db db2

mongos> db.dropDatabase()

{

"dropped" : "db2",

"ok" : 1,

"$clusterTime" : {

"clusterTime" : Timestamp(1515618003, 13),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1515618003, 13)

}

mongos> use db3

switched to db db3

mongos> db.dropDatabase()

{

"dropped" : "db3",

"ok" : 1,

"$clusterTime" : {

"clusterTime" : Timestamp(1515618003, 34),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1515618003, 34)

}

mongos> show databases

admin 0.000GB

config 0.001GB

2. Restore all databases:

[root@localhost ~]# rm -rf /tmp/mongobak/config/ # we don't want to restore the config and admin databases, so delete their backup files first

[root@localhost ~]# rm -rf /tmp/mongobak/admin/

[root@localhost ~]# mongorestore --host 192.168.77.128 --port 20000 --drop /tmp/mongobak/

2018-01-10T21:11:40.031+0800 preparing collections to restore from

2018-01-10T21:11:40.033+0800 reading metadata for testdb.table1 from /tmp/mongobak/testdb/table1.metadata.json

2018-01-10T21:11:40.035+0800 reading metadata for db2.table1 from /tmp/mongobak/db2/table1.metadata.json

2018-01-10T21:11:40.040+0800 reading metadata for db3.table1 from /tmp/mongobak/db3/table1.metadata.json

2018-01-10T21:11:40.050+0800 reading metadata for db1.table1 from /tmp/mongobak/db1/table1.metadata.json

2018-01-10T21:11:40.086+0800 restoring testdb.table1 from /tmp/mongobak/testdb/table1.bson

2018-01-10T21:11:40.100+0800 restoring db2.table1 from /tmp/mongobak/db2/table1.bson

2018-01-10T21:11:40.102+0800 restoring indexes for collection db2.table1 from metadata

2018-01-10T21:11:40.118+0800 finished restoring db2.table1 (0 documents)

2018-01-10T21:11:40.123+0800 restoring db3.table1 from /tmp/mongobak/db3/table1.bson

2018-01-10T21:11:40.124+0800 restoring indexes for collection db3.table1 from metadata

2018-01-10T21:11:40.126+0800 restoring db1.table1 from /tmp/mongobak/db1/table1.bson

2018-01-10T21:11:40.172+0800 finished restoring db3.table1 (0 documents)

2018-01-10T21:11:40.173+0800 restoring indexes for collection db1.table1 from metadata

2018-01-10T21:11:40.185+0800 finished restoring db1.table1 (0 documents)

2018-01-10T21:11:40.417+0800 restoring indexes for collection testdb.table1 from metadata

2018-01-10T21:11:40.437+0800 finished restoring testdb.table1 (10000 documents)

2018-01-10T21:11:40.437+0800 done

[root@localhost ~]# mongo --host 192.168.77.128 --port 20000

mongos> show databases; # all the databases have been restored

admin 0.000GB

config 0.001GB

db1 0.000GB

db2 0.000GB

db3 0.000GB

testdb 0.000GB

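The --drop option of mongorestore is optional: it drops each collection from the target database before restoring it from the backup. It is not recommended in production.

3. Restore a specific database: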

[root@localhost ~]# mongorestore --host 192.168.77.128 --port 20000 -d testdb --drop /tmp/mongobak/testdb/

2018-01-10T21:15:40.185+0800 the --db and --collection args should only be used when restoring from a BSON file. Other uses are deprecated and will not exist in the future; use --nsInclude instead

2018-01-10T21:15:40.185+0800 building a list of collections to restore from /tmp/mongobak/testdb dir

2018-01-10T21:15:40.232+0800 reading metadata for testdb.table1 from /tmp/mongobak/testdb/table1.metadata.json

2018-01-10T21:15:40.241+0800 restoring testdb.table1 from /tmp/mongobak/testdb/table1.bson

2018-01-10T21:15:40.507+0800 restoring indexes for collection testdb.table1 from metadata

2018-01-10T21:15:40.529+0800 finished restoring testdb.table1 (10000 documents)

2018-01-10T21:15:40.529+0800 done

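When restoring a single database, point the command at that database's backup directory. As the warning in the output above says, using -d with a directory is deprecated; newer versions expect --nsInclude instead.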
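Following the deprecation warning, a single database can instead be restored from the dump root directory with `--nsInclude`. A Node.js sketch with a hypothetical helper, `mongorestoreDbArgs`, that builds the argument list; the equivalent shell command would be `mongorestore --host 192.168.77.128 --port 20000 --nsInclude 'testdb.*' --drop /tmp/mongobak/`, with the pattern quoted so the shell does not expand the `*`.

```javascript
// Hypothetical helper: builds mongorestore arguments that restore one
// database from a mongodump root directory, selecting it with --nsInclude
// instead of the deprecated "-d <db> <dir>" form.
function mongorestoreDbArgs(opts) {
  var args = ["--host", opts.host, "--port", String(opts.port),
              "--nsInclude", opts.db + ".*"];
  if (opts.drop) args.push("--drop"); // drop each collection before restoring it
  args.push(opts.dumpRoot);
  return args;
}

var restoreArgs = mongorestoreDbArgs({
  host: "192.168.77.128", port: 20000,
  db: "testdb", drop: true, dumpRoot: "/tmp/mongobak/"
});
console.log("mongorestore " + restoreArgs.join(" "));
```

Passed as an array to `child_process.spawnSync("mongorestore", restoreArgs)`, the pattern reaches mongorestore unexpanded because no shell is involved.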

4. Restore a specific collection:

[root@localhost ~]# mongorestore --host 192.168.77.128 --port 20000 -d testdb -c table1 --drop /tmp/mongobak/testdb/table1.bson

2018-01-10T21:18:14.097+0800 checking for collection data in /tmp/mongobak/testdb/table1.bson

2018-01-10T21:18:14.139+0800 reading metadata for testdb.table1 from /tmp/mongobak/testdb/table1.metadata.json

2018-01-10T21:18:14.149+0800 restoring testdb.table1 from /tmp/mongobak/testdb/table1.bson

2018-01-10T21:18:14.331+0800 restoring indexes for collection testdb.table1 from metadata

2018-01-10T21:18:14.353+0800 finished restoring testdb.table1 (10000 documents)

2018-01-10T21:18:14.353+0800 done

Likewise, when restoring a single collection, point the command at that collection's .bson file.

5. Restore collection data from a JSON file:

[root@localhost ~]# mongoimport --host 192.168.77.128 --port 20000 -d testdb -c table1 --file /tmp/table1.json

Importing collection data from a JSON file uses the mongoimport command; --file specifies the path to the JSON file.


Original article: http://blog.51cto.com/zero01/2059598