
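Services deployed in a Kubernetes cluster can be accessed in two ways: from inside the cluster and from outside it.

Either way, traffic to a Service passes through kube-proxy.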

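A ClusterIP is a private IP that exists only inside the cluster. It is the default Service type in Kubernetes and makes in-cluster access very convenient: a client can reach the service through the Service's ClusterIP or directly by its Service name. It cannot be reached from outside the cluster.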
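To make the name-based access concrete: a short name such as `nginx-svc` (the Service created below) is resolved by the cluster DNS. The sketch below shows how the fully qualified name is composed, assuming the common default cluster domain `cluster.local` and the `default` namespace. Both are assumptions about your cluster, and `svc_fqdn` is a hypothetical helper, not a kubectl feature.

```shell
#!/bin/sh
# Build the fully qualified in-cluster DNS name of a Service.
# Assumed defaults: namespace "default", cluster domain "cluster.local".
svc_fqdn() {
  name="$1"
  namespace="${2:-default}"
  domain="${3:-cluster.local}"
  printf '%s.%s.svc.%s\n' "$name" "$namespace" "$domain"
}

svc_fqdn nginx-svc                          # prints nginx-svc.default.svc.cluster.local
svc_fqdn kubernetes-dashboard kube-system   # prints kubernetes-dashboard.kube-system.svc.cluster.local
```

Pods in the same namespace can use the short name; pods in another namespace need at least `name.namespace`.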

**Create an nginx service to provide a web service**

nginx-ds.yaml

    # extensions/v1beta1 Deployments were removed in Kubernetes 1.16;
    # newer clusters need apps/v1 plus a spec.selector
    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: nginx-dm
    spec:
      replicas: 2
      template:
        metadata:
          labels:
            name: nginx
        spec:
          containers:
          - name: nginx
            image: nginx:alpine
            imagePullPolicy: IfNotPresent
            ports:
            - containerPort: 80
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx-svc
    spec:
      ports:
      - port: 80
        targetPort: 80
        protocol: TCP
      selector:
        name: nginx

Create a pod to act as the client

alpine.yaml

    apiVersion: v1
    kind: Pod
    metadata:
      name: alpine
    spec:
      containers:
      - name: alpine
        image: alpine
        command:
        - sh
        - -c
        - while true; do sleep 1; done

Apply and test access

    kubectl create -f nginx-ds.yaml
    kubectl create -f alpine.yaml

    > kubectl get svc | grep nginx
    nginx-svc ClusterIP 10.254.105.39 <none> 80/TCP 11m

    > kubectl exec -it alpine -- ping nginx-svc
    PING nginx-svc (10.254.105.39): 56 data bytes
    64 bytes from 10.254.105.39: seq=0 ttl=64 time=0.073 ms
    64 bytes from 10.254.105.39: seq=1 ttl=64 time=0.083 ms
    64 bytes from 10.254.105.39: seq=2 ttl=64 time=0.091 ms
    64 bytes from 10.254.105.39: seq=3 ttl=64 time=0.088 ms

    > kubectl exec -it alpine -- ping 10.254.105.39
    PING 10.254.105.39 (10.254.105.39): 56 data bytes
    64 bytes from 10.254.105.39: seq=0 ttl=64 time=0.137 ms
    64 bytes from 10.254.105.39: seq=1 ttl=64 time=0.106 ms
    64 bytes from 10.254.105.39: seq=2 ttl=64 time=0.083 ms

Test access with curl

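    > kubectl exec -it alpine -- sh    # curl is not installed by default
    / # apk update && apk upgrade
    / # apk add curl

    > kubectl exec -it alpine -- curl http://10.254.105.39
    > kubectl exec -it alpine -- curl http://nginx-svc    # both return the default nginx page

NodePort was an early and widely used way of exposing services in Kubernetes. By default a Service is of type ClusterIP: it gets a ClusterIP that is reachable only from inside the cluster. To let clients outside the cluster reach the Service directly, change the Service type to NodePort, which maps the Service port to a port on every node.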
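The value chosen for `nodePort` has to fall inside the range the API server allows, which is 30000-32767 unless the cluster was started with a different `--service-node-port-range`. A small sketch of that check, assuming the default range (`is_valid_nodeport` is a hypothetical helper introduced here for illustration):

```shell
#!/bin/sh
# Return success if the port is a number inside the NodePort range.
# The 30000-32767 defaults are an assumption; pass other bounds if your
# cluster overrides --service-node-port-range.
is_valid_nodeport() {
  port="$1"
  min="${2:-30000}"
  max="${3:-32767}"
  case "$port" in
    ''|*[!0-9]*) return 1 ;;   # reject empty or non-numeric input
  esac
  [ "$port" -ge "$min" ] && [ "$port" -le "$max" ]
}

if is_valid_nodeport 30004; then
  echo "30004 is inside the default NodePort range"
fi
```

A Service that requests a port outside the allowed range is rejected by the API server, so checking up front saves a failed `kubectl create`.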

nginx-ds.yaml

    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      name: nginx-dm
    spec:
      replicas: 2
      template:
        metadata:
          labels:
            name: nginx
        spec:
          containers:
          - name: nginx
            image: nginx:alpine
            imagePullPolicy: IfNotPresent
            ports:
            - containerPort: 80
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx-svc
    spec:
      type: NodePort
      ports:
      - port: 80
        targetPort: 80
        nodePort: 30004
        protocol: TCP
      selector:
        name: nginx

Create

    # if the resources from the ClusterIP example still exist, "kubectl create"
    # fails with AlreadyExists; use "kubectl apply -f nginx-ds.yaml" in that case
    kubectl create -f nginx-ds.yaml

Test access

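From outside the cluster, the service can be reached through the IP of any node plus the nodePort.

    > curl http://192.168.16.238:30004
    > curl http://192.168.16.239:30004
    ....
    > curl http://192.168.16.243:30004
    > curl http://192.168.16.244:30004

A LoadBalancer Service is a component that ties Kubernetes closely to the cloud platform. Exposing a service through a LoadBalancer Service actually asks the underlying cloud platform to create a load balancer, which then exposes the service externally. Cloud platform support is fairly complete by now, for example GCE and DigitalOcean abroad, Alibaba Cloud in China, and OpenStack for private clouds. Because a LoadBalancer Service depends so closely on the cloud platform, it can only be used on such platforms.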
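For comparison with the NodePort manifest, a LoadBalancer Service differs only in its `type`. The sketch below writes such a manifest to a file; the file name `nginx-lb.yaml` and the Service name `nginx-lb` are hypothetical, and it assumes the cluster runs on a cloud platform with a load balancer integration.

```shell
#!/bin/sh
# Write a minimal LoadBalancer Service manifest for the nginx pods.
# "nginx-lb" is a made-up name; the selector matches the label used by nginx-dm.
cat > nginx-lb.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: nginx-lb
spec:
  type: LoadBalancer
  ports:
  - port: 80
    targetPort: 80
    protocol: TCP
  selector:
    name: nginx
EOF

# On a cluster with cloud integration you would then run:
#   kubectl create -f nginx-lb.yaml
#   kubectl get svc nginx-lb   # EXTERNAL-IP shows <pending> until the cloud provisions the balancer
```

On a bare-metal cluster without such an integration the external IP normally stays pending, which is why the rest of this post uses Ingress instead.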

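Ingress is a resource type introduced in Kubernetes 1.1. An Ingress controller must be deployed for Ingress resources to take effect; Ingress controllers are provided as add-ons.

Using Ingress usually involves three components:

Reverse proxy load balancer

The reverse proxy load balancer is straightforward: something like nginx or haproxy. It can be deployed in the cluster however you like, using a Replication Controller, Deployment, DaemonSet and so on; deploying it as a DaemonSet is recommended.

Ingress Controller

An Ingress Controller is essentially a watcher. It talks to the Kubernetes API continuously and picks up changes to backend services and pods in real time, such as pods or services being added or removed. It then combines that information with the Ingress rules described next to generate configuration, updates the reverse proxy load balancer, and reloads its configuration, which provides service discovery.

Ingress

An Ingress is simply a rule definition. For example, it maps a domain name to a Service, so that requests arriving for that domain are forwarded to that Service. The Ingress Controller picks up these rules and writes them dynamically into the load balancer configuration, which provides service discovery and load balancing as a whole.

Because the reverse proxy can forward requests straight to the backend pods, this approach no longer relies on kube-proxy forwarding, which makes it more efficient than the LoadBalancer approach.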

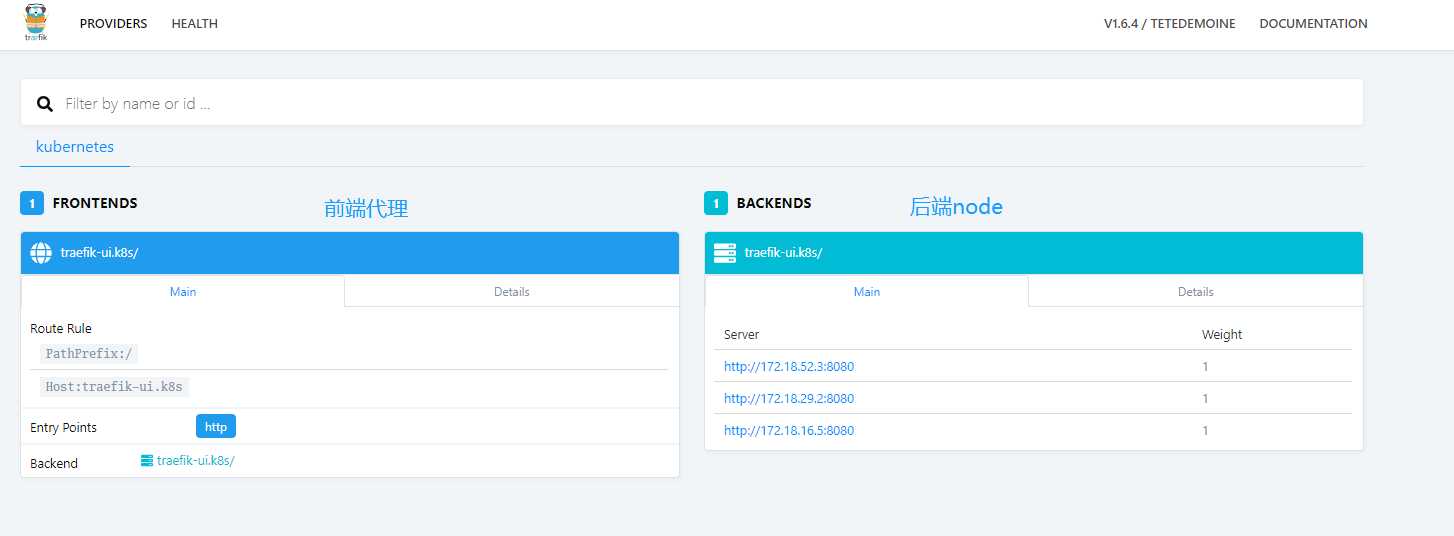

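Traefik is an open-source reverse proxy and load balancer. Its biggest strength is that it integrates directly with common microservice systems and configures itself automatically and dynamically. It currently supports backends such as Docker, Swarm, Mesos/Marathon, Mesos, Kubernetes, Consul, Etcd, Zookeeper, BoltDB, Rest API and more.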

Fetch traefik-rbac.yaml and apply it; it is used for service account authorization:

    wget https://raw.githubusercontent.com/containous/traefik/master/examples/k8s/traefik-rbac.yaml    # apply as-is, no changes needed
    > kubectl create -f traefik-rbac.yaml

Start one Traefik instance on every node as a DaemonSet, and use hostPort so that it listens on port 80 of each node.
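To show what "DaemonSet plus hostPort" means in manifest terms, here is a stripped-down sketch. It is not the upstream traefik-ds.yaml: the names `edge-proxy` and `hostport-ds-sketch.yaml` are made up, and the image tag `traefik:v1.7` is an assumption. The only point is the `hostPort` field.

```shell
#!/bin/sh
# Illustration only: a DaemonSet whose pod binds port 80 on each node.
# All names are hypothetical and the image tag is assumed.
cat > hostport-ds-sketch.yaml <<'EOF'
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: edge-proxy
  namespace: kube-system
spec:
  selector:
    matchLabels:
      name: edge-proxy
  template:
    metadata:
      labels:
        name: edge-proxy
    spec:
      containers:
      - name: proxy
        image: traefik:v1.7
        ports:
        - name: http
          containerPort: 80
          hostPort: 80   # exposes the container port on the node's own IP
EOF
```

Because a DaemonSet schedules one pod per node, every node ends up answering on port 80, which is what lets any node IP be used in the hosts entries later on.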

Fetch the YAML file and apply it

    wget https://raw.githubusercontent.com/containous/traefik/master/examples/k8s/traefik-ds.yaml
    > kubectl create -f traefik-ds.yaml

    > kubectl get pod,svc -n kube-system | grep traefik
    pod/traefik-ingress-controller-7fkp7 1/1 Running 0 10m
    pod/traefik-ingress-controller-mqnm9 1/1 Running 0 10m
    pod/traefik-ingress-controller-tdg77 1/1 Running 0 10m
    service/traefik-ingress-service ClusterIP 10.254.179.212 <none> 80/TCP,8080/TCP 22s

Traefik also ships with a UI, which is likewise exposed through an Ingress.

Fetch the YAML file

    wget https://raw.githubusercontent.com/containous/traefik/master/examples/k8s/ui.yaml
    > kubectl create -f ui.yaml

    - host: traefik-ui.k8s    # map this name to a node IP in the hosts file to reach the web UI

hosts

    192.168.16.238 traefik-ui.k8s

Access

http://traefik-ui.k8s/dashboard/
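The hosts entries in this post are added by hand. A minimal sketch of doing it idempotently from a script follows; `add_host_entry` is a hypothetical helper, and the demo writes to a scratch file rather than the real `/etc/hosts`.

```shell
#!/bin/sh
# Append "IP hostname" to a hosts file unless the hostname is already mapped.
# Third argument is the file to edit; it defaults to /etc/hosts (needs root).
add_host_entry() {
  ip="$1"
  host="$2"
  file="${3:-/etc/hosts}"
  # Compare hostname fields exactly so "nginx.svc" does not match "nginx.svc.old"
  if awk -v h="$host" '{ for (i = 2; i <= NF; i++) if ($i == h) found = 1 }
                       END { exit !found }' "$file" 2>/dev/null; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$host" >> "$file"
}

# Demo against a scratch file
hosts_file=$(mktemp)
add_host_entry 192.168.16.238 traefik-ui.k8s "$hosts_file"
add_host_entry 192.168.16.238 traefik-ui.k8s "$hosts_file"   # second call changes nothing
cat "$hosts_file"
```

If you would rather not touch the hosts file at all, sending the host name as a header also works for a quick check, for example `curl -H 'Host: traefik-ui.k8s' http://192.168.16.238/dashboard/`.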

nginx-ingress.yaml

    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      name: nginx-traefik
      annotations:
        kubernetes.io/ingress.class: traefik
    spec:
      rules:
      - host: nginx.svc
        http:
          paths:
          - path: /
            backend:
              serviceName: nginx-svc
              servicePort: 80

    kubectl create -f nginx-ingress.yaml

Test access

hosts

    192.168.16.238 nginx.svc

http://nginx.svc
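The `extensions/v1beta1` Ingress API used above was removed in Kubernetes 1.22. On newer clusters the same rule would be written against `networking.k8s.io/v1`, roughly as sketched below. This assumes an IngressClass named `traefik` exists and that the installed Traefik version understands the v1 API; the file name `nginx-ingress-v1.yaml` is made up.

```shell
#!/bin/sh
# Write the nginx.svc rule in the networking.k8s.io/v1 schema.
# Assumption: an IngressClass called "traefik" is registered in the cluster.
cat > nginx-ingress-v1.yaml <<'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: nginx-traefik
spec:
  ingressClassName: traefik
  rules:
  - host: nginx.svc
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: nginx-svc
            port:
              number: 80
EOF

# Then, on a suitable cluster:
#   kubectl create -f nginx-ingress-v1.yaml
```

The differences are the required `pathType`, the nested `service.name` / `service.port.number` backend, and `ingressClassName` taking over from the `kubernetes.io/ingress.class` annotation.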

k8s-dashboard-ingress.yaml

    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      name: dashboard-k8s-traefik
      namespace: kube-system
      annotations:
        kubernetes.io/ingress.class: traefik
    spec:
      rules:
      - host: dashboard.k8s
        http:
          paths:
          - path: /
            backend:
              serviceName: kubernetes-dashboard
              servicePort: 80    # change to 443 if the dashboard is served over HTTPS

Apply and test

    > kubectl create -f k8s-dashboard-ingress.yaml

Access the web UI

hosts

    192.168.16.238 dashboard.k8s

Log in with a token

    > kubectl get secret -n kube-system | grep admin-token
    admin-token-4sk8z kubernetes.io/service-account-token 3 7s

    > kubectl get secret admin-token-4sk8z -o jsonpath='{.data.token}' -n kube-system | base64 -d
    eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVz...

Many service meshes can also provide Ingress functionality.
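As a side note on the token login step: the service account token is a JWT, so its header and payload can be inspected locally before pasting it into the dashboard. A minimal sketch, assuming GNU `base64` is available as in the command above (`jwt_part` and `b64url_decode` are hypothetical helpers, and the sample string reuses only the header segment of the token shown in this post, with placeholder payload and signature):

```shell
#!/bin/sh
# Extract the Nth dot-separated segment of a JWT (1 = header, 2 = payload).
jwt_part() {
  printf '%s' "$1" | cut -d. -f"$2"
}

# Decode base64url: map the URL-safe alphabet back and restore padding.
b64url_decode() {
  s=$(printf '%s' "$1" | tr '_-' '/+')
  case $(( ${#s} % 4 )) in
    2) s="$s==" ;;
    3) s="$s=" ;;
  esac
  printf '%s' "$s" | base64 -d
}

# Header segment taken from the token above; the other two segments are placeholders.
sample='eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.payload.signature'
b64url_decode "$(jwt_part "$sample" 1)"   # prints {"alg":"RS256","kid":""}
echo
```

Running the same function on segment 2 of a real token shows the namespace and service account name it belongs to, which helps confirm you copied the right secret.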

[k8s cluster series-10] Kubernetes Service exposure methods and using Traefik


Original article: https://www.cnblogs.com/knmax/p/9222350.html