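Installing TiDB

TiDB is an open-source distributed HTAP (Hybrid Transactional and Analytical Processing) database designed by PingCAP and inspired by Google's Spanner and F1 papers. It combines the best features of traditional RDBMSs and NoSQL. TiDB is compatible with MySQL, supports unlimited horizontal scaling, and offers strong consistency and high availability. (From the official website.)

You connect to TiDB with a MySQL client. Everyday commands work the same way as in MySQL, so the learning and development costs are low.

The official site currently strongly recommends deploying with Ansible, which gets a cluster up quickly. To understand the whole architecture better, though, it is worth deploying by hand once.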

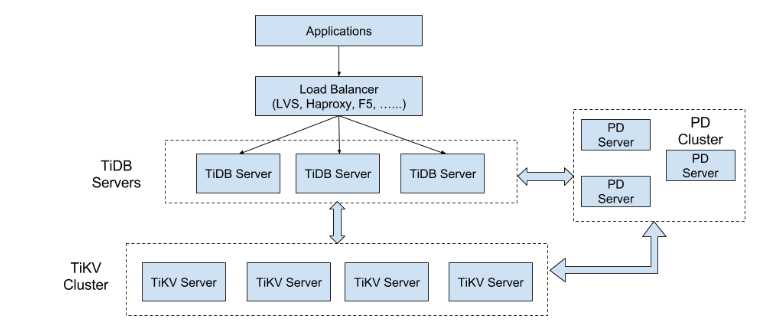

TiDB architecture (figure from the official website):

Downloading TiDB

Download with Ansible:

cd tidb-ansible

ansible-playbook local_prepare.yml

cd downloads   (the downloaded TiDB packages, including the tools, are here)

Or download the binary package manually.

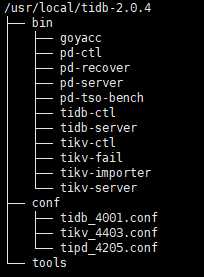

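After you download the TiDB package manually and extract it, the directory structure looks like this:

In addition, the official site provides a number of tools (such as checker, dump_region, loader and syncer) that can export MySQL data and import it into TiDB, replicate in real time from the binlog, and so on.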

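Installing TiDB

Three kinds of node must be installed: PD (Placement Driver, written tipd in this post's file names), TiKV and TiDB. Start them in this order: PD, then TiKV, then TiDB.

PD node: the management node. It manages metadata and schedules data balancing across the TiKV nodes.

TiKV node: the storage node. Multiple replicas can be configured for redundancy.

TiDB node: accepts client connections and does the computation. It holds no data and is stateless.

1. Environment:

OS: CentOS 6.6 (the official site recommends CentOS 7; on CentOS 6 the GLIBC library must be upgraded to 2.17 or later)

Disk: TiDB is optimized only for SSDs, so SSDs are recommended.

Go: go1.10.2 linux/amd64 or later is recommended. (Note: TiDB is written in Go, so compiling from source needs a Go environment, which you set up yourself. The prebuilt binary package works right after extraction and does not need Go.)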

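2. Directory and file conventions:

As is my habit when installing, I first plan the install directory, the data directories and the file names:

Install under /usr/local. Directory after extraction:

/usr/local/tidb-2.0.4

ln -s /usr/local/tidb-2.0.4 tidb

conf holds the configuration files, named tipd_<port>.conf, tikv_<port>.conf and tidb_<port>.conf.

tools holds TiDB's additional tools.

Data directories: under /data_db3/tidb/, one subdirectory per role (db, kv, pd), each with one subdirectory per port.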
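The data directory layout described above can be sketched as a small shell function. This is only an illustration: the function name make_tidb_dirs and the role:port argument format are my own, not part of TiDB.

```shell
# Create <root>/<role>/<port> for every "role:port" pair given.
# make_tidb_dirs is a hypothetical helper, not a TiDB tool.
make_tidb_dirs() {
  root="$1"; shift
  for pair in "$@"; do
    role="${pair%%:*}"   # text before the colon: pd, kv or db
    port="${pair##*:}"   # text after the colon: the port number
    mkdir -p "$root/$role/$port"
  done
}
```

For example, on 192.168.100.75 the call make_tidb_dirs /data_db3/tidb pd:4205 db:4001 would create the PD and TiDB directories that the configuration files later in this post point to.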

Node plan:

Three machines (192.168.100.73, 192.168.100.74, 192.168.100.75)

PD cluster: 192.168.100.73, 192.168.100.74, 192.168.100.75

TiKV data nodes: 192.168.100.73, 192.168.100.74, 192.168.100.75

TiDB node: 192.168.100.75

3. Configure the PD nodes (cluster mode):

/usr/local/tidb-2.0.4/conf/tipd_4205.conf

client-urls="http://192.168.100.75:4205"

name="pd3"

data-dir="/data_db3/tidb/pd/4205/"

peer-urls="http://192.168.100.75:4206"

initial-cluster="pd1=http://192.168.100.74:4202,pd2=http://192.168.100.73:4204,pd3=http://192.168.100.75:4206"

log-file="/data_db3/tidb/pd/4205_run.log"

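Note: name must match the key used for this node in initial-cluster (here, "pd3=").

Key PD settings:

peer-urls: the address the PD nodes use to talk to each other inside the PD cluster (health checks and so on).

client-urls: the address that clients (TiKV, TiDB and pd-ctl) use to reach PD.

log-file: the log file. There is one odd restriction: the log file must not be placed under data-dir.

Once the configuration file is in place, start PD:

/usr/local/tidb-2.0.4/bin/pd-server --config=/usr/local/tidb-2.0.4/conf/tipd_4205.conf

On first start, the first PD node initializes the cluster. If a PD node fails to start and you need to initialize again, first delete the contents of its data-dir.

(pd1 and pd2 are installed the same way as pd3.)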
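Since the three PD configuration files differ only in name, IP and ports, they can be generated from one template. The sketch below is a hypothetical helper (write_pd_conf is my own name). It assumes pd1 and pd2 use the same data-dir and log-file naming pattern as pd3, and takes their client ports (4201, 4203) from the TiKV and TiDB configurations later in this post.

```shell
# Write a PD config file for one node, following the layout used in this post.
# Arguments: name, host IP, client port, peer port, output directory.
# The data-dir/log-file pattern for pd1 and pd2 is an assumption.
write_pd_conf() {
  name="$1"; ip="$2"; client_port="$3"; peer_port="$4"; conf_dir="$5"
  cat > "$conf_dir/tipd_${client_port}.conf" <<EOF
name="$name"
data-dir="/data_db3/tidb/pd/${client_port}/"
client-urls="http://${ip}:${client_port}"
peer-urls="http://${ip}:${peer_port}"
initial-cluster="pd1=http://192.168.100.74:4202,pd2=http://192.168.100.73:4204,pd3=http://192.168.100.75:4206"
log-file="/data_db3/tidb/pd/${client_port}_run.log"
EOF
}
```

On 192.168.100.74, for instance, write_pd_conf pd1 192.168.100.74 4201 4202 /usr/local/tidb-2.0.4/conf would produce tipd_4201.conf. The initial-cluster line is identical on all three nodes, which is why it is hard-coded here.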

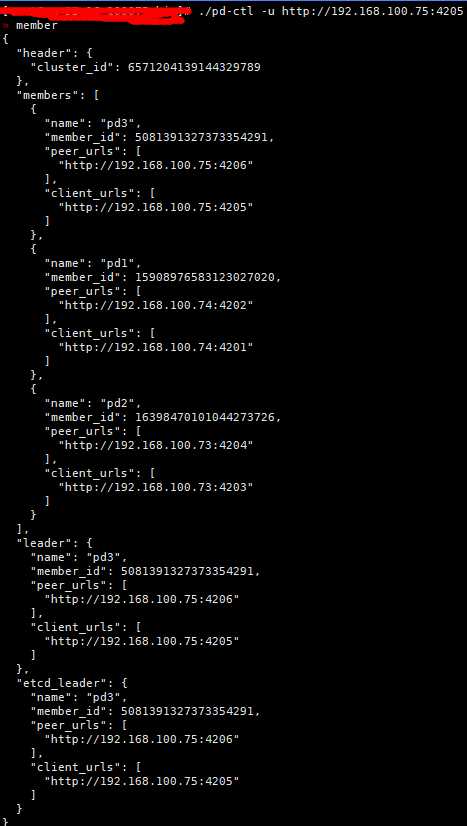

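After the PD nodes are installed, connect pd-ctl to any one of them (using its client URL) to view cluster information:

./pd-ctl -u http://192.168.100.75:4205

help lists the available commands, and help <command> shows a command's options. For example, help member shows the member operations, such as deleting a PD node or setting leader priority.

config show displays the cluster configuration; health runs a health check across the nodes.

4. Install the TiKV nodes:

TiKV nodes are where the data is actually stored. Most parameter tuning happens on the TiKV nodes.

Configuration file:

Main settings: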

/usr/local/tidb-2.0.4/conf/tikv_4402.conf

log-level = "info"

log-file = "/data_db3/tidb/kv/4402/run.log"

[server]

addr = "192.168.100.74:4402"

[storage]

data-dir = "/data_db3/tidb/kv/4402"

scheduler-concurrency = 1024000

scheduler-worker-pool-size = 100

#labels = {zone = "ZONE1", host = "10074"}

[pd]

# PD nodes; these are all PD client-urls addresses

endpoints = ["192.168.100.73:4203","192.168.100.74:4201","192.168.100.75:4205"]

[metric]

interval = "15s"

address = ""

job = "tikv"

[raftstore]

sync-log = false

region-max-size = "384MB"

region-split-size = "256MB"

[rocksdb]

max-background-jobs = 28

max-open-files = 409600

max-manifest-file-size = "20MB"

compaction-readahead-size = "20MB"

[rocksdb.defaultcf]

block-size = "64KB"

compression-per-level = ["no", "no", "lz4", "lz4", "lz4", "zstd", "zstd"]

write-buffer-size = "128MB"

max-write-buffer-number = 10

level0-slowdown-writes-trigger = 20

level0-stop-writes-trigger = 36

max-bytes-for-level-base = "512MB"

target-file-size-base = "32MB"

[rocksdb.writecf]

compression-per-level = ["no", "no", "lz4", "lz4", "lz4", "zstd", "zstd"]

write-buffer-size = "128MB"

max-write-buffer-number = 5

min-write-buffer-number-to-merge = 1

max-bytes-for-level-base = "512MB"

target-file-size-base = "32MB"

[raftdb]

max-open-files = 409600

compaction-readahead-size = "20MB"

[raftdb.defaultcf]

compression-per-level = ["no", "no", "lz4", "lz4", "lz4", "zstd", "zstd"]

write-buffer-size = "128MB"

max-write-buffer-number = 5

min-write-buffer-number-to-merge = 1

max-bytes-for-level-base = "512MB"

target-file-size-base = "32MB"

block-cache-size = "10G"

[import]

import-dir = "/data_db3/tidb/kv/4402/import"

num-threads = 8

stream-channel-window = 128

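# (These values are my own choices and have not been tuned in production.)

Note:

When several TiKV instances run on one machine, label them so that replicas of the same data are not stored on the same machine.

tikv-server --labels zone=<zone>,rack=<rack>,host=<host>,disk=<disk>

Labels have four levels:

zone: data center

rack: rack

host: host

disk: disk

Scheduling works from the largest level down to the smallest and tries to keep replicas from ending up in the same place.

Start TiKV: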

/usr/local/tidb-2.0.4/bin/tikv-server --config=/usr/local/tidb-2.0.4/conf/tikv_4402.conf

If it starts without errors, use the pd-ctl cluster management tool to check that the TiKV node has joined the cluster as a store.

./pd-ctl -u http://192.168.100.75:4205

» store

{

"store": {

"id": 30,

"address": "192.168.100.74:4402",

"state_name": "Up"

},

"status": {

"capacity": "446 GiB",

"available": "63 GiB",

"leader_count": 1301,

"leader_weight": 1,

"leader_score": 307618,

"leader_size": 307618,

"region_count": 2638,

"region_weight": 1,

"region_score": 1073677587.6132812,

"region_size": 615726,

"start_ts": "2018-06-26T10:33:17+08:00",

"last_heartbeat_ts": "2018-07-17T11:27:17.074373767+08:00",

"uptime": "504h54m0.074373767s"

}

}
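When there are several stores, it helps to reduce output like the above to one line per store. The following is a minimal sketch, assuming the JSON is pretty-printed with one field per line as shown here; store_states is a hypothetical helper name, and a real JSON parser would be more robust.

```shell
# Print "address state" for every store found in pd-ctl JSON read from stdin.
# Relies on one field per line, with "address" appearing before "state_name".
store_states() {
  awk -F'"' '
    /"address":/    { addr = $4 }
    /"state_name":/ { print addr, $4 }
  '
}
```

If your pd-ctl build supports single-command mode (the -d flag), something like ./pd-ctl -u http://192.168.100.75:4205 -d store | store_states should give a quick view of which TiKV nodes are Up.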

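5. Configure the TiDB node:

The TiDB node handles client connections and does the computation. It is normally started after the PD and TiKV nodes; otherwise it cannot start.

/usr/local/tidb-2.0.4/conf/tidb_4001.conf

Main parameters: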

host = "0.0.0.0"

port = 4001

# Set the storage type to tikv.

store = "tikv"

# PD nodes; these are all PD client-urls addresses

path = "192.168.100.74:4201,192.168.100.73:4203,192.168.100.75:4205"

socket = ""

run-ddl = true

lease = "45s"

split-table = true

token-limit = 1000

oom-action = "log"

enable-streaming = false

lower-case-table-names = 2

[log]

level = "info"

log-file = "/data_db3/tidb/db/4001/tidb.log"

format = "text"

disable-timestamp = false

slow-query-file = ""

slow-threshold = 300

expensive-threshold = 10000

query-log-max-len = 2048

[log.file]

filename = ""

max-size = 300

max-days = 0

max-backups = 0

log-rotate = true

[security]

ssl-ca = ""

ssl-cert = ""

ssl-key = ""

cluster-ssl-ca = ""

cluster-ssl-cert = ""

cluster-ssl-key = ""

[status]

report-status = true

status-port = 10080 # port used to report TiDB status

metrics-addr = ""

metrics-interval = 15

[performance]

max-procs = 0

stmt-count-limit = 5000

tcp-keep-alive = true

cross-join = true

stats-lease = "3s"

run-auto-analyze = true

feedback-probability = 0.05

query-feedback-limit = 1024

pseudo-estimate-ratio = 0.8

[proxy-protocol]

networks = ""

header-timeout = 5

[plan-cache]

enabled = false

capacity = 2560

shards = 256

[prepared-plan-cache]

enabled = false

capacity = 100

[opentracing]

enable = false

rpc-metrics = false

[opentracing.sampler]

type = "const"

param = 1.0

sampling-server-url = ""

max-operations = 0

sampling-refresh-interval = 0

[opentracing.reporter]

queue-size = 0

buffer-flush-interval = 0

log-spans = false

local-agent-host-port = ""

[tikv-client]

grpc-connection-count = 16

commit-timeout = "41s"

[txn-local-latches]

enabled = false

capacity = 1024000

[binlog]

binlog-socket = ""
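TiDB's path setting in the configuration above and TiKV's [pd] endpoints setting both list the same PD client addresses, only in different syntax. As a small illustration, the two forms can be derived from one list so they never drift apart; the variable and function names here are my own.

```shell
# PD client addresses (host:client-port) from the node plan in this post.
PD_CLIENTS="192.168.100.74:4201 192.168.100.73:4203 192.168.100.75:4205"

# Comma-separated form, as used by TiDB's path setting.
tidb_path() {
  echo "$1" | tr ' ' ','
}

# Quoted TOML array form, as used by TiKV's [pd] endpoints setting.
tikv_endpoints() {
  printf '['
  sep=''
  for ep in $1; do
    printf '%s"%s"' "$sep" "$ep"
    sep=','
  done
  printf ']\n'
}

echo "path = \"$(tidb_path "$PD_CLIENTS")\""
echo "endpoints = $(tikv_endpoints "$PD_CLIENTS")"
```

The helpers expect a single-space-separated list; they only format strings and do not check that the addresses are reachable.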

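Start TiDB:

/usr/local/tidb-2.0.4/bin/tidb-server --config=/usr/local/tidb-2.0.4/conf/tidb_4001.conf

I ran into one problem when starting TiDB: the log-file setting did not take effect, and I did not track down why at the time. A likely cause is that TiDB reads the log path from filename under [log.file] (left empty above) rather than from a log-file key under [log]. Passing the path on the command line works: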

/usr/local/tidb-2.0.4/bin/tidb-server --config=/usr/local/tidb-2.0.4/conf/tidb_4001.conf --log-file=/data_db3/tidb/db/4001/tidb.log

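With TiDB installed, you can inspect its contents with a MySQL client. The commands are essentially the same as MySQL's, the built-in views are similar, and the MySQL protocol is supported. In short, you use TiDB as if it were MySQL.

A freshly initialized TiDB has a root account with no password.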

mysql -h 192.168.100.75 -uroot -P 4001

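That completes the TiDB installation.

How do you replicate MySQL data from various sources into TiDB?

Three tools: mydumper + loader + syncer

For example, to replicate the following databases in real time from MySQL (192.168.100.56, port 3345):

"test1","test2","test3","mytab1"

1. Import the data with mydumper + loader and note the binlog position.

2. Write the syncer configuration file.

100.56_3345.toml

# Basic settings: replication rules, filter rules, and so on.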

log-level = "info"

server-id = 101

# File that records the binlog position to replicate from.

meta = "/usr/local/tidb-2.0.4/tools/syncer/100.56_3345.meta"

worker-count = 16

batch = 10

status-addr = "127.0.0.1:10097"

skip-ddls = ["^DROP\\s"]

replicate-do-db = ["test1","test2","test3","mytab1"]

# Source MySQL connection

[from]

host = "192.168.100.56"

user = "tidbrepl"

password = "xxxxxx"

port = 3345

# TiDB connection

[to]

host = "192.168.100.75"

user = "root"

password = ""

port = 4001

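Contents of the meta file /usr/local/tidb-2.0.4/tools/syncer/100.56_3345.meta:

binlog-name = "mysql-bin.000089"

binlog-pos = 1070520171

binlog-gtid = "" # The GTID can be left empty at first. syncer rewrites this file periodically while replicating and fills in the GTID information.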
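The binlog name and position in the meta file come from the dump taken in step 1. As a rough sketch, assuming mydumper wrote its usual metadata file with "Log:" and "Pos:" lines under "SHOW MASTER STATUS:" (the exact layout can differ between mydumper versions), the meta lines can be produced like this. The function name mydumper_to_syncer_meta is hypothetical.

```shell
# Convert a mydumper "metadata" file into syncer meta lines on stdout.
# Only the SHOW MASTER STATUS section is read; the GTID is left empty.
mydumper_to_syncer_meta() {
  awk '
    /SHOW MASTER STATUS/ { in_master = 1; next }
    /SHOW SLAVE STATUS/  { in_master = 0 }
    in_master && $1 == "Log:" { name = $2 }
    in_master && $1 == "Pos:" { pos = $2 }
    END {
      printf "binlog-name = \"%s\"\n", name
      printf "binlog-pos = %s\n", pos
      printf "binlog-gtid = \"\"\n"
    }
  ' "$1"
}
```

Redirecting the output to the path named by the meta setting in the syncer configuration gives syncer its starting point. Check the values against the metadata file by hand before starting replication.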

Start replication:

/usr/local/tidb-2.0.4/bin/syncer -config /usr/local/tidb-2.0.4/tools/syncer/100.56_3345.toml >>/tmp/logfilexxxxx

Keep the log file (/tmp/logfilexxxxx) when you start syncer. If replication fails, you can recover the binlog position from it.

Original article: https://www.cnblogs.com/vansky/p/9328375.html