Tags: run, enter, comm, read, data, number, end, windows, queue

# Threading library
import threading
# Queue
from queue import Queue
# HTML parsing library
from lxml import etree
# HTTP requests
import requests
# Used for time.sleep() to pause between requests
import time


class ThreadCrawl(threading.Thread):
    def __init__(self, thread_name, page_queue, data_queue):
        # Call the parent class initializer
        # (equivalent to threading.Thread.__init__(self))
        super(ThreadCrawl, self).__init__()
        # Thread name
        self.thread_name = thread_name
        # Queue of page numbers
        self.page_queue = page_queue
        # Queue of fetched data
        self.data_queue = data_queue
        # Request headers
        self.headers = {"User-Agent": "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Trident/5.0;"}

26

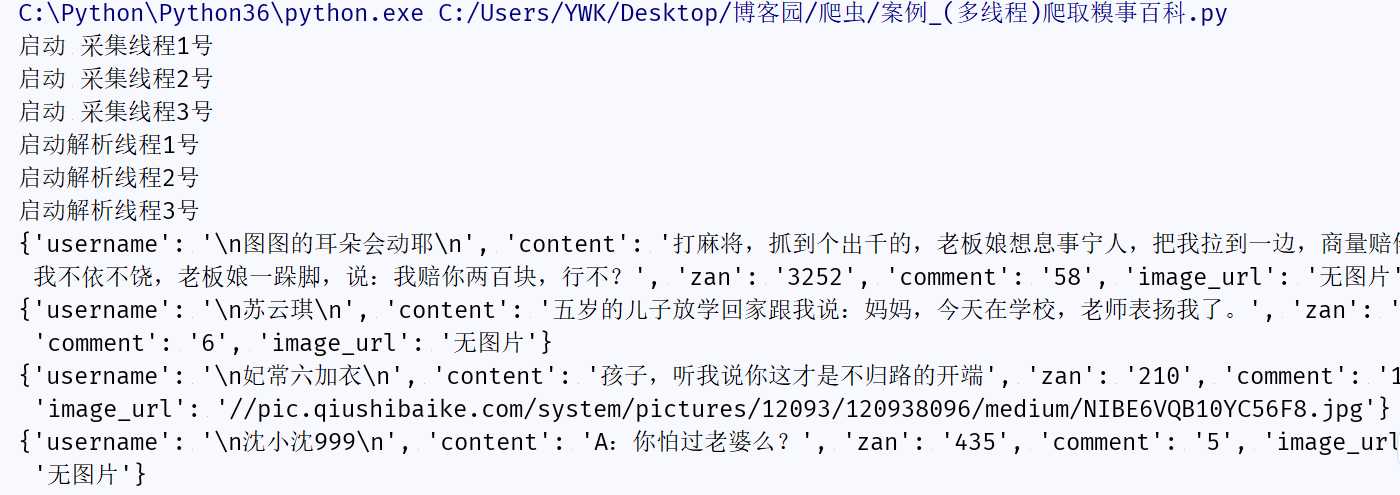

    def run(self):
        print("Starting " + self.thread_name)
        while not CRAWL_EXIT:
            try:
                # Take one page number from the queue (first in, first out).
                # get() has an optional parameter, block, which defaults to True:
                # 1. If the queue is empty and block is True, get() does not return;
                #    it blocks until the queue has new data.
                # 2. If the queue is empty and block is False, get() raises
                #    a queue.Empty exception.
                page = self.page_queue.get(False)
                url = "http://www.qiushibaike.com/8hr/page/" + str(page) + "/"
                # print(url)
                content = requests.get(url, headers=self.headers).text
                time.sleep(1)
                self.data_queue.put(content)
                # print(len(content))
            except Exception:
                # queue.Empty (no pages left) or a failed request: keep looping
                pass
        print("Finished " + self.thread_name)
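The comments in `run()` describe how the `block` argument of `Queue.get()` behaves. A minimal standalone sketch of that behaviour, using only the standard library, might look like the following; the variable names are illustrative and do not come from the crawler.

```python
import queue

q = queue.Queue()
q.put(1)

# Non-blocking get: returns immediately because an item is available.
first = q.get(block=False)

# The queue is now empty, so a non-blocking get raises queue.Empty.
try:
    q.get(block=False)
    raised_empty = False
except queue.Empty:
    raised_empty = True

# A blocking get with a timeout waits up to that long,
# then raises queue.Empty as well.
try:
    q.get(block=True, timeout=0.1)
    timed_out = False
except queue.Empty:
    timed_out = True

print(first, raised_empty, timed_out)
```

Passing a `timeout` is one way to avoid both an endless block and a tight retry loop; whether that suits the crawler depends on how the exit flag is checked.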


class ThreadParse(threading.Thread):
    def __init__(self, thread_name, data_queue):
        super(ThreadParse, self).__init__()
        # Thread name
        self.thread_name = thread_name
        # Queue of fetched data
        self.data_queue = data_queue

    def run(self):
        print("Starting " + self.thread_name)
        while not PARSE_EXIT:
            try:
                html = self.data_queue.get(False)
                self.parse(html)
            except Exception:
                # queue.Empty (nothing to parse yet) or a page that failed to parse
                pass
        print("Exiting " + self.thread_name)

    def parse(self, html):
        selector = etree.HTML(html)

        # Select the node of every post. contains() does a substring match:
        # its first argument is the attribute to test, and the second is
        # part of that attribute's value.
        # Each node holds one complete post (user name, text, votes, comments, etc.)
        node_list = selector.xpath('//div[contains(@id,"qiushi_tag_")]')

        for node in node_list:
            # User name: use .text to get the content of the tag
            user_name = node.xpath('./div[@class="author clearfix"]//h2')[0].text

            # Post text. The expression must start with a dot;
            # otherwise matching starts from the whole page again.
            # Note: if the span contains <br> tags, a browser XPath plugin shows
            # the text fine, but in code the <br> elements are child nodes,
            # so .text only returns the text before the first <br>.
            duanzi_info = node.xpath('.//div[@class="content"]/span')[0].text.strip()

            # Vote (like) count
            vote_num = node.xpath('.//span[@class="stats-vote"]/i')[0].text

            # Comment count
            comment_num = node.xpath('.//span[@class="stats-comments"]//i')[0].text

            # Image link: this selects the value of the src attribute,
            # so .text is not needed
            img_url = node.xpath('.//div[@class="thumb"]//@src')
            if len(img_url) > 0:
                img_url = img_url[0]
            else:
                img_url = "no image"

            self.save_info(user_name, duanzi_info, vote_num, comment_num, img_url)

    def save_info(self, user_name, duanzi_info, vote_num, comment_num, img_url):
        """Collect the fields of one post into a dict."""
        item = {
            "username": user_name,
            "content": duanzi_info,
            "zan": vote_num,
            "comment": comment_num,
            "image_url": img_url
        }

        print(item)
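The comments in `parse()` mention three XPath details: the `contains()` substring match, the leading dot for relative paths, and the way `<br>` affects `.text`. The sketch below tries to show all three on a small made-up HTML snippet. The markup is an assumption that imitates the structure the crawler expects and is not copied from the real site.

```python
from lxml import etree

# Made-up HTML imitating the structure assumed by parse(); not real site markup.
SAMPLE = """
<html><body>
  <div id="qiushi_tag_1"><div class="content"><span>first<br/>second line</span></div></div>
  <div id="qiushi_tag_2"><div class="content"><span>another</span></div></div>
  <div id="sidebar"><div class="content"><span>not a post</span></div></div>
</body></html>
"""

selector = etree.HTML(SAMPLE)

# contains(@id, "...") keeps only elements whose id includes the substring.
nodes = selector.xpath('//div[contains(@id, "qiushi_tag_")]')
ids = [node.get("id") for node in nodes]

first = nodes[0]
# A leading "." searches inside the current node only.
relative = first.xpath('.//div[@class="content"]/span')
# Without the dot, "//" starts again from the document root.
absolute = first.xpath('//div[@class="content"]/span')

span = relative[0]
# .text stops at the first child element (<br/>);
# itertext() walks every text piece inside the element.
text_only = span.text
full_text = " ".join(span.itertext())

print(ids, len(relative), len(absolute), text_only, full_text)
```

If the whole post text is wanted, including the parts after each `<br>`, joining `itertext()` is one option; the original code keeps only the first piece.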


# Global flags: set to True to make the crawl / parse threads leave their loops
CRAWL_EXIT = False
PARSE_EXIT = False
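The two module-level booleans work as exit signals. As an alternative sketch (a swapped-in technique, not what the original code does), `threading.Event` from the standard library can play the same role without `global` statements. The names `stop_event`, `worker` and `ticks` are hypothetical.

```python
import threading
import time

stop_event = threading.Event()  # hypothetical replacement for a global exit flag
ticks = []

def worker():
    # Loop until the main thread sets the event.
    while not stop_event.is_set():
        ticks.append(1)
        # wait() sleeps up to 0.01 s but returns early once the event is set.
        stop_event.wait(0.01)

t = threading.Thread(target=worker)
t.start()
time.sleep(0.05)
stop_event.set()   # ask the worker to exit
t.join(timeout=1)

print(t.is_alive(), len(ticks) > 0)
```

Because `wait()` doubles as an interruptible sleep, the worker neither spins at full speed nor delays shutdown.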


def main():
    global CRAWL_EXIT, PARSE_EXIT

    # Queue of page numbers, sized for 20 pages
    pageQueue = Queue(20)
    # Put in the numbers 1 to 20 (first in, first out)
    for i in range(1, 21):
        pageQueue.put(i)

    # Queue for the crawl results (the HTML source of each page);
    # no argument means the size is unlimited
    dataQueue = Queue()

    # Output file and lock; save_info() only prints each item,
    # so neither is used yet
    filename = open("duanzi.json", "a")
    # Create the lock
    lock = threading.Lock()

    # Names of the three crawl threads
    crawlList = ["Crawl thread 1", "Crawl thread 2", "Crawl thread 3"]
    # List that holds the three crawl threads
    thread_crawl = []
    for threadName in crawlList:
        thread = ThreadCrawl(threadName, pageQueue, dataQueue)
        thread.start()
        thread_crawl.append(thread)

    # Names of the three parse threads
    parseList = ["Parse thread 1", "Parse thread 2", "Parse thread 3"]
    # List that holds the three parse threads
    thread_parse = []
    for threadName in parseList:
        thread = ThreadParse(threadName, dataQueue)
        thread.start()
        thread_parse.append(thread)

    # Wait until pageQueue is empty, i.e. every page number has been taken
    while not pageQueue.empty():
        time.sleep(0.1)

    # pageQueue is empty, so tell the crawl threads to leave their loops
    CRAWL_EXIT = True

    print("pageQueue is empty")

    for thread in thread_crawl:
        thread.join()

    # Wait until every fetched page has been taken by a parse thread
    while not dataQueue.empty():
        time.sleep(0.1)

    PARSE_EXIT = True

    for thread in thread_parse:
        thread.join()

    filename.close()
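`main()` waits for each queue to drain by polling `empty()`. A different pattern, shown here only as a sketch and not as the author's method, is to let workers call `task_done()` and have the main thread call `Queue.join()`. The doubling step stands in for real work, and `None` as a stop marker is an assumption of this example.

```python
import queue
import threading

tasks = queue.Queue()
results = []
results_lock = threading.Lock()

def worker():
    while True:
        item = tasks.get()          # blocks until an item arrives
        if item is None:            # None is used here as a stop marker
            tasks.task_done()
            break
        with results_lock:
            results.append(item * 2)   # placeholder for fetching or parsing
        tasks.task_done()           # mark this item as fully processed

threads = [threading.Thread(target=worker) for _ in range(3)]
for t in threads:
    t.start()

for page in range(1, 21):
    tasks.put(page)

tasks.join()                        # returns once every item is marked done

for _ in threads:
    tasks.put(None)                 # one stop marker per worker
for t in threads:
    t.join()

print(sorted(results) == [p * 2 for p in range(1, 21)])
```

With this approach, `join()` returns only after every item has been processed, not merely removed from the queue, which is a stronger guarantee than checking `empty()`.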


if __name__ == "__main__":
    main()
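The script opens `duanzi.json` and creates a lock, but `save_info()` only prints each item. One possible way to connect them, assuming one JSON object per line is an acceptable file format, is sketched below. `save_item` and `write_lock` are hypothetical names, and the example writes to a temporary directory rather than the real output file.

```python
import json
import os
import tempfile
import threading

write_lock = threading.Lock()

def save_item(fp, item):
    # ensure_ascii=False keeps Chinese text readable in the file.
    line = json.dumps(item, ensure_ascii=False)
    # Only one thread at a time may write, so lines never interleave.
    with write_lock:
        fp.write(line + "\n")

path = os.path.join(tempfile.mkdtemp(), "duanzi.json")
with open(path, "a", encoding="utf-8") as fp:
    threads = [
        threading.Thread(
            target=save_item,
            args=(fp, {"username": "user%d" % i, "zan": str(i)}),
        )
        for i in range(5)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()

# Read the file back: each line is an independent JSON object.
with open(path, encoding="utf-8") as fp:
    items = [json.loads(line) for line in fp]

print(len(items))
```

In the crawler, the file object and lock created in `main()` would have to be passed to each `ThreadParse` instance for this to work.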

If you share my interests, feel free to add me as a friend so we can exchange ideas!

Original article: https://www.cnblogs.com/ywk-1994/p/9589030.html