
1. Architecture

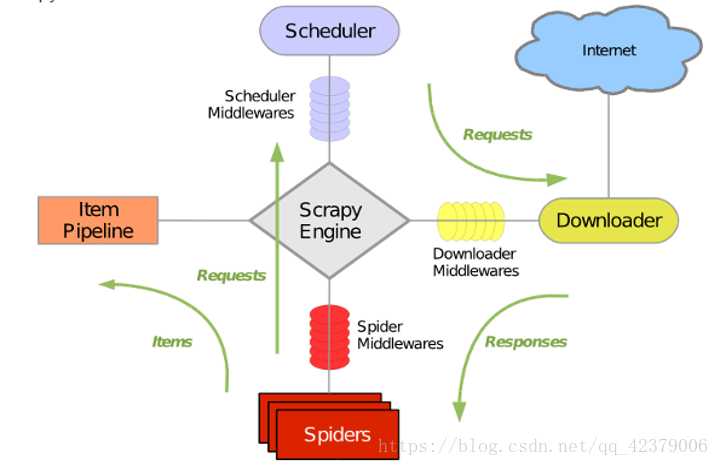

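Engine (Scrapy): controls the data flow of the whole system and triggers events (the core of the framework).

Scheduler: accepts the requests sent by the engine, pushes them onto a queue, and returns them when the engine asks again. You can think of it as a priority queue of URLs (the addresses of the pages to crawl): it decides which URL is crawled next and also filters out duplicate URLs.

Downloader: downloads page content and returns it (via the engine) to the spiders. Scrapy's downloader is built on Twisted, an efficient asynchronous networking framework.

Spiders: the spiders do the main work. They extract the information you need from specific pages, the so-called entities (Items). You can also extract links from a page so that Scrapy goes on to crawl the next page.

Item Pipeline: processes the items that spiders extract from pages. Its main jobs are persisting items, validating them, and removing unwanted information. After a spider has parsed a page, the resulting items are sent to the item pipeline and processed by several stages in a defined order.

Downloader Middlewares: a layer of hooks between the Scrapy engine and the downloader that processes the requests and responses passing between them.

Spider Middlewares: a layer of hooks between the Scrapy engine and the spiders that processes the responses fed into the spiders and the requests they output.

Scheduler Middlewares: middleware between the Scrapy engine and the scheduler that handles the requests and responses sent from the engine to the scheduler.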
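To make the scheduler's role concrete, here is a toy sketch of "a priority queue of URLs that also filters duplicates". It is plain Python, not Scrapy's real scheduler: `ToyScheduler` and its methods are hypothetical names, it deduplicates on the raw URL string (Scrapy's own duplicate filter works on request fingerprints), and it follows Scrapy's convention that a higher priority value is crawled earlier.

```python
import heapq
import itertools


class ToyScheduler:
    """Hypothetical scheduler: a priority queue of URLs plus a seen-set."""

    def __init__(self):
        self._heap = []                    # entries: (-priority, order, url)
        self._order = itertools.count()    # tie-breaker: FIFO for equal priority
        self._seen = set()                 # every URL ever enqueued

    def enqueue(self, url, priority=0):
        """Queue url unless it was seen before; return True if it was queued."""
        if url in self._seen:
            return False                   # duplicate: filtered out
        self._seen.add(url)
        # heapq is a min-heap, so negate the priority to pop the highest first
        heapq.heappush(self._heap, (-priority, next(self._order), url))
        return True

    def next_request(self):
        """Return the next URL to crawl, or None when the queue is empty."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]

    def __len__(self):
        return len(self._heap)


if __name__ == "__main__":
    s = ToyScheduler()
    s.enqueue("http://quotes.toscrape.com/page/2/")
    s.enqueue("http://quotes.toscrape.com/", priority=10)
    s.enqueue("http://quotes.toscrape.com/page/2/")  # ignored: already seen
    print(s.next_request())  # http://quotes.toscrape.com/
    print(s.next_request())  # http://quotes.toscrape.com/page/2/
    print(s.next_request())  # None
```

The running counter in each heap entry keeps requests with equal priority in first-in, first-out order.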

2. Data Flow

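Scrapy's data flow is controlled by the Engine:

(1) The Engine opens a website, finds the Spider that handles it, and asks that Spider for the first URL to crawl.

(2) The Engine gets the first URL to crawl from the Spider and schedules it in the Scheduler as a Request.

(3) The Engine asks the Scheduler for the next URL to crawl.

(4) The Scheduler returns the next URL to the Engine, which forwards it through the Downloader Middlewares to the Downloader.

(5) Once the page has been downloaded, the Downloader generates a Response for it and sends it through the Downloader Middlewares to the Engine.

(6) The Engine receives the Response from the Downloader and sends it through the Spider Middlewares to the Spider for processing.

(7) The Spider processes the Response and returns the scraped Items and new Requests to the Engine.

(8) The Engine passes the Items returned by the Spider to the Item Pipeline and the new Requests to the Scheduler.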

(9) Steps (3) to (8) repeat until there are no more Requests in the Scheduler; the Engine then closes the website and the crawl ends.

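Because several components cooperate, each does a different job, and all of them support asynchronous processing, Scrapy makes the most of the available network bandwidth and greatly improves the efficiency of crawling and processing data.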
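Steps (1) to (9) can be condensed into a toy, single-threaded loop. This is a simulation under stated assumptions, not Scrapy's engine: the "downloader" reads from a hypothetical in-memory dict of fake pages instead of the network, requests are plain URL strings, items are plain dicts, and all names (`FAKE_SITE`, `download`, `spider_parse`, `toy_crawl`) are made up for illustration. Real Scrapy runs these stages asynchronously on Twisted and passes `Request`/`Response` objects through the middlewares.

```python
from collections import deque

# Hypothetical in-memory "website": URL -> (quotes on the page, next-page URL)
FAKE_SITE = {
    "/page/1/": (["quote A", "quote B"], "/page/2/"),
    "/page/2/": (["quote C"], None),
}


def download(url):
    """Stand-in for the Downloader: build a 'response' for url."""
    return {"url": url, "body": FAKE_SITE[url]}


def spider_parse(response):
    """Stand-in for Spider.parse: yield items (dicts) and new request URLs."""
    quotes, next_url = response["body"]
    for text in quotes:
        yield {"text": text}
    if next_url is not None:
        yield next_url


def toy_crawl(start_url):
    """Engine loop: scheduler -> downloader -> spider -> pipeline / scheduler."""
    scheduler = deque([start_url])   # step (2): first request scheduled
    seen = {start_url}
    pipeline_output = []             # stand-in for the Item Pipeline
    while scheduler:                 # step (9): stop when no requests remain
        url = scheduler.popleft()    # steps (3)-(4): fetch the next request
        response = download(url)     # step (5): download the page
        for result in spider_parse(response):       # steps (6)-(7)
            if isinstance(result, dict):
                pipeline_output.append(result)      # step (8): item -> pipeline
            elif result not in seen:
                seen.add(result)
                scheduler.append(result)            # step (8): request -> scheduler
    return pipeline_output


if __name__ == "__main__":
    print(toy_crawl("/page/1/"))
    # [{'text': 'quote A'}, {'text': 'quote B'}, {'text': 'quote C'}]
```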

3. Hands-On

Create the project

scrapy startproject tutorial

Create the Spider

cd tutorial

scrapy genspider quotes quotes.toscrape.com

Create the Item

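An Item is a container for scraped data and is used much like a dict. Compared with a dict, however, an Item adds a protection mechanism: only declared fields can be set, so misspelled or undefined field names are caught instead of being silently accepted.

To create an Item, subclass scrapy.Item and define fields of type scrapy.Field. Looking at the target site, the content we can extract is text, author and tags.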

Use the Item

items.py

```python
import scrapy


class TutorialItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    text = scrapy.Field()
    author = scrapy.Field()
    tags = scrapy.Field()
```

quotes.py

```python
# -*- coding: utf-8 -*-
import scrapy

from tutorial.items import TutorialItem


class QuotesSpider(scrapy.Spider):
    name = "quotes"
    allowed_domains = ["quotes.toscrape.com"]
    start_urls = ['http://quotes.toscrape.com/']

    def parse(self, response):
        quotes = response.css('.quote')
        for quote in quotes:
            item = TutorialItem()
            item['text'] = quote.css('.text::text').extract_first()
            item['author'] = quote.css('.author::text').extract_first()
            item['tags'] = quote.css('.tags .tag::text').extract()
            yield item

        # Follow the "Next" link; the last page has none, so stop there
        next_page = response.css('.pager .next a::attr(href)').extract_first()
        if next_page is not None:
            url = response.urljoin(next_page)
            yield scrapy.Request(url=url, callback=self.parse)
```

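Run the spider and export the results. Scrapy infers the output format from the file extension, and the last command uploads the CSV file to an FTP server:

scrapy crawl quotes -o quotes.json

scrapy crawl quotes -o quotes.csv

scrapy crawl quotes -o quotes.xml

scrapy crawl quotes -o quotes.pickle

scrapy crawl quotes -o quotes.marshal

scrapy crawl quotes -o ftp://user:pass@ftp.example.com/path/to/quotes.csv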
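The JSON feed written by `-o quotes.json` is a single JSON array with one object per item, so it can be loaded back with the standard library. A minimal sketch; `load_quotes` and `count_tags` are hypothetical helper names, and the field names assume the `text`/`author`/`tags` Item defined above. Note that `-o` appends to an existing file, so crawling twice into the same `.json` file leaves it as invalid JSON; delete the file first (newer Scrapy versions also offer `-O` to overwrite).

```python
import json
from collections import Counter


def load_quotes(path):
    """Load the list of quote dicts written by `scrapy crawl quotes -o quotes.json`."""
    with open(path, encoding='utf-8') as f:
        return json.load(f)


def count_tags(quotes):
    """Count how often each tag appears across all scraped quotes."""
    counter = Counter()
    for quote in quotes:
        counter.update(quote.get('tags') or [])  # tolerate missing/empty tags
    return counter
```

For example, `count_tags(load_quotes('quotes.json')).most_common(5)` lists the five most frequent tags after a crawl.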

4. Using the Item Pipeline

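The Item Pipeline is the project's item-processing pipeline. Once an Item has been generated, it is automatically sent to the Item Pipeline for processing. Item Pipelines are commonly used for:

Cleaning HTML data.

Validating scraped data and checking the scraped fields.

Detecting and dropping duplicate content.

Saving the scraped results to a database.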

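All you need to do is define a class and implement the process_item() method. Once the Item Pipeline is enabled, Scrapy calls this method automatically. process_item() must either return a dict or Item object containing the data, or raise a DropItem exception.

process_item() takes two parameters. The first, item, receives every Item the Spider generates. The second, spider, is the Spider instance itself.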
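As an example of the "detect and drop duplicates" use case, a pipeline can remember a key for every item it has seen and raise `DropItem` for repeats. A minimal sketch, assuming the quote `text` is a good enough uniqueness key (an assumption; real data may need a different key). `DuplicatesPipeline` is a hypothetical name, and the fallback `DropItem` class exists only so the sketch runs without Scrapy installed.

```python
try:
    from scrapy.exceptions import DropItem
except ImportError:
    # Stand-in so the sketch runs without Scrapy; real projects use Scrapy's DropItem
    class DropItem(Exception):
        pass


class DuplicatesPipeline(object):
    """Drop any item whose 'text' has already been seen during this crawl."""

    def __init__(self):
        self.seen_texts = set()

    def process_item(self, item, spider):
        key = item['text']
        if key in self.seen_texts:
            # Raising (not returning) DropItem tells Scrapy to discard the item
            raise DropItem('Duplicate item found: %r' % key)
        self.seen_texts.add(key)
        return item


if __name__ == "__main__":
    pipeline = DuplicatesPipeline()
    for item in [{'text': 'a'}, {'text': 'b'}, {'text': 'a'}]:
        try:
            print('kept', pipeline.process_item(item, spider=None))
        except DropItem as exc:
            print('dropped:', exc)
```

Because the seen-set lives on the pipeline instance, duplicates are only detected within one crawl; persisting across runs would need external storage.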

Modify pipelines.py in the project:

```python
# -*- coding: utf-8 -*-

# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: https://doc.scrapy.org/en/latest/topics/item-pipeline.html
from scrapy.exceptions import DropItem
import pymongo


class TextPipeline(object):
    def __init__(self):
        # Maximum length of quote text to keep
        self.limit = 50

    def process_item(self, item, spider):
        if item['text']:
            if len(item['text']) > self.limit:
                # Truncate long quotes and mark them with an ellipsis
                item['text'] = item['text'][0:self.limit].rstrip() + '...'
            return item
        else:
            # DropItem must be raised (not returned) for the item to be discarded
            raise DropItem('Missing Text')


class MongoPipeline(object):
    def __init__(self, mongo_uri, mongo_db):
        self.mongo_uri = mongo_uri
        self.mongo_db = mongo_db

    @classmethod
    def from_crawler(cls, crawler):
        # Read the connection parameters from settings.py
        return cls(
            mongo_uri=crawler.settings.get('MONGO_URI'),
            mongo_db=crawler.settings.get('MONGO_DB')
        )

    def open_spider(self, spider):
        self.client = pymongo.MongoClient(self.mongo_uri)
        self.db = self.client[self.mongo_db]

    def process_item(self, item, spider):
        # Use the Item class name (e.g. TutorialItem) as the collection name
        name = item.__class__.__name__
        # insert() was removed in PyMongo 4; insert_one() replaces it
        self.db[name].insert_one(dict(item))
        return item

    def close_spider(self, spider):
        self.client.close()
```

settings.py

```python
# -*- coding: utf-8 -*-

# Scrapy settings for tutorial project
#
# For simplicity, this file contains only settings considered important or
# commonly used. You can find more settings consulting the documentation:
#
#     https://doc.scrapy.org/en/latest/topics/settings.html
#     https://doc.scrapy.org/en/latest/topics/downloader-middleware.html
#     https://doc.scrapy.org/en/latest/topics/spider-middleware.html

BOT_NAME = 'tutorial'

SPIDER_MODULES = ['tutorial.spiders']
NEWSPIDER_MODULE = 'tutorial.spiders'


# Crawl responsibly by identifying yourself (and your website) on the user-agent
#USER_AGENT = 'tutorial (+http://www.yourdomain.com)'

# Obey robots.txt rules
ROBOTSTXT_OBEY = True

# Configure maximum concurrent requests performed by Scrapy (default: 16)
#CONCURRENT_REQUESTS = 32

# Configure a delay for requests for the same website (default: 0)
# See https://doc.scrapy.org/en/latest/topics/settings.html#download-delay
# See also autothrottle settings and docs
#DOWNLOAD_DELAY = 3
# The download delay setting will honor only one of:
#CONCURRENT_REQUESTS_PER_DOMAIN = 16
#CONCURRENT_REQUESTS_PER_IP = 16

# Disable cookies (enabled by default)
#COOKIES_ENABLED = False

# Disable Telnet Console (enabled by default)
#TELNETCONSOLE_ENABLED = False

# Override the default request headers:
#DEFAULT_REQUEST_HEADERS = {
#   'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
#   'Accept-Language': 'en',
#}

# Enable or disable spider middlewares
# See https://doc.scrapy.org/en/latest/topics/spider-middleware.html
#SPIDER_MIDDLEWARES = {
#    'tutorial.middlewares.TutorialSpiderMiddleware': 543,
#}

# Enable or disable downloader middlewares
# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html
#DOWNLOADER_MIDDLEWARES = {
#    'tutorial.middlewares.TutorialDownloaderMiddleware': 543,
#}

# Enable or disable extensions
# See https://doc.scrapy.org/en/latest/topics/extensions.html
#EXTENSIONS = {
#    'scrapy.extensions.telnet.TelnetConsole': None,
#}

# Configure item pipelines
# See https://doc.scrapy.org/en/latest/topics/item-pipeline.html
ITEM_PIPELINES = {
    'tutorial.pipelines.TextPipeline': 300,
    'tutorial.pipelines.MongoPipeline': 400,
}

# Enable and configure the AutoThrottle extension (disabled by default)
# See https://doc.scrapy.org/en/latest/topics/autothrottle.html
#AUTOTHROTTLE_ENABLED = True
# The initial download delay
#AUTOTHROTTLE_START_DELAY = 5
# The maximum download delay to be set in case of high latencies
#AUTOTHROTTLE_MAX_DELAY = 60
# The average number of requests Scrapy should be sending in parallel to
# each remote server
#AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0
# Enable showing throttling stats for every response received:
#AUTOTHROTTLE_DEBUG = False

# Enable and configure HTTP caching (disabled by default)
# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings
#HTTPCACHE_ENABLED = True
#HTTPCACHE_EXPIRATION_SECS = 0
#HTTPCACHE_DIR = 'httpcache'
#HTTPCACHE_IGNORE_HTTP_CODES = []
#HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'

MONGO_URI = 'localhost'
MONGO_DB = 'tutorial'
```
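In `ITEM_PIPELINES` the numbers set the order: Scrapy passes each item through the enabled pipelines from the lowest value to the highest (values are customarily kept in the 0 to 1000 range). Here `TextPipeline` (300) therefore runs before `MongoPipeline` (400), and an item for which `TextPipeline` raises `DropItem` never reaches `MongoPipeline`. The toy harness below imitates that behaviour in pure Python; `run_pipelines`, `TruncateText` (a simplified `TextPipeline`) and `CollectItems` (a list instead of MongoDB) are hypothetical stand-ins, not Scrapy internals.

```python
try:
    from scrapy.exceptions import DropItem
except ImportError:
    class DropItem(Exception):
        """Stand-in for scrapy.exceptions.DropItem when Scrapy is not installed."""


class TruncateText(object):
    """Simplified TextPipeline: drop items without text, truncate long text."""
    limit = 50

    def process_item(self, item, spider):
        if not item.get('text'):
            raise DropItem('Missing Text')
        if len(item['text']) > self.limit:
            item['text'] = item['text'][:self.limit].rstrip() + '...'
        return item


class CollectItems(object):
    """Stand-in for MongoPipeline: stores items in a list instead of MongoDB."""

    def __init__(self):
        self.stored = []

    def process_item(self, item, spider):
        self.stored.append(dict(item))
        return item


def run_pipelines(item, pipelines):
    """pipelines: (priority, pipeline) pairs; lower priority runs first."""
    for _, pipeline in sorted(pipelines, key=lambda pair: pair[0]):
        try:
            item = pipeline.process_item(item, spider=None)
        except DropItem:
            return None   # later pipelines never see a dropped item
    return item


if __name__ == "__main__":
    store = CollectItems()
    # Listed out of order on purpose: sorting puts 300 before 400
    pipelines = [(400, store), (300, TruncateText())]
    run_pipelines({'text': 'x' * 60}, pipelines)
    run_pipelines({'text': ''}, pipelines)   # dropped by TruncateText
    print(store.stored)   # one item, its text cut to 50 chars plus '...'
```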

5. Using Selector

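Selector is a module that can be used on its own. We can use the Selector class directly to build a selector object and then call its methods, such as xpath() and css(), to extract data.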


Original post: https://www.cnblogs.com/chengchengaqin/p/9813817.html