Tags: model mod writing-code storage line-break art src min mode

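When developing big-data processing programs, we generally want to write and debug the code locally and test it on local data first. Only then do we run it against the "big" data in the production environment.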

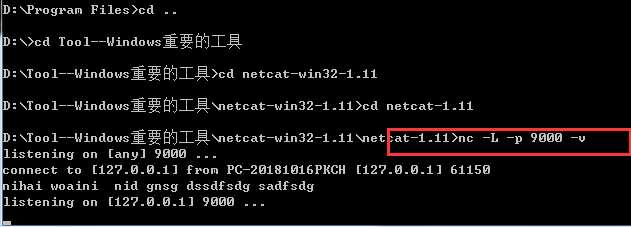

Set up netcat (nc) on Windows by downloading nc.exe from https://eternallybored.org/misc/netcat/

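Start nc listening on port 9000 with the command `nc -L -p 9000 -v`. In the Windows build of nc, `-L` keeps listening after a client disconnects, and `-v` turns on verbose output.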
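If downloading nc.exe is not an option, a small Java program can play the same role. The WordCount job's `socketTextStream` call connects to 127.0.0.1:9000 as a *client*, so whatever runs on that port has to be the listening side. The following is a minimal sketch under that assumption. The class name `LineServer` and its `serveOnce` method are hypothetical and belong to neither Flink nor netcat. Unlike `nc -L`, it serves only a single client and then exits.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.io.OutputStreamWriter;
import java.io.Writer;
import java.net.ServerSocket;
import java.net.Socket;
import java.nio.charset.StandardCharsets;

/**
 * Hypothetical pure-Java stand-in for `nc -l -p 9000`: waits for one client
 * (e.g. Flink's socketTextStream) and forwards every input line to it.
 */
public class LineServer {

    /** Accepts one client on {@code server} and sends it each line of {@code input}, '\n'-terminated. */
    public static void serveOnce(ServerSocket server, BufferedReader input) throws IOException {
        try (Socket client = server.accept();
             Writer out = new OutputStreamWriter(client.getOutputStream(), StandardCharsets.UTF_8)) {
            String line;
            while ((line = input.readLine()) != null) {
                out.write(line);
                out.write('\n'); // matches the "\n" delimiter passed to socketTextStream
                out.flush();     // push each line immediately so the job sees it right away
            }
        }
    }

    public static void main(String[] args) throws IOException {
        int port = args.length > 0 ? Integer.parseInt(args[0]) : 9000;
        BufferedReader stdin = new BufferedReader(new InputStreamReader(System.in, StandardCharsets.UTF_8));
        try (ServerSocket server = new ServerSocket(port)) {
            System.err.println("Listening on port " + port + ", type lines and press Enter");
            serveOnce(server, stdin); // one client only, unlike nc -L
        }
    }
}
```

Compile it with `javac LineServer.java`, run `java LineServer 9000` in its own cmd window before starting the job, and type your input lines into that window.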

Maven configuration (pom.xml):

```xml
<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>lims</groupId>
    <artifactId>flink-project</artifactId>
    <version>1.0-SNAPSHOT</version>

    <properties>
        <flink.version>1.7.2</flink.version>
    </properties>

    <dependencies>
        <!--log4j-->
        <dependency>
            <groupId>log4j</groupId>
            <artifactId>log4j</artifactId>
            <version>1.2.17</version>
        </dependency>
        <!--flink-->
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-java</artifactId>
            <version>${flink.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-streaming-java_2.11</artifactId>
            <version>${flink.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-clients_2.11</artifactId>
            <version>${flink.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-connector-wikiedits_2.11</artifactId>
            <version>${flink.version}</version>
        </dependency>
    </dependencies>
</project>
```

WordCount:

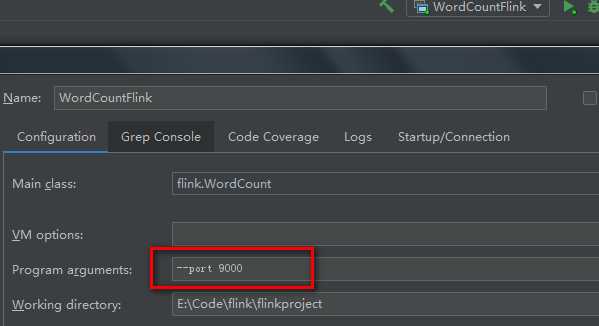

```java
package flink;

import org.apache.flink.api.common.functions.FlatMapFunction;
import org.apache.flink.api.java.utils.ParameterTool;
import org.apache.flink.streaming.api.datastream.DataStream;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.util.Collector;

/**
 * @Description: TODO
 * @Date: 2019/2/25 23:49
 */
public class WordCount {

    public static void main(String[] args) throws Exception {
        // Port of the socket to read from
        int port;
        try {
            ParameterTool parameterTool = ParameterTool.fromArgs(args);
            port = parameterTool.getInt("port");
        } catch (Exception e) {
            System.err.println("No port argument given, using the default 9000");
            port = 9000;
        }

        // Get the execution environment (a local one when started from the IDE)
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

        // Connect to the socket and read the input data, one record per "\n"-terminated line
        DataStreamSource<String> text = env.socketTextStream("127.0.0.1", port, "\n");

        // Compute the counts
        DataStream<WordWithCount> windowCount = text
                // Flatten: turn every word of each line into a <word, count> record
                .flatMap(new FlatMapFunction<String, WordWithCount>() {
                    @Override
                    public void flatMap(String value, Collector<WordWithCount> out) throws Exception {
                        String[] splits = value.split("\\s+");
                        for (String word : splits) {
                            if (!word.isEmpty()) { // skip the empty token produced by leading spaces
                                out.collect(new WordWithCount(word, 1L));
                            }
                        }
                    }
                })
                // Group records with the same word
                .keyBy("word")
                // Window size 2 s, sliding every 1 s
                .timeWindow(Time.seconds(2), Time.seconds(1))
                .sum("count");

        // Print the results to the console, using a parallelism of 1
        windowCount.print().setParallelism(1);

        // Note: Flink evaluates lazily, so the code above only runs once execute() is called
        env.execute("streaming word count");
    }

    /**
     * Stores a word and the number of times it occurred
     */
    public static class WordWithCount {
        public String word;
        public long count;

        public WordWithCount() {}

        public WordWithCount(String word, long count) {
            this.word = word;
            this.count = count;
        }

        @Override
        public String toString() {
            return "WordWithCount{" + "word='" + word + '\'' + ", count=" + count + '}';
        }
    }
}
```
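Passing two arguments to `timeWindow` makes it a sliding window. Each window spans 2 s and a new one starts every 1 s, so every word belongs to two overlapping windows and usually shows up in two consecutive printed results. The plain-Java sketch below mimics how a timestamp is mapped to sliding windows, assuming zero offset and millisecond timestamps. `SlidingWindowSketch` and `assignWindows` are hypothetical names, loosely modeled on (not taken from) Flink's sliding window assigner.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical sketch: which [start, end) sliding windows contain a given timestamp (ms). */
public class SlidingWindowSketch {

    /** Returns the windows of length {@code size} that advance by {@code slide} and contain {@code timestamp}, newest first. */
    public static List<long[]> assignWindows(long timestamp, long size, long slide) {
        List<long[]> windows = new ArrayList<>();
        // Start of the most recent window: align the timestamp down to a multiple of the slide.
        long lastStart = timestamp - Math.floorMod(timestamp, slide);
        // Step back one slide at a time while the window still covers the timestamp.
        for (long start = lastStart; start > timestamp - size; start -= slide) {
            windows.add(new long[] {start, start + size});
        }
        return windows;
    }

    public static void main(String[] args) {
        // A word arriving at t = 2500 ms, with a 2 s window sliding every 1 s
        for (long[] w : assignWindows(2500, 2000, 1000)) {
            System.out.println("[" + w[0] + ", " + w[1] + ")");
        }
    }
}
```

Running `main` prints `[2000, 4000)` and then `[1000, 3000)`. A word arriving at 2.5 s is counted both in the window that closes at 3 s and in the one that closes at 4 s.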

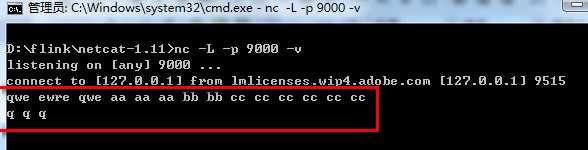

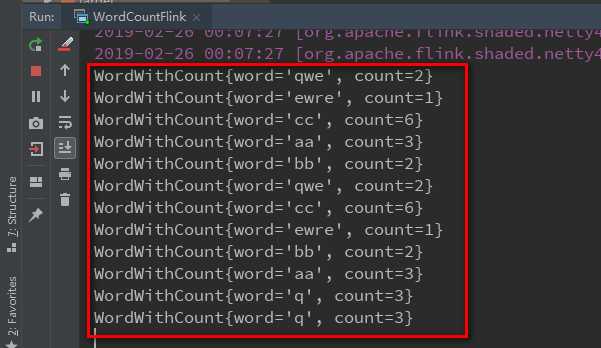

In the cmd window running nc, type some words separated by spaces and press Enter. Then watch the output in the IDEA console.
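To get a rough idea of what to expect in the console, here is a plain-Java sketch (no Flink involved) of the computation a single window performs: split on whitespace, group by word, and sum the ones. `WindowCountSketch` and `countWords` are hypothetical helpers for illustration only. In the real job, the order of printed records is not guaranteed, and each word also appears in the neighbouring overlapping window.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical plain-Java model of one window: flatMap + keyBy("word") + sum("count"). */
public class WindowCountSketch {

    public static Map<String, Long> countWords(String... lines) {
        Map<String, Long> counts = new LinkedHashMap<>(); // keeps first-seen order for readable output
        for (String line : lines) {
            for (String word : line.split("\\s+")) {
                if (word.isEmpty()) continue; // leading whitespace yields an empty first token
                counts.merge(word, 1L, Long::sum); // like summing the 1s per word
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        // e.g. two lines typed into the nc window within the same 2 s window
        countWords("hello flink", "hello world").forEach((word, count) ->
                System.out.println("WordWithCount{word='" + word + "', count=" + count + "}"));
    }
}
```

For the lines `hello flink` and `hello world`, this yields `hello` = 2, `flink` = 1 and `world` = 1. These counts correspond to records such as `WordWithCount{word='hello', count=2}` in the IDEA console.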

This completes the local development and debugging example.

Flink getting-started example: running SocketWordCount in local mode on Windows


Original article: https://www.cnblogs.com/limaosheng/p/10434848.html