标签:park rdd handler handle out cti oss dde rmi

报错一:启动spark-shell后查询hive中的表信息,报错

$SPARK_HOME/bin/spark-shell

spark.sql("select * from student.student ").show()Caused by: java.lang.RuntimeException: Unable to instantiate org.apache.hadoop.hive.ql.metadata.SessionHiveMetaStoreClient

at org.apache.hadoop.hive.metastore.MetaStoreUtils.newInstance(MetaStoreUtils.java:1523)

at org.apache.hadoop.hive.metastore.RetryingMetaSto

Caused by: org.datanucleus.store.rdbms.connectionpool.DatastoreDriverNotFoundException:

The specified datastore driver ("com.mysql.jdbc.Driver") was not found in the

CLASSPATH. Please check your CLASSPATH specification,

and the name of the driver.spark访问存放hive的Metastore的mysql数据库时,没有连接成功,因为spark没有没有mysql-connecter的jar包,即mysql驱动

有人说,那就直接把jar包cp到$SPARK_HOME/libs下呗,不好意思,这样再生产中是绝对不行的,并不是所有spark程序都会用到mysql驱动,所以我们要在提交作业时指定--jars,多个jar包用逗号分隔 注(我的mysql版本是5.1.73)

[hadoop@hadoop003 spark]$ spark-shell --jars ~/softwares/mysql-connector-java-5.1.47.jar

19/05/21 08:02:55 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

Spark context Web UI available at http://hadoop003:4040

Spark context available as ‘sc‘ (master = local[*], app id = local-1558440185051).

Spark session available as ‘spark‘.

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ ‘_/

/___/ .__/\_,_/_/ /_/\_\ version 2.4.2

/_/

Using Scala version 2.11.12 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_131)

Type in expressions to have them evaluated.

Type :help for more information.

scala> spark.sql("select * from student.student ").show()

19/05/21 08:04:42 WARN DataNucleus.General: Plugin (Bundle) "org.datanucleus" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/home/hadoop/app/spark-2.4.2-bin-hadoop-2.6.0-cdh5.7.0/jars/datanucleus-core-3.2.10.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/home/hadoop/app/spark/jars/datanucleus-core-3.2.10.jar."

19/05/21 08:04:42 WARN DataNucleus.General: Plugin (Bundle) "org.datanucleus.api.jdo" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/home/hadoop/app/spark/jars/datanucleus-api-jdo-3.2.6.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/home/hadoop/app/spark-2.4.2-bin-hadoop-2.6.0-cdh5.7.0/jars/datanucleus-api-jdo-3.2.6.jar."

19/05/21 08:04:42 WARN DataNucleus.General: Plugin (Bundle) "org.datanucleus.store.rdbms" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/home/hadoop/app/spark-2.4.2-bin-hadoop-2.6.0-cdh5.7.0/jars/datanucleus-rdbms-3.2.9.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/home/hadoop/app/spark/jars/datanucleus-rdbms-3.2.9.jar."

19/05/21 08:04:45 ERROR metastore.ObjectStore: Version information found in metastore differs 1.1.0 from expected schema version 1.2.0. Schema verififcation is disabled hive.metastore.schema.verification so setting version.

19/05/21 08:04:46 WARN metastore.ObjectStore: Failed to get database global_temp, returning NoSuchObjectException

+------+--------+--------------+--------------------+

|stu_id|stu_name| stu_phone_num| stu_email|

+------+--------+--------------+--------------------+

| 1| Burke|1-300-746-8446|ullamcorper.velit...|

| 2| Kamal|1-668-571-5046|pede.Suspendisse@...|

| 3| Olga|1-956-311-1686|Aenean.eget.metus...|

| 4| Belle|1-246-894-6340|vitae.aliquet.nec...|

| 5| Trevor|1-300-527-4967|dapibus.id@acturp...|

| 6| Laurel|1-691-379-9921|adipiscing@consec...|

| 7| Sara|1-608-140-1995|Donec.nibh@enimEt...|

| 8| Kaseem|1-881-586-2689|cursus.et.magna@e...|

| 9| Lev|1-916-367-5608|Vivamus.nisi@ipsu...|

| 10| Maya|1-271-683-2698|accumsan.convalli...|

| 11| Emi|1-467-270-1337| est@nunc.com|

| 12| Caleb|1-683-212-0896|Suspendisse@Quisq...|

| 13|Florence|1-603-575-2444|sit.amet.dapibus@...|

| 14| Anika|1-856-828-7883|euismod@ligulaeli...|

| 15| Tarik|1-398-171-2268|turpis@felisorci.com|

| 16| Amena|1-878-250-3129|lorem.luctus.ut@s...|

| 17| Blossom|1-154-406-9596|Nunc.commodo.auct...|

| 18| Guy|1-869-521-3230|senectus.et.netus...|

| 19| Malachi|1-608-637-2772|Proin.mi.Aliquam@...|

| 20| Edward|1-711-710-6552|lectus@aliquetlib...|

+------+--------+--------------+--------------------+

only showing top 20 rows解决了

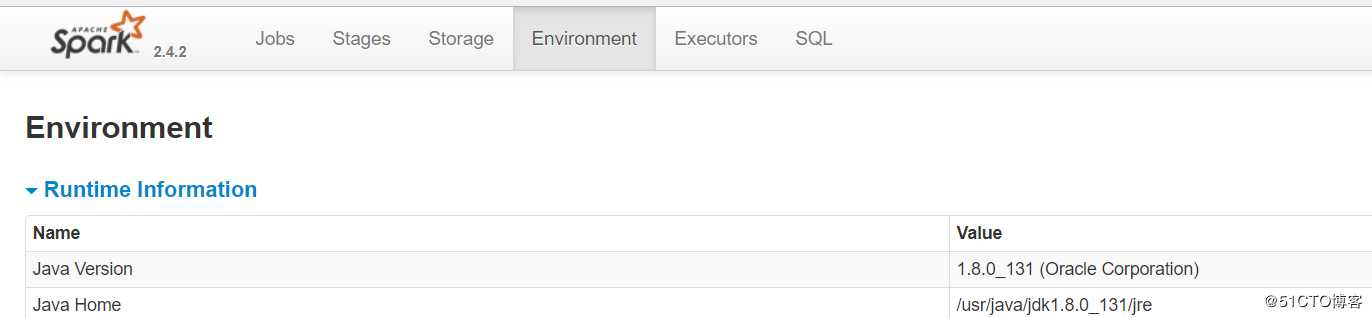

此时我们也可以在ui界面上看一下是不是将mysql驱动包添加到该任务中了

发现的确添加成功

启动spark-sql时,发生报错

spark-sql19/05/21 08:54:14 ERROR Datastore.Schema: Failed initialising database.

Unable to open a test connection to the given database.

JDBC url = jdbc:mysql://192.168.1.201:3306/hiveDB?createDatabaseIfNotExist=true, username = root. Terminating connection pool

(set lazyInit to true if you expect to start your database after your app).

Original Exception: ------

java.sql.SQLException: No suitable driver found for jdbc:mysql://192.168.1.201:3306/hiveDB?createDatabaseIfNotExist=true

Caused by: java.sql.SQLException: No suitable driver found for

jdbc:mysql://192.168.1.201:3306/hiveDB?createDatabaseIfNotExist=truedriver端没有mysql驱动,连接不上hive的元数据。

查看一下我的启动命令,发现已经使用--jars指定了mysql驱动包的路径

并且根据spark-sql --help的说明,可以将指定得jar包都添加到driver 和 executor的classpaths

--jars JARS Comma-separated list of jars to include on the driver and executor classpaths.但是driver的classpath依旧没有mysql的驱动,这是为什么呢? 暂时不得而知,,所以,我尝试了一下,启动时另外添加driver的classpath路径

spark-sql --jars softwares/mysql-connector-java-5.1.47.jar --driver-class-path softwares/mysql-connector-java-5.1.47.jar 19/05/21 09:19:30 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

19/05/21 09:19:31 INFO metastore.HiveMetaStore: 0: Opening raw store with implemenation class:org.apache.hadoop.hive.metastore.ObjectStore

19/05/21 09:19:31 INFO metastore.ObjectStore: ObjectStore, initialize called

19/05/21 09:19:31 INFO DataNucleus.Persistence: Property hive.metastore.integral.jdo.pushdown unknown - will be ignored

19/05/21 09:19:31 INFO DataNucleus.Persistence: Property datanucleus.cache.level2 unknown - will be ignored

19/05/21 09:19:33 INFO metastore.ObjectStore: Setting MetaStore object pin classes with hive.metastore.cache.pinobjtypes="Table,StorageDescriptor,SerDeInfo,Partition,Database,Type,FieldSchema,Order"

19/05/21 09:19:34 INFO DataNucleus.Datastore: The class "org.apache.hadoop.hive.metastore.model.MFieldSchema" is tagged as "embedded-only" so does not have its own datastore table.

19/05/21 09:19:34 INFO DataNucleus.Datastore: The class "org.apache.hadoop.hive.metastore.model.MOrder" is tagged as "embedded-only" so does not have its own datastore table.

19/05/21 09:19:34 INFO DataNucleus.Datastore: The class "org.apache.hadoop.hive.metastore.model.MFieldSchema" is tagged as "embedded-only" so does not have its own datastore table.

19/05/21 09:19:34 INFO DataNucleus.Datastore: The class "org.apache.hadoop.hive.metastore.model.MOrder" is tagged as "embedded-only" so does not have its own datastore table.

19/05/21 09:19:34 INFO DataNucleus.Query: Reading in results for query "org.datanucleus.store.rdbms.query.SQLQuery@0" since the connection used is closing

19/05/21 09:19:34 INFO metastore.MetaStoreDirectSql: Using direct SQL, underlying DB is MYSQL

19/05/21 09:19:34 INFO metastore.ObjectStore: Initialized ObjectStore

19/05/21 09:19:35 INFO metastore.HiveMetaStore: Added admin role in metastore

19/05/21 09:19:35 INFO metastore.HiveMetaStore: Added public role in metastore

19/05/21 09:19:35 INFO metastore.HiveMetaStore: No user is added in admin role, since config is empty

19/05/21 09:19:35 INFO metastore.HiveMetaStore: 0: get_all_databases

19/05/21 09:19:35 INFO HiveMetaStore.audit: ugi=hadoop ip=unknown-ip-addr cmd=get_all_databases

19/05/21 09:19:35 INFO metastore.HiveMetaStore: 0: get_functions: db=default pat=*

19/05/21 09:19:35 INFO HiveMetaStore.audit: ugi=hadoop ip=unknown-ip-addr cmd=get_functions: db=default pat=*

19/05/21 09:19:35 INFO DataNucleus.Datastore: The class "org.apache.hadoop.hive.metastore.model.MResourceUri" is tagged as "embedded-only" so does not have its own datastore table.

19/05/21 09:19:35 INFO metastore.HiveMetaStore: 0: get_functions: db=g6_hadoop pat=*

19/05/21 09:19:35 INFO HiveMetaStore.audit: ugi=hadoop ip=unknown-ip-addr cmd=get_functions: db=g6_hadoop pat=*

19/05/21 09:19:35 INFO metastore.HiveMetaStore: 0: get_functions: db=student pat=*

19/05/21 09:19:35 INFO HiveMetaStore.audit: ugi=hadoop ip=unknown-ip-addr cmd=get_functions: db=student pat=*

19/05/21 09:19:36 INFO session.SessionState: Created local directory: /tmp/b5ddbc6f-e572-4331-8a56-815dca0eaf1f_resources

19/05/21 09:19:36 INFO session.SessionState: Created HDFS directory: /tmp/hive/hadoop/b5ddbc6f-e572-4331-8a56-815dca0eaf1f

19/05/21 09:19:36 INFO session.SessionState: Created local directory: /tmp/hadoop/b5ddbc6f-e572-4331-8a56-815dca0eaf1f

19/05/21 09:19:36 INFO session.SessionState: Created HDFS directory: /tmp/hive/hadoop/b5ddbc6f-e572-4331-8a56-815dca0eaf1f/_tmp_space.db

19/05/21 09:19:36 INFO spark.SparkContext: Running Spark version 2.4.2

19/05/21 09:19:36 INFO spark.SparkContext: Submitted application: SparkSQL::192.168.1.203

19/05/21 09:19:36 INFO spark.SecurityManager: Changing view acls to: hadoop

19/05/21 09:19:36 INFO spark.SecurityManager: Changing modify acls to: hadoop

19/05/21 09:19:36 INFO spark.SecurityManager: Changing view acls groups to:

19/05/21 09:19:36 INFO spark.SecurityManager: Changing modify acls groups to:

19/05/21 09:19:36 INFO spark.SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(hadoop); groups with view permissions: Set(); users with modify permissions: Set(hadoop); groups with modify permissions: Set()

19/05/21 09:19:36 INFO util.Utils: Successfully started service ‘sparkDriver‘ on port 51505.

19/05/21 09:19:36 INFO spark.SparkEnv: Registering MapOutputTracker

19/05/21 09:19:36 INFO spark.SparkEnv: Registering BlockManagerMaster

19/05/21 09:19:36 INFO storage.BlockManagerMasterEndpoint: Using org.apache.spark.storage.DefaultTopologyMapper for getting topology information

19/05/21 09:19:36 INFO storage.BlockManagerMasterEndpoint: BlockManagerMasterEndpoint up

19/05/21 09:19:36 INFO storage.DiskBlockManager: Created local directory at /tmp/blockmgr-b61694d9-76da-4d85-8797-e9e1403b4596

19/05/21 09:19:36 INFO memory.MemoryStore: MemoryStore started with capacity 366.3 MB

19/05/21 09:19:36 INFO spark.SparkEnv: Registering OutputCommitCoordinator

19/05/21 09:19:37 INFO util.log: Logging initialized @8235ms

19/05/21 09:19:37 INFO server.Server: jetty-9.3.z-SNAPSHOT, build timestamp: unknown, git hash: unknown

19/05/21 09:19:37 INFO server.Server: Started @8471ms

19/05/21 09:19:37 INFO server.AbstractConnector: Started ServerConnector@55f0e536{HTTP/1.1,[http/1.1]}{0.0.0.0:4040}

19/05/21 09:19:37 INFO util.Utils: Successfully started service ‘SparkUI‘ on port 4040.

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@4f59a516{/jobs,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@37a0ec3c{/jobs/json,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@422ad5e2{/jobs/job,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@6048e26a{/jobs/job/json,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@50b93353{/stages,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@44286963{/stages/json,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@c8f97a7{/stages/stage,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@35adf623{/stages/stage/json,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@456f7d9e{/stages/pool,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@75d366c2{/stages/pool/json,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@8f40022{/storage,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@5232e3f1{/storage/json,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@6c8fe7a4{/storage/rdd,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@2047981{/storage/rdd/json,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@2ad99cf3{/environment,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@6f31df32{/environment/json,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@7c90b7b7{/executors,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@5d7911d5{/executors/json,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@3ba1308d{/executors/threadDump,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@5a08b084{/executors/threadDump/json,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@235c997d{/static,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@6ad179b4{/,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@2efd2f21{/api,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@17d2b075{/jobs/job/kill,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@6661d8c0{/stages/stage/kill,null,AVAILABLE,@Spark}

19/05/21 09:19:37 INFO ui.SparkUI: Bound SparkUI to 0.0.0.0, and started at http://hadoop003:4040

19/05/21 09:19:37 INFO spark.SparkContext: Added JAR file:///home/hadoop/softwares/mysql-connector-java-5.1.47.jar at spark://hadoop003:51505/jars/mysql-connector-java-5.1.47.jar with timestamp 1558444777501

19/05/21 09:19:37 INFO executor.Executor: Starting executor ID driver on host localhost

19/05/21 09:19:37 INFO util.Utils: Successfully started service ‘org.apache.spark.network.netty.NettyBlockTransferService‘ on port 43564.

19/05/21 09:19:37 INFO netty.NettyBlockTransferService: Server created on hadoop003:43564

19/05/21 09:19:37 INFO storage.BlockManager: Using org.apache.spark.storage.RandomBlockReplicationPolicy for block replication policy

19/05/21 09:19:37 INFO storage.BlockManagerMaster: Registering BlockManager BlockManagerId(driver, hadoop003, 43564, None)

19/05/21 09:19:37 INFO storage.BlockManagerMasterEndpoint: Registering block manager hadoop003:43564 with 366.3 MB RAM, BlockManagerId(driver, hadoop003, 43564, None)

19/05/21 09:19:37 INFO storage.BlockManagerMaster: Registered BlockManager BlockManagerId(driver, hadoop003, 43564, None)

19/05/21 09:19:37 INFO storage.BlockManager: Initialized BlockManager: BlockManagerId(driver, hadoop003, 43564, None)

19/05/21 09:19:37 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@33d7765a{/metrics/json,null,AVAILABLE,@Spark}

19/05/21 09:19:38 INFO scheduler.EventLoggingListener: Logging events to hdfs://ruozeclusterg6:8020/g6_direcory/local-1558444777553

19/05/21 09:19:38 INFO internal.SharedState: loading hive config file: file:/home/hadoop/app/spark-2.4.2-bin-hadoop-2.6.0-cdh5.7.0/conf/hive-site.xml

19/05/21 09:19:38 INFO internal.SharedState: Setting hive.metastore.warehouse.dir (‘null‘) to the value of spark.sql.warehouse.dir (‘file:/home/hadoop/spark-warehouse‘).

19/05/21 09:19:38 INFO internal.SharedState: Warehouse path is ‘file:/home/hadoop/spark-warehouse‘.

19/05/21 09:19:38 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@16e1219f{/SQL,null,AVAILABLE,@Spark}

19/05/21 09:19:38 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@13f40d71{/SQL/json,null,AVAILABLE,@Spark}

19/05/21 09:19:38 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@34f7b44f{/SQL/execution,null,AVAILABLE,@Spark}

19/05/21 09:19:38 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@5403907{/SQL/execution/json,null,AVAILABLE,@Spark}

19/05/21 09:19:38 INFO handler.ContextHandler: Started o.s.j.s.ServletContextHandler@7911cc15{/static/sql,null,AVAILABLE,@Spark}

19/05/21 09:19:38 INFO hive.HiveUtils: Initializing HiveMetastoreConnection version 1.2.1 using Spark classes.

19/05/21 09:19:38 INFO client.HiveClientImpl: Warehouse location for Hive client (version 1.2.2) is file:/home/hadoop/spark-warehouse

19/05/21 09:19:38 INFO hive.metastore: Mestastore configuration hive.metastore.warehouse.dir changed from /user/hive/warehouse to file:/home/hadoop/spark-warehouse

19/05/21 09:19:38 INFO metastore.HiveMetaStore: 0: Shutting down the object store...

19/05/21 09:19:38 INFO HiveMetaStore.audit: ugi=hadoop ip=unknown-ip-addr cmd=Shutting down the object store...

19/05/21 09:19:38 INFO metastore.HiveMetaStore: 0: Metastore shutdown complete.

19/05/21 09:19:38 INFO HiveMetaStore.audit: ugi=hadoop ip=unknown-ip-addr cmd=Metastore shutdown complete.

19/05/21 09:19:38 INFO metastore.HiveMetaStore: 0: get_database: default

19/05/21 09:19:38 INFO HiveMetaStore.audit: ugi=hadoop ip=unknown-ip-addr cmd=get_database: default

19/05/21 09:19:38 INFO metastore.HiveMetaStore: 0: Opening raw store with implemenation class:org.apache.hadoop.hive.metastore.ObjectStore

19/05/21 09:19:38 INFO metastore.ObjectStore: ObjectStore, initialize called

19/05/21 09:19:38 INFO DataNucleus.Query: Reading in results for query "org.datanucleus.store.rdbms.query.SQLQuery@0" since the connection used is closing

19/05/21 09:19:38 INFO metastore.MetaStoreDirectSql: Using direct SQL, underlying DB is MYSQL

19/05/21 09:19:38 INFO metastore.ObjectStore: Initialized ObjectStore

19/05/21 09:19:39 INFO state.StateStoreCoordinatorRef: Registered StateStoreCoordinator endpoint

Spark master: local[*], Application Id: local-1558444777553

19/05/21 09:19:40 INFO thriftserver.SparkSQLCLIDriver: Spark master: local[*], Application Id: local-1558444777553

spark-sql (default)>启动成功~,看来官方解释也不一定就是准确的呀,还是要实测!小坑

标签:park rdd handler handle out cti oss dde rmi

原文地址:https://blog.51cto.com/14309075/2398215