Tags: rda preface opengl es array tcap cfs log obtain length

VideoToolbox is Apple's API for hardware-accelerated H.264 encoding and decoding, available since iOS 8; H.265 (HEVC) support was added in iOS 11.

If you are not yet familiar with H.264, be sure to read an introduction to H.264 first.

We will build a simple demo that captures video from the camera, encodes it into a raw H.264 stream, and saves the stream in the app's sandbox.

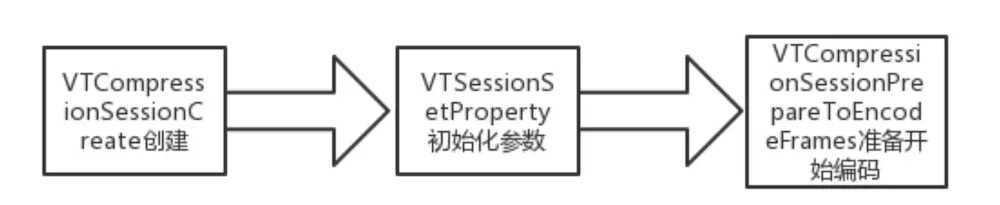

1. Create and initialize the VideoToolbox session

The core code is as follows:

```objc
- (void)initVideoToolBox {
    dispatch_sync(encodeQueue, ^{
        frameNO = 0;
        // The capture connection is portrait, so frames arrive 480 wide x 640 tall
        int width = 480, height = 640;
        OSStatus status = VTCompressionSessionCreate(NULL, width, height, kCMVideoCodecType_H264, NULL, NULL, NULL, didCompressH264, (__bridge void *)(self), &encodingSession);
        NSLog(@"H264: VTCompressionSessionCreate %d", (int)status);
        if (status != noErr) {
            NSLog(@"H264: Unable to create a H264 session");
            return;
        }

        // Real-time encoding output (avoids latency)
        VTSessionSetProperty(encodingSession, kVTCompressionPropertyKey_RealTime, kCFBooleanTrue);
        VTSessionSetProperty(encodingSession, kVTCompressionPropertyKey_ProfileLevel, kVTProfileLevel_H264_Baseline_AutoLevel);

        // Maximum key frame interval (GOP size), in frames
        int frameInterval = 24;
        CFNumberRef frameIntervalRef = CFNumberCreate(kCFAllocatorDefault, kCFNumberIntType, &frameInterval);
        VTSessionSetProperty(encodingSession, kVTCompressionPropertyKey_MaxKeyFrameInterval, frameIntervalRef);
        CFRelease(frameIntervalRef);

        // Expected frame rate (a hint for the encoder)
        int fps = 24;
        CFNumberRef fpsRef = CFNumberCreate(kCFAllocatorDefault, kCFNumberIntType, &fps);
        VTSessionSetProperty(encodingSession, kVTCompressionPropertyKey_ExpectedFrameRate, fpsRef);
        CFRelease(fpsRef);

        // Average bit rate, in bits per second
        int bitRate = width * height * 3 * 4 * 8;
        CFNumberRef bitRateRef = CFNumberCreate(kCFAllocatorDefault, kCFNumberSInt32Type, &bitRate);
        VTSessionSetProperty(encodingSession, kVTCompressionPropertyKey_AverageBitRate, bitRateRef);
        CFRelease(bitRateRef);

        // Hard data rate limit: a CFArray of [bytes, seconds] pairs, not a single number.
        // Here: at most width * height * 3 * 4 bytes in any 1-second window.
        int bytesLimit = width * height * 3 * 4;
        int limitSeconds = 1;
        CFNumberRef bytesRef = CFNumberCreate(kCFAllocatorDefault, kCFNumberSInt32Type, &bytesLimit);
        CFNumberRef secondsRef = CFNumberCreate(kCFAllocatorDefault, kCFNumberSInt32Type, &limitSeconds);
        const void *limitValues[] = { bytesRef, secondsRef };
        CFArrayRef dataRateLimits = CFArrayCreate(kCFAllocatorDefault, limitValues, 2, &kCFTypeArrayCallBacks);
        VTSessionSetProperty(encodingSession, kVTCompressionPropertyKey_DataRateLimits, dataRateLimits);
        CFRelease(bytesRef);
        CFRelease(secondsRef);
        CFRelease(dataRateLimits);

        // Optional: let the encoder allocate its resources before the first frame arrives
        VTCompressionSessionPrepareToEncodeFrames(encodingSession);
    });
}
```

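During initialization we set the codec type (kCMVideoCodecType_H264), the resolution (480 × 640, i.e. 640 × 480 rotated to portrait), the frame rate, the GOP size, and the bit rate.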

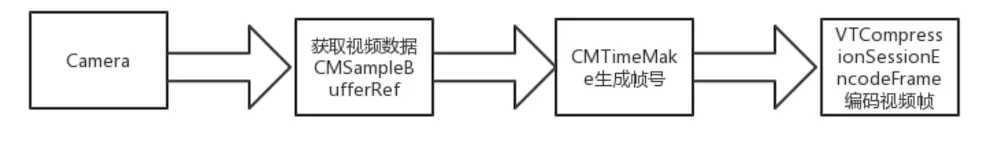
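The two rate-control settings in initVideoToolBox use different units: kVTCompressionPropertyKey_AverageBitRate is in bits per second, while kVTCompressionPropertyKey_DataRateLimits expects a CFArray of alternating byte-count / duration-in-seconds pairs. The plain-C sketch below only reproduces the arithmetic for the demo's 480 × 640 frames; RateSettings and demo_rate_settings are hypothetical names, not VideoToolbox API.

```c
#include <stdint.h>

/* Hypothetical helper: the rate-control numbers used by initVideoToolBox. */
typedef struct {
    int64_t average_bits_per_second; /* value for kVTCompressionPropertyKey_AverageBitRate */
    int64_t limit_bytes;             /* first element of a DataRateLimits pair */
    int64_t limit_seconds;           /* second element: window the byte limit applies to */
} RateSettings;

static RateSettings demo_rate_settings(int width, int height) {
    RateSettings s;
    /* Same formula as the demo: width * height * 3 * 4 bytes per second ... */
    int64_t bytes_per_second = (int64_t)width * height * 3 * 4;
    /* ... which is 8 times as many bits per second. */
    s.average_bits_per_second = bytes_per_second * 8;
    s.limit_bytes = bytes_per_second;
    s.limit_seconds = 1;
    return s;
}
```

For 480 × 640 this comes to 29,491,200 bits/s (about 29.5 Mbps) on average and a cap of 3,686,400 bytes per 1-second window, which is the same amount expressed in bytes. That is a generous budget for this resolution, so treat the formula as a starting point rather than a recommendation.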

2. Capture video from the camera and hand it to VideoToolbox for H.264 encoding

The core code that sets up video capture:

```objc
// Set up the camera capture pipeline
- (void)initCapture {
    self.captureSession = [[AVCaptureSession alloc] init];
    // Capture at 640 x 480
    self.captureSession.sessionPreset = AVCaptureSessionPreset640x480;

    AVCaptureDevice *inputCamera = [self cameraWithPostion:AVCaptureDevicePositionBack];
    self.captureDeviceInput = [[AVCaptureDeviceInput alloc] initWithDevice:inputCamera error:nil];
    if ([self.captureSession canAddInput:self.captureDeviceInput]) {
        [self.captureSession addInput:self.captureDeviceInput];
    }

    self.captureDeviceOutput = [[AVCaptureVideoDataOutput alloc] init];
    [self.captureDeviceOutput setAlwaysDiscardsLateVideoFrames:NO];
    // Output bi-planar YUV 4:2:0 (NV12), full range
    [self.captureDeviceOutput setVideoSettings:[NSDictionary dictionaryWithObject:[NSNumber numberWithInt:kCVPixelFormatType_420YpCbCr8BiPlanarFullRange] forKey:(id)kCVPixelBufferPixelFormatTypeKey]];
    [self.captureDeviceOutput setSampleBufferDelegate:self queue:captureQueue];
    if ([self.captureSession canAddOutput:self.captureDeviceOutput]) {
        [self.captureSession addOutput:self.captureDeviceOutput];
    }

    // Get the video connection and rotate frames to portrait (480 x 640)
    AVCaptureConnection *connection = [self.captureDeviceOutput connectionWithMediaType:AVMediaTypeVideo];
    [connection setVideoOrientation:AVCaptureVideoOrientationPortrait];
}
```

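Note that the capture resolution must match the encoder: the 640 × 480 preset combined with the portrait connection yields 480 × 640 frames, the same width and height passed to VTCompressionSessionCreate. The output pixel format is kCVPixelFormatType_420YpCbCr8BiPlanarFullRange, which is bi-planar YUV 4:2:0 (NV12), not planar YUV420p.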
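To make the pixel format concrete: NV12 stores a full-resolution Y plane followed by one plane of interleaved Cb/Cr samples at half width and half height. The sketch below computes the plane sizes of a tightly packed frame; NV12Layout and nv12_layout are hypothetical names, and real CVPixelBuffers may pad rows, so production code should query CVPixelBufferGetBytesPerRowOfPlane and CVPixelBufferGetHeightOfPlane instead.

```c
#include <stddef.h>

/* Plane sizes of a tightly packed NV12 (420YpCbCr8BiPlanar) frame, no row padding. */
typedef struct {
    size_t luma_bytes;   /* plane 0: one Y byte per pixel */
    size_t chroma_bytes; /* plane 1: interleaved Cb,Cr at half width and half height */
    size_t total_bytes;
} NV12Layout;

static NV12Layout nv12_layout(size_t width, size_t height) {
    NV12Layout l;
    size_t chroma_w = (width + 1) / 2;  /* Cb/Cr pairs per row */
    size_t chroma_h = (height + 1) / 2; /* chroma rows */
    l.luma_bytes = width * height;
    l.chroma_bytes = chroma_w * 2 * chroma_h; /* 2 bytes (Cb, Cr) per pair */
    l.total_bytes = l.luma_bytes + l.chroma_bytes;
    return l;
}
```

For the demo's 480 × 640 frames this gives 307,200 luma bytes and 153,600 chroma bytes, 460,800 bytes per frame, i.e. 1.5 bytes per pixel.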

The camera data callback:

```objc
- (void)captureOutput:(AVCaptureOutput *)output didOutputSampleBuffer:(CMSampleBufferRef)sampleBuffer fromConnection:(AVCaptureConnection *)connection {
    dispatch_sync(encodeQueue, ^{
        [self encode:sampleBuffer];
    });
}

// Encode one sample buffer
- (void)encode:(CMSampleBufferRef)sampleBuffer {
    // The session is torn down after an encode error (see below); skip frames from then on,
    // otherwise CFRelease(NULL) would crash
    if (encodingSession == NULL) {
        return;
    }
    CVImageBufferRef imageBuffer = CMSampleBufferGetImageBuffer(sampleBuffer);
    // Frame timestamp: reuse the capture time so timestamps increase from frame to frame
    // and their spacing reflects the real frame rate
    CMTime presentationTimeStamp = CMSampleBufferGetPresentationTimeStamp(sampleBuffer);
    VTEncodeInfoFlags flags;
    OSStatus statusCode = VTCompressionSessionEncodeFrame(encodingSession,
                                                          imageBuffer,
                                                          presentationTimeStamp,
                                                          kCMTimeInvalid, // duration unknown
                                                          NULL, NULL, &flags);
    if (statusCode != noErr) {
        NSLog(@"H264: VTCompressionSessionEncodeFrame failed with %d", (int)statusCode);
        VTCompressionSessionInvalidate(encodingSession);
        CFRelease(encodingSession);
        encodingSession = NULL;
        return;
    }
    NSLog(@"H264: VTCompressionSessionEncodeFrame Success");
}
```

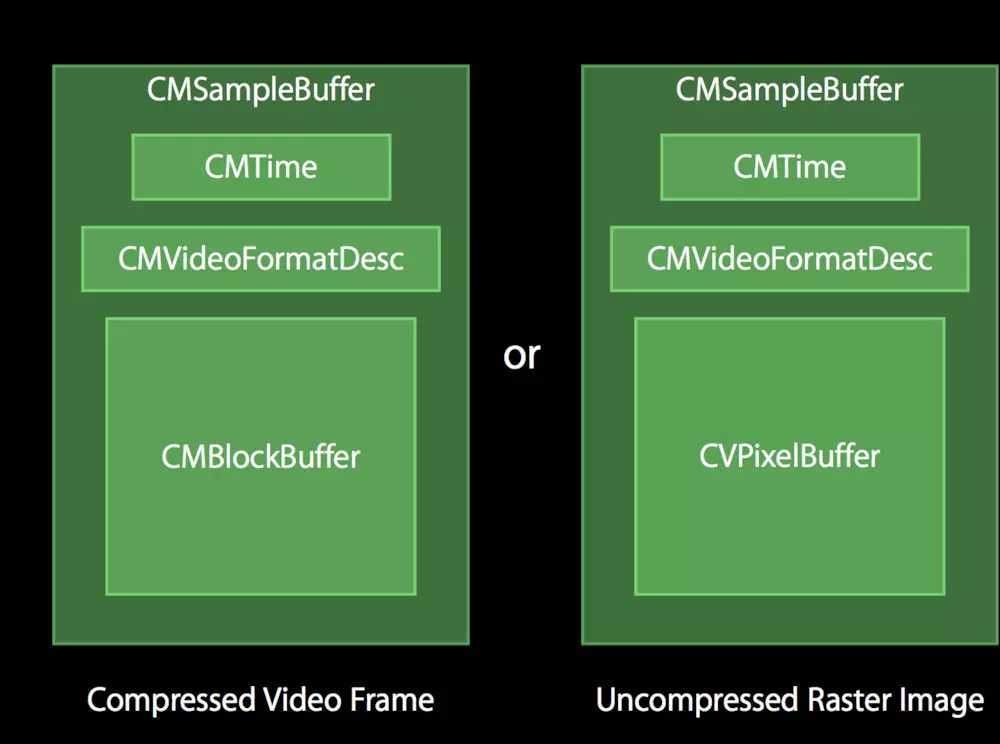

3. Data structures used by the framework

CMSampleBufferRef

Holds one or more samples of compressed or uncompressed media data.

The figure below shows the two kinds of CMSampleBuffer.

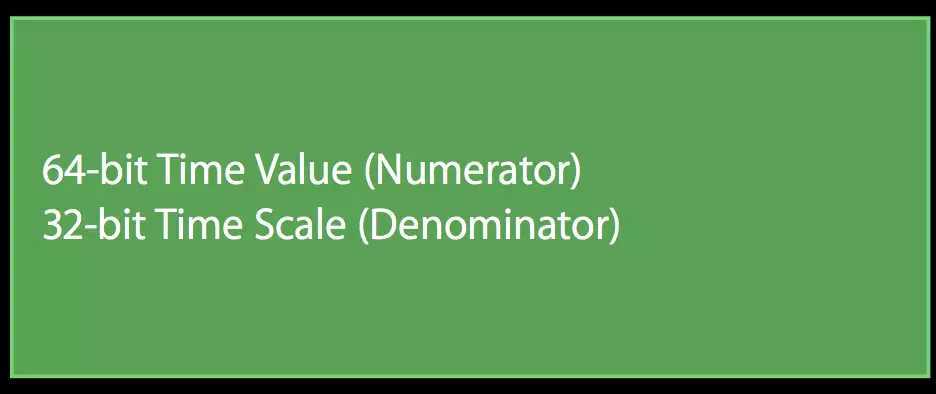

CMTime

The media time format: a 64-bit value divided by a 32-bit timescale (ticks per second).
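Because a CMTime is a rational number (seconds = value / timescale), the timescale decides how far apart consecutive frames are. The plain-C model below illustrates this; RationalTime, make_time and time_seconds are hypothetical stand-ins, not the CoreMedia type (which also carries flags and an epoch) or its functions.

```c
#include <stdint.h>

/* Hypothetical stand-in for CMTime's value/timescale pair. */
typedef struct {
    int64_t value;     /* like CMTimeValue (64-bit) */
    int32_t timescale; /* like CMTimeScale (32-bit): ticks per second */
} RationalTime;

static RationalTime make_time(int64_t value, int32_t timescale) {
    RationalTime t = { value, timescale };
    return t;
}

/* Same idea as CMTimeGetSeconds, for this model. */
static double time_seconds(RationalTime t) {
    return (double)t.value / (double)t.timescale;
}
```

For example, a timestamp built with CMTimeMake(n, 1000) advances by only 1 ms per frame, so consecutive frames look like a 1000 fps stream, whereas CMTimeMake(n, 24) spaces them 1/24 s apart, matching the 24 fps configured in initVideoToolBox.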

CMBlockBuffer

Here it can be thought of as the raw data: for an encoded frame it holds the compressed bytes.

CVPixelBuffer

Contains uncompressed pixel data, together with the image width, height, and so on.

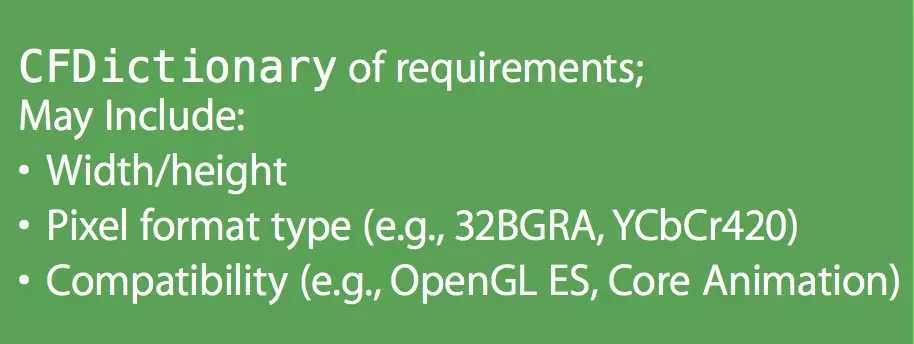

pixelBufferAttributes

A CFDictionary describing width and height, pixel format (RGBA, YUV), and intended usage (OpenGL ES, Core Animation).

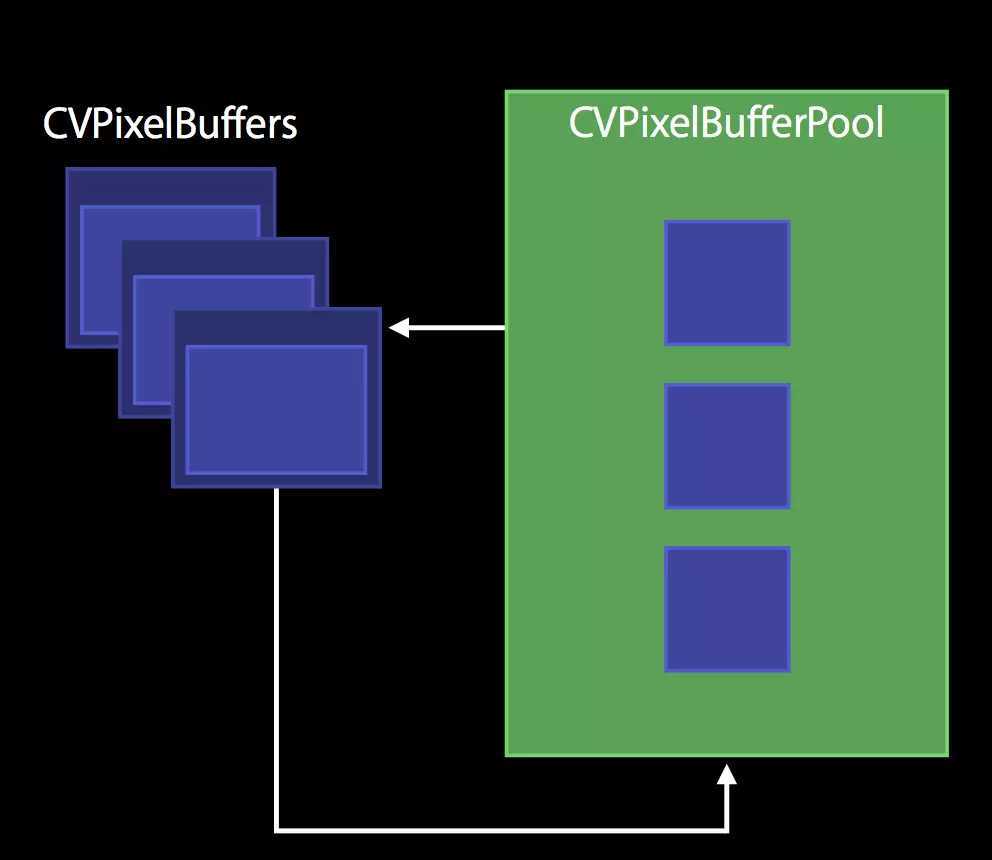

CVPixelBufferPool

A pool of CVPixelBuffers, used because creating and destroying CVPixelBuffers is expensive.

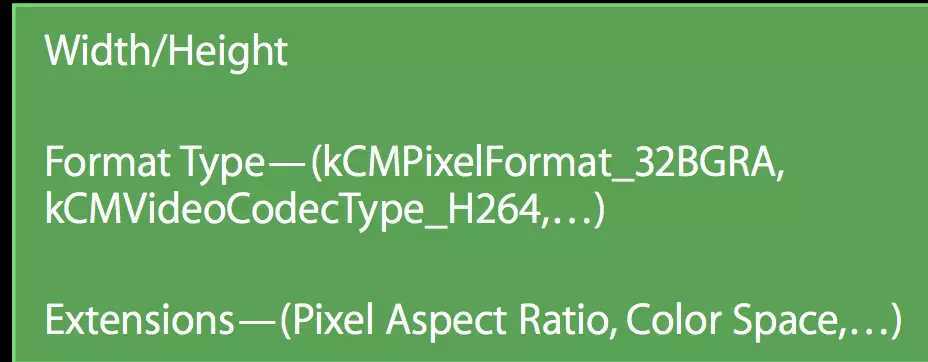
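The benefit of pooling can be illustrated with a tiny free-list pool in plain C. This is a conceptual sketch only (BufferPool and its functions are hypothetical); it is not how CVPixelBufferPool is implemented, and real code would obtain buffers with CVPixelBufferPoolCreatePixelBuffer.

```c
#include <stdlib.h>

/* Conceptual sketch: a fixed-capacity free list of equally sized buffers. */
#define POOL_CAPACITY 4

typedef struct {
    size_t buffer_size;
    void *free_list[POOL_CAPACITY];
    int free_count;
    int allocations; /* how many times malloc was actually called */
} BufferPool;

static void pool_init(BufferPool *p, size_t buffer_size) {
    p->buffer_size = buffer_size;
    p->free_count = 0;
    p->allocations = 0;
}

static void *pool_acquire(BufferPool *p) {
    if (p->free_count > 0) {
        return p->free_list[--p->free_count]; /* reuse a released buffer */
    }
    p->allocations++;
    return malloc(p->buffer_size);
}

static void pool_release(BufferPool *p, void *buf) {
    if (p->free_count < POOL_CAPACITY) {
        p->free_list[p->free_count++] = buf; /* keep it for the next acquire */
    } else {
        free(buf); /* pool is full */
    }
}

static void pool_destroy(BufferPool *p) {
    while (p->free_count > 0) {
        free(p->free_list[--p->free_count]);
    }
}
```

Acquiring and releasing a frame-sized buffer 100 times in a row calls malloc only once, because every later acquire reuses the buffer that was just released.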

CMVideoFormatDescription

The video format: width and height, color space, codec information, and so on. For H.264 it also contains the SPS and PPS.

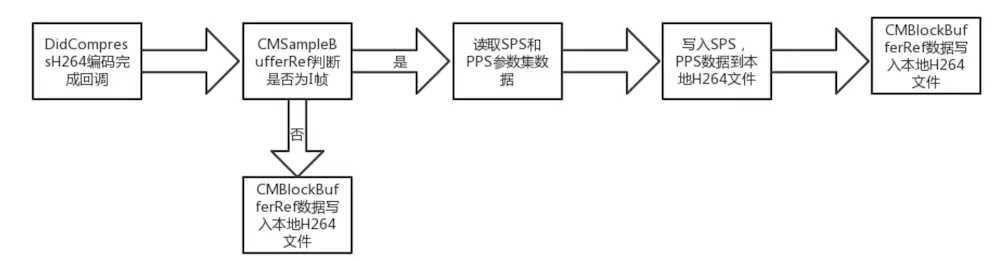

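When an encoded frame comes back, we first check whether it is a key frame (I frame); if it is, we need to read the SPS and PPS parameter sets. Why?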

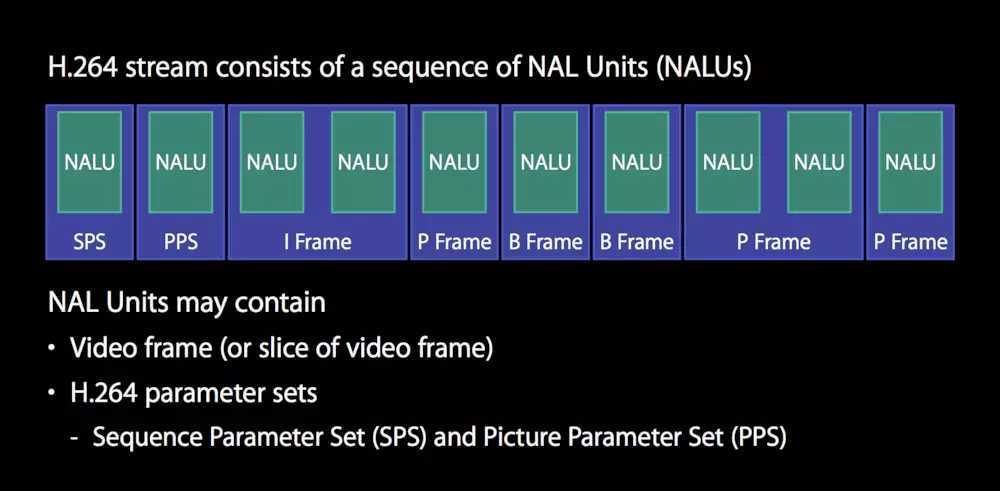

Let's first look at how a raw H.264 stream (Elementary Stream) is made up of NAL units (NALUs).

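In a raw H.264 stream there is no separate packet or frame for the SPS and PPS; they are stored as NAL units in front of the I frame, typically in this form: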

00 00 00 01 SPS 00 00 00 01 PPS 00 00 00 01 I-frame

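The 00 00 00 01 bytes in front of each unit are called the start code; they are not part of the SPS or PPS content. (The standard also allows a 3-byte start code, 00 00 01.)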
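Parsing such a stream only requires finding the start codes. The sketch below (plain C; start_code_len and split_annexb are hypothetical helper names) splits an Annex B buffer into NAL units and accepts both the 4-byte and the 3-byte start code.

```c
#include <stddef.h>
#include <stdint.h>

/* Length of a start code (3 or 4 bytes) beginning at buf[i], or 0 if there is none. */
static size_t start_code_len(const uint8_t *buf, size_t len, size_t i) {
    if (i + 3 <= len && buf[i] == 0 && buf[i + 1] == 0 && buf[i + 2] == 1) return 3;
    if (i + 4 <= len && buf[i] == 0 && buf[i + 1] == 0 && buf[i + 2] == 0 && buf[i + 3] == 1) return 4;
    return 0;
}

/* Splits an Annex B stream into NAL units. Stores up to max_units (offset, size)
   pairs, sizes excluding the start code, and returns the number of units found. */
static size_t split_annexb(const uint8_t *buf, size_t len,
                           size_t *offsets, size_t *sizes, size_t max_units) {
    size_t count = 0, unit_start = 0, i = 0;
    int in_unit = 0;
    while (i < len) {
        size_t sc = start_code_len(buf, len, i);
        if (sc == 0) {
            i++;
            continue;
        }
        if (in_unit) { /* the previous unit ends right before this start code */
            if (count < max_units) {
                offsets[count] = unit_start;
                sizes[count] = i - unit_start;
            }
            count++;
        }
        i += sc;
        unit_start = i;
        in_unit = 1;
    }
    if (in_unit) { /* the last unit runs to the end of the buffer */
        if (count < max_units) {
            offsets[count] = unit_start;
            sizes[count] = len - unit_start;
        }
        count++;
    }
    return count;
}
```

For the bytes 00 00 00 01 67 42 00 1E 00 00 00 01 68 CE 3C 80 00 00 01 65 88 84 it reports three NAL units of 4, 4 and 3 bytes, the last one introduced by a 3-byte start code.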

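SPS (Sequence Parameter Set) and PPS (Picture Parameter Set): together they contain the parameters needed to initialize an H.264 decoder, including the profile and level used for encoding, the picture width and height, the deblocking filter settings, and so on.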
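The first byte of every NAL unit is a header whose low 5 bits give nal_unit_type: 7 for an SPS, 8 for a PPS, 5 for an IDR slice and 1 for a non-IDR slice. In an SPS, the three bytes after that header are profile_idc, a byte of constraint flags, and level_idc. A minimal plain-C sketch that reads those fields (nal_unit_type and sps_profile_level are hypothetical helper names):

```c
#include <stddef.h>
#include <stdint.h>

/* nal_unit_type: low 5 bits of the first byte after the start code. */
static int nal_unit_type(const uint8_t *nal) {
    return nal[0] & 0x1F;
}

/* For an SPS (type 7): byte 1 is profile_idc, byte 2 the constraint flags,
   byte 3 level_idc (level 3.0 is stored as 30). Returns 1 on success, 0 otherwise. */
static int sps_profile_level(const uint8_t *sps, size_t len, int *profile_idc, int *level_idc) {
    if (len < 4 || nal_unit_type(sps) != 7) return 0;
    *profile_idc = sps[1];
    *level_idc = sps[3];
    return 1;
}
```

For an SPS beginning 67 42 00 1E this yields profile_idc 66, the Baseline profile requested in initVideoToolBox, and level_idc 30 (level 3.0).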

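As described above, VideoToolbox does not put the SPS and PPS into the encoded frame data; they are stored in the CMFormatDescriptionRef. So we first extract them from the CMFormatDescriptionRef and write them into the raw H.264 stream.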

With that, the process of writing the H.264 file is easy to follow.

The code:

```objc
// Callback invoked when a frame has been encoded
void didCompressH264(void *outputCallbackRefCon, void *sourceFrameRefCon, OSStatus status, VTEncodeInfoFlags infoFlags, CMSampleBufferRef sampleBuffer) {
    NSLog(@"didCompressH264 called with status %d infoFlags %d", (int)status, (int)infoFlags);
    if (status != noErr) {
        return;
    }
    if (!CMSampleBufferDataIsReady(sampleBuffer)) {
        NSLog(@"didCompressH264 data is not ready");
        return;
    }
    ViewController *encoder = (__bridge ViewController *)outputCallbackRefCon;

    // Check whether the current frame is a key frame (it has no kCMSampleAttachmentKey_NotSync attachment)
    CFArrayRef attachments = CMSampleBufferGetSampleAttachmentsArray(sampleBuffer, true);
    CFDictionaryRef attachment = (CFDictionaryRef)CFArrayGetValueAtIndex(attachments, 0);
    bool keyframe = !CFDictionaryContainsKey(attachment, kCMSampleAttachmentKey_NotSync);

    // For key frames, fetch the SPS (index 0) and PPS (index 1) from the format description
    if (keyframe) {
        CMFormatDescriptionRef format = CMSampleBufferGetFormatDescription(sampleBuffer);
        size_t sparameterSetSize, sparameterSetCount;
        const uint8_t *sparameterSet;
        OSStatus spsStatus = CMVideoFormatDescriptionGetH264ParameterSetAtIndex(format, 0, &sparameterSet, &sparameterSetSize, &sparameterSetCount, NULL);
        if (spsStatus == noErr) {
            // Got the SPS, now get the PPS
            size_t pparameterSetSize, pparameterSetCount;
            const uint8_t *pparameterSet;
            OSStatus ppsStatus = CMVideoFormatDescriptionGetH264ParameterSetAtIndex(format, 1, &pparameterSet, &pparameterSetSize, &pparameterSetCount, NULL);
            if (ppsStatus == noErr) {
                // Wrap the SPS and PPS bytes in NSData
                NSData *sps = [NSData dataWithBytes:sparameterSet length:sparameterSetSize];
                NSData *pps = [NSData dataWithBytes:pparameterSet length:pparameterSetSize];
                if (encoder) {
                    [encoder gotSpsPps:sps pps:pps];
                }
            }
        }
    }

    CMBlockBufferRef dataBuffer = CMSampleBufferGetDataBuffer(sampleBuffer);
    size_t length, totalLength;
    char *dataPointer;
    // Get a pointer to the encoded data and its total length. The data is a sequence of NALUs,
    // each preceded by a 4-byte length field. This code assumes the block buffer is
    // contiguous (length == totalLength).
    OSStatus statusCodeRet = CMBlockBufferGetDataPointer(dataBuffer, 0, &length, &totalLength, &dataPointer);
    if (statusCodeRet == noErr) {
        size_t bufferOffset = 0;
        // The first 4 bytes of each NALU are not a 00 00 00 01 start code but the NALU length, big-endian
        static const int AVCCHeaderLength = 4;
        // Walk through the NALUs
        while (bufferOffset + AVCCHeaderLength <= totalLength) {
            uint32_t NALUnitLength = 0;
            // Read the NALU length
            memcpy(&NALUnitLength, dataPointer + bufferOffset, AVCCHeaderLength);
            // Convert from big-endian to host byte order
            NALUnitLength = CFSwapInt32BigToHost(NALUnitLength);
            // Stop on a length that would run past the end of the buffer
            if (NALUnitLength > totalLength - bufferOffset - AVCCHeaderLength) {
                break;
            }
            NSData *data = [[NSData alloc] initWithBytes:(dataPointer + bufferOffset + AVCCHeaderLength) length:NALUnitLength];
            [encoder gotEncodedData:data];
            // Move to the next NALU
            bufferOffset += AVCCHeaderLength + NALUnitLength;
        }
    }
}
```
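The NALU loop above converts the AVCC layout that VideoToolbox produces (each NAL unit preceded by a 4-byte big-endian length) into the Annex B layout of a raw .h264 file (each NAL unit preceded by a start code). The same conversion on a plain byte array, as a C sketch with a bounds check on every length field (read_be32 and avcc_to_annexb are hypothetical names):

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Reads a 4-byte big-endian length, the portable equivalent of memcpy + CFSwapInt32BigToHost. */
static uint32_t read_be32(const uint8_t *p) {
    return ((uint32_t)p[0] << 24) | ((uint32_t)p[1] << 16) | ((uint32_t)p[2] << 8) | (uint32_t)p[3];
}

/* Converts 4-byte length-prefixed NAL units to start-code-prefixed ones.
   Both prefixes are 4 bytes, so out must hold in_len bytes.
   Returns the number of bytes written, or 0 if a length field runs past the input. */
static size_t avcc_to_annexb(const uint8_t *in, size_t in_len, uint8_t *out) {
    static const uint8_t start_code[4] = {0, 0, 0, 1};
    size_t offset = 0;
    while (offset + 4 <= in_len) {
        uint32_t nal_len = read_be32(in + offset);
        if (nal_len > in_len - offset - 4) return 0; /* corrupt or truncated input */
        memcpy(out + offset, start_code, 4);
        memcpy(out + offset + 4, in + offset + 4, nal_len);
        offset += 4 + (size_t)nal_len;
    }
    return offset;
}
```

Because both prefixes are 4 bytes, the output is exactly as long as the input. The demo hard-codes a 4-byte length field; CMVideoFormatDescriptionGetH264ParameterSetAtIndex can report the actual size through its last argument (NALUnitHeaderLengthOut), which the demo leaves unused.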

```objc
// Write the SPS and PPS, each prefixed with a start code
- (void)gotSpsPps:(NSData *)sps pps:(NSData *)pps {
    NSLog(@"gotSpsPps %d %d", (int)[sps length], (int)[pps length]);
    const char bytes[] = "\x00\x00\x00\x01";
    size_t length = (sizeof bytes) - 1; // string literals have an implicit trailing '\0'
    NSData *ByteHeader = [NSData dataWithBytes:bytes length:length];
    // Write a start code, then the SPS
    [self.h264FileHandle writeData:ByteHeader];
    [self.h264FileHandle writeData:sps];
    // Write a start code, then the PPS
    [self.h264FileHandle writeData:ByteHeader];
    [self.h264FileHandle writeData:pps];
}
```
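gotSpsPps: writes start code, SPS, start code, PPS. The (sizeof bytes) - 1 is needed because the string literal "\x00\x00\x00\x01" occupies 5 bytes, including its terminating '\0'. A plain-C sketch of the same byte layout, using a 4-byte array so no adjustment is needed (write_parameter_sets is a hypothetical name):

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Writes start code + SPS + start code + PPS into out, which must hold
   8 + sps_len + pps_len bytes. Returns the number of bytes written. */
static size_t write_parameter_sets(const uint8_t *sps, size_t sps_len,
                                   const uint8_t *pps, size_t pps_len, uint8_t *out) {
    static const uint8_t start_code[4] = {0, 0, 0, 1}; /* exactly 4 bytes, no '\0' */
    size_t n = 0;
    memcpy(out + n, start_code, 4); n += 4;
    memcpy(out + n, sps, sps_len);  n += sps_len;
    memcpy(out + n, start_code, 4); n += 4;
    memcpy(out + n, pps, pps_len);  n += pps_len;
    return n;
}
```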

```objc
// Write one NALU, prefixed with a start code
- (void)gotEncodedData:(NSData *)data {
    NSLog(@"gotEncodedData %d", (int)[data length]);
    if (self.h264FileHandle != nil) {
        const char bytes[] = "\x00\x00\x00\x01";
        size_t length = (sizeof bytes) - 1; // string literals have an implicit trailing '\0'
        NSData *ByteHeader = [NSData dataWithBytes:bytes length:length];
        // Write the start code
        [self.h264FileHandle writeData:ByteHeader];
        // Write the NALU data
        [self.h264FileHandle writeData:data];
    }
}
```

When encoding is finished, tear down the session:

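```objc
- (void)EndVideoToolBox {
    // Run on encodeQueue so the session is not released while a frame is being encoded
    // (do not call this method from encodeQueue itself, or dispatch_sync will deadlock)
    dispatch_sync(encodeQueue, ^{
        if (encodingSession == NULL) {
            return;
        }
        // Flush frames still pending in the encoder, then tear the session down
        VTCompressionSessionCompleteFrames(encodingSession, kCMTimeInvalid);
        VTCompressionSessionInvalidate(encodingSession);
        CFRelease(encodingSession);
        encodingSession = NULL;
    });
}
```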

That completes H.264 encoding with VideoToolbox. The encoded H.264 file can be copied out of the app's sandbox.
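A quick sanity check on the sandbox file is that it begins with a 4-byte start code followed by an SPS NAL unit, since the first encoded frame is a key frame and gotSpsPps: writes the parameter sets ahead of it. A C sketch of that check (h264_file_starts_with_sps is a hypothetical helper):

```c
#include <stdint.h>
#include <stdio.h>

/* Returns 1 if the file starts with 00 00 00 01 followed by an SPS NAL unit (type 7). */
static int h264_file_starts_with_sps(FILE *f) {
    uint8_t head[5];
    if (fseek(f, 0, SEEK_SET) != 0) return 0;
    if (fread(head, 1, sizeof head, f) != sizeof head) return 0;
    return head[0] == 0 && head[1] == 0 && head[2] == 0 && head[3] == 1
        && (head[4] & 0x1F) == 7;
}
```

Raw .h264 files that pass this check can usually be opened directly by tools such as ffplay.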

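You can't learn a framework just by reading about the flow without working through the code, so try writing the encoder yourself!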

Demo download: iOS-VideoToolBox-demo


Original article: https://www.cnblogs.com/tangyuanby2/p/11449460.html