
Published at CVPR 2018.

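Both the abstract and the conclusion stress the advantages of the method. Let's start from the structure of RDN, and then work back to its background and ideas.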

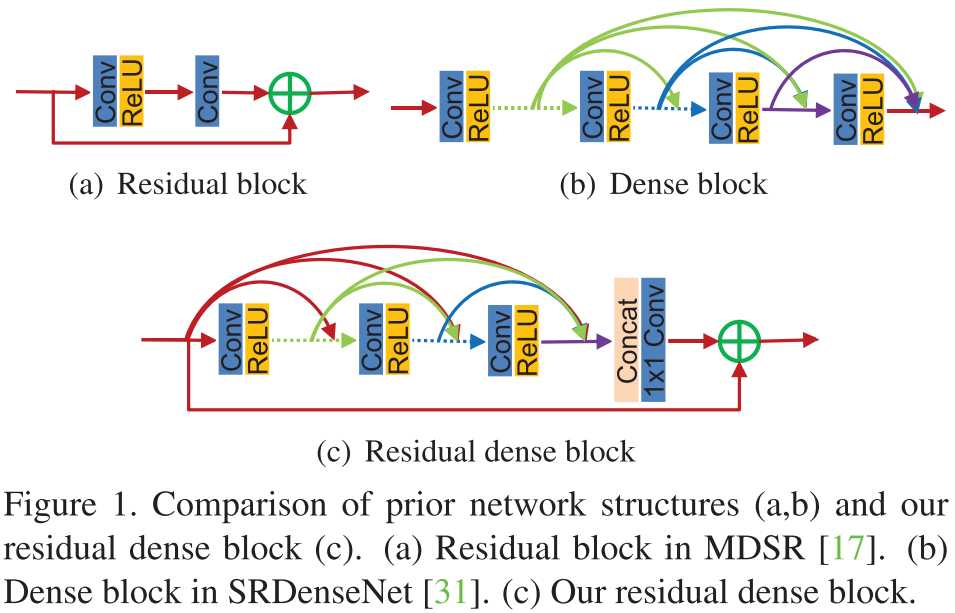

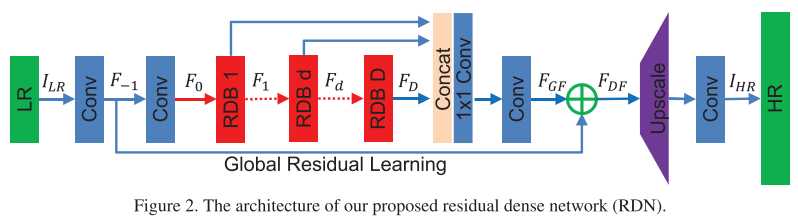

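At first glance, the block simply uses dense connections inside and residual learning outside. RDN follows a similar design at the global level: dense inside, residual overall. Both RDB and RDN use \(3 \times 3\) and \(1 \times 1\) convolutions internally.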
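To make "dense inside, residual outside" concrete, here is a minimal sketch of one block acting on a single pixel's channel vector. It is a toy, not the authors' implementation: every layer is a per-pixel linear map (the \(1 \times 1\) analogue), so the spatial \(3 \times 3\) convolutions are deliberately left out, and the names `ToyRDB`, `g0`, `growth`, and `num_layers` are hypothetical.

```python
import random


def linear(weights, x):
    """Per-pixel linear map: what a 1x1 convolution does to one pixel."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def relu(x):
    return [max(0.0, v) for v in x]


def random_weights(out_ch, in_ch, rng):
    return [[rng.uniform(-0.1, 0.1) for _ in range(in_ch)] for _ in range(out_ch)]


class ToyRDB:
    """Toy residual dense block (assumption: 3x3 convs replaced by 1x1 maps)."""

    def __init__(self, g0, growth, num_layers, seed=0):
        rng = random.Random(seed)
        # Layer c sees the block input plus the outputs of all earlier layers.
        self.layers = [
            random_weights(growth, g0 + c * growth, rng) for c in range(num_layers)
        ]
        # Fusion step: squeeze the full concatenation back to g0 channels.
        self.fusion = random_weights(g0, g0 + num_layers * growth, rng)

    def __call__(self, x):
        features = list(x)
        for w in self.layers:
            # Dense connection: append the new features to everything so far.
            features = features + relu(linear(w, features))
        fused = linear(self.fusion, features)
        # Residual outside: add the block input back to the fused output.
        return [a + b for a, b in zip(x, fused)]


block = ToyRDB(g0=4, growth=2, num_layers=3)
pixel = [1.0, -2.0, 0.5, 3.0]
print(len(block(pixel)))  # 4
```

Because the block returns as many channels as it receives, blocks of this shape can be stacked one after another without any extra adapter layer.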

Let's see how the authors justify this design, and whether the experiments verify that it works.

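RDN and RDB contain no BN or pooling layers: the authors argue that these layers not only consume resources but also hinder learning. (Note: some denoising work has likewise found that BN does not help with removing noise other than Gaussian noise.)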

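In DenseNet, a transition layer is needed between blocks; here a \(1 \times 1\) convolution is used instead, the so-called local feature fusion. (Note: the two are essentially the same thing, except that the transition layer also includes BN and pooling, because DenseNet serves a high-level vision task, image classification.)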

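Residual learning is applied both globally and locally, whereas DenseNet has none. The local residual connection adds the output of the previous RDB directly to the output of the current one. Combined with the dense connections that feed the previous RDB's output to every layer of the current RDB, this is what the authors call contiguous memory (CM).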
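To make the two levels of residual learning concrete, here is one way to write them down. The notation is mine, introduced for illustration: \(F_{d}\) is the output of the \(d\)-th RDB, \(F_{d,c}\) the output of its \(c\)-th convolutional layer, \(F_{-1}\) the shallow features before the first RDB, \(\sigma\) the activation, \([\cdot]\) channel-wise concatenation, and \(H_{\mathrm{LFF}}\), \(H_{\mathrm{GFF}}\) the local and global fusion operations. Treat it as a sketch of the structure described above rather than the authors' exact formulation.

\[
\begin{aligned}
F_{d,c} &= \sigma\left(W_{d,c}\,[F_{d-1}, F_{d,1}, \dots, F_{d,c-1}]\right), \quad c = 1, \dots, C \\
F_{d} &= F_{d-1} + H_{\mathrm{LFF}}\left([F_{d-1}, F_{d,1}, \dots, F_{d,C}]\right) \\
F_{\mathrm{out}} &= F_{-1} + H_{\mathrm{GFF}}\left([F_{1}, \dots, F_{D}]\right)
\end{aligned}
\]

The first line shows \(F_{d-1}\) entering every layer of the block. The second line is the local residual, where \(F_{d-1}\) reaches \(F_{d}\) through an identity path. The third line is its global counterpart over all \(D\) blocks.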

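Never mind. After reading these explanations I no longer feel like going through the experiments, because the design still feels rather trick-driven (there are not many ideas here that get one excited, and the explanations are a bit forced). Let's go back to the abstract and conclusion instead.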

Abstract

A very deep convolutional neural network (CNN) has recently achieved great success for image super-resolution (SR) and offered hierarchical features as well. However, most deep CNN based SR models do not make full use of the hierarchical features from the original low-resolution (LR) images, thereby achieving relatively-low performance. In this paper, we propose a novel residual dense network (RDN) to address this problem in image SR. We fully exploit the hierarchical features from all the convolutional layers. Specifically, we propose residual dense block (RDB) to extract abundant local features via dense connected convolutional layers. RDB further allows direct connections from the state of preceding RDB to all the layers of current RDB, leading to a contiguous memory (CM) mechanism. Local feature fusion in RDB is then used to adaptively learn more effective features from preceding and current local features and stabilizes the training of wider network. After fully obtaining dense local features, we use global feature fusion to jointly and adaptively learn global hierarchical features in a holistic way. Experiments on benchmark datasets with different degradation models show that our RDN achieves favorable performance against state-of-the-art methods.

Conclusion

In this paper, we proposed a very deep residual dense network (RDN) for image SR, where residual dense block (RDB) serves as the basic build module. In each RDB, the dense connections between each layers allow full usage of local layers. The local feature fusion (LFF) not only stabilizes the training wider network, but also adaptively controls the preservation of information from current and preceding RDBs. RDB further allows direct connections between the preceding RDB and each layer of current block, leading to a contiguous memory (CM) mechanism. The local residual learning (LRL) further improves the flow of information and gradient. Moreover, we propose global feature fusion (GFF) to extract hierarchical features in the LR space. By fully using local and global features, our RDN leads to a dense feature fusion and deep supervision. We use the same RDN structure to handle three degradation models and real-world data. Extensive benchmark evaluations well demonstrate that our RDN achieves superiority over state-of-the-art methods.

Let me put this in my own words:

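Each RDB has a shortcut spanning the whole block, so the output of the previous RDB is passed straight to the output of the current RDB; together with the dense connections from the previous RDB into every layer of the current block, this is what the authors call the contiguous memory mechanism.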

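Each RDB ends with a \(1 \times 1\) convolution, which the authors call local feature fusion (LFF). Isn't this just the channel-reduction trick that everyone already uses? It feels a little like mystification. The authors also stress that LFF stabilizes the training of wide networks. In fact, DenseNet deliberately keeps the network narrow to reduce computation. This is a trade-off between increasing redundancy (which improves generalization) and reducing computation; see my blog for details.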
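A bit of bookkeeping shows why the \(1 \times 1\) convolution is needed at all: dense concatenation makes the channel count grow linearly with depth, and the fusion step brings it back down. The sketch below is only arithmetic, and the values `g0=64`, `growth=32`, `num_layers=6` are illustrative assumptions, not settings confirmed in this post.

```python
def rdb_channel_plan(g0, growth, num_layers):
    """Channel counts inside one densely connected block (hypothetical helper)."""
    # Input width of each layer: block input plus all earlier layer outputs.
    layer_inputs = [g0 + c * growth for c in range(num_layers)]
    # Width of the concatenation that the 1x1 convolution has to fuse.
    concat = g0 + num_layers * growth
    # Weights of a 1x1 convolution mapping concat -> g0 channels (bias ignored).
    fusion_weights = concat * g0
    return layer_inputs, concat, fusion_weights


layer_inputs, concat, fusion_weights = rdb_channel_plan(g0=64, growth=32, num_layers=6)
print(layer_inputs)    # [64, 96, 128, 160, 192, 224]
print(concat)          # 256
print(fusion_weights)  # 16384
```

With these numbers the fusion step shrinks 256 channels back to 64, which is exactly the familiar channel-reduction role described above.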

Paper | Residual Dense Network for Image Super-Resolution


Original post: https://www.cnblogs.com/RyanXing/p/11617352.html