标签:try sse term elk stack ref delay 另一个 耦合 搜索

1 ELK概念

2 K8S需要收集哪些日志

3 ELK Stack日志方案

4 容器中的日志怎么收集

5 K8S平台中应用日志收集

一套正常运行的k8s集群,kubeadm安装部署或者二进制部署即可

| ip地址 | 角色 | 备注 |

|---|---|---|

| 192.168.73.136 | nfs | |

| 192.168.73.138 | k8s-master | |

| 192.168.73.139 | k8s-node01 | |

| 192.168.73.140 | k8s-node02 |

ELK是Elasticsearch、Logstash、Kibana三大开源框架首字母大写简称。市面上也被成为Elastic Stack。其中Elasticsearch是一个基于Lucene、分布式、通过Restful方式进行交互的近实时搜索平台框架。像类似百度、谷歌这种大数据全文搜索引擎的场景都可以使用Elasticsearch作为底层支持框架,可见Elasticsearch提供的搜索能力确实强大,市面上很多时候我们简称Elasticsearch为es。Logstash是ELK的中央数据流引擎,用于从不同目标(文件/数据存储/MQ)收集的不同格式数据,经过过滤后支持输出到不同目的地(文件/MQ/redis/elasticsearch/kafka等)。Kibana可以将elasticsearch的数据通过友好的页面展示出来,提供实时分析的功能。

通过上面对ELK简单的介绍,我们知道了ELK字面意义包含的每个开源框架的功能。市面上很多开发只要提到ELK能够一致说出它是一个日志分析架构技术栈总称,但实际上ELK不仅仅适用于日志分析,它还可以支持其它任何数据分析和收集的场景,日志分析和收集只是更具有代表性。并非唯一性。我们本教程主要也是围绕通过ELK如何搭建一个生产级的日志分析平台来讲解ELK的使用。

官方网站:https://www.elastic.co/cn/products/

在过往的单体应用时代,我们所有组件都部署到一台服务器中,那时日志管理平台的需求可能并没有那么强烈,我们只需要登录到一台服务器通过shell命令就可以很方便的查看系统日志,并快速定位问题。随着互联网的发展,互联网已经全面渗入到生活的各个领域,使用互联网的用户量也越来越多,单体应用已不能够支持庞大的用户的并发量,尤其像中国这种人口大国。那么将单体应用进行拆分,通过水平扩展来支持庞大用户的使用迫在眉睫,微服务概念就是在类似这样的阶段诞生,在微服务盛行的互联网技术时代,单个应用被拆分为多个应用,每个应用集群部署进行负载均衡,那么如果某项业务发生系统错误,开发或运维人员还是以过往单体应用方式登录一台一台登录服务器查看日志来定位问题,这种解决线上问题的效率可想而知。日志管理平台的建设就显得极其重要。通过Logstash去收集每台服务器日志文件,然后按定义的正则模板过滤后传输到Kafka或redis,然后由另一个Logstash从KafKa或redis读取日志存储到elasticsearch中创建索引,最后通过Kibana展示给开发者或运维人员进行分析。这样大大提升了运维线上问题的效率。除此之外,还可以将收集的日志进行大数据分析,得到更有价值的数据给到高层进行决策。

这里只是以主要收集日志为例:

K8S Cluster里面部署的应用程序日志

-标准输出

-日志文件

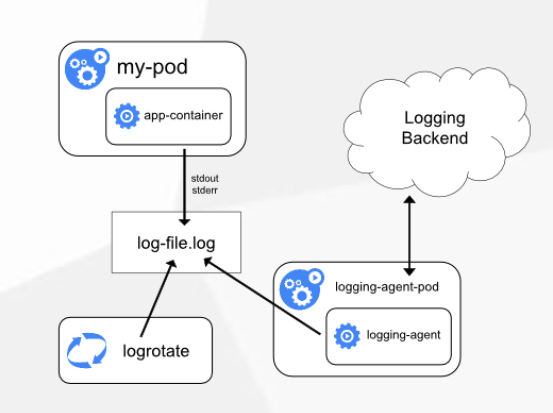

方案一:Node上部署一个日志收集程序

使用DaemonSet的方式去给每一个node上部署日志收集程序logging-agent

然后使用这个agent对本node节点上的/var/log和/var/lib/docker/containers/两个目录下的日志进行采集

或者把Pod中容器日志目录挂载到宿主机统一目录上,这样进行收集

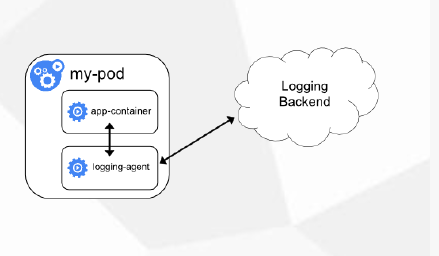

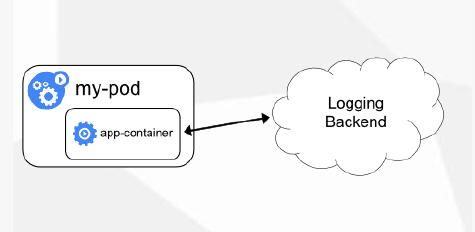

方案二:Pod中附加专用日志收集的容器

每个运行应用程序的Pod中增加一个日志收集容器,使用emtyDir共享日志目录让日志收集程序读取到。

方案三:应用程序直接推送日志

这个方案需要开发在代码中修改直接把应用程序直接推送到远程的存储上,不再输入出控制台或者本地文件了,使用不太多,超出Kubernetes范围

| 方式 | 优点 | 缺点 |

|---|---|---|

| 方案一:Node上部署一个日志收集程序 | 每个Node仅需部署一个日志收集程序,资源消耗少,对应用无侵入 | 应用程序日志需要写到标准输出和标准错误输出,不支持多行日志 |

| 方案二:Pod中附加专用日志收集的容器 | 低耦合 | 每个Pod启动一个日志收集代理,增加资源消耗,并增加运维维护成本 |

| 方案三:应用程序直接推送日志 | 无需额外收集工具 | 浸入应用,增加应用复杂度 |

单节点部署ELK的方法较简单,可以参考下面的yaml编排文件,整体就是创建一个es,然后创建kibana的可视化展示,创建一个es的service服务,然后通过ingress的方式对外暴露域名访问

首先,编写es的yaml,这里部署的是单机版,在k8s集群内中,通常当日志量每天超过20G以上的话,还是建议部署在k8s集群外部,支持分布式集群的架构,这里使用的是有状态部署的方式,并且使用动态存储进行持久化,需要提前创建好存储类,才能运行该yaml

[root@k8s-master fek]# vim elasticsearch.yaml

apiVersion: apps/v1

kind: StatefulSet

metadata:

name: elasticsearch

namespace: kube-system

labels:

k8s-app: elasticsearch

spec:

serviceName: elasticsearch

selector:

matchLabels:

k8s-app: elasticsearch

template:

metadata:

labels:

k8s-app: elasticsearch

spec:

containers:

- image: elasticsearch:7.3.1

name: elasticsearch

resources:

limits:

cpu: 1

memory: 2Gi

requests:

cpu: 0.5

memory: 500Mi

env:

- name: "discovery.type"

value: "single-node"

- name: ES_JAVA_OPTS

value: "-Xms512m -Xmx2g"

ports:

- containerPort: 9200

name: db

protocol: TCP

volumeMounts:

- name: elasticsearch-data

mountPath: /usr/share/elasticsearch/data

volumeClaimTemplates:

- metadata:

name: elasticsearch-data

spec:

storageClassName: "managed-nfs-storage"

accessModes: [ "ReadWriteOnce" ]

resources:

requests:

storage: 20Gi

---

apiVersion: v1

kind: Service

metadata:

name: elasticsearch

namespace: kube-system

spec:

clusterIP: None

ports:

- port: 9200

protocol: TCP

targetPort: db

selector:

k8s-app: elasticsearch使用刚才编写好的yaml文件创建Elasticsearch,然后检查是否启动,如下所示能看到一个elasticsearch-0 的pod副本被创建,正常运行;如果不能正常启动可以使用kubectl describe查看详细描述,排查问题

[root@k8s-master fek]# kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-5bd5f9dbd9-95flw 1/1 Running 0 17h

elasticsearch-0 1/1 Running 1 16m

php-demo-85849d58df-4bvld 2/2 Running 2 18h

php-demo-85849d58df-7tbb2 2/2 Running 0 17h然后,需要部署一个Kibana来对搜集到的日志进行可视化展示,使用Deployment的方式编写一个yaml,使用ingress对外进行暴露访问,直接引用了es

[root@k8s-master fek]# vim kibana.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: kibana

namespace: kube-system

labels:

k8s-app: kibana

spec:

replicas: 1

selector:

matchLabels:

k8s-app: kibana

template:

metadata:

labels:

k8s-app: kibana

spec:

containers:

- name: kibana

image: kibana:7.3.1

resources:

limits:

cpu: 1

memory: 500Mi

requests:

cpu: 0.5

memory: 200Mi

env:

- name: ELASTICSEARCH_HOSTS

value: http://elasticsearch:9200

ports:

- containerPort: 5601

name: ui

protocol: TCP

---

apiVersion: v1

kind: Service

metadata:

name: kibana

namespace: kube-system

spec:

ports:

- port: 5601

protocol: TCP

targetPort: ui

selector:

k8s-app: kibana

---

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

name: kibana

namespace: kube-system

spec:

rules:

- host: kibana.ctnrs.com

http:

paths:

- path: /

backend:

serviceName: kibana

servicePort: 5601使用刚才编写好的yaml创建kibana,可以看到最后生成了一个kibana-b7d98644-lshsz的pod,并且正常运行

[root@k8s-master fek]# kubectl apply -f kibana.yaml

deployment.apps/kibana created

service/kibana created

ingress.extensions/kibana created

[root@k8s-master fek]# kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-5bd5f9dbd9-95flw 1/1 Running 0 17h

elasticsearch-0 1/1 Running 1 16m

kibana-b7d98644-48gtm 1/1 Running 1 17h

php-demo-85849d58df-4bvld 2/2 Running 2 18h

php-demo-85849d58df-7tbb2 2/2 Running 0 17h最后,需要编写yaml在每个node上创建一个ingress-nginx控制器来对外提供访问

[root@k8s-master demo2]# vim mandatory.yaml

apiVersion: v1

kind: Namespace

metadata:

name: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

---

kind: ConfigMap

apiVersion: v1

metadata:

name: nginx-configuration

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

---

kind: ConfigMap

apiVersion: v1

metadata:

name: tcp-services

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

---

kind: ConfigMap

apiVersion: v1

metadata:

name: udp-services

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: nginx-ingress-serviceaccount

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: ClusterRole

metadata:

name: nginx-ingress-clusterrole

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

rules:

- apiGroups:

- ""

resources:

- configmaps

- endpoints

- nodes

- pods

- secrets

verbs:

- list

- watch

- apiGroups:

- ""

resources:

- nodes

verbs:

- get

- apiGroups:

- ""

resources:

- services

verbs:

- get

- list

- watch

- apiGroups:

- ""

resources:

- events

verbs:

- create

- patch

- apiGroups:

- "extensions"

- "networking.k8s.io"

resources:

- ingresses

verbs:

- get

- list

- watch

- apiGroups:

- "extensions"

- "networking.k8s.io"

resources:

- ingresses/status

verbs:

- update

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: Role

metadata:

name: nginx-ingress-role

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

rules:

- apiGroups:

- ""

resources:

- configmaps

- pods

- secrets

- namespaces

verbs:

- get

- apiGroups:

- ""

resources:

- configmaps

resourceNames:

# Defaults to "<election-id>-<ingress-class>"

# Here: "<ingress-controller-leader>-<nginx>"

# This has to be adapted if you change either parameter

# when launching the nginx-ingress-controller.

- "ingress-controller-leader-nginx"

verbs:

- get

- update

- apiGroups:

- ""

resources:

- configmaps

verbs:

- create

- apiGroups:

- ""

resources:

- endpoints

verbs:

- get

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: RoleBinding

metadata:

name: nginx-ingress-role-nisa-binding

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: nginx-ingress-role

subjects:

- kind: ServiceAccount

name: nginx-ingress-serviceaccount

namespace: ingress-nginx

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: ClusterRoleBinding

metadata:

name: nginx-ingress-clusterrole-nisa-binding

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: nginx-ingress-clusterrole

subjects:

- kind: ServiceAccount

name: nginx-ingress-serviceaccount

namespace: ingress-nginx

---

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: nginx-ingress-controller

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

spec:

selector:

matchLabels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

template:

metadata:

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

annotations:

prometheus.io/port: "10254"

prometheus.io/scrape: "true"

spec:

serviceAccountName: nginx-ingress-serviceaccount

hostNetwork: true

containers:

- name: nginx-ingress-controller

image: lizhenliang/nginx-ingress-controller:0.20.0

args:

- /nginx-ingress-controller

- --configmap=$(POD_NAMESPACE)/nginx-configuration

- --tcp-services-configmap=$(POD_NAMESPACE)/tcp-services

- --udp-services-configmap=$(POD_NAMESPACE)/udp-services

- --publish-service=$(POD_NAMESPACE)/ingress-nginx

- --annotations-prefix=nginx.ingress.kubernetes.io

securityContext:

allowPrivilegeEscalation: true

capabilities:

drop:

- ALL

add:

- NET_BIND_SERVICE

# www-data -> 33

runAsUser: 33

env:

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

ports:

- name: http

containerPort: 80

- name: https

containerPort: 443

livenessProbe:

failureThreshold: 3

httpGet:

path: /healthz

port: 10254

scheme: HTTP

initialDelaySeconds: 10

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 10

readinessProbe:

failureThreshold: 3

httpGet:

path: /healthz

port: 10254

scheme: HTTP

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 10

---创建ingress控制器,可以看到使用的DaemonSet 的方式在每一个node都部署了ingress控制器,我们可以在本地host中绑定任意一个node ip,然后使用域名都可以访问

[root@k8s-master demo2]# kubectl apply -f mandatory.yaml

[root@k8s-master demo2]# kubectl get pod -n ingress-nginx

NAME READY STATUS RESTARTS AGE

nginx-ingress-controller-98769 1/1 Running 6 13h

nginx-ingress-controller-n6wpq 1/1 Running 0 13h

nginx-ingress-controller-tbfxq 1/1 Running 29 13h

nginx-ingress-controller-trxnj 1/1 Running 6 13h绑定本机hosts,访问域名验证

windows系统,hosts文件地址:C:\Windows\System32\drivers\etc,Mac系统sudo vi /private/etc/hosts 编辑hosts文件,在底部加入域名和ip,用于解析,这个ip地址为任意node节点ip地址

加入如下命令,然后保存

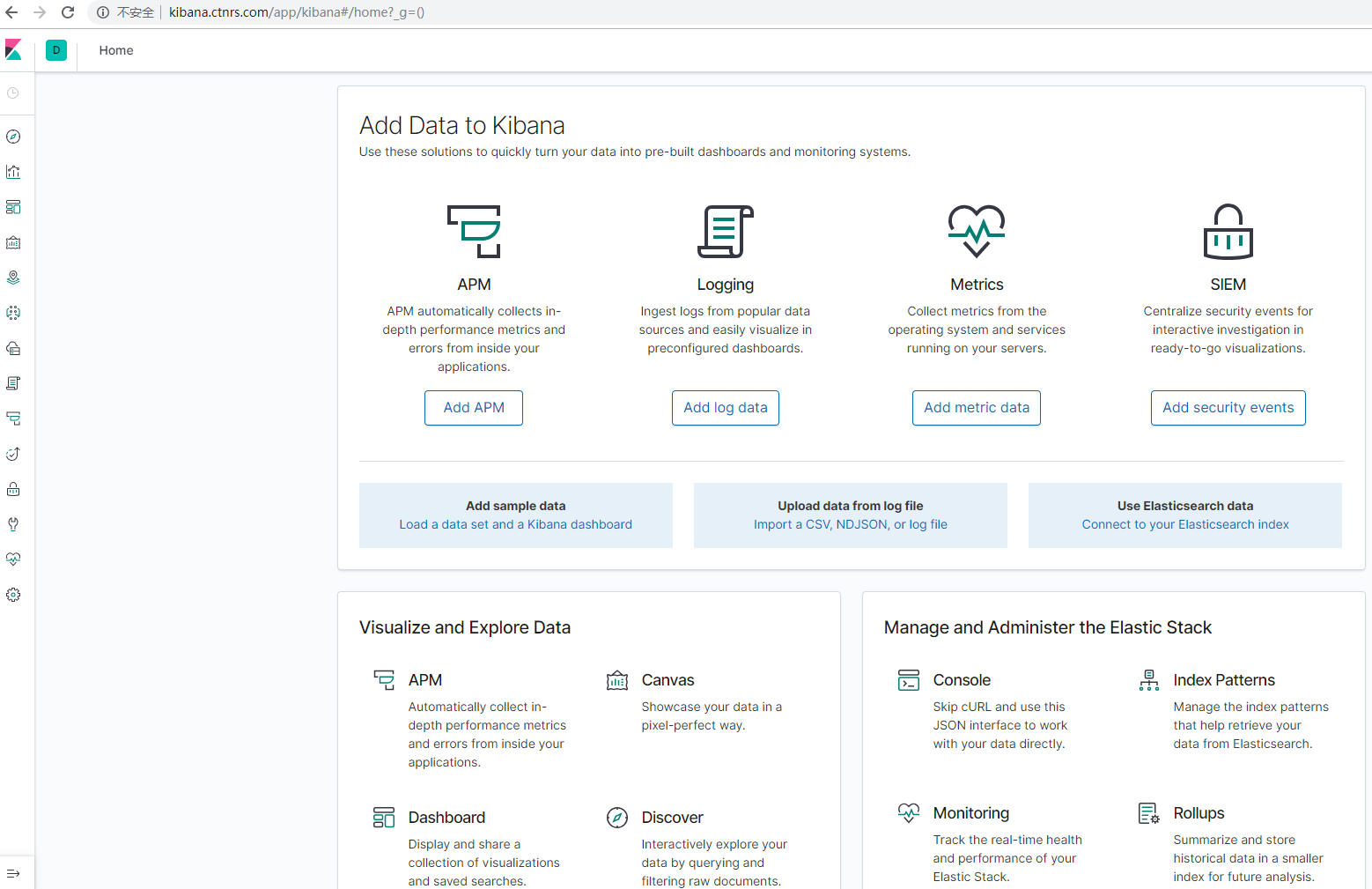

192.168.73.139 kibana.ctnrs.com最后在浏览器中,输入kibana.ctnrs.com,就会进入kibana的web界面,已设置了不需要进行登陆,当前页面都是全英文模式,可以修改上网搜一下修改配置文件的位置,建议使用英文版本

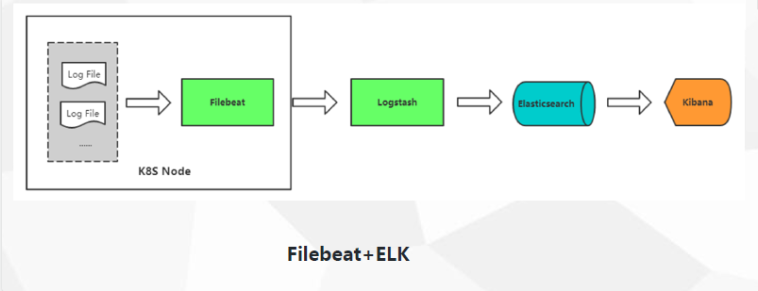

es和kibana部署好了之后,我们如何采集pod日志呢,我们采用方案一的方式,首先在每一个node上中部署一个filebeat的采集器,采用的是7.3.1版本,因为filebeat是对k8s有支持,可以连接api给pod日志打标签,所以yaml中需要进行认证,最后在配置文件中对获取数据采集了之后输入到es中,已在yaml中配置好

[root@k8s-master fek]# vim filebeat-kubernetes.yaml

---

apiVersion: v1

kind: ConfigMap

metadata:

name: filebeat-config

namespace: kube-system

labels:

k8s-app: filebeat

data:

filebeat.yml: |-

filebeat.config:

inputs:

# Mounted `filebeat-inputs` configmap:

path: ${path.config}/inputs.d/*.yml

# Reload inputs configs as they change:

reload.enabled: false

modules:

path: ${path.config}/modules.d/*.yml

# Reload module configs as they change:

reload.enabled: false

# To enable hints based autodiscover, remove `filebeat.config.inputs` configuration and uncomment this:

#filebeat.autodiscover:

# providers:

# - type: kubernetes

# hints.enabled: true

output.elasticsearch:

hosts: ['${ELASTICSEARCH_HOST:elasticsearch}:${ELASTICSEARCH_PORT:9200}']

---

apiVersion: v1

kind: ConfigMap

metadata:

name: filebeat-inputs

namespace: kube-system

labels:

k8s-app: filebeat

data:

kubernetes.yml: |-

- type: docker

containers.ids:

- "*"

processors:

- add_kubernetes_metadata:

in_cluster: true

---

apiVersion: extensions/v1beta1

kind: DaemonSet

metadata:

name: filebeat

namespace: kube-system

labels:

k8s-app: filebeat

spec:

template:

metadata:

labels:

k8s-app: filebeat

spec:

serviceAccountName: filebeat

terminationGracePeriodSeconds: 30

containers:

- name: filebeat

image: elastic/filebeat:7.3.1

args: [

"-c", "/etc/filebeat.yml",

"-e",

]

env:

- name: ELASTICSEARCH_HOST

value: elasticsearch

- name: ELASTICSEARCH_PORT

value: "9200"

securityContext:

runAsUser: 0

# If using Red Hat OpenShift uncomment this:

#privileged: true

resources:

limits:

memory: 200Mi

requests:

cpu: 100m

memory: 100Mi

volumeMounts:

- name: config

mountPath: /etc/filebeat.yml

readOnly: true

subPath: filebeat.yml

- name: inputs

mountPath: /usr/share/filebeat/inputs.d

readOnly: true

- name: data

mountPath: /usr/share/filebeat/data

- name: varlibdockercontainers

mountPath: /var/lib/docker/containers

readOnly: true

volumes:

- name: config

configMap:

defaultMode: 0600

name: filebeat-config

- name: varlibdockercontainers

hostPath:

path: /var/lib/docker/containers

- name: inputs

configMap:

defaultMode: 0600

name: filebeat-inputs

# data folder stores a registry of read status for all files, so we don't send everything again on a Filebeat pod restart

- name: data

hostPath:

path: /var/lib/filebeat-data

type: DirectoryOrCreate

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: ClusterRoleBinding

metadata:

name: filebeat

subjects:

- kind: ServiceAccount

name: filebeat

namespace: kube-system

roleRef:

kind: ClusterRole

name: filebeat

apiGroup: rbac.authorization.k8s.io

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: ClusterRole

metadata:

name: filebeat

labels:

k8s-app: filebeat

rules:

- apiGroups: [""] # "" indicates the core API group

resources:

- namespaces

- pods

verbs:

- get

- watch

- list

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: filebeat

namespace: kube-system

labels:

k8s-app: filebeat

---使用编写好的yaml文件创建filebeat采集器,然后检查是否启动,如果不能正常启动可以使用kubectl describe查看详细描述,排查问题

[root@k8s-master fek]# kubectl apply -f filebeat-kubernetes.yaml

configmap/filebeat-config created

configmap/filebeat-inputs created

daemonset.extensions/filebeat created

clusterrolebinding.rbac.authorization.k8s.io/filebeat created

clusterrole.rbac.authorization.k8s.io/filebeat created

serviceaccount/filebeat created

[root@k8s-master fek]# ls

elasticsearch.yaml filebeat-kubernetes.yaml kibana.yaml

[root@k8s-master fek]# kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

alertmanager-5d75d5688f-fmlq6 2/2 Running 11 58d

coredns-5bd5f9dbd9-rv7ft 1/1 Running 2 47d

filebeat-2dlk8 1/1 ContainerCreating 0 27s

filebeat-cqvmk 1/1 ContainerCreating 0 27s

filebeat-s2xmm 1/1 ContainerCreating 0 28s

filebeat-w28qc 1/1 ContainerCreating 0 27s

grafana-0 1/1 Running 5 64d

kube-state-metrics-7c76bdbf68-48b7w 2/2 Running 4 47d

kubernetes-dashboard-7d77666777-d5ng4 1/1 Running 9 65d

prometheus-0 2/2 Running 2 47d需要对k8s组件的日志进行采集,因为我的环境是用的kubeadm进行部署的,因此我的组件日志都在/var/log/message里面,因此我们还需要部署一个采集k8s组件日志的pod副本,自定义了索引k8s-module-%{+yyyy.MM.dd},编写yaml如下:

[root@k8s-master elk]# vim k8s-logs.yaml

apiVersion: v1

kind: ConfigMap

metadata:

name: k8s-logs-filebeat-config

namespace: kube-system

data:

filebeat.yml: |

filebeat.inputs:

- type: log

paths:

- /var/log/messages

fields:

app: k8s

type: module

fields_under_root: true

setup.ilm.enabled: false

setup.template.name: "k8s-module"

setup.template.pattern: "k8s-module-*"

output.elasticsearch:

hosts: ['elasticsearch.kube-system:9200']

index: "k8s-module-%{+yyyy.MM.dd}"

---

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: k8s-logs

namespace: kube-system

spec:

selector:

matchLabels:

project: k8s

app: filebeat

template:

metadata:

labels:

project: k8s

app: filebeat

spec:

containers:

- name: filebeat

image: elastic/filebeat:7.3.1

args: [

"-c", "/etc/filebeat.yml",

"-e",

]

resources:

requests:

cpu: 100m

memory: 100Mi

limits:

cpu: 500m

memory: 500Mi

securityContext:

runAsUser: 0

volumeMounts:

- name: filebeat-config

mountPath: /etc/filebeat.yml

subPath: filebeat.yml

- name: k8s-logs

mountPath: /var/log/messages

volumes:

- name: k8s-logs

hostPath:

path: /var/log/messages

- name: filebeat-config

configMap:

name: k8s-logs-filebeat-config创建编写好的yaml,并且检查是否成功创建,能看到两个命名为k8s-log-xx的pod副本分别创建在两个nodes上

[root@k8s-master elk]# kubectl apply -f k8s-logs.yaml

[root@k8s-master elk]# kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-5bd5f9dbd9-8zdn5 1/1 Running 0 10h

elasticsearch-0 1/1 Running 1 13h

filebeat-2q5tz 1/1 Running 0 13h

filebeat-k6m27 1/1 Running 2 13h

k8s-logs-52xgk 1/1 Running 0 5h45m

k8s-logs-jpkqp 1/1 Running 0 5h45m

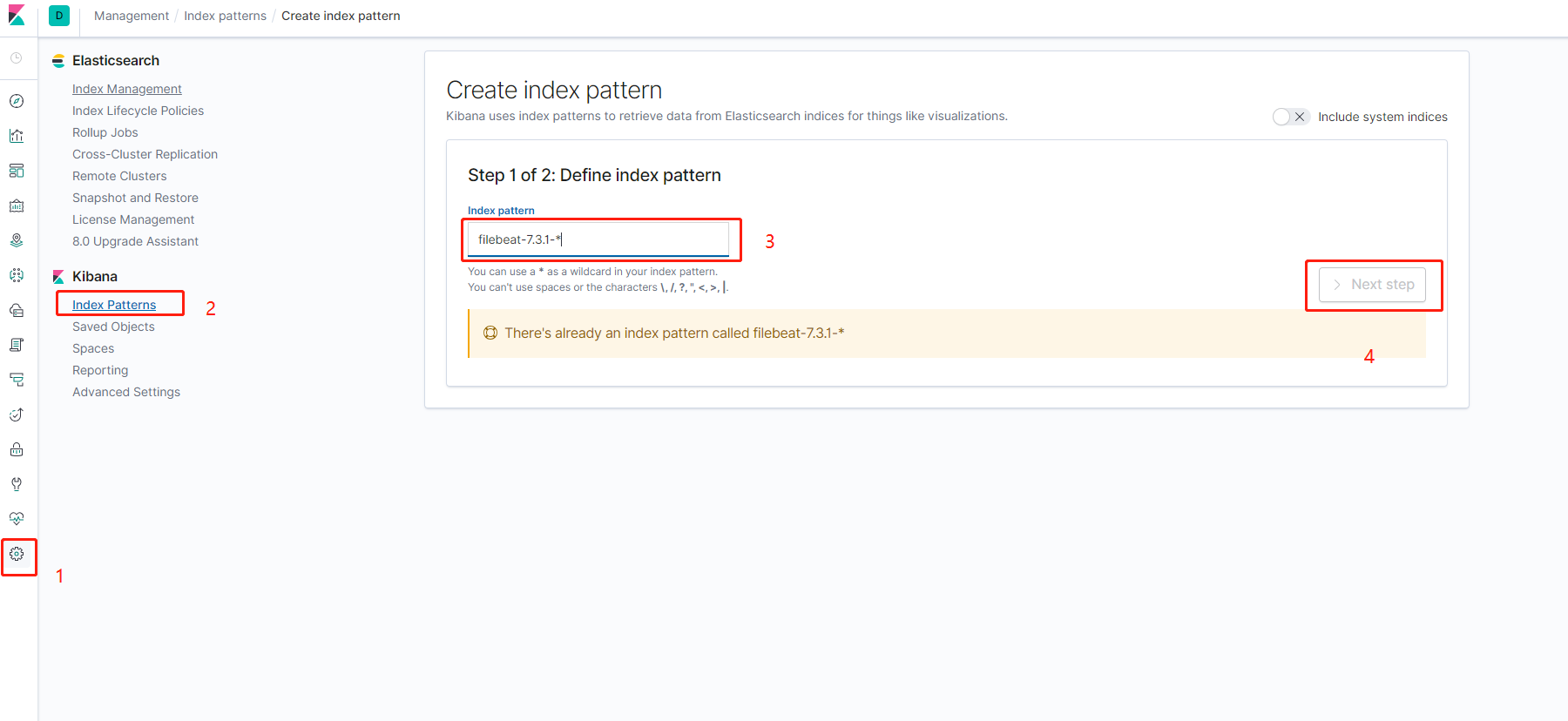

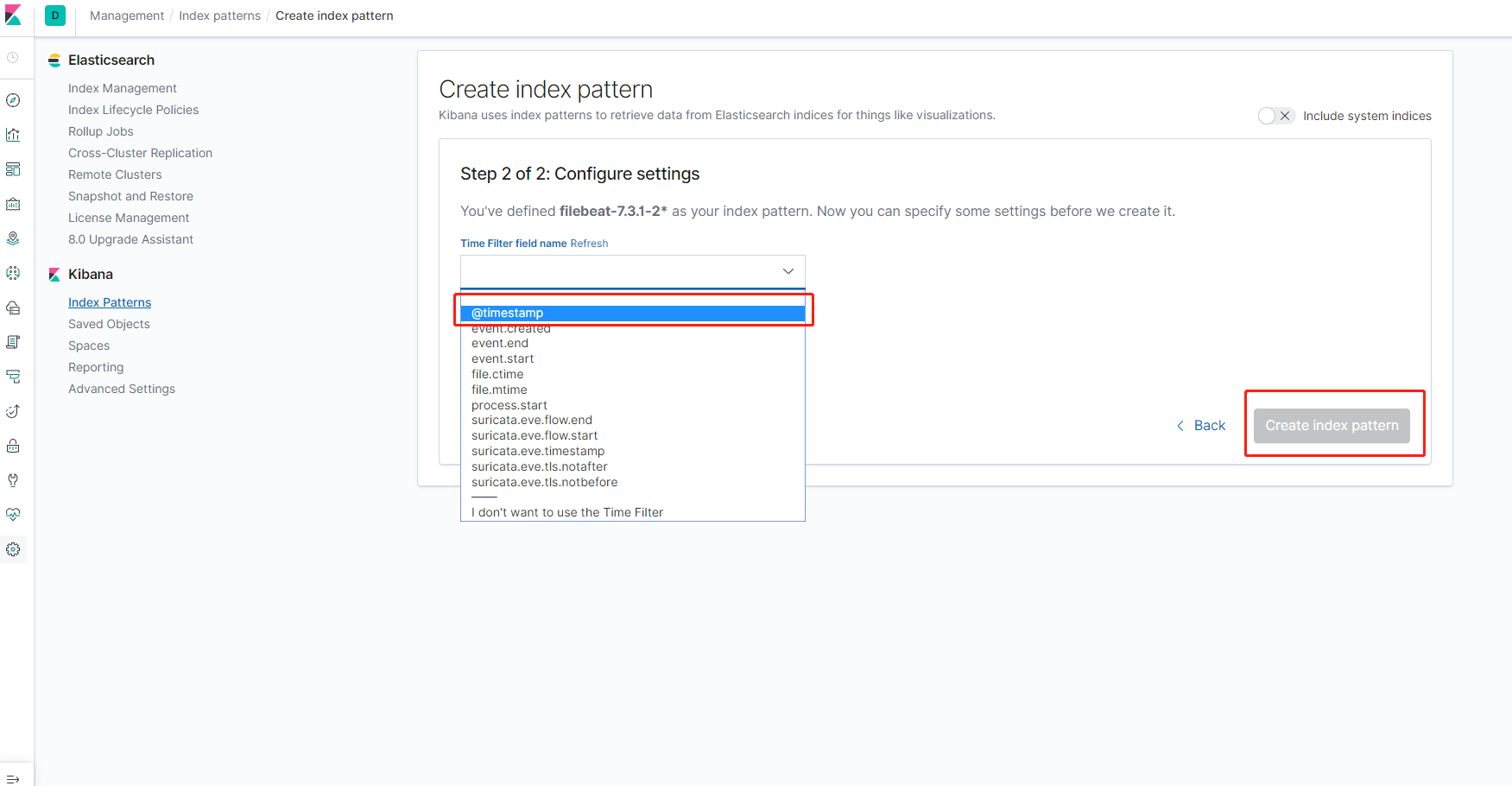

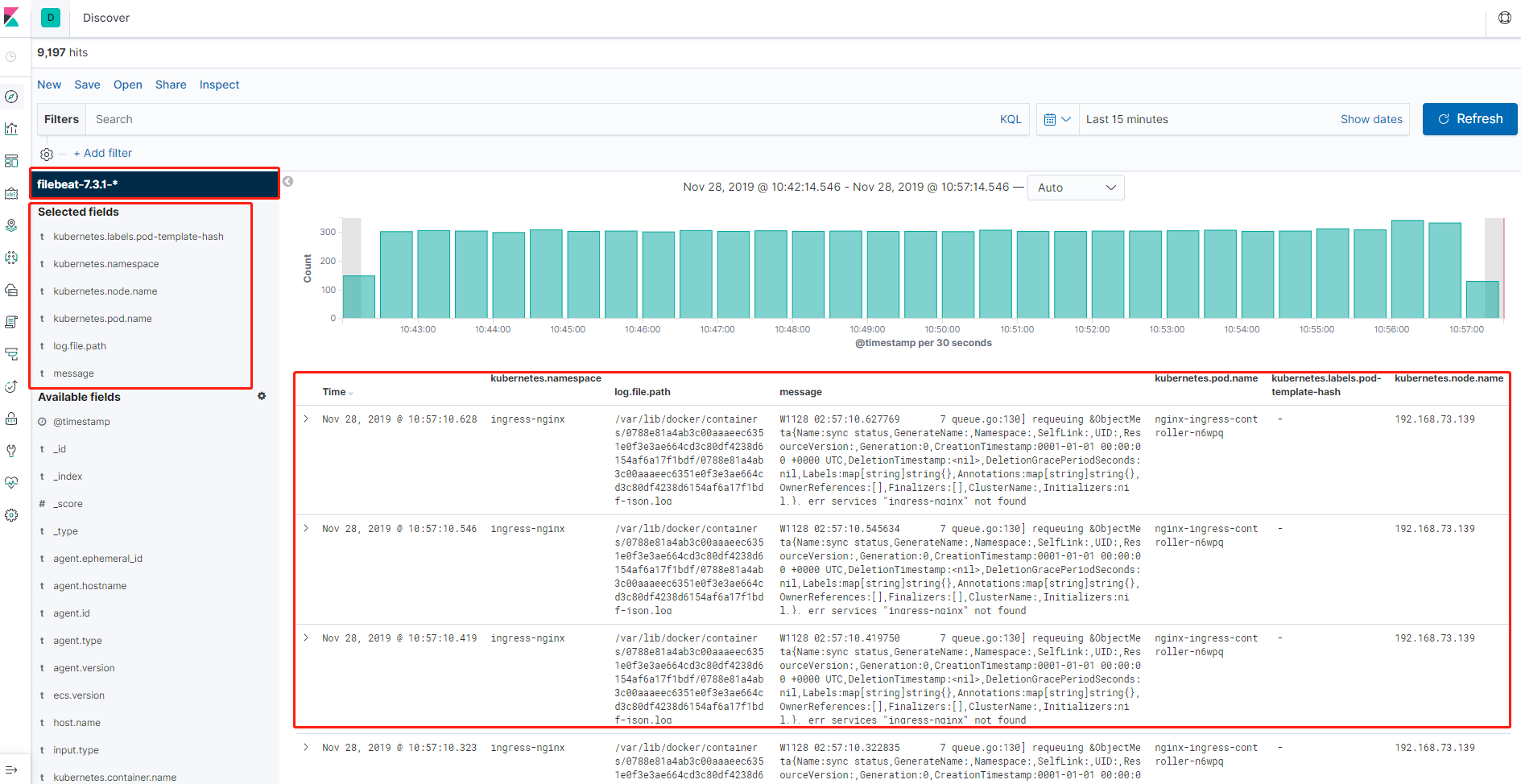

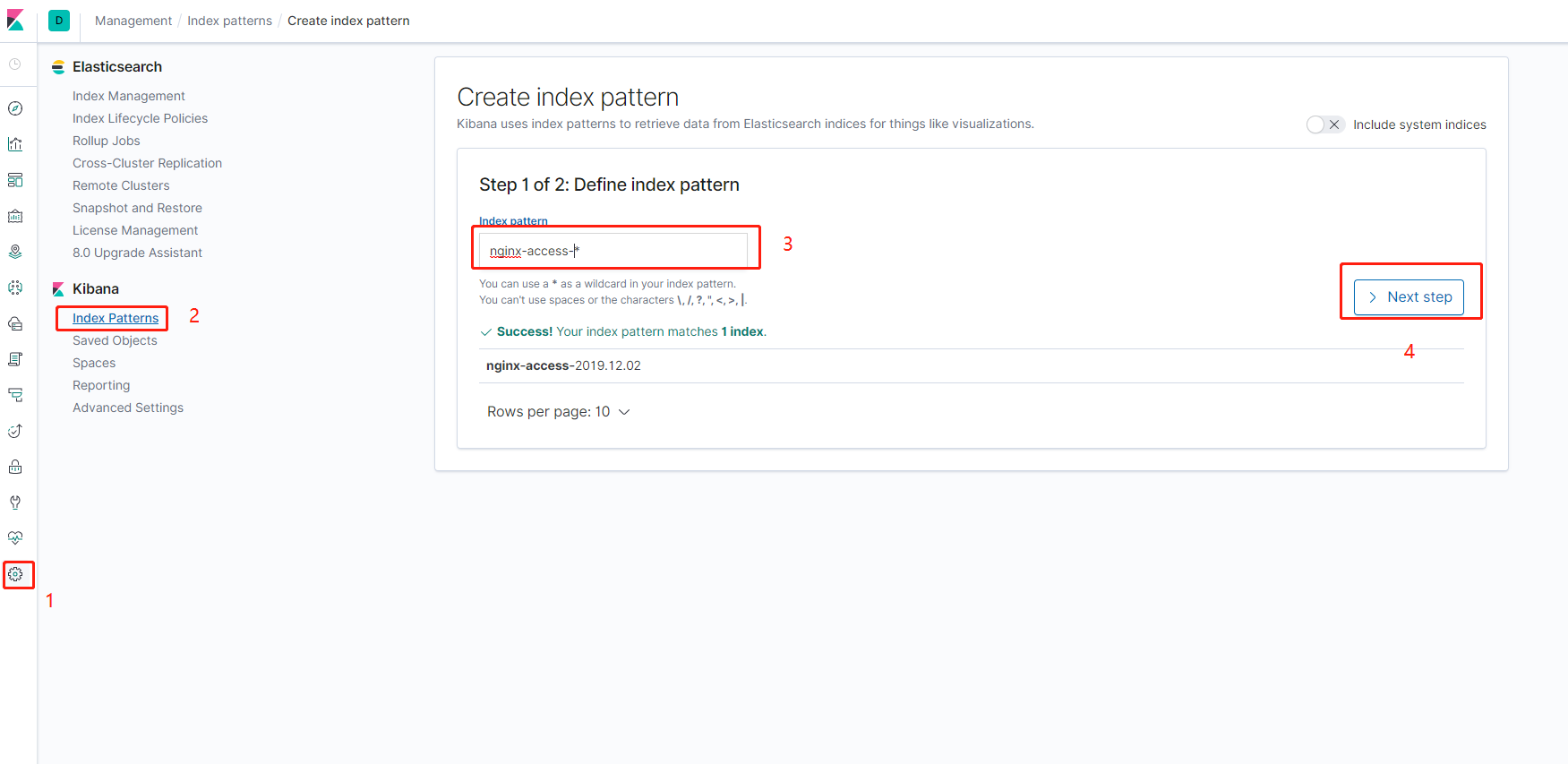

kibana-b7d98644-tllmm 1/1 Running 0 10h首先打开kibana的web界面,点击左边菜单栏汇中的设置,然后点击在Kibana下面的索引按钮,然后点击左上角的然后根据如图所示分别创建一个filebeat-7.3.1-和k8s-module-的filebeat采集器的索引匹配

然后按照时间过滤,完成创建

索引匹配创建以后,点击左边最上面的菜单Discove,然后可以在左侧看到我们刚才创建的索引,然后就可以在下面添加要展示的标签,也可以对标签进行筛选,最终效果如图所示,可以看到采集到的日志的所有信息

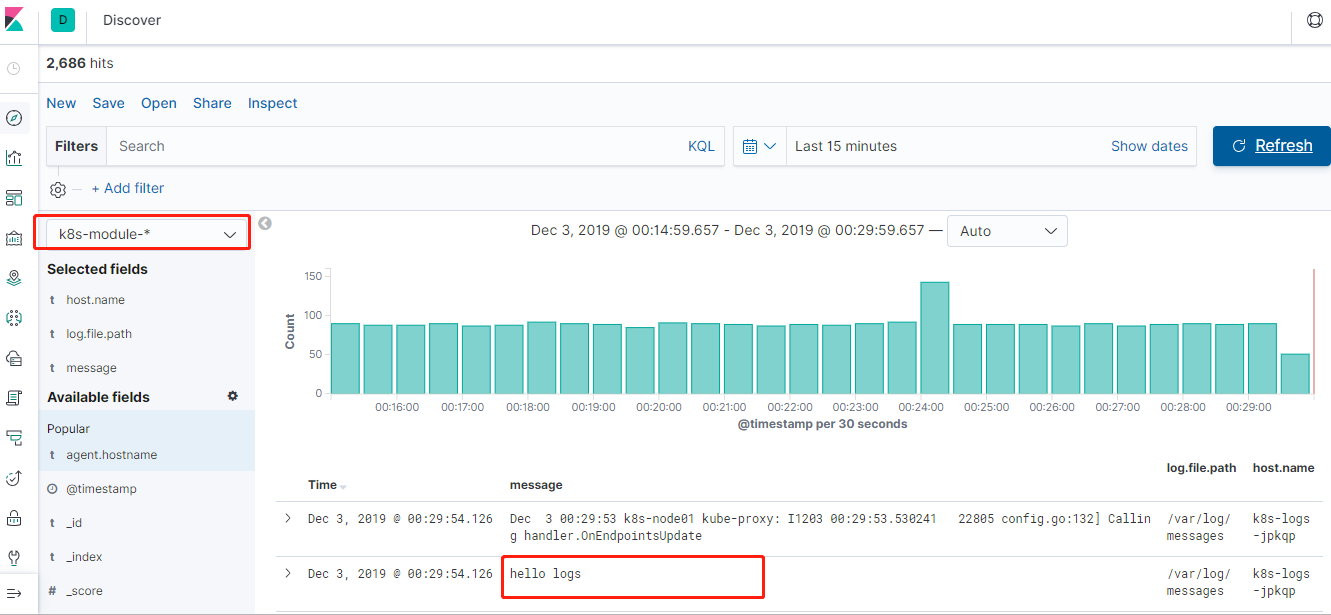

在其中一个node上,输入echo hello logs >>/var/log/messages,然后在web上选择k8s-module-*的索引匹配,就可以在采集到的日志中看到刚才输入的hello logs,则证明采集成功,如图所示

我们也可以使用方案的方式,通过在pod中注入一个日志收集的容器来采集pod的日志,以一个php-demo的应用为例,使用emptyDir的方式把日志目录共享给采集器的容器收集,编写nginx-deployment.yaml ,直接在pod中加入filebeat的容器,并且自定义索引为nginx-access-%{+yyyy.MM.dd}

[root@k8s-master fek]# vim nginx-deployment.yaml

apiVersion: apps/v1beta1

kind: Deployment

metadata:

name: php-demo

namespace: kube-system

spec:

replicas: 2

selector:

matchLabels:

project: www

app: php-demo

template:

metadata:

labels:

project: www

app: php-demo

spec:

imagePullSecrets:

- name: registry-pull-secret

containers:

- name: nginx

image: lizhenliang/nginx-php

ports:

- containerPort: 80

name: web

protocol: TCP

resources:

requests:

cpu: 0.5

memory: 256Mi

limits:

cpu: 1

memory: 1Gi

livenessProbe:

httpGet:

path: /status.html

port: 80

initialDelaySeconds: 20

timeoutSeconds: 20

readinessProbe:

httpGet:

path: /status.html

port: 80

initialDelaySeconds: 20

timeoutSeconds: 20

volumeMounts:

- name: nginx-logs

mountPath: /usr/local/nginx/logs

- name: filebeat

image: elastic/filebeat:7.3.1

args: [

"-c", "/etc/filebeat.yml",

"-e",

]

resources:

limits:

memory: 500Mi

requests:

cpu: 100m

memory: 100Mi

securityContext:

runAsUser: 0

volumeMounts:

- name: filebeat-config

mountPath: /etc/filebeat.yml

subPath: filebeat.yml

- name: nginx-logs

mountPath: /usr/local/nginx/logs

volumes:

- name: nginx-logs

emptyDir: {}

- name: filebeat-config

configMap:

name: filebeat-nginx-config

---

apiVersion: v1

kind: ConfigMap

metadata:

name: filebeat-nginx-config

namespace: kube-system

data:

filebeat.yml: |-

filebeat.inputs:

- type: log

paths:

- /usr/local/nginx/logs/access.log

# tags: ["access"]

fields:

app: www

type: nginx-access

fields_under_root: true

setup.ilm.enabled: false

setup.template.name: "nginx-access"

setup.template.pattern: "nginx-access-*"

output.elasticsearch:

hosts: ['elasticsearch.kube-system:9200']

index: "nginx-access-%{+yyyy.MM.dd}"创建刚才编写的nginx-deployment.yaml,创建成果之后会在kube-system命名空间下面pod/web-demo-58d89c9bc4-r5692的2个pod副本,还有一个对外暴露的service/web-demo

[root@k8s-master elk]# kubectl apply -f nginx-deployment.yaml

[root@k8s-master fek]# kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-5bd5f9dbd9-8zdn5 1/1 Running 0 20h

elasticsearch-0 1/1 Running 1 23h

filebeat-46nvd 1/1 Running 0 23m

filebeat-sst8m 1/1 Running 0 23m

k8s-logs-52xgk 1/1 Running 0 15h

k8s-logs-jpkqp 1/1 Running 0 15h

kibana-b7d98644-tllmm 1/1 Running 0 20h

php-demo-85849d58df-d98gv 2/2 Running 0 26m

php-demo-85849d58df-sl5ss 2/2 Running 0 26m然后打开kibana的web,按照刚才的办法继续添加一个索引匹配nginx-access-*,如图所示

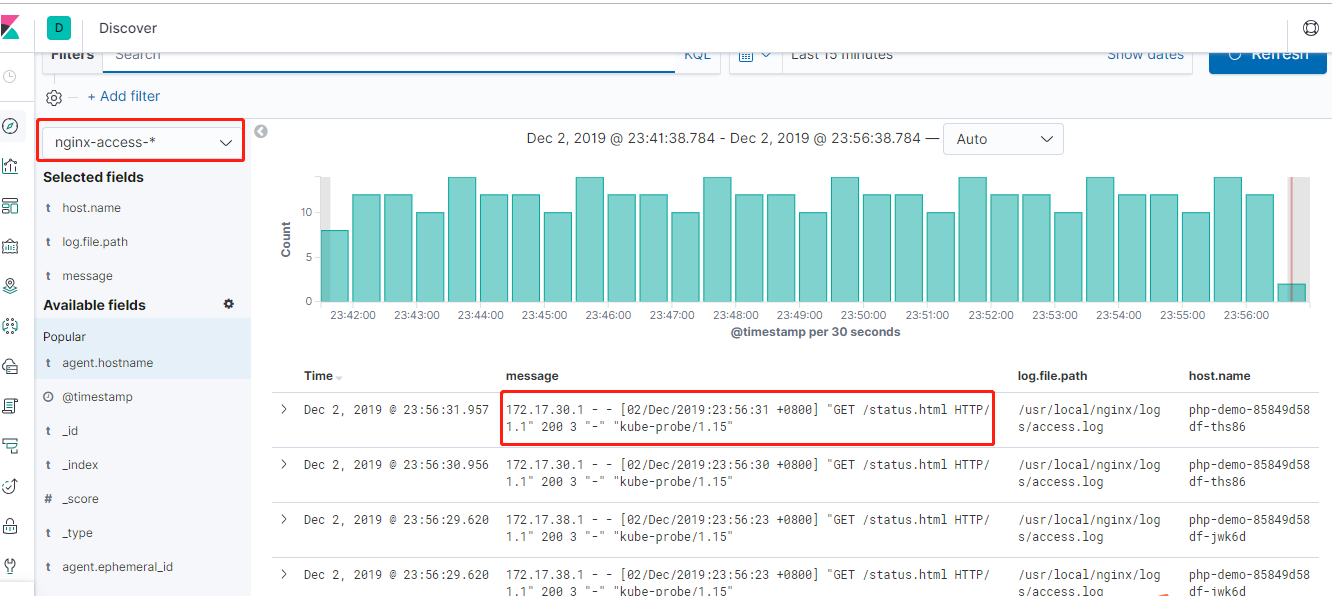

最后点击左边最上面的菜单Discove,然后可以在左侧看到我们刚才创建的索引匹配,下拉选择nginx-access-*,然后就可以在下面添加要展示的标签,也可以对标签进行筛选,最终效果如图所示,可以看到采集到的日志的所有信息,可以使用 http://node ip+30001的访问访问一下刚才创建的nginx,然后测试是否有日志产生

专注开源的DevOps技术栈技术,可以关注公众号,有问题欢迎一起交流

Kubernetes实战之部署ELK Stack收集平台日志

标签:try sse term elk stack ref delay 另一个 耦合 搜索

原文地址:https://www.cnblogs.com/double-dong/p/11992774.html