标签:src page 下载图片 lib from int ike gecko text

一台能上404的机子..

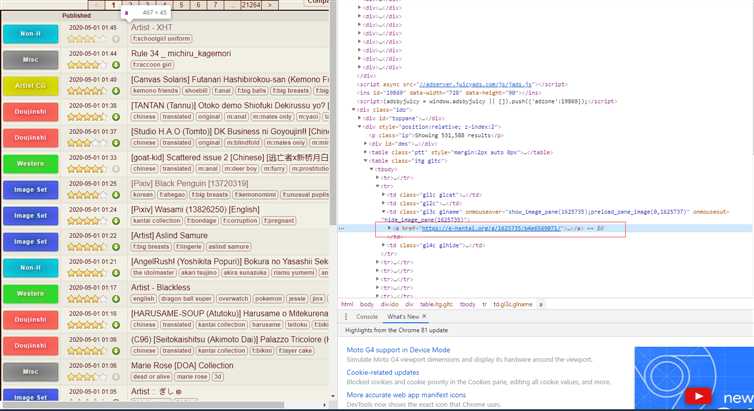

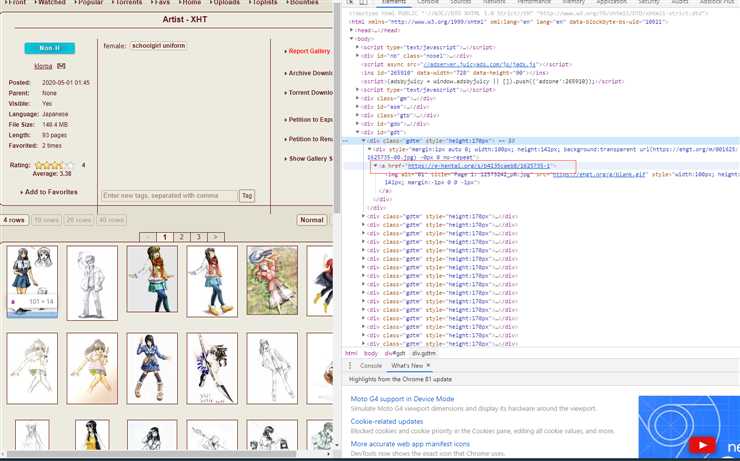

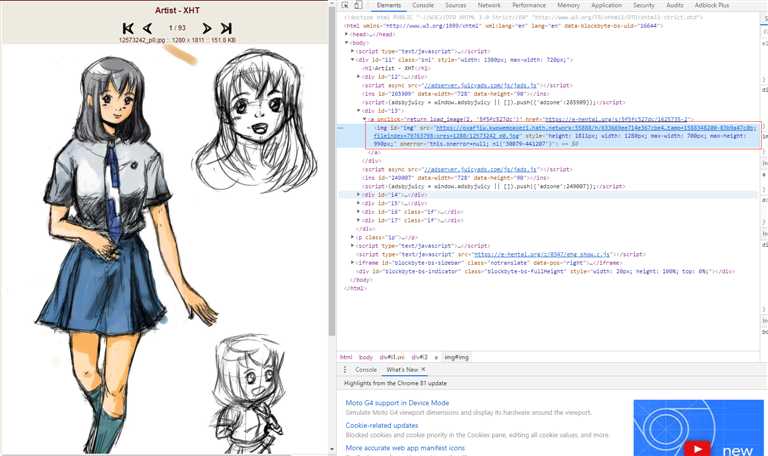

翻本子的时候觉得要是直接爬到本地看起来多舒服啊..然后就写了个爬虫,由于也只是初学爬虫,个中技巧也不熟练,写的过程中的语法用法参考了很多文档和博客,我是对于当前搜索页用F12看过去..找到每个本子的地址再一层层下去最后下载图片...然后去根据标签一层层遍历将文件保存在本地,能够直接爬取搜索页下一整页的所有本,并保存在该文件同级目录下,用着玩玩还行中途还被E站封了一次IP,一看起来就觉得写的属实差劲(差就是还有进步空间嘛

这就是个试验页 别想太多

from bs4 import BeautifulSoup

import re

import requests

import os

import urllib.request

headers = {

‘User-Agent‘: ‘Mozilla/5.0 (Windows NT 6.3; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/52.0.2743.82 Safari/537.36‘,

‘Accept‘: ‘text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8‘,

‘Upgrade-Insecure-Requests‘: ‘1‘}

r = requests.get("https://e-hentai.org/", headers=headers)

soup = BeautifulSoup(r.text, ‘lxml‘)

divs = soup.find_all(class_=‘gl3c glname‘)

# 爬取当前页面的本子网址

for div in divs:

url = div.a.get(‘href‘)

r2 = requests.get(url, headers=headers)

soup2 = BeautifulSoup(r2.text, ‘lxml‘)

manga = soup2.find_all(class_=‘gdtm‘)

title = soup2.title.get_text() # 获取该本子标题

# 遍历本子的各页

for div2 in manga:

picurl = div2.a.get(‘href‘)

picr = requests.get(picurl, headers=headers)

soup3 = BeautifulSoup(picr.text, ‘lxml‘)

downurl = soup3.find_all(id=‘img‘)

page = 0

for dur in downurl:

# print(dur.get(‘src‘))

# 判断是否存在该文件夹

purl=dur.get(‘src‘)

fold_path = ‘./‘+title

if not os.path.exists(fold_path):

print("正在创建文件夹...")

os.makedirs(fold_path)

print("正在尝试下载图片....:{}".format(purl))

#保留后缀

filename = title+str(page)+purl.split(‘/‘)[-1]

filepath = fold_path + ‘/‘ + filename

page = page + 1

if os.path.exists(filepath):

print("已存在该文件,不下了不下了")

else:

try:

urllib.request.urlretrieve(purl, filename=filepath)

except Exception as e:

print("error发生:")

print(e)

标签:src page 下载图片 lib from int ike gecko text

原文地址:https://www.cnblogs.com/graytido/p/12815423.html