标签:ons mon 新版本 guide multi 设置 nohup ike The

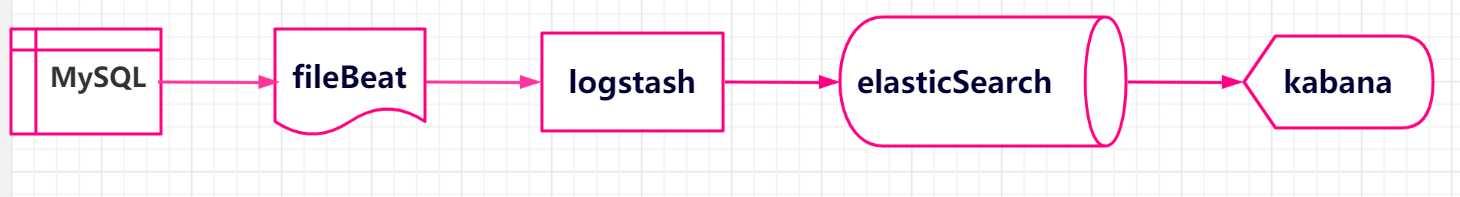

ELK是集分布式数据存储、可视化查询和日志解析于一体的日志分析平台。ELK=elasticsearch+Logstash+kibana,三者各司其职,相互配合,共同完成日志的数据处理工作。ELK各组件的主要功能如下:

我们在搭建平台时,还借助了filebeat插件。Filebeat是本地文件的日志数据采集器,可监控日志目录或特定日志文件(tail file),并可将数据转发给Elasticsearch或Logstatsh等。

本案例的实践,主要通过ELK收集、管理、检索mysql实例的慢查询日志和错误日志。

简单的数据流程图如下:

| ES数据库 | MySQL数据库 |

| Index | Database |

| Tpye[在7.0之后type为固定值_doc] | Table |

| Document | Row |

| Field | Column |

| Mapping | Schema |

| Everything is indexed | Index |

| Query DSL[Descriptor structure language] | SQL |

| GET http://... | Select * from table … |

| PUT http://... | Update table set … |

报错提示

[usernimei@testes01 bin]$ Exception in thread "main" org.elasticsearch.bootstrap.BootstrapException: java.nio.file.AccessDeniedException: /data/elasticsearch/elasticsearch-7.4.2/config/elasticsearch.keystore Likely root cause: java.nio.file.AccessDeniedException: /data/elasticsearch/elasticsearch-7.4.2/config/elasticsearch.keystore at java.base/sun.nio.fs.UnixException.translateToIOException(UnixException.java:90) at java.base/sun.nio.fs.UnixException.rethrowAsIOException(UnixException.java:111) at java.base/sun.nio.fs.UnixException.rethrowAsIOException(UnixException.java:116) at java.base/sun.nio.fs.UnixFileSystemProvider.newByteChannel(UnixFileSystemProvider.java:219) at java.base/java.nio.file.Files.newByteChannel(Files.java:374) at java.base/java.nio.file.Files.newByteChannel(Files.java:425) at org.apache.lucene.store.SimpleFSDirectory.openInput(SimpleFSDirectory.java:77) at org.elasticsearch.common.settings.KeyStoreWrapper.load(KeyStoreWrapper.java:219) at org.elasticsearch.bootstrap.Bootstrap.loadSecureSettings(Bootstrap.java:234) at org.elasticsearch.bootstrap.Bootstrap.init(Bootstrap.java:305) at org.elasticsearch.bootstrap.Elasticsearch.init(Elasticsearch.java:159) at org.elasticsearch.bootstrap.Elasticsearch.execute(Elasticsearch.java:150) at org.elasticsearch.cli.EnvironmentAwareCommand.execute(EnvironmentAwareCommand.java:86) at org.elasticsearch.cli.Command.mainWithoutErrorHandling(Command.java:125) at org.elasticsearch.cli.Command.main(Command.java:90) at org.elasticsearch.bootstrap.Elasticsearch.main(Elasticsearch.java:115) at org.elasticsearch.bootstrap.Elasticsearch.main(Elasticsearch.java:92) Refer to the log for complete error details

问题分析

第一次误用了root账号启动,此时路径下的elasticsearch.keystore 权限属于了root

-rw-rw---- 1 root root 199 Mar 24 17:36 elasticsearch.keystore

解决方案--切换到root用户修改文件elasticsearch.keystore权限

调整到es用户下,即

chown -R es用户:es用户组 elasticsearch.keystore

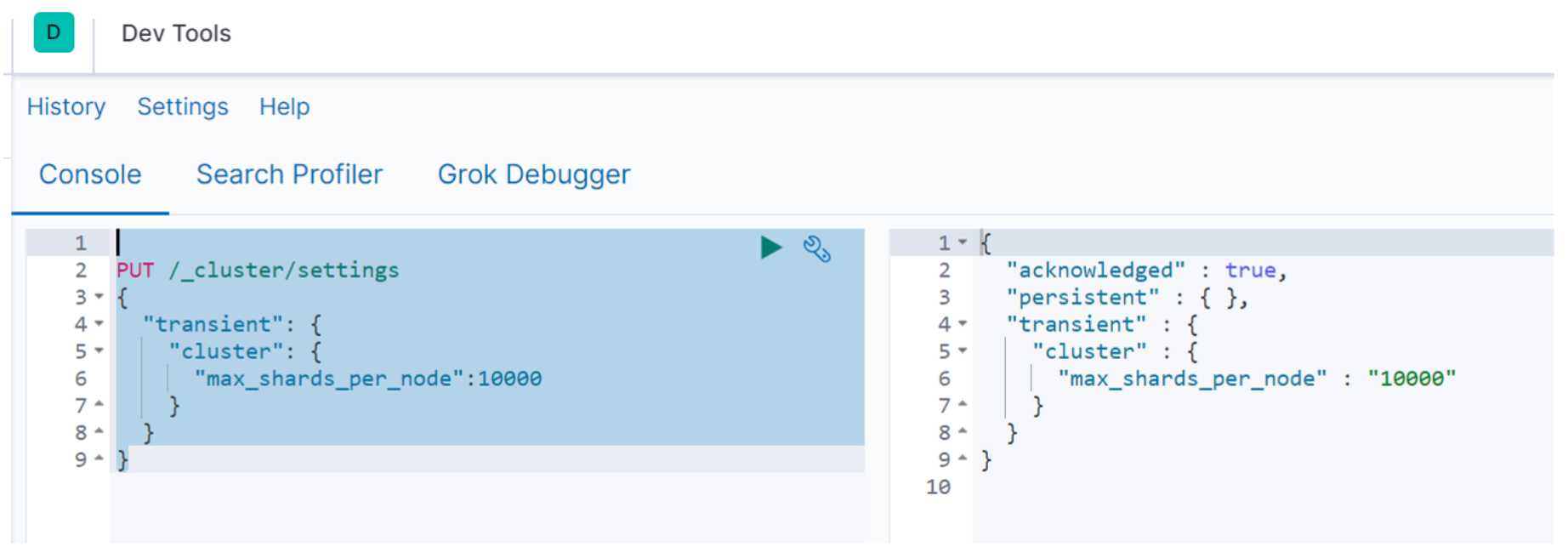

根据官方解释,从Elasticsearch v7.0.0 开始,集群中的每个节点默认限制 1000 个shard,如果你的es集群有3个数据节点,那么最多 3000 shards。这里我们是只有一台es。所以只有1000。

[2019-05-11T11:05:24,650][WARN ][logstash.outputs.elasticsearch][main] Marking url as dead. Last error: [LogStash::Outputs::ElasticSearch::HttpClient::Pool::HostUnreachableError] Elasticsearch Unreachable: [http://qqelastic:xxxxxx@155.155.155.155:55944/][Manticore::SocketTimeout] Read timed out {:url=>http://qqelastic:xxxxxx@155.155.155.155:55944/, :error_message=>"Elasticsearch Unreachable: [http://qqelastic:xxxxxx@155.155.155.155:55944/][Manticore::SocketTimeout] Read timed out", :error_class=>"LogStash::Outputs::ElasticSearch::HttpClient::Pool::HostUnreachableError"} [2019-05-11T11:05:24,754][ERROR][logstash.outputs.elasticsearch][main] Attempted to send a bulk request to elasticsearch‘ but Elasticsearch appears to be unreachable or down! {:error_message=>"Elasticsearch Unreachable: [http://qqelastic:xxxxxx@155.155.155.155:55944/][Manticore::SocketTimeout] Read timed out", :class=>"LogStash::Outputs::ElasticSearch::HttpClient::Pool::HostUnreachableError", :will_retry_in_seconds=>2} [2019-05-11T11:05:25,158][WARN ][logstash.outputs.elasticsearch][main] Restored connection to ES instance {:url=>"http://qqelastic:xxxxxx@155.155.155.155:55944/"} [2019-05-11T11:05:26,763][WARN ][logstash.outputs.elasticsearch][main] Could not index event to Elasticsearch. {:status=>400, :action=>["index", {:_id=>nil, :_index=>"mysql-error-testqq-2019.05.11", :routing=>nil, :_type=>"_doc"}, #<LogStash::Event:0x65416fce>], :response=>{"index"=>{"_index"=>"mysql-error-qqweixin-2020.05.11", "_type"=>"_doc", "_id"=>nil, "status"=>400, "error"=>{"type"=>"validation_exception", "reason"=>"Validation Failed: 1: this action would add [2] total shards, but this cluster currently has [1000]/[1000] maximum shards open;"}}}}

可以用Kibana来设置

主要命令:

PUT /_cluster/settings { "transient": { "cluster": { "max_shards_per_node":10000 } } }

操作截图如下:

注意事项:

建议设置后重启下lostash服务

2019-03-23T19:24:41.772+0800 INFO [monitoring] log/log.go:145 Non-zero metrics in the last 30s

{"monitoring": {"metrics": {"beat":{"cpu":{"system":{"ticks":30,"time":{"ms":2}},"total":{"ticks":80,"time":{"ms":4},"value":80},"user":{"ticks":50,"time":{"ms":2}}},"handles":{"limit":{"hard":1000000,"soft":1000000},"open":6},"info":{"ephemeral_id":"a4c61321-ad02-2c64-9624-49fe4356a4e9","uptime":{"ms":210031}},"memstats":{"gc_next":7265376,"memory_alloc":4652416,"memory_total":12084992},"runtime":{"goroutines":16}},"filebeat":{"harvester":{"open_files":0,"running":0}},"libbeat":{"config":{"module":{"running":0}},"pipeline":{"clients":0,"events":{"active":0}}},"registrar":{"states":{"current":0}},"system":{"load":{"1":0,"15":0.05,"5":0.01,"norm":{"1":0,"15":0.0125,"5":0.0025}}}}}}

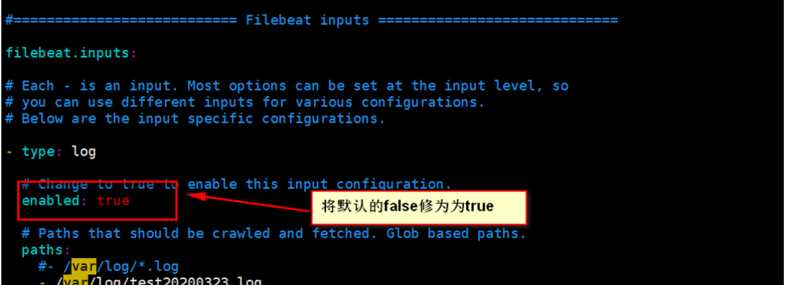

修改 filebeat.yml 的配置参数

2019-03-27T20:13:22.985+0800 ERROR logstash/async.go:256 Failed to publish events caused by: write tcp [::1]:48338->[::1]:5044: write: connection reset by peer 2019-03-27T20:13:23.985+0800 INFO [monitoring] log/log.go:145 Non-zero metrics in the last 30s {"monitoring": {"metrics": {"beat":{"cpu":{"system":{"ticks":130,"time":{"ms":11}},"total":{"ticks":280,"time":{"ms":20},"value":280},"user":{"ticks":150,"time":{"ms":9}}},"handles":{"limit":{"hard":65536,"soft":65536},"open":7},"info":{"ephemeral_id":"a02ed909-a7a0-49ee-aff9-5fdab26ecf70","uptime":{"ms":150065}},"memstats":{"gc_next":10532480,"memory_alloc":7439504,"memory_total":19313416,"rss":806912},"runtime":{"goroutines":27}},"filebeat":{"events":{"active":1,"added":1},"harvester":{"open_files":1,"running":1}},"libbeat":{"config":{"module":{"running":0}},"output":{"events":{"batches":1,"failed":1,"total":1},"write":{"errors":1}},"pipeline":{"clients":1,"events":{"active":1,"published":1,"total":1}}},"registrar":{"states":{"current":1}},"system":{"load":{"1":0.05,"15":0.11,"5":0.06,"norm":{"1":0.0063,"15":0.0138,"5":0.0075}}}}}} 2019-03-27T20:13:24.575+0800 ERROR pipeline/output.go:121 Failed to publish events: write tcp [::1]:48338->[::1]:5044: write: connection reset by peer

原因是同时有多个logstash进程在运行,关闭重启

filebeat 服务所在路径:

/etc/systemd/system

编辑filebeat.service文件

[Unit] Description=filebeat.service [Service] User=root ExecStart=/data/filebeat/filebeat-7.4.2-linux-x86_64/filebeat -e -c /data/filebeat/filebeat-7.4.2-linux-x86_64/filebeat.yml [Install] WantedBy=multi-user.target

管理服务的相关命令

systemctl start filebeat #启动filebeat服务

systemctl enable filebeat #设置开机自启动

systemctl disable filebeat #停止开机自启动

systemctl status filebeat #查看服务当前状态

systemctl restart filebeat #重新启动服务

systemctl list-units --type=service #查看所有已启动的服务

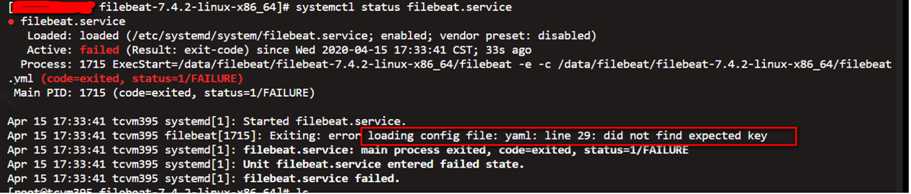

注意错误

Exiting: error loading config file: yaml: line 29: did not find expected key

主要问题是:filebeat.yml 文件中的格式有破坏,应特别注意修改和新增的地方,对照前后文,验证格式是否有变化。

此时我们可以以service来管理,在目录init.d下创建一个filebeat.service文件。主要脚本如下:

#!/bin/bash agent="/data/filebeat/filebeat-7.4.2-linux-x86_64/filebeat" args="-e -c /data/filebeat/filebeat-7.4.2-linux-x86_64/filebeat.yml" start() { pid=`ps -ef |grep /data/filebeat/filebeat-7.4.2-linux-x86_64/filebeat |grep -v grep |awk ‘{print $2}‘` if [ ! "$pid" ];then echo "Starting filebeat: " nohup $agent $args >/dev/null 2>&1 & if [ $? == ‘0‘ ];then echo "start filebeat ok" else echo "start filebeat failed" fi else echo "filebeat is still running!" exit fi } stop() { echo -n $"Stopping filebeat: " pid=`ps -ef |grep /data/filebeat/filebeat-7.4.2-linux-x86_64/filebeat |grep -v grep |awk ‘{print $2}‘` if [ ! "$pid" ];then echo "filebeat is not running" else kill $pid echo "stop filebeat ok" fi } restart() { stop start } status(){ pid=`ps -ef |grep /data/filebeat/filebeat-7.4.2-linux-x86_64/filebeat |grep -v grep |awk ‘{print $2}‘` if [ ! "$pid" ];then echo "filebeat is not running" else echo "filebeat is running" fi } case "$1" in start) start ;; stop) stop ;; restart) restart ;; status) status ;; *) echo $"Usage: $0 {start|stop|restart|status}" exit 1 esac

注意事项

1.文件授予执行权限

chmod 755 filebeat.service

2.设置开机自启动

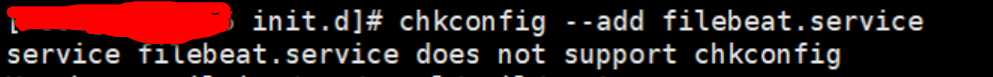

chkconfig --add filebeat.service

上面的服务添加自启动时,会报错

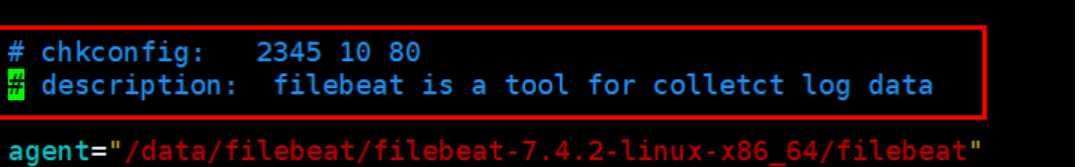

解决方案 在 service file的开头添加以下 两行

即修改完善后的代码如下:

#!/bin/bash # chkconfig: 2345 10 80 # description: filebeat is a tool for colletct log data agent="/data/filebeat/filebeat-7.4.2-linux-x86_64/filebeat" args="-e -c /data/filebeat/filebeat-7.4.2-linux-x86_64/filebeat.yml" start() { pid=`ps -ef |grep /data/filebeat/filebeat-7.4.2-linux-x86_64/filebeat |grep -v grep |awk ‘{print $2}‘` if [ ! "$pid" ];then echo "Starting filebeat: " nohup $agent $args >/dev/null?2>&1 & if [ $? == ‘0‘ ];then echo "start filebeat ok" else echo "start filebeat failed" fi else echo "filebeat is still running!" exit fi } stop() { echo -n $"Stopping filebeat: " pid=`ps -ef |grep /data/filebeat/filebeat-7.4.2-linux-x86_64/filebeat |grep -v grep |awk ‘{print $2}‘` if [ ! "$pid" ];then echo "filebeat is not running" else kill $pid echo "stop filebeat ok" fi } restart() { stop start } status(){ pid=`ps -ef |grep /data/filebeat/filebeat-7.4.2-linux-x86_64/filebeat |grep -v grep |awk ‘{print $2}‘` if [ ! "$pid" ];then echo "filebeat is not running" else echo "filebeat is running" fi } case "$1" in start) start ;; stop) stop ;; restart) restart ;; status) status ;; *) echo $"Usage: $0 {start|stop|restart|status}" exit 1 esac

logstash最常见的运行方式即命令行运行./bin/logstash -f logstash.conf启动,结束命令是ctrl+c。这种方式的优点在于运行方便,缺点是不便于管理,同时如果遇到服务器重启,则维护成本会更高一些,如果在生产环境运行logstash推荐使用服务的方式。以服务的方式启动logstash,同时借助systemctl的特性实现开机自启动。

(1)安装目录下的config中的startup.options需要修改

修改主要项:

1.服务默认启动用户和用户组为logstash;可以修改为root;

2. LS_HOME 参数设置为 logstash的安装目录;例如:/data/logstash/logstash-7.6.0

3. LS_SETTINGS_DIR参数配置为含有logstash.yml的目录;例如:/data/logstash/logstash-7.6.0/config

4. LS_OPTS 参数项,添加 logstash.conf 指定项(-f参数);例如:LS_OPTS="--path.settings ${LS_SETTINGS_DIR} -f /data/logstash/logstash-7.6.0/config/logstash.conf"

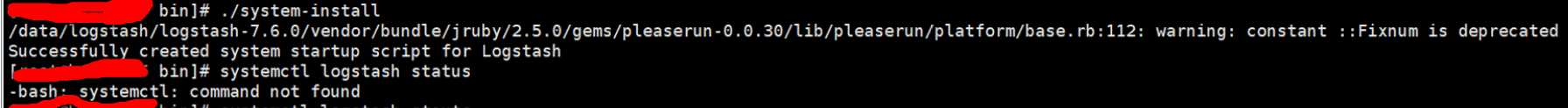

(2)以root身份执行logstash命令创建服务

创建服务的命令

安装目录/bin/system-install

执行创建命令后,在/etc/systemd/system/目录中生成了logstash.service 文件

(3)logstash 服务的管理

设置服务自启动:systemctl enable logstash

启动服务:systemctl start logstash

停止服务:systemctl stop logstash

重启服务:systemctl restart logstash

查看服务状态:systemctl status logstash

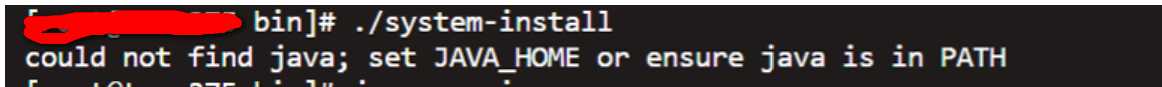

报错提示如下:

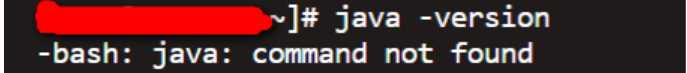

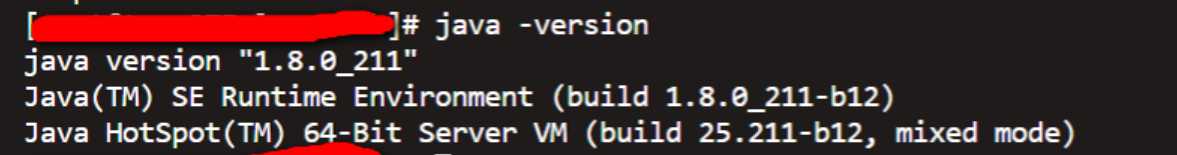

通过查看jave版本,验证是否已安装

上图说明没有安装。则将安装包下载(或上传)至本地,执行安装

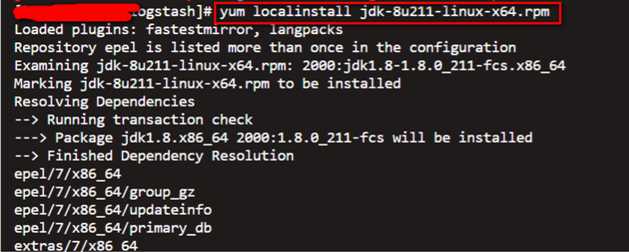

执行安装命令如下:

yum localinstall jdk-8u211-linux-x64.rpm

安装OK,执行验证

问题提示

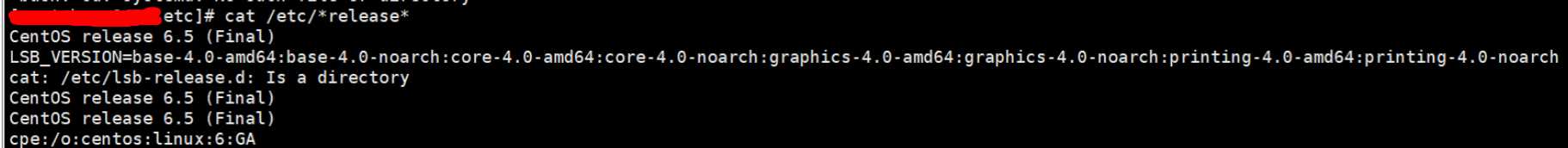

查看Linux系统版本

原因: centos 6.5 不支持 systemctl 管理服务

解决方案

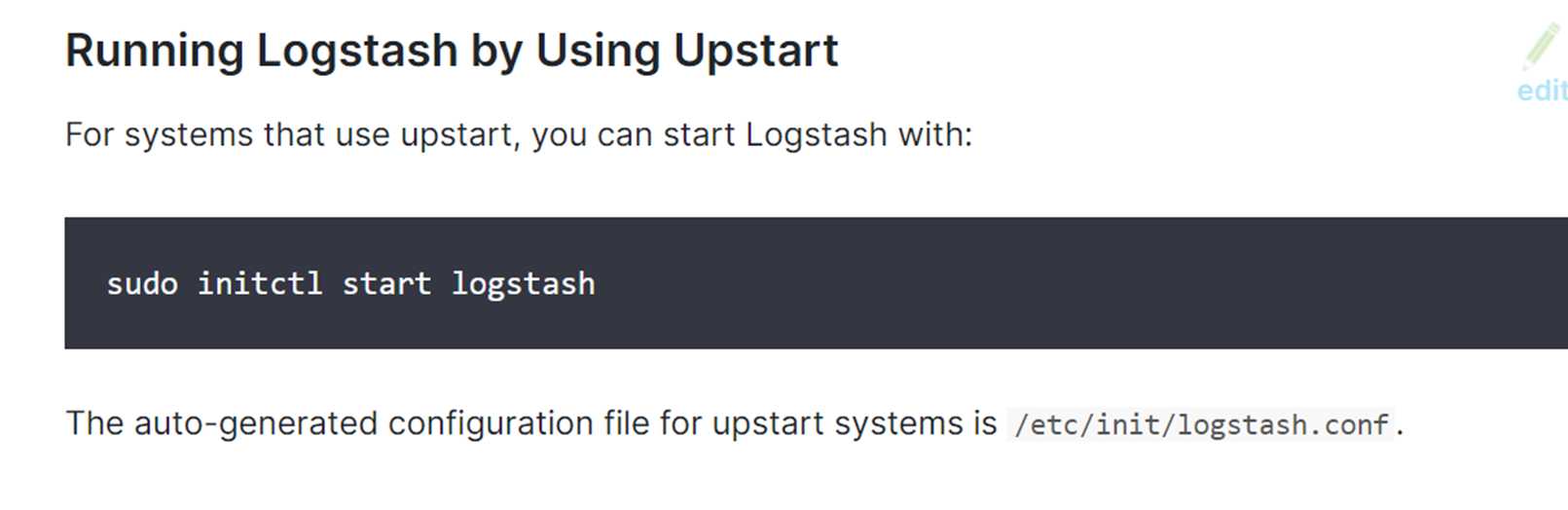

方案验证

相关命令

1.启动命令 initctl start logstash 2.查看状态 initctl status logstash

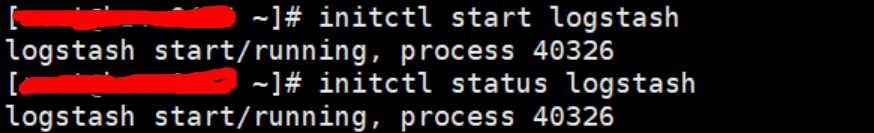

注意事项:

注意以下生成服务的命令还是要执行的

./system-install

否则提示错误

initctl: Unknown job: logstash

"Invalid index name [mysql-error-Test-2019.05.13], must be lowercase", "index_uuid"=>"_na_", "index"=>"mysql-error-Test-2019.05.13"}}}} May 13 13:36:33 hzvm1996 logstash[123194]: [2019-05-13T13:36:33,907][ERROR][logstash.outputs.elasticsearch][main] Could not index event to Elasticsearch. {:status=>400, :action=>["index", {:_id=>nil, :_index=>"mysql-slow-Test-2020.05.13", :routing=>nil, :_type=>"_doc"}, #<LogStash::Event:0x1f0aedbc>], :response=>{"index"=>{"_index"=>"mysql-slow-Test-2019.05.13", "_type"=>"_doc", "_id"=>nil, "status"=>400, "error"=>{"type"=>"invalid_index_name_exception", "reason"=>"Invalid index name [mysql-slow-Test-2019.05.13], must be lowercase", "index_uuid"=>"_na_", "index"=>"mysql-slow-Test-2019.05.13"}}}} May 13 13:38:50 hzvm1996 logstash[123194]: [2019-05-13T13:38:50,765][ERROR][logstash.outputs.elasticsearch][main] Could not index event to Elasticsearch. {:status=>400, :action=>["index", {:_id=>nil, :_index=>"mysql-error-Test-2020.05.13", :routing=>nil, :_type=>"_doc"}, #<LogStash::Event:0x4bdce1db>], :response=>{"index"=>{"_index"=>"mysql-error-Test-2019.05.13", "_type"=>"_doc", "_id"=>nil, "status"=>400, "error"=>{"type"=>"invalid_index_name_exception", "reason"=>"Invalid index name [mysql-error-Test-2019.05.13], must be lowercase", "index_uuid"=>"_na_", "index"=>"mysql-error-Test-2019.05.13"}}}}

[root@testkibaba bin]# ./kibana-plugin install x-pack Plugin installation was unsuccessful due to error "Kibana now contains X-Pack by default, there is no longer any need to install it as it is already present.

说明:新版本的Elasticsearch和Kibana都已经支持自带支持x-pack了,不需要进行显式安装。老版本的需要进行安装。

[root@testkibana bin]# ./kibana

报错

Kibana should not be run as root. Use --allow-root to continue.

添加个专门的账号

useradd qqweixinkibaba --添加账号 chown -R qqweixinkibaba:hzdbakibaba kibana-7.4.2-linux-x86_64 --为新增账号赋予文档目录的权限 su qqweixinkibaba ---切换账号,让后再启动

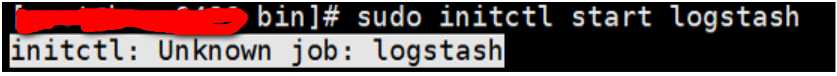

{"statusCode":403,"error":"Forbidden","message":"Forbidden"}

报错原因是:用kibana账号登录kibana报错,改为elastic用户就行了

一个公司会有多个业务线,也可能会有多个研发小组,那么如何实现收集到的数据只对相应的团队开放呢?即实现只能看到自家的数据。一种思路就是搭建多个ELK,一个业务线一个ELK,但这个方法会导致资源浪费和增加运维工作量;另一种思路就是通过多租户来实现。

实现时,应注意以下问题:

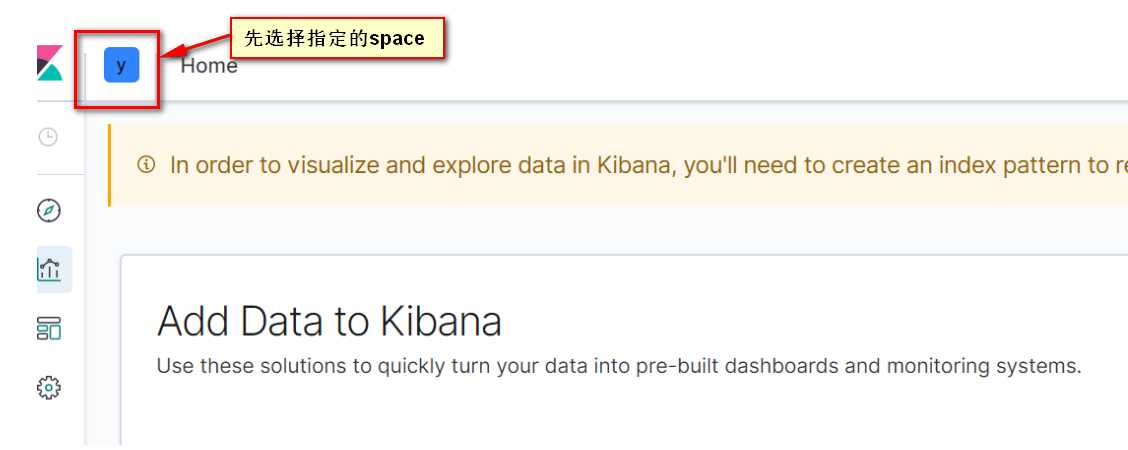

要在 elastic 账号下,转到指定的空间(space)下,再设置 index pattern 。

先创建role(注意与space关联),最后创建user。

1.https://www.jianshu.com/p/0a5acf831409 《ELK应用之Filebeat》

2.http://www.voidcn.com/article/p-nlietamt-zh.html 《filebeat 启动脚本》

3.https://www.bilibili.com/video/av68523257/?redirectFrom=h5 《ElasticTalk #22 Kibana 多租户介绍与实战》

4.https://www.cnblogs.com/shengyang17/p/10597841.html 《ES集群》

5.https://www.jianshu.com/p/54cdddf89989 《Logstash配置以服务方式运行》

6.https://www.elastic.co/guide/en/logstash/current/running-logstash.html#running-logstash-upstart 《Running Logstash as a Service on Debian or RPM》

标签:ons mon 新版本 guide multi 设置 nohup ike The

原文地址:https://www.cnblogs.com/xuliuzai/p/12783278.html