Tags: shadow, port, hiberna, org, table, proc, now, currently, apach

Download the source code from the following link:

https://github.com/keedio/flume-ng-sql-source

At the time of writing, the latest version is 1.5.3.

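Unpack the archive, change into the source directory, and build it. A plain build may fail during the test phase, so skip the tests:

    mvn package -DskipTests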
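After the build, the jar has to be placed in `FLUME_HOME/lib` (for CDH Flume under Cloudera Manager, in `/opt/cloudera/parcels/CDH-xxxx/lib/flume-ng/lib`). The sketch below automates that copy. It assumes Maven's default output directory `target/` and a jar named `flume-ng-sql-source-<version>.jar`; `install_jar` is a hypothetical helper, not part of the project.

```python
import glob
import os
import shutil


def install_jar(project_dir: str, flume_lib_dir: str) -> str:
    """Copy the built flume-ng-sql-source jar into a Flume lib directory.

    Assumes Maven's default output directory (<project_dir>/target) and a jar
    named flume-ng-sql-source-<version>.jar. Returns the destination path.
    """
    pattern = os.path.join(project_dir, "target", "flume-ng-sql-source-*.jar")
    # Only names starting with flume-ng-sql-source- match the pattern;
    # additionally skip sources/javadoc jars if the build produced any.
    jars = [
        path for path in glob.glob(pattern)
        if not path.endswith(("-sources.jar", "-javadoc.jar"))
    ]
    if len(jars) != 1:
        raise FileNotFoundError(f"expected one jar matching {pattern}, found {jars}")
    os.makedirs(flume_lib_dir, exist_ok=True)
    dest = os.path.join(flume_lib_dir, os.path.basename(jars[0]))
    shutil.copy2(jars[0], dest)
    return dest
```

Run from the unpacked source directory, e.g. `install_jar(".", os.path.join(os.environ["FLUME_HOME"], "lib"))`, or pass the CDH parcel's `flume-ng/lib` path instead.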

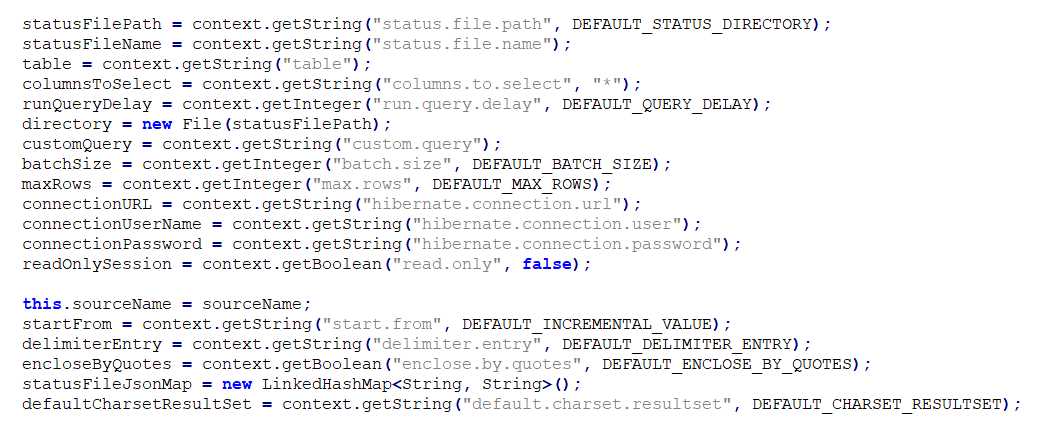

    agent.sources = sql-source
    agent.sinks = k1
    agent.channels = ch

    # Flume source for collecting MySQL data, git repo: https://github.com/keedio/flume-ng-sql-source
    # You have to build it yourself; afterwards, put flume-ng-sql-source-1.x.x.jar into FLUME_HOME/lib.
    # For the CDH Flume managed by Cloudera Manager (CM), put it into /opt/cloudera/parcels/CDH-xxxx/lib/flume-ng/lib instead.
    agent.sources.sql-source.type = org.keedio.flume.source.SQLSource

    # URL to connect to database (currently only mysql is supported)
    # The ?useUnicode=true&characterEncoding=utf-8&useSSL=false parameters must be added
    agent.sources.sql-source.hibernate.connection.url = jdbc:mysql://hostname:3306/yinqing?useUnicode=true&characterEncoding=utf-8&useSSL=false

    # Database connection properties
    agent.sources.sql-source.hibernate.connection.user = root
    agent.sources.sql-source.hibernate.connection.password = password
    agent.sources.sql-source.hibernate.dialect = org.hibernate.dialect.MySQLDialect

    # Put mysql-connector-java-X-bin.jar into FLUME_HOME/lib
    # (for CDH Flume under CM: /opt/cloudera/parcels/CDH-xxxx/lib/flume-ng/lib).
    # Version 5.1.48 is used here (in principle it should work with any MySQL 5.x):
    # wget https://dev.mysql.com/get/Downloads/Connector-J/mysql-connector-java-5.1.48.tar.gz
    # Note: a MySQL driver that is too old fails with:
    # org.hibernate.exception.JDBCConnectionException: Error calling DriverManager#getConnection
    agent.sources.sql-source.hibernate.driver_class = com.mysql.jdbc.Driver
    agent.sources.sql-source.hibernate.connection.autocommit = true

    # Name of the table to collect
    agent.sources.sql-source.table = table_name
    agent.sources.sql-source.columns.to.select = *

    # Query delay: the query is sent every configured number of milliseconds
    agent.sources.sql-source.run.query.delay = 10000

    # Status file is used to save the last read row
    # (it stores Flume's state, because rows are read incrementally)
    agent.sources.sql-source.status.file.path = /var/lib/flume-ng
    agent.sources.sql-source.status.file.name = sql-source.status

    # Kafka sink. This is a cluster setup, so the addresses and ports of the ZooKeeper
    # and Kafka clusters are needed; see the Kafka configuration later on if this is unfamiliar
    agent.sinks.k1.type = org.apache.flume.sink.kafka.KafkaSink
    agent.sinks.k1.topic = yinqing
    agent.sinks.k1.brokerList = kafka-node1:9092,kafka-node2:9092,kafka-node3:9092
    agent.sinks.k1.batchSize = 200
    agent.sinks.k1.requiredAcks = 1
    agent.sinks.k1.serializer.class = kafka.serializer.StringEncoder
    # Set the ZooKeeper port to match your setup; mine is 2180, the usual default is 2181
    agent.sinks.k1.zookeeperConnect = zookeeper-node1:2180,zookeeper-node2:2180,zookeeper-node3:2180

    agent.channels.ch.type = memory
    agent.channels.ch.capacity = 10000
    agent.channels.ch.transactionCapacity = 10000
    agent.channels.ch.keep-alive = 20

    # Bind the source and the sink to the channel
    agent.sources.sql-source.channels = ch
    agent.sinks.k1.channel = ch
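Every key in this file addresses a component by name, and Flume only activates the sources, sinks, and channels listed in `agent.sources`, `agent.sinks`, and `agent.channels`. A misspelled name, such as `agent.sinks.kafkaSink.requiredAcks` when the sink is called `k1`, is therefore, as far as I understand Flume's handling, simply ignored rather than reported. Below is a minimal sketch of a checker for this; `parse_properties` and `undeclared_components` are hypothetical helpers, and the parser assumes plain `key = value` lines without line continuations.

```python
def parse_properties(text: str) -> dict:
    """Minimal .properties parser: 'key = value' lines and '#' comments only."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        # Split on the first '=' only, so values such as JDBC URLs keep their '='.
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip()
    return props


def undeclared_components(props: dict, agent: str = "agent") -> list:
    """Return keys that configure a source/sink/channel not declared for the agent."""
    problems = []
    for kind in ("sources", "sinks", "channels"):
        declared = set(props.get(f"{agent}.{kind}", "").split())
        prefix = f"{agent}.{kind}."
        for key in props:
            if key.startswith(prefix):
                # Component name is the segment right after "<agent>.<kind>."
                name = key[len(prefix):].split(".", 1)[0]
                if name not in declared:
                    problems.append(key)
    return sorted(problems)
```

Usage: `undeclared_components(parse_properties(open("mysql-flume-kafka.conf").read()))` returns an empty list when every configured component is declared.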

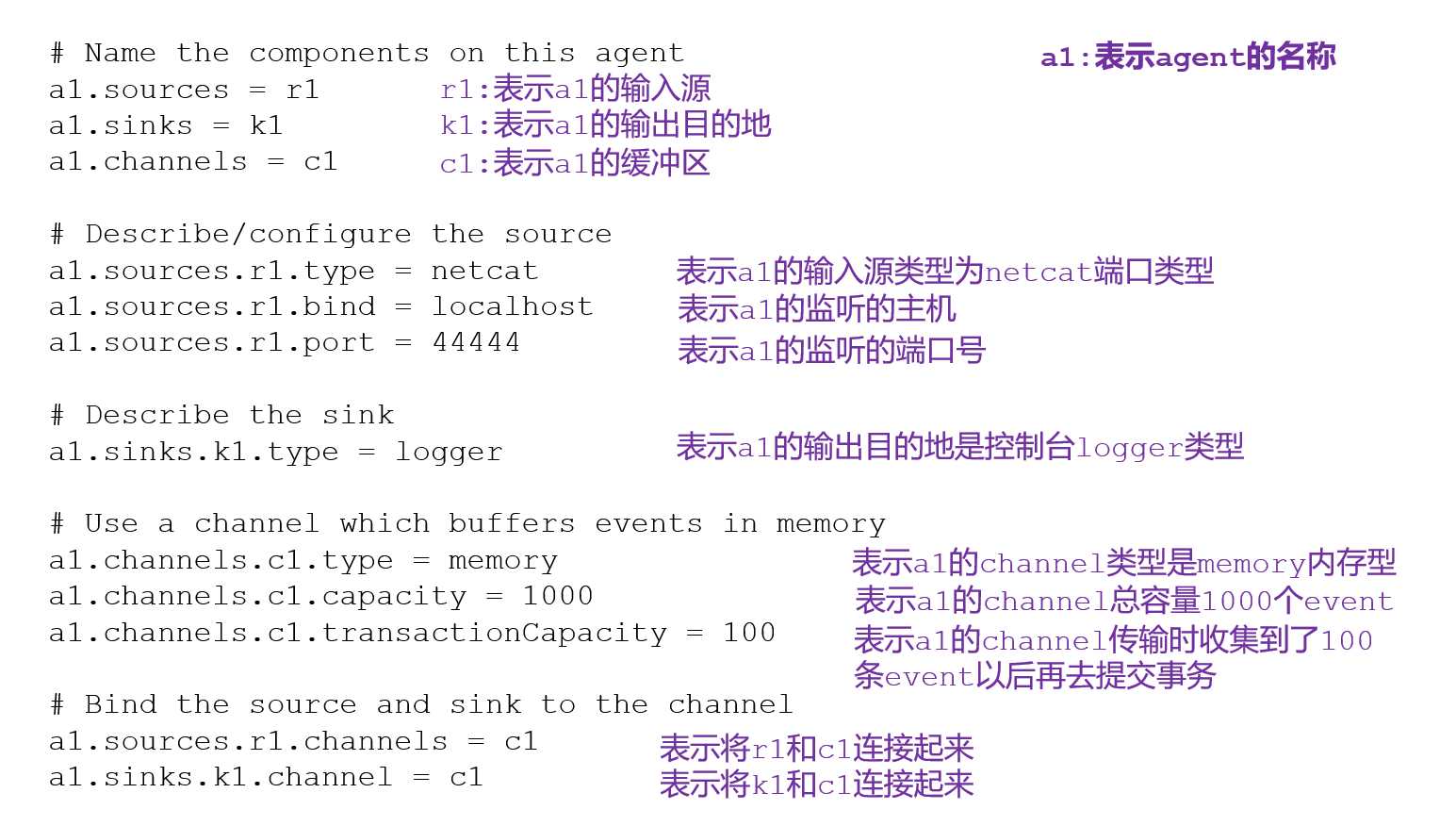

Notes on the conf file

Parameters of the `flume-ng agent` command:

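--conf conf/ : Flume's configuration directory (flume-env.sh, log4j.properties) is conf/.

--name a1 : names the agent a1. The name must match the prefix of the properties in the configuration file; the configuration above uses `agent`, hence `-n agent` in the command below.

--conf-file job/flume-telnet.conf : the configuration file Flume reads on this start is flume-telnet.conf in the job directory.

-Dflume.root.logger=INFO,console : -D sets the flume.root.logger property when Flume starts; here logs are printed to the console at INFO level. Available log levels include DEBUG, INFO, WARN, and ERROR.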
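The options above can be assembled into an argument list, which makes the mapping from each option to its purpose explicit. This is a sketch only: `flume_ng_command` is a hypothetical helper, and the allowed levels are limited to the four listed above.

```python
def flume_ng_command(agent_name: str, conf_dir: str, conf_file: str,
                     log_level: str = "INFO") -> list:
    """Build the argument list for `flume-ng agent` (hypothetical helper)."""
    level = log_level.upper()
    # Restrict to the log4j levels mentioned in the text.
    if level not in {"DEBUG", "INFO", "WARN", "ERROR"}:
        raise ValueError(f"unsupported log level: {log_level}")
    return [
        "flume-ng", "agent",
        "--conf", conf_dir,          # directory with flume-env.sh / log4j.properties
        "--name", agent_name,        # must match the property prefix in conf_file
        "--conf-file", conf_file,    # the agent's properties file
        # -D passes a Java system property; here it sets level and console output
        f"-Dflume.root.logger={level},console",
    ]
```

For this article's setup, `subprocess.run(flume_ng_command("agent", "./", "./mysql-flume-kafka.conf", "DEBUG"), check=True)` would launch the same agent as the startup command.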

Start the Flume agent (`-n`, `-c`, and `-f` are the short forms of `--name`, `--conf`, and `--conf-file`):

    flume-ng agent -n agent -c ./ -f ./mysql-flume-kafka.conf -Dflume.root.logger=DEBUG,console

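When downloading from https://github.com/keedio/flume-ng-sql-source, also pay attention to the version; otherwise Flume reports errors because settings used in the conf file cannot be found.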

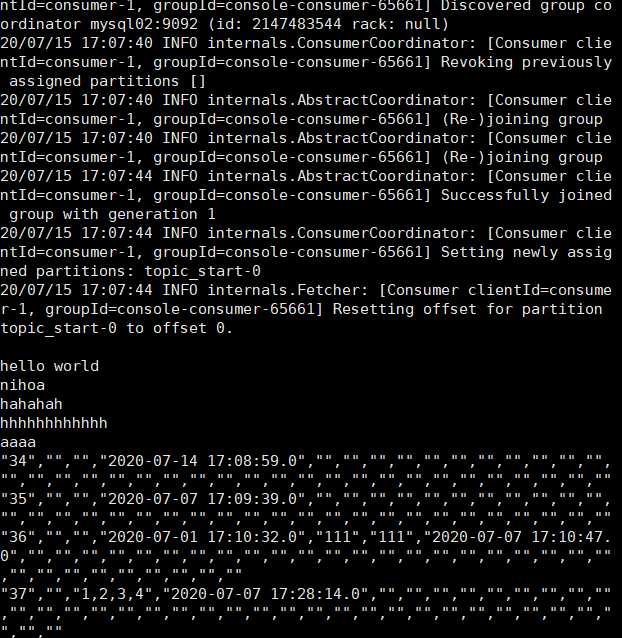

Start a Kafka console consumer on the topic the sink writes to (`yinqing` in the configuration above):

    kafka-console-consumer --bootstrap-server kafka-node1:9092 --from-beginning --topic yinqing

Then insert rows into the monitored MySQL table. Only newly inserted rows are picked up; updates to existing rows are not detected yet.
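The restriction to new rows follows from the incremental design: the source records the last row it has read in the status file and only queries past that position, so a row updated in place never moves beyond the saved position. The sketch below models this with Python's built-in `sqlite3` standing in for MySQL. The `id` column, the status-file format (a single integer), and `poll_new_rows` are illustrative assumptions, not keedio's actual implementation.

```python
import sqlite3
from pathlib import Path


def poll_new_rows(conn: sqlite3.Connection, table: str, status_file: Path) -> list:
    """Return rows with id greater than the last id saved in status_file.

    Hypothetical, simplified model of incremental polling; not keedio's code.
    """
    # Position saved by the previous poll (0 on the first run).
    last_id = int(status_file.read_text()) if status_file.exists() else 0
    # `table` comes from trusted configuration; it cannot be a bound parameter.
    rows = conn.execute(
        f"SELECT id, name FROM {table} WHERE id > ? ORDER BY id", (last_id,)
    ).fetchall()
    if rows:
        # Persist the new position so the next poll starts after it.
        status_file.write_text(str(rows[-1][0]))
    return rows
```

Each call corresponds to one query round (every `run.query.delay` milliseconds in the real source). Updating row 1 after the first poll returns nothing on the next poll, while a newly inserted row 3 is returned.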


Original article: https://www.cnblogs.com/zpan2019/p/13307097.html