
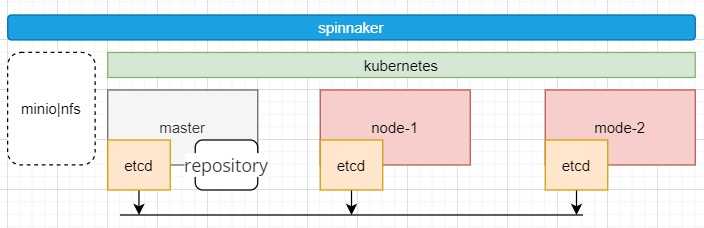

| Node role | Hostname | IP | CPU | RAM | Disk | Kernel | Components |
|---|---|---|---|---|---|---|---|
| master/worker | master | 192.168.23.135 | 2 | 8G | 80G | kernel-lt-4.4.227 | etcd docker flannel kube-scheduler kube-controller-manager kube-apiserver kubelet kube-proxy minio nfs docker repository |
| worker | node-1 | 192.168.23.136 | 2 | 8G | 80G | kernel-lt-4.4.227 | etcd docker flannel kubelet kube-proxy |
| worker | node-2 | 192.168.23.137 | 2 | 8G | 80G | kernel-lt-4.4.227 | etcd docker flannel kubelet kube-proxy |

| Component | Version | Binary download URL |
|---|---|---|
| kubernetes | v1.18.5 | https://dl.k8s.io/v1.18.5/kubernetes-server-linux-amd64.tar.gz |
| docker-ce | 18.03 | https://download.docker.com/linux/static/stable/x86_64/docker-18.03.0-ce.tgz |
| flannel | 0.11 | https://github.com/coreos/flannel/releases/download/v0.11.0/flannel-v0.11.0-linux-amd64.tar.gz |
| etcd | 3.3.10 | https://github.com/etcd-io/etcd/releases/download/v3.3.10/etcd-v3.3.10-linux-amd64.tar.gz |
| minio | | https://dl.min.io/server/minio/release/linux-amd64/minio |
| cfssl | R1.2 (the bundle directory is named cfssl-1.12.0) | https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 |
| kubernetes-dashboard | 2.0.3 | https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.3/aio/deploy/recommended.yaml |
| kernel-lt-4.4.227 | 4.4.227 | https://elrepo.org/linux/kernel/el7/x86_64/RPMS/ |

Baidu Cloud download link for the component bundle:

cd /tmp

[root@master tmp]# ls

k8s-software.tar.gz

tar -xvf k8s-software.tar.gz

ls -l

dr-xr-x--- 2 1001 513 58 Jul 24 18:51 cfssl-1.12.0

-r-xr-x--- 1 1001 513 378880 Jun 26 01:59 codedns.tar.gz

-r-xr-x--- 1 1001 513 7552 Jul 5 18:50 dashboard-v2.0.3.yaml

dr-xr-x--- 2 1001 513 177 Jul 24 18:52 docker-ce-18.03

dr-xr-x--- 2 1001 513 33 Jul 24 18:52 etcd-3.3.10

dr-xr-x--- 2 1001 513 47 Jul 24 18:52 flannel-0.11

-rw-r--r-- 1 root root 1056826368 Jul 25 01:56 k8s-software.tar.gz

-r-xr-x--- 1 1001 513 41209720 Jun 29 10:19 kernel-lt-4.4.227-1.el7.elrepo.x86_64.rpm

-r-xr-x--- 1 1001 513 10711232 Jun 29 10:18 kernel-lt-devel-4.4.227-1.el7.elrepo.x86_64.rpm

dr-xr-x--- 2 1001 513 175 Jul 24 18:52 kubernetes-v.1.18.5

-r-xr-x--- 1 1001 513 4687 Jul 5 19:07 kubernetes-dashboard.yaml

-r-xr-x--- 1 1001 513 227409920 Jul 5 19:07 kubernetesui-dashboard-v2.0.3.tar.gz

-r-xr-x--- 1 1001 513 36961280 Jul 5 19:07 kubernetesui-metrics-scraper-v1.0.4.tar.gz

-r-xr-x--- 1 1001 513 765440 Jun 26 19:31 pause-amd64-3.0.tar.gz

-rw-r--r-- 1 root root 26806784 Jul 25 02:01 registry.tar.gz

systemctl stop firewalld

systemctl disable firewalld

setenforce 0

sed -i.bak 's/SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

swapoff -a

sed -i.bak '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

wget  ## download the kernel RPM packages

yum localinstall -y kernel-lt-*

sed -i 's/^GRUB_DEFAULT=saved/GRUB_DEFAULT=0/' /etc/default/grub

grub2-mkconfig -o /boot/grub2/grub.cfg

systemctl enable rc-local

chmod a+x /etc/rc.d/rc.local

systemctl restart rc-local

cat >> /etc/rc.local << EOF

modprobe ip_vs_rr

modprobe br_netfilter

EOF

cat > /etc/modules-load.d/ipvs.conf << EOF

ip_vs_rr

br_netfilter

EOF

systemctl enable --now systemd-modules-load.service

cat >/etc/sysctl.d/kubernetes.conf <<EOF

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

net.ipv4.ip_forward=1

net.ipv4.tcp_tw_recycle=0

vm.swappiness=0

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_instances=8192

fs.inotify.max_user_watches=1048576

fs.file-max=52706963

fs.nr_open=52706963

net.ipv6.conf.all.disable_ipv6=1

net.netfilter.nf_conntrack_max=2310720

EOF

sysctl -p /etc/sysctl.d/kubernetes.conf

reboot

mkdir -p /opt/{etcd,k8s}

mkdir -p /opt/etcd/{bin,tsl,config}

mkdir -p /opt/k8s/{bin,tsl,config}

cp /tmp/cfssl-1.12.0/cfssl* /usr/sbin/

cd /opt/etcd/tsl/

cfssl print-defaults csr > etcd-ca.json

## Edit etcd-ca.json

cat etcd-ca.json

{

"CN": "etcd",

"hosts": [

"192.168.23.135",

"192.168.23.136",

"192.168.23.137",

"127.0.0.1"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Shenzhen",

"ST": "Nanshan"

}

]

}

## Initialize the CA certificate and key

cfssl gencert -initca etcd-ca.json |cfssljson -bare etcd-ca -

ls *pem

etcd-ca-key.pem etcd-ca.pem

cfssl print-defaults csr > etcd-server-csr.json

cat etcd-server-csr.json

{

"CN": "etcd",

"hosts": [

"192.168.23.135",

"192.168.23.136",

"192.168.23.137",

"127.0.0.1"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Shenzhen",

"ST": "Nanshan"

}

]

}

cfssl print-defaults config > etcd-ca-config.json

cat etcd-ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"etcd": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth"

]

},

"client": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"client auth"

]

}

}

}

}

cfssl gencert -ca=etcd-ca.pem -ca-key=etcd-ca-key.pem -config=etcd-ca-config.json --profile=etcd etcd-server-csr.json |cfssljson -bare etcd-server -

ls etcd*server*pem

etcd-server-key.pem etcd-server.pem
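The server certificate is only valid for the addresses listed under "hosts" in the CSR, so a node IP missing from that list typically surfaces later as a TLS error between etcd members. A minimal sketch of a pre-flight check follows; `hosts_cover` is a hypothetical helper written for this guide, not part of cfssl, and it only does a plain text match on the JSON file.

```shell
#!/usr/bin/env bash
# Hypothetical helper, not part of cfssl: return 0 only if every address given
# after the file name appears as a quoted string in the CSR JSON file.
hosts_cover() {
  local file="$1" ip
  shift
  for ip in "$@"; do
    if ! grep -qF "\"${ip}\"" "$file"; then
      echo "missing host: ${ip}" >&2
      return 1
    fi
  done
}

# Usage in /opt/etcd/tsl/ before running cfssl gencert:
#   hosts_cover etcd-server-csr.json 192.168.23.135 192.168.23.136 192.168.23.137 127.0.0.1 \
#     && echo "all node addresses listed"
```

Because the match includes the surrounding quotes, a prefix such as 192.168.23.13 does not count as covering 192.168.23.135.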

cd /opt/k8s/tsl/

cfssl print-defaults csr > k8s-ca.json

cat k8s-ca.json

{

"CN": "k8s",

"hosts": [

"192.168.23.135",

"192.168.23.136",

"192.168.23.137",

"127.0.0.1"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Shenzhen",

"ST": "Nanshan"

}

]

}

cfssl gencert -initca k8s-ca.json |cfssljson -bare k8s-ca -

cfssl print-defaults config > k8s-ca-config.json

cat k8s-ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"k8s": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth"

]

},

"client": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"client auth"

]

}

}

}

}

cfssl print-defaults csr > k8s-apiserver.json

cat k8s-apiserver.json

{

"CN": "k8s",

"hosts": [

"127.0.0.1",

"192.168.23.135",

"192.168.23.136",

"192.168.23.137",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.k8s",

"kubernetes.default.svc.k8s.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Shenzhen",

"ST": "Nanshan",

"O": "k8s",

"OU": "System"

}

]

}

cfssl gencert -ca=k8s-ca.pem -ca-key=k8s-ca-key.pem -config=k8s-ca-config.json -profile=k8s k8s-apiserver.json |cfssljson -bare k8s-apiserver -

ls k8s-apiserver*pem

k8s-apiserver-key.pem k8s-apiserver.pem

cfssl print-defaults csr > k8s-proxy.json

cat k8s-proxy.json

{

"CN": "k8s",

"hosts": [

"127.0.0.1",

"192.168.23.135",

"192.168.23.136",

"192.168.23.137"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Shenzhen",

"ST": "Nanshan",

"O": "k8s",

"OU": "System"

}

]

}

cfssl gencert -ca=k8s-ca.pem -ca-key=k8s-ca-key.pem -config=k8s-ca-config.json -profile=k8s k8s-proxy.json |cfssljson -bare k8s-proxy -

ls k8s-proxy*pem

k8s-proxy-key.pem k8s-proxy.pem

hostnamectl set-hostname master

cp /tmp/etcd-3.3.10/etcd* /opt/etcd/bin/

cd /opt/etcd/bin/

[root@master bin]# ls

etcd etcdctl

vim /opt/etcd/config/etcd.cfg

#[Member]

ETCD_NAME="etcd01"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.23.135:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.23.135:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.23.135:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.23.135:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.23.135:2380,etcd02=https://192.168.23.136:2380,etcd03=https://192.168.23.137:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

[root@master bin]# cat /opt/etcd/config/etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/opt/etcd/config/etcd.cfg

ExecStart=/opt/etcd/bin/etcd --name=${ETCD_NAME} --data-dir=${ETCD_DATA_DIR} --listen-peer-urls=${ETCD_LISTEN_PEER_URLS} --listen-client-urls=${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 --advertise-client-urls=${ETCD_ADVERTISE_CLIENT_URLS} --initial-advertise-peer-urls=${ETCD_INITIAL_ADVERTISE_PEER_URLS} --initial-cluster=${ETCD_INITIAL_CLUSTER} --initial-cluster-token=${ETCD_INITIAL_CLUSTER_TOKEN} --initial-cluster-state=new --cert-file=/opt/etcd/tsl/etcd-server.pem --key-file=/opt/etcd/tsl/etcd-server-key.pem --peer-cert-file=/opt/etcd/tsl/etcd-server.pem --peer-key-file=/opt/etcd/tsl/etcd-server-key.pem --trusted-ca-file=/opt/etcd/tsl/etcd-ca.pem --peer-trusted-ca-file=/opt/etcd/tsl/etcd-ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

scp -r /opt/etcd/ root@192.168.23.136:/opt/

scp -r /opt/etcd/ root@192.168.23.137:/opt/

ln -s /opt/etcd/config/etcd.service /usr/lib/systemd/system/etcd.service

hostnamectl set-hostname node-1

[root@node-1 ~]# cat /opt/etcd/config/etcd.cfg

#[Member]

ETCD_NAME="etcd02"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.23.136:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.23.136:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.23.136:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.23.136:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.23.135:2380,etcd02=https://192.168.23.136:2380,etcd03=https://192.168.23.137:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

hostnamectl set-hostname node-2

[root@node-2 ~]# cat /opt/etcd/config/etcd.cfg

#[Member]

ETCD_NAME="etcd03"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.23.137:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.23.137:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.23.137:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.23.137:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.23.135:2380,etcd02=https://192.168.23.136:2380,etcd03=https://192.168.23.137:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"
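The three etcd.cfg files differ only in ETCD_NAME and the node's own IP address. As a sketch under that assumption, the file can be rendered from one function instead of being edited by hand on each node; `render_etcd_cfg` is a hypothetical name, and the member list is hard-coded to the three addresses used in this guide.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print an etcd.cfg for one member.
# $1: member name (e.g. etcd02), $2: that node's IP address.
render_etcd_cfg() {
  local name="$1" ip="$2"
  local cluster="etcd01=https://192.168.23.135:2380,etcd02=https://192.168.23.136:2380,etcd03=https://192.168.23.137:2380"
  cat <<EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="${cluster}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Example: print the file for node-1; redirect the output to
# /opt/etcd/config/etcd.cfg on that node.
render_etcd_cfg etcd02 192.168.23.136
```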

ln -s /opt/etcd/config/etcd.service /usr/lib/systemd/system/etcd.service

systemctl daemon-reload

systemctl enable etcd

systemctl start etcd

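# Start the etcd members together: at least two nodes must be started at the same time, because a single node cannot start normally on its own.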
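Since the first member only becomes healthy once a second one has joined, a health check run right after `systemctl start etcd` may fail for a few seconds. One way to handle this is a small retry wrapper; `retry` below is a hypothetical helper, and the attempt count and delay in the usage comment are arbitrary assumptions.

```shell
#!/usr/bin/env bash
# Hypothetical helper: run a command up to $1 times, sleeping $2 seconds
# between attempts; succeed as soon as the command succeeds.
retry() {
  local attempts="$1" delay="$2" i=1
  shift 2
  while [ "$i" -le "$attempts" ]; do
    "$@" && return 0
    if [ "$i" -lt "$attempts" ]; then sleep "$delay"; fi
    i=$((i + 1))
  done
  return 1
}

# Usage on the master once etcd has been started on all three nodes:
#   retry 30 2 /opt/etcd/bin/etcdctl \
#     --ca-file=/opt/etcd/tsl/etcd-ca.pem \
#     --cert-file=/opt/etcd/tsl/etcd-server.pem \
#     --key-file=/opt/etcd/tsl/etcd-server-key.pem \
#     --endpoints="https://192.168.23.135:2379" cluster-health
```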

[root@master bin]# /opt/etcd/bin/etcdctl --ca-file=/opt/etcd/tsl/etcd-ca.pem --cert-file=/opt/etcd/tsl/etcd-server.pem --key-file=/opt/etcd/tsl/etcd-server-key.pem --endpoints="https://192.168.23.135:2379,https://192.168.23.136:2379,https://192.168.23.137:2379" cluster-health

member f605c94d9a81d1 is healthy: got healthy result from https://192.168.23.136:2379

member 1d4ca791a3f37e9a is healthy: got healthy result from https://192.168.23.135:2379

member 50da4142886026dc is healthy: got healthy result from https://192.168.23.137:2379

cluster is healthy

[root@master bin]# /opt/etcd/bin/etcdctl --ca-file=/opt/etcd/tsl/etcd-ca.pem --cert-file=/opt/etcd/tsl/etcd-server.pem --key-file=/opt/etcd/tsl/etcd-server-key.pem --endpoints="https://192.168.23.135:2379,https://192.168.23.136:2379,https://192.168.23.137:2379" member list

f605c94d9a81d1: name=etcd02 peerURLs=https://192.168.23.136:2380 clientURLs=https://192.168.23.136:2379 isLeader=false

1d4ca791a3f37e9a: name=etcd01 peerURLs=https://192.168.23.135:2380 clientURLs=https://192.168.23.135:2379 isLeader=true

50da4142886026dc: name=etcd03 peerURLs=https://192.168.23.137:2380 clientURLs=https://192.168.23.137:2379 isLeader=false

Planned pod network: 172.10.0.0/16

[root@master bin]# /opt/etcd/bin/etcdctl --ca-file=/opt/etcd/tsl/etcd-ca.pem --cert-file=/opt/etcd/tsl/etcd-server.pem --key-file=/opt/etcd/tsl/etcd-server-key.pem --endpoints="https://192.168.23.135:2379,https://192.168.23.136:2379,https://192.168.23.137:2379" set /k8s-pod/network/config '{"Network":"172.10.0.0/16","Backend":{"Type":"vxlan"}}'

{"Network":"172.10.0.0/16","Backend":{"Type":"vxlan"}}

Note: the pod network written here (${CLUSTER_CIDR}) must be a /16 range and must match the --cluster-cidr value of kube-controller-manager.
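The consistency requirement can be checked mechanically before any component is started. The sketch below extracts the "Network" value from the flannel JSON and compares it with the CIDR intended for --cluster-cidr; both function names are hypothetical, and the parsing is a simple sed match that assumes the one-line JSON layout shown in this guide.

```shell
#!/usr/bin/env bash
# Hypothetical helper: pull the "Network" value out of the flannel JSON config.
# $1: JSON string such as {"Network":"172.10.0.0/16","Backend":{"Type":"vxlan"}}
flannel_network() {
  printf '%s\n' "$1" | sed -n 's/.*"Network"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p'
}

# Return 0 when the flannel network in $1 equals the CIDR passed as $2.
cidr_matches() {
  [ "$(flannel_network "$1")" = "$2" ]
}

FLANNEL_JSON='{"Network":"172.10.0.0/16","Backend":{"Type":"vxlan"}}'
if cidr_matches "$FLANNEL_JSON" "172.10.0.0/16"; then
  echo "pod CIDR consistent"
else
  echo "pod CIDR mismatch" >&2
fi
```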

mkdir -p /opt/flannel/{bin,config}

cp /tmp/flannel-0.11/* /opt/flannel/bin/

cd /opt/flannel/bin

[root@master bin]# ls

flanneld mk-docker-opts.sh

vim /opt/flannel/config/flanneld.cfg

FLANNEL_OPTIONS="-etcd-cafile=/opt/etcd/tsl/etcd-ca.pem -etcd-certfile=/opt/etcd/tsl/etcd-server.pem -etcd-keyfile=/opt/etcd/tsl/etcd-server-key.pem -iface=ens33 -etcd-prefix=/k8s-pod/network -etcd-endpoints=https://192.168.23.135:2379,https://192.168.23.136:2379,https://192.168.23.137:2379"

vim /opt/flannel/config/flanneld.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/opt/flannel/config/flanneld.cfg

ExecStart=/opt/flannel/bin/flanneld --ip-masq $FLANNEL_OPTIONS

ExecStartPost=/opt/flannel/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

ln -s /opt/flannel/config/flanneld.service /usr/lib/systemd/system/flanneld.service


scp -r /opt/flannel/ root@192.168.23.136:/opt/  # run on master

scp -r /opt/flannel/ root@192.168.23.137:/opt/  # run on master

ln -s /opt/flannel/config/flanneld.service /usr/lib/systemd/system/flanneld.service

ln -s /opt/flannel/config/flanneld.service /usr/lib/systemd/system/flanneld.service

systemctl daemon-reload

systemctl restart flanneld

systemctl enable flanneld

mkdir -p /opt/docker/config/

cp /tmp/docker-ce-18.03/docker* /usr/sbin/

vim /opt/docker/config/docker.service

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/run/flannel/subnet.env

ExecStart=/usr/sbin/dockerd $DOCKER_NETWORK_OPTIONS

ExecReload=/bin/kill -s HUP $MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

cat > daemon.json <<EOF

{

"registry-mirrors": ["https://wbuj86p5.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

EOF

scp /usr/sbin/docker* root@192.168.23.136:/usr/sbin/

scp /usr/sbin/docker* root@192.168.23.137:/usr/sbin/

scp -r /opt/docker/ root@192.168.23.136:/opt/

scp -r /opt/docker/ root@192.168.23.137:/opt/

mkdir -p /etc/docker/

ln -s /opt/docker/config/daemon.json /etc/docker/daemon.json

ln -s /opt/docker/config/docker.service /usr/lib/systemd/system/docker.service

systemctl daemon-reload

systemctl restart docker

systemctl enable docker

[root@node-1 ~]# ifconfig flannel.1|grep inet

inet 172.10.14.0 netmask 255.255.255.255 broadcast 0.0.0.0

[root@node-1 ~]# ifconfig docker0|grep inet

inet 172.10.14.1 netmask 255.255.255.0 broadcast 172.10.14.255

[root@node-1 ~]# docker info|egrep 'Cgroup|Network'

Cgroup Driver: systemd

Network: bridge host macvlan null overlay

All non-k8s components are now installed!

Check that every service is running normally:

[root@node-1 ~]# systemctl status etcd flanneld docker|grep running

Active: active (running) since Thu 2020-07-23 15:54:10 CST; 7min ago

Active: active (running) since Thu 2020-07-23 15:54:10 CST; 7min ago

Active: active (running) since Thu 2020-07-23 15:54:11 CST; 7min ago

[root@master ~]# systemctl status etcd flanneld docker|grep running

Active: active (running) since Thu 2020-07-23 15:54:08 CST; 8min ago

Active: active (running) since Thu 2020-07-23 15:54:08 CST; 8min ago

Active: active (running) since Thu 2020-07-23 15:54:09 CST; 8min ago

[root@node-2 ~]# systemctl status etcd flanneld docker|grep running

Active: active (running) since Thu 2020-07-23 15:54:08 CST; 8min ago

Active: active (running) since Thu 2020-07-23 15:54:08 CST; 8min ago

Active: active (running) since Thu 2020-07-23 15:54:09 CST; 8min ago

The Kubernetes master node runs the following components:

kube-apiserver

kube-scheduler

kube-controller-manager

kube-scheduler and kube-controller-manager can run in cluster mode: leader election picks one working process, and the other processes stay blocked on standby.

cd /opt/k8s/config/

[root@master config]# head -c 16 /dev/urandom | od -An -t x | tr -d ' '

2c1e270afcd5440611fd76ee3faab593

vim k8s-token.csv

2c1e270afcd5440611fd76ee3faab593,kubelet-bootstrap,10001,"system:kubelet-bootstrap"
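Each line of the token file has the form token,user,uid,"group". As a sketch, the two manual steps (generate the token, then type it into the file) can be combined; `gen_token` and `token_csv_line` are hypothetical helper names, while the user, uid and group values are the ones used in this guide.

```shell
#!/usr/bin/env bash
# Generate a 32-character hex token from 16 random bytes.
gen_token() {
  head -c 16 /dev/urandom | od -An -t x | tr -d ' \n'
}

# Print one token-file line for the token in $1: token,user,uid,"group".
token_csv_line() {
  printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$1"
}

TOKEN="$(gen_token)"
# On the master, redirect this to /opt/k8s/config/k8s-token.csv.
token_csv_line "$TOKEN"
```

The same token value has to be reused later as BOOTSTRAP_TOKEN when the bootstrap kubeconfig is generated.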

vim kube-apiserver.cfg

KUBE_APISERVER_OPTS="--logtostderr=true --v=4 --etcd-servers=https://192.168.23.135:2379,https://192.168.23.136:2379,https://192.168.23.137:2379 --bind-address=192.168.23.135 --secure-port=6443 --advertise-address=192.168.23.135 --allow-privileged=true --service-cluster-ip-range=10.0.0.0/24 --enable-admission-plugins=NamespaceLifecycle,LimitRanger,SecurityContextDeny,ServiceAccount,ResourceQuota,NodeRestriction --authorization-mode=RBAC,Node --enable-bootstrap-token-auth --token-auth-file=/opt/k8s/config/k8s-token.csv --service-node-port-range=30000-50000 --tls-cert-file=/opt/k8s/tsl/k8s-apiserver.pem --tls-private-key-file=/opt/k8s/tsl/k8s-apiserver-key.pem --client-ca-file=/opt/k8s/tsl/k8s-ca.pem --service-account-key-file=/opt/k8s/tsl/k8s-ca-key.pem --etcd-cafile=/opt/etcd/tsl/etcd-ca.pem --etcd-certfile=/opt/etcd/tsl/etcd-server.pem --etcd-keyfile=/opt/etcd/tsl/etcd-server-key.pem"

vim kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/k8s/config/kube-apiserver.cfg

ExecStart=/opt/k8s/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

cp /tmp/kubernetes-v.1.18.5/kube-apiserver /opt/k8s/bin/

ln -s /opt/k8s/config/kube-apiserver.service /usr/lib/systemd/system/kube-apiserver.service

systemctl daemon-reload

systemctl enable kube-apiserver

systemctl restart kube-apiserver

PS: if an error occurs, resolve it before deploying the next component.

[root@master config]# systemctl status kube-apiserver

● kube-apiserver.service - Kubernetes API Server

Loaded: loaded (/opt/k8s/config/kube-apiserver.service; enabled; vendor preset: disabled)

Active: active (running) since Thu 2020-07-23 17:07:25 CST; 5min ago

Docs: https://github.com/kubernetes/kubernetes

Main PID: 4911 (kube-apiserver)

CGroup: /system.slice/kube-apiserver.service

└─4911 /opt/k8s/bin/kube-apiserver --logtostderr=true --v=4 --etcd-servers=https://192.168.23.135:2379,https://192.168.23.136:2379,https://192.168.23.137:2379 --bind-addre...

vim kube-scheduler.cfg

KUBE_SCHEDULER_OPTS="--logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect"

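--address: if set to 127.0.0.1, kube-scheduler serves plain-HTTP /metrics requests on 127.0.0.1:10251; the options above do not set this flag. The scheduler's HTTPS endpoint is a separate secure port (10259 by default in v1.18).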

vim kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/k8s/config/kube-scheduler.cfg

ExecStart=/opt/k8s/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

cp /tmp/kubernetes-v.1.18.5/kube-scheduler /opt/k8s/bin/

ln -s /opt/k8s/config/kube-scheduler.service /usr/lib/systemd/system/kube-scheduler.service

systemctl daemon-reload

systemctl start kube-scheduler

systemctl enable kube-scheduler

[root@master config]# systemctl status kube-scheduler

● kube-scheduler.service - Kubernetes Scheduler

Loaded: loaded (/opt/k8s/config/kube-scheduler.service; enabled; vendor preset: disabled)

Active: active (running) since Thu 2020-07-23 17:21:01 CST; 32s ago

Docs: https://github.com/kubernetes/kubernetes

Main PID: 6008 (kube-scheduler)

CGroup: /system.slice/kube-scheduler.service

└─6008 /opt/k8s/bin/kube-scheduler --logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect

vim kube-controller-manager.cfg

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect=true --address=127.0.0.1 --service-cluster-ip-range=10.0.0.0/24 --cluster-name=kubernetes --cluster-signing-cert-file=/opt/k8s/tsl/k8s-ca.pem --cluster-signing-key-file=/opt/k8s/tsl/k8s-ca-key.pem --root-ca-file=/opt/k8s/tsl/k8s-ca.pem --service-account-private-key-file=/opt/k8s/tsl/k8s-ca-key.pem"

vim kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/k8s/config/kube-controller-manager.cfg

ExecStart=/opt/k8s/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

cp /tmp/kubernetes-v.1.18.5/kube-controller-manager /opt/k8s/bin/

ln -s /opt/k8s/config/kube-controller-manager.service /usr/lib/systemd/system/kube-controller-manager.service

systemctl daemon-reload

systemctl start kube-controller-manager

systemctl enable kube-controller-manager

[root@master config]# systemctl status kube-controller-manager

● kube-controller-manager.service - Kubernetes Controller Manager

Loaded: loaded (/opt/k8s/config/kube-controller-manager.service; enabled; vendor preset: disabled)

Active: active (running) since Thu 2020-07-23 17:45:16 CST; 30s ago

Docs: https://github.com/kubernetes/kubernetes

Main PID: 7891 (kube-controller)

CGroup: /system.slice/kube-controller-manager.service

└─7891 /opt/k8s/bin/kube-controller-manager --logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect=true --address=127.0...

cp /tmp/kubernetes-v.1.18.5/kubectl /usr/sbin/

[root@master config]# kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-2 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

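PS: the master components are now deployed. Rebooting the system several times to confirm that the services come back up is recommended; fix any failures before deploying the node components.

Kubernetes worker nodes run the following components: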

kubelet

kube-proxy

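kubelet runs on every worker node. It receives requests from kube-apiserver, manages Pod containers, and executes interactive commands such as exec, run and logs.

On startup, kubelet automatically registers the node with kube-apiserver; its built-in cAdvisor collects and monitors the node's resource usage.

For security, only the HTTPS secure port should accept requests, with every request authenticated and authorized and unauthorized access (for example from apiserver or heapster) rejected. Note that the kubelet.env below still enables readOnlyPort 10255 and anonymous authentication, which relaxes this.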

mkdir -p /opt/k8s-node/{bin,tsl,config}

cd /opt/k8s-node/config/

export BOOTSTRAP_TOKEN=2c1e270afcd5440611fd76ee3faab593

export KUBE_APISERVER="https://192.168.23.135:6443"

kubectl config set-cluster kubernetes --certificate-authority=/opt/k8s/tsl/k8s-ca.pem --embed-certs=true --server=${KUBE_APISERVER} --kubeconfig=./bootstrap.kubeconfig

kubectl config set-credentials kubelet-bootstrap --token=${BOOTSTRAP_TOKEN} --kubeconfig=./bootstrap.kubeconfig

kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap --kubeconfig=./bootstrap.kubeconfig

kubectl config use-context default --kubeconfig=./bootstrap.kubeconfig

kubectl config set-cluster kubernetes --certificate-authority=/opt/k8s/tsl/k8s-ca.pem --embed-certs=true --server=${KUBE_APISERVER} --kubeconfig=./kubelet.kubeconfig

kubectl config set-credentials kubelet --token=${BOOTSTRAP_TOKEN} --kubeconfig=./kubelet.kubeconfig

kubectl config set-context default --cluster=kubernetes --user=kubelet --kubeconfig=./kubelet.kubeconfig

kubectl config use-context default --kubeconfig=./kubelet.kubeconfig

cd /opt/k8s-node/config

vim kubelet.env

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: 192.168.23.136

port: 10250

readOnlyPort: 10255

cgroupDriver: systemd

clusterDomain: k8s.local.

failSwapOn: false

authentication:

  anonymous:

    enabled: true

PS: cgroupDriver must match the cgroup driver configured for Docker; otherwise the kubelet process exits after its CSR is approved.
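A quick way to catch a mismatch before starting kubelet is to compare the two values directly. The sketch below assumes the `docker info` output format shown earlier ("Cgroup Driver: systemd") and a top-level cgroupDriver key in the kubelet config file; both function names are hypothetical.

```shell
#!/usr/bin/env bash
# Read `docker info` output on stdin and print the cgroup driver name.
docker_cgroup_driver() {
  sed -n 's/^[[:space:]]*Cgroup Driver:[[:space:]]*//p'
}

# Print the cgroupDriver value from the KubeletConfiguration file given as $1.
kubelet_cgroup_driver() {
  sed -n 's/^cgroupDriver:[[:space:]]*//p' "$1"
}

# Usage on a node (assumes docker is running and the paths from this guide):
#   d="$(docker info 2>/dev/null | docker_cgroup_driver)"
#   k="$(kubelet_cgroup_driver /opt/k8s-node/config/kubelet.env)"
#   if [ "$d" = "$k" ]; then echo "cgroup drivers match: $d"
#   else echo "mismatch: docker=$d kubelet=$k" >&2; fi
```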

vim kubelet.cfg

KUBELET_OPTS="--logtostderr=true --v=4 --hostname-override=node-1 --kubeconfig=/opt/k8s-node/config/kubelet.kubeconfig --bootstrap-kubeconfig=/opt/k8s-node/config/bootstrap.kubeconfig --config=/opt/k8s-node/config/kubelet.env --cert-dir=/opt/k8s-node/tsl/ --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

vim kubelet.service

[Unit]

Description=Kubernetes Kubelet

Requires=docker.service

After=docker.service

[Service]

EnvironmentFile=/opt/k8s-node/config/kubelet.cfg

ExecStart=/opt/k8s-node/bin/kubelet $KUBELET_OPTS

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target

kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

cp /tmp/kubernetes-v.1.18.5/kubelet /opt/k8s-node/bin/

scp -r /opt/k8s-node/ root@192.168.23.136:/opt/

scp -r /opt/k8s-node/ root@192.168.23.137:/opt/

ln -s /opt/k8s-node/config/kubelet.service /usr/lib/systemd/system/kubelet.service

systemctl daemon-reload

systemctl start kubelet

systemctl enable kubelet

[root@master config]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-OcSB30Vo3Gge_tX3OM5uuINwkkCYgzaW0y5FcVZGiRI 2m20s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

node-csr-bG5qkwBn7kllVT_E21pV-gDZ35hlEvy6q_16PWXSr00 64s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

kubectl certificate approve node-csr-OcSB30Vo3Gge_tX3OM5uuINwkkCYgzaW0y5FcVZGiRI

kubectl certificate approve node-csr-bG5qkwBn7kllVT_E21pV-gDZ35hlEvy6q_16PWXSr00

[root@master config]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-OcSB30Vo3Gge_tX3OM5uuINwkkCYgzaW0y5FcVZGiRI 4m41s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued

node-csr-bG5qkwBn7kllVT_E21pV-gDZ35hlEvy6q_16PWXSr00 3m25s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued

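- The CSR is done once its condition becomes Approved,Issued.

- Requesting User: the user who submitted the CSR; kube-apiserver authenticates and authorizes it.

- Subject: the certificate information to be signed.

- The certificate's CN is system:node:<nodeName> (for example system:node:kube-node2) and its Organization is system:nodes; the Node authorization mode of kube-apiserver grants the corresponding permissions to this certificate.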
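With more than a couple of nodes, copying each CSR name by hand gets tedious. A sketch that filters the `kubectl get csr` listing for Pending requests and approves them follows; `pending_csrs` and `approve_pending` are hypothetical helpers, and approving everything that is pending is a trust decision that only suits a controlled environment like this one.

```shell
#!/usr/bin/env bash
# Read `kubectl get csr` output on stdin and print the names of Pending requests.
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Approve every pending CSR; call this only where kubectl is configured.
approve_pending() {
  kubectl get csr | pending_csrs | while read -r name; do
    kubectl certificate approve "$name"
  done
}

# Dry run against a sample in the same column layout (hypothetical names):
pending_csrs <<'EOF'
NAME           AGE    SIGNERNAME                                    REQUESTOR           CONDITION
node-csr-aaa   2m20s  kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   Pending
node-csr-bbb   64s    kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   Approved,Issued
EOF
```

The dry run only parses text; `approve_pending` is defined but not called, so nothing is approved until it is invoked on the master.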

[root@master ~]# kubectl get node,cs

NAME STATUS ROLES AGE VERSION

node/node-1 Ready <none> 40m v1.18.5

node/node-2 Ready <none> 42m v1.18.5

NAME STATUS MESSAGE ERROR

componentstatus/controller-manager Healthy ok

componentstatus/scheduler Healthy ok

componentstatus/etcd-2 Healthy {"health":"true"}

componentstatus/etcd-1 Healthy {"health":"true"}

componentstatus/etcd-0 Healthy {"health":"true"}

kube-proxy runs on every node. It watches the apiserver for changes to Services and Endpoints and creates routing rules to load-balance service traffic.

cd /opt/k8s-node/config/

export BOOTSTRAP_TOKEN=2c1e270afcd5440611fd76ee3faab593

export KUBE_APISERVER="https://192.168.23.135:6443"

kubectl config set-cluster kubernetes --certificate-authority=/opt/k8s/tsl/k8s-ca.pem --embed-certs=true --server=${KUBE_APISERVER} --kubeconfig=./kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy --client-certificate=/opt/k8s/tsl/k8s-proxy.pem --client-key=/opt/k8s/tsl/k8s-proxy-key.pem --embed-certs=true --kubeconfig=./kube-proxy.kubeconfig

kubectl config set-context default --cluster=kubernetes --user=kube-proxy --kubeconfig=./kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=./kube-proxy.kubeconfig

vim kube-proxy.cfg

KUBE_PROXY_OPTS="--logtostderr=true --v=4 --hostname-override=node-1 --cluster-cidr=172.10.0.0/16 --kubeconfig=/opt/k8s-node/config/kube-proxy.kubeconfig"

--cluster-cidr: the pod network CIDR of the cluster (172.10.0.0/16, as planned for flannel), not the service range 10.0.0.0/24.

vim kube-proxy.service

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=/opt/k8s-node/config/kube-proxy.cfg

ExecStart=/opt/k8s-node/bin/kube-proxy $KUBE_PROXY_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

cp /tmp/kubernetes-v.1.18.5/kube-proxy /opt/k8s-node/bin/

scp /opt/k8s-node/bin/kube-proxy root@192.168.23.136:/opt/k8s-node/bin/

scp /opt/k8s-node/bin/kube-proxy root@192.168.23.137:/opt/k8s-node/bin/

scp /opt/k8s-node/config/kube-proxy.* root@192.168.23.136:/opt/k8s-node/config/

scp /opt/k8s-node/config/kube-proxy.* root@192.168.23.137:/opt/k8s-node/config/

ln -s /opt/k8s-node/config/kube-proxy.service /usr/lib/systemd/system/kube-proxy.service

systemctl daemon-reload

systemctl enable kube-proxy

systemctl restart kube-proxy

[root@master config]# systemctl status kube-proxy

● kube-proxy.service - Kubernetes Proxy

Loaded: loaded (/opt/k8s-node/config/kube-proxy.service; disabled; vendor preset: disabled)

Active: active (running) since Fri 2020-07-24 07:16:43 CST; 1min 31s ago

Hint: Some lines were ellipsized, use -l to show in full.

[root@node-1 k8s-node]# systemctl status kube-proxy

● kube-proxy.service - Kubernetes Proxy

Loaded: loaded (/opt/k8s-node/config/kube-proxy.service; enabled; vendor preset: disabled)

Active: active (running) since Fri 2020-07-24 06:18:44 CST; 58min ago

Main PID: 32609 (kube-proxy)

[root@node-2 ~]# systemctl status kube-proxy

● kube-proxy.service - Kubernetes Proxy

Loaded: loaded (/opt/k8s-node/config/kube-proxy.service; enabled; vendor preset: disabled)

Active: active (running) since Fri 2020-07-24 06:21:04 CST; 55min ago

Main PID: 32682 (kube-proxy)

Tasks: 7

Memory: 16.5M

CGroup: /system.slice/kube-proxy.service

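PS: the K8S cluster deployment is now complete. Rebooting the servers several times to verify the cluster services is recommended.

Offline binary installation of Kubernetes v1.18.5 for a production CI/CD environment [Part 1: Environment deployment]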


Original article: https://www.cnblogs.com/lemanlai/p/13376441.html