
I. Hadoop Cluster Installation

1. Environment preparation

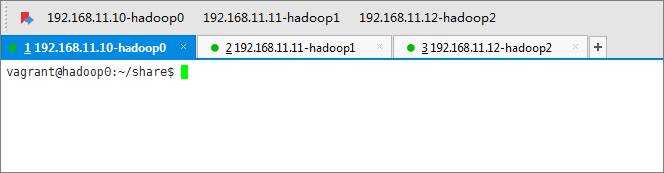

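(1) Prepare three machines: hadoop0 (192.168.11.10), hadoop1 (192.168.11.11), hadoop2 (192.168.11.12)

(2) On each machine, install Java and set up SSH between the nodes (passwordless SSH login; firewall disabled)

2. Download the Hadoop package and extract it to the target directory

3. Edit the Hadoop configuration files

(1) hadoop-env.sh: add the Java environment variable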
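The SSH step can be sketched roughly as follows. This is a minimal sketch, not the author's exact procedure: it assumes the login user is vagrant (inferred from the paths used later in this post), that OpenSSH's ssh-keygen and ssh-copy-id are available, and the function names hosts_entries and setup_passwordless_ssh are hypothetical.

```shell
# Hostnames and IPs from step (1), as "name:ip" pairs.
NODES="hadoop0:192.168.11.10 hadoop1:192.168.11.11 hadoop2:192.168.11.12"

# Print the /etc/hosts lines for the cluster ("ip name", one per line).
hosts_entries() {
  local node
  for node in $NODES; do
    printf '%s %s\n' "${node#*:}" "${node%%:*}"
  done
}

# Create a key pair if none exists, then push the public key to every node.
# Assumption: the remote user is "vagrant"; ssh-copy-id prompts for the
# password once per host.
setup_passwordless_ssh() {
  local node
  mkdir -p "$HOME/.ssh" && chmod 700 "$HOME/.ssh"
  [ -f "$HOME/.ssh/id_rsa" ] || ssh-keygen -t rsa -N "" -f "$HOME/.ssh/id_rsa"
  for node in $NODES; do
    ssh-copy-id "vagrant@${node%%:*}" || return 1
  done
}
```

One way to use it: run `hosts_entries | sudo tee -a /etc/hosts` on each machine, then call `setup_passwordless_ssh` on hadoop0. The command for disabling the firewall depends on the distribution, which the post does not name (for example `systemctl stop firewalld` on CentOS 7, or `ufw disable` on Ubuntu).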
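A minimal sketch of this step, assuming version 2.9.2 and the /home/vagrant/share install location that appear in the configuration paths later in this post. The Apache archive URL is an assumption about where the tarball was fetched from, and fetch_hadoop is a hypothetical helper name.

```shell
# Version and install location inferred from the paths used in this post.
HADOOP_VERSION="2.9.2"
INSTALL_DIR="/home/vagrant/share"
# Assumption: the Apache archive; any mirror carrying this release works too.
HADOOP_URL="https://archive.apache.org/dist/hadoop/common/hadoop-${HADOOP_VERSION}/hadoop-${HADOOP_VERSION}.tar.gz"

# Download the tarball and unpack it into $INSTALL_DIR/hadoop-$HADOOP_VERSION.
fetch_hadoop() {
  local tarball="/tmp/hadoop-${HADOOP_VERSION}.tar.gz"
  wget -O "$tarball" "$HADOOP_URL" || return 1
  mkdir -p "$INSTALL_DIR" || return 1
  tar -xzf "$tarball" -C "$INSTALL_DIR"
}
```

Calling `fetch_hadoop` on each machine (or once, followed by a copy to the others) should leave the distribution under /home/vagrant/share/hadoop-2.9.2.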

export JAVA_HOME=/home/vagrant/share/jdk1.8.0_211

(2) core-site.xml

<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://hadoop0:9000</value>
  </property>
  <property>
    <name>io.file.buffer.size</name>
    <value>131072</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>file:/home/vagrant/share/hadoop-2.9.2/tmp</value>
  </property>
  <property>
    <name>hadoop.proxyuser.root.hosts</name>
    <value>*</value>
  </property>
  <property>
    <name>hadoop.proxyuser.root.groups</name>
    <value>*</value>
  </property>
</configuration>

(3) hdfs-site.xml

<configuration>
  <property>
    <name>dfs.namenode.secondary.http-address</name>
    <value>hadoop0:9001</value>
  </property>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file:/home/vagrant/share/hadoop-2.9.2/dfs/name</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file:/home/vagrant/share/hadoop-2.9.2/dfs/data</value>
  </property>
  <property>
    <name>dfs.replication</name>
    <value>2</value>
  </property>
  <property>
    <name>dfs.webhdfs.enabled</name>
    <value>true</value>
  </property>
  <property>
    <name>dfs.permissions</name>
    <value>false</value>
  </property>
  <property>
    <name>dfs.web.ugi</name>
    <value>supergroup</value>
  </property>
</configuration>

(4) mapred-site.xml

<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.address</name>
    <value>hadoop0:10020</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.webapp.address</name>
    <value>hadoop0:19888</value>
  </property>
</configuration>

(5) yarn-site.xml

<configuration>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <property>
    <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>
    <value>org.apache.hadoop.mapred.ShuffleHandler</value>
  </property>
  <property>
    <name>yarn.resourcemanager.address</name>
    <value>hadoop0:8032</value>
  </property>
  <property>
    <name>yarn.resourcemanager.scheduler.address</name>
    <value>hadoop0:8030</value>
  </property>
  <property>
    <name>yarn.resourcemanager.resource-tracker.address</name>
    <value>hadoop0:8031</value>
  </property>
  <property>
    <name>yarn.resourcemanager.admin.address</name>
    <value>hadoop0:8033</value>
  </property>
  <property>
    <name>yarn.resourcemanager.webapp.address</name>
    <value>hadoop0:8088</value>
  </property>
</configuration>

(6) slaves

hadoop1

hadoop2

Once the configuration files above are complete, copy them to the other two machines.
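The copy step might look like the sketch below. It assumes the same user and the same install path (/home/vagrant/share/hadoop-2.9.2, taken from the configuration above) on every machine, that the files live in the standard Hadoop 2.x etc/hadoop directory, and that passwordless SSH is already in place. sync_config and DRY_RUN are hypothetical names introduced for illustration.

```shell
# Install path from the configuration above; hostnames from the slaves file.
HADOOP_HOME="/home/vagrant/share/hadoop-2.9.2"
WORKERS="hadoop1 hadoop2"

# Copy etc/hadoop to every worker. With DRY_RUN=1 the scp commands are only
# printed, which is a cheap way to check paths before touching the machines.
sync_config() {
  local host
  for host in $WORKERS; do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "scp -r $HADOOP_HOME/etc/hadoop $host:$HADOOP_HOME/etc/"
    else
      scp -r "$HADOOP_HOME/etc/hadoop" "$host:$HADOOP_HOME/etc/" || return 1
    fi
  done
}
```

Run `sync_config` on hadoop0 after every configuration change so that all three nodes stay identical.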

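4. Start the Hadoop cluster

(1) Format the NameNode: ./bin/hadoop namenode -format (in Hadoop 2.x this form is deprecated in favor of ./bin/hdfs namenode -format, but it still works)

(2) Start Hadoop: ./sbin/start-all.sh

(3) Run jps on each machine to check its processes

- Master node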

NameNode

SecondaryNameNode

ResourceManager

- Slave nodes

DataNode

NodeManager
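The jps check can be automated with a small helper. This is a sketch only: the function name missing_daemons and the role names "master" and "worker" are hypothetical, and the expected daemon lists are the ones given above.

```shell
# Print each daemon expected for a role ("master" or "worker") that does not
# appear in the jps output passed as the second argument.
missing_daemons() {
  local role="$1"
  local jps_output="$2"
  local expected daemon
  case "$role" in
    master) expected="NameNode SecondaryNameNode ResourceManager" ;;
    worker) expected="DataNode NodeManager" ;;
    *) echo "unknown role: $role" >&2; return 2 ;;
  esac
  for daemon in $expected; do
    # jps prints "<pid> <name>"; compare the whole name field so that
    # SecondaryNameNode is not mistaken for NameNode.
    printf '%s\n' "$jps_output" \
      | awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }' \
      || echo "$daemon"
  done
}
```

For example, `missing_daemons master "$(jps)"` on hadoop0 prints nothing when all three master daemons are up. For the other nodes, something like `missing_daemons worker "$(ssh hadoop1 jps)"` may work, provided jps is on the PATH of non-interactive SSH sessions.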

5. Problems

(1) tmp/nm-local-dir is not a valid path. Path should be with file scheme or without scheme

Fix: use Hadoop's default tmp directory, that is, drop the custom hadoop.tmp.dir property from core-site.xml.
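To confirm that a node really is back on the default tmp directory, a check along these lines may help. It is a sketch under assumptions: has_custom_tmp_dir is a hypothetical helper, and it only looks for an explicit hadoop.tmp.dir entry in the file it is given.

```shell
# Succeed if the given core-site.xml still defines hadoop.tmp.dir explicitly.
has_custom_tmp_dir() {
  grep -q '<name>hadoop\.tmp\.dir</name>' "$1"
}
```

For example, `has_custom_tmp_dir etc/hadoop/core-site.xml && echo "hadoop.tmp.dir is still overridden"` run from the Hadoop directory on each node. The effective value can also be printed with `./bin/hdfs getconf -confKey hadoop.tmp.dir`; when no override is set, Hadoop's built-in default is /tmp/hadoop-${user.name}.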


Original article: https://www.cnblogs.com/penguin0601/p/13470313.html