标签:encoding date str nbsp 开始 tar code lazy ima

1 import time

2 import traceback

3 import requests

4 from lxml import etree

5 import re

6 from bs4 import BeautifulSoup

7 from lxml.html.diff import end_tag

8 import json

9 import pymysql

10

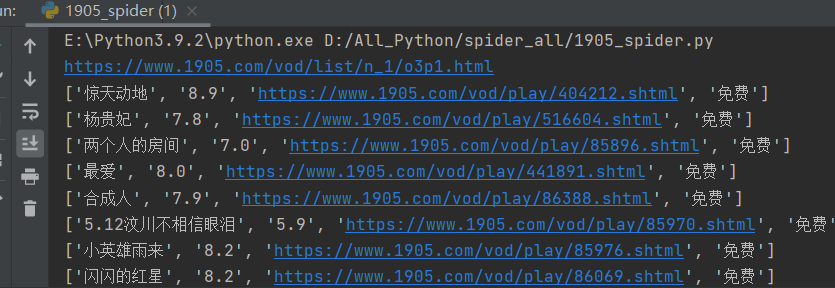

11 def get1905():

12 url=‘https://www.1905.com/vod/list/n_1/o3p1.html‘

13 headers={

14 ‘User-Agent‘:‘Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/90.0.4430.212 Safari/537.36‘

15 }

16 templist=[]

17 dataRes=[]

18 #最热

19 #1905电影网一共有99页,每页24部电影 for1-100 输出1-99页

20 for i in range(1,100):

21 url_1=‘https://www.1905.com/vod/list/n_1/o3p‘

22 auto=str(i)

23 url_2=‘.html‘

24 url=url_1+auto+url_2

25 print(url)

26 response = requests.get(url, headers)

27 response.encoding = ‘utf-8‘

28 page_text = response.text

29 soup = BeautifulSoup(page_text, ‘lxml‘)

30 # print(page_text)

31 movie_all = soup.find_all(‘div‘, class_="grid-2x grid-3x-md grid-6x-sm")

32 for single in movie_all:

33 part_html=str(single)

34 part_soup=BeautifulSoup(part_html,‘lxml‘)

35 #添加名字

36 name=part_soup.find(‘a‘)[‘title‘]

37 templist.append(name)

38 # print(name)

39 #添加评分

40 try:

41 score=part_soup.find(‘i‘).text

42 except:

43 if(len(score)==0):

44 score="1905暂无评分"

45 templist.append(score)

46 # print(score)

47 #添加path

48 path=part_soup.find(‘a‘,class_="pic-pack-outer")[‘href‘]

49 templist.append(path)

50 # print(path)

51 #添加state

52 state="免费"

53 templist.append(state)

54 print(templist)

55 dataRes.append(templist)

56 templist=[]

57 print(len(dataRes))

58 # print(movie_all)

59

60 #---------------------------------------------

61 #好评

62 templist = []

63 # 1905电影网一共有99页,每页24部电影 for1-100 输出1-99页

64 for i in range(1, 100):

65 url_1 = ‘https://www.1905.com/vod/list/n_1/o4p‘

66 auto = str(i)

67 url_2 = ‘.html‘

68 url = url_1 + auto + url_2

69 print(url)

70 response = requests.get(url, headers)

71 response.encoding = ‘utf-8‘

72 page_text = response.text

73 soup = BeautifulSoup(page_text, ‘lxml‘)

74 # print(page_text)

75 movie_all = soup.find_all(‘div‘, class_="grid-2x grid-3x-md grid-6x-sm")

76 for single in movie_all:

77 part_html = str(single)

78 part_soup = BeautifulSoup(part_html, ‘lxml‘)

79 # 添加名字

80 name = part_soup.find(‘a‘)[‘title‘]

81 templist.append(name)

82 # print(name)

83 # 添加评分

84 try:

85 score = part_soup.find(‘i‘).text

86 except:

87 if (len(score) == 0):

88 score = "1905暂无评分"

89 templist.append(score)

90 # print(score)

91 # 添加path

92 path = part_soup.find(‘a‘, class_="pic-pack-outer")[‘href‘]

93 templist.append(path)

94 # print(path)

95 # 添加state

96 state = "免费"

97 templist.append(state)

98 print(templist)

99 dataRes.append(templist)

100 templist = []

101 print(len(dataRes))

102 #---------------------------------------------

103 # 最新

104 templist = []

105 # 1905电影网一共有99页,每页24部电影 for1-100 输出1-99页

106 for i in range(1, 100):

107 url_1 = ‘https://www.1905.com/vod/list/n_1/o1p‘

108 auto = str(i)

109 url_2 = ‘.html‘

110 url = url_1 + auto + url_2

111 print(url)

112 response = requests.get(url, headers)

113 response.encoding = ‘utf-8‘

114 page_text = response.text

115 soup = BeautifulSoup(page_text, ‘lxml‘)

116 # print(page_text)

117 movie_all = soup.find_all(‘div‘, class_="grid-2x grid-3x-md grid-6x-sm")

118 for single in movie_all:

119 part_html = str(single)

120 part_soup = BeautifulSoup(part_html, ‘lxml‘)

121 # 添加名字

122 name = part_soup.find(‘a‘)[‘title‘]

123 templist.append(name)

124 # print(name)

125 # 添加评分

126 try:

127 score = part_soup.find(‘i‘).text

128 except:

129 if (len(score) == 0):

130 score = "1905暂无评分"

131 templist.append(score)

132 # print(score)

133 # 添加path

134 path = part_soup.find(‘a‘, class_="pic-pack-outer")[‘href‘]

135 templist.append(path)

136 # print(path)

137 # 添加state

138 state = "免费"

139 templist.append(state)

140 print(templist)

141 dataRes.append(templist)

142 templist = []

143 print(len(dataRes))

144 #去重

145 old_list = dataRes

146 new_list = []

147 for i in old_list:

148 if i not in new_list:

149 new_list.append(i)

150 print(len(new_list))

151 print("总数: "+str(len(new_list)))

152 return new_list

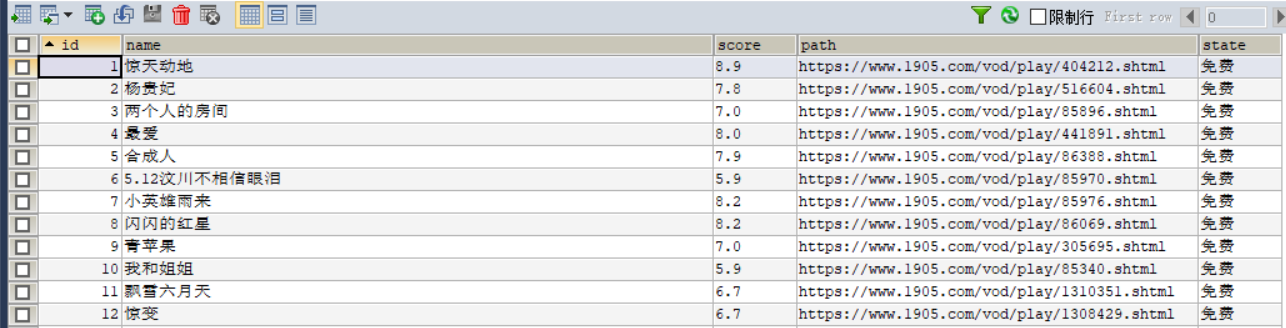

153 def insert_1905():

154 cursor = None

155 conn = None

156 try:

157 count = 0

158 list = get1905()

159 print(f"{time.asctime()}开始插入1905电影数据")

160 conn, cursor = get_conn()

161 sql = "insert into movie1905 (id,name,score,path,state) values(%s,%s,%s,%s,%s)"

162 for item in list:

163 print(item)

164 # 异常捕获,防止数据库主键冲突

165 try:

166 cursor.execute(sql, [0, item[0], item[1], item[2], item[3]])

167 except pymysql.err.IntegrityError:

168 print("重复!跳过!")

169 conn.commit() # 提交事务 update delete insert操作

170 print(f"{time.asctime()}插入1905电影数据完毕")

171 except:

172 traceback.print_exc()

173 finally:

174 close_conn(conn, cursor)

175 return;

176

177 #连接数据库 获取游标

178 def get_conn():

179 """

180 :return: 连接,游标

181 """

182 # 创建连接

183 conn = pymysql.connect(host="127.0.0.1",

184 user="root",

185 password="000429",

186 db="movierankings",

187 charset="utf8")

188 # 创建游标

189 cursor = conn.cursor() # 执行完毕返回的结果集默认以元组显示

190 if ((conn != None) & (cursor != None)):

191 print("数据库连接成功!游标创建成功!")

192 else:

193 print("数据库连接失败!")

194 return conn, cursor

195 #关闭数据库连接和游标

196 def close_conn(conn, cursor):

197 if cursor:

198 cursor.close()

199 if conn:

200 conn.close()

201 return 1

202

203 if __name__ == ‘__main__‘:

204 # get1905()

205 insert_1905()

Python爬虫爬取1905电影网视频电影并存储到mysql数据库

标签:encoding date str nbsp 开始 tar code lazy ima

原文地址:https://www.cnblogs.com/rainbow-1/p/14772852.html