The program is as follows:

package com.lcy.hadoop.examples;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.io.IOUtils;
import org.apache.hadoop.io.compress.CompressionCodec;
import org.apache.hadoop.io.compress.CompressionOutputStream;
import org.apache.hadoop.util.ReflectionUtils;

public class StreamCompressor {

    public static void main(String[] args) throws Exception {
        // args[0] is the fully qualified class name of the codec to use
        String codecClassname = args[0];
        Class<?> codecClass = Class.forName(codecClassname);
        Configuration conf = new Configuration();
        // Instantiate the codec reflectively, passing it the configuration
        CompressionCodec codec = (CompressionCodec) ReflectionUtils.newInstance(codecClass, conf);
        // Wrap standard output so that everything written to it is compressed
        CompressionOutputStream out = codec.createOutputStream(System.out);
        // Copy standard input to the compressed stream; false = do not close the streams
        IOUtils.copyBytes(System.in, out, 4096, false);
        // Finish writing the compressed data without closing the underlying stream
        out.finish();
    }
}

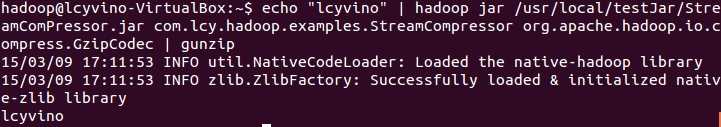
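For comparison, the same streaming pattern (wrap an output stream in a compressing stream, copy the bytes through, then call finish() so the underlying stream stays open) can be sketched with the JDK alone. This is only an illustration and not the Hadoop API: it uses java.util.zip.GZIPOutputStream in place of GzipCodec, and the class name JdkStreamCompressor and its helper methods are hypothetical names chosen for this sketch.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;
import java.util.zip.GZIPInputStream;
import java.util.zip.GZIPOutputStream;

// Hypothetical JDK-only counterpart of StreamCompressor (not part of Hadoop).
public class JdkStreamCompressor {

    // Copies everything from in to out through a 4096-byte buffer.
    public static void copy(InputStream in, OutputStream out) throws IOException {
        byte[] buffer = new byte[4096];
        int n;
        while ((n = in.read(buffer)) != -1) {
            out.write(buffer, 0, n);
        }
    }

    // Gzip-compresses in onto out. finish() writes the remaining compressed
    // data and the gzip trailer but does not close the underlying stream.
    public static void compress(InputStream in, OutputStream out) throws IOException {
        GZIPOutputStream gzip = new GZIPOutputStream(out);
        copy(in, gzip);
        gzip.finish();
    }

    // Reverses compress(): reads gzip data from in and writes the original bytes to out.
    public static void decompress(InputStream in, OutputStream out) throws IOException {
        copy(new GZIPInputStream(in), out);
    }

    public static void main(String[] args) throws IOException {
        byte[] input = "lcyvino\n".getBytes(StandardCharsets.UTF_8);

        // Compress into memory, then decompress again (the role gunzip plays in a shell pipeline).
        ByteArrayOutputStream compressed = new ByteArrayOutputStream();
        compress(new ByteArrayInputStream(input), compressed);

        ByteArrayOutputStream restored = new ByteArrayOutputStream();
        decompress(new ByteArrayInputStream(compressed.toByteArray()), restored);

        System.out.print(new String(restored.toByteArray(), StandardCharsets.UTF_8));
    }
}
```

Calling finish() rather than close() matters when the underlying stream is shared, such as System.out: the compressed data is completed, but the stream remains usable by the rest of the process.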

Run the program with the following command:

hadoop@lcyvino-VirtualBox:~$ echo "lcyvino" | hadoop jar /usr/local/testJar/StreamComPressor.jar com.lcy.hadoop.examples.StreamCompressor org.apache.hadoop.io.compress.GzipCodec | gunzip

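Result: gunzip decompresses the stream that the program compressed, so the original input, lcyvino, is printed back.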

Hadoop: Using the API to compress data read from standard input and write it to standard output

Original article: http://www.cnblogs.com/Murcielago/p/4324050.html