
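The homework for the previous two weeks was mainly about factors and the construction of directed graphs, but probabilistic graphical models (PGMs) have another powerful tool: undirected graphs (Markov networks). Unlike a directed graph, an undirected graph describes symmetric interactions between two variables, and those interactions are still expressed as factors. This week's assignment is a very practical one: an optical character recognition (OCR) system built on a Markov network.

OCR has important applications in artificial intelligence. However, image noise, distorted handwriting and joined-up strokes make image-based text recognition hard. PGMs are well suited to problems where the measurement process is complex, individual measurements may be wrong, and measurements come in sequence (measure once, compare with neighbors, weigh further evidence, find the optimum). English words are made up of letters, so OCR is a natural fit for a probabilistic graphical model.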

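The same four steps appear in both OCR and SLAM:

- Single measurement: in OCR, recognizing one letter image in isolation is unreliable; in SLAM, a single registration gives an inaccurate transformation matrix.
- Comparing neighbors: in OCR, use the letter-combination patterns within a word; in SLAM, use the observations from the previous frame or the previous few frames.
- Weighing further evidence: more complex dependencies in the graphical model.
- Finding the optimum: MAP estimation.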

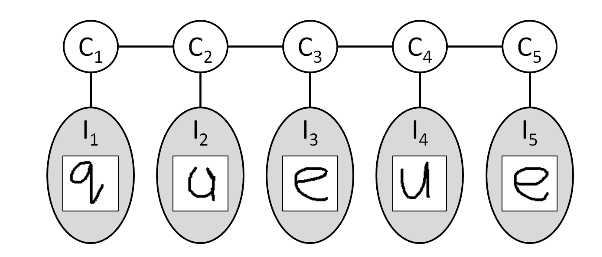

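In an OCR system the character images (variable I) are always observed, while the letters we want to recover (variable C) never are. We therefore model P(C|I). A Markov network of this special kind is called a conditional random field (CRF).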
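As a reminder of what modeling P(C|I) means in factor terms, the general form of a CRF can be written as below. The index k over factors and the scope notation S_k are introduced here only for illustration; they do not appear in the assignment.

```latex
P(C \mid I) = \frac{1}{Z(I)} \prod_{k} \phi_k\bigl(C_{S_k}, I\bigr),
\qquad
Z(I) = \sum_{C'} \prod_{k} \phi_k\bigl(C'_{S_k}, I\bigr)
```

Because the normalizer Z(I) depends only on the observed images, a MAP query over C can ignore it and compare unnormalized products of factor values.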

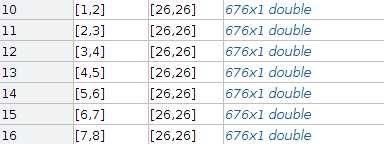

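Before building a complex graphical model, start with a simple one. Even though a single observation is unreliable, we should first make an inference from each observation on its own, so we need a factor that relates each image to its letter. The graphical model at this stage is shown in the figure.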

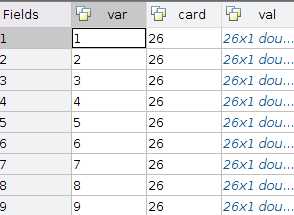

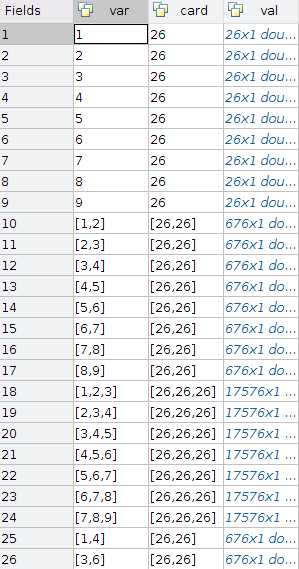

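At this point every letter is its own separate graph, and we only need to specify the factor phi(I,C) between each letter and its image. Because the image is always observed, the only variable left in the factor is C. The factor's val differs from one small graph to the next, because val holds the probability of each of the card values that var can take. The factor's var is the position of the character within the word, card = 26 is the number of values var can take, and val is produced by ComputeImageFactor.

Because different images give different distributions over var, we cannot build one factor and copy it in bulk as before; each one has to be computed separately. The values in factor.val are computed with the following function: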

    function P = ComputeImageFactor (img, imgModel)
    % This function computes the singleton OCR factor values for a single
    % image.
    %
    % Input:
    %   img: The 16x8 matrix of the image
    %   imgModel: The provided, trained image model
    %
    % Output:
    %   P: A K-by-1 array of the factor values for each of the K possible
    %     character assignments to the given image
    %
    % Copyright (C) Daphne Koller, Stanford University, 2012

    X = img(:);
    N = length(X);
    K = imgModel.K;

    % params holds (K-1)*N weights followed by (K-1) bias terms.
    theta = reshape(imgModel.params(1:N*(K-1)), K-1, N);
    bias = reshape(imgModel.params((1+N*(K-1)):end), K-1, 1);

    % Scores for the first K-1 characters; the K-th score is fixed at 0.
    W = [ bsxfun(@plus, theta * X, bias) ; 0 ];
    % Subtract the maximum for numerical stability, then exponentiate.
    W = bsxfun(@minus, W, max(W));
    W = exp(W);

    % Normalize so that the values sum to 1.
    P = bsxfun(@rdivide, W, sum(W));

    end

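The most important information is hidden in imageModel.params (called imgModel.params inside the function). Opening params shows a 3225×1 vector. Read together with the reshape calls, it holds a 25×128 weight matrix (one weight per pixel for each of the first K-1 = 25 letters, 3200 values in total) followed by 25 bias terms; the score of the last letter is fixed at 0. A softmax then turns these scores into factor.val. The code that builds the factors is: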

    function factors = ComputeSingletonFactors (images, imageModel)
    % This function computes the single OCR factors for all of the images in a
    % word.
    %
    % Input:
    %   images: An array of structs containing the 'img' value for each
    %     character in the word. You could, for example, pass in allWords{1} to
    %     use the first word of the provided dataset.
    %   imageModel: The provided OCR image model.
    %
    % Output:
    %   factors: An array of the OCR factors, one for every character in the
    %     image.
    %
    % Hint: You will want to use ComputeImageFactor.m when computing the 'val'
    % entry for each factor.
    %
    % Copyright (C) Daphne Koller, Stanford University, 2012

    % The number of characters in the word
    n = length(images);

    % Preallocate the array of factors
    factors = repmat(struct('var', [], 'card', [], 'val', []), n, 1);

    % One factor per character: the variable is the character's position.
    for i = 1:n
        factors(i).var = i;
        factors(i).card = imageModel.K;
        factors(i).val = ComputeImageFactor(images(i).img, imageModel);
    end
    end
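The normalization step inside ComputeImageFactor can be looked at in isolation. The sketch below uses made-up scores for four classes (the real model has 26) and also checks the parameter count implied by the reshape calls; the variable names are illustrative only.

```matlab
% Parameter count implied by the reshape calls:
% (K-1)*N weights plus (K-1) bias terms.
N = 16 * 8;
K = 26;
nParams = (K - 1) * N + (K - 1);
fprintf('expected length of params: %d\n', nParams);

% Hypothetical scores for 4 classes; the last class is the reference
% class whose score is pinned to 0, as in ComputeImageFactor.
scores = [2.0; 1.0; -1.0; 0];

% Subtracting the maximum does not change the result but keeps exp()
% from overflowing when the scores are large.
shifted = scores - max(scores);
P = exp(shifted) ./ sum(exp(shifted));

disp(P');
```

The resulting values are positive and sum to 1, which is why the singleton factor can be read directly as a distribution over letters.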

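For a 9-letter word, for example, running this code builds the PGM; the so-called PGM is really just a list of factors.

Even a model this simple supports useful inference. Inference is done by calling precompiled C code, and the result is a letter accuracy of 77% and a word accuracy of 22%. At this stage the accuracy is determined almost entirely by the trained params: each image is simply mapped to a letter on its own.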
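With only singleton factors, no factor links two characters, so the MAP assignment can be read off one factor at a time. The sketch below illustrates this with made-up factor values; it is not the precompiled inference code used in the assignment.

```matlab
% Hypothetical singleton factors for a 3-letter word (K = 26).
K = 26;
factors = repmat(struct('var', [], 'card', K, 'val', []), 3, 1);
peaks = [3 1 20];                 % made-up preferred letters: c, a, t
for i = 1:3
    v = ones(K, 1);
    v(peaks(i)) = 10;             % one clearly preferred letter
    factors(i).var = i;
    factors(i).val = v / sum(v);
end

% Without factors that couple characters, the joint MAP assignment is
% simply the argmax of each factor.
word = blanks(numel(factors));
for i = 1:numel(factors)
    [~, best] = max(factors(i).val);
    word(factors(i).var) = char('a' + best - 1);
end
disp(word);
```

This is also why the accuracy at this stage depends only on the image model: nothing in the graph lets one character's evidence influence another.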

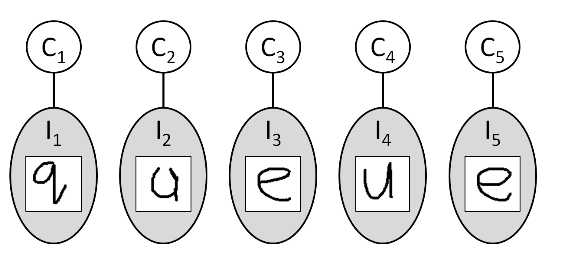

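For English words, adjacent letter combinations also carry prior information. For example, q is less likely to be followed by h than by u. This kind of relationship helps inference. The graphical model now looks like this: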

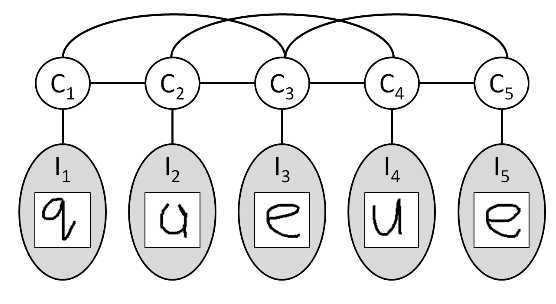

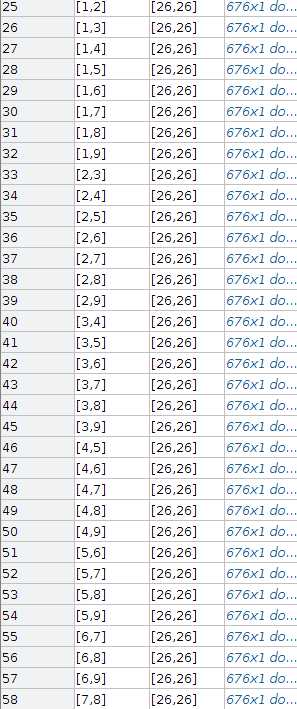

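Compared with before, the model now has to account for the relationship (factor) between adjacent letters. Each such factor has two variables, and they must be adjacent: 1 2; 2 3; 3 4; and so on. Each variable still has cardinality 26, so the factor's card is [26 26]; once a variable in a graph has been given its unique index, its cardinality cannot change. The val of a pairwise factor is much larger, with 26*26 = 676 entries. This val has nothing to do with the observed images, though; it only encodes a relationship between variables. That means the factor can be copied: only var changes, and everything else stays the same. The factors are computed as follows: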

    function factors = ComputePairwiseFactors (images, pairwiseModel, K)
    % This function computes the pairwise factors for one word and uses the
    % given pairwise model to set the factor values.
    %
    % Input:
    %   images: An array of structs containing the 'img' value for each
    %     character in the word.
    %   pairwiseModel: The provided pairwise model. It is a K-by-K matrix. For
    %     character i followed by character j, the factor value should be
    %     pairwiseModel(i, j).
    %   K: The alphabet size (accessible in imageModel.K for the provided
    %     imageModel).
    %
    % Output:
    %   factors: The pairwise factors for this word.
    %
    % Copyright (C) Daphne Koller, Stanford University, 2012

    n = length(images);

    % If there are fewer than 2 characters, return an empty factor list.
    if (n < 2)
        factors = [];
        return;
    end

    % The same table is shared by every pair of adjacent characters.
    val_ = reshape(pairwiseModel, K*K, 1);

    factors = repmat(struct('var', [], 'card', [K K], 'val', val_), n - 1, 1);

    % Only the scope differs from factor to factor.
    for i = 1 : n-1
        factors(i).var = [i, i+1];
    end
    end
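The reshape call above relies on the flattened table keeping entry (i, j) at a predictable position. The sketch below checks that layout on a made-up matrix, using the built-in sub2ind as a stand-in for the course's AssignmentToIndex (assumed here to use the same first-variable-fastest ordering).

```matlab
K = 26;
% Made-up K-by-K table whose entry (i, j) encodes its own position.
M = repmat((1:K)' * 100, 1, K) + repmat(1:K, K, 1);   % M(i, j) = 100*i + j

% Flatten column by column, exactly as in ComputePairwiseFactors.
val = reshape(M, K*K, 1);

i = 17;                            % 'q'
j = 21;                            % 'u'
idx = sub2ind([K K], i, j);        % linear index of assignment (i, j)

fprintf('entry (%d, %d) sits at linear index %d\n', i, j, idx);
fprintf('val(idx) = %d, M(i, j) = %d\n', val(idx), M(i, j));
```

If the two orderings disagreed, the factor would silently describe "j followed by i" instead of "i followed by j", which is easy to miss because the table has the right size either way.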

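The crucial val again comes from an opaque parameter, pairwiseModel. It is a 26×26 matrix that specifies how likely two letters are to be adjacent. Opening it reveals many zeros, meaning that those two letters almost never appear next to each other. A model like this can be obtained from dictionary statistics. Setting entries to exactly 0 is actually somewhat dangerous, because a zero factor value rules the combination out entirely no matter what the images say; it is acceptable here only because we are confident enough in the statistics.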
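To make "obtained from dictionary statistics" concrete, here is a minimal sketch that counts letter pairs in a tiny made-up word list and adds a small smoothing constant so that no entry is exactly 0. The word list, the constant alpha and the row normalization are all assumptions for illustration; the post does not say how the provided pairwiseModel was built or normalized.

```matlab
words = {'queen', 'quiet', 'the', 'then', 'that'};   % made-up toy corpus
K = 26;
counts = zeros(K, K);
for w = 1:numel(words)
    idx = double(words{w}) - double('a') + 1;        % letters -> 1..26
    for t = 1:numel(idx) - 1
        counts(idx(t), idx(t+1)) = counts(idx(t), idx(t+1)) + 1;
    end
end

alpha = 0.1;                                         % assumed smoothing constant
smoothed = counts + alpha;
% Row i holds an estimate of P(next letter | current letter i).
pairwise = smoothed ./ repmat(sum(smoothed, 2), 1, K);

q = 17; u = 21; h = 8;
fprintf('P(u|q) = %.3f, P(h|q) = %.3f\n', pairwise(q, u), pairwise(q, h));
```

With smoothing, an unseen pair such as "qh" is penalized but not forbidden, so strong image evidence can still override the prior.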

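Building the PGM with adjacent letters yields some additional factors, shown below:

The result is now a letter accuracy of 79.16% and a word accuracy of 26%, so the word accuracy in particular improves noticeably over the 22% of the singleton-only model.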

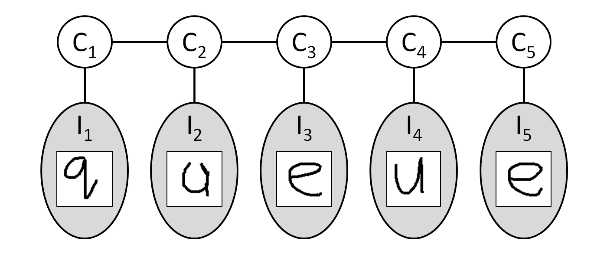

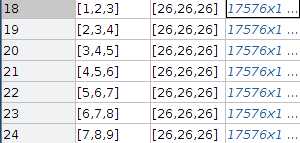

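Since two-letter combinations improve accuracy noticeably, three-letter combinations should help as well. Considering the relationship among letters i, i+1 and i+2 of a word gives the following graphical model:

What we need to do is add one more kind of factor, phi(i,i+1,i+2), whose var is i, i+1, i+2 and whose card is [26 26 26]. The all-important val is again given by supplied numbers. However, val needs 26*26*26 = 17576 entries. Designing a value for every combination would take a great deal of computation even with a full dictionary. So we give a higher weight (greater than 1) only to 2000 common combinations (ing, ght, ...) and assign 1 to every other combination, which effectively ignores it. The sparsity of the features is even more evident here. The factor code is: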

    function factors = ComputeTripletFactors (images, tripletList, K)
    % This function computes the triplet factor values for one word.
    %
    % Input:
    %   images: An array of structs containing the 'img' value for each
    %     character in the word.
    %   tripletList: An array of the character triplets we will consider (other
    %     factor values should be 1). tripletList(i).chars gives character
    %     assignment, and tripletList(i).factorVal gives the value for that
    %     entry in the factor table.
    %   K: The alphabet size (accessible in imageModel.K for the provided
    %     imageModel).
    %
    % Hint: Every character triple in the word will use the same 'val' table.
    % Consider computing that array once and then reusing for each factor.
    %
    % Copyright (C) Daphne Koller, Stanford University, 2012

    n = length(images);

    % If the word has fewer than three characters, then return an empty list.
    if (n < 3)
        factors = [];
        return
    end

    % Start from an all-ones table and overwrite only the listed triplets.
    val_init = ones(K*K*K, 1);
    num_listed = length(tripletList);
    for i = 1:num_listed
        triplet_i = tripletList(i);
        assign_ = triplet_i.chars;
        index_ = AssignmentToIndex(assign_, [K K K]);
        val_init(index_) = triplet_i.factorVal;
    end

    % The table is shared; only the scope differs from factor to factor.
    factors = repmat(struct('var', [], 'card', [K K K], 'val', val_init), n - 2, 1);

    for i = 1 : n-2
        factors(i).var = [i i+1 i+2];
    end
    end
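The sparsity of the triplet table is easy to see on a small example. The sketch below fills a table from a hypothetical two-entry triplet list (the factor values 5.0 and 3.0 are made up) and uses the built-in sub2ind in place of AssignmentToIndex, on the assumption that both order entries with the first variable varying fastest.

```matlab
K = 26;
% Hypothetical list in the same shape as tripletList: "ing" and "ght".
tripletList = struct('chars', {[9 14 7], [7 8 20]}, ...
                     'factorVal', {5.0, 3.0});

% Every unlisted combination keeps the neutral value 1.
val = ones(K^3, 1);
for t = 1:numel(tripletList)
    c = tripletList(t).chars;
    val(sub2ind([K K K], c(1), c(2), c(3))) = tripletList(t).factorVal;
end

fprintf('%d of %d entries differ from 1\n', nnz(val ~= 1), numel(val));
```

Even with the 2000 listed triplets mentioned above, only about 11% of the 17576 entries would carry information; the rest leave the product of factors unchanged.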

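This again yields some new factors, as follows:

With these factors added, the letter accuracy rises to 80.3% and the reported word accuracy is 24%. The gains are clearly slowing down: many words do not contain any of the listed three-letter combinations, so the improvement in accuracy is limited.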

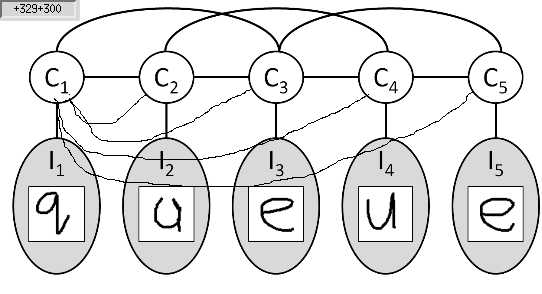

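Adjacency information alone no longer gives the improvement we want, so we need to find more useful information and bring it into the PGM. In typical handwriting, a person writes the same letter in a similar way each time. So within one word, every pair of characters should be linked: if their observations (images) are similar, the two characters are very likely the same letter. The graph now looks like this:

Every pair of nodes is connected (for readability, the figure only draws the edges from the first node to the others). Nodes 1 2 and 1 3 were already connected, so are these edges redundant? They are not: the earlier factors encode adjacency information, whereas the new factors encode how similar two images are. In essence this factor is phi(C1,C2,I1,I2), but because I1 and I2 are observed, its var is just C1, C2 and its card is still [26 26]. Note that card describes the range of values of each random variable, not the range of variable indices, and it must agree with the cardinalities used earlier. The val is determined by how similar the two images are, so this is again a factor that cannot simply be copied, because it depends on the observations.

This factor is produced by the following function: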

    function factor = ComputeSimilarityFactor (images, K, i, j)
    % This function computes the similarity factor between two character images
    % in one word --- which characters is given by indices i and j (a
    % description of how the factor should be computed is given below).
    %
    % Input:
    %   images: A struct array of character images from one word.
    %   K: The alphabet size.
    %   i,j: The scope of that factor. That is, you should construct a factor
    %     between characters i and j in the images array.
    %
    % Output:
    %   factor: The similarity factor between these two characters. For any
    %     assignment C_i != C_j, the factor value should be one. For any
    %     assignment C_i == C_j, the factor value should be
    %     ImageSimilarity(I_i, I_j) --- ie, the computed value given by
    %     ImageSimilarity.m on the two images.
    %
    % Copyright (C) Daphne Koller, Stanford University, 2012

    factor = struct('var', [i j], 'card', [K K], 'val', ones(K*K, 1));

    % The similarity depends only on the two images, so compute it once.
    sim = ImageSimilarity(images(i).img, images(j).img);

    % Write it into every entry where both characters take the same value.
    for k = 1:K
        factor.val(AssignmentToIndex([k k], [K K])) = sim;
    end

    end

Here ImageSimilarity measures how similar two images are, quantified by the cosine of the angle between the two vectorized images.
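The cosine measure itself can be sketched in a few lines. This is only an illustration of cosine similarity on made-up 16x8 images; the provided ImageSimilarity.m may rescale or post-process the value, and cosSim is a name introduced here.

```matlab
% Made-up binary images of the same size as the OCR characters.
img1 = zeros(16, 8); img1(4:12, 4) = 1;    % vertical stroke
img2 = img1;         img2(8, 5)    = 1;    % nearly the same stroke
img3 = zeros(16, 8); img3(8, 1:8)  = 1;    % horizontal stroke

% Cosine of the angle between the two vectorized images.
cosSim = @(a, b) (a(:)' * b(:)) / (norm(a(:)) * norm(b(:)));

fprintf('similar pair:    %.3f\n', cosSim(img1, img2));
fprintf('dissimilar pair: %.3f\n', cosSim(img1, img3));
```

Two images of the same stroke score close to 1, while images that barely overlap score close to 0, which is the signal the similarity factor places on the diagonal of its table.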

Since this factor cannot be copied directly, we also need to generate all the similarity factors for the whole graph, using the following function:

    function factors = ComputeAllSimilarityFactors (images, K)
    % This function computes all of the similarity factors for the images in
    % one word.
    %
    % Input:
    %   images: An array of structs containing the 'img' value for each
    %     character in the word.
    %   K: The alphabet size (accessible in imageModel.K for the provided
    %     imageModel).
    %
    % Output:
    %   factors: Every similarity factor in the word. You should use
    %     ComputeSimilarityFactor to compute these.
    %
    % Copyright (C) Daphne Koller, Stanford University, 2012

    n = length(images);
    nFactors = nchoosek (n, 2);

    factors = repmat(struct('var', [], 'card', [K K], 'val', []), nFactors, 1);

    % One factor for every unordered pair (i, j) with i < j.
    num_factor_ = 1;
    for i = 1:n
        for j = i+1:n
            factors(num_factor_) = ComputeSimilarityFactor(images, K, i, j);
            num_factor_ = num_factor_ + 1;
        end
    end
    end

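Note, however, that adding these factors introduces the following extra entries into the graphical model:

The screenshot is not complete. For a long word (9 letters), the number of factors grows sharply, which makes inference on the model much more expensive. In most words, however, repeated letters are rare, and considering two pairs of repeated letters is enough for most words. So to reduce the computational cost we prune the similarity factors and keep only the two most similar pairs (those with the largest similarity value in factor.val). The code is: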

    function factors = ChooseTopSimilarityFactors (allFactors, F)
    % This function chooses the similarity factors with the highest similarity
    % out of all the possibilities.
    %
    % Input:
    %   allFactors: An array of all the similarity factors.
    %   F: The number of factors to select.
    %
    % Output:
    %   factors: The F factors out of allFactors for which the similarity score
    %     is highest.
    %
    % Hint: Recall that the similarity score for two images will be in every
    % factor table entry (for those two images' factor) where they are
    % assigned the same character value.
    %
    % Copyright (C) Daphne Koller, Stanford University, 2012

    % If there are fewer than F factors total, just return all of them.
    if (length(allFactors) <= F)
        factors = allFactors;
        return;
    end

    % The similarity score sits on the diagonal of each table; entry 1 is
    % the assignment (1, 1). max(val) would return 1 whenever the score is
    % below 1, so read the diagonal entry directly.
    nFactors = length(allFactors);
    scores = zeros(nFactors, 1);
    for i = 1:nFactors
        scores(i) = allFactors(i).val(1);
    end

    % Keep the F highest-scoring factors, in their original order.
    [~, order] = sort(scores, 'descend');
    factors = allFactors(sort(order(1:F)));

    end
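To see why pruning matters, the number of similarity factors can be counted directly. The short sketch below prints the count of pairwise similarity factors and the total number of table entries for a few word lengths; it only does arithmetic and touches none of the assignment code.

```matlab
K = 26;
for n = [3 5 9]
    nPairs = n * (n - 1) / 2;      % same value as nchoosek(n, 2)
    fprintf('n = %d: %d similarity factors, %d table entries\n', ...
            n, nPairs, nPairs * K^2);
end
```

The count grows quadratically with word length, and because every pair of characters becomes connected, exact inference has to deal with a fully connected graph unless most of these factors are dropped.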

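In the end, the PGM's factors look like this:

Running inference on this model gives a letter accuracy of 81.6% and a word accuracy of 37%. Compared with plain image-to-letter recognition (22%), the word accuracy is about 1.7 times higher. The effect of the probabilistic graphical model is significant.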
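Putting the four kinds of factors together, the final model can be summarized as the product below. The superscript labels and the set T of retained similarity pairs (the top F pairs chosen by ChooseTopSimilarityFactors) are notation introduced here for illustration, and n is the number of characters in the word.

```latex
P(C \mid I) \;\propto\;
\prod_{i=1}^{n} \phi^{\mathrm{img}}_i(C_i)
\prod_{i=1}^{n-1} \phi^{\mathrm{pair}}(C_i, C_{i+1})
\prod_{i=1}^{n-2} \phi^{\mathrm{tri}}(C_i, C_{i+1}, C_{i+2})
\prod_{(i,j) \in T} \phi^{\mathrm{sim}}_{ij}(C_i, C_j)
```

Only the image and similarity factors carry an index of their own, since they depend on the observed images; the pairwise and triplet tables are shared across positions.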

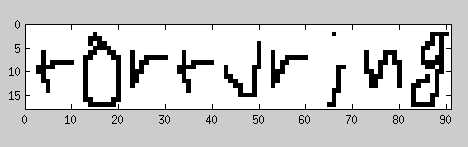

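Finally, you are probably curious what the character images actually look like. Here they are:

The recognition results are as follows: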


Machine Learning: Probabilistic Graphical Models (Homework: Week 3)


Original article: http://www.cnblogs.com/ironstark/p/5372191.html