Tags:

CDH5 package downloads: http://archive.cloudera.com/cdh5/

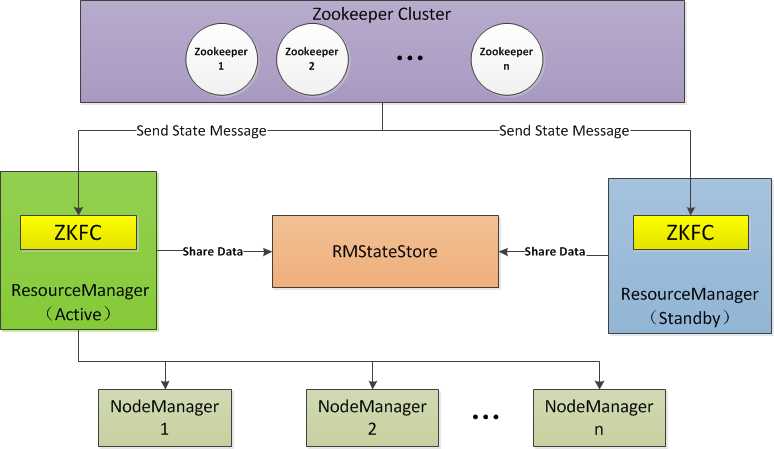

Architecture design:

Host plan:

| IP | Host | Deployed modules | Processes |
|---|---|---|---|
| 192.168.254.151 | Hadoop-NN-01 | NameNode, ResourceManager | NameNode, DFSZKFailoverController, ResourceManager |
| 192.168.254.152 | Hadoop-NN-02 | NameNode, ResourceManager | NameNode, DFSZKFailoverController, ResourceManager |
| 192.168.254.153 | Hadoop-DN-01, Zookeeper-01 | DataNode, NodeManager, Zookeeper | DataNode, NodeManager, JournalNode, QuorumPeerMain |
| 192.168.254.154 | Hadoop-DN-02, Zookeeper-02 | DataNode, NodeManager, Zookeeper | DataNode, NodeManager, JournalNode, QuorumPeerMain |
| 192.168.254.155 | Hadoop-DN-03, Zookeeper-03 | DataNode, NodeManager, Zookeeper | DataNode, NodeManager, JournalNode, QuorumPeerMain |

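Process descriptions:

| Process | Role |
|---|---|
| NameNode | Manages the HDFS namespace and block metadata; in this HA setup one NameNode is active and the other is standby. |
| DFSZKFailoverController | The ZKFC: monitors the health of the local NameNode and uses ZooKeeper to elect the active NameNode and trigger automatic failover. |
| ResourceManager | The YARN master: allocates cluster resources and schedules applications; here it is also deployed as an active/standby pair. |
| DataNode | Stores HDFS data blocks and serves read and write requests. |
| NodeManager | The per-node YARN agent: launches and monitors containers on its host. |
| JournalNode | Stores the shared NameNode edit log: the active NameNode writes edits to a quorum of JournalNodes and the standby reads them. |
| QuorumPeerMain | The ZooKeeper server process, as listed by jps. |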

Directory plan:

| Name | Path |
|---|---|
| $HADOOP_HOME | /home/hadoopuser/hadoop-2.6.0-cdh5.6.0 |
| Data | $HADOOP_HOME/data |
| Log | $HADOOP_HOME/logs |

Cluster installation:

I. Disable the firewall (it can be configured later)

II. Install the JDK (omitted)

III. Change the hostname and configure /etc/hosts (all 5 machines)

    [root@Linux01 ~]# vim /etc/sysconfig/network
    [root@Linux01 ~]# vim /etc/hosts
    192.168.254.151 Hadoop-NN-01
    192.168.254.152 Hadoop-NN-02
    192.168.254.153 Hadoop-DN-01 Zookeeper-01
    192.168.254.154 Hadoop-DN-02 Zookeeper-02
    192.168.254.155 Hadoop-DN-03 Zookeeper-03
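Rather than typing the same entries on all 5 machines, they can be generated. The following is a minimal POSIX sh sketch; the function names `cluster_hosts`, `install_hosts` and `set_hostname_line` are hypothetical, and it assumes a CentOS/RHEL 6 style system (as the use of /etc/sysconfig/network suggests) where the hostname comes from a `HOSTNAME=` line, plus GNU `sed -i`.

```shell
#!/bin/sh
# Hypothetical helper: print the /etc/hosts entries for the 5-node plan above.
# Each DataNode host also carries its Zookeeper-0N alias.
cluster_hosts() {
  echo "192.168.254.151 Hadoop-NN-01"
  echo "192.168.254.152 Hadoop-NN-02"
  n=1
  for last in 153 154 155; do
    echo "192.168.254.$last Hadoop-DN-0$n Zookeeper-0$n"
    n=$((n + 1))
  done
}

# Append each entry to a hosts file only if that exact line is not there yet,
# so running it twice does not create duplicates.
install_hosts() {
  hosts_file=$1
  cluster_hosts | while read -r line; do
    grep -qxF "$line" "$hosts_file" || echo "$line" >> "$hosts_file"
  done
}

# Set (or add) the HOSTNAME= line in a CentOS 6 style network file.
set_hostname_line() {
  net_file=$1 new_name=$2
  if grep -q '^HOSTNAME=' "$net_file"; then
    sed -i "s/^HOSTNAME=.*/HOSTNAME=$new_name/" "$net_file"
  else
    echo "HOSTNAME=$new_name" >> "$net_file"
  fi
}
```

As root on each machine, for example: `install_hosts /etc/hosts` and `set_hostname_line /etc/sysconfig/network Hadoop-DN-01`. The new hostname is read at the next boot; `hostname Hadoop-DN-01` applies it immediately.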

IV. For security, create a dedicated login user for Hadoop (all 5 machines)

    [root@Linux01 ~]# useradd hadoopuser
    [root@Linux01 ~]# passwd hadoopuser
    [root@Linux01 ~]# su - hadoopuser   # switch to the new user

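V. Configure passwordless SSH login (on the 2 NameNodes)

    [hadoopuser@Linux05 hadoop-2.6.0-cdh5.6.0]$ ssh-keygen   # generate the public/private key pair
    [hadoopuser@Linux05 hadoop-2.6.0-cdh5.6.0]$ ssh-copy-id -i ~/.ssh/id_rsa.pub hadoopuser@Hadoop-NN-01

`-i` selects the identity file, i.e. which public key to copy.

`~/.ssh/id_rsa.pub` is the public key being copied.

Or, more briefly:

    [hadoopuser@Linux05 hadoop-2.6.0-cdh5.6.0]$ ssh-copy-id Hadoop-NN-01   # copy the public key to the remote server (its IP address also works)
    [hadoopuser@Linux05 hadoop-2.6.0-cdh5.6.0]$ ssh-copy-id "-p 6000 Hadoop-NN-01"   # if SSH listens on a non-default port

If SSH listens on a non-default port, remember to update Hadoop's configuration as well:

    vi hadoop-env.sh
    export HADOOP_SSH_OPTS="-p 6000"

Verify the login (leave the session with exit or logout):

    [hadoopuser@Linux05 hadoop-2.6.0-cdh5.6.0]$ ssh Hadoop-NN-01
    [hadoopuser@Linux05 hadoop-2.6.0-cdh5.6.0]$ ssh Hadoop-NN-01 -p 6000   # with a non-default port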
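The steps above copy the key to one host; `start-dfs.sh` and `start-yarn.sh` on Hadoop-NN-01 also need passwordless SSH to every other node, and sshfence needs the NameNodes to reach each other. A minimal sketch that loops over the whole plan, assuming SSH port 6000 and an existing `~/.ssh/id_rsa.pub`; `run` and `copy_keys` are hypothetical names, and `DRY_RUN=1` only prints the commands. It keeps the quoted `"-p PORT user@host"` form for older ssh-copy-id versions without a `-p` option; newer OpenSSH releases accept `-p 6000` directly.

```shell
#!/bin/sh
# Push this NameNode's public key to every node in the host plan.
SSH_PORT=${SSH_PORT:-6000}
ALL_HOSTS="Hadoop-NN-01 Hadoop-NN-02 Hadoop-DN-01 Hadoop-DN-02 Hadoop-DN-03"

# Execute a command, or just print it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
}

copy_keys() {
  for h in $ALL_HOSTS; do
    # Older ssh-copy-id word-splits this argument into "-p PORT user@host".
    run ssh-copy-id -i "$HOME/.ssh/id_rsa.pub" "-p $SSH_PORT hadoopuser@$h"
  done
}
```

Run `DRY_RUN=1 copy_keys` first to review the commands, then `copy_keys` on each of the two NameNodes.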

VI. Configure environment variables: vi ~/.bashrc, then source ~/.bashrc (all 5 machines)

    [hadoopuser@Linux01 ~]$ vi ~/.bashrc
    # hadoop cdh5
    export HADOOP_HOME=/home/hadoopuser/hadoop-2.6.0-cdh5.6.0
    export PATH=$PATH:$HADOOP_HOME/sbin:$HADOOP_HOME/bin
    [hadoopuser@Linux01 ~]$ source ~/.bashrc   # apply the changes
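Because this edit is repeated on 5 machines (and again for ZooKeeper below), it helps if re-running it does not append duplicate PATH entries. A small sketch, assuming the rc file is passed as an argument (default `~/.bashrc`); `add_line_once` and `setup_hadoop_env` are hypothetical helper names.

```shell
#!/bin/sh
# Append a line to an rc file only if that exact line is not already present.
add_line_once() {
  _file=$1 _line=$2
  touch "$_file"
  grep -qxF "$_line" "$_file" || printf '%s\n' "$_line" >> "$_file"
}

# Write the Hadoop variables from this step; single quotes keep $PATH and
# $HADOOP_HOME literal so they are expanded when the rc file is sourced.
setup_hadoop_env() {
  rc_file=${1:-$HOME/.bashrc}
  add_line_once "$rc_file" '# hadoop cdh5'
  add_line_once "$rc_file" 'export HADOOP_HOME=/home/hadoopuser/hadoop-2.6.0-cdh5.6.0'
  add_line_once "$rc_file" 'export PATH=$PATH:$HADOOP_HOME/sbin:$HADOOP_HOME/bin'
}
```

After `setup_hadoop_env`, run `source ~/.bashrc` as above.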

VII. Install ZooKeeper (on the 3 DataNodes)

1. Extract the archive

2. Configure environment variables: vi ~/.bashrc

    [hadoopuser@Linux01 ~]$ vi ~/.bashrc
    # zookeeper cdh5
    export ZOOKEEPER_HOME=/home/hadoopuser/zookeeper-3.4.5-cdh5.6.0
    export PATH=$PATH:$ZOOKEEPER_HOME/bin
    [hadoopuser@Linux01 ~]$ source ~/.bashrc   # apply the changes

3. Change the log output directory

Edit zkEnv.sh and, around line 56, change the assignment to ZOO_LOG_DIR="$ZOOKEEPER_HOME/logs":

    [hadoopuser@Linux01 ~]$ vi $ZOOKEEPER_HOME/libexec/zkEnv.sh

4. Edit the configuration file

    [hadoopuser@Linux01 ~]$ vi $ZOOKEEPER_HOME/conf/zoo.cfg
    # zookeeper
    tickTime=2000
    initLimit=10
    syncLimit=5
    dataDir=/home/hadoopuser/zookeeper-3.4.5-cdh5.6.0/data
    clientPort=2181
    # cluster
    server.1=Zookeeper-01:2888:3888
    server.2=Zookeeper-02:2888:3888
    server.3=Zookeeper-03:2888:3888
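Since the same zoo.cfg must exist on all three ZooKeeper nodes, it can be generated from the host list so that the `server.N` numbering is always consistent. A sketch assuming the values shown above; `write_zoo_cfg` is a hypothetical helper, and the Nth host given is assigned `server.N` (so it must later get myid N).

```shell
#!/bin/sh
# Usage: write_zoo_cfg OUTPUT_FILE DATA_DIR HOST1 HOST2 HOST3 ...
write_zoo_cfg() {
  out=$1; data_dir=$2; shift 2
  {
    echo "# zookeeper"
    echo "tickTime=2000"
    echo "initLimit=10"
    echo "syncLimit=5"
    echo "dataDir=$data_dir"
    echo "clientPort=2181"
    echo "# cluster"
    id=1
    for h in "$@"; do
      # 2888: follower-to-leader port, 3888: leader election port
      echo "server.$id=$h:2888:3888"
      id=$((id + 1))
    done
  } > "$out"
}
```

For this cluster: `write_zoo_cfg "$ZOOKEEPER_HOME/conf/zoo.cfg" "$ZOOKEEPER_HOME/data" Zookeeper-01 Zookeeper-02 Zookeeper-03`.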

5. Set myid

(1) Hadoop-DN-01:

    mkdir $ZOOKEEPER_HOME/data
    echo 1 > $ZOOKEEPER_HOME/data/myid

(2) Hadoop-DN-02:

    mkdir $ZOOKEEPER_HOME/data
    echo 2 > $ZOOKEEPER_HOME/data/myid

(3) Hadoop-DN-03:

    mkdir $ZOOKEEPER_HOME/data
    echo 3 > $ZOOKEEPER_HOME/data/myid
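Each node's myid must equal the N of its own `server.N` line in zoo.cfg. A sketch that looks the number up instead of typing it by hand; `myid_for_host` and `write_myid` are hypothetical helpers. Note that zoo.cfg uses the Zookeeper-0N aliases while the machines' hostnames are Hadoop-DN-0N, so the alias is passed explicitly (the host name is matched as a sed pattern up to the first `:`).

```shell
#!/bin/sh
# Print N from the "server.N=HOST:..." line in the given zoo.cfg.
myid_for_host() {
  cfg=$1 host=$2
  sed -n "s/^server\.\([0-9][0-9]*\)=$host:.*/\1/p" "$cfg"
}

# Create DATA_DIR and write the matching myid; fail if HOST is not listed.
write_myid() {
  cfg=$1 host=$2 data_dir=$3
  id=$(myid_for_host "$cfg" "$host")
  [ -n "$id" ] || { echo "no server.N entry for $host" >&2; return 1; }
  mkdir -p "$data_dir"
  echo "$id" > "$data_dir/myid"
}
```

On Hadoop-DN-02, for example: `write_myid "$ZOOKEEPER_HOME/conf/zoo.cfg" Zookeeper-02 "$ZOOKEEPER_HOME/data"`.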

6. Start ZooKeeper on each node:

    [hadoopuser@Linux01 ~]$ zkServer.sh start

7. Verify

    [hadoopuser@Linux01 ~]$ jps
    3051 Jps
    2829 QuorumPeerMain

8. Check the status

    [hadoopuser@Linux01 ~]$ zkServer.sh status
    JMX enabled by default
    Using config: /home/zero/zookeeper/zookeeper-3.4.5-cdh5.0.1/bin/../conf/zoo.cfg
    Mode: follower

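9. Appendix: zoo.cfg settings

| Property | Meaning |
|---|---|
| tickTime | The basic time unit in milliseconds, used as the heartbeat interval; the minimum session timeout is twice the tickTime. |
| dataDir | Where data is stored: in-memory database snapshots and the transaction log of updates. |
| clientPort | The port clients connect to. |
| initLimit | How many tickTime intervals ZooKeeper tolerates while a "client" initializes its connection. "Client" here does not mean a user client connecting to the ZooKeeper server, but a Follower server in the ensemble connecting to the Leader. If no response has arrived after 10 ticks, the connection is considered failed. With initLimit=10 and tickTime=2000, the total is 10*2000 ms = 20 seconds. |
| syncLimit | The maximum time, in ticks, allowed for a request/response exchange between the Leader and a Follower. With syncLimit=5 and tickTime=2000, that is 5*2000 ms = 10 seconds. |
| server.id=host:port:port (server.A=B:C:D) | Ensemble member list. A: a number identifying the server (its myid). B: the server's IP address or host name. C: the port this server uses to exchange information with the Leader. D: the port used for leader election: if the Leader fails, the servers communicate over this port to elect a new one. In a pseudo-cluster, B is the same for every instance, so each ZooKeeper instance must be given different port numbers. |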
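The limits in the table are simple products of tickTime, so they can be computed straight from a zoo.cfg to double-check the numbers. A sketch; `cfg_value` and `zk_limits` are hypothetical helpers, and `min_session_ms` is the default minimum session timeout of 2*tickTime mentioned above.

```shell
#!/bin/sh
# Print the last value assigned to KEY in a key=value config file.
cfg_value() {
  sed -n "s/^$2=//p" "$1" | tail -n 1
}

# initLimit and syncLimit are counted in ticks; convert them to milliseconds.
zk_limits() {
  tick=$(cfg_value "$1" tickTime)
  init=$(cfg_value "$1" initLimit)
  sync=$(cfg_value "$1" syncLimit)
  echo "init_ms=$((init * tick)) sync_ms=$((sync * tick)) min_session_ms=$((2 * tick))"
}
```

For the zoo.cfg above, `zk_limits "$ZOOKEEPER_HOME/conf/zoo.cfg"` prints `init_ms=20000 sync_ms=10000 min_session_ms=4000`.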

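VIII. Install and configure Hadoop (install it on 1 machine, then distribute it to the other nodes once configured)

1. Extract the archive

2. Edit the configuration files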

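| File | Type | Description |
|---|---|---|
| hadoop-env.sh | Bash script | Environment variables for running Hadoop |
| core-site.xml | XML | Hadoop Core settings, such as I/O |
| hdfs-site.xml | XML | HDFS daemon settings: NN, JN, DN |
| yarn-env.sh | Bash script | Environment variables for running YARN |
| yarn-site.xml | XML | YARN framework settings |
| mapred-site.xml | XML | MapReduce properties |
| capacity-scheduler.xml | XML | YARN scheduler properties |
| container-executor.cfg | cfg | YARN container settings |
| mapred-queues.xml | XML | MapReduce queue settings |
| hadoop-metrics.properties | Java properties | Hadoop Metrics settings |
| hadoop-metrics2.properties | Java properties | Hadoop Metrics2 settings |
| slaves | Plain text | List of DN nodes |
| exclude | Plain text | List of DN nodes to remove |
| log4j.properties | Java properties | System log settings |
| configuration.xsl | XSL | Stylesheet for displaying the XML configuration files |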

(1) Edit $HADOOP_HOME/etc/hadoop/hadoop-env.sh

    #--------------------Java Env------------------------------
    export JAVA_HOME="/usr/java/jdk1.8.0_73"
    #--------------------Hadoop Env----------------------------
    #export HADOOP_PID_DIR=${HADOOP_PID_DIR}
    export HADOOP_PREFIX="/home/hadoopuser/hadoop-2.6.0-cdh5.6.0"
    #--------------------Hadoop Daemon Options-----------------
    # export HADOOP_NAMENODE_OPTS="-Dhadoop.security.logger=${HADOOP_SECURITY_LOGGER:-INFO,RFAS} -Dhdfs.audit.logger=${HDFS_AUDIT_LOGGER:-INFO,NullAppender} $HADOOP_NAMENODE_OPTS"
    # export HADOOP_DATANODE_OPTS="-Dhadoop.security.logger=ERROR,RFAS $HADOOP_DATANODE_OPTS"
    #--------------------Hadoop Logs---------------------------
    #export HADOOP_LOG_DIR=${HADOOP_LOG_DIR}/$USER
    #--------------------SSH PORT-------------------------------
    export HADOOP_SSH_OPTS="-p 6000"   # If you changed the SSH login port, you must set this.

(2) Edit $HADOOP_HOME/etc/hadoop/core-site.xml

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
      <!-- YARN uses fs.defaultFS to find the NameNode URI -->
      <property>
        <name>fs.defaultFS</name>
        <value>hdfs://mycluster</value>
      </property>
      <!-- HDFS superuser group -->
      <property>
        <name>dfs.permissions.superusergroup</name>
        <value>zero</value>
      </property>
      <!--============================== Trash ======================================= -->
      <property>
        <!-- How often (in minutes) the checkpointer on the NameNode creates a checkpoint from the Current directory; default 0, which means the value of fs.trash.interval is used -->
        <name>fs.trash.checkpoint.interval</name>
        <value>0</value>
      </property>
      <property>
        <!-- Minutes after which checkpoint directories under .Trash are deleted; the server-side setting takes precedence over the client's; default 0 disables the trash feature -->
        <name>fs.trash.interval</name>
        <value>1440</value>
      </property>
    </configuration>

(3) Edit $HADOOP_HOME/etc/hadoop/hdfs-site.xml

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
      <!-- Enable WebHDFS -->
      <property>
        <name>dfs.webhdfs.enabled</name>
        <value>true</value>
      </property>
      <property>
        <name>dfs.namenode.name.dir</name>
        <value>/home/hadoopuser/hadoop-2.6.0-cdh5.6.0/data/dfs/name</value>
        <description>Local directory where the NameNode stores the name table (fsimage); adjust as needed</description>
      </property>
      <property>
        <name>dfs.namenode.edits.dir</name>
        <value>${dfs.namenode.name.dir}</value>
        <description>Local directory where the NameNode stores the transaction file (edits); adjust as needed</description>
      </property>
      <property>
        <name>dfs.datanode.data.dir</name>
        <value>/home/hadoopuser/hadoop-2.6.0-cdh5.6.0/data/dfs/data</value>
        <description>Local directory where the DataNode stores blocks; adjust as needed</description>
      </property>
      <property>
        <name>dfs.replication</name>
        <value>1</value>
        <description>Number of replicas per file; the default is 3</description>
      </property>
      <!-- Block size -->
      <property>
        <name>dfs.blocksize</name>
        <value>268435456</value>
        <description>Block size: 256 MB</description>
      </property>
      <!--======================================================================= -->
      <!-- HDFS high-availability settings -->
      <!-- Logical name of the nameservice -->
      <property>
        <name>dfs.nameservices</name>
        <value>mycluster</value>
      </property>
      <property>
        <!-- NameNode IDs; this version supports at most two NameNodes -->
        <name>dfs.ha.namenodes.mycluster</name>
        <value>nn1,nn2</value>
      </property>
      <!-- HDFS HA: dfs.namenode.rpc-address.[nameservice ID] RPC address -->
      <property>
        <name>dfs.namenode.rpc-address.mycluster.nn1</name>
        <value>Hadoop-NN-01:8020</value>
      </property>
      <property>
        <name>dfs.namenode.rpc-address.mycluster.nn2</name>
        <value>Hadoop-NN-02:8020</value>
      </property>
      <!-- HDFS HA: dfs.namenode.http-address.[nameservice ID] HTTP address -->
      <property>
        <name>dfs.namenode.http-address.mycluster.nn1</name>
        <value>Hadoop-NN-01:50070</value>
      </property>
      <property>
        <name>dfs.namenode.http-address.mycluster.nn2</name>
        <value>Hadoop-NN-02:50070</value>
      </property>
      <!--================== NameNode edit log synchronization ================== -->
      <!-- Ensures data can be recovered -->
      <property>
        <name>dfs.journalnode.http-address</name>
        <value>0.0.0.0:8480</value>
      </property>
      <property>
        <name>dfs.journalnode.rpc-address</name>
        <value>0.0.0.0:8485</value>
      </property>
      <property>
        <!-- JournalNode addresses; the QuorumJournalManager stores the edit log on them -->
        <!-- Format: qjournal://<host1:port1>;<host2:port2>;<host3:port3>/<journalId>; the port is the one in dfs.journalnode.rpc-address -->
        <name>dfs.namenode.shared.edits.dir</name>
        <value>qjournal://Hadoop-DN-01:8485;Hadoop-DN-02:8485;Hadoop-DN-03:8485/mycluster</value>
      </property>
      <property>
        <!-- Where the JournalNodes store their data -->
        <name>dfs.journalnode.edits.dir</name>
        <value>/home/hadoopuser/hadoop-2.6.0-cdh5.6.0/data/dfs/jn</value>
      </property>
      <!--================== Client failover ================== -->
      <property>
        <!-- Strategy HDFS clients use to find and select the active NameNode -->
        <name>dfs.client.failover.proxy.provider.mycluster</name>
        <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>
      </property>
      <!--================== NameNode fencing ================== -->
      <!-- After a failover, prevent the old NameNode from continuing to serve, which would leave two active NameNodes -->
      <property>
        <name>dfs.ha.fencing.methods</name>
        <value>sshfence</value>
      </property>
      <property>
        <name>dfs.ha.fencing.ssh.private-key-files</name>
        <value>/home/hadoopuser/.ssh/id_rsa</value>
      </property>
      <property>
        <!-- Milliseconds after which fencing is considered failed -->
        <name>dfs.ha.fencing.ssh.connect-timeout</name>
        <value>30000</value>
      </property>
      <!--================== NameNode automatic failover based on ZKFC and ZooKeeper ================== -->
      <!-- Enable automatic failover via ZooKeeper and the ZKFC process, which monitors whether the NameNode has died -->
      <property>
        <name>dfs.ha.automatic-failover.enabled</name>
        <value>true</value>
      </property>
      <property>
        <name>ha.zookeeper.quorum</name>
        <!--<value>Zookeeper-01:2181,Zookeeper-02:2181,Zookeeper-03:2181</value>-->
        <value>Hadoop-DN-01:2181,Hadoop-DN-02:2181,Hadoop-DN-03:2181</value>
      </property>
      <property>
        <!-- ZooKeeper session timeout, in milliseconds -->
        <name>ha.zookeeper.session-timeout.ms</name>
        <value>2000</value>
      </property>
    </configuration>
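The `dfs.namenode.shared.edits.dir` value follows a fixed pattern, so it can be built from the JournalNode host list to avoid typos in the separators. A sketch; `qjournal_uri` is a hypothetical helper. It also warns when the JournalNode count is not an odd number of at least 3, since the QuorumJournalManager needs a majority of JournalNodes to be available.

```shell
#!/bin/sh
# Usage: qjournal_uri PORT JOURNAL_ID HOST1 HOST2 HOST3 ...
# Output: qjournal://HOST1:PORT;HOST2:PORT;HOST3:PORT/JOURNAL_ID
qjournal_uri() {
  port=$1; journal_id=$2; shift 2
  if [ "$#" -lt 3 ] || [ $(($# % 2)) -eq 0 ]; then
    echo "warning: $# JournalNodes; an odd number >= 3 is recommended" >&2
  fi
  uri=""
  for h in "$@"; do
    # Join host:port pairs with ';' (no leading separator).
    uri="${uri:+$uri;}$h:$port"
  done
  echo "qjournal://$uri/$journal_id"
}
```

`qjournal_uri 8485 mycluster Hadoop-DN-01 Hadoop-DN-02 Hadoop-DN-03` reproduces the value used above; the port must match `dfs.journalnode.rpc-address`.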

(4) Edit $HADOOP_HOME/etc/hadoop/yarn-env.sh

    #Yarn Daemon Options
    #export YARN_RESOURCEMANAGER_OPTS
    #export YARN_NODEMANAGER_OPTS
    #export YARN_PROXYSERVER_OPTS
    #export HADOOP_JOB_HISTORYSERVER_OPTS
    #Yarn Logs
    export YARN_LOG_DIR="/home/hadoopuser/hadoop-2.6.0-cdh5.6.0/logs"

(5) Edit $HADOOP_HOME/etc/hadoop/mapred-site.xml

    <configuration>
      <!-- Run MapReduce applications on YARN -->
      <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
      </property>
      <!-- JobHistory Server ============================================================== -->
      <!-- MapReduce JobHistory Server address, default port 10020 -->
      <property>
        <name>mapreduce.jobhistory.address</name>
        <value>0.0.0.0:10020</value>
      </property>
      <!-- MapReduce JobHistory Server web UI address, default port 19888 -->
      <property>
        <name>mapreduce.jobhistory.webapp.address</name>
        <value>0.0.0.0:19888</value>
      </property>
    </configuration>

(6) Edit $HADOOP_HOME/etc/hadoop/yarn-site.xml

    <configuration>
      <!-- NodeManager settings ================================================= -->
      <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
      </property>
      <property>
        <name>yarn.nodemanager.aux-services.mapreduce.shuffle.class</name>
        <value>org.apache.hadoop.mapred.ShuffleHandler</value>
      </property>
      <property>
        <description>Address where the localizer IPC is.</description>
        <name>yarn.nodemanager.localizer.address</name>
        <value>0.0.0.0:23344</value>
      </property>
      <property>
        <description>NM Webapp address.</description>
        <name>yarn.nodemanager.webapp.address</name>
        <value>0.0.0.0:23999</value>
      </property>
      <!-- HA settings =============================================================== -->
      <!-- Resource Manager Configs -->
      <property>
        <name>yarn.resourcemanager.connect.retry-interval.ms</name>
        <value>2000</value>
      </property>
      <property>
        <name>yarn.resourcemanager.ha.enabled</name>
        <value>true</value>
      </property>
      <property>
        <name>yarn.resourcemanager.ha.automatic-failover.enabled</name>
        <value>true</value>
      </property>
      <!-- Use embedded automatic failover; in HA mode it works with ZKRMStateStore to handle fencing -->
      <property>
        <name>yarn.resourcemanager.ha.automatic-failover.embedded</name>
        <value>true</value>
      </property>
      <!-- Cluster name, so that HA elections apply to the right cluster -->
      <property>
        <name>yarn.resourcemanager.cluster-id</name>
        <value>yarn-cluster</value>
      </property>
      <property>
        <name>yarn.resourcemanager.ha.rm-ids</name>
        <value>rm1,rm2</value>
      </property>
      <!-- The active/standby RM ID can be set separately on each node (optional)
      <property>
        <name>yarn.resourcemanager.ha.id</name>
        <value>rm2</value>
      </property>
      -->
      <property>
        <name>yarn.resourcemanager.scheduler.class</name>
        <value>org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler</value>
      </property>
      <property>
        <name>yarn.resourcemanager.recovery.enabled</name>
        <value>true</value>
      </property>
      <property>
        <name>yarn.app.mapreduce.am.scheduler.connection.wait.interval-ms</name>
        <value>5000</value>
      </property>
      <!-- ZKRMStateStore settings -->
      <property>
        <name>yarn.resourcemanager.store.class</name>
        <value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>
      </property>
      <property>
        <name>yarn.resourcemanager.zk-address</name>
        <!--<value>Zookeeper-01:2181,Zookeeper-02:2181,Zookeeper-03:2181</value>-->
        <value>Hadoop-DN-01:2181,Hadoop-DN-02:2181,Hadoop-DN-03:2181</value>
      </property>
      <property>
        <name>yarn.resourcemanager.zk.state-store.address</name>
        <!--<value>Zookeeper-01:2181,Zookeeper-02:2181,Zookeeper-03:2181</value>-->
        <value>Hadoop-DN-01:2181,Hadoop-DN-02:2181,Hadoop-DN-03:2181</value>
      </property>
      <!-- RPC address clients use to reach the RM (applications manager interface) -->
      <property>
        <name>yarn.resourcemanager.address.rm1</name>
        <value>Hadoop-NN-01:23140</value>
      </property>
      <property>
        <name>yarn.resourcemanager.address.rm2</name>
        <value>Hadoop-NN-02:23140</value>
      </property>
      <!-- RPC address AMs use to reach the RM (scheduler interface) -->
      <property>
        <name>yarn.resourcemanager.scheduler.address.rm1</name>
        <value>Hadoop-NN-01:23130</value>
      </property>
      <property>
        <name>yarn.resourcemanager.scheduler.address.rm2</name>
        <value>Hadoop-NN-02:23130</value>
      </property>
      <!-- RM admin interface -->
      <property>
        <name>yarn.resourcemanager.admin.address.rm1</name>
        <value>Hadoop-NN-01:23141</value>
      </property>
      <property>
        <name>yarn.resourcemanager.admin.address.rm2</name>
        <value>Hadoop-NN-02:23141</value>
      </property>
      <!-- RPC port NMs use to reach the RM -->
      <property>
        <name>yarn.resourcemanager.resource-tracker.address.rm1</name>
        <value>Hadoop-NN-01:23125</value>
      </property>
      <property>
        <name>yarn.resourcemanager.resource-tracker.address.rm2</name>
        <value>Hadoop-NN-02:23125</value>
      </property>
      <!-- RM web application address -->
      <property>
        <name>yarn.resourcemanager.webapp.address.rm1</name>
        <value>Hadoop-NN-01:23188</value>
      </property>
      <property>
        <name>yarn.resourcemanager.webapp.address.rm2</name>
        <value>Hadoop-NN-02:23188</value>
      </property>
      <property>
        <name>yarn.resourcemanager.webapp.https.address.rm1</name>
        <value>Hadoop-NN-01:23189</value>
      </property>
      <property>
        <name>yarn.resourcemanager.webapp.https.address.rm2</name>
        <value>Hadoop-NN-02:23189</value>
      </property>
    </configuration>

(7) Edit $HADOOP_HOME/etc/hadoop/slaves (one host per line)

    Hadoop-DN-01
    Hadoop-DN-02
    Hadoop-DN-03

3. Distribute the installation

    # My SSH login uses a non-default port, hence -P 6000
    scp -P 6000 -r /home/hadoopuser/hadoop-2.6.0-cdh5.6.0 hadoopuser@Hadoop-NN-02:/home/hadoopuser
    scp -P 6000 -r /home/hadoopuser/hadoop-2.6.0-cdh5.6.0 hadoopuser@Hadoop-DN-01:/home/hadoopuser
    scp -P 6000 -r /home/hadoopuser/hadoop-2.6.0-cdh5.6.0 hadoopuser@Hadoop-DN-02:/home/hadoopuser
    scp -P 6000 -r /home/hadoopuser/hadoop-2.6.0-cdh5.6.0 hadoopuser@Hadoop-DN-03:/home/hadoopuser
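The four scp commands above differ only in the target host, so a loop keeps them in sync with the host plan. A sketch assuming SSH port 6000 and the install path used in this article; `run` and `distribute` are hypothetical names, and `DRY_RUN=1` prints the commands instead of copying.

```shell
#!/bin/sh
# Copy the configured Hadoop directory from Hadoop-NN-01 to the other nodes.
SSH_PORT=${SSH_PORT:-6000}
HADOOP_DIR=${HADOOP_DIR:-/home/hadoopuser/hadoop-2.6.0-cdh5.6.0}
TARGETS="Hadoop-NN-02 Hadoop-DN-01 Hadoop-DN-02 Hadoop-DN-03"

# Execute a command, or just print it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
}

distribute() {
  for h in $TARGETS; do
    # scp takes the port as capital -P (ssh uses lowercase -p).
    run scp -P "$SSH_PORT" -r "$HADOOP_DIR" "hadoopuser@$h:/home/hadoopuser" || return 1
  done
}
```

Stopping at the first failed copy (`return 1`) avoids a half-distributed configuration going unnoticed.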

4. Start HDFS

(1) Start the JournalNodes (on Hadoop-DN-01, Hadoop-DN-02, Hadoop-DN-03):

    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.6.0]$ hadoop-daemon.sh start journalnode
    starting journalnode, logging to /home/hadoopuser/hadoop-2.6.0-cdh5.6.0/logs/hadoop-puppet-journalnode-BigData-03.out

Verify the JournalNode:

    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.6.0]$ jps
    5652 QuorumPeerMain
    9076 Jps
    9029 JournalNode

Stop the JournalNode:

    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.6.0]$ hadoop-daemon.sh stop journalnode
    stopping journalnode

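(2) Format the NameNode

On Hadoop-NN-01, run hdfs namenode -format. The JournalNodes must be running at this point, because the shared edits directory lives on them.

    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.6.0]$ hdfs namenode -format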

(3) Synchronize the NameNode metadata

Copy the metadata from Hadoop-NN-01 to Hadoop-NN-02.

This mainly covers dfs.namenode.name.dir and dfs.namenode.edits.dir; also make sure the shared storage directory (dfs.namenode.shared.edits.dir) contains all of the NameNode's metadata.

    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.6.0]$ scp -P 6000 -r data/ hadoopuser@Hadoop-NN-02:/home/hadoopuser/hadoop-2.6.0-cdh5.6.0

(4) Initialize ZKFC

This creates a znode in ZooKeeper that records the failover state.

On Hadoop-NN-01: hdfs zkfc -formatZK

    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.6.0]$ hdfs zkfc -formatZK

(5) Start

Whole-cluster start, on Hadoop-NN-01: start-dfs.sh

    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.6.0]$ start-dfs.sh

Per-process start:

<1> NameNode (Hadoop-NN-01, Hadoop-NN-02): hadoop-daemon.sh start namenode

<2> DataNode (Hadoop-DN-01, Hadoop-DN-02, Hadoop-DN-03): hadoop-daemon.sh start datanode

<3> JournalNode (Hadoop-DN-01, Hadoop-DN-02, Hadoop-DN-03): hadoop-daemon.sh start journalnode

<4> ZKFC (Hadoop-NN-01, Hadoop-NN-02): hadoop-daemon.sh start zkfc
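On the very first start, the steps (1) to (5) must run in order on different hosts. The sketch below drives them from Hadoop-NN-01 over SSH port 6000, assuming ZooKeeper is already running and the Hadoop scripts are on PATH for non-interactive SSH sessions; `on` and `init_hdfs_ha` are hypothetical names, and `DRY_RUN=1` prints each remote command. Instead of copying `data/` with scp, it uses `hdfs namenode -bootstrapStandby` on Hadoop-NN-02, a real alternative that pulls the metadata from the running first NameNode (which is why that NameNode is started first).

```shell
#!/bin/sh
SSH_PORT=${SSH_PORT:-6000}
JN_HOSTS="Hadoop-DN-01 Hadoop-DN-02 Hadoop-DN-03"

# Run a command on a remote host, or print "[host] command" when DRY_RUN=1.
on() {
  host=$1; shift
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "[$host] $*"; else ssh -p "$SSH_PORT" "$host" "$*"; fi
}

init_hdfs_ha() {
  # JournalNodes first: formatting writes to the shared edits directory.
  for h in $JN_HOSTS; do on "$h" hadoop-daemon.sh start journalnode || return 1; done
  on Hadoop-NN-01 hdfs namenode -format -nonInteractive || return 1
  on Hadoop-NN-01 hadoop-daemon.sh start namenode || return 1
  # Copy the freshly formatted metadata to the second NameNode.
  on Hadoop-NN-02 hdfs namenode -bootstrapStandby || return 1
  on Hadoop-NN-01 hdfs zkfc -formatZK || return 1
  on Hadoop-NN-01 start-dfs.sh
}
```

This is only for the initial setup; running the format step again on an existing cluster would wipe its metadata, which is why `-nonInteractive` is used (it aborts instead of prompting if the directories already exist).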

(6) Verify

<1> Processes

NameNode: jps

    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.6.0]$ jps
    9329 JournalNode
    9875 NameNode
    10155 DFSZKFailoverController
    10223 Jps

DataNode: jps

    [hadoopuser@Linux05 hadoop-2.6.0-cdh5.6.0]$ jps
    9498 Jps
    9019 JournalNode
    9389 DataNode
    5613 QuorumPeerMain

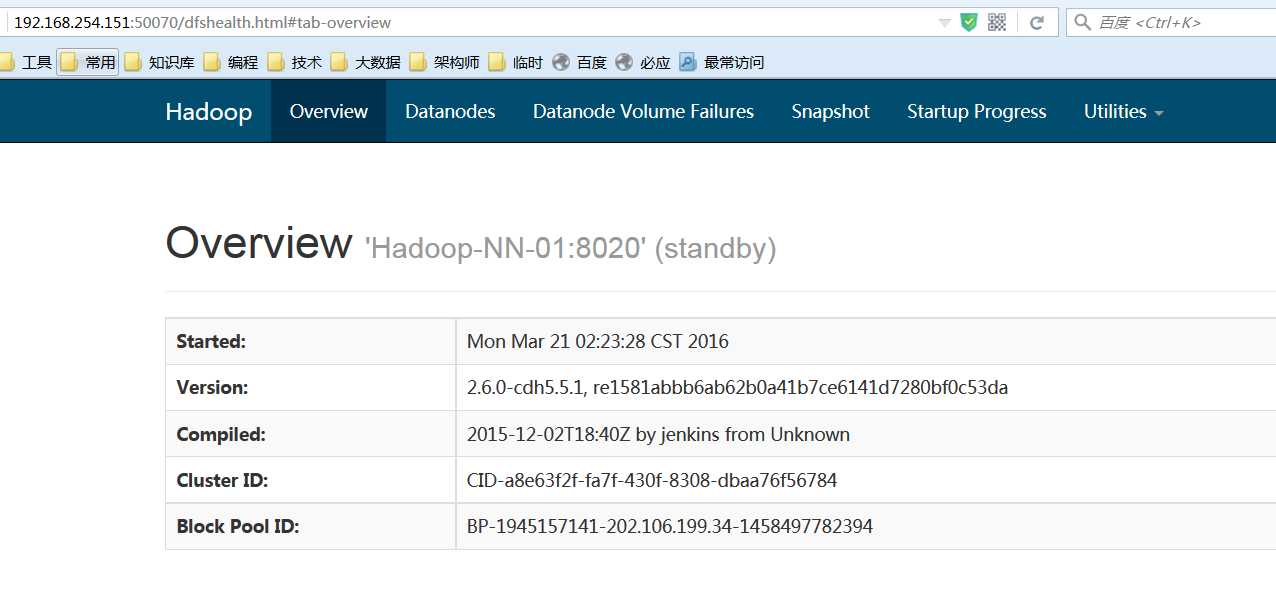

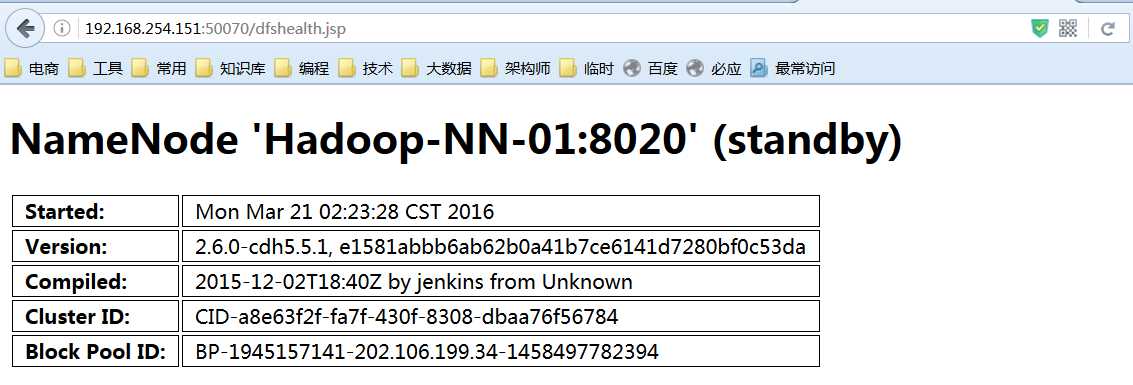

<2> Web UI:

Active node: http://192.168.254.151:50070

(7) Stop: stop-dfs.sh

    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.6.0]$ stop-dfs.sh

5. Start YARN

(1) Start

<1> Whole-cluster start

Start YARN on Hadoop-NN-01 (the script is in $HADOOP_HOME/sbin):

    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.6.0]$ start-yarn.sh

Start the standby RM on Hadoop-NN-02:

    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.6.0]$ yarn-daemon.sh start resourcemanager

<2> Per-process start

ResourceManager (Hadoop-NN-01, Hadoop-NN-02): yarn-daemon.sh start resourcemanager

NodeManager (Hadoop-DN-01, Hadoop-DN-02, Hadoop-DN-03): yarn-daemon.sh start nodemanager

(2) Verify

<1> Processes:

ResourceManager nodes: Hadoop-NN-01, Hadoop-NN-02

    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.6.0]$ jps
    9329 JournalNode
    9875 NameNode
    10355 ResourceManager
    10646 Jps
    10155 DFSZKFailoverController

NodeManager nodes: Hadoop-DN-01, Hadoop-DN-02, Hadoop-DN-03

    [hadoopuser@Linux05 hadoop-2.6.0-cdh5.6.0]$ jps
    9552 NodeManager
    9680 Jps
    9019 JournalNode
    9389 DataNode
    5613 QuorumPeerMain

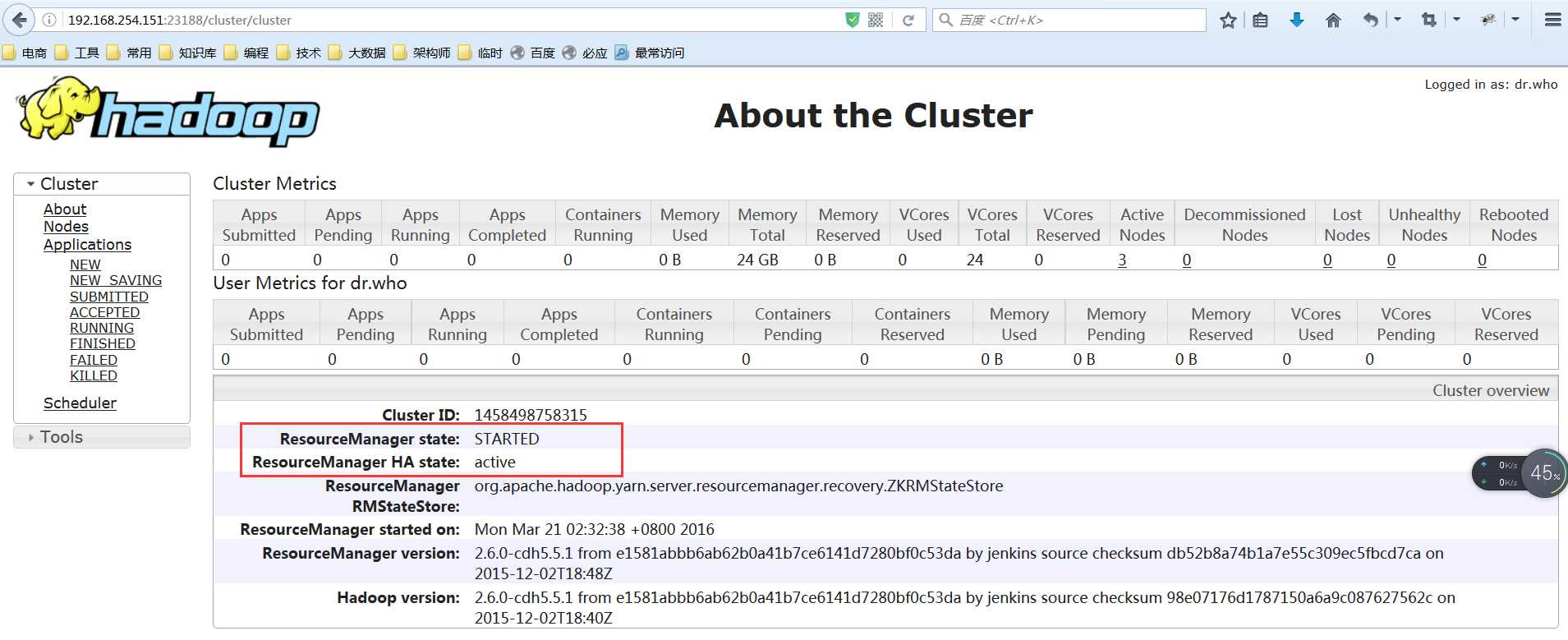

<2> Web UI

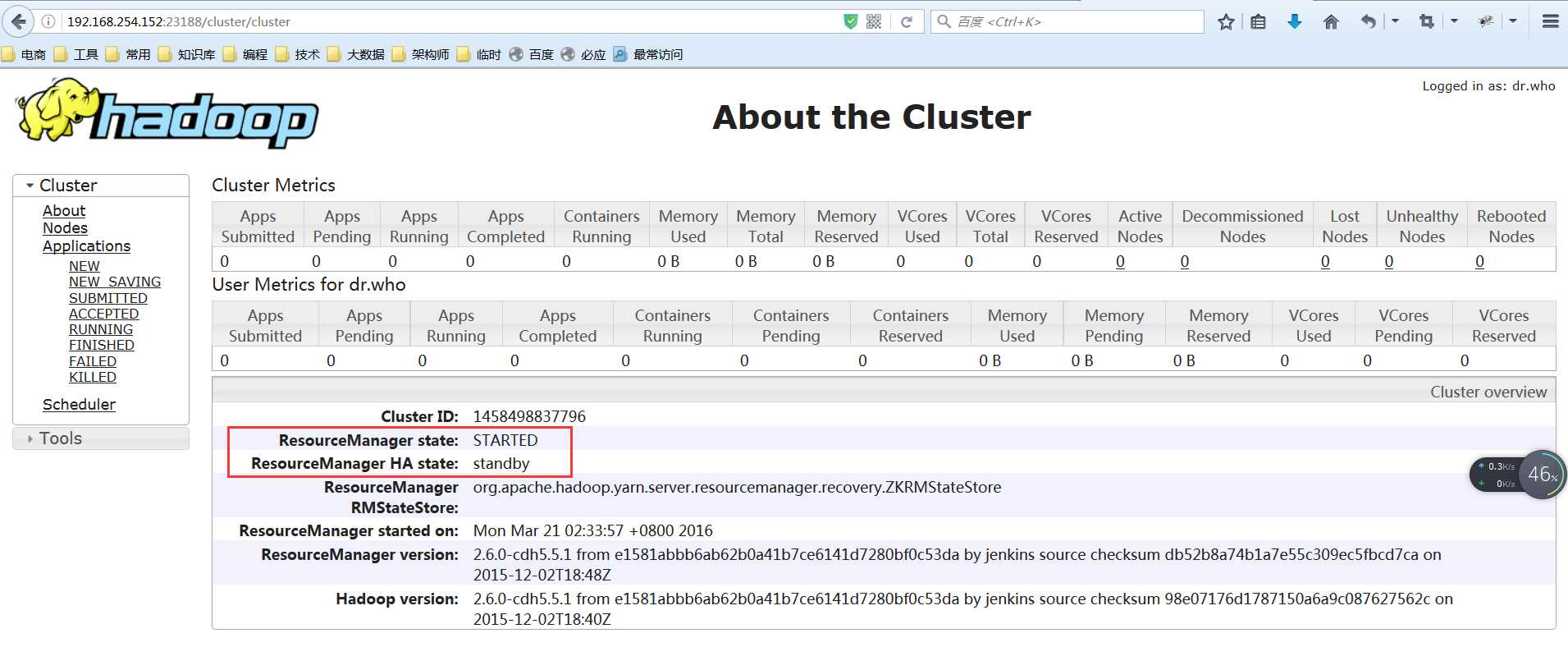

ResourceManager (Active): 192.168.254.151:23188

ResourceManager (Standby): 192.168.254.152:23188
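Besides the web pages, the active/standby roles can be queried from the command line with `hdfs haadmin -getServiceState` and `yarn rmadmin -getServiceState`, using the IDs configured above (nn1/nn2, rm1/rm2). A small sketch; `ha_report` is a hypothetical name, and a node that cannot be queried is reported as `unreachable`.

```shell
#!/bin/sh
# Print the HA state of both NameNodes and both ResourceManagers.
ha_report() {
  for id in nn1 nn2; do
    echo "hdfs $id: $(hdfs haadmin -getServiceState "$id" 2>/dev/null || echo unreachable)"
  done
  for id in rm1 rm2; do
    echo "yarn $id: $(yarn rmadmin -getServiceState "$id" 2>/dev/null || echo unreachable)"
  done
}
```

In a healthy cluster exactly one of nn1/nn2 and one of rm1/rm2 should report `active`; stopping the active process and re-running `ha_report` is a simple way to observe automatic failover.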

(3) Stop

On Hadoop-NN-01: stop-yarn.sh

    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.6.0]$ stop-yarn.sh

On Hadoop-NN-02: yarn-daemon.sh stop resourcemanager

    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.6.0]$ yarn-daemon.sh stop resourcemanager

Appendix: summary of common Hadoop commands

    # Step 1: start ZooKeeper on the 3 DataNodes
    [hadoopuser@Linux01 ~]$ zkServer.sh start
    [hadoopuser@Linux01 ~]$ zkServer.sh stop   # stop

    # Step 2: start the JournalNodes
    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.5.1]$ hadoop-daemon.sh start journalnode
    starting journalnode, logging to /home/hadoopuser/hadoop/hadoop-2.6.0-cdh5.5.1/logs/hadoop-puppet-journalnode-BigData-03.out
    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.5.1]$ hadoop-daemon.sh stop journalnode   # stop
    stopping journalnode

    # Step 3: start HDFS
    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.5.1]$ start-dfs.sh
    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.5.1]$ stop-dfs.sh   # stop

    # Step 4: start YARN
    # Start YARN on Hadoop-NN-01
    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.5.1]$ start-yarn.sh
    # Start the standby RM on Hadoop-NN-02
    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.5.1]$ yarn-daemon.sh start resourcemanager
    # Stop:
    # On Hadoop-NN-01:
    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.5.1]$ stop-yarn.sh
    # On Hadoop-NN-02:
    [hadoopuser@Linux01 hadoop-2.6.0-cdh5.5.1]$ yarn-daemon.sh stop resourcemanager
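The four steps of the summary can be wrapped into start and stop functions run from Hadoop-NN-01. A sketch assuming SSH port 6000 and that `zkServer.sh` and the Hadoop sbin scripts are on PATH for non-interactive SSH sessions; `on`, `start_cluster` and `stop_cluster` are hypothetical names, and `DRY_RUN=1` prints the commands. As in the article, the standby ResourceManager is handled separately because `start-yarn.sh` in Hadoop 2.x only starts the ResourceManager on the local machine.

```shell
#!/bin/sh
SSH_PORT=${SSH_PORT:-6000}
DN_HOSTS="Hadoop-DN-01 Hadoop-DN-02 Hadoop-DN-03"

# Run a command on a remote host, or print "[host] command" when DRY_RUN=1.
on() {
  host=$1; shift
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "[$host] $*"; else ssh -p "$SSH_PORT" "$host" "$*"; fi
}

start_cluster() {
  for h in $DN_HOSTS; do on "$h" zkServer.sh start || return 1; done                   # step 1
  for h in $DN_HOSTS; do on "$h" hadoop-daemon.sh start journalnode || return 1; done  # step 2
  on Hadoop-NN-01 start-dfs.sh || return 1                                             # step 3
  on Hadoop-NN-01 start-yarn.sh || return 1                                            # step 4
  on Hadoop-NN-02 yarn-daemon.sh start resourcemanager                                 # standby RM
}

# Reverse order: YARN first, ZooKeeper last.
stop_cluster() {
  on Hadoop-NN-02 yarn-daemon.sh stop resourcemanager
  on Hadoop-NN-01 stop-yarn.sh
  on Hadoop-NN-01 stop-dfs.sh
  for h in $DN_HOSTS; do on "$h" zkServer.sh stop; done
}
```

Stopping in reverse order keeps ZooKeeper available while the ZKFC and ResourceManagers shut down.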

Notice: this article is the author's personal notes only; please do not repost it.


Original post: http://www.cnblogs.com/hunttown/p/5452138.html