Maven is a project management tool that sets project information through XML configuration.

Maven uses a POM (Project Object Model).

1. Set up and configure the development environment.

2. Write your map and reduce functions and run them in local (standalone) mode, from the command line or within your IDE.

3. Unit test --> test on a small dataset --> test on the full dataset once the job is running on a cluster --> tuning

For example, write a configuration-1.xml file like this:

    <?xml version="1.0"?>
    <configuration>
      <property>
        <name>color</name>
        <value>yellow</value>
        <description>Color</description>
      </property>
      <property>
        <name>size</name>
        <value>10</value>
        <description>Size</description>
      </property>
      <property>
        <name>weight</name>
        <value>heavy</value>
        <final>true</final>
        <description>Weight</description>
      </property>
      <property>
        <name>size-weight</name>
        <value>${size},${weight}</value>
        <description>Size and weight</description>
      </property>
    </configuration>

Then access it with the following code:

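    Configuration conf = new Configuration();
    conf.addResource("configuration-1.xml");
    // Resources are applied in the order they are added; a property in a later
    // resource overrides an earlier one, except for properties marked <final>.
    conf.addResource("configuration-2.xml");

    // These assertions assume configuration-2.xml does not redefine color or size.
    assertThat(conf.get("color"), is("yellow"));
    assertThat(conf.getInt("size", 0), is(10));
    // breadth is not defined anywhere, so the supplied default is returned.
    assertThat(conf.get("breadth", "wide"), is("wide"));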
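The override and expansion rules can be tried out without Hadoop on the classpath. The following is a minimal sketch in plain Java, not Hadoop's Configuration class: `LayeredConfig` and its `set`/`get` methods are hypothetical names, the values given for configuration-2.xml are an assumption (that file's contents are not shown here), and only a single pass of `${...}` expansion is modeled.

```java
import java.util.HashSet;
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Set;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Toy model of layered properties; hypothetical, NOT Hadoop's Configuration. */
final class LayeredConfig {
    private static final Pattern VAR = Pattern.compile("\\$\\{([^}]+)\\}");
    private final Map<String, String> values = new LinkedHashMap<>();
    private final Set<String> finals = new HashSet<>();

    /** A later call overrides an earlier one unless the earlier one was final. */
    void set(String name, String value, boolean isFinal) {
        if (finals.contains(name)) {
            return; // the earlier definition was final, so ignore the override
        }
        values.put(name, value);
        if (isFinal) {
            finals.add(name);
        }
    }

    /** Returns the expanded value, or defaultValue if the name is undefined. */
    String get(String name, String defaultValue) {
        String raw = values.get(name);
        return raw == null ? defaultValue : expand(raw);
    }

    /** Replaces each ${name} with its current value; unknown names stay as-is. */
    private String expand(String raw) {
        Matcher m = VAR.matcher(raw);
        StringBuffer out = new StringBuffer();
        while (m.find()) {
            String replacement = values.getOrDefault(m.group(1), m.group(0));
            m.appendReplacement(out, Matcher.quoteReplacement(replacement));
        }
        m.appendTail(out);
        return out.toString();
    }

    public static void main(String[] args) {
        LayeredConfig conf = new LayeredConfig();
        // Properties from configuration-1.xml in the text.
        conf.set("size", "10", false);
        conf.set("weight", "heavy", true);
        conf.set("size-weight", "${size},${weight}", false);
        // Assumed contents of configuration-2.xml (hypothetical values).
        conf.set("size", "12", false);
        conf.set("weight", "light", false); // ignored: weight is final
        System.out.println(conf.get("size-weight", null)); // prints 12,heavy
    }
}
```

In this model the second definition of size wins, while the second definition of weight is dropped because the first one was marked final.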

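Note: when variables are expanded, system properties take priority over properties defined in resource files. For example, after

    System.setProperty("size", "14");

conf.get("size-weight") returns "14,heavy".

Options specified with -D on the command line take priority over properties from the configuration files.

For example, -D mapreduce.job.reduces=n overrides the number of reducers set on the cluster or in any client-side configuration files.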

% hadoop ConfigurationPrinter -D color=yellow | grep color

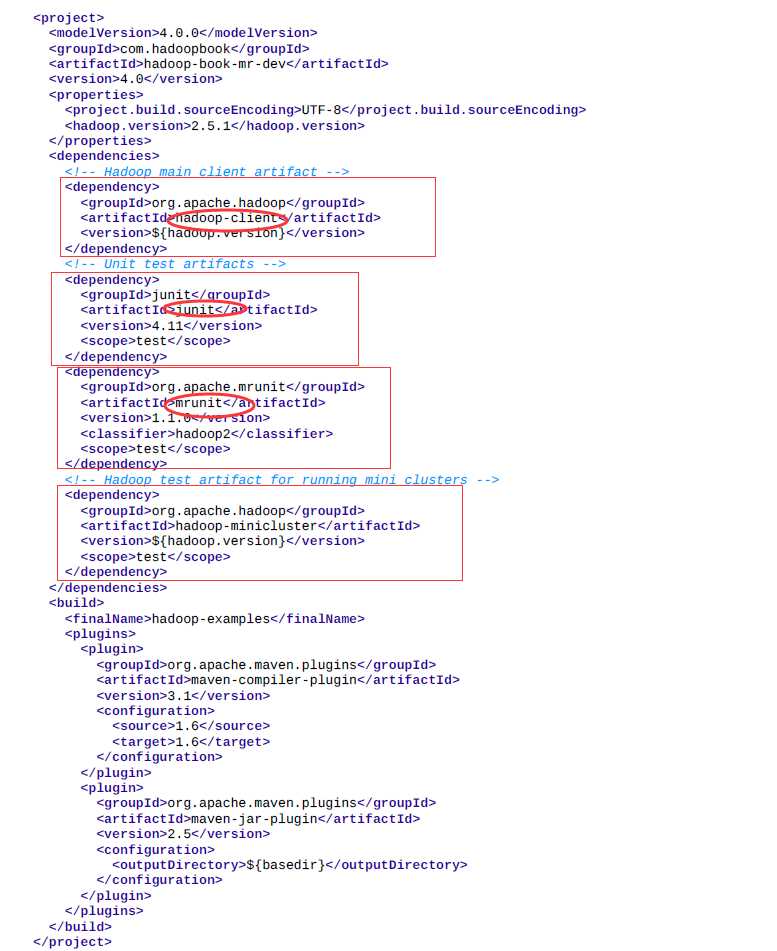
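The precedence of -D options over file-based properties can be pictured with a small plain-Java sketch. This is only a toy model of the precedence rule, not how Hadoop parses its generic options: `DashDOverrides` and `apply` are hypothetical names, only the space-separated `-D key=value` form is handled, and the value `red` is made up for illustration.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Toy model of "-D key=value" precedence; hypothetical, not Hadoop's parser. */
final class DashDOverrides {

    /**
     * Applies every "-D key=value" pair in args on top of props (which stands
     * for values already loaded from configuration files) and returns the
     * remaining, non-option arguments. Pairs without '=' are silently skipped.
     */
    static List<String> apply(String[] args, Map<String, String> props) {
        List<String> rest = new ArrayList<>();
        for (int i = 0; i < args.length; i++) {
            if (args[i].equals("-D") && i + 1 < args.length) {
                String[] kv = args[++i].split("=", 2);
                if (kv.length == 2) {
                    props.put(kv[0], kv[1]); // the command line wins over the file
                }
            } else {
                rest.add(args[i]);
            }
        }
        return rest;
    }

    public static void main(String[] args) {
        Map<String, String> props = new LinkedHashMap<>();
        props.put("color", "yellow"); // value from configuration-1.xml
        List<String> rest =
            apply(new String[] {"-D", "color=red", "input", "output"}, props);
        System.out.println(props.get("color") + " " + rest); // prints red [input, output]
    }
}
```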

Maven POMs (Project Object Models) declare the dependencies needed for building and testing MapReduce programs. A POM is simply an XML file (pom.xml).

Many IDEs can read Maven POMs directly, so you can just point them at the directory containing the pom.xml file and start writing code.

Alternatively, you can use Maven to generate configuration files for your IDE. For example, the following creates Eclipse configuration files so you can import the project into Eclipse:

% mvn eclipse:eclipse -DdownloadSources=true -DdownloadJavadocs=true

It is common to switch between running the application locally and running it on a cluster.

For example, the following command uses the -conf option to show a directory listing on the HDFS server running in pseudodistributed mode on localhost:

    % hadoop fs -conf conf/hadoop-localhost.xml -ls
    Found 2 items
    drwxr-xr-x   - tom supergroup          0 2014-09-08 10:19 input
    drwxr-xr-x   - tom supergroup          0 2014-09-08 10:19 output

Mapper: to get year and temperature from an input string

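    import java.io.IOException;

    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;

    public class MaxTemperatureMapper
        extends Mapper<LongWritable, Text, Text, IntWritable> {

      @Override
      public void map(LongWritable key, Text value, Context context)
          throws IOException, InterruptedException {
        // Records are fixed-width, so fields are read by character offset.
        String line = value.toString();
        String year = line.substring(15, 19);
        int airTemperature = Integer.parseInt(line.substring(87, 92));
        // Emit (year, temperature) for the reducer to aggregate.
        context.write(new Text(year), new IntWritable(airTemperature));
      }
    }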
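The two substring offsets are easy to get wrong, so it can help to check them in plain Java before involving Hadoop at all. The sketch below is an assumption-laden illustration: `NcdcRecordSketch` and its two static methods are hypothetical names, and the sample line is the record used in these notes' mapper unit test.

```java
/** Plain-Java check of the mapper's fixed-width offsets; hypothetical helper. */
final class NcdcRecordSketch {

    /** Characters 15..18 hold the four-digit year. */
    static String year(String line) {
        return line.substring(15, 19);
    }

    /** Characters 87..91 hold a sign followed by four digits. */
    static int airTemperature(String line) {
        return Integer.parseInt(line.substring(87, 92));
    }

    public static void main(String[] args) {
        String line = "0043011990999991950051518004+68750+023550FM-12+0382"
            + "99999V0203201N00261220001CN9999999N9-00111+99999999999";
        // Prints the year and the temperature separated by a tab: 1950 and -11.
        System.out.println(year(line) + "\t" + airTemperature(line));
    }
}
```

The parsed value -11 matches the output the mapper unit test expects for this record.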

Unit test for the Mapper:

    import java.io.IOException;

    import org.apache.hadoop.io.*;
    import org.apache.hadoop.mrunit.mapreduce.MapDriver;
    import org.junit.*;

    public class MaxTemperatureMapperTest {

      @Test
      public void processesValidRecord() throws IOException, InterruptedException {
        Text value = new Text(
            "0043011990999991950051518004+68750+023550FM-12+0382" +
            //         Year ^^^^
            "99999V0203201N00261220001CN9999999N9-00111+99999999999");
            //                       Temperature ^^^^^

        new MapDriver<LongWritable, Text, Text, IntWritable>()
            .withMapper(new MaxTemperatureMapper())
            .withInput(new LongWritable(0), value)
            .withOutput(new Text("1950"), new IntWritable(-11))
            .runTest();
      }
    }

Reducer: to get the maximum

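    import java.io.IOException;

    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Reducer;

    public class MaxTemperatureReducer
        extends Reducer<Text, IntWritable, Text, IntWritable> {

      @Override
      public void reduce(Text key, Iterable<IntWritable> values, Context context)
          throws IOException, InterruptedException {
        // Track the largest temperature seen for this key (the year).
        int maxValue = Integer.MIN_VALUE;
        for (IntWritable value : values) {
          maxValue = Math.max(maxValue, value.get());
        }
        context.write(key, new IntWritable(maxValue));
      }
    }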

Unit test for the Reducer:

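    import java.io.IOException;
    import java.util.Arrays;

    import org.apache.hadoop.io.*;
    import org.apache.hadoop.mrunit.mapreduce.ReduceDriver;
    import org.junit.*;

    public class MaxTemperatureReducerTest {

      @Test
      public void returnsMaximumIntegerInValues() throws IOException, InterruptedException {
        new ReduceDriver<Text, IntWritable, Text, IntWritable>()
            .withReducer(new MaxTemperatureReducer())
            .withInput(new Text("1950"),
                Arrays.asList(new IntWritable(10), new IntWritable(5)))
            .withOutput(new Text("1950"), new IntWritable(10))
            .runTest();
      }
    }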
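To see how the reducer's loop fits between the map output and the final result, the grouping that the framework performs can be imitated in memory. This is a rough sketch only: `LocalMaxReduce` and its methods are hypothetical names, the input pairs are made-up values, and the real shuffle involves partitioning, sorting, and network transfer that are not modeled here.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** In-memory imitation of shuffle + reduce; illustrative only, not Hadoop code. */
final class LocalMaxReduce {

    /** Builds one (year, temperature) pair, as a mapper would emit it. */
    static Map.Entry<String, Integer> pair(String year, int temperature) {
        return new AbstractMap.SimpleEntry<>(year, temperature);
    }

    /** Shuffle step: collects all values sharing a key, with keys kept sorted. */
    static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> p : pairs) {
            grouped.computeIfAbsent(p.getKey(), k -> new ArrayList<>()).add(p.getValue());
        }
        return grouped;
    }

    /** Reduce step: the same max loop as in the reducer's body. */
    static int reduce(Iterable<Integer> values) {
        int maxValue = Integer.MIN_VALUE;
        for (int value : values) {
            maxValue = Math.max(maxValue, value);
        }
        return maxValue;
    }

    /** Runs shuffle and then reduce once per key. */
    static Map<String, Integer> run(List<Map.Entry<String, Integer>> pairs) {
        Map<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> e : shuffle(pairs).entrySet()) {
            result.put(e.getKey(), reduce(e.getValue()));
        }
        return result;
    }

    public static void main(String[] args) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        pairs.add(pair("1950", 10));
        pairs.add(pair("1949", 111));
        pairs.add(pair("1950", 5));
        System.out.println(run(pairs)); // prints {1949=111, 1950=10}
    }
}
```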

Using the Tool interface, it's easy to write a driver to run a MapReduce job.

Then run the driver locally.

    % mvn compile
    % export HADOOP_CLASSPATH=target/classes/
    % hadoop v2.MaxTemperatureDriver -conf conf/hadoop-local.xml input/ncdc/micro output

or

% hadoop v2.MaxTemperatureDriver -fs file:/// -jt local input/ncdc/micro output

The local job runner uses a single JVM to run a job, so as long as all the classes that your job needs are on its classpath, then things will just work.

To run on a cluster, a job's classes must be packaged into a job JAR file that is sent to the cluster.

Hadoop Study Notes 3: Developing MapReduce


Original source: http://www.cnblogs.com/skyEva/p/5567276.html