
Why do I suggest using the Ultimate edition here?

Setting up a Spark development environment with IntelliJ IDEA: reference documentation

Reference: http://spark.apache.org/docs/latest/programming-guide.html

Steps

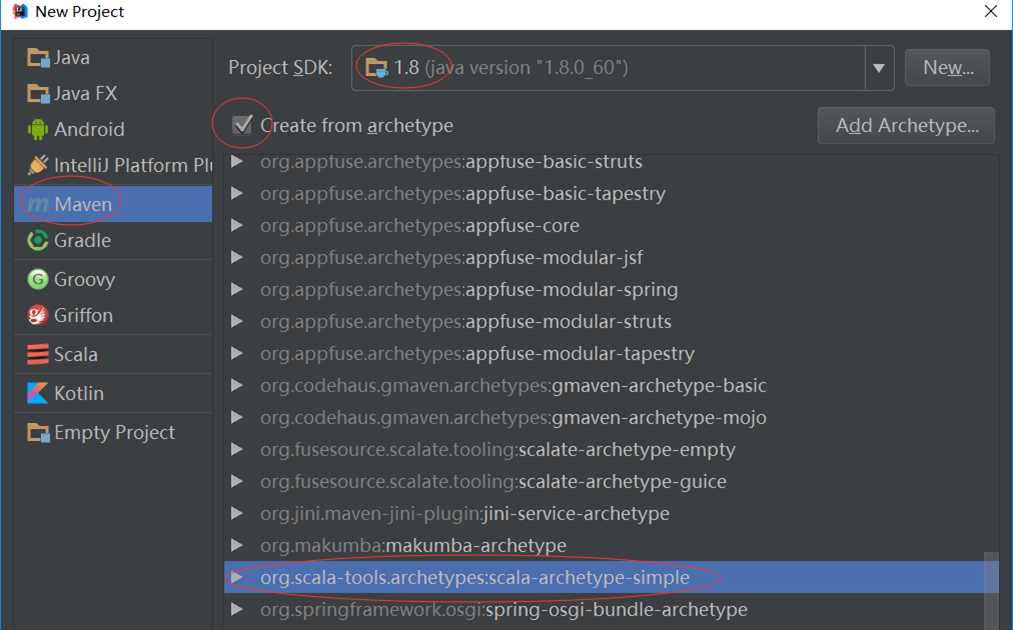

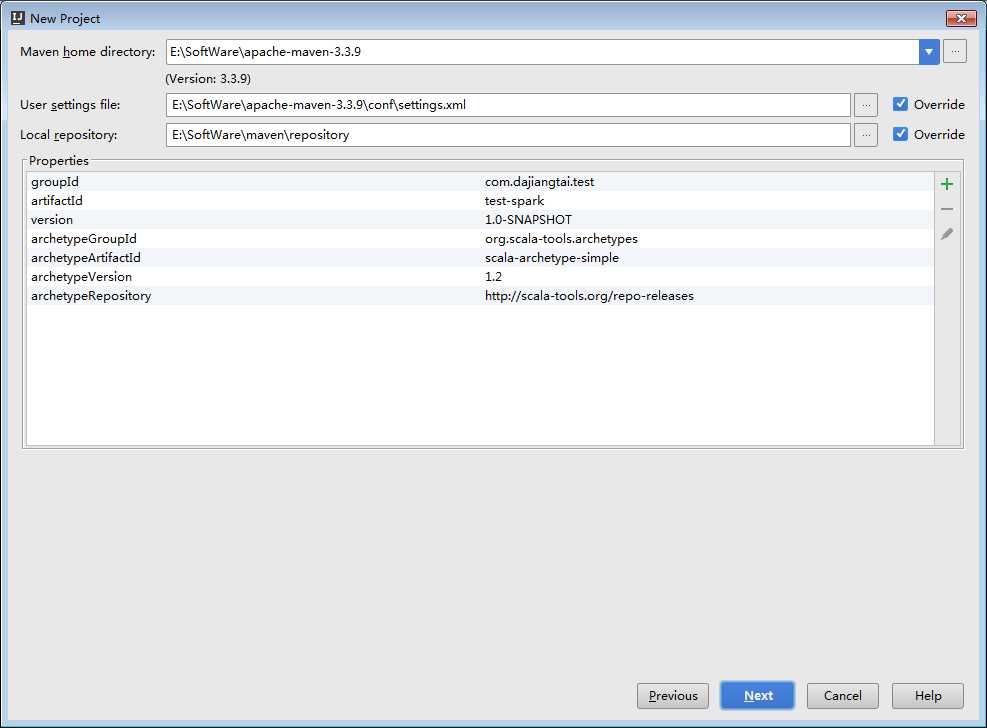

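a) Create a Maven project

b) Add the dependencies (Spark dependencies, packaging plugins, and so on)

Setting up a Spark development environment with IntelliJ IDEA: Maven vs. sbt

a) Use whichever one you know better

b) Maven can also build Scala projects

Setting up a Spark development environment with IntelliJ IDEA: building a Scala project with Maven

Reference: http://docs.scala-lang.org/tutorials/scala-with-maven.html

Steps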

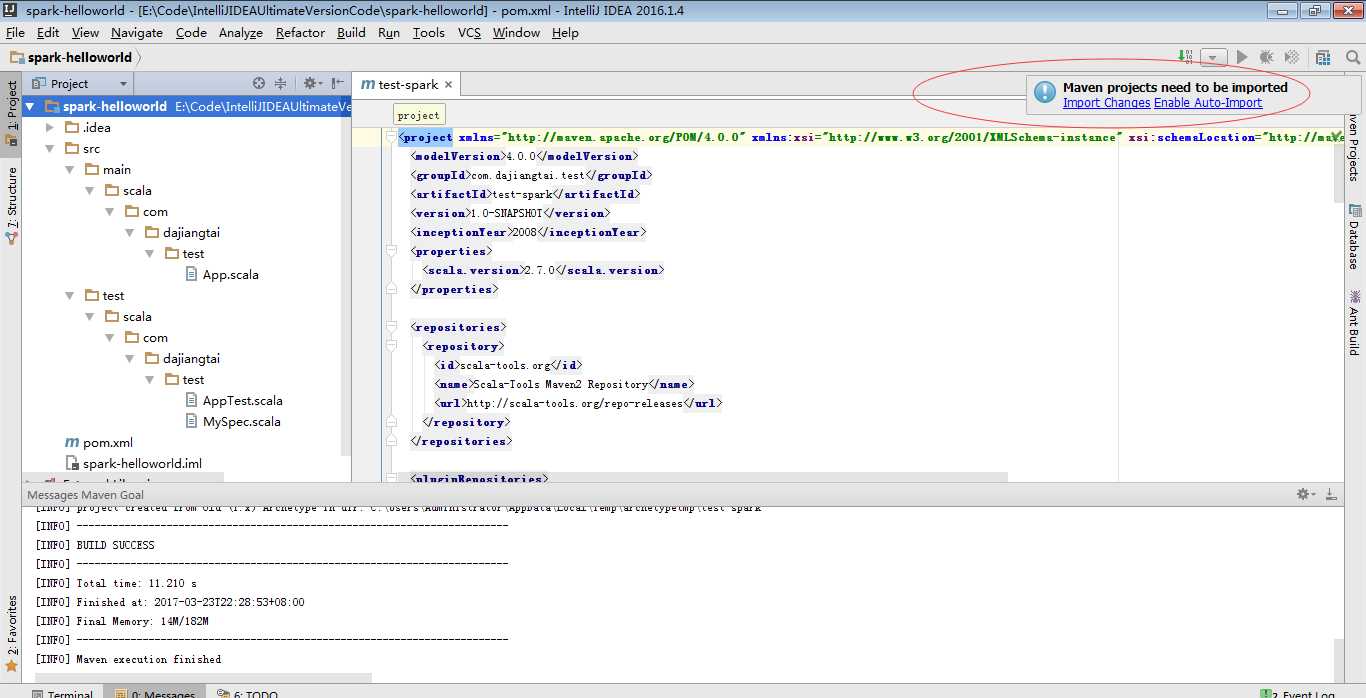

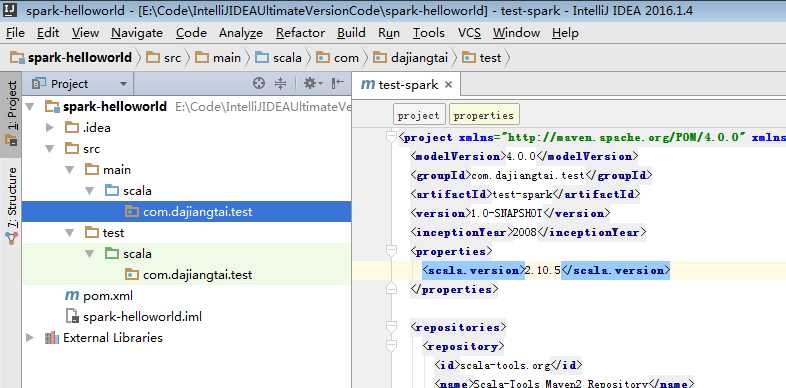

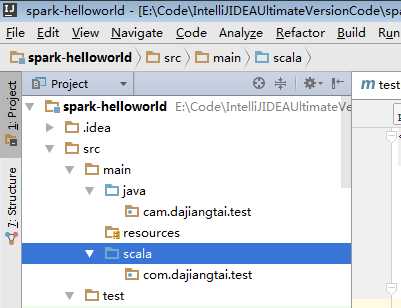

a) Build a Scala project with Maven (based on the net.alchim31.maven:scala-archetype-simple archetype)

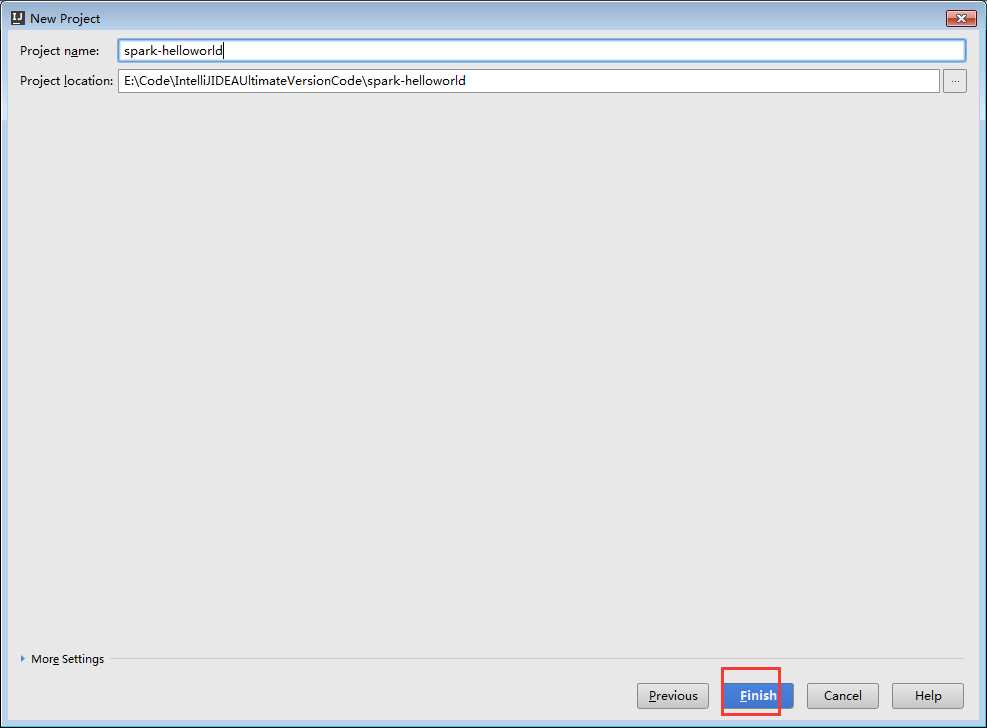

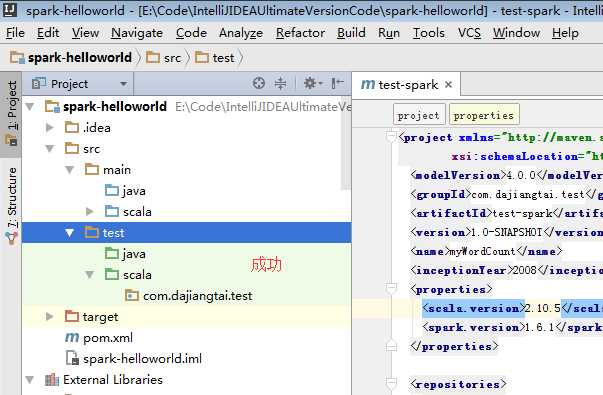

spark-helloworld

E:\Code\IntelliJIDEAUltimateVersionCode\spark-helloworld

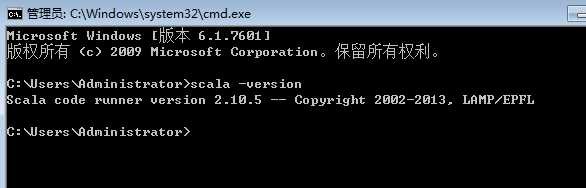

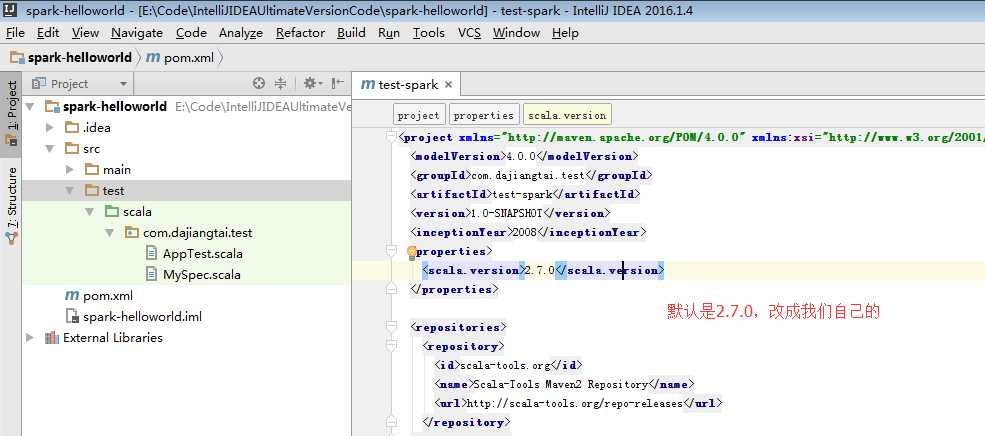

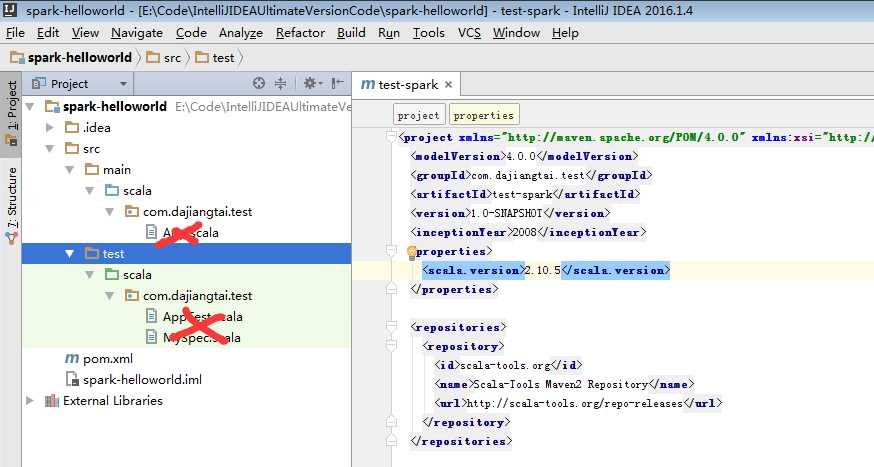

This is because my local Scala version is 2.10.5.

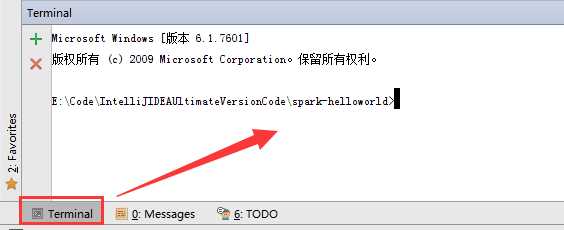

This is really just the Windows cmd terminal; IDEA simply integrates it into the IDE here.

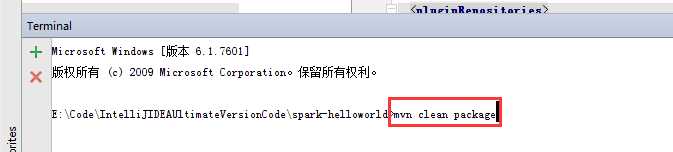

mvn clean package

This is only a test build.

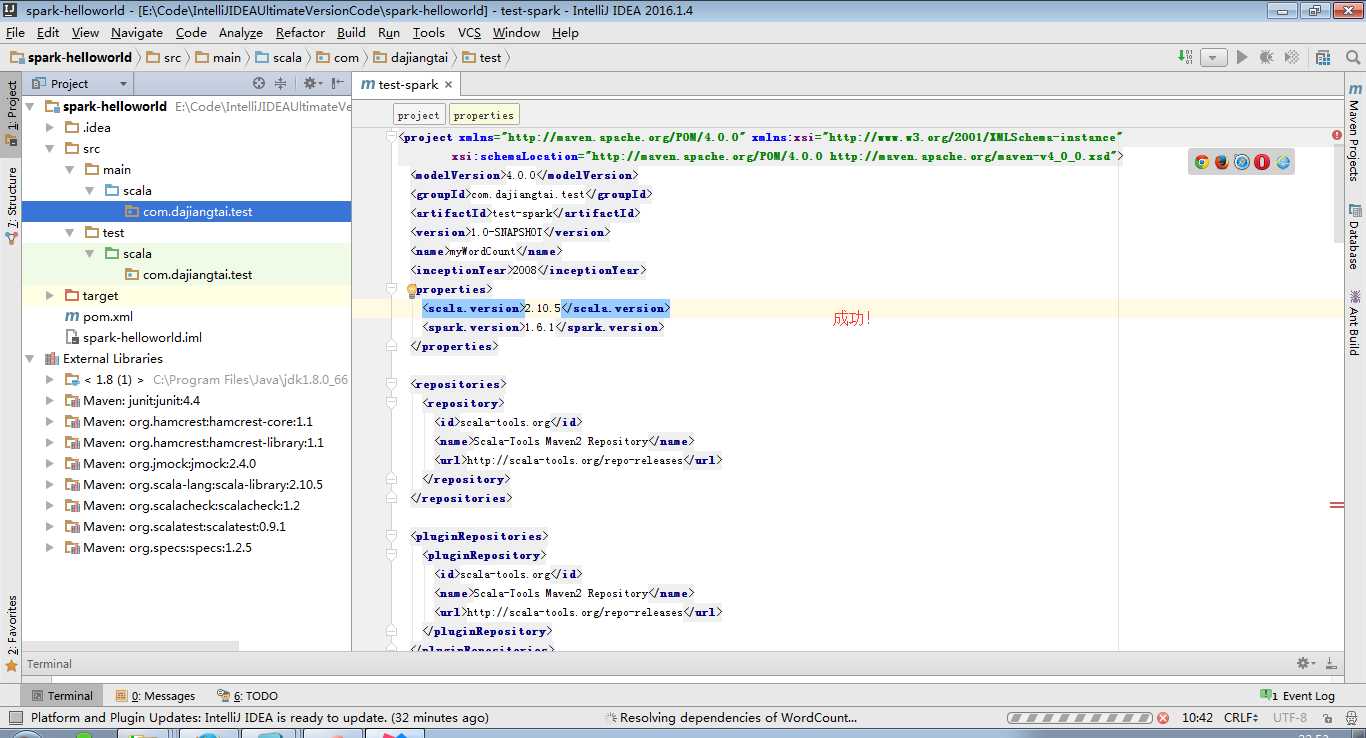

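b) Add the dependencies to pom.xml (Spark dependencies, packaging plugins, and so on)

Note: pay attention to the compatibility between the Scala and Java versions.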

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/maven-v4_0_0.xsd">

<modelVersion>4.0.0</modelVersion>

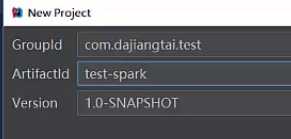

<groupId>com.dajiangtai.test</groupId>

<artifactId>test-spark</artifactId>

<version>1.0-SNAPSHOT</version>

<name>myWordCount</name>

<inceptionYear>2008</inceptionYear>

<properties>

<scala.version>2.10.5</scala.version>

<spark.version>1.6.1</spark.version>

</properties>

<repositories>

<repository>

<id>scala-tools.org</id>

<name>Scala-Tools Maven2 Repository</name>

<url>http://scala-tools.org/repo-releases</url>

</repository>

</repositories>

<pluginRepositories>

<pluginRepository>

<id>scala-tools.org</id>

<name>Scala-Tools Maven2 Repository</name>

<url>http://scala-tools.org/repo-releases</url>

</pluginRepository>

</pluginRepositories>

<dependencies>

<dependency>

<groupId>org.scala-lang</groupId>

<artifactId>scala-library</artifactId>

<version>${scala.version}</version>

</dependency>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>4.4</version>

<scope>test</scope>

</dependency>

<dependency>

<groupId>org.specs</groupId>

<artifactId>specs</artifactId>

<version>1.2.5</version>

<scope>test</scope>

</dependency>

<!--spark -->

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.10</artifactId>

<version>${spark.version}</version>

<scope>provided</scope>

</dependency>

</dependencies>

<build>

<!--

<sourceDirectory>src/main/scala</sourceDirectory>

<testSourceDirectory>src/test/scala</testSourceDirectory>

-->

<plugins>

<plugin>

<groupId>org.scala-tools</groupId>

<artifactId>maven-scala-plugin</artifactId>

<executions>

<execution>

<goals>

<goal>compile</goal>

<goal>testCompile</goal>

</goals>

</execution>

</executions>

<configuration>

<scalaVersion>${scala.version}</scalaVersion>

<args>

<arg>-target:jvm-1.5</arg>

</args>

</configuration>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-eclipse-plugin</artifactId>

<configuration>

<downloadSources>true</downloadSources>

<buildcommands>

<buildcommand>ch.epfl.lamp.sdt.core.scalabuilder</buildcommand>

</buildcommands>

<additionalProjectnatures>

<projectnature>ch.epfl.lamp.sdt.core.scalanature</projectnature>

</additionalProjectnatures>

<classpathContainers>

<classpathContainer>org.eclipse.jdt.launching.JRE_CONTAINER</classpathContainer>

<classpathContainer>ch.epfl.lamp.sdt.launching.SCALA_CONTAINER</classpathContainer>

</classpathContainers>

</configuration>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-shade-plugin</artifactId>

<version>2.4.1</version>

<executions>

<!-- Run shade goal on package phase -->

<execution>

<phase>package</phase>

<goals>

<goal>shade</goal>

</goals>

<configuration>

<transformers>

<!-- add Main-Class to manifest file -->

<transformer

implementation="org.apache.maven.plugins.shade.resource.ManifestResourceTransformer">

<!--<mainClass>com.dajiang.MyDriver</mainClass>-->

</transformer>

</transformers>

<createDependencyReducedPom>false</createDependencyReducedPom>

</configuration>

</execution>

</executions>

</plugin>

</plugins>

</build>

<reporting>

<plugins>

<plugin>

<groupId>org.scala-tools</groupId>

<artifactId>maven-scala-plugin</artifactId>

<configuration>

<scalaVersion>${scala.version}</scalaVersion>

</configuration>

</plugin>

</plugins>

</reporting>

</project>

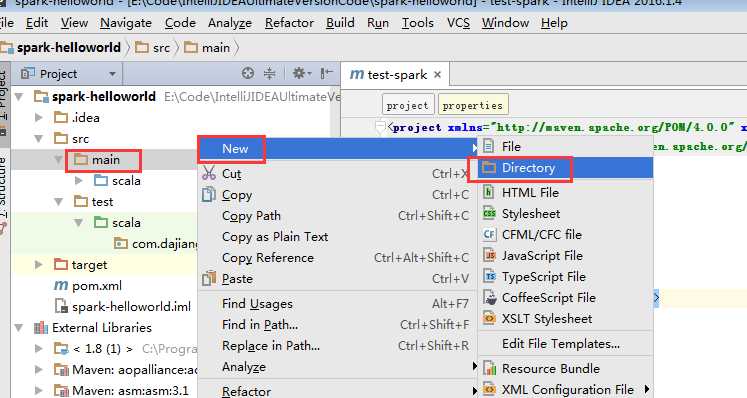

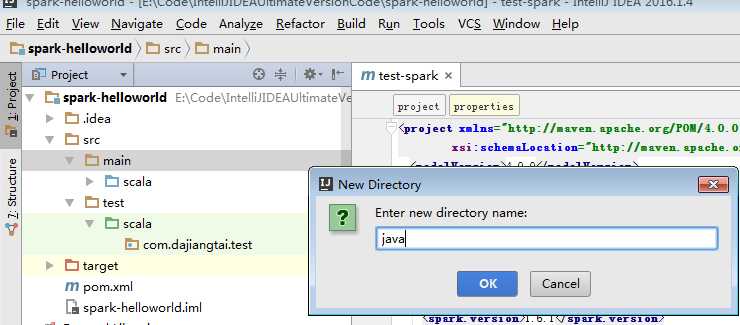

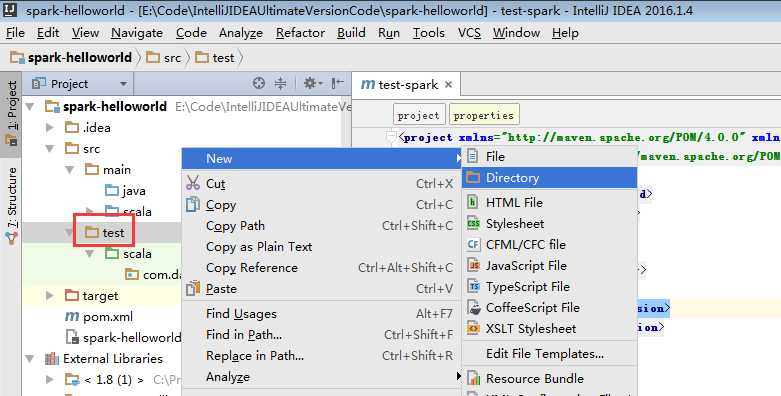

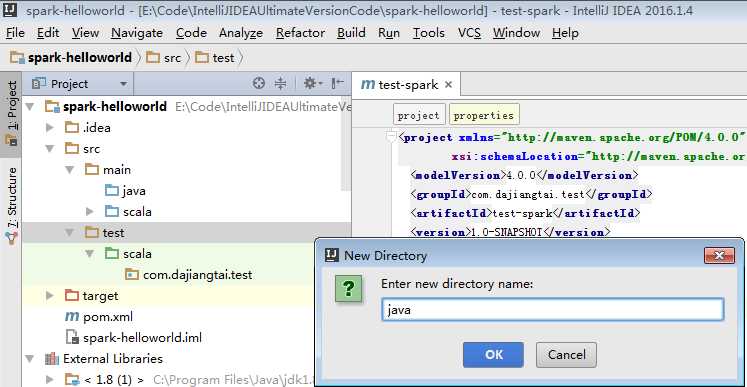

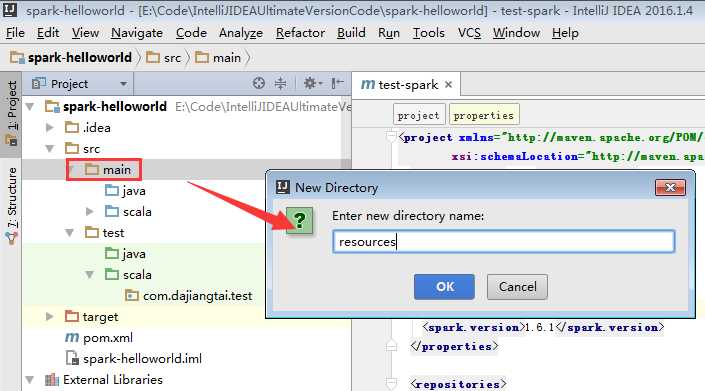

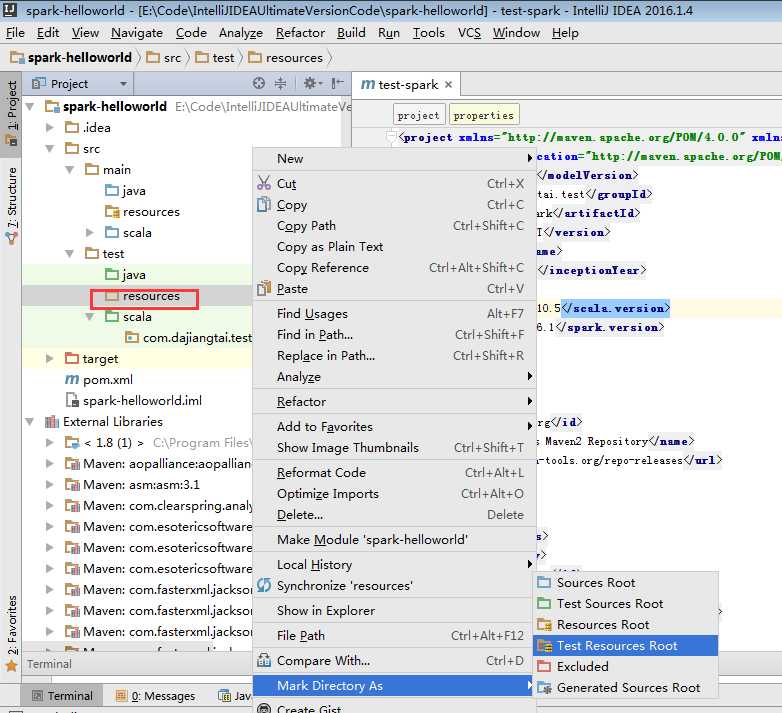

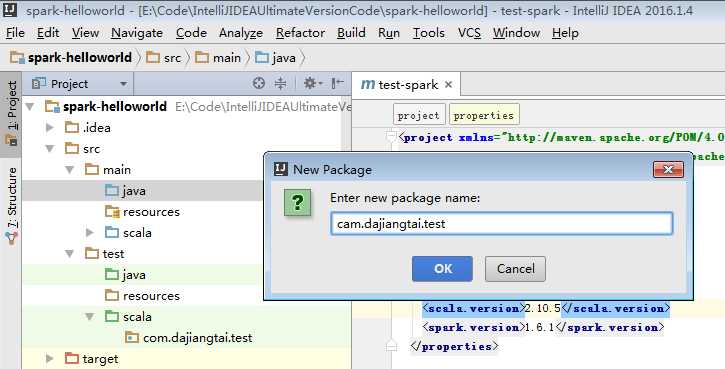

This is to build good development habits and follow coding conventions.

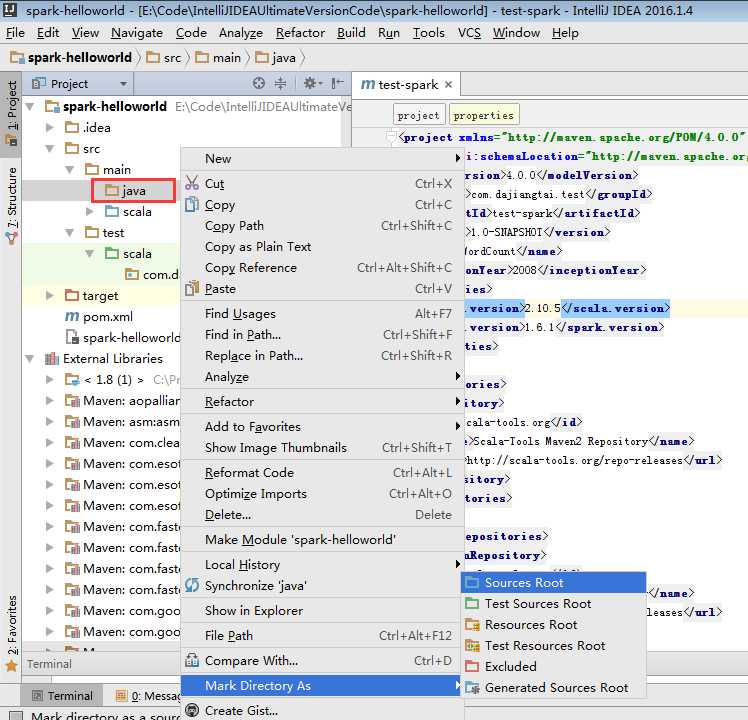

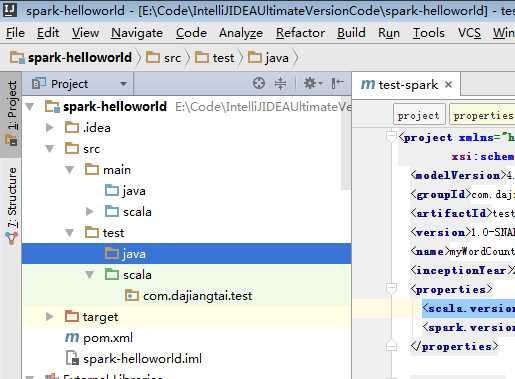

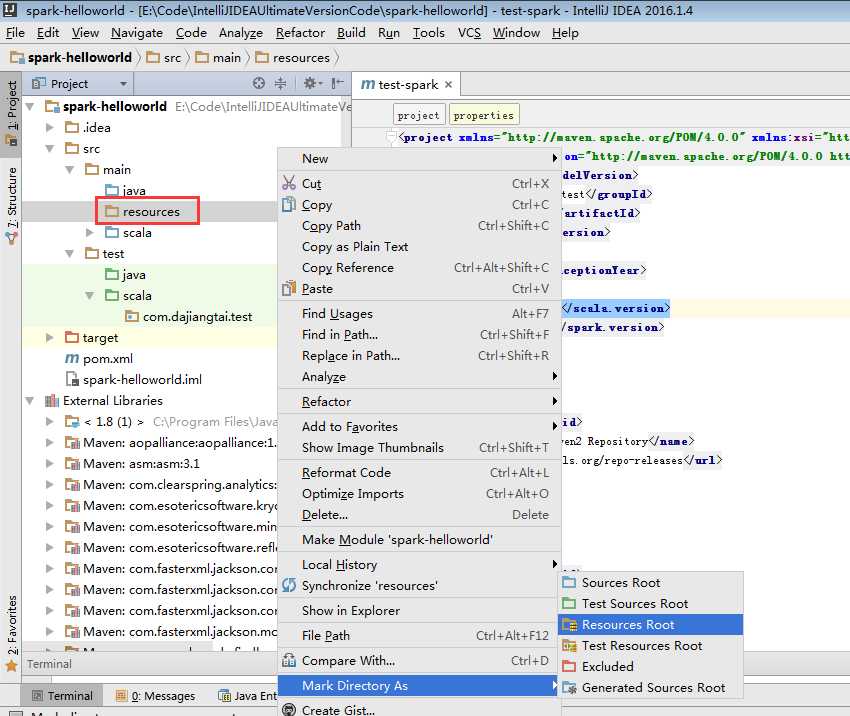

By default, what you have just created does not take effect; the following steps are needed to enable it.

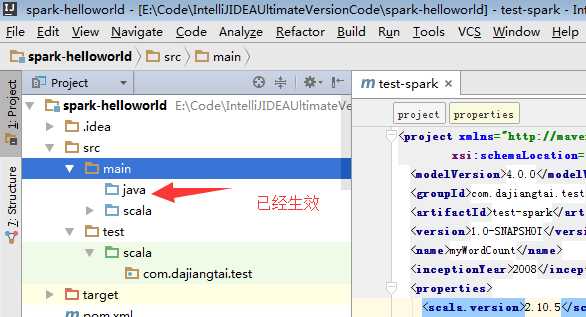

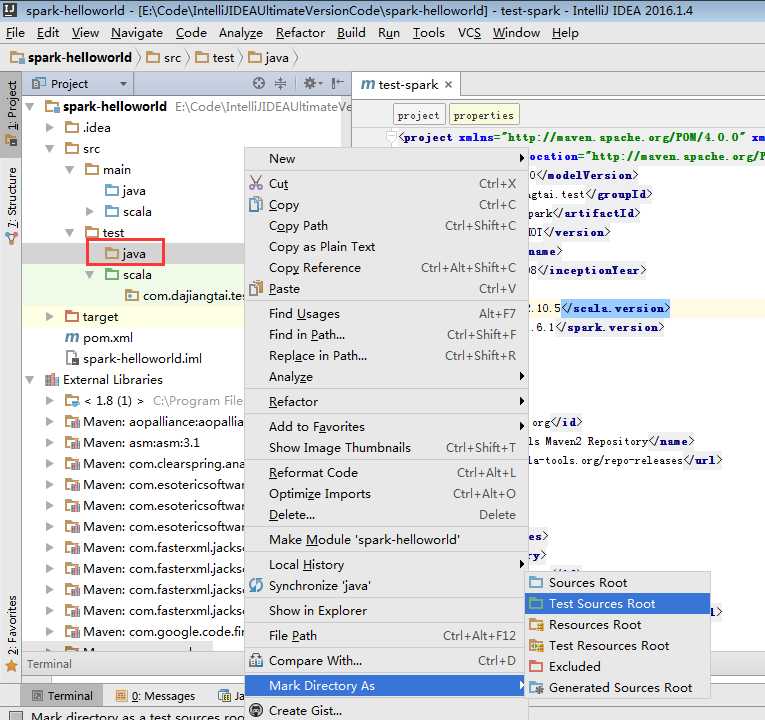

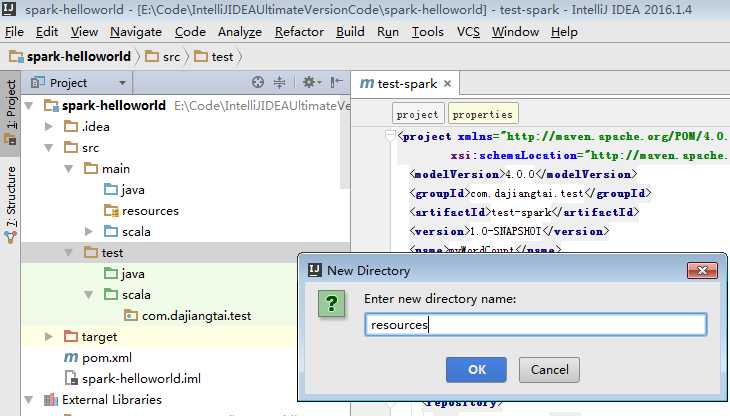

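The same applies to the unit tests below.

By default, they do not take effect either.

You must perform the following steps to enable them.

a) The first Spark program in Scala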

    package com.dajiangtai.test

    import org.apache.spark.{SparkConf, SparkContext}

    /**
     * Created by lifei on 2016-6-19.
     */
    object MyWordCount {
      def main(args: Array[String]): Unit = {
        // Check the arguments
        if (args.length < 2) {
          System.err.println("Usage: MyWordCount <input> <output>")
          System.exit(1)
        }

        // Read the arguments
        val input = args(0)
        val output = args(1)

        // Create the Scala SparkContext
        val conf = new SparkConf().setAppName("myWordCount")
        val sc = new SparkContext(conf)

        // Read the data
        val lines = sc.textFile(input)

        // Split each line into words, pair each word with 1, then sum the counts per word
        val resultRdd = lines.flatMap(_.split(" ")).map((_, 1)).reduceByKey(_ + _)

        // Save the result
        resultRdd.saveAsTextFile(output)

        // Shut down the SparkContext
        sc.stop()
      }
    }

b) The first Spark program in Java

    package com.dajiangtai.test;

    import org.apache.spark.SparkConf;
    import org.apache.spark.api.java.JavaPairRDD;
    import org.apache.spark.api.java.JavaRDD;
    import org.apache.spark.api.java.JavaSparkContext;
    import org.apache.spark.api.java.function.FlatMapFunction;
    import org.apache.spark.api.java.function.Function2;
    import org.apache.spark.api.java.function.PairFunction;
    import scala.Tuple2;

    import java.util.Arrays;

    /**
     * Created by lifei on 2016-6-19.
     */
    public class MyJavaWordCount {
        public static void main(String[] args) {
            // Check the arguments
            if (args.length < 2) {
                System.err.println("Usage: MyJavaWordCount <input> <output>");
                System.exit(1);
            }

            // Read the arguments
            String input = args[0];
            String output = args[1];

            // Create the Java SparkContext
            SparkConf conf = new SparkConf().setAppName("MyJavaWordCount");
            JavaSparkContext sc = new JavaSparkContext(conf);

            // Read the data
            JavaRDD<String> inputRdd = sc.textFile(input);

            // Split each line into words (in Spark 1.6.x, FlatMapFunction.call returns an Iterable)
            JavaRDD<String> words = inputRdd.flatMap(new FlatMapFunction<String, String>() {
                public Iterable<String> call(String line) throws Exception {
                    return Arrays.asList(line.split(" "));
                }
            });

            // Pair each word with 1, then sum the counts per word
            JavaPairRDD<String, Integer> result = words.mapToPair(new PairFunction<String, String, Integer>() {
                public Tuple2<String, Integer> call(String word) throws Exception {
                    return new Tuple2<String, Integer>(word, 1);
                }
            }).reduceByKey(new Function2<Integer, Integer, Integer>() {
                public Integer call(Integer x, Integer y) throws Exception {
                    return x + y;
                }
            });

            // Save the result
            result.saveAsTextFile(output);

            // Shut down the SparkContext
            sc.stop();
        }
    }
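Before submitting to a cluster, it can help to see what the word count pipeline computes on a tiny input. The following is only a minimal local sketch in plain Java (no Spark involved), assuming Java 8 or later; the class name `LocalWordCount` and its `count` method are hypothetical helpers that are not part of the original project. It mirrors the same steps: split each line on a single space, emit 1 per word, and sum per word.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical helper for local experiments; not part of the original project. */
class LocalWordCount {

    /** Counts words like the Spark job: split on a single space, then add 1 per occurrence. */
    static Map<String, Integer> count(List<String> lines) {
        // TreeMap keeps the keys sorted so the printed result is deterministic
        Map<String, Integer> counts = new TreeMap<>();
        for (String line : lines) {
            for (String word : line.split(" ")) {      // corresponds to the flatMap step
                counts.merge(word, 1, Integer::sum);   // corresponds to mapToPair + reduceByKey
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList("hello spark", "hello maven");
        System.out.println(count(lines)); // prints {hello=2, maven=1, spark=1}
    }
}
```

Unlike the Spark job, this runs in a single JVM and holds everything in memory, so it is only useful for checking the expected counts on small samples.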

Packaging the Spark Maven project

mvn package

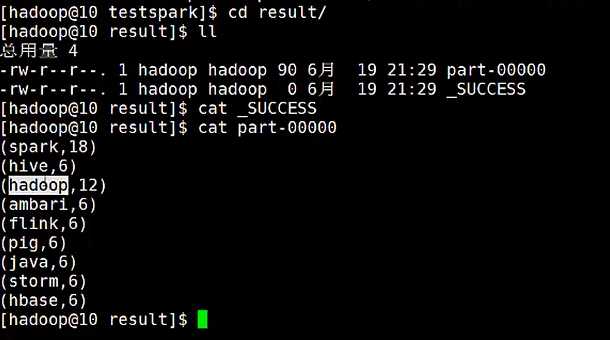

Submitting to the Spark cluster

a) Submit the Scala version of WordCount

bin/spark-submit --class com.dajiangtai.test.MyWordCount ~/testspark/test-spark-1.0-SNAPSHOT.jar ~/testspark/words.txt ~/testspark/result

b) Submit the Java version of WordCount

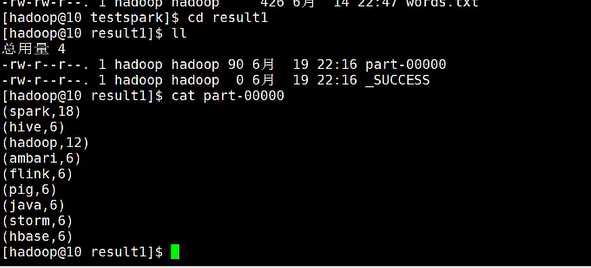

bin/spark-submit --class com.dajiangtai.test.MyJavaWordCount ~/testspark/test-spark-1.0-SNAPSHOT.jar ~/testspark/words.txt ~/testspark/result1

Success!
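To check the output, it helps to know its shape. As far as I understand, `saveAsTextFile` writes each record using its `toString`, so for the (word, count) pairs produced here every line in the result files should look like `(hello,2)`. The following is a minimal sketch, assuming that line format, of parsing one such line back into a word and a count; the class name `ResultLineParser` is a hypothetical helper and not part of the original project.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.Map;

/** Hypothetical helper for inspecting word count output lines such as "(hello,2)". */
class ResultLineParser {

    /** Parses one "(word,count)" line; assumes the line is well-formed. */
    static Map.Entry<String, Integer> parse(String line) {
        // Drop the surrounding parentheses
        String body = line.substring(1, line.length() - 1);
        // Split at the LAST comma, because the word itself may contain commas
        int comma = body.lastIndexOf(',');
        String word = body.substring(0, comma);
        int count = Integer.parseInt(body.substring(comma + 1));
        return new SimpleEntry<>(word, count);
    }

    public static void main(String[] args) {
        Map.Entry<String, Integer> entry = parse("(hello,2)");
        System.out.println(entry.getKey() + " -> " + entry.getValue()); // prints hello -> 2
    }
}
```

Splitting at the last comma is a deliberate choice here: the job only splits input on spaces, so a "word" such as `a,b` can legitimately contain a comma.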

Setting up a Spark programming environment (based on the Ultimate edition of IntelliJ IDEA)


Original article: http://www.cnblogs.com/zlslch/p/6607599.html