标签:错误 算法 inf ack net contents 频率 ring 转换

朴素贝叶斯方法是一种使用先验概率去计算后验概率的方法, 具体见上一节。

算法包:e1071

函数:navieBayes(formule,data,laplace=0,...,subset,na.action=na.pass)

模型常用评判标准

R手机短信过滤示例

参考:https://zhuanlan.zhihu.com/p/22615168

数据导入

setwd("E:\\RML")

sms <- read.csv("sms.csv",header=TRUE,stringsAsFactors=FALSE)

将sms$type设置为因子变量,再查看sms结构,若type中有其他类型的文本,那么factor会大于2个,正好验证数据是否有误读

sms$type <- factor(sms$type)

str(sms)

统计垃圾短信与非垃圾短信在这个数据集中各占了多少

> table(sms$type)

ham spam

4827 747

数据清洗

> library(NLP)

> library(tm)

> sms_corpus <- Corpus(VectorSource(sms$text))

> print(sms_corpus)

<<SimpleCorpus>>

Metadata: corpus specific: 1, document level (indexed): 0

Content: documents: 5574

清理语料库

> # 所有字母转换成小写

> corpus_clean <- tm_map(sms_corpus, tolower)

> # 去除text中的数字

> corpus_clean <- tm_map(corpus_clean, removeNumbers)

> # 去除停用词,例如and,or,until...

> corpus_clean <- tm_map(corpus_clean, removeWords, stopwords())

> # 去除标点符号

> corpus_clean <- tm_map(corpus_clean, removePunctuation)

> # 去除多余的空格,使单词之间只保留一个空格

> corpus_clean <- tm_map(corpus_clean, stripWhitespace)

> #查看一下清理后的语料库文本

> inspect(corpus_clean[1])

<<SimpleCorpus>>

Metadata: corpus specific: 1, document level (indexed): 0

Content: documents: 1

[1] go jurong point crazy available bugis n great world la e buffet cine got amore wat

>

注:inspect 是tm包的函数,display detailed information on a corpus, a term-document matrix, or a text document

标记化

> #将文本信息转化成DocumentTermMatrix类型的稀疏矩阵 > dtm <- DocumentTermMatrix(corpus_clean) > dtm[1:3,] <<DocumentTermMatrix (documents: 3, terms: 7928)>> Non-/sparse entries: 31/23753 Sparsity : 100% Error in nchar(Terms(x), type = "chars") : invalid multibyte string, element 183

解决异常的方法,执行如下语句

> Sys.setlocale(category = "LC_ALL", locale = "us") [1] "LC_COLLATE=English_United States.1252;LC_CTYPE=English_United States.1252;LC_MONETARY=English_United States.1252;LC_NUMERIC=C;LC_TIME=English_United States.1252" > dtm[1:3,] <<DocumentTermMatrix (documents: 3, terms: 7928)>> Non-/sparse entries: 31/23753 Sparsity : 100% Maximal term length: 40 Weighting : term frequency (tf)

建立训练集和测试集数据

> #训练集和测试集数据,查看垃圾与正常邮件占比

> trainSet <- sms[1:4169,]

> testset <- sms[4170:5574,]

> prop.table(table(trainSet$type))

ham spam

0.8647158 0.1352842

> prop.table(table(testset$type))

ham spam

0.8697509 0.1302491

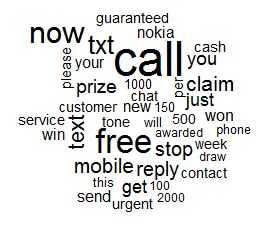

创建可视化词云,通过词云可以大致浏览一下哪些词在spam中经常出现,哪些词在ham中经常出现。当然,前者对于垃圾短信的过滤相对重要一点。绘制词云可以通过添加包wordcloud实现 install.packages("wordcloud")

> #创建可视化词云,大致浏览一下哪些词在spam中经常出现 > library(RColorBrewer) > library(wordcloud) > #取trainset对spam和ham的子集 > spam <- subset(trainSet, type == "spam") > ham <- subset(trainSet, type == "ham") > #创建词云 > wordcloud(spam$text, max.words=40, scale=c(3,0.5))

dtm_train <- dtm[1:4169,] > dtm_test <- dtm[4170:5574,] > findFreqTerms(dtm_train,5) [1] "available" "bugis" "cine" "crazy" "got" "great" "point" "wat" [9] "world" "lar" "wif" "apply" "comp" "cup" "entry" "final" [17] "free" "may" "receive" "text" "txt" "win" "wkly" "already" [25] "dun" "early" "say" "around" "goes" "nah" "think" "though" [33] "usf" "back" "freemsg" "fun" "hey" "like" "now" "send" [41] "std" "still" "weeks" "word" "xxx" "brother" "even" "speak" [49] "treat" "callers" "callertune" "copy" "friends" "melle" "per" "press" ........................

将这些词设置成指示标识,下面建模时用这个指示标识提示模型只对这些词进行计算

> #缩减特征 > d <- findFreqTerms(dtm,5) > corpus_train = sms_corpus[1:4169] > corpus_test = sms_corpus[4170:5574] > dtm_train <- DocumentTermMatrix(corpus_train,list(dictionary=d)) > dtm_test <- DocumentTermMatrix(corpus_test,list(dictionary=d))

train和test都是计数矩阵,如果一条text中某个单词出现2次,那么这个单词在这条文本下会被记上2,NB只想知道这个单词出现了或者没出现,因此需要对矩阵进行转化成因子矩阵。

> #对矩阵进行转化成因子矩阵 > convert_counts <- function(x){ + x <- ifelse(x>0,1,0) + x <- factor(x, levels=c(0,1),labels=c("No","Yes")) + return(x) + } > dtm_train <- apply(dtm_train, MARGIN=2, convert_counts) > dtm_test <- apply(dtm_test, MARGIN=2, convert_counts)

训练模型

> #需要的包是e1071 > #install.packages("e1071") > library(e1071) > sms_classifier <- naiveBayes(dtm_train,trainSet$type) > sms_prediction <- predict(sms_classifier, dtm_test) >

评估模型

> library(gmodels) > CrossTable(sms_prediction,testset$type,prop.chisq=TRUE,prop.t=FALSE, dnn=c("predicted","actual")) Cell Contents |-------------------------| | N | | Chi-square contribution | | N / Row Total | | N / Col Total | |-------------------------| Total Observations in Table: 1405 | actual predicted | ham | spam | Row Total | -------------|-----------|-----------|-----------| ham | 1124 | 150 | 1274 | | 0.229 | 1.531 | | | 0.882 | 0.118 | 0.907 | | 0.920 | 0.820 | | -------------|-----------|-----------|-----------| spam | 98 | 33 | 131 | | 2.229 | 14.886 | | | 0.748 | 0.252 | 0.093 | | 0.080 | 0.180 | | -------------|-----------|-----------|-----------| Column Total | 1222 | 183 | 1405 | | 0.870 | 0.130 | | -------------|-----------|-----------|-----------|

ham-ham和spam-spam是预测正确的,spam-ham:本身不是垃圾短信却被认为是垃圾短信过滤掉,由于Classifier1没有设置拉普拉斯估计,下面再尝试建立classifier2,看结果是否被优化。

> #设置拉普拉斯估计 > sms_classifier <- naiveBayes(dtm_train,trainSet$type,laplace = 1) > sms_prediction <- predict(sms_classifier, dtm_test) > CrossTable(sms_prediction,testset$type,prop.chisq=TRUE,prop.t=FALSE, dnn=c("predicted","actual")) Cell Contents |-------------------------| | N | | Chi-square contribution | | N / Row Total | | N / Col Total | |-------------------------| Total Observations in Table: 1405 | actual predicted | ham | spam | Row Total | -------------|-----------|-----------|-----------| ham | 1105 | 132 | 1237 | | 0.788 | 5.262 | | | 0.893 | 0.107 | 0.880 | | 0.904 | 0.721 | | -------------|-----------|-----------|-----------| spam | 117 | 51 | 168 | | 5.803 | 38.747 | | | 0.696 | 0.304 | 0.120 | | 0.096 | 0.279 | | -------------|-----------|-----------|-----------| Column Total | 1222 | 183 | 1405 | | 0.870 | 0.130 | | -------------|-----------|-----------|-----------|

spam人预测结果有改进,尝试继续优化,下一步以评论分类进行中文分类模拟

完整代码

setwd("E:\\RML") #数据导入 sms <- read.csv("sms.csv",header=TRUE,stringsAsFactors=FALSE) #查看结构: data.frame 3498 obs. 2 variables: str(sms) #将sms_raw$type设置为因子变量 sms$type <- factor(sms$type) str(sms) #垃圾短信与非垃圾短信在这个数据集中各占了多少 table(sms$type) #创建语料库 library(NLP) library(tm) sms_corpus <- Corpus(VectorSource(sms$text)) print(sms_corpus) #clear corpus sms_corpus <- tm_map(sms_corpus, PlainTextDocument) # 所有字母转换成小写 sms_corpus <- tm_map(sms_corpus, tolower) # 去除text中的数字 sms_corpus <- tm_map(sms_corpus, removeNumbers) # 去除停用词,例如and,or,until... sms_corpus <- tm_map(sms_corpus, removeWords, stopwords()) # 去除标点符号 sms_corpus <- tm_map(sms_corpus, removePunctuation) # 去除多余的空格,使单词之间只保留一个空格 sms_corpus <- tm_map(sms_corpus, stripWhitespace) #查看一下清理后的语料库文本 inspect(sms_corpus[1]) #将文本信息转化成DocumentTermMatrix类型的稀疏矩阵 dtm <- DocumentTermMatrix(sms_corpus) Sys.setlocale(category = "LC_ALL", locale = "us") #训练集和测试集数据,查看垃圾与正常邮件占比 trainSet <- sms[1:4169,] testset <- sms[4170:5574,] prop.table(table(trainSet$type)) prop.table(table(testset$type)) #创建可视化词云,大致浏览一下哪些词在spam中经常出现 library(RColorBrewer) library(wordcloud) #取trainset对spam和ham的子集 spam <- subset(trainSet, type == "spam") ham <- subset(trainSet, type == "ham") #创建词云 wordcloud(spam$text, max.words=40, scale=c(3,0.5)) #缩减特征 d <- findFreqTerms(dtm,5) corpus_train = sms_corpus[1:4169] corpus_test = sms_corpus[4170:5574] dtm_train <- DocumentTermMatrix(corpus_train,list(dictionary=d)) dtm_test <- DocumentTermMatrix(corpus_test,list(dictionary=d)) dtm_train$ncol dtm_test$ncol #对矩阵进行转化成因子矩阵 convert_counts <- function(x){ x <- ifelse(x>0,1,0) x <- factor(x, levels=c(0,1),labels=c("No","Yes")) return(x) } dtm_train <- apply(dtm_train, MARGIN=2, convert_counts) dtm_test <- apply(dtm_test, MARGIN=2, convert_counts) #需要的包是e1071 #install.packages("e1071") library(e1071) sms_classifier <- naiveBayes(dtm_train,trainSet$type) sms_prediction <- predict(sms_classifier, dtm_test) #评估模型:用交叉表来看看test中多少预测对了 #install.packages("gmodels") library(gmodels) CrossTable(sms_prediction,testset$type,prop.chisq=TRUE,prop.t=FALSE, dnn=c("predicted","actual")) #设置拉普拉斯估计laplace=1 sms_classifier <- naiveBayes(dtm_train,trainSet$type,laplace = 1) sms_prediction <- predict(sms_classifier, dtm_test) CrossTable(sms_prediction,testset$type,prop.chisq=TRUE,prop.t=FALSE, dnn=c("predicted","actual"))

标签:错误 算法 inf ack net contents 频率 ring 转换

原文地址:http://www.cnblogs.com/tgzhu/p/6680219.html