
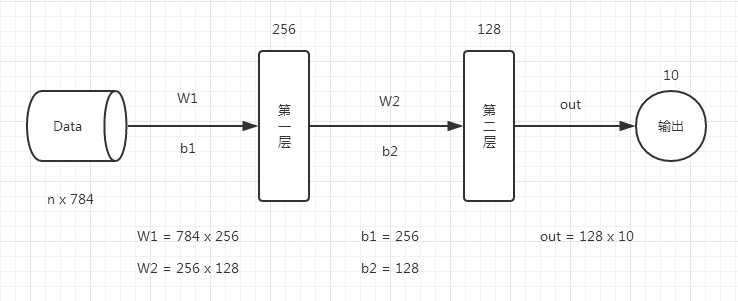

First, let's look at the neural network model: a fairly simple neural network with two hidden layers.

The code is as follows:

    # Written against the TensorFlow 1.x API (tf.placeholder, tf.Session).
    import tensorflow as tf
    # Assumption: MNIST is loaded with the TensorFlow 1.x tutorial helper.
    # The original listing uses `mnist` without showing how it was created,
    # and the 'data/' directory is only a placeholder.
    from tensorflow.examples.tutorials.mnist import input_data

    mnist = input_data.read_data_sets('data/', one_hot=True)

    # Network parameters
    n_hidden_1 = 256  # neurons in the first hidden layer
    n_hidden_2 = 128  # neurons in the second hidden layer
    n_input = 784     # input size: a 28*28 grayscale image (color adds nothing here)
    n_classes = 10    # number of classes to predict

    # Inputs and outputs
    x = tf.placeholder("float", [None, n_input])
    y = tf.placeholder("float", [None, n_classes])

    # Weights and biases
    stddev = 0.1
    weights = {
        'w1': tf.Variable(tf.random_normal([n_input, n_hidden_1], stddev=stddev)),
        'w2': tf.Variable(tf.random_normal([n_hidden_1, n_hidden_2], stddev=stddev)),
        'out': tf.Variable(tf.random_normal([n_hidden_2, n_classes], stddev=stddev))
    }
    biases = {
        'b1': tf.Variable(tf.random_normal([n_hidden_1])),
        'b2': tf.Variable(tf.random_normal([n_hidden_2])),
        'out': tf.Variable(tf.random_normal([n_classes]))
    }
    print("NETWORK READY")


    def multilayer_perceptron(_X, _weights, _biases):
        # Layer 1 = activation(add_bias(matmul(input data, weights w1), bias b1))
        layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(_X, _weights['w1']), _biases['b1']))
        # Layer 2 has the same form; its input is the output of layer 1.
        layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, _weights['w2']), _biases['b2']))
        # Return the raw predictions (logits); softmax is applied inside the loss.
        return tf.matmul(layer_2, _weights['out']) + _biases['out']


    # Prediction
    pred = multilayer_perceptron(x, weights, biases)

    # Loss and optimizer.
    # TensorFlow 1.0+ requires named arguments here; the positional call
    # softmax_cross_entropy_with_logits(pred, y) raises an error.
    cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=pred, labels=y))
    optm = tf.train.GradientDescentOptimizer(learning_rate=0.001).minimize(cost)
    corr = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1))
    accr = tf.reduce_mean(tf.cast(corr, "float"))

    # Initialization
    init = tf.global_variables_initializer()
    print("FUNCTIONS READY")

    # Training settings
    training_epochs = 20
    batch_size = 100
    display_step = 4

    # Launch the graph
    sess = tf.Session()
    sess.run(init)

    # Optimization loop
    for epoch in range(training_epochs):
        avg_cost = 0.
        total_batch = int(mnist.train.num_examples / batch_size)
        # Iterate over all mini-batches
        for i in range(total_batch):
            batch_xs, batch_ys = mnist.train.next_batch(batch_size)
            feeds = {x: batch_xs, y: batch_ys}
            sess.run(optm, feed_dict=feeds)
            avg_cost += sess.run(cost, feed_dict=feeds)
        avg_cost = avg_cost / total_batch
        # Print results every display_step epochs
        if (epoch + 1) % display_step == 0:
            # Report the 1-based epoch number so the last line reads 020/020.
            print("Epoch: %03d/%03d cost: %.9f" % (epoch + 1, training_epochs, avg_cost))
            # Training accuracy is measured on the last mini-batch only.
            feeds = {x: batch_xs, y: batch_ys}
            train_acc = sess.run(accr, feed_dict=feeds)
            print("TRAIN ACCURACY: %.3f" % (train_acc))
            feeds = {x: mnist.test.images, y: mnist.test.labels}
            test_acc = sess.run(accr, feed_dict=feeds)
            print("TEST ACCURACY: %.3f" % (test_acc))
    print("OPTIMIZATION FINISHED")
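
To make it easier to see what `multilayer_perceptron` and the softmax cross-entropy loss in the listing above compute, here is a minimal pure-Python sketch for a single sample. It is only an illustration, not the TensorFlow implementation: the helper names (`dense`, `forward`, `cross_entropy`, `random_matrix`), the toy layer sizes (4 -> 3 -> 2 -> 3), the zero biases, and the sample values are all assumptions made for this example.

```python
import math
import random


def sigmoid(z):
    """Logistic activation, applied to one scalar at a time."""
    return 1.0 / (1.0 + math.exp(-z))


def dense(inputs, weights, biases):
    """Compute inputs @ weights + biases for a single sample.

    `weights` is a list of rows shaped [n_in][n_out].
    """
    return [
        sum(inputs[i] * weights[i][j] for i in range(len(inputs))) + biases[j]
        for j in range(len(biases))
    ]


def forward(x, params):
    """Two sigmoid hidden layers followed by a linear output layer (raw logits)."""
    h1 = [sigmoid(z) for z in dense(x, params["w1"], params["b1"])]
    h2 = [sigmoid(z) for z in dense(h1, params["w2"], params["b2"])]
    return dense(h2, params["out_w"], params["out_b"])


def softmax(logits):
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, one_hot):
    """Cross-entropy between softmax(logits) and a one-hot label."""
    probs = softmax(logits)
    return -sum(t * math.log(p) for t, p in zip(one_hot, probs))


def random_matrix(rng, n_in, n_out, stddev=0.1):
    """Hypothetical helper: small Gaussian weights, mirroring stddev=0.1 above."""
    return [[rng.gauss(0.0, stddev) for _ in range(n_out)] for _ in range(n_in)]


# Hypothetical toy sizes (4 -> 3 -> 2 -> 3) so the example runs instantly.
rng = random.Random(0)
params = {
    "w1": random_matrix(rng, 4, 3), "b1": [0.0] * 3,
    "w2": random_matrix(rng, 3, 2), "b2": [0.0] * 2,
    "out_w": random_matrix(rng, 2, 3), "out_b": [0.0] * 3,
}

sample = [0.0, 0.5, 1.0, 0.25]  # made-up input vector
label = [0, 1, 0]               # made-up one-hot label

logits = forward(sample, params)
probs = softmax(logits)
loss = cross_entropy(logits, label)
predicted_class = probs.index(max(probs))  # same idea as tf.argmax(pred, 1)

print("probabilities:", probs)
print("loss:", loss)
print("predicted class:", predicted_class)
```

With all-equal logits the loss equals `log(n_classes)`, which is a handy sanity check: a freshly initialized 10-class network should start with a cost of roughly `log(10)`, about 2.3, unless the initial logits are large.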


Original article: http://www.cnblogs.com/hunttown/p/6830671.html