
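This is a lab record for the containerized-services chapter of <Java Rest Service实战>. The base environment is Ubuntu 16.04 LTS. Every cluster in the experiment runs on a single virtual machine, so these are really pseudo-clusters, but that is enough to learn the basic setup procedure.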

I. Building a ZooKeeper container cluster

1. Define the Dockerfile

FROM index.tenxcloud.com/docker_library/java
MAINTAINER HaHa

# Download zookeeper-3.4.8.tar.gz into the build directory beforehand
COPY zookeeper-3.4.8.tar.gz /tmp/
RUN tar -xzf /tmp/zookeeper-3.4.8.tar.gz -C /opt
RUN cp /opt/zookeeper-3.4.8/conf/zoo_sample.cfg /opt/zookeeper-3.4.8/conf/zoo.cfg
RUN mv /opt/zookeeper-3.4.8 /opt/zookeeper
RUN rm -f /tmp/zookeeper-3.4.8.tar.gz
ADD entrypoint.sh /opt/entrypoint.sh
RUN chmod 777 /opt/entrypoint.sh
EXPOSE 2181 2888 3888
WORKDIR /opt/zookeeper
VOLUME ["/opt/zookeeper/conf","/tmp/zookeeper"]
CMD ["/opt/entrypoint.sh"]

2. Write entrypoint.sh

#!/bin/sh
ZOO_CFG="/opt/zookeeper/conf/zoo.cfg"
echo "server id (myid): ${SERVER_ID}"
echo "${SERVER_ID}" > /tmp/zookeeper/myid
echo "${APPEND_1}" >> ${ZOO_CFG}
echo "${APPEND_2}" >> ${ZOO_CFG}
echo "${APPEND_3}" >> ${ZOO_CFG}
echo "${APPEND_4}" >> ${ZOO_CFG}
echo "${APPEND_5}" >> ${ZOO_CFG}
echo "${APPEND_6}" >> ${ZOO_CFG}
echo "${APPEND_7}" >> ${ZOO_CFG}
echo "${APPEND_8}" >> ${ZOO_CFG}
echo "${APPEND_9}" >> ${ZOO_CFG}
echo "${APPEND_10}" >> ${ZOO_CFG}
/opt/zookeeper/bin/zkServer.sh start-foreground
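The ten hard-coded `echo` lines could also be written as a loop. The sketch below is one possible variant, not the script used in this experiment: `append_cfg_lines` is a hypothetical helper name, and unlike the original it skips unset variables instead of appending empty lines.

```shell
#!/bin/sh
# Hypothetical helper: append every non-empty APPEND_<n> variable
# (n = 1..max, default 10) to the given config file.
append_cfg_lines() {
    cfg_file="$1"
    max="${2:-10}"
    i=1
    while [ "$i" -le "$max" ]; do
        # Indirect lookup of APPEND_$i; plain POSIX sh needs eval for this.
        eval "line=\${APPEND_$i:-}"
        if [ -n "$line" ]; then
            printf '%s\n' "$line" >> "$cfg_file"
        fi
        i=$((i + 1))
    done
}

# Possible use inside entrypoint.sh (path taken from the script above):
# append_cfg_lines /opt/zookeeper/conf/zoo.cfg
```

Skipping empty values keeps zoo.cfg free of blank trailing lines, which makes the generated file easier to inspect.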

3. Build the image

// Working directory

root@ubuntu:/home/zhl/zookeeper# ll

total 21756

drwxrwxr-x 2 zhl zhl 4096 Sep 14 22:17 ./

drwxr-xr-x 25 zhl zhl 4096 Sep 14 05:09 ../

-rw-rw-r-- 1 zhl zhl 512 Sep 14 20:25 Dockerfile

-rw-r--r-- 1 root root 508 Sep 14 22:17 entrypoint.sh

-rw-rw-r-- 1 zhl zhl 22261552 Sep 14 05:29 zookeeper-3.4.8.tar.gz

// Build the zookeeper image, named zk, from the current directory.

root@ubuntu:/home/zhl/zookeeper# docker build -t zk .

Sending build context to Docker daemon 22.27MB

Step 1/13 : FROM index.tenxcloud.com/docker_library/java

---> 264282a59a95

Step 2/13 : MAINTAINER HaHa

---> Running in 79720c1fbb96

---> ae7eddae4e93

Removing intermediate container 79720c1fbb96

Step 3/13 : COPY zookeeper-3.4.8.tar.gz /tmp/

---> 245818bb5e48

Removing intermediate container 4f8a2919a235

Step 4/13 : RUN tar -xzf /tmp/zookeeper-3.4.8.tar.gz -C /opt

---> Running in b8302238ceb1

---> 00aea27e64e3

Removing intermediate container b8302238ceb1

Step 5/13 : RUN cp /opt/zookeeper-3.4.8/conf/zoo_sample.cfg /opt/zookeeper-3.4.8/conf/zoo.cfg

---> Running in 6278f1a9487c

---> 007e855798a5

Removing intermediate container 6278f1a9487c

Step 6/13 : RUN mv /opt/zookeeper-3.4.8 /opt/zookeeper

---> Running in 90bb30879ea4

---> 63d17dc7b863

Removing intermediate container 90bb30879ea4

Step 7/13 : RUN rm -f /tmp/zookeeper-3.4.8.tar.gz

---> Running in d7ea5b8a83f4

---> b59d11ed6bdd

Removing intermediate container d7ea5b8a83f4

Step 8/13 : ADD entrypoint.sh /opt/entrypoint.sh

---> 9576b2b0cebf

Removing intermediate container ef1bd65c7c80

Step 9/13 : RUN chmod 777 /opt/entrypoint.sh

---> Running in f9dd51fe02f6

---> 4ffadd4c1d60

Removing intermediate container f9dd51fe02f6

Step 10/13 : EXPOSE 2181 2888 3888

---> Running in e58393d692c1

---> e2c47dc22195

Removing intermediate container e58393d692c1

Step 11/13 : WORKDIR /opt/zookeeper

---> 1fac68fcb274

Removing intermediate container 851cde7114c4

Step 12/13 : VOLUME /opt/zookeeper/conf /tmp/zookeeper

---> Running in e395e1f1ef13

---> 77f2f7be2dd0

Removing intermediate container e395e1f1ef13

Step 13/13 : CMD /opt/entrypoint.sh

---> Running in 8bf6fa5a1079

---> 5c819179f3f8

Removing intermediate container 8bf6fa5a1079

Successfully built 5c819179f3f8

Successfully tagged zk:latest

4. Start 3 zookeeper instances on a single host

// Start the zookeeper containers zk1, zk2 and zk3 in detached (daemon) mode

root@ubuntu:/home/zhl/zookeeper# docker run -d --name=zk1 --net=host -e SERVER_ID=1 -e APPEND_1=server.1=192.168.225.128:2888:3888 -e APPEND_2=server.2=192.168.225.128:2889:3889 -e APPEND_3=server.3=192.168.225.128:2890:3890 -e APPEND_4=clientPort=2181 zk
c3990b9e7bdedd1fdf4e73848eb4370279d1da018a82cc767f9529d2f9f5f72b
root@ubuntu:/home/zhl/zookeeper# docker run -d --name=zk2 --net=host -e SERVER_ID=2 -e APPEND_1=server.1=192.168.225.128:2888:3888 -e APPEND_2=server.2=192.168.225.128:2889:3889 -e APPEND_3=server.3=192.168.225.128:2890:3890 -e APPEND_4=clientPort=2182 zk
df4c81b16c7de76d74145a06bc978959f0e11c8d4aa7d615f3a98053f8a5cd2d
root@ubuntu:/home/zhl/zookeeper# docker run -d --name=zk3 --net=host -e SERVER_ID=3 -e APPEND_1=server.1=192.168.225.128:2888:3888 -e APPEND_2=server.2=192.168.225.128:2889:3889 -e APPEND_3=server.3=192.168.225.128:2890:3890 -e APPEND_4=clientPort=2183 zk
bd7718052a8d8e1e9c37b326c704f92c120383ab4cbf6d5988cffc7cb13bc720

Here 192.168.225.128 is the host IP address (echo $HOST_IP). Because all three containers share the host network (--net=host), each instance needs its own client port (2181, 2182, 2183) and its own pair of quorum and election ports.
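The `server.N` entries passed through `APPEND_1` to `APPEND_3` follow a simple pattern: every instance shares the host IP and gets the next quorum and election port. A minimal sketch of generating them, assuming the same port scheme as above (`zk_server_lines` is a hypothetical helper, not part of the original setup):

```shell
#!/bin/sh
# Hypothetical helper: print the server.N lines for COUNT ZooKeeper
# instances that all run on the same host IP.
# Port scheme assumed from the docker run commands above:
#   quorum port   = 2888 + (N - 1)
#   election port = 3888 + (N - 1)
zk_server_lines() {
    host_ip="$1"
    count="$2"
    i=1
    while [ "$i" -le "$count" ]; do
        peer_port=$((2888 + i - 1))
        election_port=$((3888 + i - 1))
        printf 'server.%d=%s:%d:%d\n' "$i" "$host_ip" "$peer_port" "$election_port"
        i=$((i + 1))
    done
}

zk_server_lines 192.168.225.128 3
```

For three instances on 192.168.225.128 this prints the same three `server.N` lines that the `docker run` commands pass in by hand.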

5. Check that zookeeper is running

Contents of zoo.cfg (from one of the instances):

root@ubuntu:/# cat ./var/lib/docker/volumes/b38902183ee2684494ea1edba0c963635851660e5edc0424471a49935309d669/_data/zoo.cfg
# The number of milliseconds of each tick
tickTime=2000
# The number of ticks that the initial
# synchronization phase can take
initLimit=10
# The number of ticks that can pass between
# sending a request and getting an acknowledgement
syncLimit=5
# the directory where the snapshot is stored.
# do not use /tmp for storage, /tmp here is just
# example sakes.
dataDir=/tmp/zookeeper
# the port at which the clients will connect
clientPort=2181
# the maximum number of client connections.
# increase this if you need to handle more clients
#maxClientCnxns=60
#
# Be sure to read the maintenance section of the
# administrator guide before turning on autopurge.
#
# http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance
#
# The number of snapshots to retain in dataDir
#autopurge.snapRetainCount=3
# Purge task interval in hours
# Set to "0" to disable auto purge feature
#autopurge.purgeInterval=1
server.1=192.168.225.128:2888:3888
server.2=192.168.225.128:2889:3889
server.3=192.168.225.128:2890:3890
clientPort=2182
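Note that `clientPort` appears twice in this file: once from zoo_sample.cfg (2181) and once appended by entrypoint.sh (2182). The instance answers on 2182 in the checks later in this section, which suggests the last occurrence takes effect. A small sketch for reading the effective value under that assumption (`effective_prop` is a hypothetical helper):

```shell
#!/bin/sh
# Hypothetical helper: print the effective value of KEY in a
# properties-style FILE, assuming the last uncommented assignment wins.
effective_prop() {
    key="$1"
    file="$2"
    awk -F= -v k="$key" '
        /^[[:space:]]*#/ { next }                         # skip comment lines
        $1 == k { v = substr($0, length($1) + 2) }        # keep everything after the first "="
        END { if (v != "") print v }
    ' "$file"
}

# Example (path is illustrative):
# effective_prop clientPort /opt/zookeeper/conf/zoo.cfg
```

Taking the substring after the first `=` keeps values such as `192.168.225.128:2888:3888` intact.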

// List all containers

root@ubuntu:/home/zhl/zookeeper# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

c1ab885b2770 zk "/opt/entrypoint.sh" 26 seconds ago Up 26 seconds zk3

b939bfa60ea2 zk "/opt/entrypoint.sh" 58 seconds ago Up 58 seconds zk2

bd161a246c28 zk "/opt/entrypoint.sh" 2 minutes ago Up 2 minutes zk1

root@ubuntu:~# echo stat|nc 127.0.0.1 2181

Zookeeper version: 3.4.8--1, built on 02/06/2016 03:18 GMT

Clients:

/172.17.0.3:45638[1](queued=0,recved=1177,sent=1177)

/127.0.0.1:57130[0](queued=0,recved=1,sent=0)

Latency min/avg/max: 0/0/70

Received: 9114

Sent: 9121

Connections: 2

Outstanding: 0

Zxid: 0x50000005e

Mode: follower

Node count: 21

root@ubuntu:~# telnet 192.168.225.128 2183

Trying 192.168.225.128...

Connected to 192.168.225.128.

Escape character is '^]'.

stat

Zookeeper version: 3.4.8--1, built on 02/06/2016 03:18 GMT

Clients:

/192.168.225.128:40962[0](queued=0,recved=1,sent=0)

/172.17.0.2:43694[1](queued=0,recved=1801,sent=1801)

Latency min/avg/max: 0/0/187

Received: 18234

Sent: 18241

Connections: 2

Outstanding: 0

Zxid: 0x50000005e

Mode: follower

Node count: 21

Connection closed by foreign host.

root@ubuntu:~# jps

6888 ZooKeeperMain

27882 Jps

6955 ZooKeeperMain

Alternatively, check whether zookeeper has started with: ps -ef | grep zoo.cfg

root@ubuntu:~# ps -ef | grep zoo.cfg
root 6462 6439 0 14:59 ? 00:00:36 /usr/lib/jvm/java-8-openjdk-amd64/bin/java -Dzookeeper.log.dir=. -Dzookeeper.root.logger=INFO,CONSOLE -cp /opt/zookeeper/bin/../build/classes:/opt/zookeeper/bin/../build/lib/*.jar:/opt/zookeeper/bin/../lib/slf4j-log4j12-1.6.1.jar:/opt/zookeeper/bin/../lib/slf4j-api-1.6.1.jar:/opt/zookeeper/bin/../lib/netty-3.7.0.Final.jar:/opt/zookeeper/bin/../lib/log4j-1.2.16.jar:/opt/zookeeper/bin/../lib/jline-0.9.94.jar:/opt/zookeeper/bin/../zookeeper-3.4.8.jar:/opt/zookeeper/bin/../src/java/lib/*.jar:/opt/zookeeper/bin/../conf: -Dcom.sun.management.jmxremote -Dcom.sun.management.jmxremote.local.only=false org.apache.zookeeper.server.quorum.QuorumPeerMain /opt/zookeeper/bin/../conf/zoo.cfg
root 6561 6540 0 14:59 ? 00:00:44 /usr/lib/jvm/java-8-openjdk-amd64/bin/java -Dzookeeper.log.dir=. -Dzookeeper.root.logger=INFO,CONSOLE -cp /opt/zookeeper/bin/../build/classes:/opt/zookeeper/bin/../build/lib/*.jar:/opt/zookeeper/bin/../lib/slf4j-log4j12-1.6.1.jar:/opt/zookeeper/bin/../lib/slf4j-api-1.6.1.jar:/opt/zookeeper/bin/../lib/netty-3.7.0.Final.jar:/opt/zookeeper/bin/../lib/log4j-1.2.16.jar:/opt/zookeeper/bin/../lib/jline-0.9.94.jar:/opt/zookeeper/bin/../zookeeper-3.4.8.jar:/opt/zookeeper/bin/../src/java/lib/*.jar:/opt/zookeeper/bin/../conf: -Dcom.sun.management.jmxremote -Dcom.sun.management.jmxremote.local.only=false org.apache.zookeeper.server.quorum.QuorumPeerMain /opt/zookeeper/bin/../conf/zoo.cfg
root 6670 6649 0 14:59 ? 00:00:38 /usr/lib/jvm/java-8-openjdk-amd64/bin/java -Dzookeeper.log.dir=. -Dzookeeper.root.logger=INFO,CONSOLE -cp /opt/zookeeper/bin/../build/classes:/opt/zookeeper/bin/../build/lib/*.jar:/opt/zookeeper/bin/../lib/slf4j-log4j12-1.6.1.jar:/opt/zookeeper/bin/../lib/slf4j-api-1.6.1.jar:/opt/zookeeper/bin/../lib/netty-3.7.0.Final.jar:/opt/zookeeper/bin/../lib/log4j-1.2.16.jar:/opt/zookeeper/bin/../lib/jline-0.9.94.jar:/opt/zookeeper/bin/../zookeeper-3.4.8.jar:/opt/zookeeper/bin/../src/java/lib/*.jar:/opt/zookeeper/bin/../conf: -Dcom.sun.management.jmxremote -Dcom.sun.management.jmxremote.local.only=false org.apache.zookeeper.server.quorum.QuorumPeerMain /opt/zookeeper/bin/../conf/zoo.cfg
root 28049 3295 0 23:54 pts/2 00:00:00 grep --color=auto zoo.cfg

If you run into problems, inspect the container logs with docker logs c1ab885b2770.

// jps (Java Virtual Machine Process Status Tool) is a command, available since JDK 1.5, that lists the PIDs of all current Java processes.

root@ubuntu:~# jps -m    // -m prints the arguments passed to the main method
27665 Jps -m
6888 ZooKeeperMain -server 192.168.225.128:2181
6955 ZooKeeperMain -server 192.168.225.128:2182

root@ubuntu:~# echo stat|nc 127.0.0.1 2182
Zookeeper version: 3.4.8--1, built on 02/06/2016 03:18 GMT
Clients:
/127.0.0.1:36988[0](queued=0,recved=1,sent=0)
/172.17.0.4:60280[1](queued=0,recved=955,sent=955)
Latency min/avg/max: 0/0/288
Received: 18211
Sent: 18212
Connections: 2
Outstanding: 0
Zxid: 0x50000005e
Mode: leader
Node count: 21

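6. Other commands and notes that may be useful

1. echo stat | nc 127.0.0.1 2181: shows whether the node was elected follower or leader.
2. echo ruok | nc 127.0.0.1 2181: tests whether the server is running; a reply of imok means it has started.
3. echo dump | nc 127.0.0.1 2181: lists outstanding sessions and ephemeral nodes.
4. echo kill | nc 127.0.0.1 2181: shuts down the server.
5. echo conf | nc 127.0.0.1 2181: prints details of the serving configuration.
6. echo cons | nc 127.0.0.1 2181: lists full connection/session details for all clients connected to the server.
7. echo envi | nc 127.0.0.1 2181: prints details of the serving environment (as distinct from conf).
8. echo reqs | nc 127.0.0.1 2181: lists outstanding requests.
9. echo wchs | nc 127.0.0.1 2181: lists summary information about the server's watches.
10. echo wchc | nc 127.0.0.1 2181: lists detailed watch information by session; the output is a list of sessions with their associated watches.
11. echo wchp | nc 127.0.0.1 2181: lists detailed watch information by path; the output is a list of paths with their associated sessions.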
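To see at a glance which instance is the leader, the `Mode:` line can be filtered out of the `stat` reply. A minimal sketch (`zk_mode` is a hypothetical helper name; the ports are the ones used in this experiment):

```shell
#!/bin/sh
# Hypothetical helper: read a "stat" reply on stdin and print only the
# value of its "Mode:" line (leader, follower, ...).
zk_mode() {
    sed -n 's/^Mode: //p'
}

# Intended use against the three local instances (needs nc and a running
# cluster, so it is left commented out here):
# for port in 2181 2182 2183; do
#     printf '%s: ' "$port"
#     echo stat | nc 127.0.0.1 "$port" | zk_mode
# done
```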

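// The zk cluster has 3 nodes, so why do only 2 show up? A likely explanation: the two ZooKeeperMain entries listed by jps are command-line client sessions (their -server arguments point at ports 2181 and 2182), not servers. The three servers are the QuorumPeerMain processes in the ps -ef output above, listening on 2181, 2182 and 2183.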

root@ubuntu:~# netstat -an | grep 2183
tcp6 0 0 :::2183 :::* LISTEN
tcp6 0 0 192.168.225.128:2183 172.17.0.2:43694 ESTABLISHED
unix 3 [ ] STREAM CONNECTED 21838 /run/systemd/journal/stdout
unix 3 [ ] STREAM CONNECTED 21837

root@ubuntu:~# netstat -atunp
Active Internet connections (servers and established)
Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name
tcp 0 0 127.0.1.1:53 0.0.0.0:* LISTEN 1023/dnsmasq
tcp 0 0 172.17.0.1:44870 172.17.0.3:9092 CLOSE_WAIT 4886/docker-proxy
tcp 0 0 172.17.0.1:50278 172.17.0.2:9091 CLOSE_WAIT 4692/docker-proxy
tcp 0 0 172.17.0.1:41458 172.17.0.4:9093 CLOSE_WAIT 5041/docker-proxy
tcp6 0 0 :::9092 :::* LISTEN 4886/docker-proxy
tcp6 0 0 :::2181 :::* LISTEN 6462/java
tcp6 0 0 :::9093 :::* LISTEN 5041/docker-proxy
tcp6 0 0 :::2182 :::* LISTEN 6561/java
tcp6 0 0 :::2183 :::* LISTEN 6670/java
tcp6 0 0 192.168.225.128:2889 :::* LISTEN 6561/java
tcp6 0 0 192.168.225.128:3888 :::* LISTEN 6462/java
tcp6 0 0 192.168.225.128:3889 :::* LISTEN 6561/java
tcp6 0 0 192.168.225.128:3890 :::* LISTEN 6670/java
tcp6 0 0 :::34035 :::* LISTEN 6462/java
tcp6 0 0 :::37529 :::* LISTEN 6670/java
tcp6 0 0 :::37817 :::* LISTEN 6561/java
tcp6 0 0 :::9091 :::* LISTEN 4692/docker-proxy
tcp6 0 0 192.168.225.128:58368 192.168.225.128:2889 ESTABLISHED 6670/java
tcp6 0 0 192.168.225.128:9091 172.17.0.4:39558 FIN_WAIT2 4692/docker-proxy
tcp6 0 0 192.168.225.128:42050 192.168.225.128:3888 ESTABLISHED 6670/java
tcp6 1 0 192.168.225.128:49148 192.168.225.128:2181 CLOSE_WAIT 6888/java
tcp6 0 0 192.168.225.128:3889 192.168.225.128:50582 ESTABLISHED 6561/java
tcp6 0 0 192.168.225.128:42002 192.168.225.128:3888 ESTABLISHED 6561/java
tcp6 0 0 192.168.225.128:3888 192.168.225.128:42050 ESTABLISHED 6462/java
tcp6 0 0 192.168.225.128:9092 172.17.0.4:37246 FIN_WAIT2 4886/docker-proxy
tcp6 0 0 192.168.225.128:58374 192.168.225.128:2889 ESTABLISHED 6462/java
tcp6 0 0 192.168.225.128:2182 172.17.0.4:60280 ESTABLISHED 6561/java
tcp6 0 0 192.168.225.128:3888 192.168.225.128:42002 ESTABLISHED 6462/java
tcp6 1 0 192.168.225.128:59822 192.168.225.128:2182 CLOSE_WAIT 6955/java
tcp6 0 0 192.168.225.128:2889 192.168.225.128:58368 ESTABLISHED 6561/java
tcp6 0 0 192.168.225.128:2889 192.168.225.128:58374 ESTABLISHED 6561/java
tcp6 0 0 192.168.225.128:2181 172.17.0.3:45638 ESTABLISHED 6462/java
tcp6 0 0 192.168.225.128:9093 172.17.0.4:60222 FIN_WAIT2 5041/docker-proxy
tcp6 0 0 192.168.225.128:2183 172.17.0.2:43694 ESTABLISHED 6670/java
tcp6 0 0 192.168.225.128:50582 192.168.225.128:3889 ESTABLISHED 6670/java
udp 0 0 0.0.0.0:631 0.0.0.0:* 2688/cups-browsed
udp 0 0 0.0.0.0:60401 0.0.0.0:* 1023/dnsmasq
udp 0 0 127.0.1.1:53 0.0.0.0:* 1023/dnsmasq
udp 0 0 0.0.0.0:68 0.0.0.0:* 26791/dhclient
udp 0 0 0.0.0.0:5353 0.0.0.0:* 818/avahi-daemon: r
udp 0 0 0.0.0.0:36101 0.0.0.0:* 818/avahi-daemon: r
udp6 0 0 :::54133 :::* 818/avahi-daemon: r
udp6 0 0 :::5353 :::* 818/avahi-daemon: r

root@ubuntu:~# lsof -i:2181
COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME
java 6462 root 34u IPv6 495094 0t0 TCP *:2181 (LISTEN)
java 6462 root 40u IPv6 1615785 0t0 TCP 192.168.225.128:2181->172.17.0.3:45638 (ESTABLISHED)
java 6888 root 13u IPv6 498296 0t0 TCP 192.168.225.128:49148->192.168.225.128:2181 (CLOSE_WAIT)

root@ubuntu:~# lsof -i:2182
COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME
java 6561 root 34u IPv6 495425 0t0 TCP *:2182 (LISTEN)
java 6561 root 41u IPv6 1615791 0t0 TCP 192.168.225.128:2182->172.17.0.4:60280 (ESTABLISHED)
java 6955 root 13u IPv6 500012 0t0 TCP 192.168.225.128:59822->192.168.225.128:2182 (CLOSE_WAIT)

root@ubuntu:~# lsof -i:2183
COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME
java 6670 root 34u IPv6 495813 0t0 TCP *:2183 (LISTEN)
java 6670 root 39u IPv6 1615789 0t0 TCP 192.168.225.128:2183->172.17.0.2:43694 (ESTABLISHED)

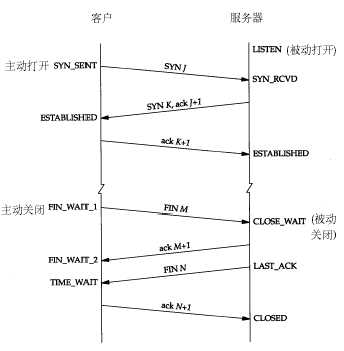

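The three states seen most often are: ESTABLISHED, meaning the connection is actively communicating; TIME_WAIT, which appears on the side that closed the connection first (active close); and CLOSE_WAIT, which appears on the side whose peer closed first and which has not yet closed its own end (passive close).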
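When the netstat listing gets long, a per-state count is easier to read than the raw rows. A minimal sketch, assuming the column layout shown by `netstat -an` or `netstat -atunp` on this host, where the state is the sixth field (`count_tcp_states` is a hypothetical helper):

```shell
#!/bin/sh
# Hypothetical helper: read netstat output on stdin and print how many
# TCP sockets are in each state, sorted by state name.
count_tcp_states() {
    awk '$1 ~ /^tcp/ { n[$6]++ } END { for (s in n) print s, n[s] }' | LC_ALL=C sort
}

# Intended use on the host:
# netstat -an | count_tcp_states
```

A large CLOSE_WAIT count usually points at a local process that has not closed its sockets after the peer went away.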

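II. Kafka

1. Introduction to Kafka

Kafka is a distributed messaging system based on publish/subscribe. Its main design goals are: (1) provide message persistence with O(1) time complexity, keeping constant-time access performance even for data of TB scale and above; (2) high throughput, supporting more than 100K messages per second on a single machine even on very cheap commodity hardware; (3) support message partitioning across Kafka servers and distributed consumption, while guaranteeing in-order delivery of messages within each partition; (4) support both offline and real-time data processing; (5) scale out, meaning online horizontal scaling is supported.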

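Comparison of common message queues

RabbitMQ: RabbitMQ is an open-source message queue written in Erlang. It supports many protocols (AMQP, XMPP, SMTP, STOMP), which also makes it quite heavyweight and better suited to enterprise development. It implements a broker architecture, meaning that messages are queued in a central queue before being delivered to clients. It has good support for routing, load balancing and data persistence.

Redis: Redis is a key-value NoSQL database with very active development and maintenance. Although it is a key-value store, it also supports MQ functionality, so it can serve perfectly well as a lightweight queue service. In one benchmark, enqueue and dequeue operations were each run 1 million times against RabbitMQ and Redis, recording the elapsed time every 100,000 operations, with payloads of 128 bytes, 512 bytes, 1 KB and 10 KB. For enqueue, Redis outperformed RabbitMQ when payloads were small, but became unbearably slow once the payload exceeded 10 KB. For dequeue, Redis performed very well regardless of payload size, while RabbitMQ's dequeue performance was far below that of Redis.

ZeroMQ: ZeroMQ bills itself as the fastest message queue system, especially for high-throughput scenarios. It can implement advanced or complex queues that RabbitMQ is not good at, but developers have to combine several technical frameworks themselves, and this technical complexity is a challenge to applying it successfully. ZeroMQ has a distinctive non-middleware model: there is no message server or middleware to install and run, because the application itself plays the server role. You simply reference the ZeroMQ library (it can be installed with NuGet) and can then send messages between applications. However, ZeroMQ only provides non-persistent queues, so data is lost if a node goes down. Twitter's Storm used ZeroMQ as its default data-stream transport in versions before 0.9.0 (from 0.9 on, Storm supports both ZeroMQ and Netty as transport modules).

ActiveMQ: ActiveMQ is an Apache subproject. Like ZeroMQ, it can implement queues in both brokered and peer-to-peer styles. Like RabbitMQ, it can implement advanced scenarios efficiently with little code.

Kafka/Jafka: Kafka is an Apache subproject, a high-performance, cross-language, distributed publish/subscribe message queue system; Jafka was incubated on top of Kafka as a derivative of it. Kafka has the following features: fast persistence, with messages persisted at O(1) system overhead; high throughput, reaching 100,000 messages per second on an ordinary server; a fully distributed design in which brokers, producers and consumers all support distribution natively and load balancing is automatic; and support for parallel data loading into Hadoop, which makes it a workable solution for log data and offline analysis systems such as Hadoop that also need real-time processing. Kafka unifies online and offline message processing through Hadoop's parallel loading mechanism. Compared with ActiveMQ, Apache Kafka is a very lightweight messaging system that, besides performing very well, is also a well-behaved distributed system.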

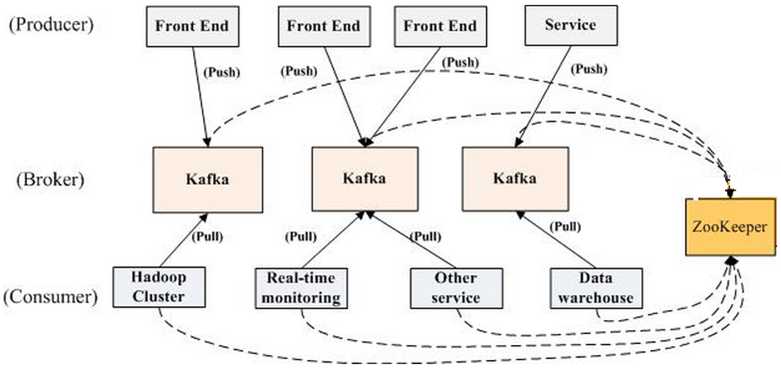

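2. Kafka architecture

Terminology

Broker: A Kafka cluster contains one or more servers; each such server is called a broker.

Topic: Every message published to a Kafka cluster has a category, called its topic. (Physically, messages of different topics are stored separately. Logically, a topic's messages may be stored on one or more brokers, but users only need to name the topic to produce or consume data and do not need to care where the data is stored.)

Partition: A partition is a physical concept; each topic contains one or more partitions.

Producer: Publishes messages to Kafka brokers.

Consumer: The message consumer, a client that reads messages from Kafka brokers.

Consumer Group: Each consumer belongs to a specific consumer group (a group name can be set for each consumer; a consumer without one belongs to the default group).

A typical Kafka cluster contains a number of producers (for example page views generated by a web front end, server logs, or system CPU and memory metrics), a number of brokers (Kafka scales horizontally; in general, the more brokers, the higher the cluster throughput), a number of consumer groups, and a ZooKeeper cluster. Kafka uses ZooKeeper to manage cluster configuration, elect leaders, and rebalance when a consumer group changes. Producers publish messages to brokers in push mode; consumers subscribe to and consume messages from brokers in pull mode.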

3. Building the Kafka image and the container cluster

(1). Write the Dockerfile

root@ubuntu:/home/zhl/kafka# vi Dockerfile
FROM index.tenxcloud.com/docker_library/java
MAINTAINER HaHa
COPY kafka_2.10-0.9.0.1.tgz /tmp/
RUN tar -xzf /tmp/kafka_2.10-0.9.0.1.tgz -C /opt
RUN mv /opt/kafka_2.10-0.9.0.1 /opt/kafka
# Remove the Kafka archive that was copied in above
RUN rm -f /tmp/kafka_2.10-0.9.0.1.tgz
ENV KAFKA_HOME /opt/kafka
ADD start-kafka.sh /usr/bin/start-kafka.sh
RUN chmod 777 /usr/bin/start-kafka.sh
CMD /usr/bin/start-kafka.sh

(2). Write the container startup script

root@ubuntu:/home/zhl/kafka# vi start-kafka.sh
#!/bin/sh
# Back up the stock config, then patch it from the container's environment variables
cp $KAFKA_HOME/config/server.properties $KAFKA_HOME/config/server.properties.bk
sed -r -i "s/(zookeeper.connect)=(.*)/\1=${ZK}/g" $KAFKA_HOME/config/server.properties
sed -r -i "s/(broker.id)=(.*)/\1=${BROKER_ID}/g" $KAFKA_HOME/config/server.properties
sed -r -i "s/(log.dirs)=(.*)/\1=\/tmp\/kafka-logs-${BROKER_ID}/g" $KAFKA_HOME/config/server.properties
# These two settings are commented out in the stock file, so the leading "#" is dropped
sed -r -i "s/#(advertised.host.name)=(.*)/\1=${HOST_IP}/g" $KAFKA_HOME/config/server.properties
sed -r -i "s/#(port)=(.*)/\1=${PORT}/g" $KAFKA_HOME/config/server.properties
sed -r -i "s/(listeners)=(.*)/\1=PLAINTEXT:\/\/:${PORT}/g" $KAFKA_HOME/config/server.properties
# Override the JVM heap options only when KAFKA_HEAP_OPTS is set
if [ "$KAFKA_HEAP_OPTS" != "" ]; then
    sed -r -i "s/^(export KAFKA_HEAP_OPTS)=\"(.*)\"/\1=\"$KAFKA_HEAP_OPTS\"/g" $KAFKA_HOME/bin/kafka-server-start.sh
fi
$KAFKA_HOME/bin/kafka-server-start.sh $KAFKA_HOME/config/server.properties
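The repeated `sed` calls all do the same thing: set one key in server.properties, uncommenting it if necessary. The sketch below factors that into a single function so the substitution can be tried on a scratch file first. `set_prop` is a hypothetical helper; it treats dots in the key as regex wildcards and assumes the value contains no `|` or `&` characters.

```shell
#!/bin/sh
# Hypothetical helper: set KEY=VALUE in a properties FILE, replacing an
# existing "KEY=..." or commented-out "#KEY=..." line.
set_prop() {
    key="$1"
    value="$2"
    file="$3"
    tmp="${file}.tmp.$$"
    # "|" is the sed delimiter so values containing "/" need no escaping.
    sed "s|^#\{0,1\}${key}=.*|${key}=${value}|" "$file" > "$tmp" && mv "$tmp" "$file"
}

# Dry run on a scratch copy with a few lines in the style of server.properties
scratch=$(mktemp)
printf 'broker.id=0\n#port=9092\nlog.dirs=/tmp/kafka-logs\n' > "$scratch"
set_prop broker.id 1 "$scratch"
set_prop port 9091 "$scratch"
set_prop log.dirs /tmp/kafka-logs-1 "$scratch"
cat "$scratch"
rm -f "$scratch"
```

Writing to a temporary file and moving it into place avoids relying on the GNU-only `-i` flag.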

(3). Build the image

docker build -t kafkatest .

(4). Start 3 Kafka container instances from the kafkatest image

K_PORT=9091
docker run --name=k1 -p ${K_PORT}:${K_PORT} -e BROKER_ID=1 -e HOST_IP=${HOST_IP} -e PORT=${K_PORT} -e ZK='192.168.225.128:2181,192.168.225.128:2182,192.168.225.128:2183' -d kafkatest
K_PORT=9092
docker run --name=k2 -p ${K_PORT}:${K_PORT} -e BROKER_ID=2 -e HOST_IP=${HOST_IP} -e PORT=${K_PORT} -e ZK='192.168.225.128:2181,192.168.225.128:2182,192.168.225.128:2183' -d kafkatest
K_PORT=9093
docker run --name=k3 -p ${K_PORT}:${K_PORT} -e BROKER_ID=3 -e HOST_IP=${HOST_IP} -e PORT=${K_PORT} -e ZK='192.168.225.128:2181,192.168.225.128:2182,192.168.225.128:2183' -d kafkatest
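The three invocations differ only in the broker id and the port (9090 plus the id). As a sketch, a loop can generate them; the version below only prints the commands so they can be reviewed before running. `kafka_run_cmds` is a hypothetical helper, and the image name and flags are copied from the commands above.

```shell
#!/bin/sh
# Hypothetical helper: print (not execute) the docker run command for
# brokers 1..3, using port 9090 + broker id as in the commands above.
kafka_run_cmds() {
    host_ip="$1"
    zk="$2"
    broker_id=1
    while [ "$broker_id" -le 3 ]; do
        port=$((9090 + broker_id))
        printf "docker run --name=k%d -p %d:%d -e BROKER_ID=%d -e HOST_IP=%s -e PORT=%d -e ZK='%s' -d kafkatest\n" \
            "$broker_id" "$port" "$port" "$broker_id" "$host_ip" "$port" "$zk"
        broker_id=$((broker_id + 1))
    done
}

kafka_run_cmds 192.168.225.128 '192.168.225.128:2181,192.168.225.128:2182,192.168.225.128:2183'
```

Once the printed commands look right, the output can be piped to `sh` to actually start the containers.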

Check the result:

root@ubuntu:/home/zhl/kafka# docker ps
CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES
637beebec29e kafkatest "/bin/sh -c /usr/b..." 9 minutes ago Up 9 minutes 0.0.0.0:9093->9093/tcp k3
4bf4925e6f40 kafkatest "/bin/sh -c /usr/b..." 9 minutes ago Up 9 minutes 0.0.0.0:9092->9092/tcp k2
988c32940785 kafkatest "/bin/sh -c /usr/b..." 11 minutes ago Up 11 minutes 0.0.0.0:9091->9091/tcp k1
c1ab885b2770 zk "/opt/entrypoint.sh" 4 hours ago Up 4 hours zk3
b939bfa60ea2 zk "/opt/entrypoint.sh" 4 hours ago Up 4 hours zk2
bd161a246c28 zk "/opt/entrypoint.sh" 4 hours ago Up 4 hours zk1

root@ubuntu:~# ps -ef | grep config/server
root 4755 4742 0 13:24 ? 00:01:51 /usr/lib/jvm/java-8-openjdk-amd64/bin/java -Xmx1G -Xms1G -server -XX:+UseG1GC -XX:MaxGCPauseMillis=20 -XX:InitiatingHeapOccupancyPercent=35 -XX:+DisableExplicitGC -Djava.awt.headless=true -Xloggc:/opt/kafka/bin/../logs/kafkaServer-gc.log -verbose:gc -XX:+PrintGCDetails -XX:+PrintGCDateStamps -XX:+PrintGCTimeStamps -Dcom.sun.management.jmxremote -Dcom.sun.management.jmxremote.authenticate=false -Dcom.sun.management.jmxremote.ssl=false -Dkafka.logs.dir=/opt/kafka/bin/../logs -Dlog4j.configuration=file:/opt/kafka/bin/../config/log4j.properties -cp :/opt/kafka/bin/../libs/* kafka.Kafka /opt/kafka/config/server.properties
root 4954 4943 0 13:25 ? 00:01:55 /usr/lib/jvm/java-8-openjdk-amd64/bin/java -Xmx1G -Xms1G -server -XX:+UseG1GC -XX:MaxGCPauseMillis=20 -XX:InitiatingHeapOccupancyPercent=35 -XX:+DisableExplicitGC -Djava.awt.headless=true -Xloggc:/opt/kafka/bin/../logs/kafkaServer-gc.log -verbose:gc -XX:+PrintGCDetails -XX:+PrintGCDateStamps -XX:+PrintGCTimeStamps -Dcom.sun.management.jmxremote -Dcom.sun.management.jmxremote.authenticate=false -Dcom.sun.management.jmxremote.ssl=false -Dkafka.logs.dir=/opt/kafka/bin/../logs -Dlog4j.configuration=file:/opt/kafka/bin/../config/log4j.properties -cp :/opt/kafka/bin/../libs/* kafka.Kafka /opt/kafka/config/server.properties
root 5107 5095 0 13:25 ? 00:01:52 /usr/lib/jvm/java-8-openjdk-amd64/bin/java -Xmx1G -Xms1G -server -XX:+UseG1GC -XX:MaxGCPauseMillis=20 -XX:InitiatingHeapOccupancyPercent=35 -XX:+DisableExplicitGC -Djava.awt.headless=true -Xloggc:/opt/kafka/bin/../logs/kafkaServer-gc.log -verbose:gc -XX:+PrintGCDetails -XX:+PrintGCDateStamps -XX:+PrintGCTimeStamps -Dcom.sun.management.jmxremote -Dcom.sun.management.jmxremote.authenticate=false -Dcom.sun.management.jmxremote.ssl=false -Dkafka.logs.dir=/opt/kafka/bin/../logs -Dlog4j.configuration=file:/opt/kafka/bin/../config/log4j.properties -cp :/opt/kafka/bin/../libs/* kafka.Kafka /opt/kafka/config/server.properties
root 28057 3295 0 23:57 pts/2 00:00:00 grep --color=auto config/server

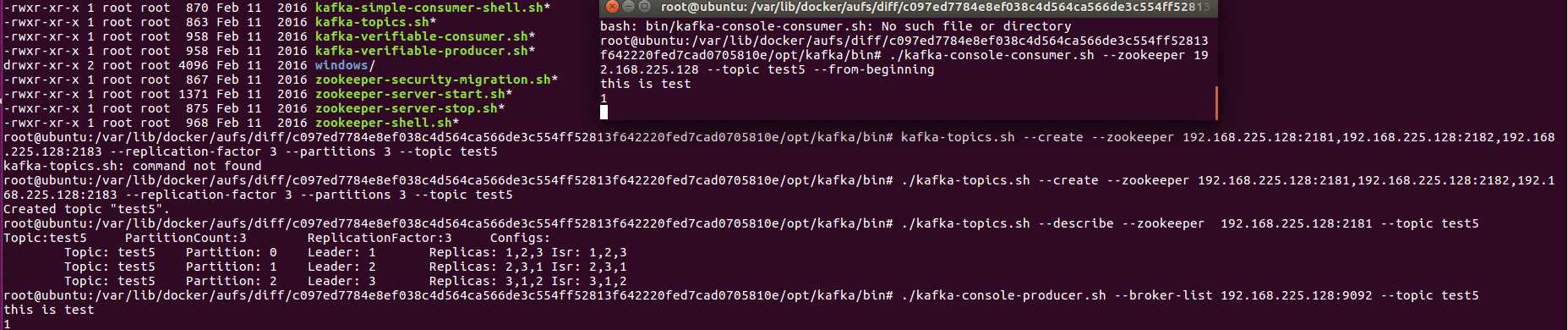

(5). Test from the command line by starting a producer and a consumer (http://www.jianshu.com/p/dc4770fc34b6)

// Go to the kafka bin directory and create the topic "test5" with 3 partitions and a replication factor of 3

root@ubuntu:/var/lib/docker/aufs/diff/c097ed7784e8ef038c4d564ca566de3c554ff52813f642220fed7cad0705810e/opt/kafka/bin# ./kafka-topics.sh --create --zookeeper 192.168.225.128:2181,192.168.225.128:2182,192.168.225.128:2183 --replication-factor 3 --partitions 3 --topic test5
Created topic "test5".

// Show the details of topic "test5"

root@ubuntu:/var/lib/docker/aufs/diff/c097ed7784e8ef038c4d564ca566de3c554ff52813f642220fed7cad0705810e/opt/kafka/bin# ./kafka-topics.sh --describe --zookeeper 192.168.225.128:2181 --topic test5
Topic:test5 PartitionCount:3 ReplicationFactor:3 Configs:
Topic: test5 Partition: 0 Leader: 1 Replicas: 1,2,3 Isr: 1,2,3
Topic: test5 Partition: 1 Leader: 2 Replicas: 2,3,1 Isr: 2,3,1
Topic: test5 Partition: 2 Leader: 3 Replicas: 3,1,2 Isr: 3,1,2

// Start the producer. --broker-list may name one or more nodes of the broker cluster

root@ubuntu:/var/lib/docker/aufs/diff/c097ed7784e8ef038c4d564ca566de3c554ff52813f642220fed7cad0705810e/opt/kafka/bin# ./kafka-console-producer.sh --broker-list 192.168.225.128:9092 --topic test5
this is test 1

// Open a new terminal window and start the consumer

root@ubuntu:/var/lib/docker/aufs/diff/c097ed7784e8ef038c4d564ca566de3c554ff52813f642220fed7cad0705810e/opt/kafka/bin# ./kafka-console-consumer.sh --zookeeper 192.168.225.128 --topic test5 --from-beginning
this is test 1
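In the `--describe` output, a healthy partition has as many entries in `Isr` (in-sync replicas) as in `Replicas`. The following sketch flags partitions where that is not the case; it only parses text in the format shown above, and `under_replicated` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: read "kafka-topics.sh --describe" output on stdin
# and print the partition numbers whose Isr list is shorter than their
# Replicas list.
under_replicated() {
    awk '
        /Partition:/ && /Isr:/ {
            for (i = 1; i < NF; i++) {
                if ($i == "Partition:") p = $(i + 1)
                if ($i == "Replicas:")  r = split($(i + 1), a, ",")
                if ($i == "Isr:")       s = split($(i + 1), b, ",")
            }
            if (s < r) print p
        }
    '
}

# Intended use (needs a running cluster, so it is left commented out):
# ./kafka-topics.sh --describe --zookeeper 192.168.225.128:2181 --topic test5 | under_replicated
```

For the test5 output above nothing is printed, because every partition lists three replicas and three in-sync replicas.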

Contents of server.properties:

root@ubuntu:/# cat ./var/lib/docker/aufs/diff/6befb484b490a6f34d4a0e5ca00f2eda7c92bce972cabf510518ed4ce12b1fef/opt/kafka/config/server.properties
# Licensed to the Apache Software Foundation (ASF) under one or more
# contributor license agreements. See the NOTICE file distributed with
# this work for additional information regarding copyright ownership.
# The ASF licenses this file to You under the Apache License, Version 2.0
# (the "License"); you may not use this file except in compliance with
# the License. You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
# see kafka.server.KafkaConfig for additional details and defaults

############################# Server Basics #############################

# The id of the broker. This must be set to a unique integer for each broker.
# (note) The unique identifier of each broker within the cluster
broker.id=1

############################# Socket Server Settings #############################

listeners=PLAINTEXT://:9091

# The port the socket server listens on
port=9091

# Hostname the broker will bind to. If not set, the server will bind to all interfaces
#host.name=localhost

# Hostname the broker will advertise to producers and consumers. If not set, it uses the
# value for "host.name" if configured. Otherwise, it will use the value returned from
# java.net.InetAddress.getCanonicalHostName().
advertised.host.name=192.168.225.128

# The port to publish to ZooKeeper for clients to use. If this is not set,
# it will publish the same port that the broker binds to.
#advertised.port=<port accessible by clients>

# The number of threads handling network requests
num.network.threads=3

# The number of threads doing disk I/O
num.io.threads=8

# The send buffer (SO_SNDBUF) used by the socket server
socket.send.buffer.bytes=102400

# The receive buffer (SO_RCVBUF) used by the socket server
socket.receive.buffer.bytes=102400

# The maximum size of a request that the socket server will accept (protection against OOM)
socket.request.max.bytes=104857600

############################# Log Basics #############################

# A comma seperated list of directories under which to store log files
log.dirs=/tmp/kafka-logs-1

# The default number of log partitions per topic. More partitions allow greater
# parallelism for consumption, but this will also result in more files across
# the brokers.
num.partitions=1

# The number of threads per data directory to be used for log recovery at startup and flushing at shutdown.
# This value is recommended to be increased for installations with data dirs located in RAID array.
num.recovery.threads.per.data.dir=1

############################# Log Flush Policy #############################

# Messages are immediately written to the filesystem but by default we only fsync() to sync
# the OS cache lazily. The following configurations control the flush of data to disk.
# There are a few important trade-offs here:
# 1. Durability: Unflushed data may be lost if you are not using replication.
# 2. Latency: Very large flush intervals may lead to latency spikes when the flush does occur as there will be a lot of data to flush.
# 3. Throughput: The flush is generally the most expensive operation, and a small flush interval may lead to exceessive seeks.
# The settings below allow one to configure the flush policy to flush data after a period of time or
# every N messages (or both). This can be done globally and overridden on a per-topic basis.

# The number of messages to accept before forcing a flush of data to disk
#log.flush.interval.messages=10000

# The maximum amount of time a message can sit in a log before we force a flush
#log.flush.interval.ms=1000

############################# Log Retention Policy #############################

# The following configurations control the disposal of log segments. The policy can
# be set to delete segments after a period of time, or after a given size has accumulated.
# A segment will be deleted whenever *either* of these criteria are met. Deletion always happens
# from the end of the log.

# The minimum age of a log file to be eligible for deletion
log.retention.hours=168

# A size-based retention policy for logs. Segments are pruned from the log as long as the remaining
# segments don't drop below log.retention.bytes.
#log.retention.bytes=1073741824

# The maximum size of a log segment file. When this size is reached a new log segment will be created.
log.segment.bytes=1073741824

# The interval at which log segments are checked to see if they can be deleted according
# to the retention policies
log.retention.check.interval.ms=300000

############################# Zookeeper #############################

# Zookeeper connection string (see zookeeper docs for details).
# This is a comma separated host:port pairs, each corresponding to a zk
# server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".
# You can also append an optional chroot string to the urls to specify the
# root directory for all kafka znodes.
# (note) Address of the zookeeper cluster; several addresses may be given, separated by commas
zookeeper.connect=192.168.225.128:2181,192.168.225.128:2182,192.168.225.128:2183

# Timeout in ms for connecting to zookeeper
zookeeper.connection.timeout.ms=6000

III. Microservices

1. Start 3 instances from the microservice image mstest

root@ubuntu:/home/zhl/mstest# PORT=18081
root@ubuntu:/home/zhl/mstest# docker run -d --name=kaka1 --hostname=kaka1 -p ${PORT}:8080 -e ZK='192.168.225.128:2181,192.168.225.128:2182,192.168.225.128:2183' -e KAFKA='192.168.225.128:9091,192.168.225.128:9092,192.168.225.128:9093' mstest
3c11d508bf89d96c73e29747b79095225775c2daab88cf4075e81be567a9444b
root@ubuntu:/home/zhl/mstest# PORT=18080
root@ubuntu:/home/zhl/mstest# docker run -d --name=kaka2 --hostname=kaka2 -p ${PORT}:8080 -e ZK='192.168.225.128:2181,192.168.225.128:2182,192.168.225.128:2183' -e KAFKA='192.168.225.128:9091,192.168.225.128:9092,192.168.225.128:9093' mstest
8531d77e485c1c722dc6f2f6fc2fce3e9e316040c1ea7b0f0fb3d991fa3b2e46
root@ubuntu:/home/zhl/mstest# PORT=18082
root@ubuntu:/home/zhl/mstest# docker run -d --name=kaka3 --hostname=kaka3 -p ${PORT}:8080 -e ZK='192.168.225.128:2181,192.168.225.128:2182,192.168.225.128:2183' -e KAFKA='192.168.225.128:9091,192.168.225.128:9092,192.168.225.128:9093' mstest
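The `ZK` and `KAFKA` values are comma-separated `host:port` lists that repeat the same host IP. A small sketch for building them from one host and a list of ports, which avoids typos when the address changes (`join_endpoints` is a hypothetical helper):

```shell
#!/bin/sh
# Hypothetical helper: join one host with several ports into a
# comma-separated "host:port" list.
join_endpoints() {
    host="$1"
    shift
    result=""
    for port in "$@"; do
        # Prefix a comma only when result already holds an endpoint.
        result="${result:+$result,}${host}:${port}"
    done
    printf '%s\n' "$result"
}

ZK=$(join_endpoints 192.168.225.128 2181 2182 2183)
KAFKA=$(join_endpoints 192.168.225.128 9091 9092 9093)
echo "ZK=$ZK"
echo "KAFKA=$KAFKA"
```

The resulting variables can then be passed to `docker run` as `-e ZK="$ZK" -e KAFKA="$KAFKA"`.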

2. Check that the microservices are running:

root@ubuntu:/home/zhl/mstest# docker ps -a
CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES
d64ef36d5919 mstest "/start.sh" 2 minutes ago Up 2 minutes 0.0.0.0:18081->8080/tcp kaka1
637beebec29e kafkatest "/bin/sh -c /usr/b..." 3 days ago Up 26 hours 0.0.0.0:9093->9093/tcp k3
4bf4925e6f40 kafkatest "/bin/sh -c /usr/b..." 3 days ago Up 26 hours 0.0.0.0:9092->9092/tcp k2
988c32940785 kafkatest "/bin/sh -c /usr/b..." 3 days ago Up 26 hours 0.0.0.0:9091->9091/tcp k1
c1ab885b2770 zk "/opt/entrypoint.sh" 4 days ago Up 25 hours zk3
b939bfa60ea2 zk "/opt/entrypoint.sh" 4 days ago Up 25 hours zk2
bd161a246c28 zk "/opt/entrypoint.sh" 4 days ago Up 25 hours zk1

root@ubuntu:/home/zhl/mstest# docker logs d6
ZK=192.168.225.128:2181,192.168.225.128:2182,192.168.225.128:2183
KAFKA=192.168.225.128:9091,192.168.225.128:9092,192.168.225.128:9093
zk.kaka.properties:
====
spring.application.name=bootZKKafka
spring.cloud.config.enabled=false
spring.cloud.zookeeper.connectString=192.168.225.128:2181,192.168.225.128:2182,192.168.225.128:2183
server.port=8080
endpoints.restart.enabled=true
endpoints.shutdown.enabled=true
endpoints.health.sensitive=false
logging.level.org.apache.zookeeper.ClientCnxn: WARN
zkConnect=192.168.225.128:2181,192.168.225.128:2182,192.168.225.128:2183
kafkaServerList=192.168.225.128:9091,192.168.225.128:9092,192.168.225.128:9093
group=my_group
topic=my_story
key=my_dream
====
start micro services...
SLF4J: Class path contains multiple SLF4J bindings.
SLF4J: Found binding in [jar:file:/my_app.jar!/lib/logback-classic-1.1.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/my_app.jar!/lib/slf4j-log4j12-1.7.16.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
SLF4J: Actual binding is of type [ch.qos.logback.classic.util.ContextSelectorStaticBinder]
2017-09-19 08:14:54.994 INFO 9 --- [ main] s.c.a.AnnotationConfigApplicationContext : Refreshing org.springframework.context.annotation.AnnotationConfigApplicationContext@30f92868: startup date [Tue Sep 19 08:14:54 UTC 2017]; root of context hierarchy
2017-09-19 08:14:55.715 INFO 9 --- [ main] f.a.AutowiredAnnotationBeanPostProcessor : JSR-330 'javax.inject.Inject' annotation found and supported for autowiring
2017-09-19 08:14:55.782 INFO 9 --- [ main] trationDelegate$BeanPostProcessorChecker : Bean 'configurationPropertiesRebinderAutoConfiguration' of type [class org.springframework.cloud.autoconfigure.ConfigurationPropertiesRebinderAutoConfiguration$$EnhancerBySpringCGLIB$$faa8dd1d] is not eligible for getting processed by all BeanPostProcessors (for example: not eligible for auto-proxying)
2017-09-19 08:14:56.445 INFO 9 --- [ main] o.a.c.f.imps.CuratorFrameworkImpl : Starting
2017-09-19 08:14:56.466 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:zookeeper.version=3.4.6-1569965, built on 02/20/2014 09:09 GMT
2017-09-19 08:14:56.466 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:host.name=kaka1
2017-09-19 08:14:56.466 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:java.version=1.8.0_91
2017-09-19 08:14:56.466 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:java.vendor=Oracle Corporation
2017-09-19 08:14:56.466 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:java.home=/usr/lib/jvm/java-8-openjdk-amd64/jre
2017-09-19 08:14:56.466 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:java.class.path=/my_app.jar
2017-09-19 08:14:56.466 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:java.library.path=/usr/java/packages/lib/amd64:/usr/lib/x86_64-linux-gnu/jni:/lib/x86_64-linux-gnu:/usr/lib/x86_64-linux-gnu:/usr/lib/jni:/lib:/usr/lib
2017-09-19 08:14:56.466 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:java.io.tmpdir=/tmp
2017-09-19 08:14:56.466 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:java.compiler=<NA>
2017-09-19 08:14:56.466 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:os.name=Linux
2017-09-19 08:14:56.466 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:os.arch=amd64
2017-09-19 08:14:56.466 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:os.version=4.10.0-33-generic
2017-09-19 08:14:56.466 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:user.name=root
2017-09-19 08:14:56.467 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:user.home=/root
2017-09-19 08:14:56.467 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:user.dir=/
2017-09-19 08:14:56.470 INFO 9 --- [ main] org.apache.zookeeper.ZooKeeper : Initiating client connection, connectString=192.168.225.128:2181,192.168.225.128:2182,192.168.225.128:2183 sessionTimeout=60000 watcher=org.apache.curator.ConnectionState@4487109a
2017-09-19 08:14:56.789 INFO 9 --- [ain-EventThread] o.a.c.f.state.ConnectionStateManager : State change: CONNECTED

  .   ____          _            __ _ _
 /\\ / ___'_ __ _ _(_)_ __  __ _ \ \ \ \
( ( )\___ | '_ | '_| | '_ \/ _` | \ \ \ \
 \\/  ___)| |_)| | | | | || (_| |  ) ) ) )
  '  |____| .__|_| |_|_| |_\__, | / / / /
 =========|_|==============|___/=/_/_/_/
 :: Spring Boot ::        (v1.3.3.RELEASE)

2017-09-19 08:14:57.337 INFO 9 --- [ main] b.c.PropertySourceBootstrapConfiguration : Located property source: CompositePropertySource [name='zookeeper', propertySources=[ZookeeperPropertySource [name='config/bootZKKafka'], ZookeeperPropertySource [name='config/application']]]
2017-09-19 08:14:57.447 INFO 9 --- [ main] com.example.KafkaApplication : No active profile set, falling back to default profiles: default
2017-09-19 08:14:57.486 INFO 9 --- [ main] ationConfigEmbeddedWebApplicationContext : Refreshing org.springframework.boot.context.embedded.AnnotationConfigEmbeddedWebApplicationContext@1c7faa8a: startup date [Tue Sep 19 08:14:57 UTC 2017]; parent: org.springframework.context.annotation.AnnotationConfigApplicationContext@30f92868
2017-09-19 08:14:59.061 INFO 9 --- [ main] o.s.b.f.s.DefaultListableBeanFactory : Overriding bean definition for bean 'requestContextFilter' with a different definition: replacing [Root bean: class [null]; scope=; abstract=false; lazyInit=false; autowireMode=3; dependencyCheck=0; autowireCandidate=true; primary=false; factoryBeanName=org.springframework.boot.autoconfigure.jersey.JerseyAutoConfiguration; factoryMethodName=requestContextFilter; initMethodName=null; destroyMethodName=(inferred); defined in class path resource [org/springframework/boot/autoconfigure/jersey/JerseyAutoConfiguration.class]] with [Root bean: class [null]; scope=; abstract=false; lazyInit=false; autowireMode=3; dependencyCheck=0; autowireCandidate=true; primary=false; factoryBeanName=org.springframework.boot.autoconfigure.web.WebMvcAutoConfiguration$WebMvcAutoConfigurationAdapter; factoryMethodName=requestContextFilter; initMethodName=null; destroyMethodName=(inferred); defined in class path resource 
[org/springframework/boot/autoconfigure/web/WebMvcAutoConfiguration$WebMvcAutoConfigurationAdapter.class]] 2017-09-19 08:14:59.064 INFO 9 --- [ main] o.s.b.f.s.DefaultListableBeanFactory : Overriding bean definition for bean ‘beanNameViewResolver‘ with a different definition: replacing [Root bean: class [null]; scope=; abstract=false; lazyInit=false; autowireMode=3; dependencyCheck=0; autowireCandidate=true; primary=false; factoryBeanName=org.springframework.boot.autoconfigure.web.ErrorMvcAutoConfiguration$WhitelabelErrorViewConfiguration; factoryMethodName=beanNameViewResolver; initMethodName=null; destroyMethodName=(inferred); defined in class path resource [org/springframework/boot/autoconfigure/web/ErrorMvcAutoConfiguration$WhitelabelErrorViewConfiguration.class]] with [Root bean: class [null]; scope=; abstract=false; lazyInit=false; autowireMode=3; dependencyCheck=0; autowireCandidate=true; primary=false; factoryBeanName=org.springframework.boot.autoconfigure.web.WebMvcAutoConfiguration$WebMvcAutoConfigurationAdapter; factoryMethodName=beanNameViewResolver; initMethodName=null; destroyMethodName=(inferred); defined in class path resource [org/springframework/boot/autoconfigure/web/WebMvcAutoConfiguration$WebMvcAutoConfigurationAdapter.class]] 2017-09-19 08:14:59.364 INFO 9 --- [ main] o.s.cloud.context.scope.GenericScope : BeanFactory id=c693bc6c-904d-38f8-8ece-872daebe5dd1 2017-09-19 08:14:59.390 INFO 9 --- [ main] f.a.AutowiredAnnotationBeanPostProcessor : JSR-330 ‘javax.inject.Inject‘ annotation found and supported for autowiring 2017-09-19 08:14:59.425 INFO 9 --- [ main] trationDelegate$BeanPostProcessorChecker : Bean ‘org.springframework.cloud.autoconfigure.ConfigurationPropertiesRebinderAutoConfiguration‘ of type [class org.springframework.cloud.autoconfigure.ConfigurationPropertiesRebinderAutoConfiguration$$EnhancerBySpringCGLIB$$faa8dd1d] is not eligible for getting processed by all BeanPostProcessors (for example: not eligible for auto-proxying) 
2017-09-19 08:15:00.563 INFO 9 --- [ main] s.b.c.e.t.TomcatEmbeddedServletContainer : Tomcat initialized with port(s): 8080 (http) 2017-09-19 08:15:00.601 INFO 9 --- [ main] o.apache.catalina.core.StandardService : Starting service Tomcat 2017-09-19 08:15:00.603 INFO 9 --- [ main] org.apache.catalina.core.StandardEngine : Starting Servlet Engine: Apache Tomcat/8.0.32 2017-09-19 08:15:00.816 INFO 9 --- [ost-startStop-1] o.a.c.c.C.[Tomcat].[localhost].[/] : Initializing Spring embedded WebApplicationContext 2017-09-19 08:15:00.816 INFO 9 --- [ost-startStop-1] o.s.web.context.ContextLoader : Root WebApplicationContext: initialization completed in 3330 ms 2017-09-19 08:15:01.744 INFO 9 --- [ost-startStop-1] o.s.b.c.e.ServletRegistrationBean : Mapping servlet: ‘com.example.JerseyConfig‘ to [/*] 2017-09-19 08:15:01.748 INFO 9 --- [ost-startStop-1] o.s.b.c.e.ServletRegistrationBean : Mapping servlet: ‘dispatcherServlet‘ to [/] 2017-09-19 08:15:01.756 INFO 9 --- [ost-startStop-1] o.s.b.c.embedded.FilterRegistrationBean : Mapping filter: ‘characterEncodingFilter‘ to: [/*] 2017-09-19 08:15:01.761 INFO 9 --- [ost-startStop-1] o.s.b.c.embedded.FilterRegistrationBean : Mapping filter: ‘hiddenHttpMethodFilter‘ to: [/*] 2017-09-19 08:15:01.761 INFO 9 --- [ost-startStop-1] o.s.b.c.embedded.FilterRegistrationBean : Mapping filter: ‘httpPutFormContentFilter‘ to: [/*] 2017-09-19 08:15:01.762 INFO 9 --- [ost-startStop-1] o.s.b.c.embedded.FilterRegistrationBean : Mapping filter: ‘requestContextFilter‘ to: [/*] 2017-09-19 08:15:01.943 INFO 9 --- [ main] o.a.k.clients.producer.ProducerConfig : ProducerConfig values: compression.type = none metric.reporters = [] metadata.max.age.ms = 300000 metadata.fetch.timeout.ms = 60000 reconnect.backoff.ms = 50 sasl.kerberos.ticket.renew.window.factor = 0.8 bootstrap.servers = [192.168.225.128:9091, 192.168.225.128:9092, 192.168.225.128:9093] retry.backoff.ms = 100 sasl.kerberos.kinit.cmd = /usr/bin/kinit buffer.memory = 33554432 timeout.ms = 30000 
key.serializer = class org.apache.kafka.common.serialization.StringSerializer sasl.kerberos.service.name = null sasl.kerberos.ticket.renew.jitter = 0.05 ssl.keystore.type = JKS ssl.trustmanager.algorithm = PKIX block.on.buffer.full = false ssl.key.password = null max.block.ms = 60000 sasl.kerberos.min.time.before.relogin = 60000 connections.max.idle.ms = 540000 ssl.truststore.password = null max.in.flight.requests.per.connection = 5 metrics.num.samples = 2 client.id = boost.zk.kafka ssl.endpoint.identification.algorithm = null ssl.protocol = TLS request.timeout.ms = 30000 ssl.provider = null ssl.enabled.protocols = [TLSv1.2, TLSv1.1, TLSv1] acks = 1 batch.size = 16384 ssl.keystore.location = null receive.buffer.bytes = 32768 ssl.cipher.suites = null ssl.truststore.type = JKS security.protocol = PLAINTEXT retries = 0 max.request.size = 1048576 value.serializer = class org.apache.kafka.common.serialization.StringSerializer ssl.truststore.location = null ssl.keystore.password = null ssl.keymanager.algorithm = SunX509 metrics.sample.window.ms = 30000 partitioner.class = class org.apache.kafka.clients.producer.internals.DefaultPartitioner send.buffer.bytes = 131072 linger.ms = 0 2017-09-19 08:15:02.215 INFO 9 --- [ main] o.a.kafka.common.utils.AppInfoParser : Kafka version : 0.9.0.1 2017-09-19 08:15:02.215 INFO 9 --- [ main] o.a.kafka.common.utils.AppInfoParser : Kafka commitId : 23c69d62a0cabf06 2017-09-19 08:15:02.378 INFO 9 --- [ main] o.a.k.clients.consumer.ConsumerConfig : ConsumerConfig values: metric.reporters = [] metadata.max.age.ms = 300000 value.deserializer = class org.apache.kafka.common.serialization.StringDeserializer group.id = my_group partition.assignment.strategy = [org.apache.kafka.clients.consumer.RangeAssignor] reconnect.backoff.ms = 50 sasl.kerberos.ticket.renew.window.factor = 0.8 max.partition.fetch.bytes = 1048576 bootstrap.servers = [192.168.225.128:9091, 192.168.225.128:9092, 192.168.225.128:9093] retry.backoff.ms = 100 
sasl.kerberos.kinit.cmd = /usr/bin/kinit sasl.kerberos.service.name = null sasl.kerberos.ticket.renew.jitter = 0.05 ssl.keystore.type = JKS ssl.trustmanager.algorithm = PKIX enable.auto.commit = true ssl.key.password = null fetch.max.wait.ms = 500 sasl.kerberos.min.time.before.relogin = 60000 connections.max.idle.ms = 540000 ssl.truststore.password = null session.timeout.ms = 30000 metrics.num.samples = 2 client.id = ssl.endpoint.identification.algorithm = null key.deserializer = class org.apache.kafka.common.serialization.IntegerDeserializer ssl.protocol = TLS check.crcs = true request.timeout.ms = 40000 ssl.provider = null ssl.enabled.protocols = [TLSv1.2, TLSv1.1, TLSv1] ssl.keystore.location = null heartbeat.interval.ms = 3000 auto.commit.interval.ms = 1000 receive.buffer.bytes = 32768 ssl.cipher.suites = null ssl.truststore.type = JKS security.protocol = PLAINTEXT ssl.truststore.location = null ssl.keystore.password = null ssl.keymanager.algorithm = SunX509 metrics.sample.window.ms = 30000 fetch.min.bytes = 1 send.buffer.bytes = 131072 auto.offset.reset = latest 2017-09-19 08:15:02.633 INFO 9 --- [ main] o.a.kafka.common.utils.AppInfoParser : Kafka version : 0.9.0.1 2017-09-19 08:15:02.634 INFO 9 --- [ main] o.a.kafka.common.utils.AppInfoParser : Kafka commitId : 23c69d62a0cabf06 2017-09-19 08:15:03.032 INFO 9 --- [ c] com.example.EagleService : [c], Starting 2017-09-19 08:15:03.384 WARN 9 --- [ c] org.apache.kafka.clients.NetworkClient : Error while fetching metadata with correlation id 1 : {my_story=LEADER_NOT_AVAILABLE} 2017-09-19 08:15:03.879 WARN 9 --- [ c] org.apache.kafka.clients.NetworkClient : Error while fetching metadata with correlation id 3 : {my_story=LEADER_NOT_AVAILABLE} 2017-09-19 08:15:03.987 WARN 9 --- [ c] org.apache.kafka.clients.NetworkClient : Error while fetching metadata with correlation id 5 : {my_story=LEADER_NOT_AVAILABLE} 2017-09-19 08:15:04.169 INFO 9 --- [ main] s.w.s.m.m.a.RequestMappingHandlerAdapter : Looking for 
@ControllerAdvice: org.springframework.boot.context.embedded.AnnotationConfigEmbeddedWebApplicationContext@1c7faa8a: startup date [Tue Sep 19 08:14:57 UTC 2017]; parent: org.springframework.context.annotation.AnnotationConfigApplicationContext@30f92868 2017-09-19 08:15:04.696 INFO 9 --- [ main] s.w.s.m.m.a.RequestMappingHandlerMapping : Mapped "{[/error],produces=[text/html]}" onto public org.springframework.web.servlet.ModelAndView org.springframework.boot.autoconfigure.web.BasicErrorController.errorHtml(javax.servlet.http.HttpServletRequest,javax.servlet.http.HttpServletResponse) 2017-09-19 08:15:04.699 INFO 9 --- [ main] s.w.s.m.m.a.RequestMappingHandlerMapping : Mapped "{[/error]}" onto public org.springframework.http.ResponseEntity<java.util.Map<java.lang.String, java.lang.Object>> org.springframework.boot.autoconfigure.web.BasicErrorController.error(javax.servlet.http.HttpServletRequest) 2017-09-19 08:15:04.869 INFO 9 --- [ main] o.s.w.s.handler.SimpleUrlHandlerMapping : Mapped URL path [/webjars/**] onto handler of type [class org.springframework.web.servlet.resource.ResourceHttpRequestHandler] 2017-09-19 08:15:04.871 INFO 9 --- [ main] o.s.w.s.handler.SimpleUrlHandlerMapping : Mapped URL path [/**] onto handler of type [class org.springframework.web.servlet.resource.ResourceHttpRequestHandler] 2017-09-19 08:15:05.057 INFO 9 --- [ main] o.s.w.s.handler.SimpleUrlHandlerMapping : Mapped URL path [/**/favicon.ico] onto handler of type [class org.springframework.web.servlet.resource.ResourceHttpRequestHandler] 2017-09-19 08:15:05.654 WARN 9 --- [ main] c.n.c.sources.URLConfigurationSource : No URLs will be polled as dynamic configuration sources. 2017-09-19 08:15:05.657 INFO 9 --- [ main] c.n.c.sources.URLConfigurationSource : To enable URLs as dynamic configuration sources, define System property archaius.configurationSource.additionalUrls or make config.properties available on classpath. 
2017-09-19 08:15:05.675 WARN 9 --- [ main] c.n.c.sources.URLConfigurationSource : No URLs will be polled as dynamic configuration sources. 2017-09-19 08:15:05.676 INFO 9 --- [ main] c.n.c.sources.URLConfigurationSource : To enable URLs as dynamic configuration sources, define System property archaius.configurationSource.additionalUrls or make config.properties available on classpath. 2017-09-19 08:15:05.895 INFO 9 --- [ main] o.s.j.e.a.AnnotationMBeanExporter : Registering beans for JMX exposure on startup 2017-09-19 08:15:05.938 INFO 9 --- [ main] o.s.j.e.a.AnnotationMBeanExporter : Bean with name ‘configurationPropertiesRebinder‘ has been autodetected for JMX exposure 2017-09-19 08:15:05.944 INFO 9 --- [ main] o.s.j.e.a.AnnotationMBeanExporter : Bean with name ‘refreshScope‘ has been autodetected for JMX exposure 2017-09-19 08:15:05.944 INFO 9 --- [ main] o.s.j.e.a.AnnotationMBeanExporter : Bean with name ‘environmentManager‘ has been autodetected for JMX exposure 2017-09-19 08:15:05.956 INFO 9 --- [ main] o.s.j.e.a.AnnotationMBeanExporter : Located managed bean ‘environmentManager‘: registering with JMX server as MBean [org.springframework.cloud.context.environment:name=environmentManager,type=EnvironmentManager] 2017-09-19 08:15:06.002 INFO 9 --- [ main] o.s.j.e.a.AnnotationMBeanExporter : Located managed bean ‘refreshScope‘: registering with JMX server as MBean [org.springframework.cloud.context.scope.refresh:name=refreshScope,type=RefreshScope] 2017-09-19 08:15:06.042 INFO 9 --- [ main] o.s.j.e.a.AnnotationMBeanExporter : Located managed bean ‘configurationPropertiesRebinder‘: registering with JMX server as MBean [org.springframework.cloud.context.properties:name=configurationPropertiesRebinder,context=1c7faa8a,type=ConfigurationPropertiesRebinder] 2017-09-19 08:15:06.353 INFO 9 --- [ main] o.s.c.support.DefaultLifecycleProcessor : Starting beans in phase 0 2017-09-19 08:15:06.750 INFO 9 --- [ main] s.b.c.e.t.TomcatEmbeddedServletContainer : Tomcat started on 
port(s): 8080 (http) 2017-09-19 08:15:07.490 WARN 9 --- [ main] org.apache.curator.utils.ZKPaths : The version of ZooKeeper being used doesn‘t support Container nodes. CreateMode.PERSISTENT will be used instead. 2017-09-19 08:15:07.722 INFO 9 --- [ main] com.example.KafkaApplication : Started KafkaApplication in 14.542 seconds (JVM running for 16.038)

3. Problems encountered:

When running the microservice instance, the following error appeared:

[localhost:2181)] org.apache.zookeeper.ClientCnxn : Session 0x0 for server null, unexpected error, closing socket connection and attempting reconnect

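After some investigation, the cause turned out to be a typo in the source file: it contained spring.cloud.zookeeperconnectString=ZK where it should have been spring.cloud.zookeeper.connectString=ZK (a dot was missing). With the key misspelled, the client apparently fell back to its default address localhost:2181, which is what the thread name in the error message shows.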
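A misspelled property key like this fails quietly, so a small pre-flight check before starting the service can help catch it. The sketch below is a hypothetical helper (the function name check_required_keys is an assumption, not part of the original setup); it only verifies that each required key appears at the start of a line in a properties file, followed by "=" or ":".

```shell
#!/bin/sh
# Hypothetical helper: report required keys missing from a .properties file.
# Usage: check_required_keys FILE KEY [KEY...]
# Returns 0 if every key is present, 1 otherwise.
check_required_keys() {
    file="$1"
    shift
    missing=0
    for key in "$@"; do
        # index(...) == 1 means the line starts with the literal "key=" or "key:",
        # so the dots in the key are not treated as wildcards.
        if ! awk -v k="$key" '
            index($0, k "=") == 1 || index($0, k ":") == 1 { found = 1 }
            END { exit !found }
        ' "$file"; then
            echo "missing key: $key" >&2
            missing=1
        fi
    done
    return "$missing"
}
```

For instance, running check_required_keys zk.kaka.properties spring.cloud.zookeeper.connectString server.port in the entry script before launching the jar would flag the typo above instead of letting the client retry against localhost.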

4. Testing

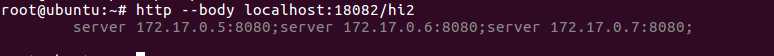

IV. Load balancing with Nginx

1. Create and edit the file /tmp/nginx/nginx.conf

worker_processes 2;

events {

worker_connections 1024;

}

http {

upstream my_service {

server 172.17.0.5:8080;

server 172.17.0.6:8080;

server 172.17.0.7:8080;

}

server {

listen 8000;

server_name localhost;

location / {

proxy_pass http://my_service;

proxy_redirect off;

}

}

}
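The upstream addresses in this configuration are the containers' IPs on Docker's default bridge network, which may change when the containers are recreated. As a minimal sketch under that assumption, the hypothetical helper below (render_upstream is an illustrative name, not something from the original setup) prints an upstream block from a list of addresses, so the block can be regenerated rather than edited by hand.

```shell
#!/bin/sh
# Hypothetical helper: print an nginx upstream block for the given backends.
# Usage: render_upstream NAME HOST:PORT [HOST:PORT...]
render_upstream() {
    name="$1"
    shift
    printf 'upstream %s {\n' "$name"
    for addr in "$@"; do
        # One "server" directive per backend address.
        printf '    server %s;\n' "$addr"
    done
    printf '}\n'
}
```

Calling render_upstream my_service 172.17.0.5:8080 172.17.0.6:8080 172.17.0.7:8080 reproduces the upstream block used here. A container's current address can be looked up with docker inspect -f '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' followed by the container name.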

2. Pull the image:

Use the NetEase Docker image: https://c.163.com/hub#/m/repository/?repoId=2967

Run: docker pull hub.c.163.com/library/nginx:latest

Once the download finishes, list the local images with: docker images

root@ubuntu:/tmp# docker images

REPOSITORY                                TAG      IMAGE ID       CREATED         SIZE

mstest                                    latest   7ae56ffdd866   2 hours ago     769MB

kafkatest                                 latest   eaa35ba12fe6   4 days ago      762MB

zk                                        latest   5c819179f3f8   4 days ago      778MB

ubuntu                                    xenial   8b72bba4485f   6 days ago      120MB

hub.c.163.com/library/nginx               latest   46102226f2fd   4 months ago    109MB

index.tenxcloud.com/docker_library/java   latest   264282a59a95   15 months ago   669MB

index.tenxcloud.com/tenxcloud/redis       latest   c9dc199d1b1c   2 years ago     190MB

3. Run the container

root@ubuntu:/tmp# docker run -d --name kaka-ng -v /tmp/website:/usr/share/nginx/html -v /tmp/nginx:/etc/nginx:ro -p 18000:8000 hub.c.163.com/library/nginx

393cedc53742e537100672b012346169d26f342efeb236437a1412446c7edc27

4. Test Nginx load balancing (round robin)

root@ubuntu:/tmp/nginx# http --body localhost:18000/hi

172.17.0.5:8080

root@ubuntu:/tmp/nginx# http --body localhost:18000/hi

172.17.0.6:8080

root@ubuntu:/tmp/nginx# http --body localhost:18000/hi

172.17.0.7:8080

root@ubuntu:/tmp/nginx# http --body localhost:18000/hi

172.17.0.5:8080

root@ubuntu:/tmp/nginx# http --body localhost:18000/hi

172.17.0.6:8080

root@ubuntu:/tmp/nginx# http --body localhost:18000/hi

172.17.0.7:8080
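Checking the rotation by eye works for six requests, but a tally is easier to read for longer runs. The sketch below is a hypothetical helper (tally_backends is an illustrative name) that assumes, as in the output above, that each response body is just the backend's address on one line; it counts how many responses came from each backend.

```shell
#!/bin/sh
# Hypothetical helper: count how many responses each backend produced.
# Reads one backend address per line on stdin, prints "COUNT ADDRESS" lines.
tally_backends() {
    # Drop blank lines, group identical addresses, then normalise
    # the padded output of uniq -c to "COUNT ADDRESS".
    awk 'NF' | sort | uniq -c | awk '{ print $1, $2 }'
}
```

A possible usage is for i in 1 2 3 4 5 6; do http --body localhost:18000/hi; done | tally_backends. With three equally weighted servers and nginx's default round-robin method, each backend should receive the same number of requests.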


Original article: http://www.cnblogs.com/FrankZhou2017/p/7524640.html