
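Word2Vec is a predictive model that learns word embeddings (dense vector representations of words) from a raw text corpus.

This post uses the skip-gram variant of Word2Vec.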
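To make the skip-gram idea concrete before the full listing: each target word is paired with the words that surround it, and the model is trained to predict the context word from the target. The sketch below is only an illustration; the helper name `skipgram_pairs` and the toy sentence are not from the original post. Unlike the `generate_batch` function used later, it lists every pair in the window instead of sampling `num_skips` of them.

```python
def skipgram_pairs(tokens, skip_window=1):
    """Return every (target, context) pair within skip_window words of each target."""
    pairs = []
    for i, target in enumerate(tokens):
        lo = max(0, i - skip_window)
        hi = min(len(tokens), i + skip_window + 1)
        for j in range(lo, hi):
            if j != i:  # a word is not its own context
                pairs.append((target, tokens[j]))
    return pairs

# Hypothetical toy sentence, chosen only for illustration.
sentence = "the quick brown fox".split()
pairs = skipgram_pairs(sentence, skip_window=1)
for target, context in pairs:
    print(target, '->', context)
```

With `skip_window=1`, "quick" is paired with "the" and "brown"; words at the edges of the sentence get only one context word.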

import collections
import math
import os
import random
import urllib.request
import zipfile

import numpy as np
import tensorflow as tf

# Base URL of the host that serves the text8 corpus.
url = 'http://mattmahoney.net/dc/'

def maybe_download(filename, expected_bytes):
    """Download the compressed data file if it is missing, then verify its size."""
    if not os.path.exists(filename):
        filename, _ = urllib.request.urlretrieve(url + filename, filename)
    statinfo = os.stat(filename)
    if statinfo.st_size == expected_bytes:
        print('Found and verified', filename)
    else:
        print(statinfo.st_size)
        raise Exception(
            'Failed to verify ' + filename + '. Can you get to it with a browser?'
        )
    return filename

filename = maybe_download('text8.zip', 31344016)

def read_data(filename):
    """Read the first file in the zip archive and split it into a list of words."""
    with zipfile.ZipFile(filename) as f:
        data = tf.compat.as_str(f.read(f.namelist()[0])).split()
    return data

words = read_data(filename)

print('Data size', len(words))

# Build the vocabulary.
vocabulary_size = 50000

def build_dataset(words):
    # Count word frequencies and keep the (vocabulary_size - 1) most common
    # words; together with 'UNK' at index 0 this gives 50000 entries.
    count = [['UNK', -1]]
    count.extend(collections.Counter(words).most_common(vocabulary_size - 1))
    dictionary = dict()
    for word, _ in count:
        dictionary[word] = len(dictionary)
    data = list()
    unk_count = 0
    # Words outside the vocabulary are encoded as 0 (UNK).
    for word in words:
        if word in dictionary:
            index = dictionary[word]
        else:
            index = 0
            unk_count += 1
        data.append(index)
    count[0][1] = unk_count
    reverse_dictionary = dict(zip(dictionary.values(), dictionary.keys()))
    return data, count, dictionary, reverse_dictionary

data, count, dictionary, reverse_dictionary = build_dataset(words)
del words  # the raw word list is no longer needed
print('Most common words (+UNK)', count[:5])
print('Sample data', data[:10], [reverse_dictionary[i] for i in data[:10]])

data_index = 0

def generate_batch(batch_size, num_skips, skip_window):
    """Generate one batch of skip-gram training pairs.

    :param batch_size: number of (target, context) pairs per batch
    :param num_skips: number of samples generated per target word; at most 2 * skip_window
    :param skip_window: number of words considered on each side of the target word
    :return: batch (target word ids), labels (context word ids)
    """
    global data_index
    assert batch_size % num_skips == 0
    assert num_skips <= 2 * skip_window
    batch = np.ndarray(shape=(batch_size), dtype=np.int32)
    labels = np.ndarray(shape=(batch_size, 1), dtype=np.int32)
    span = 2 * skip_window + 1  # window layout: [skip_window, target, skip_window]
    buffer = collections.deque(maxlen=span)
    for _ in range(span):
        buffer.append(data[data_index])
        data_index = (data_index + 1) % len(data)
    for i in range(batch_size // num_skips):  # number of target words in one batch
        target = skip_window  # the target word sits at the center of the buffer
        target_to_avoid = [skip_window]  # buffer positions that must not be sampled
        for j in range(num_skips):
            # Pick a context position that has not been used yet.
            while target in target_to_avoid:
                target = random.randint(0, span - 1)
            target_to_avoid.append(target)
            batch[i * num_skips + j] = buffer[skip_window]  # target word
            labels[i * num_skips + j, 0] = buffer[target]  # context word
        # The buffer is already full, so appending the next word drops the oldest one.
        buffer.append(data[data_index])
        data_index = (data_index + 1) % len(data)
    return batch, labels

batch, labels = generate_batch(batch_size=8, num_skips=2, skip_window=1)
for i in range(8):
    print(batch[i], reverse_dictionary[batch[i]], '->', labels[i, 0], reverse_dictionary[labels[i, 0]])

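batch_size = 128
embedding_size = 128  # dimension of the dense word vectors
skip_window = 1
num_skips = 2
valid_size = 16  # number of words used for validation
valid_window = 100  # validation words are drawn from the 100 most frequent words
valid_examples = np.random.choice(valid_window, valid_size, replace=False)
num_sampled = 64  # number of noise words sampled as negative examples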

graph = tf.Graph()

with graph.as_default():
    train_inputs = tf.placeholder(tf.int32, shape=[batch_size])
    train_labels = tf.placeholder(tf.int32, shape=[batch_size, 1])
    valid_dataset = tf.constant(valid_examples, dtype=tf.int32)

    with tf.device('/cpu:0'):
        embeddings = tf.Variable(
            tf.random_uniform([vocabulary_size, embedding_size], -1.0, 1.0)
        )
        # Look up the vectors that correspond to the input word ids.
        embed = tf.nn.embedding_lookup(embeddings, train_inputs)
        nce_weights = tf.Variable(
            tf.truncated_normal([vocabulary_size, embedding_size],
                                stddev=1.0 / math.sqrt(embedding_size))
        )
        nce_biases = tf.Variable(tf.zeros([vocabulary_size]))

    # Noise-contrastive estimation loss, averaged over the batch.
    loss = tf.reduce_mean(tf.nn.nce_loss(
        weights=nce_weights,
        biases=nce_biases,
        labels=train_labels,
        inputs=embed,
        num_sampled=num_sampled,
        num_classes=vocabulary_size
    ))
    optimizer = tf.train.GradientDescentOptimizer(1.0).minimize(loss)

    # L2-normalize each embedding so that a dot product gives cosine similarity.
    # Newer TensorFlow 1.x releases spell this argument keepdims.
    norm = tf.sqrt(tf.reduce_sum(tf.square(embeddings), 1, keep_dims=True))
    normalized_embeddings = embeddings / norm
    valid_embeddings = tf.nn.embedding_lookup(normalized_embeddings, valid_dataset)
    similarity = tf.matmul(valid_embeddings, normalized_embeddings, transpose_b=True)

    init = tf.global_variables_initializer()

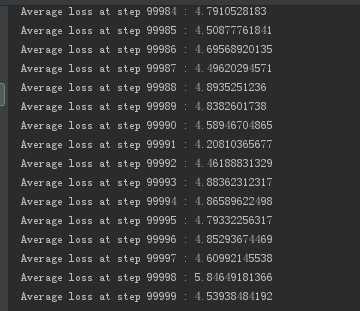

num_step = 100001

with tf.Session(graph=graph) as session:
    init.run()
    print('Initialized')
    average_loss = 0
    for step in range(num_step):
        batch_inputs, batch_labels = generate_batch(batch_size, num_skips, skip_window)
        feed_dict = {train_inputs: batch_inputs, train_labels: batch_labels}
        _, loss_val = session.run([optimizer, loss], feed_dict=feed_dict)
        average_loss += loss_val
        # Report the mean loss over the last 2000 steps.
        if step % 2000 == 0:
            if step > 0:
                average_loss /= 2000
            print('Average loss at step', step, ':', average_loss)
            average_loss = 0

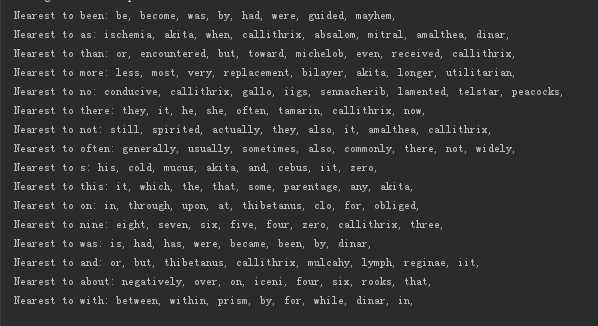

"把验证单词的相关单词与所有单词计算相关性,并输出前8个相似性高的单词"

if step%10000==0:

sim = similarity.eval()

for i in range(valid_size):

valid_word = reverse_dictionary[valid_examples[i]]

top_k = 8

nearest = (-sim[i,:]).argsort()[1:top_k+1]

log_str = "Nearest to %s:"%valid_word

for k in range(top_k):

close_word = reverse_dictionary[nearest[k]]

log_str= "%s %s,"%(log_str,close_word)

print(log_str)

final_embeddings = normalized_embeddings.eval()
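Once training has finished, the same nearest-neighbour lookup can be done outside the TensorFlow graph with plain NumPy. The following is a minimal sketch, not part of the original post: the helper name `nearest_words` and the toy 2-D embeddings are made up for illustration. With the real model you would pass `final_embeddings`, `dictionary` and `reverse_dictionary` instead.

```python
import numpy as np

def nearest_words(word, embeddings, dictionary, reverse_dictionary, top_k=8):
    """Return the top_k words with the highest cosine similarity to `word`."""
    # Normalize rows so that a dot product equals cosine similarity.
    unit = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    word_id = dictionary[word]
    sims = unit @ unit[word_id]
    order = np.argsort(-sims)  # most similar first
    return [reverse_dictionary[i] for i in order if i != word_id][:top_k]

# Toy vocabulary and embeddings, hypothetical values for illustration only.
toy_dictionary = {"king": 0, "queen": 1, "apple": 2}
toy_reverse = {i: w for w, i in toy_dictionary.items()}
toy_embeddings = np.array([[1.0, 0.1], [0.9, 0.2], [-0.2, 1.0]])

result = nearest_words("king", toy_embeddings, toy_dictionary, toy_reverse, top_k=2)
print(result)
```

In the toy data "queen" points in nearly the same direction as "king", so it ranks ahead of "apple".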

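Downloading the dataset from the URL inside the script can leave an incomplete file. Downloading it manually is recommended; the address is http://mattmahoney.net/dc/text8.zip

The results are as follows.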
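If you download the archive by hand, it is worth checking it before running the script. The sketch below is one possible check under stated assumptions: the helper name `check_archive` is hypothetical, and the expected size is simply the value passed to `maybe_download` in this post.

```python
import os
import zipfile

EXPECTED_BYTES = 31344016  # size passed to maybe_download in this post

def check_archive(path, expected_bytes=EXPECTED_BYTES):
    """Return (ok, message) describing whether `path` looks like a complete download."""
    if not os.path.exists(path):
        return False, "file not found"
    size = os.path.getsize(path)
    if size != expected_bytes:
        return False, "size mismatch: got %d bytes, expected %d" % (size, expected_bytes)
    if not zipfile.is_zipfile(path):
        return False, "not a valid zip archive"
    return True, "ok"

print(check_archive("text8.zip"))
```

A size mismatch usually means the download was interrupted, in which case the file should be deleted and fetched again.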


Original post: http://www.cnblogs.com/jzcbest1016/p/7865824.html