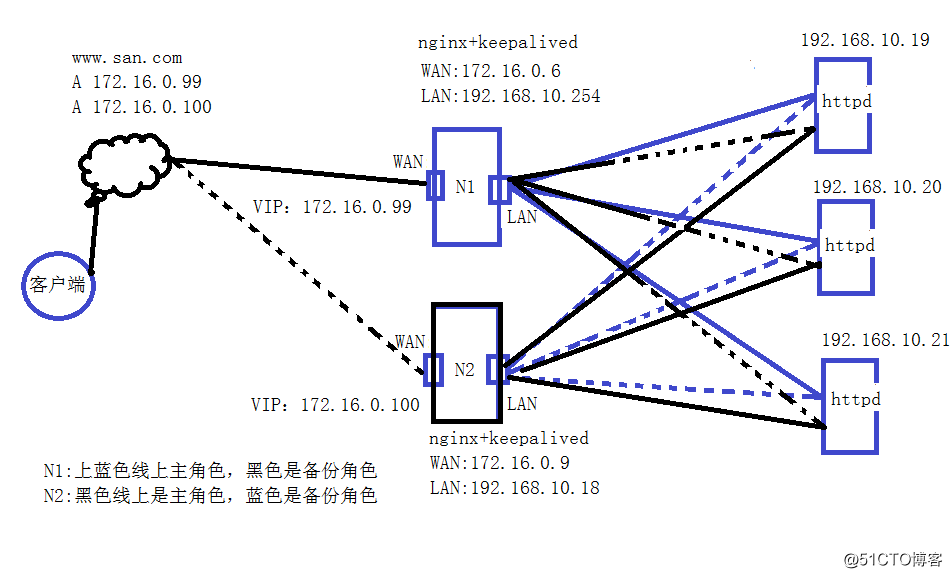

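I. Overview

Earlier articles in this series covered nginx as a web and HTTPS server, reverse proxying, and LVS load balancing in NAT and DR modes. nginx balances load at layer 7 and has to open sockets for every connection, so it remains a bottleneck even when its upstream module is used to emulate a layer-4 proxy. This article therefore builds a highly available load-balancing cluster with nginx and keepalived. Two keepalived nodes health-check and schedule the nginx reverse-proxy service in a dual-master (active-active) layout, and nginx balances requests across the backend httpd sites. If either scheduler fails (nginx or keepalived), the service is unaffected; if any single backend web server fails, the site stays reachable.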

Test environment:

N1:

CentOS 7 x64, nginx + keepalived, IPs: 172.16.0.6 and 192.168.10.254, VIP: 172.16.0.99

N2:

CentOS 7 x64, nginx + keepalived, IPs: 172.16.0.9 and 192.168.10.18, VIP: 172.16.0.100

httpd:

CentOS 7 x64, httpd, one virtual machine with three NICs, IPs: 192.168.10.19 - 192.168.10.21

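The architecture is as follows:

Notes:

In dual-master mode, clients reach www.san.com through public DNS, which resolves the name to the public VIPs provided by N1 and N2, here 172.16.0.99 on N1 and 172.16.0.100 on N2. Some requests reach the backend web servers through N1 and the rest through N2. If one nginx node fails, its VIP moves to the other scheduler. In master/backup mode, by contrast, only one scheduler is active at a time; the backup stays idle and takes over only when the master fails or its priority is lowered manually. This article tests dual-master mode only. DNS resolution is not tested, only the nginx + keepalived high-availability load-balancing cluster. To make the effect visible, the three httpd sites serve different content; in production all three backends would serve identical content.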

1. Backend web configuration

A single virtual machine with three NICs simulates three web servers.

Install httpd:

# yum install httpd -y

Configure the test sites:

[root@web ~]# cat /etc/httpd/conf.d/vhosts.conf

<VirtualHost 192.168.10.19:80>

ServerName 192.168.10.19

DocumentRoot "/data/web/vhost1"

<Directory "/data/web/vhost1">

Options FollowSymLinks

AllowOverride None

Require all granted

</Directory>

</VirtualHost>

<VirtualHost 192.168.10.20:80>

ServerName 192.168.10.20

DocumentRoot "/data/web/vhost2"

<Directory "/data/web/vhost2">

Options FollowSymLinks

AllowOverride None

Require all granted

</Directory>

</VirtualHost>

<VirtualHost 192.168.10.21:80>

ServerName 192.168.10.21

DocumentRoot "/data/web/vhost3"

<Directory "/data/web/vhost3">

Options FollowSymLinks

AllowOverride None

Require all granted

</Directory>

</VirtualHost>

[root@web ~]# mkdir -pv /data/web/vhost{1,2,3}

[root@web ~]# cat /data/web/vhost1/index.html

<h1>Vhost1</h1>

[root@web ~]# cat /data/web/vhost2/index.html

<h1>Vhost2</h1>

[root@web ~]# cat /data/web/vhost3/index.html

<h1>Vhost3</h1>

Start the web service:

[root@web ~]# systemctl start httpd

The httpd configuration is now complete.
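The listings above show the three index pages but not how they were created. A minimal sketch, assuming the directory layout from vhosts.conf; the function name make_vhost_pages and its root-directory argument are illustrative and not part of the original setup:

```shell
# Create vhost1..vhost3 under a root directory, each with a distinct index page.
# make_vhost_pages is a hypothetical helper; the article's root is /data/web.
make_vhost_pages() {
    local root=${1:-/data/web}
    local i
    for i in 1 2 3; do
        mkdir -p "$root/vhost$i"
        echo "<h1>Vhost$i</h1>" > "$root/vhost$i/index.html"
    done
}

# Usage on the web host (as root):
#   make_vhost_pages /data/web
```

Giving each page a different heading is what makes the round-robin scheduling visible in the curl tests later on.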

2. nginx load-balancing configuration

Install nginx on both N1 and N2:

# yum install nginx -y

Configure nginx load balancing as follows:

# egrep -v '(^$|^#)' /etc/nginx/nginx.conf

user nginx;

worker_processes auto;

error_log /var/log/nginx/error.log;

pid /run/nginx.pid;

include /usr/share/nginx/modules/*.conf;

events {

worker_connections 1024;

}

http {

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 65;

types_hash_max_size 2048;

include /etc/nginx/mime.types;

default_type application/octet-stream;

upstream websrvs { # backend nodes: 3 failed attempts within 1s mark a server unavailable for 1s

server 192.168.10.19:80 fail_timeout=1 max_fails=3;

server 192.168.10.20:80 fail_timeout=1 max_fails=3;

server 192.168.10.21:80 fail_timeout=1 max_fails=3;

}

include /etc/nginx/conf.d/*.conf;

server {

listen 80 default_server;

listen [::]:80 default_server;

server_name _;

root /usr/share/nginx/html;

# Load configuration files for the default server block.

include /etc/nginx/default.d/*.conf;

location / {

proxy_pass http://websrvs; # proxy to the upstream group

}

error_page 404 /404.html;

location = /40x.html {

}

error_page 500 502 503 504 /50x.html;

location = /50x.html {

}

}

}

Start the nginx service:

# systemctl start nginx

3. Install the keepalived high-availability service

Install keepalived on both N1 and N2:

# yum install keepalived -y

N1 keepalived configuration:

[root@n1 keepalived]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

root@localhost # notification recipient (a local postfix service is enough)

}

notification_email_from keepalived@localhost

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id node1 # identifier for this node

vrrp_mcast_group4 224.1.101.33 # VRRP multicast address

}

vrrp_script chk_down {

script "/etc/keepalived/check.sh" # lets an operator trigger a failover by hand

weight -10

interval 1

fall 1

rise 1

}

vrrp_script chk_ngx { # check nginx every 2s; after 3 consecutive failures lower the priority by 10

script "killall -0 nginx && exit 0 || exit 1"

weight -10

interval 2

fall 3

rise 3

}

vrrp_instance VI_1 { # VRRP instance 1

state MASTER # this node starts as master

priority 100 # priority 100

interface ens33 # network interface

virtual_router_id 33 # virtual router ID

advert_int 1

authentication { # simple password authentication

auth_type PASS

auth_pass RT3SKUI2

}

virtual_ipaddress { # VIP of instance 1: 172.16.0.99

172.16.0.99/24 dev ens33 label ens33:0

}

track_script { # health-check scripts

chk_down

chk_ngx

}

# scripts called on master/backup/fault state transitions

notify_master "/etc/keepalived/notify.sh master"

notify_backup "/etc/keepalived/notify.sh backup"

notify_fault "/etc/keepalived/notify.sh fault"

}

vrrp_instance VI_2 { # VRRP instance 2

state BACKUP # this node starts as backup

priority 96 # priority 96

interface ens33 # network interface

virtual_router_id 43 # virtual router ID

advert_int 1

authentication { # simple password authentication

auth_type PASS

auth_pass RT3SKUI3

}

virtual_ipaddress { # VIP of instance 2: 172.16.0.100

172.16.0.100/24 dev ens33 label ens33:1

}

track_script {

chk_down

chk_ngx

}

track_interface { # monitor these NICs as part of the health check

ens33

ens37

}

notify_master "/etc/keepalived/notify.sh master"

notify_backup "/etc/keepalived/notify.sh backup"

notify_fault "/etc/keepalived/notify.sh fault"

}

Configuration notes:

There are two VRRP instances: N1 is the master in instance VI_1 and the backup in instance VI_2.

N2 keepalived configuration:

[root@n2 keepalived]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

root@localhost

}

notification_email_from keepalived@localhost

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id node2

vrrp_mcast_group4 224.1.101.33 # VRRP multicast address

}

vrrp_script chk_down {

script "/etc/keepalived/check.sh"

weight -10

interval 1

fall 1

rise 1

}

vrrp_script chk_ngx {

script "killall -0 nginx && exit 0 || exit 1"

weight -10

interval 2

fall 3

rise 3

}

vrrp_instance VI_1 {

state BACKUP

priority 96

interface ens33

virtual_router_id 33

advert_int 1

authentication {

auth_type PASS

auth_pass RT3SKUI2

}

virtual_ipaddress {

172.16.0.99/24 dev ens33 label ens33:0

}

track_script {

chk_down

chk_ngx

}

notify_master "/etc/keepalived/notify.sh master"

notify_backup "/etc/keepalived/notify.sh backup"

notify_fault "/etc/keepalived/notify.sh fault"

}

vrrp_instance VI_2 {

state MASTER

priority 100

interface ens33

virtual_router_id 43

advert_int 1

authentication {

auth_type PASS

auth_pass RT3SKUI3

}

virtual_ipaddress {

172.16.0.100/24 dev ens33 label ens33:1

}

track_script {

chk_down

chk_ngx

}

track_interface {

ens33

ens37

}

notify_master "/etc/keepalived/notify.sh master"

notify_backup "/etc/keepalived/notify.sh backup"

notify_fault "/etc/keepalived/notify.sh fault"

}

Configuration notes:

N2 also has two instances: it is the backup in VI_1 and the master in VI_2, the exact opposite of N1.
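Why an nginx failure on a master moves its VIP follows from the numbers in the two configurations. As a rough sketch (the helper effective_priority is hypothetical, and it assumes keepalived's documented behaviour that a tracked script with a negative weight lowers the priority by that amount while the script is failing):

```shell
# effective_priority BASE WEIGHT FAILED
#   BASE   configured priority (100 for the master, 96 for the backup)
#   WEIGHT weight of each tracked script (-10 in this article)
#   FAILED number of tracked scripts currently failing
effective_priority() {
    local base=$1 weight=$2 failed=$3
    echo $(( base + weight * failed ))
}

# Master of VI_1 (N1) after chk_ngx fails, versus the healthy backup (N2).
master=$(effective_priority 100 -10 1)
backup=$(effective_priority 96 -10 0)
if [ "$master" -lt "$backup" ]; then
    echo "failover: backup ($backup) outranks master ($master)"
fi
```

With one script failing, the master drops to 90, below the backup's 96, so the backup wins the election and takes the VIP. For this to work, the gap between the two priorities (4 here) has to stay smaller than the weight (10).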

4. Related scripts

check.sh script:

# cat /etc/keepalived/check.sh

[ -f /etc/keepalived/down ] && exit 1 || exit 0
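check.sh fails whenever the file /etc/keepalived/down exists, so creating that file is the manual switch for demoting a node. The sketch below mirrors the same test with the flag path as a parameter, so it can be tried outside /etc/keepalived; the function name check_down_flag is hypothetical:

```shell
# Return 1 (check failed) if the flag file exists, 0 otherwise.
# This is the same logic as check.sh.
check_down_flag() {
    local flag=${1:-/etc/keepalived/down}
    if [ -f "$flag" ]; then
        return 1
    fi
    return 0
}

# Manual failover on a node (as root):
#   touch /etc/keepalived/down    # chk_down fails, priority drops by 10
#   rm -f /etc/keepalived/down    # chk_down passes again, priority is restored
```

Because chk_down uses interval 1 with fall 1 and rise 1, the change should take effect about a second after the file is created or removed.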

notify.sh script:

#!/bin/bash

#

contact='root@localhost'

notify() {

local mailsubject="$(hostname) to be $1, vip floating"

local mailbody="$(date +'%F %T'): vrrp transition, $(hostname) changed to be $1"

echo "$mailbody" | mail -s "$mailsubject" "$contact"

}

case $1 in

master)

systemctl start nginx

notify master

;;

backup)

systemctl start nginx

notify backup

;;

fault)

systemctl stop nginx

notify fault

;;

*)

echo "Usage: $(basename $0) {master|backup|fault}"

exit 1

;;

esac

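Note:

notify.sh depends on the postfix service and on the mail tool from the mailx package. If they are missing, install them with yum install postfix mailx -y.

The configuration is now complete; testing follows.

1. Start the keepalived service on N1

Check the keepalived service status: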

[root@n1 keepalived]# systemctl status keepalived

● keepalived.service - LVS and VRRP High Availability Monitor

... (output omitted) ...

Jan 21 15:42:36 n1.san.com Keepalived_vrrp[13234]: Sending gratuitous ARP on ens33 for 172.16.0.99

Jan 21 15:42:39 n1.san.com Keepalived_vrrp[13234]: VRRP_Instance(VI_2) Sending/queueing gratuitous ARPs on ens33 for 172.16.0.100

Jan 21 15:42:39 n1.san.com Keepalived_vrrp[13234]: Sending gratuitous ARP on ens33 for 172.16.0.100

Check the NIC status:

[root@n1 ~]# ifconfig

ens33: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.16.0.6 netmask 255.255.255.0 broadcast 172.16.0.255

inet6 fe80::96b9:e601:fd10:1888 prefixlen 64 scopeid 0x20<link>

inet6 fe80::618d:61c4:52d7:9619 prefixlen 64 scopeid 0x20<link>

ens33:0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.16.0.99 netmask 255.255.255.0 broadcast 0.0.0.0

ether 00:0c:29:8b:6e:09 txqueuelen 1000 (Ethernet)

ens33:1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.16.0.100 netmask 255.255.255.0 broadcast 0.0.0.0

ether 00:0c:29:8b:6e:09 txqueuelen 1000 (Ethernet)

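With N2 not yet started (its keepalived service unavailable), N1 becomes master for both VRRP instances and holds both VIPs. Requests for www.san.com resolve to addresses that are both on N1, so the service is unaffected.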

2. Start the keepalived service on N2

# systemctl start keepalived

N1 now holds VIP 172.16.0.99

and N2 holds VIP 172.16.0.100.

Check the status on N1:

[root@n1 ~]# systemctl status keepalived

● keepalived.service - LVS and VRRP High Availability Monitor

... (output omitted) ...

Jan 21 15:42:39 n1.san.com Keepalived_vrrp[13234]: Sending gratuitous ARP on ens33 for 172.16.0.100

Jan 21 15:59:40 n1.san.com Keepalived_vrrp[13234]: VRRP_Instance(VI_2) Received advert with higher priority 100, ours 96

Jan 21 15:59:40 n1.san.com Keepalived_vrrp[13234]: VRRP_Instance(VI_2) Entering BACKUP STATE

Jan 21 15:59:40 n1.san.com Keepalived_vrrp[13234]: VRRP_Instance(VI_2) removing protocol VIPs.

Jan 21 15:59:40 n1.san.com Keepalived_vrrp[13234]: Opening script file /etc/keepalived/notify.sh

Check the NICs:

[root@n1 ~]# ifconfig

ens33:0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.16.0.99 netmask 255.255.255.0 broadcast 0.0.0.0

ether 00:0c:29:8b:6e:09 txqueuelen 1000 (Ethernet)

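Only VIP 172.16.0.99 remains.

Note: N1 is the master for 172.16.0.99 and the backup for 172.16.0.100. While N2 was down, both VIPs sat on N1. Once N2 started, it preempted 172.16.0.100, and N1 dropped to BACKUP for that instance.

Check the keepalived service status on N2: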

[root@n2 keepalived]# systemctl status keepalived

● keepalived.service - LVS and VRRP High Availability Monitor

... (output omitted) ...

Jan 21 15:59:46 n2.san.com Keepalived_vrrp[12877]: Sending gratuitous ARP on ens33 for 172.16.0.100

Jan 21 15:59:46 n2.san.com Keepalived_vrrp[12877]: VRRP_Instance(VI_2) Sending/queueing gratuitous ARPs on ens33 for 172.16.0.100

Jan 21 15:59:46 n2.san.com Keepalived_vrrp[12877]: Sending gratuitous ARP on ens33 for 172.16.0.100

... (output omitted) ...

Check the NIC status:

[root@n2 keepalived]# ifconfig

ens33:1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.16.0.100 netmask 255.255.255.0 broadcast 0.0.0.0

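ether 00:0c:29:03:2e:91 txqueuelen 1000 (Ethernet)

Note: N2 started later, but it is configured as the master for VI_2 with the higher priority, so it preempts VIP 172.16.0.100 as soon as it comes up.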

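3. Simulate an nginx outage

Stop the nginx service on N1 (the loop kills nginx once per second for 20 seconds so that it stays down):

[root@n1 ~]# for i in {1..20};do sleep 1;killall nginx;done

Open another terminal on N1 and check the status of keepalived and nginx: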

[root@n1 ~]# systemctl status keepalived

● keepalived.service - LVS and VRRP High Availability Monitor

... (output omitted) ...

Jan 21 16:44:22 n1.magedu.com Keepalived_vrrp[20354]: /usr/bin/killall -0 nginx && exit 0 || exit 1 exited with status 1

You have new mail in /var/spool/mail/root

[root@n1 ~]# systemctl status nginx

● nginx.service - The nginx HTTP and reverse proxy server

Loaded: loaded (/usr/lib/systemd/system/nginx.service; enabled; vendor preset: disabled)

Active: inactive (dead) since Sun 2018-01-21 16:42:48 CST; 1min 43s ago

... (output omitted) ...

Check the keepalived service status and the VIPs on N2:

[root@n2 keepalived]# systemctl status keepalived

● keepalived.service - LVS and VRRP High Availability Monitor

Jan 21 16:42:49 n2.san.com Keepalived_vrrp[17567]: Sending gratuitous ARP on ens33 for 172.16.0.99

Jan 21 16:42:49 n2.san.com Keepalived_vrrp[17567]: Opening script file /etc/keepalived/notify.sh

Jan 21 16:42:54 n2.san.com Keepalived_vrrp[17567]: Sending gratuitous ARP on ens33 for 172.16.0.99

Jan 21 16:42:54 n2.san.com Keepalived_vrrp[17567]: VRRP_Instance(VI_1) Sending/queueing gratuitous ARPs on ens33 for 172.16.0.99

Jan 21 16:42:54 n2.san.com Keepalived_vrrp[17567]: Sending gratuitous ARP on ens33 for 172.16.0.99

Check the VIPs on N2:

[root@n2 keepalived]# ifconfig

ens33: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.16.0.9 netmask 255.255.255.0 broadcast 172.16.0.255

... (output omitted) ...

ens33:0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.16.0.99 netmask 255.255.255.0 broadcast 0.0.0.0

ether 00:0c:29:03:2e:91 txqueuelen 1000 (Ethernet)

ens33:1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.16.0.100 netmask 255.255.255.0 broadcast 0.0.0.0

ether 00:0c:29:03:2e:91 txqueuelen 1000 (Ethernet)

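Note: after nginx on N1 went down, N2 took over VIP 172.16.0.99, so N2 now holds both public VIPs, 172.16.0.100 and 172.16.0.99. Client access to www.san.com is unaffected.

In this state, nginx on N1 has to be repaired and started by hand before keepalived on N1 reclaims VIP 172.16.0.99 and the dual-master cluster is restored.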
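To confirm that N1 has reclaimed its VIP after nginx is started again, the address list can be checked for 172.16.0.99. A small sketch, assuming the iproute2 ip command is available on the node; has_vip is a hypothetical helper that reads the output of ip -o -4 addr show on standard input:

```shell
# has_vip ADDRESS: succeed if ADDRESS appears as an IPv4 address in the
# `ip -o -4 addr show` output supplied on stdin. The trailing "/" keeps
# 172.16.0.9 from matching 172.16.0.99.
has_vip() {
    grep -qF "inet $1/"
}

# Usage on N1 after `systemctl start nginx`:
#   ip -o -4 addr show dev ens33 | has_vip 172.16.0.99 && echo "VIP is back on N1"
```

Since chk_ngx is configured with interval 2 and rise 3, it needs three consecutive successful checks, so expect a delay of roughly six seconds before the priority is restored and the VIP moves back.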

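4. Simulate a backend httpd failure

When an httpd backend fails, nginx removes the faulty node from rotation automatically.

Disable the NIC that carries 192.168.10.21:

[root@web ~]# ifconfig ens39 down

Test access: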

[root@publicsrvs ~]# curl http://172.16.0.100

<h1>Vhost1</h1>

[root@publicsrvs ~]# curl http://172.16.0.100

<h1>Vhost2</h1>

[root@publicsrvs ~]# curl http://172.16.0.100

<h1>Vhost1</h1>

[root@publicsrvs ~]# curl http://172.16.0.99

<h1>Vhost1</h1>

[root@publicsrvs ~]# curl http://172.16.0.99

<h1>Vhost2</h1>

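[root@publicsrvs ~]# curl http://172.16.0.99

<h1>Vhost1</h1>

After the web service on 192.168.10.21 becomes unreachable, the cluster as a whole is still accessible. Note that in a real deployment clients would use a domain name such as www.san.com, which resolves to the VIPs and then reaches the load-balanced backends.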
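Rather than reading the curl output line by line, the responses can be tallied per backend. A minimal sketch; tally_responses is a hypothetical helper and the request count of 6 in the usage line is arbitrary:

```shell
# Count identical response bodies read from stdin and print
# "COUNT BODY", one line per distinct body.
tally_responses() {
    sort | uniq -c | sed 's/^ *//'
}

# Usage from the client host:
#   for i in 1 2 3 4 5 6; do curl -s http://172.16.0.100; done | tally_responses
```

With 192.168.10.21 down, only the Vhost1 and Vhost2 pages should appear in the tally.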

This walkthrough has many steps, so errors or omissions are possible; corrections in the comments are welcome. Thank you.

Original article: http://blog.51cto.com/dyc2005/2063399