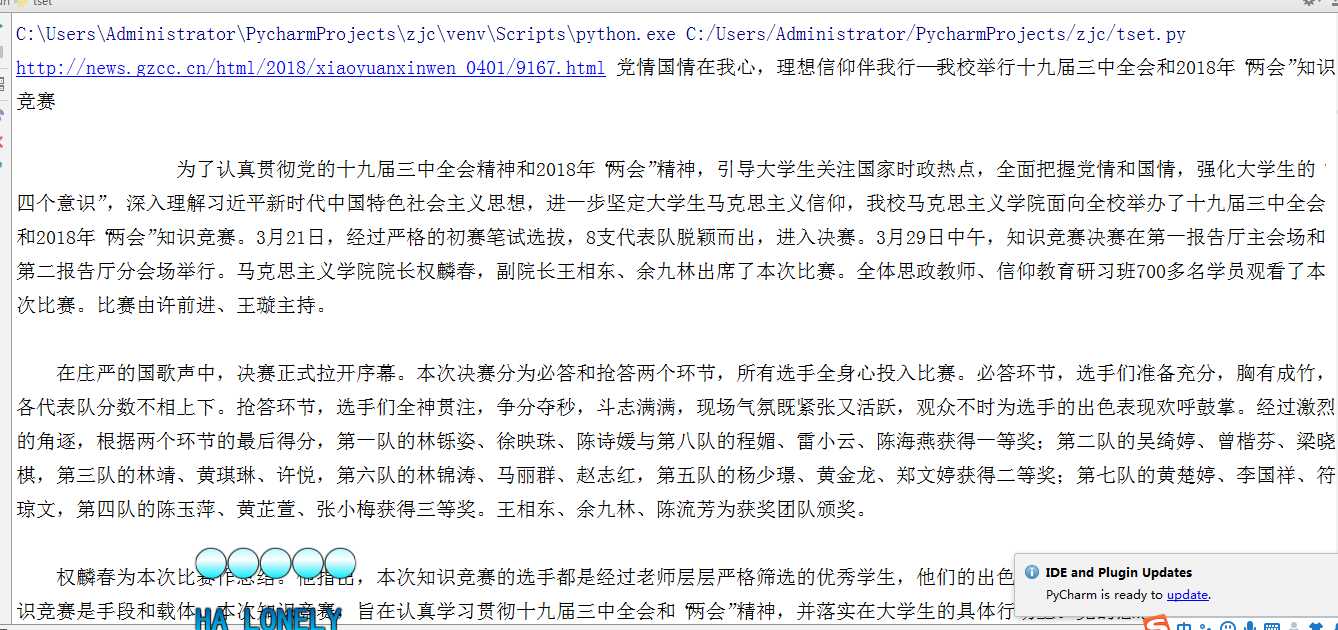

1. Use the `requests` and `BeautifulSoup` libraries to scrape the title, link, and body text of each news item on the campus news front page.

```python
import requests
from bs4 import BeautifulSoup

url = 'http://news.gzcc.cn/html/xiaoyuanxinwen/'
res = requests.get(url)
res.encoding = 'utf-8'
soup = BeautifulSoup(res.text, 'html.parser')

for news in soup.select('li'):
    # Only <li> elements that contain a .news-list-info block are news items
    if len(news.select('.news-list-info')) > 0:
        title = news.select('.news-list-title')[0].text  # title
        link = news.select('a')[0].attrs['href']  # link to the article page

        # Fetch the article page and extract its body text
        resd = requests.get(link)
        resd.encoding = 'utf-8'
        soupd = BeautifulSoup(resd.text, 'html.parser')
        content = soupd.select('#content')[0].text  # body text

        print(link, title, content)
```
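The loop above passes each article's href straight to `requests.get`. That only works if the href is an absolute URL. If a list page ever emits relative hrefs (this is an assumption, not something confirmed for this site), `requests` rejects them. The following minimal sketch resolves them against the list page URL with the standard library's `urljoin`. The helper name `absolute_link` and the sample hrefs are hypothetical.

```python
from urllib.parse import urljoin

LIST_URL = 'http://news.gzcc.cn/html/xiaoyuanxinwen/'


def absolute_link(href, base=LIST_URL):
    """Resolve an href relative to the list page; absolute URLs come back unchanged."""
    return urljoin(base, href)


# Hypothetical hrefs, for illustration only
for href in ['http://news.gzcc.cn/html/2018/a.html', '../2018/a.html', '/html/b.html']:
    print(absolute_link(href))
```

Inside the scraping loop, `requests.get(absolute_link(href))` then works whether the page uses absolute or relative links.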

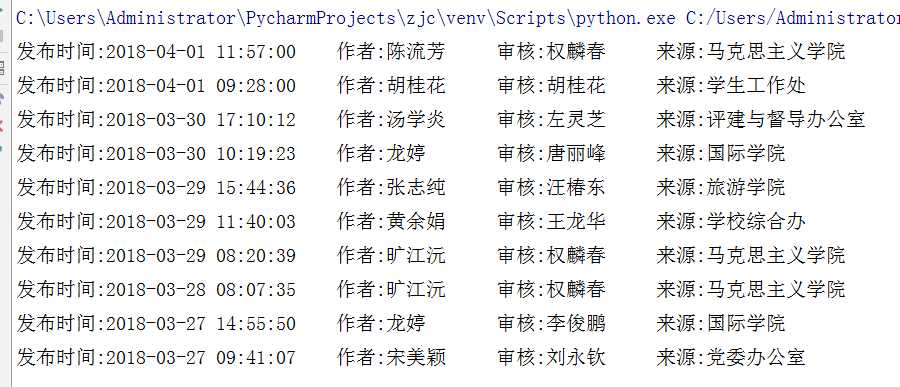

2. Parse the metadata string to get each article's publish time, author, source, photographer, and similar information.

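```python
import requests
from bs4 import BeautifulSoup

url = 'http://news.gzcc.cn/html/xiaoyuanxinwen/'
res = requests.get(url)
res.encoding = 'utf-8'
soup = BeautifulSoup(res.text, 'html.parser')


def field_after(info, label):
    """Return the whitespace-delimited token right after `label` in `info`, or '' if absent.

    Slicing past the label avoids str.lstrip, which strips a *set* of characters
    and could eat the first character of a value such as an author's name.
    """
    start = info.find(label)
    if start == -1:
        return ''
    rest = info[start + len(label):].split()
    return rest[0] if rest else ''


for news in soup.select('li'):
    if len(news.select('.news-list-info')) > 0:
        link = news.select('a')[0].attrs['href']  # link to the article page
        resd = requests.get(link)
        resd.encoding = 'utf-8'
        soupd = BeautifulSoup(resd.text, 'html.parser')

        # Metadata line containing the 发布时间/作者/审核/来源 labels
        info = soupd.select('.show-info')[0].text
        # Publish time: the 19 characters after the label (YYYY-MM-DD HH:MM:SS)
        dt = info[info.find('发布时间:') + len('发布时间:'):][:19]
        author = field_after(info, '作者:')    # author
        reviewer = field_after(info, '审核:')  # reviewer
        source = field_after(info, '来源:')    # source
        print('发布时间:' + dt + '\t作者:' + author + '\t审核:' + reviewer + '\t来源:' + source)
```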
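The string slicing above depends on the exact labels and half-width colons. The task also asks for the photographer (摄影), which the code does not extract. The following minimal sketch uses regular expressions to pull every field in one pass. Several parts of it are assumptions: the English keys, the `parse_show_info` name, the presence of a `摄影` label on the page, and the possibility of full-width colons (`：`). The sample string is made up.

```python
import re

# Hypothetical English keys mapped to the labels printed on the page.
# '摄影' (photographer) comes from the task statement; whether the page
# actually shows it is an assumption.
LABELS = {
    'author': '作者',
    'reviewer': '审核',
    'source': '来源',
    'photographer': '摄影',
}
COLON = '[:：]'  # accept a half-width or full-width colon (assumption)


def parse_show_info(info):
    """Extract metadata fields from a .show-info string; missing fields are omitted."""
    fields = {}
    m = re.search('发布时间' + COLON + r'\s*(\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2})', info)
    if m:
        fields['publish_time'] = m.group(1)
    for key, label in LABELS.items():
        # The value is the run of non-whitespace characters right after the colon
        m = re.search(label + COLON + r'(\S*)', info)
        if m:
            fields[key] = m.group(1)
    return fields


# Made-up sample string, not copied from the site
sample = '发布时间:2018-04-01 11:57:00 作者:张三 审核:李四 来源:宣传部 点击:次'
print(parse_show_info(sample))
```

A missing label is simply left out of the dict. The `find`/`split` approach behaves differently: when `find` returns -1, the slice starts at the last character, so it returns a stray character or raises `IndexError`.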

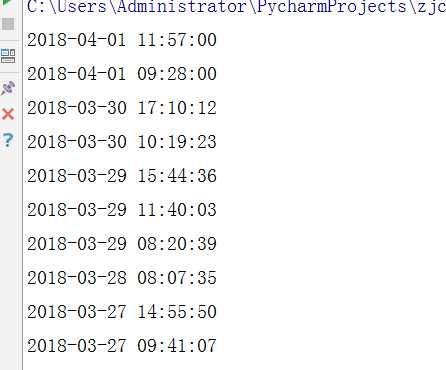

3. Convert the publish time from `str` to `datetime`.

```python
import requests
from bs4 import BeautifulSoup
from datetime import datetime

url = 'http://news.gzcc.cn/html/xiaoyuanxinwen/'
res = requests.get(url)
res.encoding = 'utf-8'
soup = BeautifulSoup(res.text, 'html.parser')

for news in soup.select('li'):
    if len(news.select('.news-list-info')) > 0:
        link = news.select('a')[0].attrs['href']  # link to the article page
        resd = requests.get(link)
        resd.encoding = 'utf-8'
        soupd = BeautifulSoup(resd.text, 'html.parser')

        info = soupd.select('.show-info')[0].text
        # Publish time as a string: the 19 characters after the label
        dt = info[info.find('发布时间:') + len('发布时间:'):][:19]
        # Parse the string into a datetime object
        dati = datetime.strptime(dt, '%Y-%m-%d %H:%M:%S')
        print(dati)
```
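After conversion, publish times can be sorted, compared, and subtracted directly. Subtraction in particular is not possible with plain strings. The following sketch uses hypothetical timestamps rather than real data from the site. It also shows filtering by a cutoff date, which is an arbitrary example value.

```python
from datetime import datetime

FMT = '%Y-%m-%d %H:%M:%S'  # same format string as the strptime call above

# Hypothetical publish-time strings, not real data from the site
raw_times = ['2018-04-01 11:57:00', '2018-03-28 09:05:30', '2018-03-30 16:20:00']
times = [datetime.strptime(s, FMT) for s in raw_times]

ordered = sorted(times)                  # chronological order, oldest first
newest, oldest = ordered[-1], ordered[0]
print(newest)                            # 2018-04-01 11:57:00
print(newest - oldest)                   # a timedelta: 4 days, 2:51:30

# Keep only articles published on or after a cutoff date
cutoff = datetime(2018, 3, 30)
recent = [t for t in times if t >= cutoff]
print([t.strftime('%m-%d') for t in recent])
```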