标签:odi with open col turn dict 进入 a标签 信息 one

1. 总述

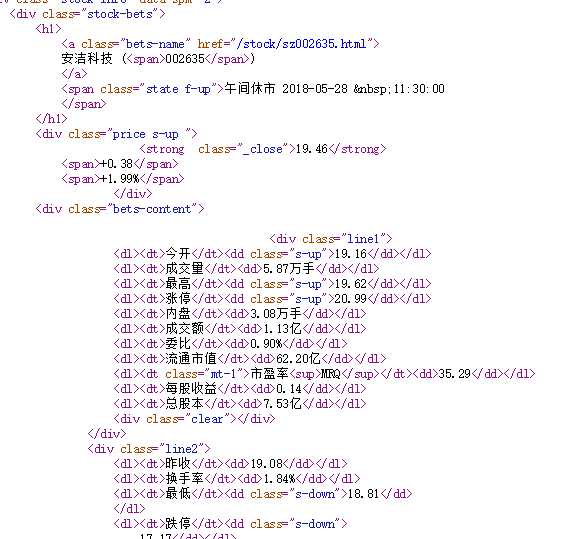

慕课中这段代码的功能是首先从东方财富网上获得所有股票的代码,再利用我们所获得的股票代码输入url中进入百度股票页面爬取该只股票的详细信息。

1 import requests 2 from bs4 import BeautifulSoup 3 import traceback 4 import re 5 6 7 def getHTMLText(url): 8 try: 9 r = requests.get(url) 10 r.raise_for_status() 11 r.encoding = r.apparent_encoding 12 return r.text 13 except: 14 return "" 15 16 17 def getStockList(lst, stockURL): 18 html = getHTMLText(stockURL) 19 soup = BeautifulSoup(html, ‘html.parser‘) 20 a = soup.find_all(‘a‘) 21 for i in a: 22 try: 23 href = i.attrs[‘href‘] 24 lst.append(re.findall(r‘[s][hz]\d{6}‘, href)[0]) 25 except: 26 continue 27 28 29 def getStockInfo(lst, stockURL, fpath): 30 for stock in lst: 31 url = stockURL + stock + ".html" 32 html = getHTMLText(url) 33 try: 34 if html == "": 35 continue 36 infoDict = {} 37 soup = BeautifulSoup(html, ‘html.parser‘) 38 stockInfo = soup.find(‘div‘, attrs={‘class‘: ‘stock-bets‘}) 39 40 name = stockInfo.find_all(attrs={‘class‘: ‘bets-name‘})[0] 41 infoDict.update({‘股票名称‘: name.text.split()[0]}) 42 43 keyList = stockInfo.find_all(‘dt‘) 44 valueList = stockInfo.find_all(‘dd‘) 45 for i in range(len(keyList)): 46 key = keyList[i].text 47 val = valueList[i].text 48 infoDict[key] = val 49 50 with open(fpath, ‘a‘, encoding=‘utf-8‘) as f: 51 f.write(str(infoDict) + ‘\n‘) 52 except: 53 traceback.print_exc() 54 continue 55 56 57 def main(): 58 stock_list_url = ‘http://quote.eastmoney.com/stocklist.html‘ 59 stock_info_url = ‘http://gupiao.baidu.com/stock/‘ 60 output_file = ‘D:/BaiduStockInfo.txt‘ 61 slist = [] 62 getStockList(slist, stock_list_url) 63 getStockInfo(slist, stock_info_url, output_file) 64 65 66 main()

2. 具体分析

2.1 获取源码

这段代码的功能就是使用requests库直接获得网页的所有源代码。

1 def getHTMLText(url): 2 try: 3 r = requests.get(url) 4 r.raise_for_status() 5 r.encoding = r.apparent_encoding 6 return r.text 7 except: 8 return ""

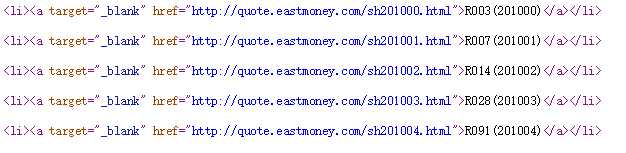

2.2 获取股票代码

在源码中可以看到每支股票都对应着一个6位数字的代码,这部分要做的工作就是获取这代码编号。这编号在a标签中,所有首先用BeautifulSoup选出所有的a标签,接下来我们在用attrs[href]来获取a标签的href属性值,最后用正则表达式筛选出我们想要的代码值。

1 def getStockList(lst, stockURL): 2 html = getHTMLText(stockURL) 3 soup = BeautifulSoup(html, ‘html.parser‘) 4 a = soup.find_all(‘a‘) 5 for i in a: 6 try: 7 href = i.attrs[‘href‘] 8 lst.append(re.findall(r‘[s][hz]\d{6}‘, href)[0]) #findall返回的是一个列表,所有这里[0]的作用就是append一个字符串,而不是一个列表进去 9 except: 10 continue

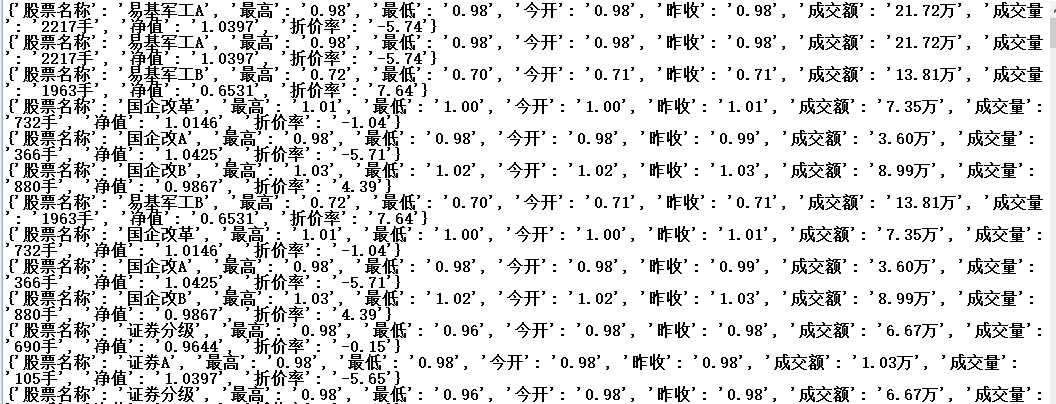

2.3 获取股票信息

同样的原理,最后用字典来保存。

1 def getStockInfo(lst, stockURL, fpath): 2 for stock in lst: 3 url = stockURL + stock + ".html" 4 html = getHTMLText(url) 5 try: 6 if html == "": 7 continue 8 infoDict = {} 9 soup = BeautifulSoup(html, ‘html.parser‘) 10 stockInfo = soup.find(‘div‘, attrs={‘class‘: ‘stock-bets‘}) 11 12 name = stockInfo.find_all(attrs={‘class‘: ‘bets-name‘})[0] 13 infoDict.update({‘股票名称‘: name.text.split()[0]}) #text是requests的方法 14 15 keyList = stockInfo.find_all(‘dt‘) 16 valueList = stockInfo.find_all(‘dd‘) 17 for i in range(len(keyList)): 18 key = keyList[i].text 19 val = valueList[i].text 20 infoDict[key] = val 21 22 with open(fpath, ‘a‘, encoding=‘utf-8‘) as f: 23 f.write(str(infoDict) + ‘\n‘) 24 except: 25 traceback.print_exc() 26 continue

标签:odi with open col turn dict 进入 a标签 信息 one

原文地址:https://www.cnblogs.com/zyb993963526/p/9094862.html