Tags: get ++ ruby alt ack idf ref exec port

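Work has been busy lately, so I have not had time to update the blog. Today's post covers ELK + Redis + Filebeat, a setup suited mainly to log collection at small and medium-sized companies. Larger companies can refer to the earlier post on pairing Kafka + ZooKeeper with ELK. Let's get started.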

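To keep a sudden surge in log volume from crashing the server, ELK needs a buffer in front of it. Here we add Redis: log data is written to Redis first and then read from Redis into Elasticsearch.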

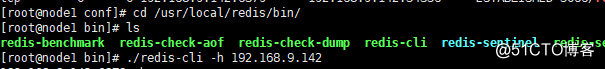

Installing Redis:

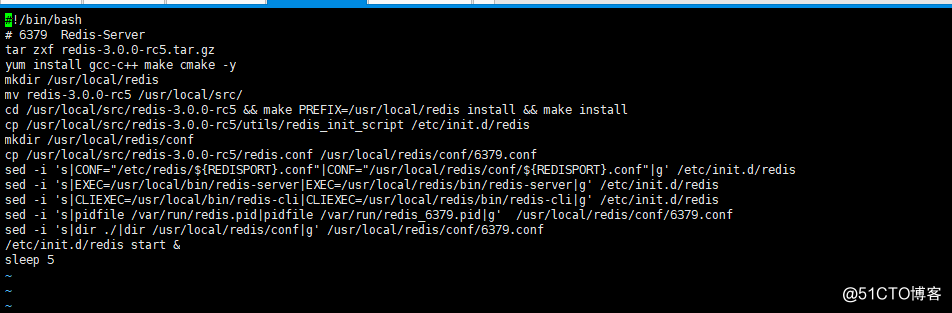

    #!/bin/bash
    # Install Redis 3.0.0-rc5 under /usr/local/redis, listening on port 6379

    # Unpack the source and install the build tools
    tar zxf redis-3.0.0-rc5.tar.gz
    yum install gcc-c++ make cmake -y
    mkdir -p /usr/local/redis
    mv redis-3.0.0-rc5 /usr/local/src/

    # Build, then install into the custom prefix (the second install also copies to /usr/local/bin)
    cd /usr/local/src/redis-3.0.0-rc5 && make PREFIX=/usr/local/redis install && make install

    # Install the init script and a per-port configuration file
    cp /usr/local/src/redis-3.0.0-rc5/utils/redis_init_script /etc/init.d/redis
    mkdir -p /usr/local/redis/conf
    cp /usr/local/src/redis-3.0.0-rc5/redis.conf /usr/local/redis/conf/6379.conf

    # Point the init script at the custom prefix
    sed -i 's|CONF="/etc/redis/${REDISPORT}.conf"|CONF="/usr/local/redis/conf/${REDISPORT}.conf"|g' /etc/init.d/redis
    sed -i 's|EXEC=/usr/local/bin/redis-server|EXEC=/usr/local/redis/bin/redis-server|g' /etc/init.d/redis
    sed -i 's|CLIEXEC=/usr/local/bin/redis-cli|CLIEXEC=/usr/local/redis/bin/redis-cli|g' /etc/init.d/redis

    # Adjust the pid file and the data directory in the Redis configuration
    sed -i 's|pidfile /var/run/redis.pid|pidfile /var/run/redis_6379.pid|g' /usr/local/redis/conf/6379.conf
    sed -i 's|dir ./|dir /usr/local/redis/conf|g' /usr/local/redis/conf/6379.conf

    # Start Redis in the background and give it a few seconds to come up
    /etc/init.d/redis start &
    sleep 5
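Before running the sed edits against the real /etc/init.d/redis, the substitutions can be tried on a scratch file. This is a minimal sketch, assuming the stock init script contains the three default lines that the patterns above target; the temporary file and its contents are illustrative only.

```shell
#!/bin/bash
# Scratch copy holding the default lines the sed patterns expect (assumed contents).
tmp=$(mktemp)
cat > "$tmp" <<'EOF'
EXEC=/usr/local/bin/redis-server
CLIEXEC=/usr/local/bin/redis-cli
CONF="/etc/redis/${REDISPORT}.conf"
EOF

# Same substitutions as the install script, applied to the scratch copy.
sed -i 's|CONF="/etc/redis/${REDISPORT}.conf"|CONF="/usr/local/redis/conf/${REDISPORT}.conf"|g' "$tmp"
sed -i 's|EXEC=/usr/local/bin/redis-server|EXEC=/usr/local/redis/bin/redis-server|g' "$tmp"
sed -i 's|CLIEXEC=/usr/local/bin/redis-cli|CLIEXEC=/usr/local/redis/bin/redis-cli|g' "$tmp"

# Print the patched lines so they can be inspected, then clean up.
patched=$(cat "$tmp")
echo "$patched"
rm -f "$tmp"
```

If all three printed lines point at /usr/local/redis, the patterns matched as intended.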

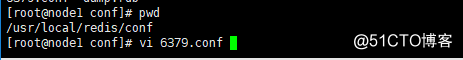

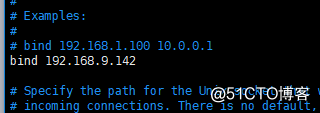

Then adjust a few settings:

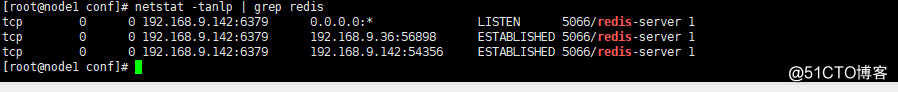

Then restart the service:

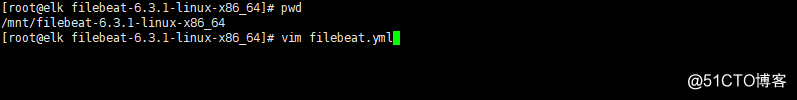

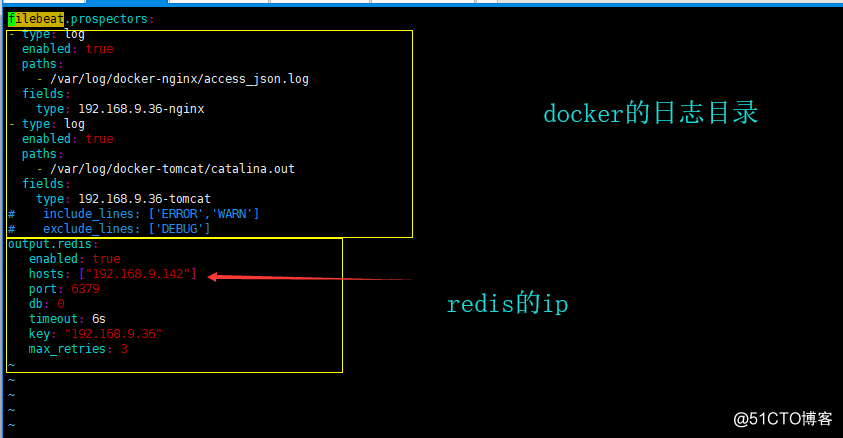

Next, write the Filebeat configuration file:

    filebeat.prospectors:
    - type: log
      enabled: true
      paths:
        - /var/log/docker-nginx/access_json.log
      fields:
        type: 192.168.9.36-nginx
    - type: log
      enabled: true
      paths:
        - /var/log/docker-tomcat/catalina.out
      fields:
        type: 192.168.9.36-tomcat
      # include_lines: ['ERROR','WARN']
      # exclude_lines: ['DEBUG']
    output.redis:
      enabled: true
      hosts: ["192.168.9.142"]
      port: 6379
      db: 0
      timeout: 6s
      key: "192.168.9.36"
      max_retries: 3
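Before starting Filebeat, it can help to confirm that the log files named under `paths` exist and that the configuration parses. This is a minimal sketch, assuming it is run from the Filebeat directory. The helper names `missing_logs` and `check_config` and the `FILEBEAT` override variable are hypothetical; `filebeat test config` is a standard subcommand in Filebeat 6.x.

```shell
# Path to the Filebeat binary; override if it lives elsewhere (hypothetical variable).
FILEBEAT=${FILEBEAT:-./filebeat}

# Print every given path that is not a readable regular file.
missing_logs() {
    for f in "$@"; do
        [ -f "$f" ] && [ -r "$f" ] || echo "$f"
    done
}

# Ask Filebeat to parse the configuration without shipping any events.
check_config() {
    "$FILEBEAT" test config -c "${1:-filebeat.yml}"
}
```

For example, `missing_logs /var/log/docker-nginx/access_json.log /var/log/docker-tomcat/catalina.out` prints nothing when both files are in place, and `check_config filebeat.yml` reports whether the file parses.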

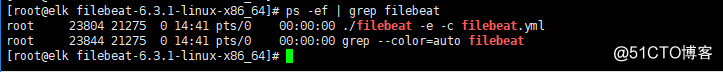

Then start it:

nohup ./filebeat -e -c filebeat.yml > /dev/null &

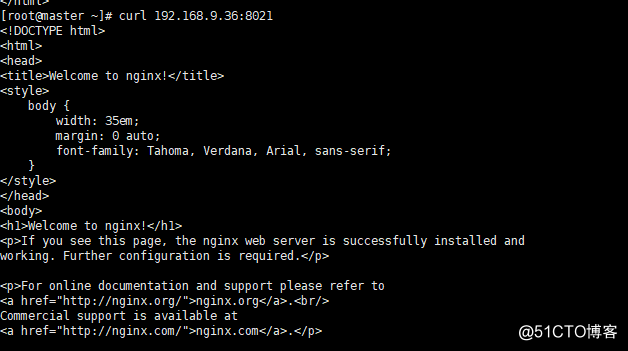

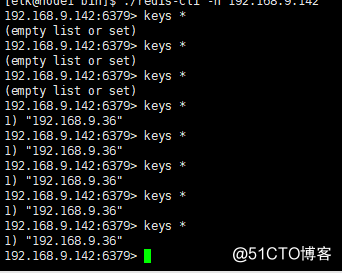

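Note: to check whether data is actually reaching Redis, do not start Logstash yet, because it would immediately consume the entries from Redis. With Logstash stopped, send a few requests to the container to generate some log lines.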

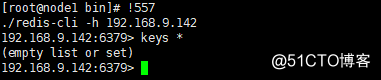

Check in Redis:
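The check can be done with `redis-cli`. This is a minimal sketch, assuming Filebeat's Redis output is left at its default `list` data type, so events accumulate in a list named after the `key` setting. The helper names and the `REDIS_CLI` variable are hypothetical, and the binary path follows the install prefix used earlier.

```shell
# redis-cli location under the install prefix used earlier; override as needed.
REDIS_CLI=${REDIS_CLI:-/usr/local/redis/bin/redis-cli}
REDIS_HOST=192.168.9.142
REDIS_PORT=6379
REDIS_KEY=192.168.9.36   # must match `key` in output.redis

# Number of events currently waiting in the list.
queue_len() {
    "$REDIS_CLI" -h "$REDIS_HOST" -p "$REDIS_PORT" llen "$REDIS_KEY"
}

# Show the first element of the list without removing it.
peek_first() {
    "$REDIS_CLI" -h "$REDIS_HOST" -p "$REDIS_PORT" lrange "$REDIS_KEY" 0 0
}
```

While Logstash is stopped, `queue_len` should grow as the container is accessed, and `peek_first` should print one JSON-encoded event.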

If Logstash has already been started, the data will have been taken out of Redis:

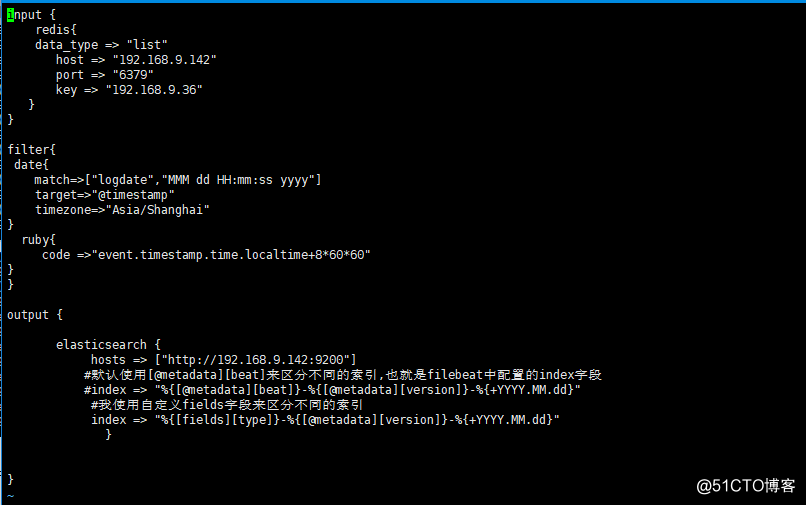

    input {
      redis {
        data_type => "list"
        host => "192.168.9.142"
        port => 6379
        key => "192.168.9.36"
      }
    }

    filter {
      date {
        match => ["logdate", "MMM dd HH:mm:ss yyyy"]
        target => "@timestamp"
        timezone => "Asia/Shanghai"
      }
      ruby {
        # Note: the result of this expression is not written back to the event
        code => "event.timestamp.time.localtime+8*60*60"
      }
    }

    output {
      elasticsearch {
        hosts => ["http://192.168.9.142:9200"]
        # By default [@metadata][beat] distinguishes the indices, i.e. the index setting configured in Filebeat
        #index => "%{[@metadata][beat]}-%{[@metadata][version]}-%{+YYYY.MM.dd}"
        # Here the custom fields entry is used to distinguish the indices instead
        index => "%{[fields][type]}-%{[@metadata][version]}-%{+YYYY.MM.dd}"
      }
    }
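The pipeline file can be syntax-checked before Logstash starts consuming from Redis. This is a minimal sketch, assuming the configuration above is saved as `redis-to-es.conf` (a hypothetical filename, since the original does not name the file) and that the commands are run from the Logstash install directory. `--config.test_and_exit` is a standard Logstash flag; the `LOGSTASH` and `PIPELINE_CONF` variables and the function names are illustrative.

```shell
# Logstash launcher and pipeline file; both values are assumptions, override as needed.
LOGSTASH=${LOGSTASH:-bin/logstash}
PIPELINE_CONF=${PIPELINE_CONF:-redis-to-es.conf}

# Parse the pipeline and exit without processing any events.
check_pipeline() {
    "$LOGSTASH" -f "$PIPELINE_CONF" --config.test_and_exit
}

# Start Logstash with the pipeline; from here on it drains the Redis list.
run_pipeline() {
    "$LOGSTASH" -f "$PIPELINE_CONF"
}
```

Running `check_pipeline` first keeps a typo in the file from surfacing only after events have started to queue up in Redis.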

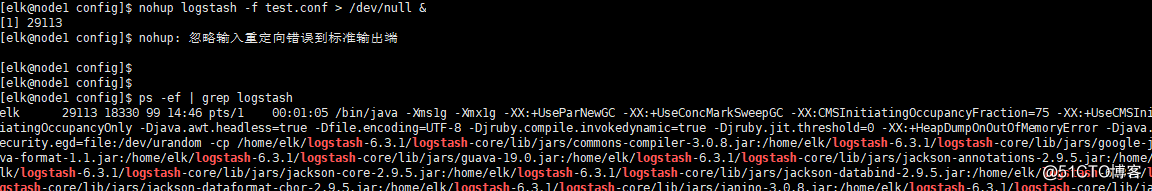

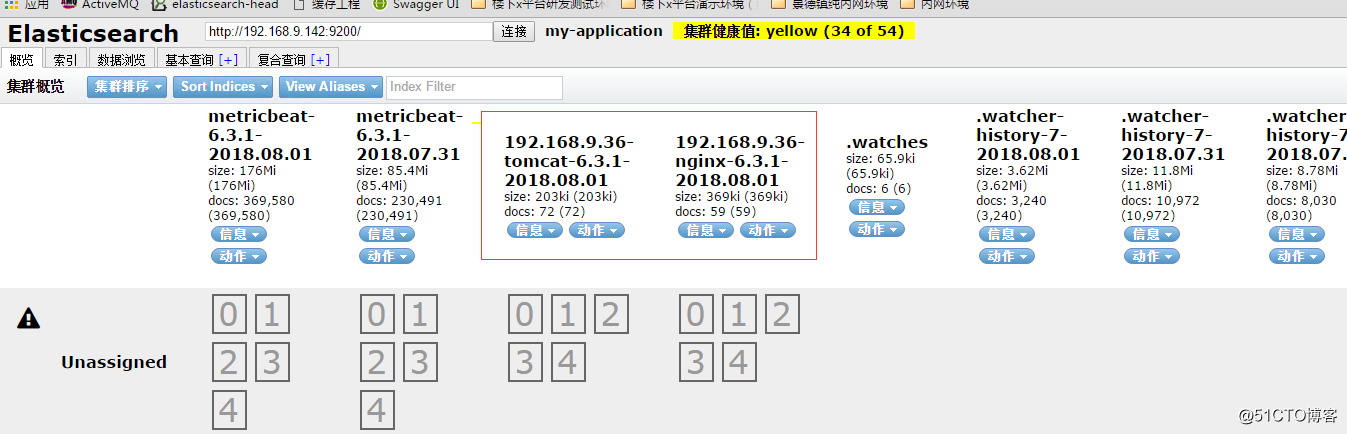

After Logstash starts, you can see that Redis no longer holds any data:

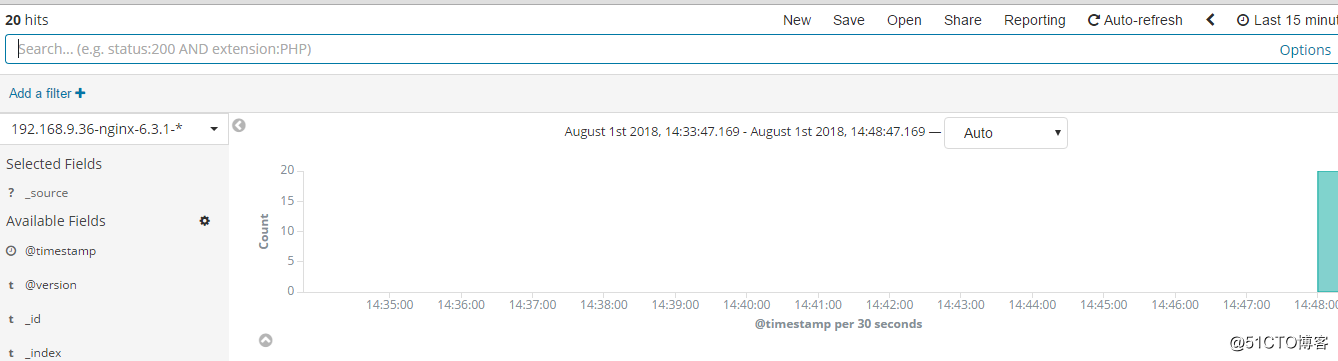

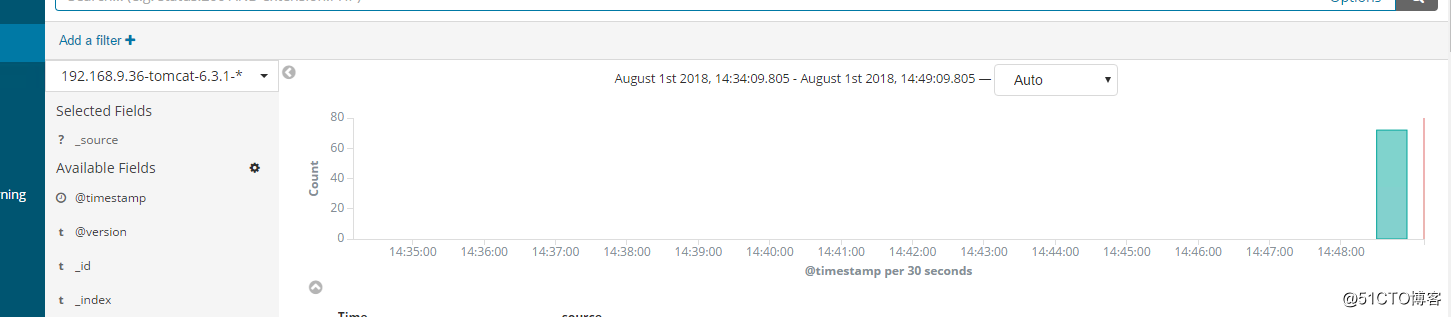

The data has in fact reached Elasticsearch and can now be displayed in Kibana.
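The indices can also be listed directly from Elasticsearch before opening Kibana. This is a minimal sketch using the `_cat/indices` API, assuming the index names follow the `%{[fields][type]}-...` pattern from the Logstash output; the function names and the `ES_URL` variable are hypothetical.

```shell
# Elasticsearch endpoint taken from the Logstash output section; override as needed.
ES_URL=${ES_URL:-http://192.168.9.142:9200}

# Build the _cat/indices URL for indices whose names start with the given prefix.
indices_url() {
    printf '%s/_cat/indices/%s*?v\n' "$ES_URL" "$1"
}

# List the matching indices with column headers.
list_indices() {
    curl -s "$(indices_url "$1")"
}
```

With the configuration in this post, `list_indices 192.168.9.36-` would be expected to show indices whose names begin with `192.168.9.36-nginx-` and `192.168.9.36-tomcat-`, which can then be matched in Kibana with an index pattern such as `192.168.9.36-*`.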

ELK 6.3.1 + Redis + Filebeat: collecting and analyzing Docker + Swarm logs


Original article: http://blog.51cto.com/xiaorenwutest/2156097