
On this page, I explain the following important MapReduce (MR) concepts.

1) Job: how a job is initialized and executed.

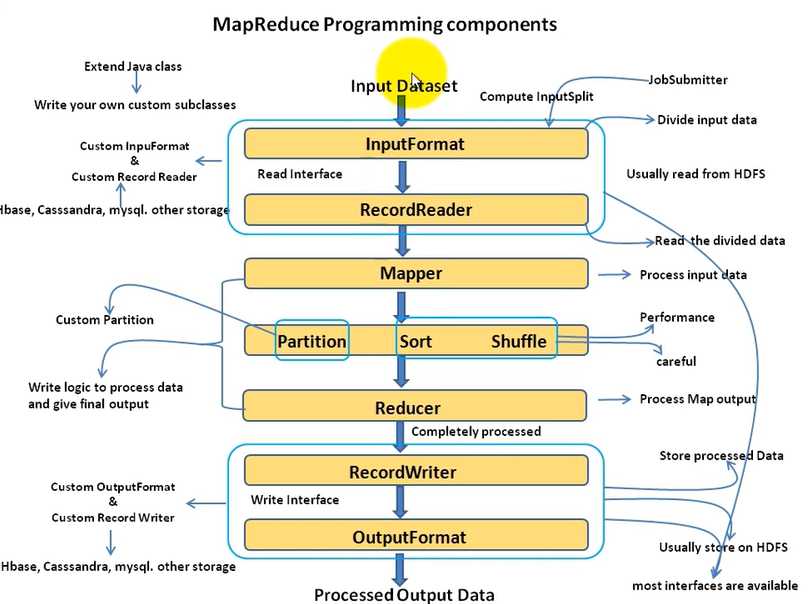

2) MR components: how they work together to process data.

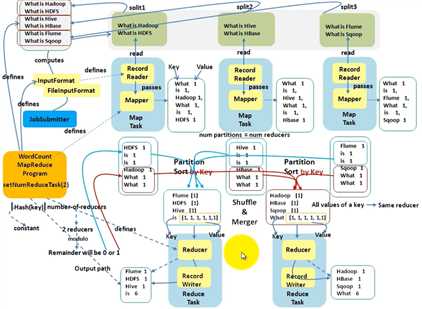

3) Data flow: a word count example to illustrate point 2.

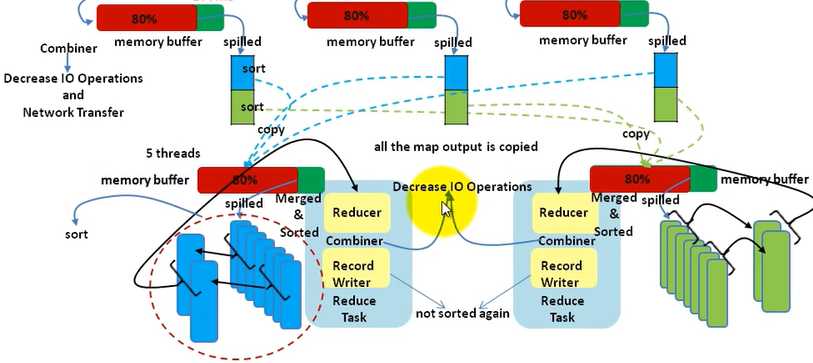

4) Shuffle: the whole picture.

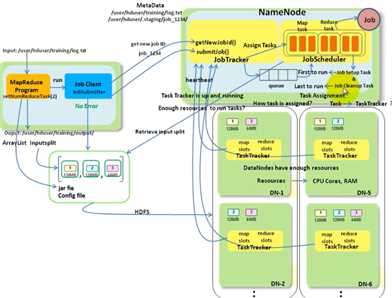

The job submitter computes the input splits and submits the job.

The job scheduler initializes the job object and creates the map tasks, the reduce tasks, a job setup task, and a job cleanup task.

One map task is created for each input split. By default a split corresponds to one HDFS block, but the split size can be configured so that one split spans multiple blocks.
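To make the split/block relationship concrete, here is a minimal sketch. It assumes the rule `max(min_size, min(max_size, block_size))`, which is how Hadoop's `FileInputFormat` picks a split size as far as I know. The function names `compute_split_size` and `compute_splits` are made up for this illustration, and the sketch ignores details such as the tolerance Hadoop applies to the last split.

```python
def compute_split_size(block_size, min_size=1, max_size=2**63 - 1):
    """Split size rule assumed here: max(min_size, min(max_size, block_size))."""
    return max(min_size, min(max_size, block_size))


def compute_splits(file_length, split_size):
    """Return (offset, length) pairs covering the file; one map task per pair."""
    splits = []
    offset = 0
    while offset < file_length:
        length = min(split_size, file_length - offset)
        splits.append((offset, length))
        offset += length
    return splits


MB = 1024 * 1024
block = 128 * MB

# Default: the split size equals the block size.
default_splits = compute_splits(300 * MB, compute_split_size(block))

# Raising the minimum split size to 256 MB makes one split span two blocks.
large_splits = compute_splits(300 * MB, compute_split_size(block, min_size=256 * MB))

print(len(default_splits), "map tasks with default splits")
print(len(large_splits), "map tasks with 256 MB splits")
```

With a 128 MB block and a 300 MB file, the default setting gives three splits (three map tasks), while the larger minimum gives two.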

The number of reduce tasks is configured in the MR program.

The JobTracker assigns these tasks to TaskTrackers based on data locality and on how much load each TaskTracker carries. Each TaskTracker sends heartbeats (HB) to the JobTracker, and a heartbeat includes the resources available on that node (CPU, memory, and so on). The TaskTracker also reports task progress to the JobTracker periodically, and the JobTracker forwards it to the application so that the client can see how far the job has progressed.
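The assignment step can be pictured with a toy model. This is only a sketch of the idea (prefer tasks whose input split lives on the reporting node, and never hand out more tasks than the node has capacity for), not the real JobTracker scheduler. `MapTask`, `Heartbeat`, `free_slots`, and `assign_tasks` are hypothetical names invented for the example.

```python
from dataclasses import dataclass


@dataclass
class MapTask:
    task_id: str
    split_hosts: set  # hosts that hold a replica of this task's input split


@dataclass
class Heartbeat:
    host: str
    free_slots: int  # stand-in for the "resources available" in a heartbeat


def assign_tasks(heartbeat, pending):
    """Pick up to free_slots tasks for the reporting host, data-local ones first."""
    local = [t for t in pending if heartbeat.host in t.split_hosts]
    remote = [t for t in pending if heartbeat.host not in t.split_hosts]
    chosen = (local + remote)[:heartbeat.free_slots]
    for task in chosen:
        pending.remove(task)
    return chosen


pending = [
    MapTask("m0", {"node1", "node2"}),
    MapTask("m1", {"node3"}),
    MapTask("m2", {"node1"}),
]
assigned = assign_tasks(Heartbeat(host="node1", free_slots=2), pending)
assigned_ids = [t.task_id for t in assigned]
print(assigned_ids)
```

Here `node1` reports two free slots and receives `m0` and `m2`, the two tasks whose splits it already stores, while `m1` stays pending for another node.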

The TaskTracker runs each task in a separate JVM, so that a fault in the application code cannot bring down the TaskTracker itself.
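The same isolation idea can be shown with ordinary child processes. This is an analogy, not Hadoop code: a parent (playing the TaskTracker) launches each task in its own process, so a crashing task only produces a non-zero exit code. `run_task_isolated` is a hypothetical helper name.

```python
import subprocess
import sys


def run_task_isolated(task_code):
    """Run task code in a child process and return its exit code."""
    result = subprocess.run([sys.executable, "-c", task_code], capture_output=True)
    return result.returncode


# A well-behaved task exits normally.
ok_code = run_task_isolated("print('task finished')")

# A faulty task dies abruptly; the parent survives and just sees the exit code.
crash_code = run_task_isolated("import os; os._exit(3)")

print("ok:", ok_code, "crashed:", crash_code)
```

The parent keeps running after the second task dies, which is the property the separate JVM gives the TaskTracker.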

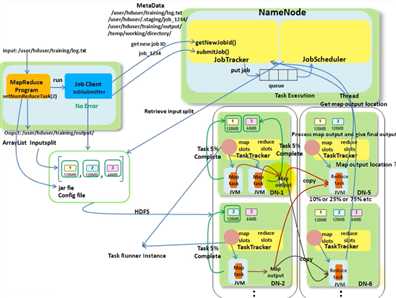

The job setup task runs first and creates the output folder. The map tasks run next, then the reduce tasks, and finally the job cleanup task. The job counts as successful only if the job cleanup task finishes without error. On success, an empty `_SUCCESS` marker file is created in the output folder (the folder itself is not renamed). Until then, task output is staged in a temporary folder and is moved into the final output folder when it is committed.

Reduce tasks learn where the map outputs are from the JobTracker, which assigned the map tasks. When the reduce tasks start can be configured as the fraction of map tasks that must have completed (the `mapred.reduce.slowstart.completed.maps` property in classic MapReduce).
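The setup / run / cleanup sequence can be sketched on a local file system. This is a simplified model, assuming that output is staged under a `_temporary` subfolder and that success is signalled by an empty `_SUCCESS` file inside the output folder, which is how Hadoop's file output committer behaves as far as I know. `run_job` and the folder name `wordcount-out` are invented for the example.

```python
import os
import tempfile


def run_job(output_dir, reduce_outputs):
    """Toy job lifecycle: setup, task output, then cleanup/commit."""
    temp_dir = os.path.join(output_dir, "_temporary")

    # Job setup: create the output folder and a staging area inside it.
    os.makedirs(temp_dir)

    # Tasks write into the staging area, not into the final folder.
    for name, text in reduce_outputs.items():
        with open(os.path.join(temp_dir, name), "w") as f:
            f.write(text)

    # Job cleanup: promote staged files, drop the staging area, mark success.
    for name in os.listdir(temp_dir):
        os.rename(os.path.join(temp_dir, name), os.path.join(output_dir, name))
    os.rmdir(temp_dir)
    open(os.path.join(output_dir, "_SUCCESS"), "w").close()


base = tempfile.mkdtemp()
out = os.path.join(base, "wordcount-out")
run_job(out, {"part-r-00000": "a\t2\n", "part-r-00001": "b\t1\n"})
final_files = sorted(os.listdir(out))
print(final_files)
```

A reader of the output folder can therefore treat the presence of `_SUCCESS` as the signal that every part file is complete.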

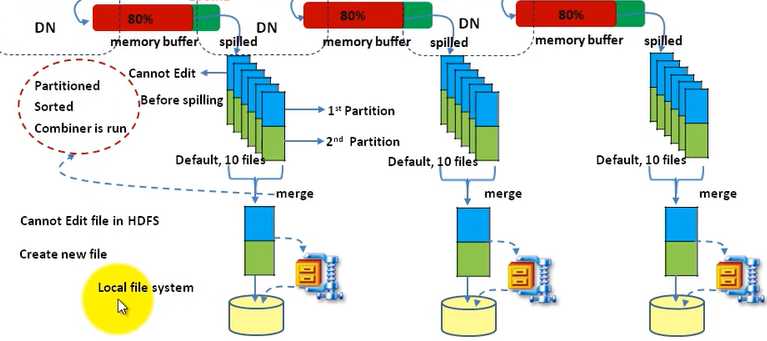

Map output is written to the local file system, since it is only intermediate data. Reduce output is written to HDFS, because the final result must be stored reliably.

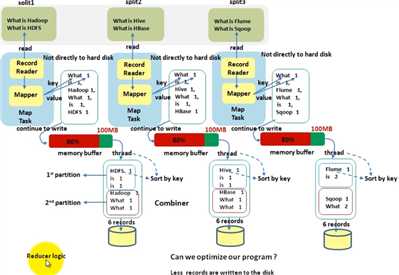

The diagram describes the data flow among the components clearly. Some jobs, such as a map-side join, do not need a reduce phase at all, so the final output is not always produced by reduce tasks.
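The word count data flow can be imitated in a few lines of plain Python. This is a single-process sketch of the three stages (map, shuffle, reduce), not Hadoop API code. The function names and the sample input lines are made up for illustration.

```python
from collections import defaultdict


def map_phase(line):
    """Map: emit (word, 1) for every word in one input line."""
    return [(word, 1) for word in line.split()]


def shuffle(pairs):
    """Shuffle: group all values by key, with keys in sorted order."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return sorted(groups.items())


def reduce_phase(key, values):
    """Reduce: sum the counts for one word."""
    return key, sum(values)


lines = ["deer bear river", "car car river", "deer car bear"]
mapped = [pair for line in lines for pair in map_phase(line)]
counts = [reduce_phase(k, v) for k, v in shuffle(mapped)]
print(counts)
```

A map-only job corresponds to stopping after `map_phase` and writing `mapped` out directly.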

Some things to note:

1) The client (the application that submits the job) specifies the number of reduce tasks, which determines the number of partitions.

2) Records with the same key land in the same partition and therefore go to the same reducer.

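3) The records within each partition are sorted by key in memory. As far as I know, the default in-memory sorter in Hadoop is a quicksort (`org.apache.hadoop.util.QuickSort`).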
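The three notes above can be sketched as follows. The partition rule here is "hash of the key modulo the number of reducers", with `zlib.crc32` used only as a deterministic stand-in for the key's hash code, and Python's built-in `sorted` standing in for whatever sorter the framework actually uses. `partition_for` and `partition_and_sort` are hypothetical names.

```python
import zlib


def partition_for(key, num_reducers):
    """Deterministic stand-in for a hash partitioner: hash(key) mod num_reducers."""
    return zlib.crc32(key.encode("utf-8")) % num_reducers


def partition_and_sort(pairs, num_reducers):
    """Bucket map output by partition, then sort each bucket by key."""
    partitions = [[] for _ in range(num_reducers)]
    for key, value in pairs:
        partitions[partition_for(key, num_reducers)].append((key, value))
    return [sorted(bucket) for bucket in partitions]


map_output = [("river", 1), ("car", 1), ("bear", 1), ("car", 1), ("river", 1)]
partitions = partition_and_sort(map_output, num_reducers=2)

for index, bucket in enumerate(partitions):
    print("partition", index, bucket)
```

Because the partition depends only on the key, both `("car", 1)` records end up in the same bucket, and that bucket is what a single reducer later reads.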

Original article: https://www.cnblogs.com/nativestack/p/9670690.html