
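Scrapy is an application framework written for crawling websites and extracting structured data. It can be used in a wide range of programs, including data mining, information processing, and archiving historical data.

It was originally designed for page scraping (more precisely, web scraping), but it can also be used to retrieve data returned by APIs (such as Amazon Associates Web Services) or as a general-purpose web crawler.

This article introduces the concepts behind Scrapy so that you can understand how it works and decide whether it is what you need.

When you are ready to start your project, you can refer to the getting-started tutorial.

You can install Scrapy with pip, which is the recommended way to install Python packages.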

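1. After installing Python 2.7, edit the PATH environment variable so that the Python executable and its additional scripts are on the system path. Add the following paths to PATH:

    C:\Python27\;C:\Python27\Scripts\;

To update PATH, open a command prompt and run the following command, then close and reopen the command prompt window so that the change takes effect:

    c:\python27\python.exe c:\python27\tools\scripts\win_add2path.py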

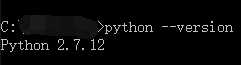

Once installation and configuration are complete, run `python --version` to check the installed Python version (as shown in the figure above).
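The PATH change can also be checked from Python. The following is a minimal sketch, assuming the default `C:\Python27` install location used in this article; the helper name `missing_path_entries` is made up for illustration and is not part of Python or Scrapy.

```python
import os

# Directories the installation step asks you to add to PATH.
REQUIRED_DIRS = [r"C:\Python27", r"C:\Python27\Scripts"]


def _normalize(entry):
    """Strip whitespace, quotes and trailing slashes; compare case-insensitively."""
    return entry.strip().strip('"').rstrip("\\/").lower()


def missing_path_entries(path_value, required=REQUIRED_DIRS, sep=";"):
    """Return the required directories that do not appear in a PATH string."""
    present = {_normalize(e) for e in path_value.split(sep) if e.strip()}
    return [d for d in required if _normalize(d) not in present]


if __name__ == "__main__":
    # On Windows os.pathsep is ";", so the live PATH can be checked directly.
    print(missing_path_entries(os.environ.get("PATH", ""), sep=os.pathsep))
```

An empty list means both directories were found. Any directory that is still listed has not been added to PATH yet.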

2. Install pywin32 from http://sourceforge.net/projects/pywin32/

3. Install pip from https://pip.pypa.io/en/latest/installing.html

4. At this point Python 2.7 and pip are working correctly. Next, install Scrapy:

    pip install Scrapy

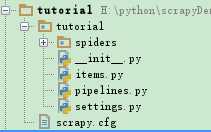
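To confirm that the installation succeeded, one option is to try importing the package and reading its version. This is only a sketch: `installed_version` is a hypothetical helper, and it relies on the package exposing a `__version__` attribute, which Scrapy does but not every package will.

```python
import importlib


def installed_version(package_name):
    """Return the package's __version__ string, or None if it cannot be imported."""
    try:
        module = importlib.import_module(package_name)
    except ImportError:
        return None
    # Not every package defines __version__; fall back to a placeholder.
    return getattr(module, "__version__", "unknown")


if __name__ == "__main__":
    print("Scrapy: %s" % installed_version("scrapy"))
```

A result of `None` suggests that pip installed Scrapy into a different interpreter than the one running the script.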

After Scrapy is installed, create a new project named tutorial:

    scrapy startproject tutorial

The command prints output similar to the following:

    H:\python\scrapyDemo>scrapy startproject tutorial
    New Scrapy project 'tutorial', using template directory 'f:\\python27\\lib\\site-packages\\scrapy\\templates\\project', created in:
        H:\python\scrapyDemo\tutorial

    You can start your first spider with:
        cd tutorial
        scrapy genspider example example.com
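The generated project can be sanity-checked by looking for the files that the following steps edit. The file list below is an assumption based on Scrapy's standard project template; it is not shown in the command output above, and newer Scrapy versions may generate additional files. `missing_project_files` is a hypothetical helper name.

```python
import os

# Files a freshly generated project is assumed to contain, relative to the
# outer "tutorial" directory created by "scrapy startproject tutorial".
EXPECTED_FILES = [
    "scrapy.cfg",
    os.path.join("tutorial", "__init__.py"),
    os.path.join("tutorial", "items.py"),
    os.path.join("tutorial", "pipelines.py"),
    os.path.join("tutorial", "settings.py"),
    os.path.join("tutorial", "spiders", "__init__.py"),
]


def missing_project_files(project_root, expected=EXPECTED_FILES):
    """Return the expected files that do not exist under project_root."""
    return [
        rel for rel in expected
        if not os.path.isfile(os.path.join(project_root, rel))
    ]
```

For the project created above, `missing_project_files(r"H:\python\scrapyDemo\tutorial")` should return an empty list.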

1. Define the fields to be collected in tutorial/items.py.

    # -*- coding: utf-8 -*-

    # Define here the models for your scraped items
    #
    # See documentation in:
    # http://doc.scrapy.org/en/latest/topics/items.html

    from scrapy.item import Item, Field


    class TutorialItem(Item):
        title = Field()        # news title
        author = Field()       # author of the article
        releasedate = Field()  # publication time

2. In tutorial/spiders/spider.py, specify the site to crawl and how each field is extracted.

    # -*- coding: utf-8 -*-
    from scrapy.linkextractors import LinkExtractor
    from scrapy.spiders import CrawlSpider, Rule

    from tutorial.items import TutorialItem


    class ListSpider(CrawlSpider):
        # Spider name, used by "scrapy crawl tutorial"
        name = "tutorial"
        # Delay between requests, in seconds
        download_delay = 1
        # Domains the spider is allowed to crawl
        allowed_domains = ["news.cnblogs.com"]
        # Start URLs
        start_urls = [
            "https://news.cnblogs.com"
        ]
        # Crawl rules: a rule without a callback only follows matching URLs recursively
        rules = (
            Rule(LinkExtractor(allow=(r'https://news.cnblogs.com/n/page/\d',))),
            Rule(LinkExtractor(allow=(r'https://news.cnblogs.com/n/\d+',)), callback='parse_content'),
        )

        # Parse an article page into an item
        def parse_content(self, response):
            item = TutorialItem()

            # Title (the whole news_title element, markup included)
            item['title'] = response.xpath('//div[@id="news_title"]')[0].extract()

            # Author
            item['author'] = response.xpath('//div[@id="news_info"]/span/a/text()')[0].extract()

            # Release date
            item['releasedate'] = response.xpath(
                '//div[@id="news_info"]/span[@class="time"]/text()')[0].extract()

            yield item
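The two crawl rules are ordinary regular expressions that are searched for inside each link's absolute URL. If several rules match a link, CrawlSpider uses the first one in the order they are defined. The sketch below imitates that decision with the standard `re` module so the patterns can be tried without running a crawl. The `classify` function and the sample URLs are made up for illustration.

```python
import re

# The two patterns used in the spider's rules, in the same order.
LISTING_PATTERN = r"https://news.cnblogs.com/n/page/\d"
ARTICLE_PATTERN = r"https://news.cnblogs.com/n/\d+"


def classify(url):
    """Return 'listing', 'article' or 'ignored' for a candidate link."""
    if re.search(LISTING_PATTERN, url):
        return "listing"   # followed recursively, no callback
    if re.search(ARTICLE_PATTERN, url):
        return "article"   # handed to parse_content
    return "ignored"


if __name__ == "__main__":
    for sample in (
        "https://news.cnblogs.com/n/page/2/",   # hypothetical listing page
        "https://news.cnblogs.com/n/612345/",   # hypothetical article page
        "https://news.cnblogs.com/",
    ):
        print("%s -> %s" % (sample, classify(sample)))
```

Note that the unescaped dots in the patterns match any character, so writing `news\.cnblogs\.com` would be slightly stricter.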

3. Save the data in the pipeline defined in tutorial/pipelines.py.

    # -*- coding: utf-8 -*-

    # Define your item pipelines here
    #
    # Don't forget to add your pipeline to the ITEM_PIPELINES setting
    # See: http://doc.scrapy.org/en/latest/topics/item-pipeline.html
    import codecs
    import json


    class TutorialPipeline(object):
        def __init__(self):
            # Store the scraped data in data.json, one JSON object per line
            self.file = codecs.open('data.json', mode='wb', encoding='utf-8')

        def process_item(self, item, spider):
            # ensure_ascii=False keeps Chinese text readable instead of \uXXXX escapes
            line = json.dumps(dict(item), ensure_ascii=False) + "\n"
            self.file.write(line)
            return item

        def close_spider(self, spider):
            # Flush and close the output file when the crawl finishes
            self.file.close()
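The pipeline stores each item as one JSON object per line. The same pattern can be tried outside Scrapy with a small sketch like the one below. `append_json_line` is a hypothetical helper, and the sample record is invented.

```python
import io
import json


def append_json_line(path, record):
    """Append one record to `path` as a single UTF-8 encoded JSON line."""
    # ensure_ascii=False keeps non-ASCII text readable instead of \uXXXX escapes.
    line = json.dumps(record, ensure_ascii=False) + u"\n"
    with io.open(path, mode="a", encoding="utf-8") as handle:
        handle.write(line)


if __name__ == "__main__":
    append_json_line("sample.json", {"title": u"示例标题", "author": u"someone"})
```

Because each line is a complete JSON document, the file can be processed line by line without loading everything into memory.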

4. Configure the runtime environment in tutorial/settings.py.

    # -*- coding: utf-8 -*-

    BOT_NAME = 'tutorial'

    SPIDER_MODULES = ['tutorial.spiders']
    NEWSPIDER_MODULE = 'tutorial.spiders'

    # Disable cookies to reduce the risk of being banned
    COOKIES_ENABLED = False

    # Register the pipeline that writes the data to a file
    ITEM_PIPELINES = {
        'tutorial.pipelines.TutorialPipeline': 300
    }

    # Maximum crawl depth
    DEPTH_LIMIT = 100
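The number assigned to each entry in ITEM_PIPELINES sets the order in which pipelines process an item: lower values run first, and values are customarily chosen from the 0 to 1000 range. The sketch below shows that ordering with plain Python. Only `TutorialPipeline` comes from this project; the other two class paths are invented for illustration.

```python
# Hypothetical settings: only TutorialPipeline exists in the tutorial project.
ITEM_PIPELINES = {
    "tutorial.pipelines.TutorialPipeline": 300,
    "tutorial.pipelines.DropDuplicatesPipeline": 100,
    "tutorial.pipelines.ExportToDatabasePipeline": 800,
}


def execution_order(pipelines):
    """Return pipeline class paths sorted by priority (lowest value first)."""
    ordered = sorted(pipelines.items(), key=lambda pair: pair[1])
    return [path for path, _priority in ordered]


if __name__ == "__main__":
    for path in execution_order(ITEM_PIPELINES):
        print(path)
```

With a single pipeline, as in this tutorial, the value 300 has no practical effect beyond being a conventional middle-of-the-range choice.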

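5. Create a main.py file in the project directory (next to scrapy.cfg, so that Scrapy can locate the project) to start the crawl.

    from scrapy import cmdline

    cmdline.execute("scrapy crawl tutorial".split())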
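`cmdline.execute` receives the command as a list of arguments, which is why the string is split first. A plain `str.split()` is enough here, but it would break an argument that contains spaces. A sketch of the difference, using the standard `shlex` module and a made-up output file name passed to Scrapy's `-o` feed export option:

```python
import shlex

# Plain whitespace splitting works when no argument contains spaces.
simple = "scrapy crawl tutorial".split()

# shlex.split honours shell-style quoting, so a quoted file name stays intact.
# "my data.json" is a hypothetical output file used only for illustration.
quoted = shlex.split('scrapy crawl tutorial -o "my data.json"')

if __name__ == "__main__":
    print(simple)
    print(quoted)
```

Either list could be passed to `cmdline.execute` in place of the split string.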

Finally, after running main.py, the scraped results are available as JSON data in the data.json file.
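To inspect the results programmatically, the file can be read back line by line. This is a minimal sketch, assuming every non-empty line of data.json is one valid JSON object, as written by the pipeline's `process_item`. `load_items` is a hypothetical helper.

```python
import io
import json


def load_items(path):
    """Parse a file containing one JSON object per line into a list of dicts."""
    items = []
    with io.open(path, encoding="utf-8") as handle:
        for line in handle:
            line = line.strip()
            if line:  # skip blank lines
                items.append(json.loads(line))
    return items
```

For example, `len(load_items("data.json"))` gives the number of articles collected by the crawl.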

Original article: https://www.cnblogs.com/songdongdong6/p/10294657.html