Tags: ems mkdir lin rmi mys prope warehouse hadoop data files

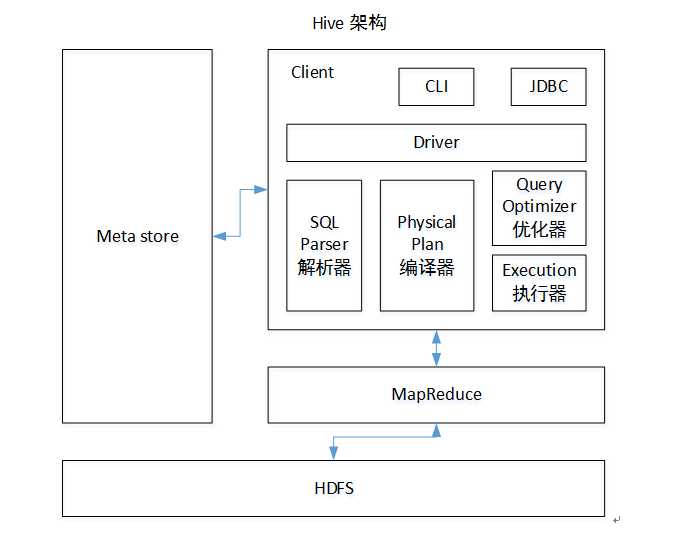

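Hive is a data warehouse tool built on Hadoop. It maps structured data files to tables and provides SQL-like querying.

In essence, Hive converts HQL into MapReduce programs:

1) The data Hive processes is stored in HDFS.

2) Hive's data analysis is implemented underneath with MapReduce.

3) The resulting programs run on YARN.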
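The sketch below makes the "HQL becomes MapReduce" idea concrete. It is a toy Python model of the map, shuffle and reduce phases that a grouped count such as select name, count(*) from student group by name goes through conceptually. It is only a simplified illustration, not Hive's actual execution plan. The function names and sample rows are hypothetical.

```python
from collections import defaultdict


def map_phase(lines):
    """Map: for each tab-delimited id<TAB>name row, emit (name, 1)."""
    for line in lines:
        _id, name = line.rstrip("\n").split("\t")
        yield name, 1


def shuffle(pairs):
    """Shuffle: collect every value emitted for the same key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(groups):
    """Reduce: sum the values collected for each key."""
    return {key: sum(values) for key, values in groups.items()}


def group_by_count(lines):
    """Chain the three phases, mimicking GROUP BY name with COUNT(*)."""
    return reduce_phase(shuffle(map_phase(lines)))


# Hypothetical sample rows; ss1 appears twice to show the grouping.
rows = ["1001\tss1", "1002\tss2", "1003\tss1"]
print(group_by_count(rows))  # {'ss1': 2, 'ss2': 1}
```

In a real cluster the map and reduce functions run as separate tasks on different nodes. The shuffle moves the data between them over the network.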

(1) Upload apache-hive-1.2.1-bin.tar.gz to the /opt/software directory on the Linux machine.

(2) Extract apache-hive-1.2.1-bin.tar.gz into /opt/module/:

[kris@hadoop101 software]$ tar -zxvf apache-hive-1.2.1-bin.tar.gz -C /opt/module/

(3) Rename the extracted apache-hive-1.2.1-bin directory to hive:

[kris@hadoop101 module]$ mv apache-hive-1.2.1-bin/ hive

(4) In /opt/module/hive/conf, rename hive-env.sh.template to hive-env.sh:

[kris@hadoop101 conf]$ mv hive-env.sh.template hive-env.sh

(5) Configure hive-env.sh

(a) Set the HADOOP_HOME path:

export HADOOP_HOME=/opt/module/hadoop-2.7.2

(b) Set the HIVE_CONF_DIR path:

export HIVE_CONF_DIR=/opt/module/hive/conf

Hadoop cluster configuration

(1) HDFS and YARN must both be running:

start-dfs.sh

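The NameNode or another daemon may fail to come up at startup, for example because of leftover state from an HA setup. In that case, delete the data directories and reformat HDFS. Before restarting, kill any remaining Java processes and delete the stale PID files in /tmp: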

killall java

[kris@hadoop101 ~]$ cd /tmp/

[kris@hadoop101 tmp]$ rm -rf *.pid

start-yarn.sh
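The PID-file cleanup above (rm -rf *.pid in /tmp) can also be scripted. This is a minimal Python sketch. It assumes, as in the commands above, that the PID files sit directly in /tmp and that all Java processes have already been killed. The function name is hypothetical.

```python
import glob
import os
import tempfile


def remove_pid_files(directory="/tmp"):
    """Delete regular *.pid files directly inside directory; return their paths.

    The glob skips hidden files, as the shell's *.pid does; unlike rm -rf,
    directories whose names happen to match are left alone.
    """
    removed = []
    for path in sorted(glob.glob(os.path.join(directory, "*.pid"))):
        if os.path.isfile(path):
            os.remove(path)
            removed.append(path)
    return removed


if __name__ == "__main__":
    # Demo on a throwaway directory instead of the real /tmp.
    with tempfile.TemporaryDirectory() as demo_dir:
        for name in ("hadoop-kris-namenode.pid", "notes.txt"):
            open(os.path.join(demo_dir, name), "w").close()
        print([os.path.basename(p) for p in remove_pid_files(demo_dir)])
        print(sorted(os.listdir(demo_dir)))
```

On the cluster node itself this would be called as remove_pid_files("/tmp"), once the Java processes are gone.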

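Create the /tmp and /user/hive/warehouse directories on HDFS and make them group-writable. First check whether they already exist (they are usually created once the cluster has started). If they do not exist, create them (for example with hadoop fs -mkdir -p), then change the permissions: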

[kris@hadoop101 tmp]$ hadoop fs -chmod 777 /tmp/

[kris@hadoop101 tmp]$ hadoop fs -chmod g+w /user/hive/warehouse
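Python's stat module can show what the two chmod commands do to the mode bits. This sketch assumes the warehouse directory starts at the common default drwxr-xr-x (755). The helper names are hypothetical.

```python
import stat


def add_group_write(mode):
    """Return mode with the group-write bit set, as chmod g+w does."""
    return mode | stat.S_IWGRP


def describe(mode):
    """Render a directory's permission bits in ls -l style, e.g. drwxr-xr-x."""
    return stat.filemode(stat.S_IFDIR | mode)


warehouse = add_group_write(0o755)
print(oct(warehouse), describe(warehouse))  # 0o775 drwxrwxr-x
print(oct(0o777), describe(0o777))          # 0o777 drwxrwxrwx
```

g+w only adds write permission for the group, whereas 777 opens /tmp to every user.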

Hive can now be started directly:

[kris@hadoop101 hive]$ bin/hive

Create a datas directory under /opt/module/:

[kris@hadoop101 module]$ mkdir datas

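Create student.txt in /opt/module/datas/ and add data, one row per line with fields separated by a tab. Then create the table and load the file: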
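The original does not show the file's contents. The sketch below writes the five rows that the select query returns later in this section, which is an assumption about what the file held. It writes one id<TAB>name record per line, because the table is declared with FIELDS TERMINATED BY '\t'. The function names are hypothetical.

```python
# Assumed contents, taken from the select * output shown later.
ROWS = [(1001, "ss1"), (1002, "ss2"), (1003, "ss3"), (1004, "ss4"), (1005, "ss5")]


def format_rows(rows):
    """Return the file body: one id<TAB>name line per row, newline-terminated."""
    return "".join(f"{student_id}\t{name}\n" for student_id, name in rows)


def write_student_file(path, rows=ROWS):
    """Write rows to path using the tab delimiter the table definition expects."""
    with open(path, "w", encoding="utf-8") as f:
        f.write(format_rows(rows))
```

For example: write_student_file("/opt/module/datas/student.txt"). Editors that silently turn tabs into spaces are a common source of broken files, and generating the file avoids that.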

hive> create table student(id int, name string) ROW FORMAT DELIMITED FIELDS TERMINATED

> BY '\t';

OK

Time taken: 0.298 seconds

hive> load data local inpath '/opt/module/datas/student.txt' into table student;

Loading data to table default.student

Table default.student stats: [numFiles=2, numRows=0, totalSize=330, rawDataSize=0]

OK

Time taken: 0.729 seconds

hive> show tables;

OK

student

Time taken: 0.021 seconds, Fetched: 1 row(s)

hive> select * from student;

OK

1001 ss1

1002 ss2

1003 ss3

1004 ss4

1005 ss5
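Hive does not check a text file against the schema when loading it. Each line is split on the declared delimiter only when the table is read. Below is a simplified Python model of how one row of the student table is interpreted:

- An id that is not a valid int comes back as NULL (None here).
- Missing trailing fields are NULL.
- Extra fields are ignored.

This approximates the default text format's behavior and is not Hive's own code. It also shows why a file separated by spaces instead of tabs yields rows of NULLs.

```python
def parse_row(line, delimiter="\t"):
    """Interpret one text line as (id int, name string) for the student table.

    Simplified model: an id that is not a valid int becomes None (NULL in Hive),
    missing trailing fields become None, and extra fields are ignored.
    """
    fields = line.rstrip("\n").split(delimiter)
    name = fields[1] if len(fields) > 1 else None
    try:
        student_id = int(fields[0])
    except ValueError:
        student_id = None
    return student_id, name


for line in ["1001\tss1\n", "1001 ss1\n", "abc\tss2\n", "1003\n"]:
    print(parse_row(line))
# (1001, 'ss1')
# (None, None)    <- space instead of tab: the whole line is one field
# (None, 'ss2')
# (1003, None)
```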

Install MySQL, read the temporary root password, and set a new one:

[kris@hadoop101 software]$ sudo rpm -ivh MySQL-server-5.6.24-1.el6.x86_64.rpm

[kris@hadoop101 software]$ sudo cat /root/.mysql_secret

iGD2pY1XQycacXKc

[kris@hadoop101 software]$ sudo rpm -ivh MySQL-client-5.6.24-1.el6.x86_64.rpm

[kris@hadoop101 software]$ sudo service mysql start

[kris@hadoop101 software]$ mysql -uroot -piGD2pY1XQycacXKc

mysql> show databases;

ERROR 1820 (HY000): You must SET PASSWORD before executing this statement

mysql> set password=password("123456");

Query OK, 0 rows affected (0.00 sec)

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| mysql |

| performance_schema |

| test |

+--------------------+

4 rows in set (0.00 sec)

mysql> exit;

Bye

Next, allow root to log in from any host. Keep only the localhost row of the user table, change its host to %, and reload the privilege tables:

[kris@hadoop101 software]$ mysql -uroot -p123456

mysql> use mysql;

mysql> select user, host, password from user;

+------+-----------+-------------------------------------------+

| user | host | password |

+------+-----------+-------------------------------------------+

| root | localhost | *6BB4837EB74329105EE4568DDA7DC67ED2CA2AD9 |

| root | hadoop101 | *F989EA3B224D436B6BEAEAEB2E879B7E765C28C5 |

| root | 127.0.0.1 | *F989EA3B224D436B6BEAEAEB2E879B7E765C28C5 |

| root | ::1 | *F989EA3B224D436B6BEAEAEB2E879B7E765C28C5 |

+------+-----------+-------------------------------------------+

4 rows in set (0.00 sec)

mysql> delete from user where host<>"localhost";

Query OK, 3 rows affected (0.00 sec)

mysql> update user set host='%' where host='localhost';

mysql> flush privileges;
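After these statements the user table holds a single root account whose host is %. In MySQL account host values, % matches any sequence of characters and _ matches exactly one character, as with LIKE. That is why root can now connect from any host. Below is a simplified Python model of that matching. It ignores IP/netmask forms and MySQL's rules for choosing among several matching rows, and the function name is hypothetical.

```python
import re


def host_matches(pattern, host):
    """Return True if host matches a MySQL-style host pattern (% = any run, _ = one char)."""
    regex = "".join(
        ".*" if ch == "%" else "." if ch == "_" else re.escape(ch)
        for ch in pattern
    )
    return re.fullmatch(regex, host) is not None


print(host_matches("%", "hadoop102"))          # True
print(host_matches("localhost", "hadoop102"))  # False
```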

Copy the MySQL JDBC driver into Hive's lib directory:

[kris@hadoop101 software]$ tar -zxf mysql-connector-java-5.1.27.tar.gz

[kris@hadoop101 software]$ ll

total 200936

drwxr-xr-x. 4 kris kris 4096 Oct 24 2013 mysql-connector-java-5.1.27

[kris@hadoop101 mysql-connector-java-5.1.27]$ cp mysql-connector-java-5.1.27-bin.jar /opt/module/hive/lib/
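Hive loads the JDBC driver class named in hive-site.xml (com.mysql.jdbc.Driver) from the jars in hive/lib. A quick sanity check after copying is to confirm the jar really contains that class. This Python sketch uses only the standard library's zipfile module, since a jar is a zip archive. The helper name is hypothetical.

```python
import zipfile


def jar_has_class(jar_path, class_name):
    """Return True if the jar (a zip archive) contains the compiled class file."""
    # com.mysql.jdbc.Driver is stored as com/mysql/jdbc/Driver.class
    entry = class_name.replace(".", "/") + ".class"
    with zipfile.ZipFile(jar_path) as jar:
        return entry in jar.namelist()
```

For example: jar_has_class("/opt/module/hive/lib/mysql-connector-java-5.1.27-bin.jar", "com.mysql.jdbc.Driver").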

1. Create hive-site.xml in /opt/module/hive/conf:

[kris@hadoop101 conf]$ touch hive-site.xml

[kris@hadoop101 conf]$ vi hive-site.xml

2. Following the official documentation, put the following configuration into hive-site.xml:

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://hadoop101:3306/metastore?createDatabaseIfNotExist=true</value>

<description>JDBC connect string for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

<description>Driver class name for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

<description>username to use against metastore database</description>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

<description>password to use against metastore database</description>

</property>

</configuration>
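The same file can be generated rather than typed by hand. This Python sketch uses the standard library's xml.etree.ElementTree with the four values above. It omits the description elements and the stylesheet line, and the function names are hypothetical. One benefit of generating the file is that characters such as & in a JDBC URL are escaped automatically, whereas a raw & typed into the XML makes the file invalid.

```python
import xml.etree.ElementTree as ET

METASTORE_PROPERTIES = {
    "javax.jdo.option.ConnectionURL":
        "jdbc:mysql://hadoop101:3306/metastore?createDatabaseIfNotExist=true",
    "javax.jdo.option.ConnectionDriverName": "com.mysql.jdbc.Driver",
    "javax.jdo.option.ConnectionUserName": "root",
    "javax.jdo.option.ConnectionPassword": "123456",
}


def build_hive_site(properties=METASTORE_PROPERTIES):
    """Return hive-site.xml text: <configuration> with one <property> per entry."""
    root = ET.Element("configuration")
    for name, value in properties.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value  # special characters are escaped here
    return '<?xml version="1.0"?>\n' + ET.tostring(root, encoding="unicode") + "\n"


def read_properties(xml_text):
    """Parse hive-site.xml text back into a {name: value} dict."""
    root = ET.fromstring(xml_text)
    return {p.findtext("name"): p.findtext("value") for p in root.findall("property")}


if __name__ == "__main__":
    print(build_hive_site())
```

Writing the output of build_hive_site() to /opt/module/hive/conf/hive-site.xml produces an equivalent configuration on a single line, which Hive parses the same way.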

[kris@hadoop101 hive]$ bin/hive

Logging initialized using configuration in jar:file:/opt/module/hive/lib/hive-common-1.2.1.jar!/hive-log4j.properties

hive>

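1. First, log in to MySQL:

[kris@hadoop101 hive]$ mysql -uroot -p123456

2. Open several more terminal windows and start Hive in each one. Because the metastore now lives in MySQL, several Hive CLI sessions can run at the same time. The default embedded Derby metastore allows only one.

[kris@hadoop101 hive]$ bin/hive

3. After Hive has started, return to the MySQL window and list the databases. A metastore database has been added:

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| metastore |

| mysql |

| performance_schema |

| test |

+--------------------+

5 rows in set (0.02 sec)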

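To access Hive over JDBC, first start HiveServer2. It plays the role of the Driver: SQL parser, physical plan compiler, optimizer and executor. It runs in the foreground, so keep that window open:

[kris@hadoop101 hive]$ bin/hiveserver2

Then start Beeline in another window. Beeline acts as the client (CLI + JDBC) and can only connect once HiveServer2 is running, so the two are always used as a pair:

[kris@hadoop101 hive]$ bin/beeline

Beeline version 1.2.1 by Apache Hive

beeline> !connect jdbc:hive2://hadoop101:10000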

Connecting to jdbc:hive2://hadoop101:10000

Enter username for jdbc:hive2://hadoop101:10000: kris

Enter password for jdbc:hive2://hadoop101:10000:

Connected to: Apache Hive (version 1.2.1)

Driver: Hive JDBC (version 1.2.1)

Transaction isolation: TRANSACTION_REPEATABLE_READ

0: jdbc:hive2://hadoop101:10000> show databases;

+----------------+--+

| database_name |

+----------------+--+

| default |

+----------------+--+

1 row selected (1.233 seconds)

0: jdbc:hive2://hadoop101:10000>

Notice that the HiveServer2 window has changed: it now logs each query and whether it succeeded:

[kris@hadoop101 hive]$ bin/hiveserver2

OK
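Beeline can only connect once HiveServer2 is listening, and HiveServer2 may need a little time after start-up before it accepts connections. A small Python check can therefore be run first. This is a sketch with hypothetical helper names. It only tests that the TCP port accepts connections, not that Hive itself is healthy. Falling back to port 10000 when the URL has none is an assumption that matches the port used above.

```python
import socket


def parse_hive2_url(url):
    """Extract (host, port) from a jdbc:hive2://host:port[/db] URL."""
    prefix = "jdbc:hive2://"
    if not url.startswith(prefix):
        raise ValueError("not a jdbc:hive2 URL: " + url)
    authority = url[len(prefix):].split("/", 1)[0]
    host, _, port = authority.partition(":")
    # Assumption: fall back to 10000, the port used throughout this walkthrough.
    return host, int(port) if port else 10000


def is_listening(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, timed out, or host name not resolvable
        return False


if __name__ == "__main__":
    host, port = parse_hive2_url("jdbc:hive2://hadoop101:10000")
    print(host, port, is_listening(host, port))
```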

Use -e to run an HQL statement without entering the interactive Hive shell:

[kris@hadoop101 hive]$ bin/hive -e "select * from student;"

Logging initialized using configuration in jar:file:/opt/module/hive/lib/hive-common-1.2.1.jar!/hive-log4j.properties

OK

1001 ss1

1002 ss2

1003 ss3

Alternatively, write the statement to a file and run that file with -f:

[kris@hadoop101 datas]$ vim hivef.sql

select * from student;

[kris@hadoop101 hive]$ bin/hive -f /opt/module/datas/hivef.sql

Logging initialized using configuration in jar:file:/opt/module/hive/lib/hive-common-1.2.1.jar!/hive-log4j.properties

OK

1001 ss1

1002 ss2

1003 ss3
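When these two options are driven from a script, building the argument list explicitly avoids shell-quoting problems with the query string. Below is a Python sketch using subprocess. The Hive path /opt/module/hive/bin/hive follows the install location above, and the helper names are hypothetical.

```python
import subprocess

HIVE = "/opt/module/hive/bin/hive"


def hive_command(query=None, script=None, hive=HIVE):
    """Build argv for hive -e "<query>" or hive -f <script>; exactly one must be given."""
    if (query is None) == (script is None):
        raise ValueError("pass exactly one of query or script")
    return [hive, "-e", query] if query is not None else [hive, "-f", script]


def run_hive(query=None, script=None):
    """Run Hive non-interactively and return its standard output as text."""
    result = subprocess.run(hive_command(query, script),
                            capture_output=True, text=True, check=True)
    return result.stdout
```

For example, run_hive(script="/opt/module/datas/hivef.sql") runs the file created above. Passing a list means the query string reaches Hive exactly as written, with no shell in between.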

Inside the Hive CLI, dfs runs HDFS commands:

hive> dfs -ls /;

Found 2 items

drwxrwxr-x - kris supergroup 0 2019-02-13 15:59 /tmp

drwxr-xr-x - kris supergroup 0 2019-02-13 15:54 /user

Prefix a command with ! to run a local shell command from the Hive CLI:

hive> !ls /opt/module/datas

> ;

business.txt

dept.txt

emp_sex.txt

emp.txt

hivef.sql

location.txt

log.data

score.txt

student.txt

hive>

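View all commands previously entered in the Hive CLI:

(1) Go to the current user's home directory (/root for the root user, /home/kris for the user kris in this walkthrough).

(2) View the .hivehistory file: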

[kris@hadoop101 ~]$ cat .hivehistory
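The history file is plain text, so it can also be read from a script. The Python sketch below returns the last few entries. It assumes one entered command per line and that the file lives in the current user's home directory, as described above. The function name is hypothetical.

```python
from pathlib import Path


def last_commands(n=10, path=None):
    """Return up to the last n non-empty lines of the Hive CLI history file."""
    history = Path(path) if path is not None else Path.home() / ".hivehistory"
    if not history.exists():
        return []
    text = history.read_text(encoding="utf-8", errors="replace")
    commands = [line.strip() for line in text.splitlines() if line.strip()]
    return commands[-n:] if n > 0 else []


if __name__ == "__main__":
    for command in last_commands():
        print(command)
```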


Original post: https://www.cnblogs.com/shengyang17/p/10372242.html