标签:-- seconds replicas runner OLE 引入 rpo web def

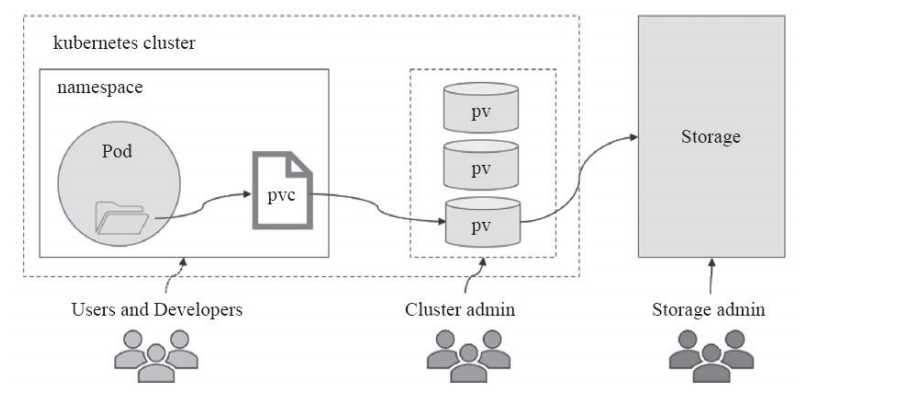

PersistentVolume(PV)是指由集群管理员配置提供的某存储系统上的段存储空间,它是对底层共享存储的抽象,将共享存储作为种可由用户申请使的资源,实现了“存储消费”机制。通过存储插件机制,PV支持使用多种网络存储系统或云端存储等多种后端存储系统,例如,NFS、RBD和Cinder等。PV是集群级别的资源,不属于任何名称空间,用户对PV资源的使需要通过PersistentVolumeClaim(PVC)提出的使申请(或称为声明)来完成绑定,是PV资源的消费者,它向PV申请特定大小的空间及访问模式(如rw或ro),从创建出PVC存储卷,后再由Pod资源通过PersistentVolumeClaim存储卷关联使,如下图:

尽管PVC使得用户可以以抽象的方式访问存储资源,但很多时候还是会涉及PV的不少属性,例如,由于不同场景时设置的性能参数等。为此,集群管理员不得不通过多种方式提供多种不同的PV以满不同用户不同的使用需求,两者衔接上的偏差必然会导致用户的需求无法全部及时有效地得到满足。Kubernetes从1.4版起引入了一个新的资源对象StorageClass,可用于将存储资源定义为具有显著特性的类(Class)而不是具体的PV,例如“fast”“slow”或“glod”“silver”“bronze”等。用户通过PVC直接向意向的类别发出申请,匹配由管理员事先创建的PV,或者由其按需为用户动态创建PV,这样做甚至免去了需要先创建PV的过程。

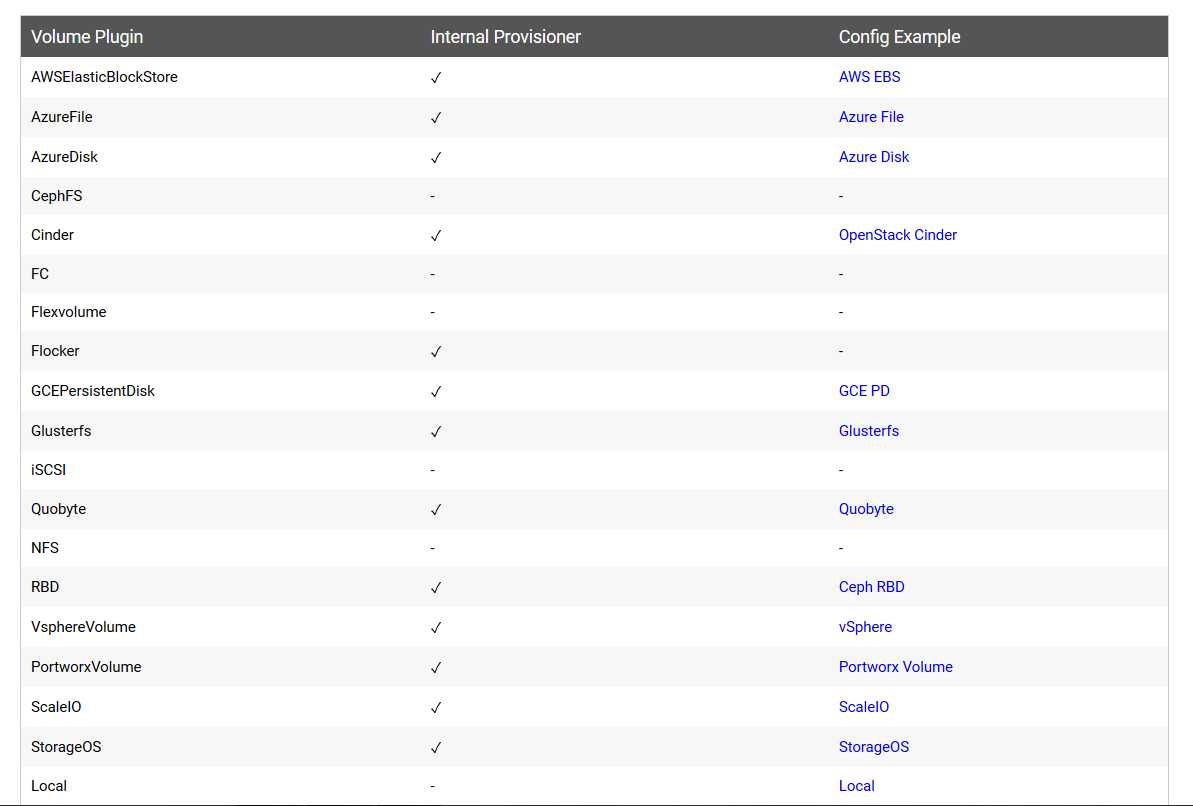

PV对存储系统的支持可通过其插件来实现,目前,Kubernetes支持如下类型的插件。

官方地址:https://kubernetes.io/docs/concepts/storage/storage-classes/

由上图我们可以看到官方插件是不支持NFS动态供给的,但是我们可以用第三方的插件来实现,下面就是本文要讲的。

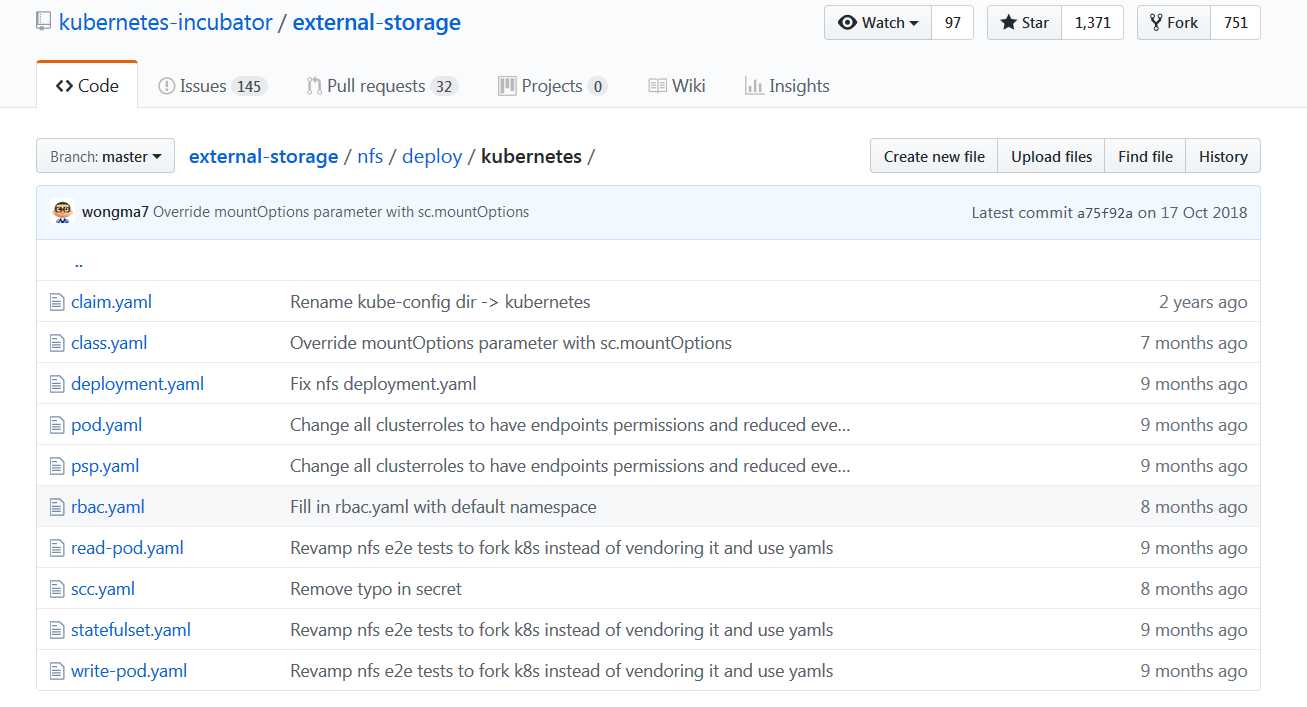

GitHub地址:https://github.com/kubernetes-incubator/external-storage/tree/master/nfs/deploy/kubernetes

2.1创建RBAC授权

[root@master storage-class]# cat rbac.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

name: nfs-client-provisioner

---

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1beta1

metadata:

name: nfs-client-provisioner-runner

rules:

- apiGroups: [""]

resources: ["persistentvolumes"]

verbs: ["get", "list", "watch", "create", "delete"]

- apiGroups: [""]

resources: ["persistentvolumeclaims"]

verbs: ["get", "list", "watch", "update"]

- apiGroups: ["storage.k8s.io"]

resources: ["storageclasses"]

verbs: ["get", "list", "watch"]

- apiGroups: [""]

resources: ["events"]

verbs: ["list", "watch", "create", "update", "patch"]

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1beta1

metadata:

name: run-nfs-client-provisioner

subjects:

- kind: ServiceAccount

name: nfs-client-provisioner

namespace: default

roleRef:

kind: ClusterRole

name: nfs-client-provisioner-runner

apiGroup: rbac.authorization.k8s.io

2.2 创建Storageclass类

[root@master storage-class]# cat storageclass-nfs.yaml apiVersion: storage.k8s.io/v1beta1 kind: StorageClass metadata: name: managed-nfs-storage provisioner: fuseim.pri/ifs

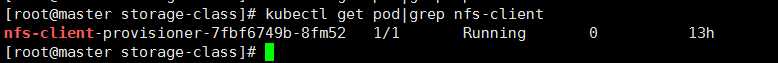

2.3 创建nfs的deployment,修改相应的nfs服务器ip及挂载路径即可。

[root@master storage-class]# cat deployment-nfs.yaml

apiVersion: apps/v1beta1

kind: Deployment

metadata:

name: nfs-client-provisioner

spec:

replicas: 1

strategy:

type: Recreate

template:

metadata:

labels:

app: nfs-client-provisioner

spec:

imagePullSecrets:

- name: registry-pull-secret

serviceAccount: nfs-client-provisioner

containers:

- name: nfs-client-provisioner

image: lizhenliang/nfs-client-provisioner:v2.0.0

volumeMounts:

- name: nfs-client-root

mountPath: /persistentvolumes

env:

- name: PROVISIONER_NAME

value: fuseim.pri/ifs

- name: NFS_SERVER

value: 172.31.182.145

- name: NFS_PATH

value: /u01/nps/volumes

volumes:

- name: nfs-client-root

nfs:

server: 172.31.182.145

path: /u01/nps/volumes

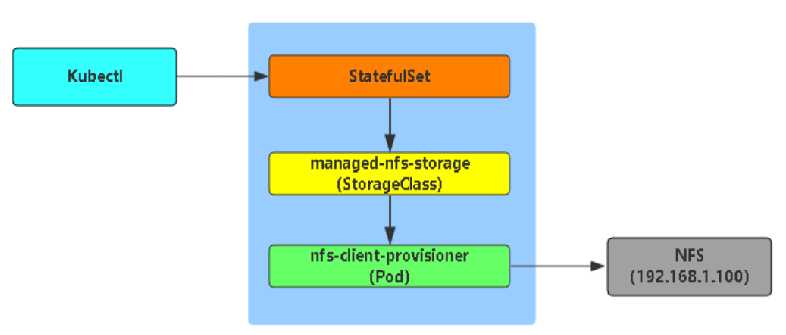

下面是一个StatefulSet应用动态申请PV的示意图:

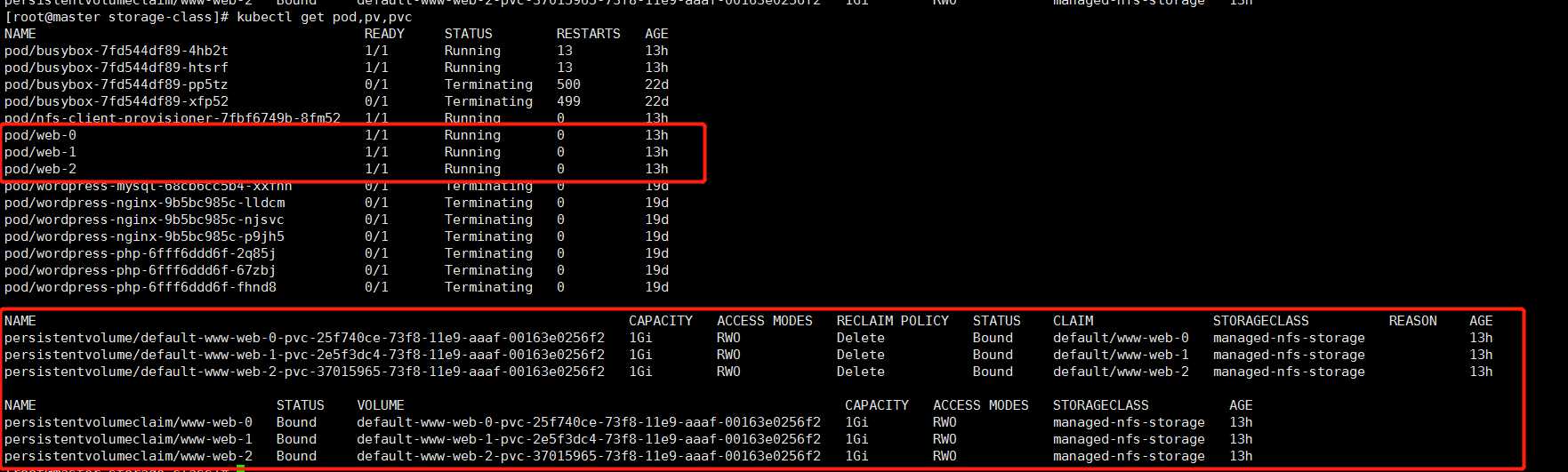

例如:创建一个nginx动态获取PV

[root@master storage-class]# cat nginx-demo.yaml

apiVersion: v1

kind: Service

metadata:

name: nginx

labels:

app: nginx

spec:

ports:

- port: 80

name: web

clusterIP: None

selector:

app: nginx

---

apiVersion: apps/v1

kind: StatefulSet

metadata:

name: web

spec:

selector:

matchLabels:

app: nginx

serviceName: "nginx"

replicas: 3

template:

metadata:

labels:

app: nginx

spec:

terminationGracePeriodSeconds: 10

containers:

- name: nginx

image: nginx

ports:

- containerPort: 80

name: web

volumeMounts:

- name: www

mountPath: /usr/share/nginx/html

volumeClaimTemplates:

- metadata:

name: www

spec:

accessModes: [ "ReadWriteOnce" ]

storageClassName: "managed-nfs-storage"

resources:

requests:

storage: 1Gi

启动后我们可以看到以下信息:

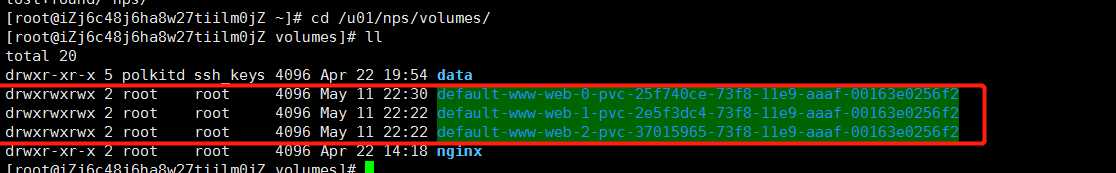

这时我们在nfs服务器上也会看到自动生成3个挂载目录,当pod删除了数据还会存在。

StatefulSet应用有以下特点:

1.唯一的主机名

2.域名访问(<statefulsetName-index>.<service-name>.svc.cluster.local) 如:web-0.nginx.default.svc.cluster.local

3.独立的挂载卷

标签:-- seconds replicas runner OLE 引入 rpo web def

原文地址:https://www.cnblogs.com/Dev0ps/p/10851802.html