标签:html zypper 报错 work last err files ons 清理

之前我们已快速部署好一套Ceph集群(3节点),现要测试在现有集群中在线方式增加节点

|

主机名 |

Public网络 |

管理网络 |

集群网络 |

说明 |

|

admin |

192.168.2.39 |

172.200.50.39 |

--- |

管理节点 |

|

node001 |

192.168.2.40 |

172.200.50.40 |

192.168.3.40 |

MON,OSD |

|

node002 |

192.168.2.41 |

172.200.50.41 |

192.168.3.41 |

MON,OSD |

|

node003 |

192.168.2.42 |

172.200.50.42 |

192.168.3.42 |

MON,OSD |

|

node004 |

192.168.2.43 |

172.200.50.43 |

192.168.3.43 |

OSD |

可以看到架构图中增加了node004节点,并且node004节点只是作为OSD节点,并无MON或MGR服务

1、收集集群信息

(1)集群状态

# ceph -s cluster: id: f7b451b3-4a4c-4681-a4ef-4b5359242a92 health: HEALTH_OK services: mon: 3 daemons, quorum node001,node002,node003 (age 90m) mgr: node001(active, since 89m), standbys: node002, node003 osd: 6 osds: 6 up (since 90m), 6 in (since 23h) data: pools: 0 pools, 0 pgs objects: 0 objects, 0 B usage: 12 GiB used, 48 GiB / 60 GiB avail pgs:

(2)集群OSD磁盘信息

# ceph osd tree ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF -1 0.05878 root default -5 0.01959 host node001 2 hdd 0.00980 osd.2 up 1.00000 1.00000 5 hdd 0.00980 osd.5 up 1.00000 1.00000 -3 0.01959 host node002 0 hdd 0.00980 osd.0 up 1.00000 1.00000 3 hdd 0.00980 osd.3 up 1.00000 1.00000 -7 0.01959 host node003 1 hdd 0.00980 osd.1 up 1.00000 1.00000 4 hdd 0.00980 osd.4 up 1.00000 1.00000

(3)查看新增节点磁盘

# lsblk NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT sda 8:0 0 20G 0 disk ├─sda1 8:1 0 1G 0 part /boot └─sda2 8:2 0 19G 0 part ├─vgoo-lvswap 254:0 0 2G 0 lvm [SWAP] └─vgoo-lvroot 254:1 0 17G 0 lvm / sdb 8:16 0 10G 0 disk sdc 8:32 0 10G 0 disk sr0 11:0 1 1024M 0 rom nvme0n1 259:0 0 20G 0 disk nvme0n2 259:1 0 20G 0 disk nvme0n3 259:2 0 20G 0 disk

2、初始化操作系统

(1)初始化步骤

参考快速部署 storage6 文档

(2)显示仓库

# zypper lr Repository priorities are without effect. All enabled repositories share the same priority. # | Alias | Name | Enabled | GPG Check | Refresh ---+----------------------------------------------------+----------------------------------------------------+---------+-----------+-------- 1 | SLE-Module-Basesystem-SLES15-SP1-Pool | SLE-Module-Basesystem-SLES15-SP1-Pool | Yes | (r ) Yes | No 2 | SLE-Module-Basesystem-SLES15-SP1-Upadates | SLE-Module-Basesystem-SLES15-SP1-Upadates | Yes | (r ) Yes | No 3 | SLE-Module-Legacy-SLES15-SP1-Pool | SLE-Module-Legacy-SLES15-SP1-Pool | Yes | (r ) Yes | No 4 | SLE-Module-Legacy-SLES15-SP1-Updates | SLE-Module-Legacy-SLES15-SP1-Updates | Yes | ( p) Yes | No 5 | SLE-Module-Server-Applications-SLES15-SP1-Pool | SLE-Module-Server-Applications-SLES15-SP1-Pool | Yes | (r ) Yes | No 6 | SLE-Module-Server-Applications-SLES15-SP1-Upadates | SLE-Module-Server-Applications-SLES15-SP1-Upadates | Yes | (r ) Yes | No 7 | SLE-Product-SLES15-SP1-Pool | SLE-Product-SLES15-SP1-Pool | Yes | (r ) Yes | No 8 | SLE-Product-SLES15-SP1-Updates | SLE-Product-SLES15-SP1-Updates | Yes | (r ) Yes | No 9 | SUSE-Enterprise-Storage-6-Pool | SUSE-Enterprise-Storage-6-Pool | Yes | (r ) Yes | No 10 | SUSE-Enterprise-Storage-6-Updates | SUSE-Enterprise-Storage-6-Updates | Yes | (r ) Yes | No

(3)hosts文件

192.168.2.39 admin.example.com admin 192.168.2.40 node001.example.com node001 192.168.2.41 node002.example.com node002 192.168.2.42 node003.example.com node003 192.168.2.43 node004.example.com node004 192.168.2.44 node005.example.com node005

3、安装 satlt-minion

zypper -n in salt-minion sed -i ‘17i\master: 192.168.2.39‘ /etc/salt/minion systemctl restart salt-minion.service systemctl enable salt-minion.service systemctl status salt-minion.service

# salt-key Accepted Keys: admin.example.com node001.example.com node002.example.com node003.example.com Denied Keys: Unaccepted Keys: node004.example.com <==== 新加节点 Rejected Keys:

# salt-key -A

# salt "node004*" test.ping node004.example.com: True

4、预防集群数据平衡

# ceph osd set norebalance norebalance is set admin:/etc/salt/pki/master # ceph -s cluster: id: f7b451b3-4a4c-4681-a4ef-4b5359242a92 health: HEALTH_WARN norebalance flag(s) set

services:

mon: 3 daemons, quorum node001,node002,node003 (age 2h)

mgr: node001(active, since 2h), standbys: node002, node003

osd: 6 osds: 6 up (since 2h), 6 in (since 24h)

flags norebalance

data:

pools: 0 pools, 0 pgs

objects: 0 objects, 0 B

usage: 12 GiB used, 48 GiB / 60 GiB avail

pgs:

(1)新建 global.conf 文件(admin节点)

# vim /srv/salt/ceph/configuration/files/ceph.conf.d/global.conf osd_crush_initial_weight = 0

(2)创建新配置文件(admin节点)

# salt ‘*‘ state.apply ceph.configuration.create

注意:执行时有报错,可忽略

node003.example.com: Name: /var/cache/salt/minion/files/base/ceph/configuration - Function: file.absent - Result: Changed Started: - 15:42:45.362265 Duration: 22.133 ms ---------- ID: /srv/salt/ceph/configuration/cache/ceph.conf Function: file.managed Result: False Comment: Unable to manage file: Jinja error: ‘select.minions‘ Traceback (most recent call last): File "/usr/lib/python3.6/site-pack

(3)执行新配置文件,并且只在node001 node002 node003节点上生效 (admin节点)

# salt ‘node00[1-3]*‘ state.apply ceph.configuration

(4)检查个节点配置文件 (node001,node002,node003)

# cat /etc/ceph/ceph.conf osd crush initial weight = 0

5、执行stage0 1 2 (admin节点)

# salt-run state.orch ceph.stage.0 # salt-run state.orch ceph.stage.1 # salt-run state.orch ceph.stage.2 # salt ‘node004*‘ pillar.items # 查看pillar设置是否正确 public_network: 192.168.2.0/24 roles: - storage # 仅仅是 storage 角色 time_server: admin.example.com

6、检查生成 OSD 报告(admin节点)

# salt-run disks.report

node004.example.com:

|_

- 0

-

Total OSDs: 2

Solid State VG:

Targets: block.db Total size: 19.00 GB

Total LVs: 2 Size per LV: 1.86 GB

Devices: /dev/nvme0n2

Type Path LV Size % of device

-------------------------------------------------------------------------

[data] /dev/sdb 9.00 GB 100.0%

[block.db] vg: vg/lv 1.86 GB 10%

-------------------------------------------------------------------------

[data] /dev/sdc 9.00 GB 100.0%

[block.db] vg: vg/lv 1.86 GB 10%

7、运行stage3, 把node004节点添加进来,并自动创建OSD (admin节点)

# salt-run state.orch ceph.stage.3

8、执行后检查集群OSD状态 (admin节点)

可以发现新增节点权重都是0,这是由于之前配置的“osd_crush_initial_weight”参数,预防新增节点或磁盘进来时进行数据平衡。

# ceph osd tree ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF -1 0.05878 root default -7 0.01959 host node001 2 hdd 0.00980 osd.2 up 1.00000 1.00000 5 hdd 0.00980 osd.5 up 1.00000 1.00000 -3 0.01959 host node002 0 hdd 0.00980 osd.0 up 1.00000 1.00000 3 hdd 0.00980 osd.3 up 1.00000 1.00000 -5 0.01959 host node003 1 hdd 0.00980 osd.1 up 1.00000 1.00000 4 hdd 0.00980 osd.4 up 1.00000 1.00000 -9 0 host node004 6 hdd 0 osd.6 up 1.00000 1.00000 <=== 新增节点OSD权重为0 7 hdd 0 osd.7 up 1.00000 1.00000

9、手动增加OSD磁盘权重 (admin节点)

注意:生产环境请在变更时间执行,执行时会数据平衡,影响读写。当然也可以通过Ceph参数或QOS来控制读写速率,后续文档中会提到。

# ceph osd crush reweight osd.6 0.00980 # ceph osd crush reweight osd.7 0.00980

# ceph osd tree ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF -1 0.07837 root default -7 0.01959 host node001 2 hdd 0.00980 osd.2 up 1.00000 1.00000 5 hdd 0.00980 osd.5 up 1.00000 1.00000 -3 0.01959 host node002 0 hdd 0.00980 osd.0 up 1.00000 1.00000 3 hdd 0.00980 osd.3 up 1.00000 1.00000 -5 0.01959 host node003 1 hdd 0.00980 osd.1 up 1.00000 1.00000 4 hdd 0.00980 osd.4 up 1.00000 1.00000 -9 0.01959 host node004 6 hdd 0.00980 osd.6 up 1.00000 1.00000 7 hdd 0.00980 osd.7 up 1.00000 1.00000

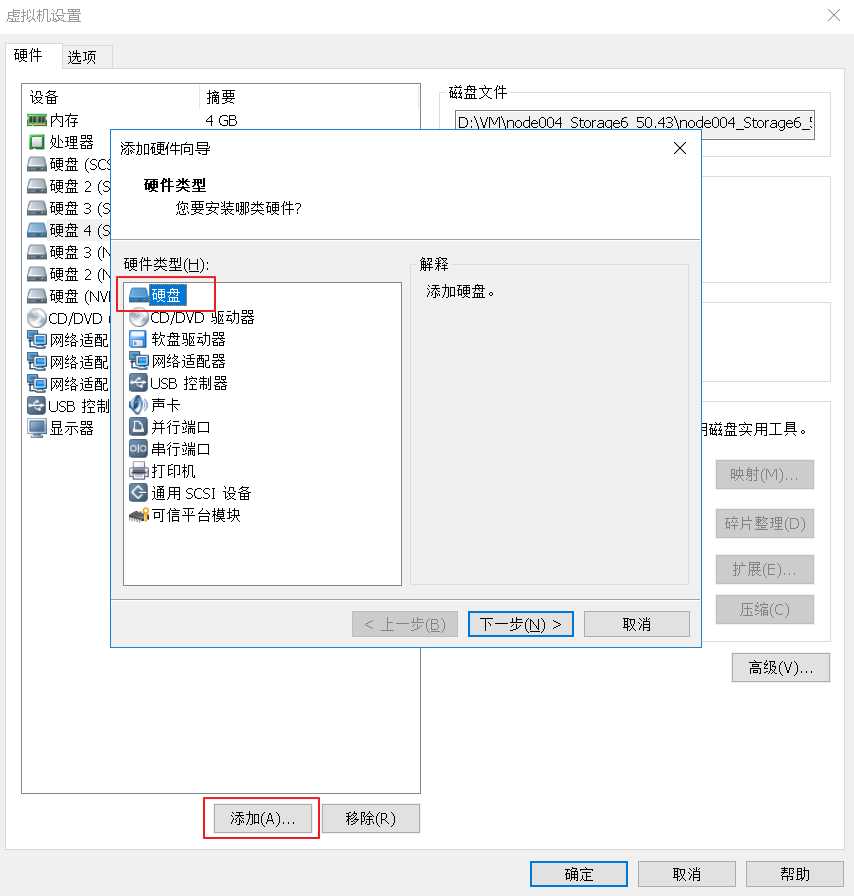

1、首先我们通过 VMware workstation 的虚拟机 node004 节点上添加一块10G大小的磁盘

2、开启虚拟机后,node004主机终端中查看新增磁盘

# lsblk NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT sda 8:0 0 20G 0 disk ├─sda1 8:1 0 1G 0 part /boot └─sda2 8:2 0 19G 0 part ├─vgoo-lvroot 254:0 0 17G 0 lvm / └─vgoo-lvswap 254:1 0 2G 0 lvm [SWAP] sdb 8:16 0 10G 0 disk └─ceph--block--0515f9d7--3407--46a5-- 254:4 0 9G 0 lvm sdc 8:32 0 10G 0 disk └─ceph--block--9f7394b2--3ad3--4cd8-- 254:5 0 9G 0 lvm sdd 8:48 0 10G 0 disk <== 新增磁盘 sr0 11:0 1 1024M 0 rom nvme0n1 259:0 0 20G 0 disk nvme0n2 259:1 0 20G 0 disk ├─ceph--block--dbs--57d07a01--4440--4 254:2 0 1G 0 lvm └─ceph--block--dbs--57d07a01--4440--4 254:3 0 1G 0 lvm nvme0n3 259:2 0 20G 0 disk

3、查看 VG LV 信息

# lvs LV VG Attr LSize osd-block-9a914f7d-ae9c-451a-ac7e-bcb6cb1fc926 ceph-block-0515f9d7-3407-46a5-be68-db80fc789dcc -wi-ao---- 9.00g osd-block-79f5920f-b41c-4dd0-94e9-dc85dbb2e7e4 ceph-block-9f7394b2-3ad3-4cd8-8267-7e5993af1271 -wi-ao---- 9.00g osd-block-db-2244293e-ca96-4847-a5cb-9112f59836fa ceph-block-dbs-57d07a01-4440-4892-b44c-eae536613586 -wi-ao---- 1.00g osd-block-db-2b295cc9-caff-45ad-a179-d7e3ba46a39d ceph-block-dbs-57d07a01-4440-4892-b44c-eae536613586 -wi-ao---- 1.00g osd-block-db-test ceph-block-dbs-57d07a01-4440-4892-b44c-eae536613586 -wi-a----- 2.00g lvroot vgoo -wi-ao---- 17.00g lvswap vgoo -wi-ao---- 2.00g

4、创建 OSD 磁盘的 VG 和 LV

# vgcreate ceph-block-0 /dev/sdd # lvcreate -l 100%FREE -n block-0 ceph-block-0

5、在已在nvme0n2磁盘上创建的 VG 上创建 LV

我们从第3个步骤中可以看到,nvme0n2磁盘已经被 VG ceph-block-dbs-57d07a01-4440-4892-b44c-eae536613586所使用,我们要在该VG上创建LV,因为一般一块PCIE SSD磁盘可以承担10块OSD数据磁盘,作为他们WAL和DB加速磁盘使用。

# lvcreate -L 2GB -n db-0 ceph-block-dbs-57d07a01-4440-4892-b44c-eae536613586

6、显示 VG LV 信息

# lvs LV VG Attr LSize block-0 ceph-block-0 -wi-a----- 10.00g osd-block-9a914f7d-ae9c-451a-ac7e-bcb6cb1fc926 ceph-block-0515f9d7-3407-46a5-be68-db80fc789dcc -wi-ao---- 9.00g osd-block-79f5920f-b41c-4dd0-94e9-dc85dbb2e7e4 ceph-block-9f7394b2-3ad3-4cd8-8267-7e5993af1271 -wi-ao---- 9.00g db-0 ceph-block-dbs-57d07a01-4440-4892-b44c-eae536613586 -wi-a----- 2.00g osd-block-db-2244293e-ca96-4847-a5cb-9112f59836fa ceph-block-dbs-57d07a01-4440-4892-b44c-eae536613586 -wi-ao---- 1.00g osd-block-db-2b295cc9-caff-45ad-a179-d7e3ba46a39d ceph-block-dbs-57d07a01-4440-4892-b44c-eae536613586 -wi-ao---- 1.00g lvroot vgoo -wi-ao---- 17.00g lvswap vgoo -wi-ao---- 2.00g

7、使用 ceph-volume 方式创建

# ceph-volume lvm create --bluestore --data ceph-block-0/block-0 --block.db ceph-block-dbs-57d07a01-4440-4892-b44c-eae536613586/db-0

# ceph osd tree ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF -1 0.07837 root default -7 0.01959 host node001 2 hdd 0.00980 osd.2 up 1.00000 1.00000 5 hdd 0.00980 osd.5 up 1.00000 1.00000 -3 0.01959 host node002 0 hdd 0.00980 osd.0 up 1.00000 1.00000 3 hdd 0.00980 osd.3 up 1.00000 1.00000 -5 0.01959 host node003 1 hdd 0.00980 osd.1 up 1.00000 1.00000 4 hdd 0.00980 osd.4 up 1.00000 1.00000 -9 0.01959 host node004 6 hdd 0.00980 osd.6 up 1.00000 1.00000 7 hdd 0.00980 osd.7 up 1.00000 1.00000 8 hdd 0 osd.8 up 1.00000 1.00000 <==== 可以看到 osd.8 被创建出来

8、设置权重

注意:生产环境操作时,会数据平衡会影响到读写性能。

# ceph osd crush reweight osd.8 0.00980

# ceph osd tree ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF -1 0.08817 root default -7 0.01959 host node001 2 hdd 0.00980 osd.2 up 1.00000 1.00000 5 hdd 0.00980 osd.5 up 1.00000 1.00000 -3 0.01959 host node002 0 hdd 0.00980 osd.0 up 1.00000 1.00000 3 hdd 0.00980 osd.3 up 1.00000 1.00000 -5 0.01959 host node003 1 hdd 0.00980 osd.1 up 1.00000 1.00000 4 hdd 0.00980 osd.4 up 1.00000 1.00000 -9 0.02939 host node004 6 hdd 0.00980 osd.6 up 1.00000 1.00000 7 hdd 0.00980 osd.7 up 1.00000 1.00000 8 hdd 0.00980 osd.8 up 1.00000 1.00000

语法: salt-run osd.remove OSD_ID

1、批量删除 node004 节点上 OSD.7 OSD.8

admin:~ # ceph osd tree ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF -1 0.08817 root default -7 0.01959 host node001 2 hdd 0.00980 osd.2 up 1.00000 1.00000 5 hdd 0.00980 osd.5 up 1.00000 1.00000 -3 0.01959 host node002 0 hdd 0.00980 osd.0 up 1.00000 1.00000 3 hdd 0.00980 osd.3 up 1.00000 1.00000 -5 0.01959 host node003 1 hdd 0.00980 osd.1 up 1.00000 1.00000 4 hdd 0.00980 osd.4 up 1.00000 1.00000 -9 0.02939 host node004 6 hdd 0.00980 osd.6 up 1.00000 1.00000 7 hdd 0.00980 osd.7 up 1.00000 1.00000 8 hdd 0.00980 osd.8 up 1.00000 1.00000

admin:~ # salt-run osd.remove 7 8 Removing osd 7 on host node004.example.com Draining the OSD Waiting for ceph to catch up. osd.7 is safe to destroy Purging from the crushmap Zapping the device Removing osd 8 on host node004.example.com Draining the OSD Waiting for ceph to catch up. osd.8 is safe to destroy Purging from the crushmap Zapping the device

2、显示 osd 信息,node004主机上 osd.7 和 osd.8 已被删除

admin:~ # ceph osd tree ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF -1 0.06857 root default -7 0.01959 host node001 2 hdd 0.00980 osd.2 up 1.00000 1.00000 5 hdd 0.00980 osd.5 up 1.00000 1.00000 -3 0.01959 host node002 0 hdd 0.00980 osd.0 up 1.00000 1.00000 3 hdd 0.00980 osd.3 up 1.00000 1.00000 -5 0.01959 host node003 1 hdd 0.00980 osd.1 up 1.00000 1.00000 4 hdd 0.00980 osd.4 up 1.00000 1.00000 -9 0.00980 host node004 6 hdd 0.00980 osd.6 up 1.00000 1.00000

3、删除 OSD 其他命令

(1)删除节点上所有osd

# salt-run osd.remove OSD_HOST_NAME

(2)当 WAL 或 DB 设备损坏时,移除破损的磁盘

# salt-run osd.remove OSD_ID force=True

从集群中移出node004 osd节点,移除前请确保集群有足够的空间容纳node004上的数据

1、手动方式

(1)管理节点查看 OSD 信息

# ceph osd tree ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF -1 0.06857 root default -7 0.01959 host node001 2 hdd 0.00980 osd.2 up 1.00000 1.00000 5 hdd 0.00980 osd.5 up 1.00000 1.00000 -3 0.01959 host node002 0 hdd 0.00980 osd.0 up 1.00000 1.00000 3 hdd 0.00980 osd.3 up 1.00000 1.00000 -5 0.01959 host node003 1 hdd 0.00980 osd.1 up 1.00000 1.00000 4 hdd 0.00980 osd.4 up 1.00000 1.00000 -9 0.00980 host node004 6 hdd 0.00980 osd.6 up 1.00000 1.00000

(2)node004 节点上停止OSD服务

# systemctl stop ceph-osd@6.service # systemctl stop ceph-osd.target

(3)node004 节点上,卸载挂载目录

# umount /var/lib/ceph/osd/ceph-6

(4)admin节点上,移除OSD

# ceph osd out 6 # ceph osd crush remove osd.6 # ceph osd rm osd.6 # ceph auth del osd.6

(5)admin节点上,从CRUSH MAP上移除节点信息

# ceph osd crush rm node004

(6)检查集群是否清理干净node004

# ceph osd tree ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF -1 0.05878 root default -7 0.01959 host node001 2 hdd 0.00980 osd.2 up 1.00000 1.00000 5 hdd 0.00980 osd.5 up 1.00000 1.00000 -3 0.01959 host node002 0 hdd 0.00980 osd.0 up 1.00000 1.00000 3 hdd 0.00980 osd.3 up 1.00000 1.00000 -5 0.01959 host node003 1 hdd 0.00980 osd.1 up 1.00000 1.00000 4 hdd 0.00980 osd.4 up 1.00000 1.00000

(7)node004节点,删除所有相关 VG LV 信息

node004:~ # vgs VG #PV #LV #SN Attr VSize VFree ceph-block-0515f9d7-3407-46a5-be68-db80fc789dcc 1 1 0 wz--n- 9.00g 0 ceph-block-dbs-57d07a01-4440-4892-b44c-eae536613586 1 1 0 wz--n- 19.00g 18.00g vg00 1 2 0 wz--n- 19.00g 0

node004:~ # lvs LV VG Attr LSize osd-block-9a914f7d-ae9c-451a-ac7e-bcb6cb1fc926 ceph-block-0515f9d7-3407-46a5-be68-db80fc789dcc -wi-a----- 9.00g osd-block-db-2b295cc9-caff-45ad-a179-d7e3ba46a39d ceph-block-dbs-57d07a01-4440-4892-b44c-eae536613586 -wi-a----- 1.00g lvroot vg00 -wi-ao---- 17.00g lvswap vg00 -wi-ao---- 2.00g

# for i in `vgs | grep ceph- | awk ‘{ print $1 }‘`; do vgremove -f $i; done

node004:~ # lvs LV VG Attr LSize lvroot vg00 -wi-ao---- 17.00g lvswap vg00 -wi-ao---- 2.00g

node004:~ # vgs VG #PV #LV #SN Attr VSize VFree vg00 1 2 0 wz--n- 19.00g 0

2、DeepSea 方式

(1)管理节点上修改 policy.cfg 文件

vim /srv/pillar/ceph/proposals/policy.cfg ## Cluster Assignment #cluster-ceph/cluster/*.sls <=== 注释掉 cluster-ceph/cluster/node00[1-3]*.sls <=== 匹配 target cluster-ceph/cluster/admin*.sls <=== 匹配 target ## Roles # ADMIN role-master/cluster/admin*.sls role-admin/cluster/admin*.sls # Monitoring role-prometheus/cluster/admin*.sls role-grafana/cluster/admin*.sls # MON role-mon/cluster/node00[1-3]*.sls # MGR (mgrs are usually colocated with mons) role-mgr/cluster/node00[1-3]*.sls # COMMON config/stack/default/global.yml config/stack/default/ceph/cluster.yml # Storage # 定义为 storage 角色 #role-storage/cluster/node00*.sls <=== 注释掉 role-storage/cluster/node00[1-3]*.sls <=== 匹配 target

(2)修改 drive_group.yml 文件

# vim /srv/salt/ceph/configuration/files/drive_groups.yml # This is the default configuration and # will create an OSD on all available drives drive_group_hdd_nvme: target: ‘node00[1-3]*‘ <== 匹配 target data_devices: size: ‘9GB:12GB‘ db_devices: rotational: 0 limit: 1 block_db_size: ‘2G‘

(3)执行salt命令,stage2和stage5

# salt-run state.orch ceph.stage.2 # salt-run state.orch ceph.stage.5

SUSE Ceph 增加节点、减少节点、 删除OSD磁盘等操作 - Storage6

标签:html zypper 报错 work last err files ons 清理

原文地址:https://www.cnblogs.com/alfiesuse/p/11562336.html