
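Unpack the JDK and Hadoop archives and move them into place:

# tar -zxvf xxx.tar.gz

# mv hadoop-3.1.3 /usr/local/hadoop3

# mv jdk-1.8.0_231 /usr/local/jdk1.8

Set the environment variables in .bashrc:

# cd ~

# vim .bashrc

export JAVA_HOME=/usr/local/jdk1.8/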

export JRE_HOME=$JAVA_HOME/jre

export CLASSPATH=.:$JAVA_HOME/lib:$JRE_HOME/lib

export PATH=$JAVA_HOME/bin:$PATH

export HADOOP_HOME=/usr/local/hadoop3

export PATH=$PATH:$HADOOP_HOME/bin

export PATH=$PATH:$HADOOP_HOME/sbin

export HADOOP_MAPRED_HOME=$HADOOP_HOME

export HADOOP_COMMON_HOME=$HADOOP_HOME

export HADOOP_HDFS_HOME=$HADOOP_HOME

export YARN_HOME=$HADOOP_HOME

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export HADOOP_OPTS="-Djava.library.path=$HADOOP_HOME/lib"

# source .bashrc

Edit the configuration files in the Hadoop configuration directory:

# cd /usr/local/hadoop3/etc/hadoop

core-site.xml:

<property>

<name>hadoop.tmp.dir</name>

<value>/usr/local/hadoop3/tmp</value>

<description>Directory for temporary files</description>

</property>

<property>

<name>fs.defaultFS</name>

<!-- 1.x name>fs.default.name</name -->

<value>hdfs://master:9000</value>

<description>HDFS NameNode access address</description>

</property>

<property>

<name>io.file.buffer.size</name>

<value>102400</value>

<description>I/O buffer size in bytes for reading and writing files (not the HDFS block size)</description>

</property>

hdfs-site.xml:

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>slave1:50080</value>

</property>

<property>

<name>dfs.replication</name>

<value>1</value>

<description>Number of replicas per block</description>

</property>

<property>

<name>dfs.name.dir</name>

<!-- deprecated name; Hadoop 3 also accepts dfs.namenode.name.dir -->

<value>/usr/local/hadoop3/hdfs/name</value>

<description>NameNode storage directory</description>

</property>

<property>

<name>dfs.data.dir</name>

<!-- deprecated name; Hadoop 3 also accepts dfs.datanode.data.dir -->

<value>/usr/local/hadoop3/hdfs/data</value>

<description>DataNode storage directory</description>

</property>

mapred-site.xml:

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>master:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>master:19888</value>

</property>

<property>

<name>yarn.app.mapreduce.am.env</name>

<value>HADOOP_MAPRED_HOME=/usr/local/hadoop3</value>

</property>

<property>

<name>mapreduce.map.env</name>

<value>HADOOP_MAPRED_HOME=/usr/local/hadoop3</value>

</property>

<property>

<name>mapreduce.reduce.env</name>

<value>HADOOP_MAPRED_HOME=/usr/local/hadoop3</value>

</property>

yarn-site.xml:

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>master</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>master:8032</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>master:8030</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>master:8031</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>master:8033</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>master:8088</value>

</property>

<property>

<name>yarn.nodemanager.vmem-check-enabled</name>

<value>false</value>

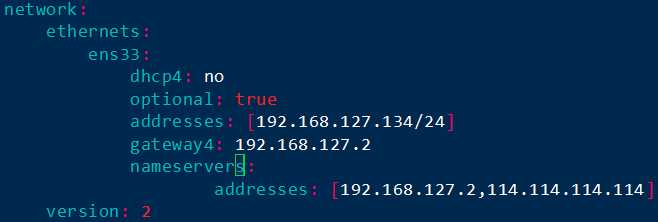

</property>master、slave1、slave2# hostnamectl set-hostname xxx/etc/cloud/cloud.cfg文件,则修改preserve_hostname为true192.168.127.134、192.168.127.135、192.168.127.136# vim /etc/netplan/50-cloud-init.yaml

# netplan apply

Add the hostname mappings to /etc/hosts:

# vim /etc/hosts

192.168.127.134 master

192.168.127.135 slave1

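192.168.127.136 slave2

Set up passwordless SSH. Generate a key pair on each machine:

# ssh-keygen -t rsa -P ""

On master, collect the public keys from the slaves and merge them into authorized_keys:

# cd ~/.ssh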

# scp -P 22 slave1:~/.ssh/id_rsa.pub id_rsa.pub1

# scp -P 22 slave2:~/.ssh/id_rsa.pub id_rsa.pub2

# cat id_rsa.pub >> authorized_keys

# cat id_rsa.pub1 >> authorized_keys

# cat id_rsa.pub2 >> authorized_keys

Copy the merged authorized_keys file back to the slaves:

# scp -P 22 authorized_keys slave1:~/.ssh/

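# scp -P 22 authorized_keys slave2:~/.ssh/

Because the daemons run as root here, add the following user variables to the start and stop scripts:

# cd /usr/local/hadoop3/sbin

start-dfs.sh, stop-dfs.sh:

HDFS_DATANODE_USER=root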

HADOOP_SECURE_DN_USER=hdfs

HDFS_NAMENODE_USER=root

HDFS_SECONDARYNAMENODE_USER=root

start-yarn.sh, stop-yarn.sh:

YARN_RESOURCEMANAGER_USER=root

HADOOP_SECURE_DN_USER=yarn

YARN_NODEMANAGER_USER=root

Start the cluster. If the NameNode has never been formatted, run hdfs namenode -format once beforehand.

# /usr/local/hadoop3/sbin/start-all.sh

Run jps to check the running daemons, then open master:8088 and master:9870 in a browser to view Hadoop's built-in web services.

Run the wordcount example from /usr/local/hadoop3:

# hdfs dfs -mkdir -p /data/input

# hdfs dfs -put README.txt /data/input

# hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.3.jar wordcount /data/input /data/output/result

# hdfs dfs -cat /data/output/result/part-r-00000
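
The paths and hostnames used throughout this setup can be sanity-checked with a small script before the cluster is started. The following is only a minimal sketch: the helper names check_dir, check_host, and preflight are hypothetical (they are not Hadoop tools), and the defaults assume the /usr/local/jdk1.8 and /usr/local/hadoop3 locations and the master, slave1, slave2 hostnames from this article.

```shell
#!/bin/sh
# Pre-flight checks for the setup in this article.
# All helper names here are illustrative, not part of Hadoop.

# check_dir NAME PATH: succeed if PATH is an existing directory.
check_dir() {
    if [ -d "$2" ]; then
        echo "ok: $1=$2"
    else
        echo "missing: $1=$2"
        return 1
    fi
}

# check_host NAME [HOSTS_FILE]: succeed if NAME is listed as a hostname
# in HOSTS_FILE (default /etc/hosts). Comment lines and trailing
# comments are ignored.
check_host() {
    hosts_file="${2:-/etc/hosts}"
    if awk -v h="$1" '
        /^[[:space:]]*#/ { next }
        {
            for (i = 2; i <= NF; i++) {
                if ($i ~ /^#/) break
                if ($i == h) found = 1
            }
        }
        END { exit !found }
    ' "$hosts_file"; then
        echo "ok: $1 is listed in $hosts_file"
    else
        echo "missing: $1 is not listed in $hosts_file"
        return 1
    fi
}

# preflight: run every check and return non-zero if any of them failed.
preflight() {
    status=0
    check_dir JAVA_HOME "${JAVA_HOME:-/usr/local/jdk1.8}" || status=1
    check_dir HADOOP_HOME "${HADOOP_HOME:-/usr/local/hadoop3}" || status=1
    for h in master slave1 slave2; do
        check_host "$h" || status=1
    done
    return "$status"
}
```

After sourcing the file, calling preflight prints one line per check and returns a non-zero status if any directory or host entry is missing. It only inspects local files, so it does not confirm that the slaves are reachable over SSH.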

Original article: https://www.cnblogs.com/chien-wong/p/11746282.html