Tags: bsp handle build parse domain com utils problem environment

Environment

Windows 7

Python 3.6.5

Scrapy 1.7.4

PyInstaller 3.5

Create the packaging script

Create start.py in the same directory as scrapy.cfg:

    # -*- coding: utf-8 -*-
    from scrapy.crawler import CrawlerProcess
    from scrapy.utils.project import get_project_settings

    # import robotparser

    # Required dependencies
    import scrapy.spiderloader
    import scrapy.statscollectors
    import scrapy.logformatter
    import scrapy.dupefilters
    import scrapy.squeues

    import scrapy.extensions.spiderstate
    import scrapy.extensions.corestats
    import scrapy.extensions.telnet
    import scrapy.extensions.logstats
    import scrapy.extensions.memusage
    import scrapy.extensions.memdebug
    import scrapy.extensions.feedexport
    import scrapy.extensions.closespider
    import scrapy.extensions.debug
    import scrapy.extensions.httpcache
    import scrapy.extensions.statsmailer
    import scrapy.extensions.throttle

    import scrapy.core.scheduler
    import scrapy.core.engine
    import scrapy.core.scraper
    import scrapy.core.spidermw
    import scrapy.core.downloader

    import scrapy.downloadermiddlewares.stats
    import scrapy.downloadermiddlewares.httpcache
    import scrapy.downloadermiddlewares.cookies
    import scrapy.downloadermiddlewares.useragent
    import scrapy.downloadermiddlewares.httpproxy
    import scrapy.downloadermiddlewares.ajaxcrawl
    import scrapy.downloadermiddlewares.chunked
    import scrapy.downloadermiddlewares.decompression
    import scrapy.downloadermiddlewares.defaultheaders
    import scrapy.downloadermiddlewares.downloadtimeout
    import scrapy.downloadermiddlewares.httpauth
    import scrapy.downloadermiddlewares.httpcompression
    import scrapy.downloadermiddlewares.redirect
    import scrapy.downloadermiddlewares.retry
    import scrapy.downloadermiddlewares.robotstxt

    import scrapy.spidermiddlewares.depth
    import scrapy.spidermiddlewares.httperror
    import scrapy.spidermiddlewares.offsite
    import scrapy.spidermiddlewares.referer
    import scrapy.spidermiddlewares.urllength

    import scrapy.pipelines

    import scrapy.core.downloader.handlers.http
    import scrapy.core.downloader.contextfactory

    process = CrawlerProcess(get_project_settings())

    # Argument 1: spider name; argument 2: domain
    process.crawl('biqubao_spider', domain='biqubao.com')
    process.start()  # the script will block here until the crawling is finished

After packaging completes, three items are generated: dist, build (can be deleted), and start.spec (can be deleted).
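The post does not show the packaging command itself. The following is a minimal sketch, assuming a default PyInstaller run on start.py from the directory that contains scrapy.cfg; the helper names `build_pyinstaller_command` and `run_packaging` are hypothetical and not part of the original post.

```python
import subprocess
import sys


def build_pyinstaller_command(script="start.py"):
    """Return the argument list that invokes PyInstaller on the given script."""
    # "python -m PyInstaller" uses the PyInstaller installed for this interpreter.
    return [sys.executable, "-m", "PyInstaller", script]


def run_packaging(script="start.py"):
    """Run PyInstaller in the current directory (assumed to contain scrapy.cfg)."""
    # check=True raises CalledProcessError if PyInstaller exits with an error.
    return subprocess.run(build_pyinstaller_command(script), check=True)


# Usage (assumption: run from the project root):
# run_packaging()
```

With default options this should produce the dist and build folders and the start.spec file mentioned above.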

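Problem 1:

About importing the robotparser library: the article this post is based on imports it, but when I tried it I found that the library was not installed.

Scrapy handles the robots protocol (robots.txt) on its own, so I tried leaving the import out and packaging succeeded.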
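One likely explanation, offered as an assumption rather than something the post confirms: in Python 3 the old top-level robotparser module lives in the standard library as urllib.robotparser, so a bare `import robotparser` fails on Python 3.6. A small sketch of an import that works under either name (the example.com URLs and rules are made up for illustration):

```python
try:
    import robotparser  # Python 2 module name
except ImportError:
    from urllib import robotparser  # Python 3 location in the standard library

# Parse a tiny, made-up robots.txt and query it.
parser = robotparser.RobotFileParser()
parser.parse(["User-agent: *", "Disallow: /private/"])

private_allowed = parser.can_fetch("*", "https://example.com/private/page")
public_allowed = parser.can_fetch("*", "https://example.com/public/page")
print(private_allowed, public_allowed)
```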

Problem 2:

Your Scrapy project may use other libraries, so in this packaging script you need to import every library your project uses.
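To find out which libraries the project actually imports, one option is to scan its source files. This is only a sketch under the assumption that all relevant imports are ordinary `import` statements in .py files; the function names `top_level_imports` and `project_imports` are hypothetical helpers, not part of Scrapy or PyInstaller.

```python
import ast
from pathlib import Path


def top_level_imports(source):
    """Return the set of top-level module names imported by Python source text."""
    modules = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            for alias in node.names:
                modules.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            # level == 0 skips relative imports such as "from . import items".
            modules.add(node.module.split(".")[0])
    return modules


def project_imports(project_dir):
    """Collect top-level imports from every .py file under project_dir."""
    modules = set()
    for path in Path(project_dir).rglob("*.py"):
        modules |= top_level_imports(path.read_text(encoding="utf-8"))
    return modules


# Usage (assumption: run from the project root):
# print(sorted(project_imports(".")))
```

Any third-party name in the result that start.py does not already import is a candidate for an extra `import` line in the packaging script.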

Problem 3:

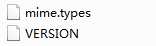

打包成功后运行不了程序,因为少两个问题,如下图

You need to add a scrapy folder inside dist that contains these two files; both can be found in the installed scrapy package.

Reference article: https://blog.csdn.net/la_vie_est_belle/article/details/79017358
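The copy step can also be scripted. The sketch below rests on two assumptions that the text does not confirm: that the two missing files are VERSION and mime.types (the pair commonly reported missing from packaged Scrapy builds; the post only shows them in the screenshot), and that the scrapy folder must sit next to the generated executable. The function name `copy_scrapy_data_files` is hypothetical.

```python
import shutil
from pathlib import Path


def copy_scrapy_data_files(scrapy_dir, dist_app_dir, names=("VERSION", "mime.types")):
    """Copy data files from the installed scrapy package into <dist_app_dir>/scrapy.

    The default file names are an assumption; adjust them to match the
    files named in your own error message.
    """
    target = Path(dist_app_dir) / "scrapy"
    target.mkdir(parents=True, exist_ok=True)
    copied = []
    for name in names:
        source = Path(scrapy_dir) / name
        if source.is_file():
            shutil.copy2(source, target / name)
            copied.append(name)
    return copied


# Usage (assumption: the executable was written to dist/start):
# import scrapy
# copy_scrapy_data_files(Path(scrapy.__file__).parent, "dist/start")
```

The function returns the names it actually copied, so an empty list signals that the source directory or file names need checking.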


Original article: https://www.cnblogs.com/luocodes/p/11827850.html