
mkdir module

vi /etc/profile

JAVA_HOME=/usr/local/jdk1.8.0_151

HADOOP_HOME=/opt/module/hadoop-2.10.0

CLASSPATH=.:$JAVA_HOME/lib/tools.jar

PATH=$JAVA_HOME/bin:$PATH:$HADOOP_HOME/bin

export JAVA_HOME HADOOP_HOME CLASSPATH PATH

Once the variables are set, reload the profile:

source /etc/profile
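To confirm that the new variables took effect, a quick check along these lines may help. This is only a sketch: `check_cmd` is a hypothetical helper (not part of Hadoop or the JDK) that reports whether a command resolves on the current PATH.

```shell
# check_cmd is a hypothetical helper, not part of Hadoop or the JDK.
# It reports whether a command can be found on the current PATH.
check_cmd() {
  if command -v "$1" >/dev/null 2>&1; then
    echo "$1: found"
  else
    echo "$1: NOT found on PATH"
  fi
}

check_cmd java
check_cmd hadoop
```

If both are found, `java -version` and `hadoop version` should print the installed versions.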

That's the installation done. Simple, right?

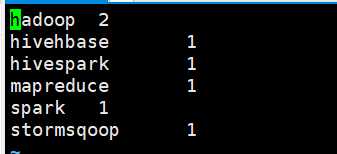

echo 'hadoop mapreduce hive hbase spark storm sqoop hadoop hive spark' > data/wc.input

bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.10.0.jar wordcount ../data/wc.input output
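One way to sanity-check the job's result is to count the words locally and compare. The sketch below is not part of the Hadoop examples: `wc_local` is a hypothetical helper, and the sample file contents are made up for illustration.

```shell
# wc_local is a hypothetical helper: it counts whitespace-separated words
# in a local file and prints "word<TAB>count", sorted by word.
wc_local() {
  tr -s '[:space:]' '\n' < "$1" |
    awk 'NF { count[$1]++ } END { for (w in count) print w "\t" count[w] }' |
    LC_ALL=C sort
}

# Demo on a throwaway sample file (contents are made up for illustration).
sample=$(mktemp)
echo 'hadoop hive spark hadoop' > "$sample"
wc_local "$sample"
rm -f "$sample"
```

Running `wc_local ../data/wc.input` from the Hadoop directory should give the same counts as the job's result file, which for this example is typically `output/part-r-00000`.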

Go to the Hadoop configuration directory:

cd /opt/module/hadoop-2.10.0/etc/hadoop

In hadoop-env.sh, set JAVA_HOME:

export JAVA_HOME=/usr/local/jdk1.8.0_151

Edit core-site.xml:

<configuration>

<!-- Address of the HDFS NameNode -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

<!-- Directory where Hadoop stores the files it generates at runtime -->

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/module/hadoop-2.10.0/data/tmp</value>

</property>

</configuration>

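In hdfs-site.xml, set the replication factor to 1 (this property goes inside the <configuration> element):

<property>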

<name>dfs.replication</name>

<value>1</value>

</property>
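After editing the site files it can be useful to read a value back and confirm it is what you intended. The following is a rough, line-based sketch: `get_prop` is a hypothetical helper, not a Hadoop tool, and it assumes each `<value>...</value>` sits on a single line, as in the snippets above.

```shell
# get_prop FILE NAME: hypothetical helper that prints the <value> following
# <name>NAME</name> in a Hadoop-style *-site.xml file. It is a line-based
# sketch and assumes each <value>...</value> sits on a single line.
get_prop() {
  awk -v name="$2" '
    index($0, "<name>" name "</name>") { found = 1 }
    found && match($0, /<value>[^<]*<\/value>/) {
      print substr($0, RSTART + 7, RLENGTH - 15)
      exit
    }
  ' "$1"
}

# Demo on a temporary file that mirrors the fs.defaultFS setting.
conf=$(mktemp)
cat > "$conf" <<'EOF'
<configuration>
<property>
<name>fs.defaultFS</name>
<value>hdfs://localhost:9000</value>
</property>
</configuration>
EOF
get_prop "$conf" fs.defaultFS
rm -f "$conf"
```

On a working installation, `bin/hdfs getconf -confKey fs.defaultFS` reports the value Hadoop itself resolved, which is the more reliable check.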

bin/hdfs namenode -format

sbin/hadoop-daemon.sh start namenode

sbin/hadoop-daemon.sh start datanode

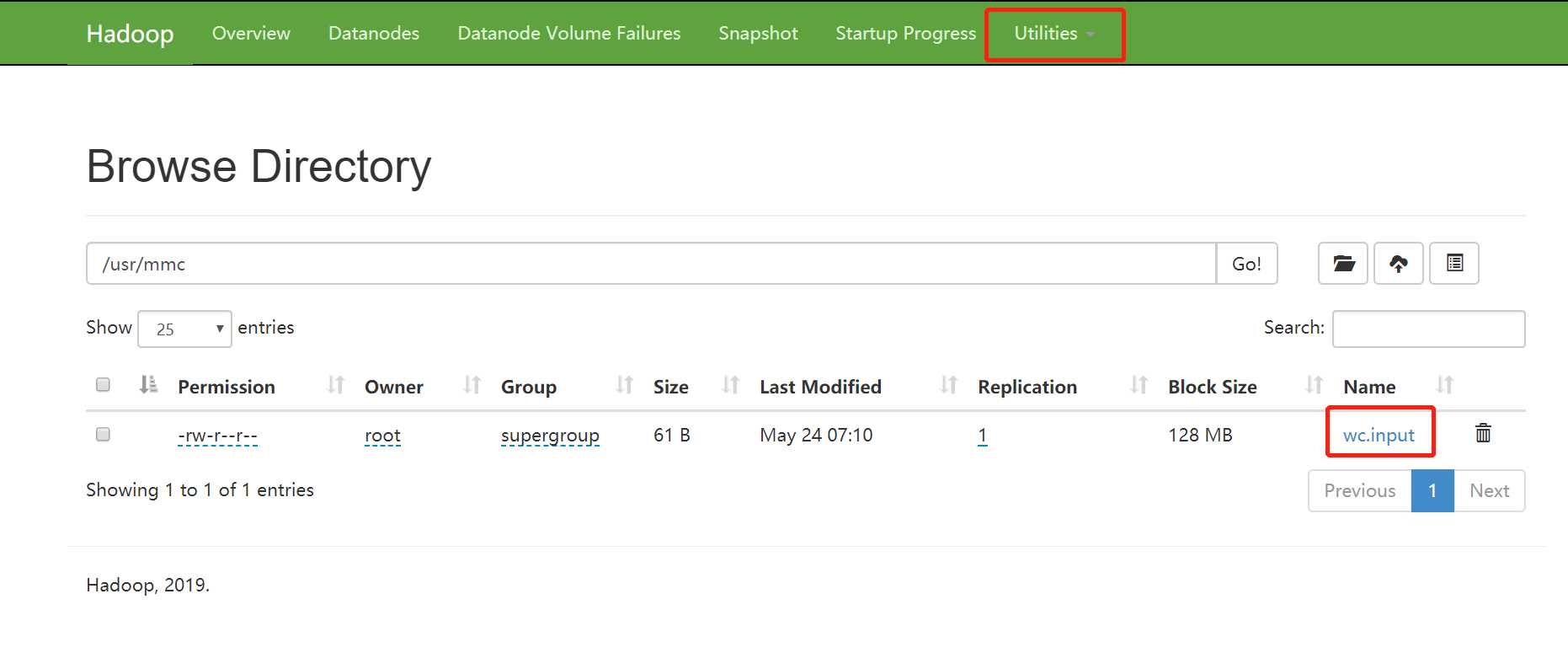

bin/hdfs dfs -mkdir -p /usr/mmc

bin/hdfs dfs -put /opt/module/data/wc.input /usr/mmc

bin/hdfs dfs -rm -r /usr/mmc

Check the result in the web UI:

Set JAVA_HOME in yarn-env.sh as well:

export JAVA_HOME=/usr/local/jdk1.8.0_151

Edit yarn-site.xml:

<configuration>

<!-- Site specific YARN configuration properties -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>hadoop101</value>

</property>

</configuration>

Replace hadoop101 with the hostname of your own virtual machine.

Set JAVA_HOME in mapred-env.sh too:

export JAVA_HOME=/usr/local/jdk1.8.0_151

mv mapred-site.xml.template mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

sbin/yarn-daemon.sh start resourcemanager

sbin/yarn-daemon.sh start nodemanager

hdfs dfs -mkdir -p /usr/mmc/input

hdfs dfs -put ../data/wc.input /usr/mmc/input

Note: before running the job, use jps to check that NameNode, NodeManager, DataNode, and ResourceManager are all running.

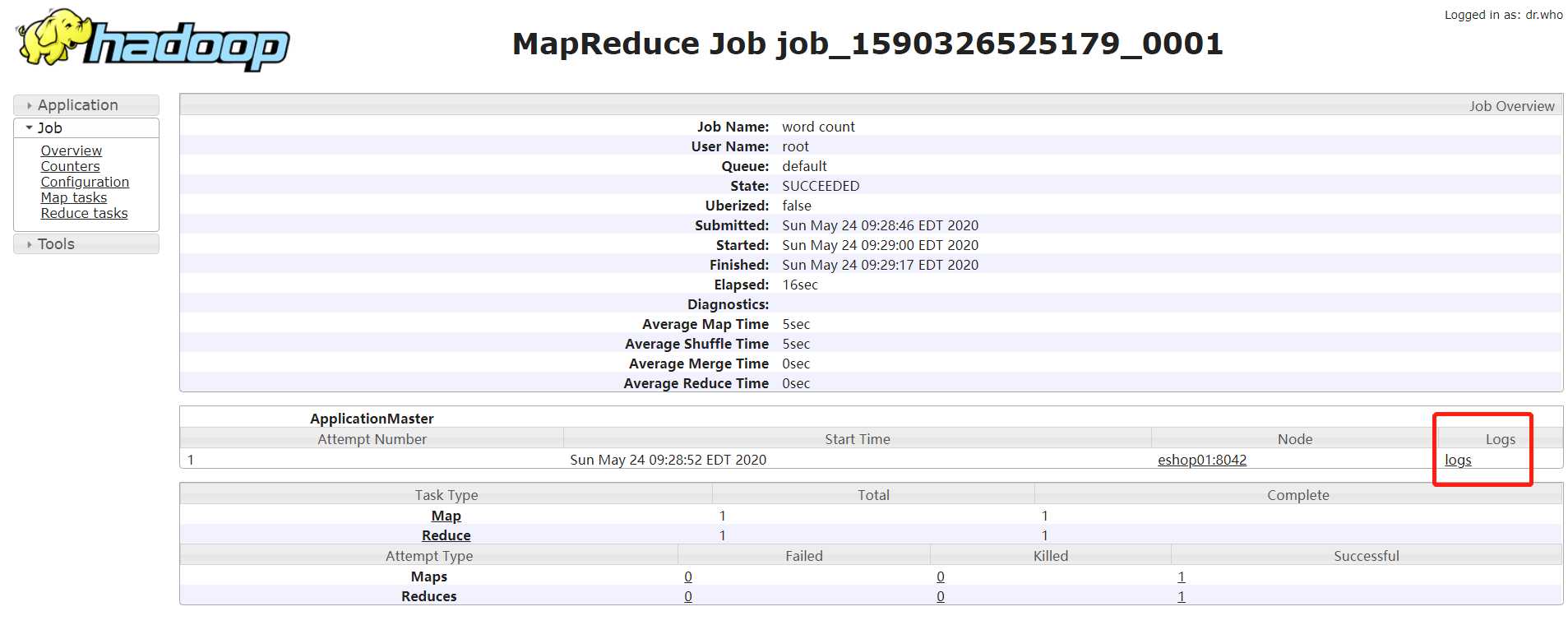
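The jps check can also be scripted so that a missing daemon is flagged explicitly. This is a sketch under assumptions: `check_daemons` is a hypothetical helper, and the PIDs in the demo are made up.

```shell
# check_daemons is a hypothetical helper. It reads `jps` output on stdin
# (lines of the form "<pid> <name>") and reports which daemons are present.
check_daemons() {
  jps_out=$(cat)
  missing=0
  for daemon in NameNode DataNode ResourceManager NodeManager; do
    # Compare the whole second field so SecondaryNameNode does not count as NameNode.
    if printf '%s\n' "$jps_out" |
        awk -v d="$daemon" '$2 == d { ok = 1 } END { exit !ok }'; then
      echo "$daemon: running"
    else
      echo "$daemon: MISSING"
      missing=1
    fi
  done
  return "$missing"
}

# Demo with made-up jps output; on a real machine use: jps | check_daemons
printf '2101 NameNode\n2190 DataNode\n2377 ResourceManager\n2465 NodeManager\n2544 Jps\n' |
  check_daemons
```

The function returns a non-zero status when any of the four daemons is absent, so it can gate the job submission, for example `jps | check_daemons && hadoop jar ...`.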

hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.10.0.jar wordcount /usr/mmc/input /usr/mmc/output

http://192.168.1.21:8088/cluster

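You can now see the job's progress, but the History link still does nothing when clicked. The history server has to be configured in mapred-site.xml and then started: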

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>eshop01:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>eshop01:19888</value>

</property>

</configuration>

sbin/mr-jobhistory-daemon.sh start historyserver

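Note: enabling log aggregation (the properties below go in yarn-site.xml) requires restarting the NodeManager, the ResourceManager, and the history server.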

<!-- Enable log aggregation -->

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<!-- Log retention time in seconds (604800 s = 7 days) -->

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>604800</value>

</property>
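The retention value is given in seconds, so 604800 corresponds to seven days. If you want a different period, the arithmetic can be done in the shell; `retain_days` here is just an illustrative variable name.

```shell
# Retention period expressed in days, converted to seconds for
# yarn.log-aggregation.retain-seconds.
retain_days=7
retain_seconds=$((retain_days * 24 * 60 * 60))
echo "$retain_seconds"
```

Once aggregation is active, the logs of a finished application can be fetched with `yarn logs -applicationId <application id>`, using the id shown in the ResourceManager web UI.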

Start the NodeManager, the ResourceManager, and the history server again.
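The restart can be sketched as a stop followed by a start, using the same daemon scripts as earlier with their `stop` counterparts. The sketch assumes the current directory is the Hadoop install directory; `run` and `restart_yarn_daemons` are hypothetical helpers, and by default the script only prints the commands instead of executing them.

```shell
# Assumes the current directory is the Hadoop install directory.
# DRY_RUN=1 (the default here) only prints the commands; set it to 0 to run them.
DRY_RUN=${DRY_RUN:-1}

# run is a hypothetical wrapper: print the command in dry-run mode, else execute it.
run() {
  if [ "$DRY_RUN" = 1 ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

restart_yarn_daemons() {
  run sbin/yarn-daemon.sh stop nodemanager
  run sbin/yarn-daemon.sh stop resourcemanager
  run sbin/mr-jobhistory-daemon.sh stop historyserver
  run sbin/yarn-daemon.sh start resourcemanager
  run sbin/yarn-daemon.sh start nodemanager
  run sbin/mr-jobhistory-daemon.sh start historyserver
}

restart_yarn_daemons
```

Review the printed commands first, then rerun with `DRY_RUN=0` and confirm with jps that the daemons are back.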

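Run the example program again. The output directory must not already exist, so remove /usr/mmc/output first if it is left over from the earlier run: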

hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.10.0.jar wordcount /usr/mmc/input /usr/mmc/output


Original article: https://www.cnblogs.com/javammc/p/12953388.html