
Environment preparation: I am using five CentOS 7.7 virtual machines; the details are in the table below:

| OS version | IP address | Node role | CPU | Memory | Hostname |
|---|---|---|---|---|---|
| CentOS-7.7 | 192.168.243.143 | master | >=2 | >=2G | m1 |
| CentOS-7.7 | 192.168.243.144 | master | >=2 | >=2G | m2 |
| CentOS-7.7 | 192.168.243.145 | master | >=2 | >=2G | m3 |
| CentOS-7.7 | 192.168.243.146 | worker | >=2 | >=2G | n1 |
| CentOS-7.7 | 192.168.243.147 | worker | >=2 | >=2G | n2 |

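Docker must be installed on all five machines beforehand. The installation is straightforward and is not covered here; see the official Docker documentation.

Software versions: Kubernetes 1.19.0, etcd 3.4.13 and cfssl 1.2.0, as downloaded in the steps of this guide.

The following lists the IP, port and other network settings used while building the k8s cluster; they are not explained again later: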

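# IPs of the 3 master nodes

192.168.243.143

192.168.243.144

192.168.243.145

# IPs of the 2 worker nodes

192.168.243.146

192.168.243.147

# Hostnames of the 3 master nodes

m1, m2, m3

# Highly available virtual IP for api-server (used by keepalived, customizable)

192.168.243.101

# Network interface used by keepalived; often eth0, run "ip a" to check

ens32

# kubernetes service IP range (customizable)

10.255.0.0/16

# IP of the kubernetes api-server service, normally the first address of the service CIDR (customizable)

10.255.0.1

# IP of the DNS service, normally the second address of the service CIDR (customizable)

10.255.0.2

# Pod network range (customizable)

172.23.0.0/16

# NodePort range (customizable)

8400-8900

1. Every node must have a unique hostname, and all nodes must be able to reach each other by hostname. Set the hostname: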

# Show the hostname

$ hostname

# Change the hostname

$ hostnamectl set-hostname <your_hostname>

Configure /etc/hosts so that all nodes can reach each other by hostname:

$ vim /etc/hosts

192.168.243.143 m1

192.168.243.144 m2

192.168.243.145 m3

192.168.243.146 n1

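192.168.243.147 n2

2. Install the dependency packages:

# Update the installed packages

$ yum update -y

# Install the dependencies

$ yum install -y conntrack ipvsadm ipset jq sysstat curl wget iptables libseccomp

3. Disable the firewall and swap, and reset iptables: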

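# Disable the firewall

$ systemctl stop firewalld && systemctl disable firewalld

# Reset iptables

$ iptables -F && iptables -X && iptables -F -t nat && iptables -X -t nat && iptables -P FORWARD ACCEPT

# Disable swap

$ swapoff -a

$ sed -i '/swap/s/^\(.*\)$/#\1/g' /etc/fstab

# Disable SELinux for the running system (to persist across reboots, also set SELINUX=disabled in /etc/selinux/config)

$ setenforce 0

# Stop dnsmasq (otherwise Docker containers may be unable to resolve domain names)

$ service dnsmasq stop && systemctl disable dnsmasq

# Restart the Docker service

$ systemctl restart docker

4. Set the kernel parameters:

# Create the configuration file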

$ cat > /etc/sysctl.d/kubernetes.conf <<EOF

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

net.ipv4.ip_forward=1

vm.swappiness=0

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

EOF

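# Apply the settings (the net.bridge.* keys need the br_netfilter kernel module; run "modprobe br_netfilter" first if sysctl reports them as missing)

$ sysctl -p /etc/sysctl.d/kubernetes.conf

Because a binary installation needs the k8s component executables on every node, the prepared binaries have to be copied to each node. To make copying easier, pick one node as a relay (any node will do) and set up passwordless SSH login from it to all the other nodes, so that you do not have to type a password for every copy.

I use m1 as the relay node. First create a key pair on m1: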

[root@m1 ~]# ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:9CVdxUGLSaZHMwzbOs+aF/ibxNpsUaaY4LVJtC3DJiU root@m1

The key's randomart image is:

+---[RSA 2048]----+

| .o*o=o|

| E +Bo= o|

| . *o== . |

| . + @o. o |

| S BoO + |

| . *=+ |

| .=o |

| B+. |

| +o=. |

+----[SHA256]-----+

View the public key:

[root@m1 ~]# cat ~/.ssh/id_rsa.pub

ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQDF99/mk7syG+OjK5gFFKLZDpMWcF3BEF1Gaa8d8xNIMKt2qGgxyYOC7EiGcxanKw10MQCoNbiAG1UTd0/wgp/UcPizvJ5AKdTFImzXwRdXVbMYkjgY2vMYzpe8JZ5JHODggQuGEtSE9Q/RoCf29W2fIoOKTKaC2DNyiKPZZ+zLjzQr8sJC3BRb1Tk4p8cEnTnMgoFwMTZD8AYMNHwhBeo5NXZSE8zyJiWCqQQkD8n31wQxVgSL9m3rD/1wnsBERuq3cf7LQMiBTxmt1EyqzqM4S1I2WEfJkT0nJZeY+zbHqSJq2LbXmCmWUg5LmyxaE9Ksx4LDIl7gtVXe99+E1NLd root@m1

Then copy the contents of id_rsa.pub into the authorized_keys file on the other machines by running the following command on each of them (replace the public key with the one you generated):

$ echo "ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQDF99/mk7syG+OjK5gFFKLZDpMWcF3BEF1Gaa8d8xNIMKt2qGgxyYOC7EiGcxanKw10MQCoNbiAG1UTd0/wgp/UcPizvJ5AKdTFImzXwRdXVbMYkjgY2vMYzpe8JZ5JHODggQuGEtSE9Q/RoCf29W2fIoOKTKaC2DNyiKPZZ+zLjzQr8sJC3BRb1Tk4p8cEnTnMgoFwMTZD8AYMNHwhBeo5NXZSE8zyJiWCqQQkD8n31wQxVgSL9m3rD/1wnsBERuq3cf7LQMiBTxmt1EyqzqM4S1I2WEfJkT0nJZeY+zbHqSJq2LbXmCmWUg5LmyxaE9Ksx4LDIl7gtVXe99+E1NLd root@m1" >> ~/.ssh/authorized_keys

Test passwordless login. As shown here, logging in to m2 does not require a password:

[root@m1 ~]# ssh m2

Last login: Fri Sep 4 15:55:59 2020 from m1

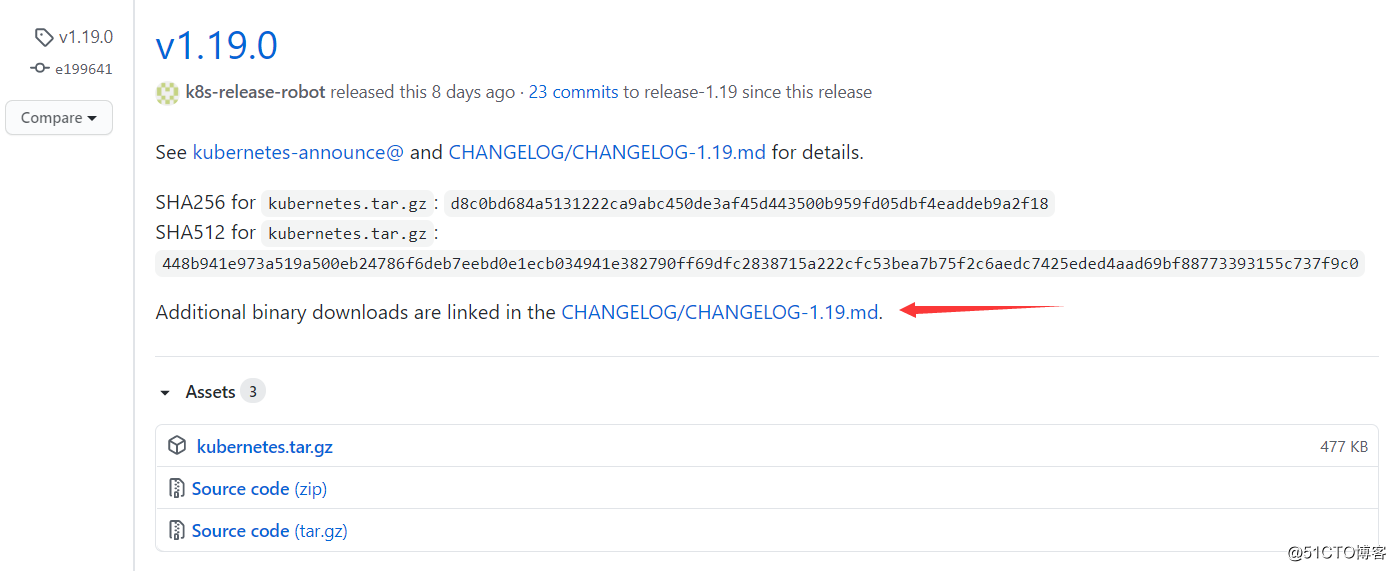
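
Re-running the `echo ... >> ~/.ssh/authorized_keys` command from this section appends the same key again each time. The following is a minimal sketch of an idempotent alternative. `add_key` is a hypothetical helper name, not part of OpenSSH, and the demo writes to a scratch file created with `mktemp` rather than to the real authorized_keys file.

```shell
# add_key <file> <public-key-line>
# Hypothetical helper: append the key only if that exact line is not present yet.
add_key() {
    file=$1
    key=$2
    mkdir -p "$(dirname "$file")"
    touch "$file"
    # -q: quiet, -x: match the whole line, -F: treat the key as a fixed string
    if ! grep -qxF -- "$key" "$file"; then
        printf '%s\n' "$key" >> "$file"
    fi
    # sshd expects authorized_keys to be writable by the owner only
    chmod 600 "$file"
}

# Demo against a scratch file; the key text is a placeholder, not a real key
demo_file=$(mktemp)
add_key "$demo_file" "ssh-rsa AAAAexample root@m1"
add_key "$demo_file" "ssh-rsa AAAAexample root@m1"   # second call changes nothing
grep -c '' "$demo_file"                              # number of lines in the file
rm -f "$demo_file"
```

On a real node the first argument would be `~/.ssh/authorized_keys` and the second the single line printed by `cat ~/.ssh/id_rsa.pub` on m1.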

First download the k8s binaries from the official k8s download page.

I downloaded version 1.19.0 here. Note that the download links are inside CHANGELOG/CHANGELOG-1.19.md:

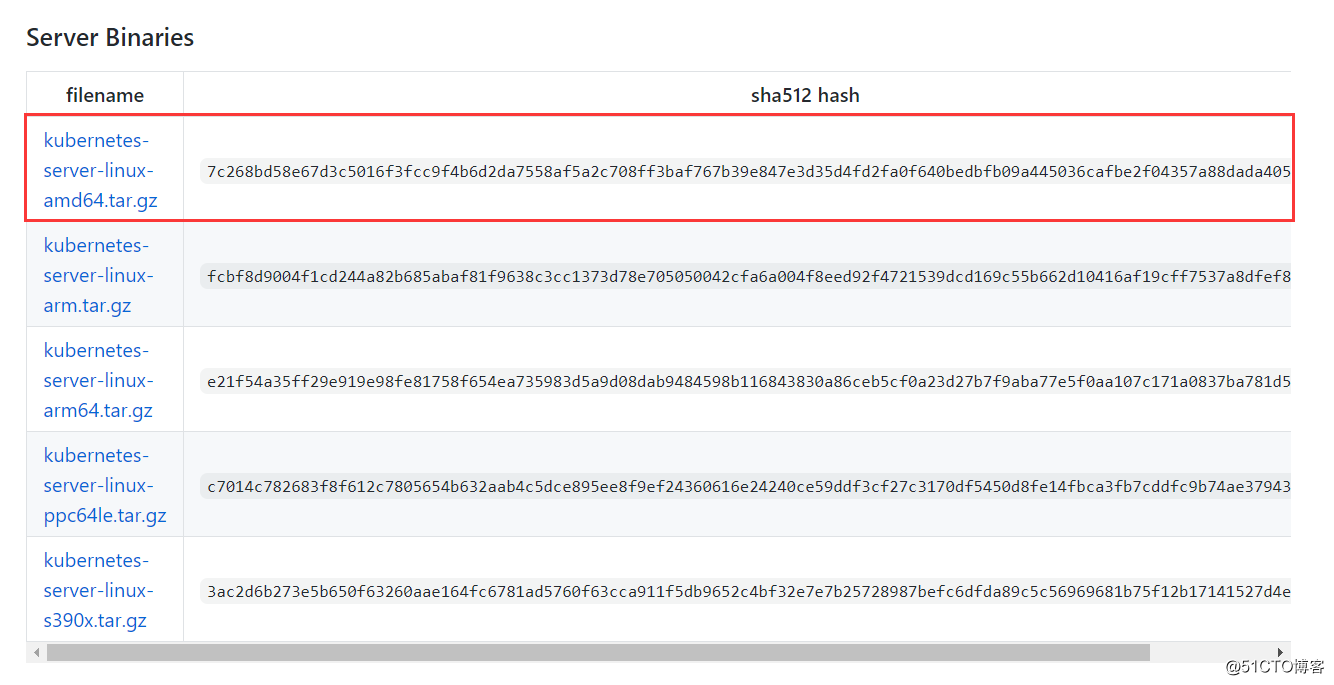

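In the "Server Binaries" section, simply pick the package for your platform architecture; the Server archive already contains the Node and Client binaries.

Copy the download link, then download and extract the archive on the system: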

[root@m1 ~]# cd /usr/local/src

[root@m1 /usr/local/src]# wget https://dl.k8s.io/v1.19.0/kubernetes-server-linux-amd64.tar.gz # download

[root@m1 /usr/local/src]# tar -zxvf kubernetes-server-linux-amd64.tar.gz # extract

The k8s binaries are all located in the kubernetes/server/bin/ directory:

[root@m1 /usr/local/src]# ls kubernetes/server/bin/

apiextensions-apiserver kube-apiserver kube-controller-manager kubectl kube-proxy.docker_tag kube-scheduler.docker_tag

kubeadm kube-apiserver.docker_tag kube-controller-manager.docker_tag kubelet kube-proxy.tar kube-scheduler.tar

kube-aggregator kube-apiserver.tar kube-controller-manager.tar kube-proxy kube-scheduler mounter

To make copying easier later, tidy up the files so that the binaries needed by each kind of node sit in one directory. The steps are:

[root@m1 /usr/local/src]# mkdir -p k8s-master k8s-worker

[root@m1 /usr/local/src]# cd kubernetes/server/bin/

[root@m1 /usr/local/src/kubernetes/server/bin]# for i in kubeadm kube-apiserver kube-controller-manager kubectl kube-scheduler;do cp $i /usr/local/src/k8s-master/; done

[root@m1 /usr/local/src/kubernetes/server/bin]# for i in kubelet kube-proxy;do cp $i /usr/local/src/k8s-worker/; done

After this, the files are in their respective directories: k8s-master holds the binaries needed by master nodes, and k8s-worker holds those needed by worker nodes:

[root@m1 /usr/local/src/kubernetes/server/bin]# cd /usr/local/src

[root@m1 /usr/local/src]# ls k8s-master/

kubeadm kube-apiserver kube-controller-manager kubectl kube-scheduler

[root@m1 /usr/local/src]# ls k8s-worker/

kubelet kube-proxy

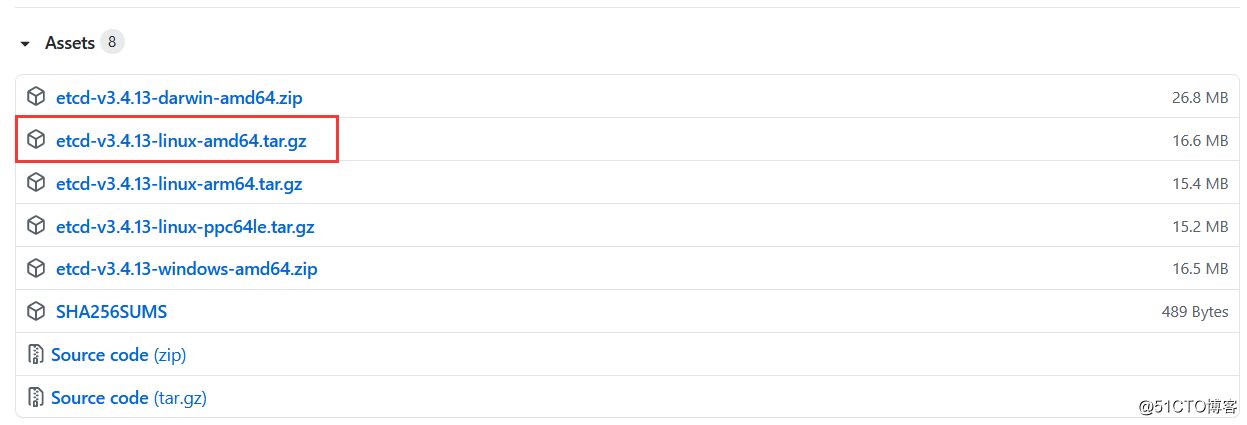

k8s relies on etcd for distributed storage, so next download etcd from its official download page.

I downloaded version 3.4.13 here.

As before, copy the download link, then download it with wget and extract it:

[root@m1 /usr/local/src]# wget https://github.com/etcd-io/etcd/releases/download/v3.4.13/etcd-v3.4.13-linux-amd64.tar.gz

[root@m1 /usr/local/src]# mkdir etcd && tar -zxvf etcd-v3.4.13-linux-amd64.tar.gz -C etcd --strip-components 1

[root@m1 /usr/local/src]# ls etcd

Documentation etcd etcdctl README-etcdctl.md README.md READMEv2-etcdctl.md

Copy the etcd binaries into the k8s-master directory:

[root@m1 /usr/local/src]# cd etcd

[root@m1 /usr/local/src/etcd]# for i in etcd etcdctl;do cp $i /usr/local/src/k8s-master/; done

[root@m1 /usr/local/src/etcd]# ls ../k8s-master/

etcd etcdctl kubeadm kube-apiserver kube-controller-manager kubectl kube-scheduler

Create the /opt/kubernetes/bin directory on all nodes:

$ mkdir -p /opt/kubernetes/bin

Distribute the binaries to the corresponding nodes:

[root@m1 /usr/local/src]# for i in m1 m2 m3; do scp k8s-master/* $i:/opt/kubernetes/bin/; done

[root@m1 /usr/local/src]# for i in n1 n2; do scp k8s-worker/* $i:/opt/kubernetes/bin/; done

Set the PATH environment variable on every node (the backslash keeps $PATH from being expanded on m1 before the command is sent):

[root@m1 /usr/local/src]# for i in m1 m2 m3 n1 n2; do ssh $i "echo 'PATH=/opt/kubernetes/bin:\$PATH' >> ~/.bashrc"; done

cfssl is a handy CA tool; we use it to generate the certificates and key files. Installation is simple, and I install it on m1. First download the cfssl binaries:

[root@m1 ~]# mkdir -p ~/bin

[root@m1 ~]# wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -O ~/bin/cfssl

[root@m1 ~]# wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -O ~/bin/cfssljson

Make both files executable:

[root@m1 ~]# chmod +x ~/bin/cfssl ~/bin/cfssljson

Set the PATH environment variable:

[root@m1 ~]# vim ~/.bashrc

PATH=~/bin:$PATH

[root@m1 ~]# source ~/.bashrc

Verify that it runs:

[root@m1 ~]# cfssl version

Version: 1.2.0

Revision: dev

Runtime: go1.6

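The root certificate is shared by all nodes in the cluster, so only one CA certificate needs to be created; every certificate created afterwards is signed by it. First create a ca-csr.json file with the following content: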

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "seven"

}

]

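}

Run the following command to generate the certificate and private key:

[root@m1 ~]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca

This produces the following files (what we ultimately need are ca-key.pem and ca.pem, a private key and a certificate):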

[root@m1 ~]# ls *.pem

ca-key.pem ca.pem

Distribute these two files to every master node:

[root@m1 ~]# for i in m1 m2 m3; do ssh $i "mkdir -p /etc/kubernetes/pki/"; done

[root@m1 ~]# for i in m1 m2 m3; do scp *.pem $i:/etc/kubernetes/pki/; done

Next, generate the certificate and private key used by the etcd nodes. Create a ca-config.json file with the following content:

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

Then create an etcd-csr.json file with the following content:

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"192.168.243.143",

"192.168.243.144",

"192.168.243.145"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "seven"

}

]

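}

The IPs in hosts are those of the master nodes. With the two files above in place, generate the etcd certificate and private key with the following command: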

[root@m1 ~]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

[root@m1 ~]# ls etcd*.pem # two files are generated on success

etcd-key.pem etcd.pem
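
The three master IPs in etcd-csr.json recur in flags used further on in this guide: `--initial-cluster` in etcd.service and `--etcd-servers` in kube-apiserver.service. As a sketch, both values can be derived from a single list so they cannot drift apart. The variable `MASTERS` and the two function names are hypothetical; the ports 2380 and 2379 are the ones this guide uses.

```shell
# One "hostname=ip" entry per master node, taken from the table at the start of the guide
MASTERS="m1=192.168.243.143 m2=192.168.243.144 m3=192.168.243.145"

# Build the value for etcd's --initial-cluster flag (peer port 2380)
initial_cluster() {
    out=""
    for pair in $MASTERS; do
        name=${pair%%=*}   # text before the first "="
        ip=${pair#*=}      # text after the first "="
        out="${out:+$out,}${name}=https://${ip}:2380"
    done
    printf '%s\n' "$out"
}

# Build the value for kube-apiserver's --etcd-servers flag (client port 2379)
etcd_servers() {
    out=""
    for pair in $MASTERS; do
        ip=${pair#*=}
        out="${out:+$out,}https://${ip}:2379"
    done
    printf '%s\n' "$out"
}

initial_cluster
etcd_servers
```

The two printed lines should match the `--initial-cluster` and `--etcd-servers` values written out by hand in the unit files.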

Then distribute these two files to every etcd node:

[root@m1 ~]# for i in m1 m2 m3; do scp etcd*.pem $i:/etc/kubernetes/pki/; done

Create an etcd.service file so that etcd can later be started, stopped and restarted with systemctl. Its content is:

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

Documentation=https://github.com/coreos

[Service]

Type=notify

WorkingDirectory=/var/lib/etcd/

ExecStart=/opt/kubernetes/bin/etcd --data-dir=/var/lib/etcd --name=m1 --cert-file=/etc/kubernetes/pki/etcd.pem --key-file=/etc/kubernetes/pki/etcd-key.pem --trusted-ca-file=/etc/kubernetes/pki/ca.pem --peer-cert-file=/etc/kubernetes/pki/etcd.pem --peer-key-file=/etc/kubernetes/pki/etcd-key.pem --peer-trusted-ca-file=/etc/kubernetes/pki/ca.pem --peer-client-cert-auth --client-cert-auth --listen-peer-urls=https://192.168.243.143:2380 --initial-advertise-peer-urls=https://192.168.243.143:2380 --listen-client-urls=https://192.168.243.143:2379,http://127.0.0.1:2379 --advertise-client-urls=https://192.168.243.143:2379 --initial-cluster-token=etcd-cluster-0 --initial-cluster=m1=https://192.168.243.143:2380,m2=https://192.168.243.144:2380,m3=https://192.168.243.145:2380 --initial-cluster-state=new

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

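WantedBy=multi-user.target

Distribute this unit file to every master node:

[root@m1 ~]# for i in m1 m2 m3; do scp etcd.service $i:/etc/systemd/system/; done

After distribution, edit etcd.service on the master nodes other than m1. The items to change are:

# Change to the hostname of the node

--name=m1

# In the following items, change the IP to that of the node; leave the loopback address 127.0.0.1 as it is

--listen-peer-urls=https://192.168.243.143:2380

--initial-advertise-peer-urls=https://192.168.243.143:2380

--listen-client-urls=https://192.168.243.143:2379,http://127.0.0.1:2379

--advertise-client-urls=https://192.168.243.143:2379

Next, create the etcd working directory on every master node:

[root@m1 ~]# for i in m1 m2 m3; do ssh $i "mkdir -p /var/lib/etcd"; done

Start the etcd service on each etcd node with the following command:

$ systemctl daemon-reload && systemctl enable etcd && systemctl restart etcd

Starting etcd may appear to hang for a while on the first node, until enough of the other members have started to form the cluster; this is normal. Check the service status; active (running) means it started successfully:

$ systemctl status etcd

If it failed to start, check the startup log to troubleshoot:

$ journalctl -f -u etcd

The first step is the same as before: generate the certificate and private key for api-server. Create a kubernetes-csr.json file with the following content: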

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"192.168.243.143",

"192.168.243.144",

"192.168.243.145",

"192.168.243.101",

"10.255.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "seven"

}

]

}

Generate the certificate and private key:

[root@m1 ~]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kubernetes-csr.json | cfssljson -bare kubernetes

[root@m1 ~]# ls kubernetes*.pem

kubernetes-key.pem kubernetes.pem

Distribute them to every master node:

[root@m1 ~]# for i in m1 m2 m3; do scp kubernetes*.pem $i:/etc/kubernetes/pki/; done

Create a kube-apiserver.service file with the following content:

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

[Service]

ExecStart=/opt/kubernetes/bin/kube-apiserver --enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota --anonymous-auth=false --advertise-address=192.168.243.143 --bind-address=0.0.0.0 --insecure-port=0 --authorization-mode=Node,RBAC --runtime-config=api/all=true --enable-bootstrap-token-auth --service-cluster-ip-range=10.255.0.0/16 --service-node-port-range=8400-8900 --tls-cert-file=/etc/kubernetes/pki/kubernetes.pem --tls-private-key-file=/etc/kubernetes/pki/kubernetes-key.pem --client-ca-file=/etc/kubernetes/pki/ca.pem --kubelet-client-certificate=/etc/kubernetes/pki/kubernetes.pem --kubelet-client-key=/etc/kubernetes/pki/kubernetes-key.pem --service-account-key-file=/etc/kubernetes/pki/ca-key.pem --etcd-cafile=/etc/kubernetes/pki/ca.pem --etcd-certfile=/etc/kubernetes/pki/kubernetes.pem --etcd-keyfile=/etc/kubernetes/pki/kubernetes-key.pem --etcd-servers=https://192.168.243.143:2379,https://192.168.243.144:2379,https://192.168.243.145:2379 --enable-swagger-ui=true --allow-privileged=true --apiserver-count=3 --audit-log-maxage=30 --audit-log-maxbackup=3 --audit-log-maxsize=100 --audit-log-path=/var/log/kube-apiserver-audit.log --event-ttl=1h --alsologtostderr=true --logtostderr=false --log-dir=/var/log/kubernetes --v=2

Restart=on-failure

RestartSec=5

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Distribute this unit file to every master node:

[root@m1 ~]# for i in m1 m2 m3; do scp kube-apiserver.service $i:/etc/systemd/system/; done

After distribution, edit kube-apiserver.service on the master nodes other than m1. Only one item needs to change:

# Change to the IP of the node

--advertise-address=192.168.243.143

Then create the api-server log directory on all master nodes:

[root@m1 ~]# for i in m1 m2 m3; do ssh $i "mkdir -p /var/log/kubernetes"; done

Start the api-server service on each master node with the following command:

$ systemctl daemon-reload && systemctl enable kube-apiserver && systemctl restart kube-apiserver

Check the service status; active (running) means it started successfully:

$ systemctl status kube-apiserver

Check that port 6443 is being listened on:

[root@m1 ~]# netstat -lntp |grep 6443

tcp6 0 0 :::6443 :::* LISTEN 24035/kube-apiserve

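If it failed to start, check the startup log to troubleshoot:

$ journalctl -f -u kube-apiserver

keepalived only needs to be installed on two master nodes (one master, one backup); I install it on m1 and m2:

$ yum install -y keepalived

Create a directory for the keepalived configuration file on m1 and m2:

[root@m1 ~]# for i in m1 m2; do ssh $i "mkdir -p /etc/keepalived"; done

Create the following configuration file on m1 (the keepalived MASTER):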

[root@m1 ~]# mv /etc/keepalived/keepalived.conf /etc/keepalived/keepalived.conf.back

[root@m1 ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id keepalive-master

}

vrrp_script check_apiserver {

# Path to the check script

script "/etc/keepalived/check-apiserver.sh"

# Check interval in seconds

interval 3

# Lower the priority by 2 when the check fails

weight -2

}

vrrp_instance VI-kube-master {

state MASTER # node role

interface ens32 # network interface name

virtual_router_id 68

priority 100

dont_track_primary

advert_int 3

virtual_ipaddress {

# custom virtual IP

192.168.243.101

}

track_script {

check_apiserver

}

}

Create the following configuration file on m2 (the keepalived BACKUP):

[root@m2 ~]# mv /etc/keepalived/keepalived.conf /etc/keepalived/keepalived.conf.back

[root@m2 ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id keepalive-backup

}

vrrp_script check_apiserver {

script "/etc/keepalived/check-apiserver.sh"

interval 3

weight -2

}

vrrp_instance VI-kube-master {

state BACKUP

interface ens32

virtual_router_id 68

priority 99

dont_track_primary

advert_int 3

virtual_ipaddress {

192.168.243.101

}

track_script {

check_apiserver

}

}

Create the keepalived check script on both m1 and m2:

$ vim /etc/keepalived/check-apiserver.sh # create the check script with the following content

#!/bin/sh

errorExit() {

echo "*** $*" 1>&2

exit 1

}

# Check that the local api-server is healthy

curl --silent --max-time 2 --insecure https://localhost:6443/ -o /dev/null || errorExit "Error GET https://localhost:6443/"

# If the virtual IP is bound to this machine, also check that api-server is reachable through the virtual IP

if ip addr | grep -q 192.168.243.101; then

curl --silent --max-time 2 --insecure https://192.168.243.101:6443/ -o /dev/null || errorExit "Error GET https://192.168.243.101:6443/"

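fi

Start the keepalived service on both the master and the backup:

$ systemctl enable keepalived && service keepalived start

Check the service status; active (running) means it started successfully:

$ systemctl status keepalived

Check that the virtual IP is bound:

$ ip a |grep 192.168.243.101

Test access. Any response means the service is running; the 401 Unauthorized here is expected because api-server was started with --anonymous-auth=false: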

[root@m1 ~]# curl --insecure https://192.168.243.101:6443/

{

"kind": "Status",

"apiVersion": "v1",

"metadata": {

},

"status": "Failure",

"message": "Unauthorized",

"reason": "Unauthorized",

"code": 401

}
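
The failover in the two keepalived configurations rests on simple arithmetic: m1 has priority 100, m2 has priority 99, and the check script carries `weight -2`. The sketch below models only that adjustment, as a simplified assumption that ignores keepalived's rise/fall counters and advertisement timing. `effective_priority` is a hypothetical function for illustration, not a keepalived command.

```shell
# effective_priority <base-priority> <weight> <ok|fail>
# With a negative weight, a failing check lowers the priority by |weight|;
# a passing check leaves the base priority unchanged.
effective_priority() {
    base=$1
    weight=$2
    result=$3
    if [ "$result" = "fail" ] && [ "$weight" -lt 0 ]; then
        echo $((base + weight))
    else
        echo "$base"
    fi
}

# api-server on m1 stops answering while m2 stays healthy
m1=$(effective_priority 100 -2 fail)
m2=$(effective_priority 99 -2 ok)
echo "m1=$m1 m2=$m2"                  # prints: m1=98 m2=99

if [ "$m2" -gt "$m1" ]; then
    echo "m2 now has the higher priority and takes over 192.168.243.101"
fi
```

This is why the two priorities must differ by less than the absolute value of the weight: with a gap of 2 or more, a failed check on m1 would not be enough for m2 to win.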

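If it failed to start, check the log to troubleshoot:

$ journalctl -f -u keepalived

kubectl is the command-line management tool for a kubernetes cluster. By default it reads the kube-apiserver address, certificates, user name and other information from ~/.kube/config.

kubectl talks to apiserver over the secure https port, and apiserver authenticates and authorizes the certificate presented. As the cluster's management tool, kubectl needs the highest privileges, so here we create an admin certificate with full privileges. First create an admin-csr.json file with the following content: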

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:masters",

"OU": "seven"

}

]

}

Create the certificate and private key with cfssl:

[root@m1 ~]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

[root@m1 ~]# ls admin*.pem

admin-key.pem admin.pem

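kubeconfig is kubectl's configuration file and contains everything needed to access apiserver, such as the apiserver address, the CA certificate and the client's own certificate.

1. Set the cluster parameters:

[root@m1 ~]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.243.101:6443 --kubeconfig=kube.config

2. Set the client authentication parameters:

[root@m1 ~]# kubectl config set-credentials admin --client-certificate=admin.pem --client-key=admin-key.pem --embed-certs=true --kubeconfig=kube.config

3. Set the context parameters:

[root@m1 ~]# kubectl config set-context kubernetes --cluster=kubernetes --user=admin --kubeconfig=kube.config

4. Set the default context:

[root@m1 ~]# kubectl config use-context kubernetes --kubeconfig=kube.config

5. Copy the configuration file to ~/.kube/config (creating the directory first in case it does not exist):

[root@m1 ~]# mkdir -p ~/.kube && cp kube.config ~/.kube/config

When commands such as kubectl exec, run and logs are executed, apiserver forwards the request to kubelet. Define an RBAC rule here that authorizes apiserver to call the kubelet API. The user kubernetes is the CN of the api-server certificate created earlier: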

[root@m1 ~]# kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes

clusterrolebinding.rbac.authorization.k8s.io/kube-apiserver:kubelet-apis created

1. View the cluster information:

[root@m1 ~]# kubectl cluster-info

Kubernetes master is running at https://192.168.243.101:6443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

2. View the resources in all namespaces of the cluster:

[root@m1 ~]# kubectl get all --all-namespaces

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

default service/kubernetes ClusterIP 10.255.0.1 <none> 443/TCP 43m

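3. View the status of the cluster components (scheduler and controller-manager have not been deployed at this point, so their health checks are refused):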

[root@m1 ~]# kubectl get componentstatuses

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Unhealthy Get "http://127.0.0.1:10251/healthz": dial tcp 127.0.0.1:10251: connect: connection refused

controller-manager Unhealthy Get "http://127.0.0.1:10252/healthz": dial tcp 127.0.0.1:10252: connect: connection refused

etcd-0 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}
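
The `kubectl get all` output in step 2 shows the kubernetes service at 10.255.0.1, which is the first address of the service CIDR 10.255.0.0/16 chosen at the start of this guide; the DNS service is planned for the second address. As a small worked example, the sketch below computes the n-th address after a network address. `nth_ip` is a hypothetical helper and does no validation of its input.

```shell
# nth_ip <network-address> <n>
# Convert the dotted address to a 32-bit integer, add n, convert back.
nth_ip() {
    IFS=. read -r a b c d <<EOF
$1
EOF
    n=$(( ($a << 24) + ($b << 16) + ($c << 8) + $d + $2 ))
    echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

nth_ip 10.255.0.0 1   # prints 10.255.0.1 (the kubernetes service above)
nth_ip 10.255.0.0 2   # prints 10.255.0.2 (the address planned for DNS)
```

If you customize the service CIDR, the api-server certificate hosts list and the kubelet clusterDNS value have to follow the same two addresses.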

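kubectl is the command-line tool for interacting with a k8s cluster. Almost every k8s operation goes through it, so it supports a large number of commands. Fortunately kubectl supports command completion; kubectl completion -h shows setup examples for each platform. Taking Linux as the example, the following steps enable completion, after which commands can be completed with the Tab key: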

[root@m1 ~]# yum install bash-completion -y

[root@m1 ~]# source /usr/share/bash-completion/bash_completion

[root@m1 ~]# source <(kubectl completion bash)

[root@m1 ~]# kubectl completion bash > ~/.kube/completion.bash.inc

[root@m1 ~]# printf "

# Kubectl shell completion

source '$HOME/.kube/completion.bash.inc'

" >> $HOME/.bash_profile

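[root@m1 ~]# source $HOME/.bash_profile

After startup, the controller-manager instances elect one leader through a competitive election; the other instances stay blocked. When the leader becomes unavailable, the remaining instances hold a new election and produce a new leader, which keeps the service available.

Create a controller-manager-csr.json file with the following content: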

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"hosts": [

"127.0.0.1",

"192.168.243.143",

"192.168.243.144",

"192.168.243.145"

],

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:kube-controller-manager",

"OU": "seven"

}

]

}

Generate the certificate and private key:

[root@m1 ~]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes controller-manager-csr.json | cfssljson -bare controller-manager

[root@m1 ~]# ls controller-manager*.pem

controller-manager-key.pem controller-manager.pem

Distribute them to every master node:

[root@m1 ~]# for i in m1 m2 m3; do scp controller-manager*.pem $i:/etc/kubernetes/pki/; done

Create the kubeconfig:

# Set the cluster parameters

[root@m1 ~]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.243.101:6443 --kubeconfig=controller-manager.kubeconfig

# Set the client authentication parameters

[root@m1 ~]# kubectl config set-credentials system:kube-controller-manager --client-certificate=controller-manager.pem --client-key=controller-manager-key.pem --embed-certs=true --kubeconfig=controller-manager.kubeconfig

# Set the context parameters

[root@m1 ~]# kubectl config set-context system:kube-controller-manager --cluster=kubernetes --user=system:kube-controller-manager --kubeconfig=controller-manager.kubeconfig

Set the default context:

[root@m1 ~]# kubectl config use-context system:kube-controller-manager --kubeconfig=controller-manager.kubeconfig

Distribute the controller-manager.kubeconfig file to every master node:

[root@m1 ~]# for i in m1 m2 m3; do scp controller-manager.kubeconfig $i:/etc/kubernetes/; done

Create a kube-controller-manager.service file with the following content:

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

ExecStart=/opt/kubernetes/bin/kube-controller-manager --port=0 --secure-port=10252 --bind-address=127.0.0.1 --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig --service-cluster-ip-range=10.255.0.0/16 --cluster-name=kubernetes --cluster-signing-cert-file=/etc/kubernetes/pki/ca.pem --cluster-signing-key-file=/etc/kubernetes/pki/ca-key.pem --allocate-node-cidrs=true --cluster-cidr=172.23.0.0/16 --experimental-cluster-signing-duration=87600h --root-ca-file=/etc/kubernetes/pki/ca.pem --service-account-private-key-file=/etc/kubernetes/pki/ca-key.pem --leader-elect=true --feature-gates=RotateKubeletServerCertificate=true --controllers=*,bootstrapsigner,tokencleaner --horizontal-pod-autoscaler-use-rest-clients=true --horizontal-pod-autoscaler-sync-period=10s --tls-cert-file=/etc/kubernetes/pki/controller-manager.pem --tls-private-key-file=/etc/kubernetes/pki/controller-manager-key.pem --use-service-account-credentials=true --alsologtostderr=true --logtostderr=false --log-dir=/var/log/kubernetes --v=2

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

Distribute the kube-controller-manager.service unit file to every master node:

[root@m1 ~]# for i in m1 m2 m3; do scp kube-controller-manager.service $i:/etc/systemd/system/; done

Start the kube-controller-manager service on each master node with the following command:

$ systemctl daemon-reload && systemctl enable kube-controller-manager && systemctl restart kube-controller-manager

Check the service status; active (running) means it started successfully:

$ systemctl status kube-controller-manager

View the leader information:

[root@m1 ~]# kubectl get endpoints kube-controller-manager --namespace=kube-system -o yaml

apiVersion: v1

kind: Endpoints

metadata:

annotations:

control-plane.alpha.kubernetes.io/leader: '{"holderIdentity":"m1_ae36dc74-68d0-444d-8931-06b37513990a","leaseDurationSeconds":15,"acquireTime":"2020-09-04T15:47:14Z","renewTime":"2020-09-04T15:47:39Z","leaderTransitions":0}'

creationTimestamp: "2020-09-04T15:47:15Z"

managedFields:

- apiVersion: v1

fieldsType: FieldsV1

fieldsV1:

f:metadata:

f:annotations:

.: {}

f:control-plane.alpha.kubernetes.io/leader: {}

manager: kube-controller-manager

operation: Update

time: "2020-09-04T15:47:39Z"

name: kube-controller-manager

namespace: kube-system

resourceVersion: "1908"

selfLink: /api/v1/namespaces/kube-system/endpoints/kube-controller-manager

uid: 149b117e-f7c4-4ad8-bc83-09345886678a
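
In the output above, the current leader is recorded in the `control-plane.alpha.kubernetes.io/leader` annotation as JSON, and its `holderIdentity` has the form `<hostname>_<uuid>`. The sketch below pulls the hostname out of that JSON with sed. `leader_node` is a hypothetical helper name, and the pattern assumes the compact JSON layout shown above (no spaces around the colon).

```shell
# leader_node: read the leader annotation JSON on stdin, print the hostname part
# of holderIdentity (everything before the first underscore).
leader_node() {
    sed -n 's/.*"holderIdentity":"\([^"_]*\)_.*/\1/p'
}

# Annotation value copied from the kubectl output above
annotation='{"holderIdentity":"m1_ae36dc74-68d0-444d-8931-06b37513990a","leaseDurationSeconds":15,"acquireTime":"2020-09-04T15:47:14Z","renewTime":"2020-09-04T15:47:39Z","leaderTransitions":0}'

printf '%s\n' "$annotation" | leader_node   # prints m1

# Against a live cluster the annotation could be fed in directly, for example:
#   kubectl get endpoints kube-controller-manager --namespace=kube-system \
#     -o jsonpath='{.metadata.annotations.control-plane\.alpha\.kubernetes\.io/leader}' | leader_node
```

Stopping kube-controller-manager on the reported node and re-running the query is a simple way to watch a new leader being elected.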

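If it failed to start, check the log to troubleshoot:

$ journalctl -f -u kube-controller-manager

After startup, the scheduler instances elect one leader through a competitive election; the other instances stay blocked. When the leader becomes unavailable, the remaining instances hold a new election and produce a new leader, which keeps the service available.

Create a scheduler-csr.json file with the following content: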

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"192.168.243.143",

"192.168.243.144",

"192.168.243.145"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:kube-scheduler",

"OU": "seven"

}

]

}

Generate the certificate and private key:

[root@m1 ~]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes scheduler-csr.json | cfssljson -bare kube-scheduler

[root@m1 ~]# ls kube-scheduler*.pem

kube-scheduler-key.pem kube-scheduler.pem

Distribute them to every master node:

[root@m1 ~]# for i in m1 m2 m3; do scp kube-scheduler*.pem $i:/etc/kubernetes/pki/; done

Create the kubeconfig:

# Set the cluster parameters

[root@m1 ~]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.243.101:6443 --kubeconfig=kube-scheduler.kubeconfig

# Set the client authentication parameters

[root@m1 ~]# kubectl config set-credentials system:kube-scheduler --client-certificate=kube-scheduler.pem --client-key=kube-scheduler-key.pem --embed-certs=true --kubeconfig=kube-scheduler.kubeconfig

# Set the context parameters

[root@m1 ~]# kubectl config set-context system:kube-scheduler --cluster=kubernetes --user=system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

Set the default context:

[root@m1 ~]# kubectl config use-context system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

Distribute the kube-scheduler.kubeconfig file to every master node:

[root@m1 ~]# for i in m1 m2 m3; do scp kube-scheduler.kubeconfig $i:/etc/kubernetes/; done

Create a kube-scheduler.service file with the following content:

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

ExecStart=/opt/kubernetes/bin/kube-scheduler --address=127.0.0.1 --kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig --leader-elect=true --alsologtostderr=true --logtostderr=false --log-dir=/var/log/kubernetes --v=2

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

Distribute the kube-scheduler.service unit file to every master node:

[root@m1 ~]# for i in m1 m2 m3; do scp kube-scheduler.service $i:/etc/systemd/system/; done

Start the kube-scheduler service on every master node:

$ systemctl daemon-reload && systemctl enable kube-scheduler && systemctl restart kube-scheduler

Check the service status; active (running) means it started successfully:

$ service kube-scheduler status

View the leader information:

[root@m1 ~]# kubectl get endpoints kube-scheduler --namespace=kube-system -o yaml

apiVersion: v1

kind: Endpoints

metadata:

annotations:

control-plane.alpha.kubernetes.io/leader: '{"holderIdentity":"m1_f6c4da9f-85b4-47e2-919d-05b24b4aacac","leaseDurationSeconds":15,"acquireTime":"2020-09-04T16:03:57Z","renewTime":"2020-09-04T16:04:19Z","leaderTransitions":0}'

creationTimestamp: "2020-09-04T16:03:57Z"

managedFields:

- apiVersion: v1

fieldsType: FieldsV1

fieldsV1:

f:metadata:

f:annotations:

.: {}

f:control-plane.alpha.kubernetes.io/leader: {}

manager: kube-scheduler

operation: Update

time: "2020-09-04T16:04:19Z"

name: kube-scheduler

namespace: kube-system

resourceVersion: "3230"

selfLink: /api/v1/namespaces/kube-system/endpoints/kube-scheduler

uid: c2f2210d-b00f-4157-b597-d3e3b4bec38b

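If it failed to start, check the log to troubleshoot:

$ journalctl -f -u kube-scheduler

First, the images have to be downloaded to all nodes in advance. Because some of them cannot be pulled directly in some network environments, here is a simple script that pulls them from the Alibaba Cloud registry and re-tags them: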

[root@m1 ~]# vim download-images.sh

#!/bin/bash

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.2

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.2 k8s.gcr.io/pause-amd64:3.2

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.2

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.7.0

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.7.0 k8s.gcr.io/coredns:1.7.0

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.7.0

Distribute the script to the other nodes:

[root@m1 ~]# for i in m2 m3 n1 n2; do scp download-images.sh $i:~; done

Then have every node run the script:

[root@m1 ~]# for i in m1 m2 m3 n1 n2; do ssh $i "sh ~/download-images.sh"; done

Once the pull has finished, every node should have the following images:

$ docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

k8s.gcr.io/coredns 1.7.0 bfe3a36ebd25 2 months ago 45.2MB

k8s.gcr.io/pause-amd64 3.2 80d28bedfe5d 6 months ago 683kB

Create a token and store it in an environment variable:

[root@m1 ~]# export BOOTSTRAP_TOKEN=$(kubeadm token create --description kubelet-bootstrap-token --groups system:bootstrappers:worker --kubeconfig kube.config)

Create kubelet-bootstrap.kubeconfig:

# Set the cluster parameters

[root@m1 ~]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.243.101:6443 --kubeconfig=kubelet-bootstrap.kubeconfig

# Set the client authentication parameters

[root@m1 ~]# kubectl config set-credentials kubelet-bootstrap --token=${BOOTSTRAP_TOKEN} --kubeconfig=kubelet-bootstrap.kubeconfig

# Set the context parameters

[root@m1 ~]# kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig

Set the default context:

[root@m1 ~]# kubectl config use-context default --kubeconfig=kubelet-bootstrap.kubeconfig

On the worker nodes, create the directory for the k8s configuration files and copy the generated configuration file to every worker node:

[root@m1 ~]# for i in n1 n2; do ssh $i "mkdir -p /etc/kubernetes/"; done

[root@m1 ~]# for i in n1 n2; do scp kubelet-bootstrap.kubeconfig $i:/etc/kubernetes/kubelet-bootstrap.kubeconfig; done

Create the directory for keys on the worker nodes:

[root@m1 ~]# for i in n1 n2; do ssh $i "mkdir -p /etc/kubernetes/pki"; done

Distribute the CA certificate to every worker node:

[root@m1 ~]# for i in n1 n2; do scp ca.pem $i:/etc/kubernetes/pki/; done

Create a kubelet.config.json configuration file with the following content:

{

"kind": "KubeletConfiguration",

"apiVersion": "kubelet.config.k8s.io/v1beta1",

"authentication": {

"x509": {

"clientCAFile": "/etc/kubernetes/pki/ca.pem"

},

"webhook": {

"enabled": true,

"cacheTTL": "2m0s"

},

"anonymous": {

"enabled": false

}

},

"authorization": {

"mode": "Webhook",

"webhook": {

"cacheAuthorizedTTL": "5m0s",

"cacheUnauthorizedTTL": "30s"

}

},

"address": "192.168.243.146",

"port": 10250,

"readOnlyPort": 10255,

"cgroupDriver": "cgroupfs",

"hairpinMode": "promiscuous-bridge",

"serializeImagePulls": false,

"featureGates": {

"RotateKubeletClientCertificate": true,

"RotateKubeletServerCertificate": true

},

"clusterDomain": "cluster.local.",

"clusterDNS": ["10.255.0.2"]

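}

Distribute the kubelet configuration file to every worker node:

[root@m1 ~]# for i in n1 n2; do scp kubelet.config.json $i:/etc/kubernetes/; done

Note: after distribution, change the address field in the configuration file to the IP of the node it is on.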
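
Editing the address field by hand on each worker is easy to forget. As a sketch, the field can be rewritten with sed while the file is being copied. `render_kubelet_config` is a hypothetical helper; it assumes the `"address": "..."` pair sits on one line, as it does in the file above, and leaves every other line untouched.

```shell
# render_kubelet_config <node-ip>
# Read a kubelet.config.json template on stdin and rewrite only the "address" value.
render_kubelet_config() {
    sed "s/\"address\": *\"[^\"]*\"/\"address\": \"$1\"/"
}

# Demo on a three-field excerpt of the file
printf '%s\n' '{' '"address": "192.168.243.146",' '"port": 10250' '}' \
    | render_kubelet_config 192.168.243.147

# Possible use from m1, one rendered copy per worker (not executed here):
#   for pair in n1=192.168.243.146 n2=192.168.243.147; do
#       render_kubelet_config "${pair#*=}" < kubelet.config.json \
#           | ssh "${pair%%=*}" "cat > /etc/kubernetes/kubelet.config.json"
#   done
```

The same pattern would apply to any other per-node value, as long as the field appears exactly once in the template.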

Create a kubelet.service file with the following content:

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=/var/lib/kubelet

ExecStart=/opt/kubernetes/bin/kubelet --bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig --cert-dir=/etc/kubernetes/pki --kubeconfig=/etc/kubernetes/kubelet.kubeconfig --config=/etc/kubernetes/kubelet.config.json --network-plugin=cni --pod-infra-container-image=k8s.gcr.io/pause-amd64:3.2 --alsologtostderr=true --logtostderr=false --log-dir=/var/log/kubernetes --v=2

Restart=on-failure

RestartSec=5

[Install]

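WantedBy=multi-user.target

Distribute the kubelet unit file to every worker node:

[root@m1 ~]# for i in n1 n2; do scp kubelet.service $i:/etc/systemd/system/; done

When kubelet starts, it checks whether the file given by the --kubeconfig flag exists; if it does not, kubelet uses --bootstrap-kubeconfig to send a certificate signing request (CSR) to kube-apiserver.

When kube-apiserver receives the CSR, it authenticates the token in it (the token created earlier with kubeadm). Once authenticated, the request's user is set to system:bootstrap:<token-id> and its group to system:bootstrappers. This is Bootstrap Token Auth.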
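
A bootstrap token has the form `<token-id>.<token-secret>`, six and sixteen characters from `[a-z0-9]`, and only the id part appears in the authenticated user name. The sketch below derives that user name from a token. `bootstrap_user` is a hypothetical helper; the id `seh1w7` is the one visible in the CSR listing of this guide, while the secret half is made up for the demo.

```shell
# bootstrap_user <token>
# Validate the token format and print the user name api-server assigns to it.
bootstrap_user() {
    if printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'; then
        # ${1%%.*} keeps the text before the first dot, i.e. the token id
        printf 'system:bootstrap:%s\n' "${1%%.*}"
    else
        echo "not a valid bootstrap token: $1" >&2
        return 1
    fi
}

bootstrap_user "seh1w7.0123456789abcdef"   # prints system:bootstrap:seh1w7
```

Because the secret never appears in the user name, the REQUESTOR column of `kubectl get csr` can be shown safely in logs and screenshots.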

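Grant the bootstrap permission by creating a cluster role binding:

[root@m1 ~]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --group=system:bootstrappers

The kubelet service can now be started. Run the following commands on every worker node (the second directory is the --log-dir set in kubelet.service, which has not been created on the workers so far):

$ mkdir -p /var/lib/kubelet /var/log/kubernetes

$ systemctl daemon-reload && systemctl enable kubelet && systemctl restart kubelet

Check the service status; active (running) means it started successfully:

$ systemctl status kubelet

If it failed to start, check the log to troubleshoot:

$ journalctl -f -u kubelet

Once kubelet has started, go to a master and approve the bootstrap requests. The following command shows that the two worker nodes have each sent a CSR: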

[root@m1 ~]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-0U6dO2MrD_KhUCdofq1rab6yrLvuVMJkAXicLldzENE 27s kubernetes.io/kube-apiserver-client-kubelet system:bootstrap:seh1w7 Pending

node-csr-QMAVx75MnxCpDT5QtI6liNZNfua39vOwYeUyiqTIuPg 74s kubernetes.io/kube-apiserver-client-kubelet system:bootstrap:seh1w7 Pending

[root@m1 ~]#

Then approve the two requests:

[root@m1 ~]# kubectl certificate approve node-csr-0U6dO2MrD_KhUCdofq1rab6yrLvuVMJkAXicLldzENE

certificatesigningrequest.certificates.k8s.io/node-csr-0U6dO2MrD_KhUCdofq1rab6yrLvuVMJkAXicLldzENE approved

[root@m1 ~]# kubectl certificate approve node-csr-QMAVx75MnxCpDT5QtI6liNZNfua39vOwYeUyiqTIuPg

certificatesigningrequest.certificates.k8s.io/node-csr-QMAVx75MnxCpDT5QtI6liNZNfua39vOwYeUyiqTIuPg approved
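With more than a couple of nodes, copying CSR names by hand gets tedious. As a rough sketch, the pending names can be filtered out of the kubectl get csr listing with awk; the helper name pending_csrs is hypothetical, and it assumes the CONDITION column is the last one, as in the output above.

```shell
# Read `kubectl get csr` output on stdin and print the names of the
# requests whose last column (CONDITION) is exactly "Pending".
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}
```

A possible use is kubectl get csr | pending_csrs | xargs -r kubectl certificate approve (xargs -r is a GNU option). Review the list first, since this approves every pending request.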

[root@m1 ~]#

Create the kube-proxy-csr.json file with the following content:

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "seven"

}

]

}

Generate the certificate and private key:

[root@m1 ~]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

[root@m1 ~]# ls kube-proxy*.pem

kube-proxy-key.pem kube-proxy.pem

[root@m1 ~]#

Run the following commands to create the kube-proxy.kubeconfig file:

# Set the cluster parameters
[root@m1 ~]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.243.101:6443 --kubeconfig=kube-proxy.kubeconfig

# Set the client authentication parameters
[root@m1 ~]# kubectl config set-credentials kube-proxy --client-certificate=kube-proxy.pem --client-key=kube-proxy-key.pem --embed-certs=true --kubeconfig=kube-proxy.kubeconfig

# Set the context parameters
[root@m1 ~]# kubectl config set-context default --cluster=kubernetes --user=kube-proxy --kubeconfig=kube-proxy.kubeconfig

Switch to the default context:

[root@m1 ~]# kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

Distribute the kube-proxy.kubeconfig file to each worker node:

[root@m1 ~]# for i in n1 n2; do scp kube-proxy.kubeconfig $i:/etc/kubernetes/; done

Create the kube-proxy.config.yaml file with the following content:

apiVersion: kubeproxy.config.k8s.io/v1alpha1
# Change to the IP of the node this file is on
bindAddress: {worker_ip}
clientConnection:
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
clusterCIDR: 172.23.0.0/16
# Change to the IP of the node this file is on
healthzBindAddress: {worker_ip}:10256
kind: KubeProxyConfiguration
# Change to the IP of the node this file is on
metricsBindAddress: {worker_ip}:10249
mode: "iptables"

Distribute the kube-proxy.config.yaml file to each worker node:

[root@m1 ~]# for i in n1 n2; do scp kube-proxy.config.yaml $i:/etc/kubernetes/; done

Create the kube-proxy.service file with the following content:

[Unit]

Description=Kubernetes Kube-Proxy Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

[Service]

WorkingDirectory=/var/lib/kube-proxy

ExecStart=/opt/kubernetes/bin/kube-proxy --config=/etc/kubernetes/kube-proxy.config.yaml --alsologtostderr=true --logtostderr=false --log-dir=/var/log/kubernetes --v=2

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

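WantedBy=multi-user.target

Distribute the kube-proxy.service file to all worker nodes:

[root@m1 ~]# for i in n1 n2; do scp kube-proxy.service $i:/etc/systemd/system/; done

Create the directories that the kube-proxy service depends on:

[root@m1 ~]# for i in n1 n2; do ssh $i "mkdir -p /var/lib/kube-proxy && mkdir -p /var/log/kubernetes"; done

The kube-proxy service can now be started. Run the following command on each worker node:

$ systemctl daemon-reload && systemctl enable kube-proxy && systemctl restart kube-proxy

Check the service status; active (running) means the service started successfully:

$ systemctl status kube-proxy

If it did not start successfully, check the logs to troubleshoot:

$ journalctl -f -u kube-proxy

We deploy Calico using its official installation method. Create a directory (run this on a node where kubectl is configured):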

[root@m1 ~]# mkdir -p /etc/kubernetes/addons

In that directory, create the calico-rbac-kdd.yaml file with the following content:

kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: calico-node
rules:
  - apiGroups: [""]
    resources:
      - namespaces
    verbs:
      - get
      - list
      - watch
  - apiGroups: [""]
    resources:
      - pods/status
    verbs:
      - update
  - apiGroups: [""]
    resources:
      - pods
    verbs:
      - get
      - list
      - watch
      - patch
  - apiGroups: [""]
    resources:
      - services
    verbs:
      - get
  - apiGroups: [""]
    resources:
      - endpoints
    verbs:
      - get
  - apiGroups: [""]
    resources:
      - nodes
    verbs:
      - get
      - list
      - update
      - watch
  - apiGroups: ["extensions"]
    resources:
      - networkpolicies
    verbs:
      - get
      - list
      - watch
  - apiGroups: ["networking.k8s.io"]
    resources:
      - networkpolicies
    verbs:
      - watch
      - list
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - globalfelixconfigs
      - felixconfigurations
      - bgppeers
      - globalbgpconfigs
      - bgpconfigurations
      - ippools
      - globalnetworkpolicies
      - globalnetworksets
      - networkpolicies
      - clusterinformations
      - hostendpoints
    verbs:
      - create
      - get
      - list
      - update
      - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: calico-node
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: calico-node
subjects:
  - kind: ServiceAccount
    name: calico-node
    namespace: kube-system

Then run the following commands in turn to install Calico:

[root@m1 ~]# kubectl apply -f /etc/kubernetes/addons/calico-rbac-kdd.yaml

[root@m1 ~]# kubectl apply -f https://docs.projectcalico.org/manifests/calico.yaml

Wait a few minutes, then check the Pod status. The deployment has succeeded only when all Pods are Running:

[root@m1 ~]# kubectl get pod --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-5bc4fc6f5f-z8lhf 1/1 Running 0 105s

kube-system calico-node-qflvj 1/1 Running 0 105s

kube-system calico-node-x9m2n 1/1 Running 0 105s
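The "all Running" check can be done by eye for three Pods, but a small filter helps on larger clusters. This is a sketch only: the helper name pods_not_running is hypothetical, and it assumes the column layout shown above (STATUS is the fourth column of kubectl get pod --all-namespaces).

```shell
# Read `kubectl get pod --all-namespaces` output on stdin and print
# "<namespace>/<name> <status>" for every Pod whose STATUS is not Running.
pods_not_running() {
  awk 'NR > 1 && $4 != "Running" { print $1 "/" $2, $4 }'
}
```

Running kubectl get pod --all-namespaces | pods_not_running should print nothing once the deployment has settled. Note that Pods of finished Jobs (status Completed) would also be listed by this simple filter.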

[root@m1 ~]#

In the /etc/kubernetes/addons/ directory, create the coredns.yaml configuration file:

apiVersion: v1
kind: ServiceAccount
metadata:
  name: coredns
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
rules:
- apiGroups:
  - ""
  resources:
  - endpoints
  - services
  - pods
  - namespaces
  verbs:
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:coredns
subjects:
- kind: ServiceAccount
  name: coredns
  namespace: kube-system
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
data:
  Corefile: |
    .:53 {
        errors
        health {
          lameduck 5s
        }
        ready
        kubernetes cluster.local in-addr.arpa ip6.arpa {
          fallthrough in-addr.arpa ip6.arpa
        }
        prometheus :9153
        forward . /etc/resolv.conf {
          max_concurrent 1000
        }
        cache 30
        loop
        reload
        loadbalance
    }
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: kube-dns
    kubernetes.io/name: "CoreDNS"
spec:
  # replicas: not specified here:
  # 1. Default is 1.
  # 2. Will be tuned in real time if DNS horizontal auto-scaling is turned on.
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
  selector:
    matchLabels:
      k8s-app: kube-dns
  template:
    metadata:
      labels:
        k8s-app: kube-dns
    spec:
      priorityClassName: system-cluster-critical
      serviceAccountName: coredns
      tolerations:
        - key: "CriticalAddonsOnly"
          operator: "Exists"
      nodeSelector:
        kubernetes.io/os: linux
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
          - weight: 100
            podAffinityTerm:
              labelSelector:
                matchExpressions:
                  - key: k8s-app
                    operator: In
                    values: ["kube-dns"]
              topologyKey: kubernetes.io/hostname
      containers:
      - name: coredns
        image: coredns/coredns:1.7.0
        imagePullPolicy: IfNotPresent
        resources:
          limits:
            memory: 170Mi
          requests:
            cpu: 100m
            memory: 70Mi
        args: [ "-conf", "/etc/coredns/Corefile" ]
        volumeMounts:
        - name: config-volume
          mountPath: /etc/coredns
          readOnly: true
        ports:
        - containerPort: 53
          name: dns
          protocol: UDP
        - containerPort: 53
          name: dns-tcp
          protocol: TCP
        - containerPort: 9153
          name: metrics
          protocol: TCP
        securityContext:
          allowPrivilegeEscalation: false
          capabilities:
            add:
            - NET_BIND_SERVICE
            drop:
            - all
          readOnlyRootFilesystem: true
        livenessProbe:
          httpGet:
            path: /health
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 60
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 5
        readinessProbe:
          httpGet:
            path: /ready
            port: 8181
            scheme: HTTP
      dnsPolicy: Default
      volumes:
        - name: config-volume
          configMap:
            name: coredns
            items:
            - key: Corefile
              path: Corefile
---
apiVersion: v1
kind: Service
metadata:
  name: kube-dns
  namespace: kube-system
  annotations:
    prometheus.io/port: "9153"
    prometheus.io/scrape: "true"
  labels:
    k8s-app: kube-dns
    kubernetes.io/cluster-service: "true"
    kubernetes.io/name: "CoreDNS"
spec:
  selector:
    k8s-app: kube-dns
  clusterIP: 10.255.0.2
  ports:
  - name: dns
    port: 53
    protocol: UDP
  - name: dns-tcp
    port: 53
    protocol: TCP
  - name: metrics
    port: 9153
    protocol: TCP

The clusterIP field sets the cluster IP of the DNS service, usually the second address in the Kubernetes service IP range. The IP-related definitions are explained at the beginning of this article.

Then run the following command to deploy coredns:

[root@m1 ~]# kubectl create -f /etc/kubernetes/addons/coredns.yaml

serviceaccount/coredns created

clusterrole.rbac.authorization.k8s.io/system:coredns created

clusterrolebinding.rbac.authorization.k8s.io/system:coredns created

configmap/coredns created

deployment.apps/coredns created

service/kube-dns created

[root@m1 ~]#

Check the Pod status:

[root@m1 ~]# kubectl get pod --all-namespaces | grep coredns

kube-system coredns-7bf4bd64bd-ww4q2 1/1 Running 0 3m40s

[root@m1 ~]#

Check the status of the nodes in the cluster:

[root@m1 ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

n1 Ready <none> 3h30m v1.19.0

n2 Ready <none> 3h30m v1.19.0

[root@m1 ~]#

On the m1 node, create the nginx-ds.yml configuration file with the following content:

apiVersion: v1
kind: Service
metadata:
  name: nginx-ds
  labels:
    app: nginx-ds
spec:
  type: NodePort
  selector:
    app: nginx-ds
  ports:
  - name: http
    port: 80
    targetPort: 80
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: nginx-ds
  labels:
    addonmanager.kubernetes.io/mode: Reconcile
spec:
  selector:
    matchLabels:
      app: nginx-ds
  template:
    metadata:
      labels:
        app: nginx-ds
    spec:
      containers:
      - name: my-nginx
        image: nginx:1.7.9
        ports:
        - containerPort: 80

Then run the following command to create the nginx DaemonSet:

[root@m1 ~]# kubectl create -f nginx-ds.yml

service/nginx-ds created

daemonset.apps/nginx-ds created

[root@m1 ~]#

After a short wait, check that the Pods are healthy:

[root@m1 ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-ds-4f48f 1/1 Running 0 63s 172.16.40.130 n1 <none> <none>

nginx-ds-zsm7d 1/1 Running 0 63s 172.16.217.10 n2 <none> <none>

[root@m1 ~]#

On each worker node, try to ping the Pod IPs (the master nodes do not run Calico, so they cannot reach Pod IPs):

[root@n1 ~]# ping 172.16.40.130

PING 172.16.40.130 (172.16.40.130) 56(84) bytes of data.

64 bytes from 172.16.40.130: icmp_seq=1 ttl=64 time=0.073 ms

64 bytes from 172.16.40.130: icmp_seq=2 ttl=64 time=0.055 ms

64 bytes from 172.16.40.130: icmp_seq=3 ttl=64 time=0.052 ms

64 bytes from 172.16.40.130: icmp_seq=4 ttl=64 time=0.054 ms

^C

--- 172.16.40.130 ping statistics ---

4 packets transmitted, 4 received, 0% packet loss, time 2999ms

rtt min/avg/max/mdev = 0.052/0.058/0.073/0.011 ms

[root@n1 ~]#

Once the Pod IPs respond to ping, check the status of the Service:

[root@m1 ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.255.0.1 <none> 443/TCP 17h

nginx-ds NodePort 10.255.4.100 <none> 80:8568/TCP 11m
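The PORT(S) value 80:8568/TCP above reads as <service port>:<node port>/<protocol>. A small sketch of pulling the node port out of such a value in a script follows; the function name node_port is hypothetical.

```shell
# Extract the node port from a PORT(S) value such as "80:8568/TCP".
node_port() {
  ports=$1
  ports=${ports#*:}               # drop the leading "<service port>:"
  printf '%s\n' "${ports%%/*}"    # drop the trailing "/<protocol>"
}
```

For example, curl "192.168.243.146:$(node_port 80:8568/TCP)" targets port 8568. Querying the API directly with kubectl get svc nginx-ds -o jsonpath='{.spec.ports[0].nodePort}' is an alternative that avoids parsing table output.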

[root@m1 ~]#

On each worker node, try to access the nginx-ds service (the master nodes do not run kube-proxy, so they cannot reach Service IPs):

[root@n1 ~]# curl 10.255.4.100:80

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

body {

width: 35em;

margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif;

}

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p>

<p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>

</body>

</html>

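[root@n1 ~]#

Check NodePort availability on every node. NodePort maps the service port to a port on the host, so under normal conditions every node can reach the nginx-ds service through a worker node's IP + NodePort:

$ curl 192.168.243.146:8568

$ curl 192.168.243.147:8568

Next, create an Nginx Pod so that DNS can be checked from inside it. First define a pod-nginx.yaml configuration file with the following content:

apiVersion: v1
kind: Pod
metadata:
  name: nginx
spec:
  containers:
  - name: nginx
    image: nginx:1.7.9
    ports:
    - containerPort: 80

Then create the Pod from this configuration file: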

[root@m1 ~]# kubectl create -f pod-nginx.yaml

pod/nginx created

[root@m1 ~]#

Enter the Pod with the following command:

[root@m1 ~]# kubectl exec nginx -i -t -- /bin/bash

Check the DNS configuration. The nameserver value must be the clusterIP of coredns:

root@nginx:/# cat /etc/resolv.conf

nameserver 10.255.0.2

search default.svc.cluster.local. svc.cluster.local. cluster.local. localdomain

options ndots:5
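The search line explains why a bare Service name resolves: with ndots:5, a name containing fewer than five dots is first tried with each search domain appended, and only then as given. The sketch below lists those candidates in order; the function name search_candidates is hypothetical, and the model is deliberately simplified (it ignores names that already have enough dots or end in a dot).

```shell
# Print, in order, the names a resolver would try for a short name,
# given the search domains as further arguments.
search_candidates() {
  name=$1
  shift
  for domain in "$@"; do
    printf '%s.%s\n' "$name" "${domain%.}"   # strip a trailing "." if present
  done
  printf '%s\n' "$name"
}
```

Calling search_candidates nginx-ds default.svc.cluster.local. svc.cluster.local. cluster.local. lists nginx-ds.default.svc.cluster.local first, which is the name a Service called nginx-ds in the default namespace is published under.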

root@nginx:/#

Next, test whether Service names resolve correctly. Below, the name nginx-ds resolves to its IP, 10.255.4.100, which shows that DNS is working:

root@nginx:/# ping nginx-ds

PING nginx-ds.default.svc.cluster.local (10.255.4.100): 48 data bytes

The kubernetes service resolves correctly as well:

root@nginx:/# ping kubernetes

PING kubernetes.default.svc.cluster.local (10.255.0.1): 48 data bytes

Copy the kubectl configuration file from the m1 node to the other two master nodes:

[root@m1 ~]# for i in m2 m3; do ssh $i "mkdir ~/.kube/"; done

[root@m1 ~]# for i in m2 m3; do scp ~/.kube/config $i:~/.kube/; done

On the m1 node, run the following command to shut it down:

[root@m1 ~]# init 0

Then check whether the virtual IP has moved over to the m2 node:

[root@m2 ~]# ip a |grep 192.168.243.101

inet 192.168.243.101/32 scope global ens32
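For repeated failover tests, the same check can be wrapped in a helper that succeeds only when the virtual IP is bound. This is a sketch; the function name has_vip is hypothetical, and it simply looks for an "inet <ip>/" entry in ip a output.

```shell
# Succeed if the given IPv4 address appears as an "inet" entry in
# `ip a` output read from stdin. -F matches the text literally, and the
# trailing "/" prevents matching a longer address with the same prefix.
has_vip() {
  grep -qF "inet $1/"
}
```

It could be used as ssh m2 ip a | has_vip 192.168.243.101 && echo "VIP is on m2".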

[root@m2 ~]#

Next, test whether kubectl on m2 or m3 can interact with the cluster. If it can, the cluster is highly available:

[root@m2 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

n1 Ready <none> 4h2m v1.19.0

n2 Ready <none> 4h2m v1.19.0

[root@m2 ~]#

Dashboard is a web UI provided by k8s that simplifies operating and managing the cluster. From it we can conveniently view all kinds of information, operate on resources such as Pods and Services, and create new resources.

Deploying the dashboard is fairly simple. First define the dashboard-all.yaml configuration file with the following content:

apiVersion: v1
kind: Namespace
metadata:
  name: kubernetes-dashboard
---
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 8523
  type: NodePort
  selector:
    k8s-app: kubernetes-dashboard
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-certs
  namespace: kubernetes-dashboard
type: Opaque
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-csrf
  namespace: kubernetes-dashboard
type: Opaque
data:
  csrf: ""
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-key-holder
  namespace: kubernetes-dashboard
type: Opaque
---
kind: ConfigMap
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-settings
  namespace: kubernetes-dashboard
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
rules:
  # Allow Dashboard to get, update and delete Dashboard exclusive secrets.
  - apiGroups: [""]
    resources: ["secrets"]
    resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs", "kubernetes-dashboard-csrf"]
    verbs: ["get", "update", "delete"]
  # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.
  - apiGroups: [""]
    resources: ["configmaps"]
    resourceNames: ["kubernetes-dashboard-settings"]
    verbs: ["get", "update"]
  # Allow Dashboard to get metrics.
  - apiGroups: [""]
    resources: ["services"]
    resourceNames: ["heapster", "dashboard-metrics-scraper"]
    verbs: ["proxy"]
  - apiGroups: [""]
    resources: ["services/proxy"]
    resourceNames: ["heapster", "http:heapster:", "https:heapster:", "dashboard-metrics-scraper", "http:dashboard-metrics-scraper"]
    verbs: ["get"]
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
rules:
  # Allow Metrics Scraper to get metrics from the Metrics server
  - apiGroups: ["metrics.k8s.io"]
    resources: ["pods", "nodes"]
    verbs: ["get", "list", "watch"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard
---
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
    spec:
      containers:
        - name: kubernetes-dashboard
          image: kubernetesui/dashboard:v2.0.3
          imagePullPolicy: Always
          ports:
            - containerPort: 8443
              protocol: TCP
          args:
            - --auto-generate-certificates
            - --namespace=kubernetes-dashboard
            # Uncomment the following line to manually specify Kubernetes API server Host
            # If not specified, Dashboard will attempt to auto discover the API server and connect
            # to it. Uncomment only if the default does not work.
            # - --apiserver-host=http://my-address:port
          volumeMounts:
            - name: kubernetes-dashboard-certs
              mountPath: /certs
              # Create on-disk volume to store exec logs
            - mountPath: /tmp
              name: tmp-volume
          livenessProbe:
            httpGet:
              scheme: HTTPS
              path: /
              port: 8443
            initialDelaySeconds: 30
            timeoutSeconds: 30
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      volumes:
        - name: kubernetes-dashboard-certs
          secret:
            secretName: kubernetes-dashboard-certs
        - name: tmp-volume
          emptyDir: {}
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 8000
      targetPort: 8000
  selector:
    k8s-app: dashboard-metrics-scraper
---
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: dashboard-metrics-scraper
  template:
    metadata:
      labels:
        k8s-app: dashboard-metrics-scraper
      annotations:
        seccomp.security.alpha.kubernetes.io/pod: 'runtime/default'
    spec:
      containers:
        - name: dashboard-metrics-scraper
          image: kubernetesui/metrics-scraper:v1.0.4
          ports:
            - containerPort: 8000
              protocol: TCP
          livenessProbe:
            httpGet:
              scheme: HTTP
              path: /
              port: 8000
            initialDelaySeconds: 30
            timeoutSeconds: 30
          volumeMounts:
            - mountPath: /tmp
              name: tmp-volume
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
      volumes:
        - name: tmp-volume
          emptyDir: {}

Create the dashboard service:

[root@m1 ~]# kubectl create -f dashboard-all.yaml

namespace/kubernetes-dashboard created

serviceaccount/kubernetes-dashboard created

service/kubernetes-dashboard created

secret/kubernetes-dashboard-certs created

secret/kubernetes-dashboard-csrf created

secret/kubernetes-dashboard-key-holder created

configmap/kubernetes-dashboard-settings created

role.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrole.rbac.authorization.k8s.io/kubernetes-dashboard created

rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

deployment.apps/kubernetes-dashboard created

service/dashboard-metrics-scraper created

deployment.apps/dashboard-metrics-scraper created

[root@m1 ~]#

Check that the deployment is running:

[root@m1 ~]# kubectl get deployment kubernetes-dashboard -n kubernetes-dashboard

NAME READY UP-TO-DATE AVAILABLE AGE

kubernetes-dashboard 1/1 1 1 20s

[root@m1 ~]#

Check that the dashboard Pods are running:

[root@m1 ~]# kubectl --namespace kubernetes-dashboard get pods -o wide |grep dashboard

dashboard-metrics-scraper-7b59f7d4df-xzxs8 1/1 Running 0 82s 172.16.217.13 n2 <none> <none>

kubernetes-dashboard-5dbf55bd9d-s8rhb 1/1 Running 0 82s 172.16.40.132 n1 <none> <none>

[root@m1 ~]#

Check the dashboard Service:

[root@m1 ~]# kubectl get services kubernetes-dashboard -n kubernetes-dashboard

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes-dashboard NodePort 10.255.120.138 <none> 443:8523/TCP 101s

[root@m1 ~]#

On the n1 node, check that port 8523 is being listened on:

[root@n1 ~]# netstat -ntlp |grep 8523

tcp 0 0 0.0.0.0:8523 0.0.0.0:* LISTEN 13230/kube-proxy

[root@n1 ~]#

For cluster security, since version 1.7 the dashboard only allows access over HTTPS. Because we expose the service through NodePort, it can be reached at https://NodeIP:NodePort. For example, using curl:

[root@n1 ~]# curl https://192.168.243.146:8523 -k

<!--

Copyright 2017 The Kubernetes Authors.

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

-->

<!doctype html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>Kubernetes Dashboard</title>

<link rel="icon"

type="image/png"

href="assets/images/kubernetes-logo.png" />

<meta name="viewport"

content="width=device-width">

<link rel="stylesheet" href="styles.988f26601cdcb14da469.css"></head>

<body>

<kd-root></kd-root>

<script src="runtime.ddfec48137b0abfd678a.js" defer></script><script src="polyfills-es5.d57fe778f4588e63cc5c.js" nomodule defer></script><script src="polyfills.49104fe38e0ae7955ebb.js" defer></script><script src="scripts.391d299173602e261418.js" defer></script><script src="main.b94e335c0d02b12e3a7b.js" defer></script></body>

</html>

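[root@n1 ~]#

The -k option tells curl to make the HTTPS request without verifying the certificate.

About custom certificates

By default the dashboard certificate is auto-generated, so browsers do not trust it. If you have a domain name and a matching trusted certificate, you can swap it in and access the dashboard securely through the domain name.

Add the following dashboard startup arguments in dashboard-all.yaml to specify the certificate files. The certificate files themselves are injected through a Secret.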

- --tls-cert-file=dashboard.cer

- --tls-key-file=dashboard.key
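Since the manifest above mounts the kubernetes-dashboard-certs Secret at /certs, one way to inject the files is to recreate that Secret from your own certificate and key. The following is a hedged sketch rather than the author's procedure: the function name create_dashboard_certs and the KUBECTL override are hypothetical, and the key names dashboard.cer and dashboard.key are chosen to match the two flags above.

```shell
# Recreate the kubernetes-dashboard-certs Secret from a certificate and key.
# KUBECTL may be overridden (for example KUBECTL=echo) to preview the
# commands without touching the cluster.
create_dashboard_certs() {
  cert=$1
  key=$2
  ${KUBECTL:-kubectl} -n kubernetes-dashboard delete secret kubernetes-dashboard-certs --ignore-not-found
  ${KUBECTL:-kubectl} -n kubernetes-dashboard create secret generic kubernetes-dashboard-certs \
    --from-file=dashboard.cer="$cert" --from-file=dashboard.key="$key"
}
```

The dashboard Pod would most likely need to be restarted afterwards so that it picks up the new files.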

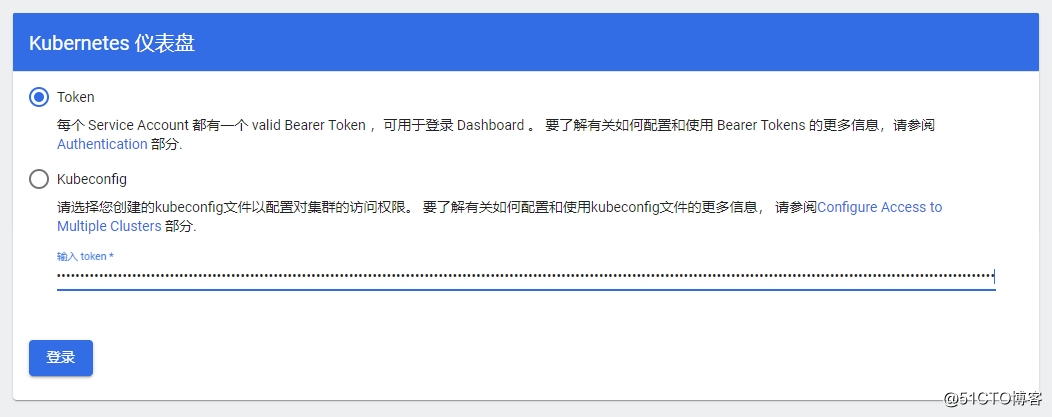

By default Dashboard supports only token authentication, so if you use a kubeconfig file, the token must be specified in that file. Here we log in with a token.

First create a service account:

[root@m1 ~]# kubectl create sa dashboard-admin -n kube-system

serviceaccount/dashboard-admin created

[root@m1 ~]#

Create the role binding:

[root@m1 ~]# kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

clusterrolebinding.rbac.authorization.k8s.io/dashboard-admin created

[root@m1 ~]#

Look up the name of the dashboard-admin Secret:

[root@m1 ~]# kubectl get secrets -n kube-system | grep dashboard-admin | awk '{print $1}'

dashboard-admin-token-757fb

[root@m1 ~]#

Print the Secret's token:

[root@m1 ~]# ADMIN_SECRET=$(kubectl get secrets -n kube-system | grep dashboard-admin | awk '{print $1}')

[root@m1 ~]# kubectl describe secret -n kube-system ${ADMIN_SECRET} | grep -E '^token' | awk '{print $2}'

eyJhbGciOiJSUzI1NiIsImtpZCI6Ilhyci13eDR3TUtmSG9kcXJxdzVmcFdBTFBGeDhrOUY2QlZoenZhQWVZM0EifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdG9rZW4tNzU3ZmIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiYjdlMWVhMzQtMjNhMS00MjZkLWI0NTktOGI2NmQxZWZjMWUzIiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmUtc3lzdGVtOmRhc2hib2FyZC1hZG1pbiJ9.UlKmcZoGb6OQ1jE55oShAA2dBiL0FHEcIADCfTogtBEuYLPdJtBUVQZ_aVICGI23gugIu6Y9Yt7iQYlwT6zExhUzDz0UUiBT1nSLe94CkPl64LXbeWkC3w2jee8iSqR2UfIZ4fzY6azaqhGKE1Fmm_DLjD-BS-etphOIFoCQFbabuFjvR8DVDss0z1czhHwXEOvlv5ted00t50dzv0rAZ8JN-PdOoem3aDkXDvWWmqu31QAhqK1strQspbUOF5cgcSeGwsQMfau8U5BNsm_K92IremHqOVvOinkR_EHslomDJRc3FYbV_Jw359rc-QROSTbLphRfvGNx9UANDMo8lA
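The token is a JWT: three base64url segments separated by dots, the second of which holds the claims (service account name, namespace and so on). To confirm which account a token belongs to before pasting it, the payload can be decoded locally. This is a sketch assuming GNU base64; the function name jwt_payload is hypothetical, and it does not verify the signature.

```shell
# Print the decoded payload (second segment) of a JWT given as $1.
jwt_payload() {
  seg=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')   # base64url -> base64
  case $(( ${#seg} % 4 )) in                            # restore padding
    2) seg="$seg==" ;;
    3) seg="$seg=" ;;
  esac
  printf '%s' "$seg" | base64 -d
}
```

Applied to the token above, the output should include the claim "sub":"system:serviceaccount:kube-system:dashboard-admin".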

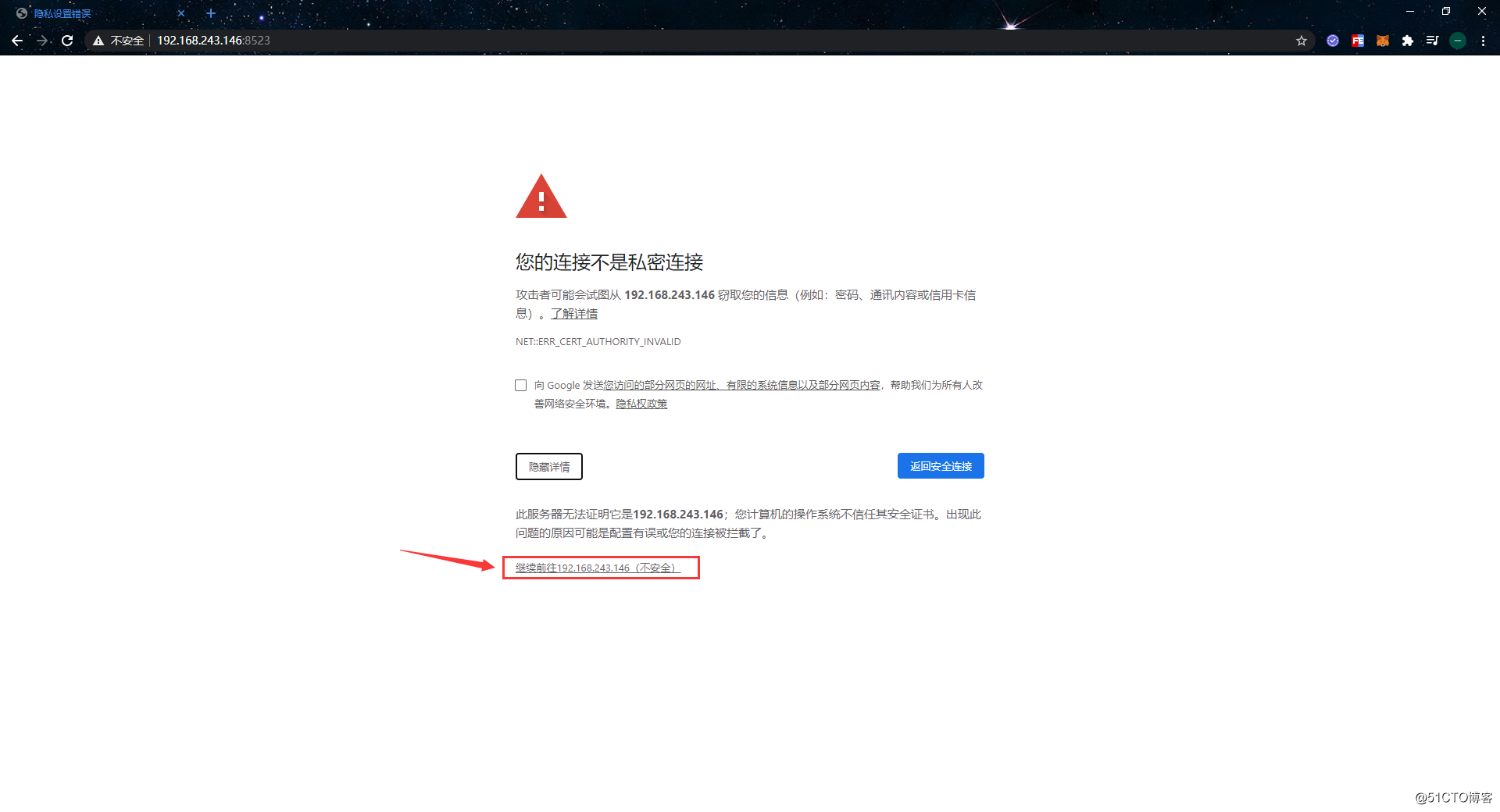

[root@m1 ~]#

With the token in hand, open https://192.168.243.146:8523 in a browser. Because the dashboard uses a self-signed certificate, the browser shows a warning. Ignore it and click "Advanced" -> "Proceed":

Then enter the token:

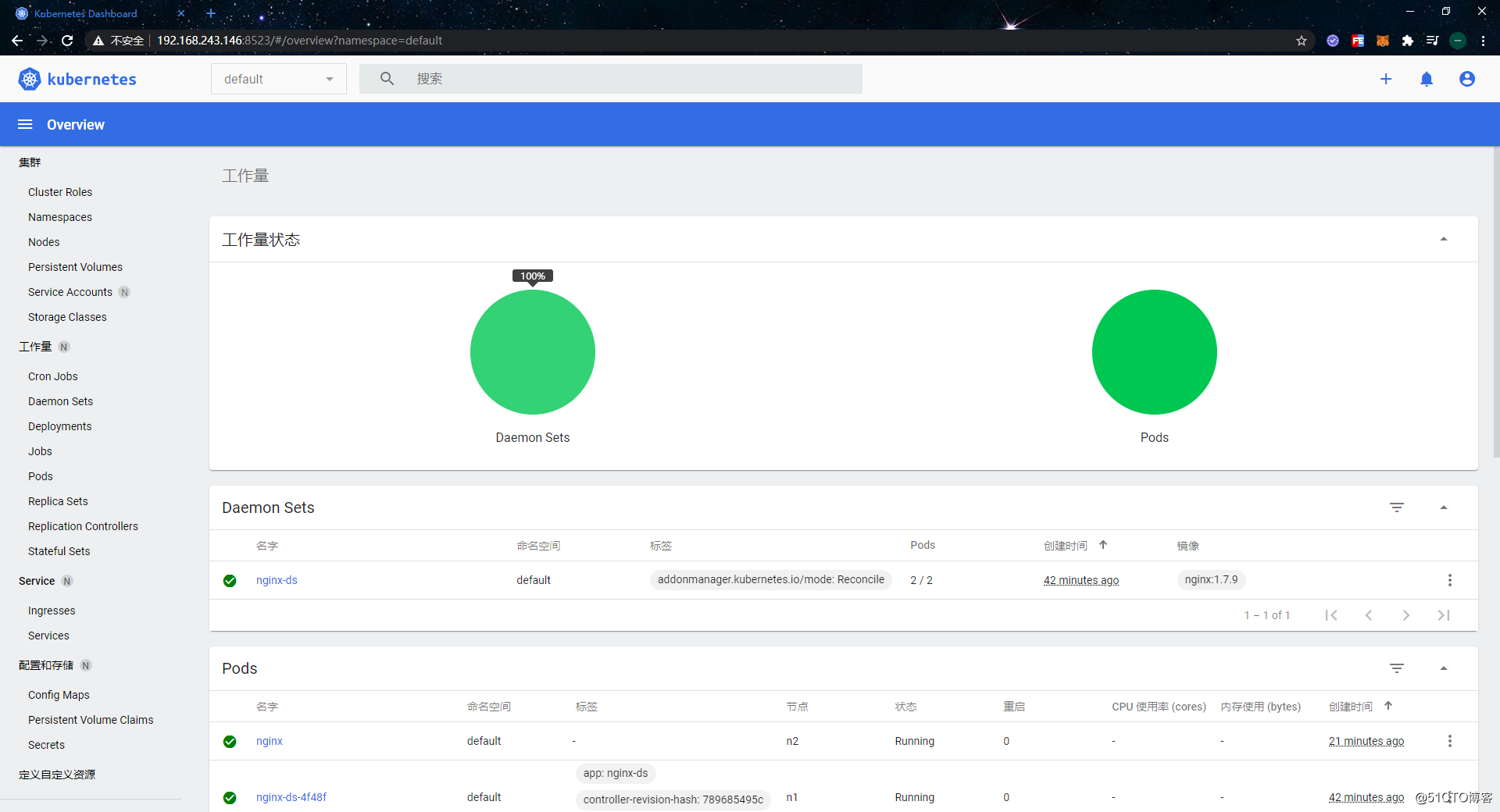

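After a successful login, the home page looks like this:

There is not much more to say about the web UI, so it is not covered further here; feel free to explore it yourself. This concludes our journey of building a highly available k8s cluster from binaries. The article is very long because it records the details of every step, so for convenience you may still prefer to use kubeadm.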


Original article: https://blog.51cto.com/zero01/2529035