
import jieba

# Noise words: frequent terms that should not appear in the final ranking.
excludes = {"什么","一个","我们","那里","你们","如今","说道","知道","起来","姑娘","这里","出来","他们","众人","自己",
            "一面","只见","怎么","两个","没有","不是","不知","这个","听见","这样","进来","咱们","告诉","就是",
            "东西","袭人","回来","只是","大家","只得","老爷","丫头","这些","不敢","出去","所以","不过","的话","不好",
            "姐姐","探春","鸳鸯","一时","不能","过来","心里","如此","今日","银子","几个","答应","二人","还有","只管",
            "这么","说话","一回","那边","这话","外头","打发","自然","今儿","罢了","屋里","那些","听说","小丫头","不用","如何"}

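# With a bare file name, the file is looked up in the current working directory,
# which is the script's own folder when you run the script from there.
# The file must be UTF-8 encoded. If it is not, open it in a text editor,
# choose "Save As", and set the encoding option (bottom right of the dialog) to UTF-8.
with open("红楼梦.txt", "r", encoding="utf-8") as f:
    txt = f.read()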

# Use jieba to segment the full text of the novel into a list of words.
words = jieba.lcut(txt)

# Start with an empty dictionary that will map each word to its count.
counts = {}

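for word in words:
    # Skip single-character tokens: they are mostly particles and other function words.
    if len(word) == 1:
        continue
    # If the key (the name) is not in the dictionary yet, create it with a count of 1;
    # otherwise add 1 to its existing count. Each entry has the form {name: count}.
    counts[word] = counts.get(word, 0) + 1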

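# Remove each listed noise word from the counts if it appears among the segmented words.
# pop() with a default avoids the KeyError that del raises when a word is absent.
for word in excludes:
    counts.pop(word, None)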

# Convert the {name: count} dictionary into a list of (name, count) tuples.
items = list(counts.items())

# Sort the list by count; reverse=True gives descending order.
items.sort(key=lambda x: x[1], reverse=True)

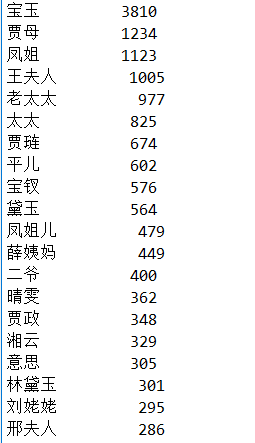

# Print the 20 most frequent words; slicing avoids an IndexError if fewer than 20 remain.
for word, count in items[:20]:
    print("{0:<10}{1:>5}".format(word, count))
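
The counting, filtering, and ranking steps above can also be written with `collections.Counter` from the standard library. This is a minimal sketch, not the original author's method. The helper name `top_words` and the short token list are made up for illustration; the tokens stand in for the output of `jieba.lcut(txt)` so the snippet runs without the novel's text.

```python
from collections import Counter


def top_words(words, excludes, n=20):
    """Return the n most frequent multi-character words not in excludes.

    `top_words` is a hypothetical helper for this sketch, not part of jieba.
    """
    counts = Counter(
        word for word in words
        if len(word) > 1 and word not in excludes
    )
    # most_common returns (word, count) pairs, highest count first.
    return counts.most_common(n)


# Toy tokens standing in for jieba.lcut(txt); assumed for illustration only.
sample_words = ["宝玉", "说道", "黛玉", "宝玉", "的", "贾母", "黛玉", "宝玉", "了"]
sample_excludes = {"说道"}

for word, count in top_words(sample_words, sample_excludes, n=3):
    print("{0:<10}{1:>5}".format(word, count))
```

Filtering the noise words before counting means nothing has to be deleted afterwards, so a noise word that never occurs in the text cannot cause an error.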


Original article: https://www.cnblogs.com/1999lyx/p/13974469.html