
An excellent comment from the source:

     *                   nc_connection.[ch]
     *                Connection (struct conn)
     *                 +         +          +
     *                 |         |          |
     *                 |       Proxy        |
     *                 |     nc_proxy.[ch]  |
     *                 /                    \
     *              Client                Server
     *           nc_client.[ch]         nc_server.[ch]
     *
     *            nc_message.[ch]
     *         message (struct msg)
     *            +        +            .
     *            |        |            .
     *            /        \            .
     *         Request    Response      .../ nc_mbuf.[ch]  (message buffers)
     *      nc_request.c  nc_response.c .../ nc_memcache.c; nc_redis.c (message parser)
     *
     * Messages in nutcracker are manipulated by a chain of processing handlers,
     * where each handler is responsible for taking the input and producing an
     * output for the next handler in the chain. This mechanism of processing
     * loosely conforms to the standard chain-of-responsibility design pattern
     *
     *             Client+             Proxy           Server+
     *                              (nutcracker)
     *                                   .
     *       msg_recv {read event}       .       msg_recv {read event}
     *         +                         .                         +
     *         |                         .                         |
     *         \                         .                         /
     *         req_recv_next             .             rsp_recv_next
     *           +                       .                       +
     *           |                       .                       |       Rsp
     *           req_recv_done           .           rsp_recv_done      <===
     *             +                     .                     +
     *             |                     .                     |
     *    Req      \                     .                     /
     *    ===>     req_filter*           .           *rsp_filter
     *               +                   .                   +
     *               |                   .                   |
     *               \                   .                   /
     *               req_forward-//  (1) . (3)  \\-rsp_forward
     *                                   .
     *                                   .
     *       msg_send {write event}      .      msg_send {write event}
     *         +                         .                         +
     *         |                         .                         |
     *    Rsp' \                         .                         /     Req'
     *   <===  rsp_send_next             .             req_send_next    ===>
     *           +                       .                       +
     *           |                       .                       |
     *           \                       .                       /
     *           rsp_send_done-//    (4) . (2)    //-req_send_done
     *
     *
     * (1) -> (2) -> (3) -> (4) is the normal flow of transaction consisting
     * of a single request response, where (1) and (2) handle request from
     * client, while (3) and (4) handle the corresponding response from the
     * server.

Such a lovingly written comment!

The code that corresponds to this comment:

    struct conn *
    conn_get(void *owner, bool client, bool redis)
    {
        struct conn *conn;

        conn = _conn_get();
        if (conn == NULL) {
            return NULL;
        }

        conn->client = client ? 1 : 0;

        if (conn->client) {
            /* client connection: receives requests, sends responses */
            conn->recv = msg_recv;
            conn->recv_next = req_recv_next;
            conn->recv_done = req_recv_done;

            conn->send = msg_send;
            conn->send_next = rsp_send_next;
            conn->send_done = rsp_send_done;

            conn->close = client_close;
            conn->active = client_active;

            conn->ref = client_ref;
            conn->unref = client_unref;

            /* a client connection has no in queue, only an out queue */
            conn->enqueue_inq = NULL;
            conn->dequeue_inq = NULL;
            conn->enqueue_outq = req_client_enqueue_omsgq;
            conn->dequeue_outq = req_client_dequeue_omsgq;
        } else {
            /* server connection: receives responses, sends requests */
            conn->recv = msg_recv;
            conn->recv_next = rsp_recv_next;
            conn->recv_done = rsp_recv_done;

            conn->send = msg_send;
            conn->send_next = req_send_next;
            conn->send_done = req_send_done;

            conn->close = server_close;
            conn->active = server_active;

            conn->ref = server_ref;
            conn->unref = server_unref;

            conn->enqueue_inq = req_server_enqueue_imsgq;
            conn->dequeue_inq = req_server_dequeue_imsgq;
            conn->enqueue_outq = req_server_enqueue_omsgq;
            conn->dequeue_outq = req_server_dequeue_omsgq;
        }

        conn->ref(conn, owner);

        return conn;
    }
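To make the hook mechanism concrete, here is a minimal sketch of the same idea: one generic driver, with the client or server behavior chosen by function pointers at connection setup. Everything prefixed with `mini_` is hypothetical and invented for illustration. The hook bodies only return their own names, and none of this is twemproxy's real code.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical, stripped-down stand-in for twemproxy's struct conn. */
struct mini_conn;
typedef const char *(*mini_hook_t)(struct mini_conn *);

struct mini_conn {
    bool        client;     /* true: faces a client, false: faces a server */
    mini_hook_t recv_next;  /* picks the message to read into */
    mini_hook_t recv_done;  /* runs once a message has been fully read */
    mini_hook_t send_next;  /* picks the message to write */
    mini_hook_t send_done;  /* runs once a message has been fully written */
};

/* Dummy hooks: each one just reports its own name. */
static const char *req_recv_next(struct mini_conn *c) { (void)c; return "req_recv_next"; }
static const char *req_recv_done(struct mini_conn *c) { (void)c; return "req_recv_done"; }
static const char *rsp_send_next(struct mini_conn *c) { (void)c; return "rsp_send_next"; }
static const char *rsp_send_done(struct mini_conn *c) { (void)c; return "rsp_send_done"; }
static const char *rsp_recv_next(struct mini_conn *c) { (void)c; return "rsp_recv_next"; }
static const char *rsp_recv_done(struct mini_conn *c) { (void)c; return "rsp_recv_done"; }
static const char *req_send_next(struct mini_conn *c) { (void)c; return "req_send_next"; }
static const char *req_send_done(struct mini_conn *c) { (void)c; return "req_send_done"; }

/* Mirrors the if/else in conn_get: same fields, different hooks per side. */
static void
mini_conn_init(struct mini_conn *c, bool client)
{
    assert(c != NULL);
    c->client = client;
    if (client) {
        c->recv_next = req_recv_next;
        c->recv_done = req_recv_done;
        c->send_next = rsp_send_next;
        c->send_done = rsp_send_done;
    } else {
        c->recv_next = rsp_recv_next;
        c->recv_done = rsp_recv_done;
        c->send_next = req_send_next;
        c->send_done = req_send_done;
    }
}

/* Generic read path: identical code for both sides, behavior comes from hooks. */
static const char *
mini_on_readable(struct mini_conn *c)
{
    c->recv_next(c);
    return c->recv_done(c);
}

/* Generic write path, same idea. */
static const char *
mini_on_writable(struct mini_conn *c)
{
    c->send_next(c);
    return c->send_done(c);
}
```

Calling `mini_on_readable` on a client-side connection ends in `req_recv_done`, while the same call on a server-side connection ends in `rsp_recv_done`. That is why a single `msg_recv` and a single `msg_send` can serve both columns of the diagram.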

We walk through the four steps described in the nc_message.c comment.

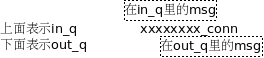

All the diagrams that follow use this notation:

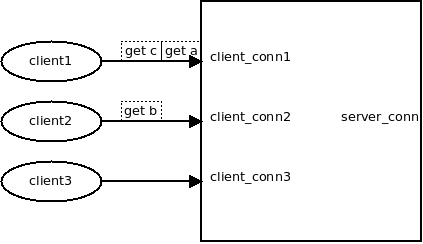

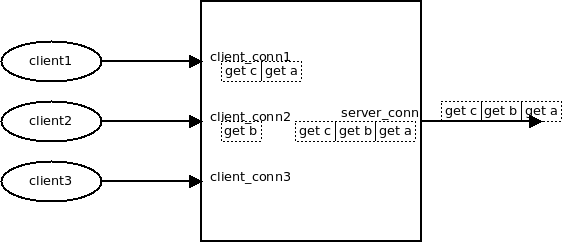

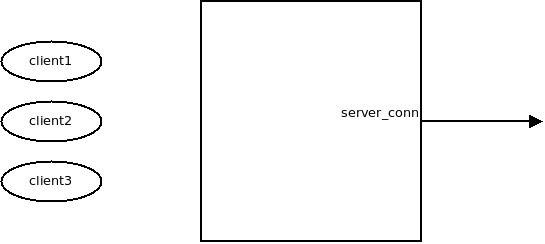

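Consider the case with a single backend, and assume that 2 clients are about to send 3 requests.

The callbacks on the client connection at this point:

    conn->recv = msg_recv;
    conn->recv_next = req_recv_next;
    conn->recv_done = req_recv_done;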

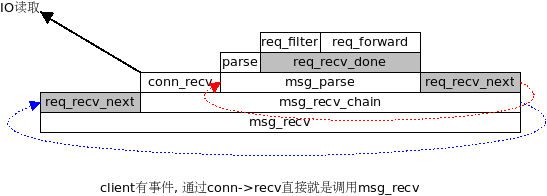

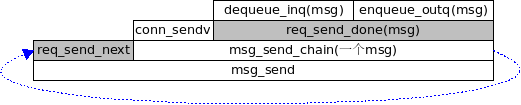

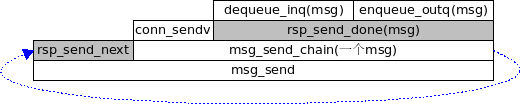

Function call stack:

Each time req_recv_done fires, it ends by calling req_forward():

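    static void
    req_forward(struct context *ctx, struct conn *c_conn, struct msg *msg)
    {
        struct conn *s_conn;
        uint8_t *key;       /* request key; its extraction from msg is elided in this excerpt */
        uint32_t keylen;

        /* a response is expected, so park the request on the client's out queue */
        if (!msg->noreply) {
            c_conn->enqueue_outq(ctx, c_conn, msg);
        }

        /* get a connection to the backend (newly created, or taken from the pool) */
        s_conn = server_pool_conn(ctx, c_conn->owner, key, keylen);

        /* queue the request on the server connection and ask for a write event */
        s_conn->enqueue_inq(ctx, s_conn, msg);
        event_add_out(ctx->evb, s_conn);
    }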

As a result, the msg now sits in client_conn->out_q and, at the same time, in server_conn->in_q.

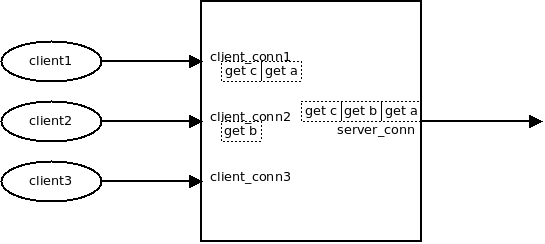

req_forward uses server_pool_conn to obtain a server_conn.

Because the server_conn is now registered for the epoll out (writable) event, conn->send, that is msg_send, is called shortly afterwards.

At this point:

    conn->send = msg_send;
    conn->send_next = req_send_next;
    conn->send_done = req_send_done;

Call stack:

Each time req_send_done fires, the msg is moved onto server_conn->out_q.

Note that the two msgs are still in client_conn->out_q at this point (a client connection has no in_q).
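The queue movements described so far can be sketched as follows. A request is a single object that is linked into a client queue and a server queue at the same time, which works because it carries one list link per queue family. This sketch uses the `TAILQ` macros from `<sys/queue.h>`. The `mini_` types and functions are hypothetical simplifications for illustration, not the real `struct msg` or `struct conn`.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <sys/queue.h>

/* Hypothetical, simplified message: two independent list links. */
struct mini_msg {
    int  id;
    bool noreply;                 /* true: the client expects no response */
    TAILQ_ENTRY(mini_msg) c_tqe;  /* link used by the client connection's out_q */
    TAILQ_ENTRY(mini_msg) s_tqe;  /* link used by the server connection's in_q/out_q */
};

TAILQ_HEAD(mini_msgq, mini_msg);

struct mini_client { struct mini_msgq out_q; };
struct mini_server { struct mini_msgq in_q; struct mini_msgq out_q; };

static void
mini_init(struct mini_client *c, struct mini_server *s)
{
    TAILQ_INIT(&c->out_q);
    TAILQ_INIT(&s->in_q);
    TAILQ_INIT(&s->out_q);
}

/* Step (1): the request waits on the client side and is queued for the server. */
static void
mini_req_forward(struct mini_client *c, struct mini_server *s, struct mini_msg *m)
{
    assert(m != NULL);
    if (!m->noreply) {
        TAILQ_INSERT_TAIL(&c->out_q, m, c_tqe);
    }
    TAILQ_INSERT_TAIL(&s->in_q, m, s_tqe);
}

/* Step (2): the request was written to the server, so it now awaits a response. */
static void
mini_req_send_done(struct mini_server *s, struct mini_msg *m)
{
    TAILQ_REMOVE(&s->in_q, m, s_tqe);
    TAILQ_INSERT_TAIL(&s->out_q, m, s_tqe);
}
```

After `mini_req_forward` the message is at the head of both `c.out_q` and `s.in_q`. After `mini_req_send_done` it has moved to `s.out_q` but has not left `c.out_q`, which matches the note above.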

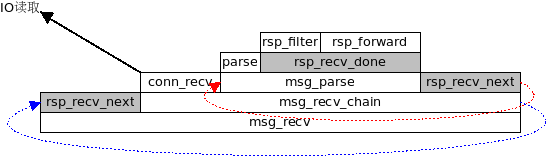

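Because the epoll in (readable) event is always enabled on the server_conn, msg_recv runs on the server_conn as soon as the response comes back. The process is similar to call stack 1, except that two of the function hooks are different (the gray boxes in the diagram):

    conn->recv = msg_recv;              /* same as in step 1 */
    conn->recv_next = rsp_recv_next;
    conn->recv_done = rsp_recv_done;

Here the job of rsp_recv_next is to obtain the next msg to receive into.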

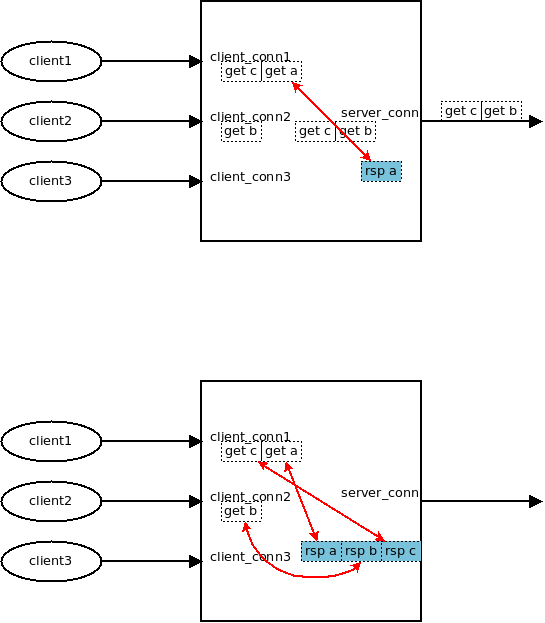

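rsp_forward removes the request msg from server_conn->out_q and, at the same time, establishes the one-to-one link between the request and the response:

    /* establish msg <-> pmsg (response <-> request) link */
    pmsg->peer = msg;
    msg->peer = pmsg;

These few lines are the essence of the whole process.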

At this point, each req queued on the client's out_q has its corresponding response.

The out event is also registered on the client connection:

    event_add_out(ctx->evb, c_conn);
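The peer link is what lets the client side answer in request order even when responses arrive in a different order. The sketch below shows that idea under stated assumptions. The `mini_` names, the fixed-size array queue, and the rule "only send when the oldest request is done" are illustrative simplifications, not a copy of `rsp_forward` or `rsp_send_next`.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical, simplified message with a request <-> response link. */
struct mini_msg {
    int              id;
    bool             done;  /* request only: its response has arrived */
    struct mini_msg *peer;  /* request -> response, response -> request */
};

/* Hypothetical client out queue: requests in arrival order. */
struct mini_client {
    struct mini_msg *out_q[8];
    size_t           head;  /* index of the oldest unanswered request */
    size_t           tail;  /* one past the newest request */
};

/* What the peer assignment achieves: both messages can find each other. */
static void
mini_link(struct mini_msg *req, struct mini_msg *rsp)
{
    assert(req != NULL && rsp != NULL);
    assert(req->peer == NULL && rsp->peer == NULL);
    req->done = true;
    req->peer = rsp;
    rsp->peer = req;
}

/*
 * Pick the next response to write to the client. Only the oldest request
 * is considered, so replies leave in the order the requests came in.
 * Returns NULL when nothing can be sent yet.
 */
static struct mini_msg *
mini_rsp_send_next(struct mini_client *c)
{
    struct mini_msg *req;

    if (c->head == c->tail) {
        return NULL;            /* no outstanding requests */
    }
    req = c->out_q[c->head];
    if (!req->done) {
        return NULL;            /* oldest request still has no response */
    }
    c->head++;
    return req->peer;
}
```

With two queued requests, linking the second one first yields nothing to send. Once the first is linked as well, the two responses come out in request order.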

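Each time a rsp is received, the matching request is removed from server_conn's out_q and the one-to-one link is set.

Every request msg now has a corresponding response msg, and the out event is registered on client_conn, which triggers the send path on the client connection (msg_send with the rsp_send_next and rsp_send_done hooks).

In the end everything goes quiet, and the backend connection is still there.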

twemproxy source code analysis, part 4: processing flow (this content is a repost).


Original article: http://www.cnblogs.com/shenhang/p/4156058.html