标签:

2352 /* 2353 * Incoming packets are placed on per-cpu queues 2354 */ 2355 struct softnet_data { 2356 struct list_head poll_list; 2357 struct sk_buff_head process_queue; 2358 2359 /* stats */ 2360 unsigned int processed; 2361 unsigned int time_squeeze; 2362 unsigned int cpu_collision; 2363 unsigned int received_rps; 2364 #ifdef CONFIG_RPS 2365 struct softnet_data *rps_ipi_list; 2366 #endif 2367 #ifdef CONFIG_NET_FLOW_LIMIT 2368 struct sd_flow_limit __rcu *flow_limit; 2369 #endif 2370 struct Qdisc *output_queue; 2371 struct Qdisc **output_queue_tailp; 2372 struct sk_buff *completion_queue; 2373 2374 #ifdef CONFIG_RPS 2375 /* Elements below can be accessed between CPUs for RPS */ 2376 struct call_single_data csd ____cacheline_aligned_in_smp; 2377 struct softnet_data *rps_ipi_next; 2378 unsigned int cpu; 2379 unsigned int input_queue_head; 2380 unsigned int input_queue_tail; 2381 #endif 2382 unsigned int dropped; 2383 struct sk_buff_head input_pkt_queue; //对所有进入分组建立一个链表。 2384 struct napi_struct backlog; 2385 2386 };

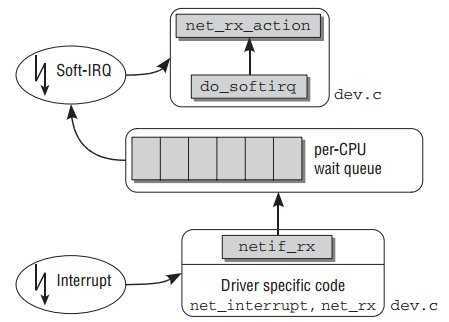

3329 static int netif_rx_internal(struct sk_buff *skb) 3330 { 3331 int ret; 3332 3333 net_timestamp_check(netdev_tstamp_prequeue, skb); 3334 3335 trace_netif_rx(skb); 3336 #ifdef CONFIG_RPS //RPS 和 RFS 相关代码 3337 if (static_key_false(&rps_needed)) { 3338 struct rps_dev_flow voidflow, *rflow = &voidflow; 3339 int cpu; 3340 3341 preempt_disable(); 3342 rcu_read_lock(); 3343 3344 cpu = get_rps_cpu(skb->dev, skb, &rflow); //选择合适的CPU id 3345 if (cpu < 0) 3346 cpu = smp_processor_id(); 3347 3348 ret = enqueue_to_backlog(skb, cpu, &rflow->last_qtail); 3349 3350 rcu_read_unlock(); 3351 preempt_enable(); 3352 } else 3353 #endif 3354 { 3355 unsigned int qtail; 3356 ret = enqueue_to_backlog(skb, get_cpu(), &qtail); //将skb入队 3357 put_cpu(); 3358 } 3359 return ret; 3360 } 3361 3362 /** 3363 * netif_rx - post buffer to the network code 3364 * @skb: buffer to post 3365 * 3366 * This function receives a packet from a device driver and queues it for 3367 * the upper (protocol) levels to process. It always succeeds. The buffer 3368 * may be dropped during processing for congestion control or by the 3369 * protocol layers. 3370 * 3371 * return values: 3372 * NET_RX_SUCCESS (no congestion) 3373 * NET_RX_DROP (packet was dropped) 3374 * 3375 */ 3376 3377 int netif_rx(struct sk_buff *skb) 3378 { 3379 trace_netif_rx_entry(skb); 3380 3381 return netif_rx_internal(skb); 3382 }

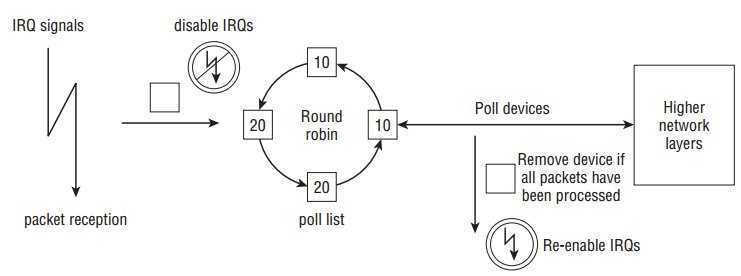

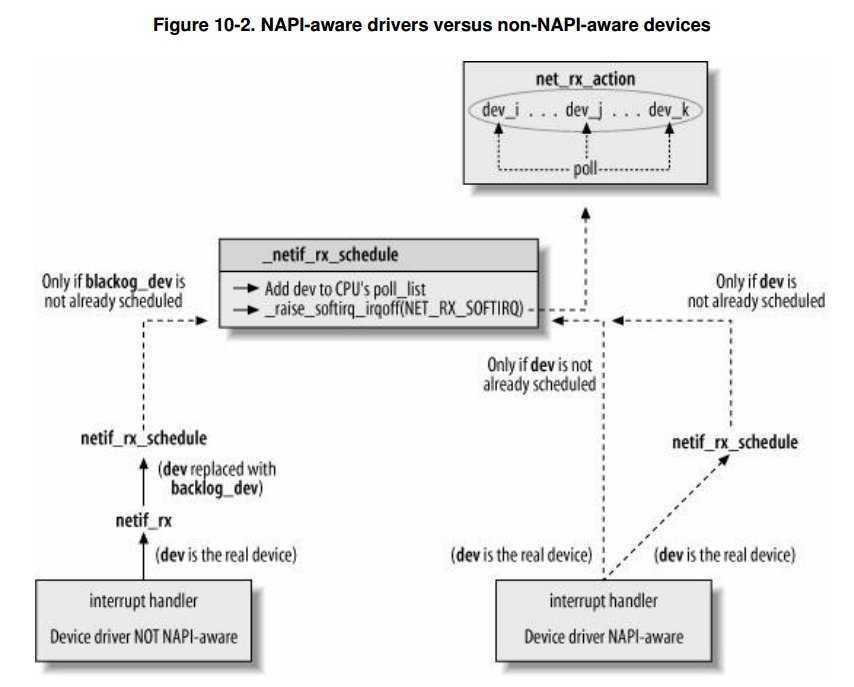

3286 static int enqueue_to_backlog(struct sk_buff *skb, int cpu, 3287 unsigned int *qtail) 3288 { 3289 struct softnet_data *sd; 3290 unsigned long flags; 3291 unsigned int qlen; 3292 3293 sd = &per_cpu(softnet_data, cpu); //获得此cpu上的softnet_data 3294 3295 local_irq_save(flags); 3296 3297 rps_lock(sd); 3298 qlen = skb_queue_len(&sd->input_pkt_queue); 3299 if (qlen <= netdev_max_backlog && !skb_flow_limit(skb, qlen)) { 3300 if (qlen) { 3301 enqueue: 3302 __skb_queue_tail(&sd->input_pkt_queue, skb); 3303 input_queue_tail_incr_save(sd, qtail); 3304 rps_unlock(sd); 3305 local_irq_restore(flags); 3306 return NET_RX_SUCCESS; 3307 } 3308 3309 /* Schedule NAPI for backlog device 3310 * We can use non atomic operation since we own the queue lock 3311 */ 3312 if (!__test_and_set_bit(NAPI_STATE_SCHED, &sd->backlog.state)) { 3313 if (!rps_ipi_queued(sd)) 3314 ____napi_schedule(sd, &sd->backlog); //只有qlen是0的时候才执行到这里 3315 } 3316 goto enqueue; 3317 } 3318 3319 sd->dropped++; 3320 rps_unlock(sd); 3321 3322 local_irq_restore(flags); 3323 3324 atomic_long_inc(&skb->dev->rx_dropped); 3325 kfree_skb(skb); 3326 return NET_RX_DROP; 3327 }

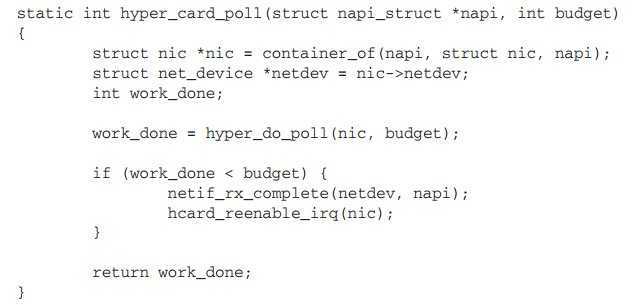

296 /* 297 * Structure for NAPI scheduling similar to tasklet but with weighting 298 */ 299 struct napi_struct { 300 /* The poll_list must only be managed by the entity which 301 * changes the state of the NAPI_STATE_SCHED bit. This means 302 * whoever atomically sets that bit can add this napi_struct 303 * to the per-cpu poll_list, and whoever clears that bit 304 * can remove from the list right before clearing the bit. 305 */ 306 struct list_head poll_list; //用作链表元素 307 308 unsigned long state; 309 int weight; //设备权重 310 unsigned int gro_count; 311 int (*poll)(struct napi_struct *, int); //设备提供的轮询函数 312 #ifdef CONFIG_NETPOLL 313 spinlock_t poll_lock; 314 int poll_owner; 315 #endif 316 struct net_device *dev; 317 struct sk_buff *gro_list; 318 struct sk_buff *skb; 319 struct hrtimer timer; 320 struct list_head dev_list; 321 struct hlist_node napi_hash_node; 322 unsigned int napi_id; 323 };

2215 static irqreturn_t e100_intr(int irq, void *dev_id) 2216 { 2217 struct net_device *netdev = dev_id; 2218 struct nic *nic = netdev_priv(netdev); 2219 u8 stat_ack = ioread8(&nic->csr->scb.stat_ack); 2220 2221 netif_printk(nic, intr, KERN_DEBUG, nic->netdev, 2222 "stat_ack = 0x%02X\n", stat_ack); 2223 2224 if (stat_ack == stat_ack_not_ours || /* Not our interrupt */ 2225 stat_ack == stat_ack_not_present) /* Hardware is ejected */ 2226 return IRQ_NONE; 2227 2228 /* Ack interrupt(s) */ 2229 iowrite8(stat_ack, &nic->csr->scb.stat_ack); 2230 2231 /* We hit Receive No Resource (RNR); restart RU after cleaning */ 2232 if (stat_ack & stat_ack_rnr) 2233 nic->ru_running = RU_SUSPENDED; 2234 2235 if (likely(napi_schedule_prep(&nic->napi))) { //设置state为NAPI_STATE_SCHED 2236 e100_disable_irq(nic); 2237 __napi_schedule(&nic->napi); //将设备添加到 poll list,并开启软中断。 2238 } 2239 2240 return IRQ_HANDLED; 2241 }

3013 /* Called with irq disabled */ 3014 static inline void ____napi_schedule(struct softnet_data *sd, 3015 struct napi_struct *napi) 3016 { 3017 list_add_tail(&napi->poll_list, &sd->poll_list); 3018 __raise_softirq_irqoff(NET_RX_SOFTIRQ); 3019 } 4375 /** 4376 * __napi_schedule - schedule for receive 4377 * @n: entry to schedule 4378 * 4379 * The entry‘s receive function will be scheduled to run. 4380 * Consider using __napi_schedule_irqoff() if hard irqs are masked. 4381 */ 4382 void __napi_schedule(struct napi_struct *n) 4383 { 4384 unsigned long flags; 4385 4386 local_irq_save(flags); 4387 ____napi_schedule(this_cpu_ptr(&softnet_data), n); 4388 local_irq_restore(flags); 4389 } 4390 EXPORT_SYMBOL(__napi_schedule);

标签:

原文地址:http://www.cnblogs.com/lxgeek/p/4182029.html