标签:

final private[streaming] class DStreamGraph extends Serializable with Logging {

private val inputStreams = new ArrayBuffer[InputDStream[_]]()

private val outputStreams = new ArrayBuffer[DStream[_]]()

var rememberDuration: Duration = null

var checkpointInProgress = false

var zeroTime: Time = null

var startTime: Time = null

var batchDuration: Duration = null

def addInputStream(inputStream: InputDStream[_]) {

this.synchronized {

inputStream.setGraph(this)

inputStreams += inputStream

}

}

def addOutputStream(outputStream: DStream[_]) {

this.synchronized {

outputStream.setGraph(this)

outputStreams += outputStream

}

}

def getInputStreams() = this.synchronized { inputStreams.toArray }

def getOutputStreams() = this.synchronized { outputStreams.toArray }

def getReceiverInputStreams() = this.synchronized {

inputStreams.filter(_.isInstanceOf[ReceiverInputDStream[_]])

.map(_.asInstanceOf[ReceiverInputDStream[_]])

.toArray

}

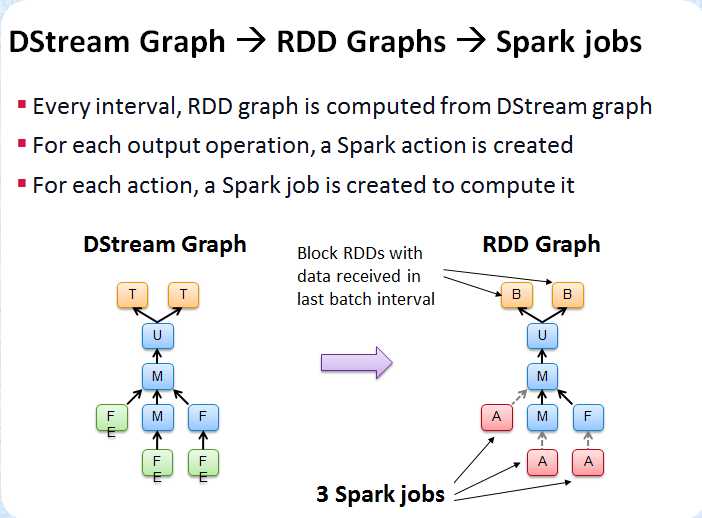

def generateJobs(time: Time): Seq[Job] = {

logDebug("Generating jobs for time " + time)

val jobs = this.synchronized {

outputStreams.flatMap(outputStream => outputStream.generateJob(time))

}

logDebug("Generated " + jobs.length + " jobs for time " + time)

jobs

}

@throws(classOf[IOException])

private def writeObject(oos: ObjectOutputStream): Unit = Utils.tryOrIOException {

logDebug("DStreamGraph.writeObject used")

this.synchronized {

checkpointInProgress = true

logDebug("Enabled checkpoint mode")

oos.defaultWriteObject()

checkpointInProgress = false

logDebug("Disabled checkpoint mode")

}

}

@throws(classOf[IOException])

private def readObject(ois: ObjectInputStream): Unit = Utils.tryOrIOException {

logDebug("DStreamGraph.readObject used")

this.synchronized {

checkpointInProgress = true

ois.defaultReadObject()

checkpointInProgress = false

}

}

/**

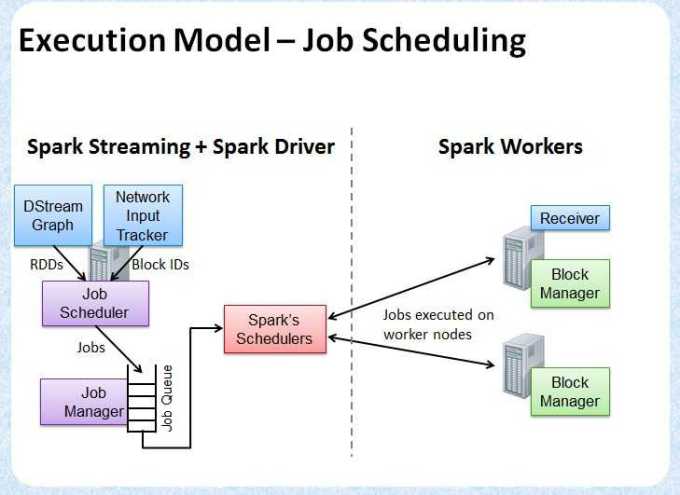

* This class schedules jobs to be run on Spark. It uses the JobGenerator to generate

* the jobs and runs them using a thread pool.

*/

private[streaming]

class JobScheduler(val ssc: StreamingContext) extends Logging {

private val jobSets = new ConcurrentHashMap[Time, JobSet]

private val numConcurrentJobs = ssc.conf.getInt("spark.streaming.concurrentJobs", 1)

private val jobExecutor = Executors.newFixedThreadPool(numConcurrentJobs)

private val jobGenerator = new JobGenerator(this)

val clock = jobGenerator.clock

val listenerBus = new StreamingListenerBus()

// These two are created only when scheduler starts.

// eventActor not being null means the scheduler has been started and not stopped

var receiverTracker: ReceiverTracker = null

private var eventActor: ActorRef = null

def start(): Unit = synchronized {

if (eventActor != null) return // scheduler has already been started

logDebug("Starting JobScheduler")

eventActor = ssc.env.actorSystem.actorOf(Props(new Actor {

def receive = {

case event: JobSchedulerEvent => processEvent(event)

}

}), "JobScheduler")

listenerBus.start()

receiverTracker = new ReceiverTracker(ssc)

receiverTracker.start()

jobGenerator.start()

logInfo("Started JobScheduler")

}

def submitJobSet(jobSet: JobSet) {

if (jobSet.jobs.isEmpty) {

logInfo("No jobs added for time " + jobSet.time)

} else {

jobSets.put(jobSet.time, jobSet)

jobSet.jobs.foreach(job => jobExecutor.execute(new JobHandler(job)))

logInfo("Added jobs for time " + jobSet.time)

}

}

private class JobHandler(job: Job) extends Runnable {

def run() {

eventActor ! JobStarted(job)

job.run()

eventActor ! JobCompleted(job)

}

}

private def handleJobCompletion(job: Job) {

job.result match {

case Success(_) =>

val jobSet = jobSets.get(job.time)

jobSet.handleJobCompletion(job)

logInfo("Finished job " + job.id + " from job set of time " + jobSet.time)

if (jobSet.hasCompleted) {

jobSets.remove(jobSet.time)

jobGenerator.onBatchCompletion(jobSet.time)

logInfo("Total delay: %.3f s for time %s (execution: %.3f s)".format(

jobSet.totalDelay / 1000.0, jobSet.time.toString,

jobSet.processingDelay / 1000.0

))

listenerBus.post(StreamingListenerBatchCompleted(jobSet.toBatchInfo))

}

case Failure(e) =>

reportError("Error running job " + job, e)

}

}

spark streaming 4: DStreamGraph JobScheduler

标签:

原文地址:http://www.cnblogs.com/zwCHAN/p/4274819.html